
By Suishou

AMAP provides abundant and accurate map data to significantly improve our travel experience. It uses image recognition technology to automatically produce data in order to transform the conventional processes of map data collection and production. Scene text recognition (STR) is a key part of image recognition technology. Text recognition technology is designed to recognize text in all kinds of complex scenarios in a comprehensive, accurate, and fast manner, such as stylized text on business signboards, various logos, text against a complex or partially obstructed background, and text in low-quality images. This article is divided into the following parts:

1) Evolution and practices of text recognition technology in the AMAP data production process

2) Development and frameworks of proprietary text recognition algorithms

3) Future development and challenges

As a popular app with more than 100 million daily active users (DAUs), AMAP provides a wide variety of query, location, and navigation services every day. The abundance and accuracy of map data determine the quality of user experience. Data is typically collected by data collection devices, and the collected data is manually edited and published to provide users with the desired map data. Data update is slow and data processing is costly. To solve this problem, AMAP uses image recognition technology to directly recognize various map data elements in the collected data. Then, machines automatically produce map data. AMAP collects data from the real world at a high frequency and uses image algorithm capabilities to automatically detect and recognize the content and location of each map element from massive libraries of collected images. This produces basic map data that can be updated in real time. Point of interest (POI) data and road data are two important types of basic map data and can be used to create the basemap of AMAP, which hosts user behaviors and merchants' dynamic data.

Image recognition capabilities determine the efficiency of automated data production, and STR plays an important role in this process. STR is used to retrieve text information from the images captured by different collection devices in a comprehensive, accurate, and fast manner. In POI scenarios, recognition algorithms must be able to recognize as much text information as possible from new stores on streets and produce results with an accuracy greater than 99% in order to enable automated production of POI names. For automated production of road data, recognition algorithms must be able to detect subtle changes to road signs and process large volumes of returned data on a daily basis to promptly update road information, such as speed limits and directions. As a result, the STR algorithm used by AMAP has to deal with more complex images from a variety of collection sources and environments.

With the fast business growth of AMAP, existing text recognition technology can no longer deal with the difficulty of algorithm-based recognition and the complexity of recognition needs. Therefore, AMAP independently developed and iterated the STR algorithm which provides recognition capabilities for multiple products.

STR has evolved from conventional image algorithms to deep learning algorithms, making the transition in 2012.

Before 2012, mainstream text recognition algorithms relied on imaging technology and statistical machine learning methods. A conventional text recognition approach can be divided into three steps: image preprocessing, text recognition, and post-processing.

Conventional text recognition approaches perform well in simple scenarios. However, it is cumbersome to independently design the parameters of each module to suit different scenarios. In addition, it is difficult to design a model with high adaptability to different complex scenarios.

Text recognition began to adopt deep learning algorithms when deep learning became widely applied in computer vision in 2012. Since then, deep learning has gradually streamlined text recognition frameworks. Two text recognition solutions are widely used: the two-phase solution, which performs text line detection followed by text recognition, and the end-to-end text recognition solution.

1) Two-phase Solution for Text Line Detection and Text Recognition

This solution locates text lines and then recognizes their content. Text line detection methods include text box regression[1], segmentation or instance segmentation[2], and a combination of regression and segmentation[3]. Text line detection has evolved from the detection of text within multidirectional rectangles to polygons[2]. Now, we are researching how to detect text lines with arbitrary shapes. Text recognition has evolved from character detection and recognition to text sequence recognition. Connectionist temporal classification (CTC)[4] and attention mechanism[5] are two main approaches to text sequence recognition.
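To make the CTC approach to text sequence recognition more concrete, here is a minimal sketch of greedy CTC decoding. It is not AMAP's implementation. The function name `ctc_greedy_decode`, the blank index of 0, and the layout of `frame_scores` (one list of class scores per image frame) are assumptions made for illustration.

```python
from typing import List, Sequence

BLANK = 0  # assumed index of the CTC blank symbol


def ctc_greedy_decode(frame_scores: Sequence[Sequence[float]], alphabet: str) -> str:
    """Pick the best class per frame, merge repeats, then drop blanks.

    Class 0 is the blank; class i (i >= 1) maps to alphabet[i - 1].
    """
    # Best class index for every frame (the "best path").
    best_path = [max(range(len(f)), key=f.__getitem__) for f in frame_scores]

    chars: List[str] = []
    previous = BLANK
    for index in best_path:
        # A character is emitted only when the class changes and is not blank.
        if index != previous and index != BLANK:
            chars.append(alphabet[index - 1])
        previous = index
    return "".join(chars)


if __name__ == "__main__":
    # Six frames over the classes (blank, "a", "b").
    frames = [
        [0.1, 0.8, 0.1],    # a
        [0.1, 0.7, 0.2],    # a (repeat, merged)
        [0.9, 0.05, 0.05],  # blank (separates the two "a"s)
        [0.2, 0.7, 0.1],    # a
        [0.1, 0.2, 0.7],    # b
        [0.1, 0.1, 0.8],    # b (repeat, merged)
    ]
    print(ctc_greedy_decode(frames, "ab"))
```

The blank symbol is what lets CTC output the same character twice in a row without requiring the exact position of each character, which is why sequence recognition does not need character-level location labels.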

2) End-to-end Text Recognition Solution

This solution uses a model to complete the two tasks of text line detection and text recognition with high real-time performance. The two tasks are jointly trained by the same model so that they can mutually improve each other's performance.

AMAP has upgraded its text recognition technology multiple times over many years. Segmentation based on the fully convolutional network (FCN) and character detection and recognition have evolved to detection based on instance segmentation and the combination of character detection and recognition with sequence recognition. In actual business scenarios, AMAP does not use the end-to-end recognition framework that is widely applied in the academic community. The end-to-end framework requires sufficient high-quality text lines and tagging data to produce recognition results. However, the tagging costs are high, and there is not enough synthetic virtual data to replace real data. Therefore, the end-to-end framework is divided into the text detection model and text recognition model, which are optimized separately.

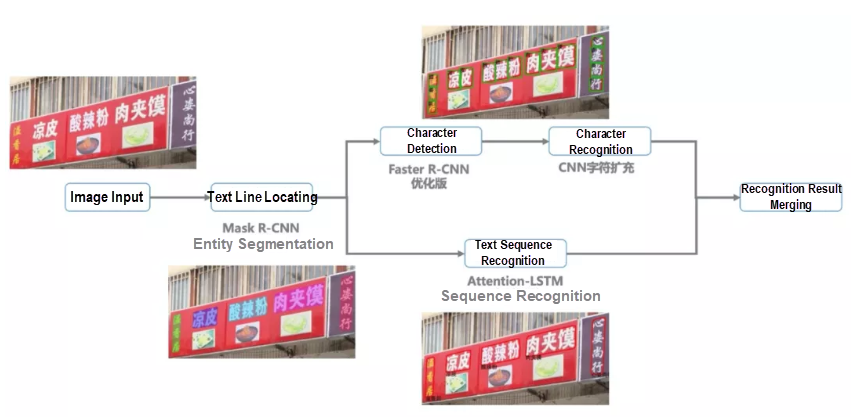

As shown in the following figure, AMAP uses an algorithm framework that consists of modules for text line detection, character detection and recognition, and sequence recognition. The text line detection module detects the target text area and predicts the text mask to correct text distortions, such as vertical text, deformed text, and bent text. The sequence recognition module recognizes text in the detected text area. The character detection and recognition module supplements the sequence recognition module when the latter does not perform well in recognizing stylized text and text with unusual alignment.

Text recognition framework

The text areas in natural scenarios are varied and irregular, with different text sizes and uncontrollable imaging angles and quality. The text on images collected from different sources has varying sizes and different degrees of fuzziness and obstruction. We conducted an experiment to optimize the two-phase instance segmentation model in order to solve actual problems.

The text line detection model predicts both the segmentation of text areas and the location of text lines. The model is integrated with a deformable convolutional network (DCN) to extract the features of text in different directions and increase the feature size for the mask branch. The model is also integrated with the atrous spatial pyramid pooling (ASPP) module to improve the accuracy of text area segmentation. Based on the text segmentation results, the model generates the minimum enclosing convex hull to be used by the subsequent recognition procedure. The generalization performance of the model is improved by online data augmentation, which rotates, flips, and mixes up data during the training process. The following figure shows examples of text detection results.

Examples of text detection results

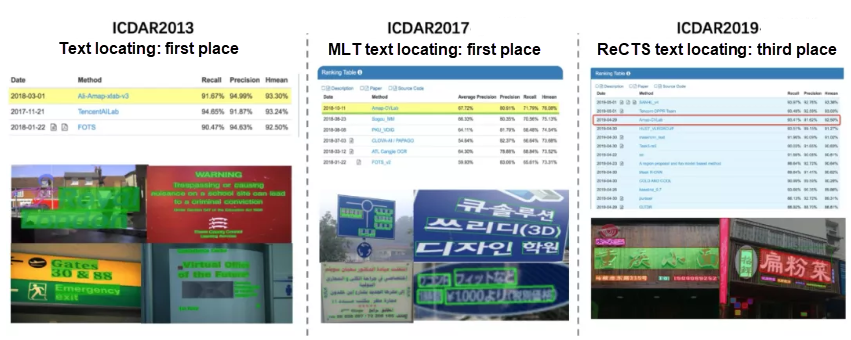
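The step of turning a segmentation result into a convex hull can be sketched as follows. This is an illustration only, using Andrew's monotone chain algorithm on a small binary mask. The article does not say which hull algorithm AMAP uses, and the names `mask_to_points` and `convex_hull` are hypothetical.

```python
from typing import List, Tuple

Point = Tuple[int, int]  # (x, y) pixel coordinates


def cross(o: Point, a: Point, b: Point) -> int:
    """Z component of the cross product of vectors OA and OB."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def mask_to_points(mask: List[List[int]]) -> List[Point]:
    """Collect the coordinates of every foreground (non-zero) pixel."""
    return [(x, y) for y, row in enumerate(mask) for x, v in enumerate(row) if v]


def convex_hull(points: List[Point]) -> List[Point]:
    """Return the hull vertices using Andrew's monotone chain algorithm."""
    pts = sorted(set(points))
    if len(pts) <= 2:
        return pts

    def half_hull(sequence) -> List[Point]:
        hull: List[Point] = []
        for p in sequence:
            # Pop points that would make a non-left turn (collinear included).
            while len(hull) >= 2 and cross(hull[-2], hull[-1], p) <= 0:
                hull.pop()
            hull.append(p)
        return hull

    lower = half_hull(pts)
    upper = half_hull(reversed(pts))
    # The last point of each half is the first point of the other half.
    return lower[:-1] + upper[:-1]


if __name__ == "__main__":
    # A diamond-shaped text mask (1 = text pixel).
    mask = [
        [0, 0, 1, 0, 0],
        [0, 1, 1, 1, 0],
        [1, 1, 1, 1, 1],
        [0, 1, 1, 1, 0],
        [0, 0, 1, 0, 0],
    ]
    print(convex_hull(mask_to_points(mask)))
```

The hull keeps only the outer vertices of the mask, so the recognition stage receives a compact polygon rather than a per-pixel mask.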

Text detection capabilities are already widely used by AMAP to produce POI and road data. The text detection model was evaluated on the ICDAR2013 (March 2018), ICDAR2017-MLT (October 2018), and ICDAR2019-ReCTS public data sets, where it demonstrated excellent performance.

Results of a text line detection contest

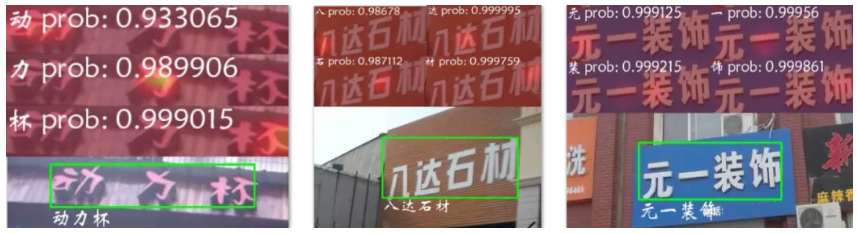

As described in the "Background" section, text recognition results can be used for automated production of POI and road data only when two requirements are met. First, the content of each text line must be recognized as completely as possible. Second, the algorithm must be able to single out the portion of its results that is highly accurate (over 99% accuracy). Text recognition results are typically evaluated per character. However, AMAP cares about recognition results for whole text lines, so we defined two evaluation criteria based on the actual business situation: the all-correct rate and the high-confidence ratio.

The all-correct rate evaluates the ability to recognize POI and road names. The high-confidence ratio evaluates the ability to identify the text lines that are recognized with high accuracy through an algorithm. The two abilities are closely related to our businesses. We researched mainstream text recognition algorithms to find the ones that could meet our business needs.
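The two criteria can be illustrated with a short sketch. The exact definitions are not given in this article, so the following are assumptions: the all-correct rate is taken as the share of text lines whose recognized string exactly matches the annotation, and the high-confidence ratio is taken as the largest share of text lines, ranked by model confidence, whose all-correct rate still reaches a target accuracy (99% by default). The `Sample` tuple and both function names are hypothetical.

```python
from typing import List, Tuple

# (predicted text, annotated text, model confidence) for one text line
Sample = Tuple[str, str, float]


def all_correct_rate(samples: List[Sample]) -> float:
    """Share of text lines whose prediction matches the annotation exactly."""
    if not samples:
        return 0.0
    return sum(pred == truth for pred, truth, _ in samples) / len(samples)


def high_confidence_ratio(samples: List[Sample], target_accuracy: float = 0.99) -> float:
    """Largest share of lines, taken in descending confidence order,
    whose all-correct rate still meets the target accuracy."""
    if not samples:
        return 0.0
    ranked = sorted(samples, key=lambda s: s[2], reverse=True)
    best = 0
    correct = 0
    for count, (pred, truth, _) in enumerate(ranked, start=1):
        correct += pred == truth
        if correct / count >= target_accuracy:
            best = count
    return best / len(ranked)


if __name__ == "__main__":
    samples = [
        ("Star Cafe", "Star Cafe", 0.99),
        ("Exit 12", "Exit 12", 0.95),
        ("Bank 0f X", "Bank of X", 0.60),  # one wrong character fails the line
        ("Main St", "Main St", 0.40),
    ]
    print(all_correct_rate(samples))
    print(high_confidence_ratio(samples))
```

Under these assumed definitions, a single wrong character makes the whole line count as incorrect, which is stricter than character-level accuracy and closer to what is needed to publish a POI or road name without manual review.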

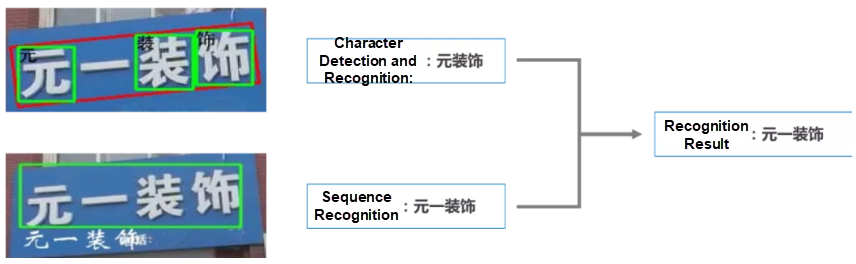

There are two main approaches to text recognition. One is character detection and recognition, and the other is sequence recognition. It is easy to organize training samples and train models for character detection and recognition, which is not affected by text layout. However, this approach may generate errors when it detects and recognizes Chinese characters with an up-down or left-right structure. The sequence recognition approach includes more context information and does not require exact location information for characters. This reduces recognition loss due to the special structures of Chinese characters. However, this approach does not perform well with complex text layouts, such as the top-down and left-to-right layouts. Text recognition accuracy can be improved by combining these two approaches.

Results of recognition that combines character detection and recognition with sequence recognition

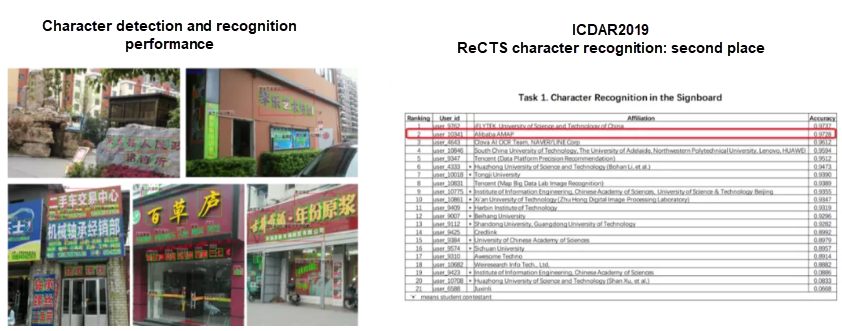

Character detection uses the Faster R-CNN method and produces results that meet business needs. Character recognition uses the SENet structure and supports more than 7,000 Chinese and English characters and digits. In the character recognition model, we optimized the skip connections and activation functions based on the identity mapping design and the MobileNetV2 architecture, and added random sample variations to the training process. These changes significantly improved text recognition capabilities. In April 2019, our text recognition algorithm ranked second in the ICDAR2019-ReCTS contest, with an accuracy only 0.09% lower than that of the first-place finisher.

Character detection and recognition performance

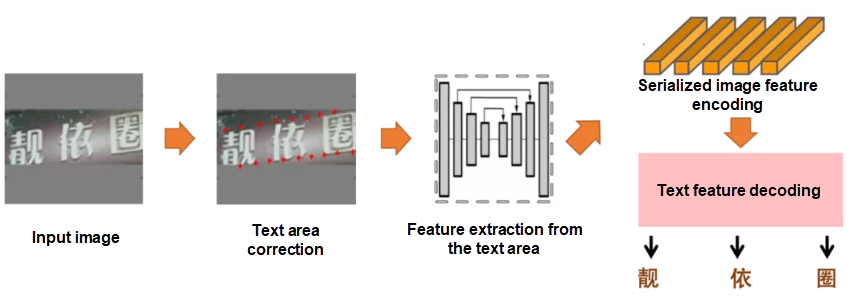

Mainstream text sequence recognition algorithms developed in recent years, such as ASTER and DTRT, can be divided into four parts by task: text area correction, text area feature extraction, serialized image feature encoding, and text feature decoding. Text area correction and text area feature extraction rectify deformed text lines into horizontal text lines and extract features, which makes the text easier to recognize. Serialized image feature encoding and text feature decoding form the encoder-decoder structure. They recognize text based on image textures and add strong contextual semantic information to supplement the recognition results.

Universal sequence recognition structure

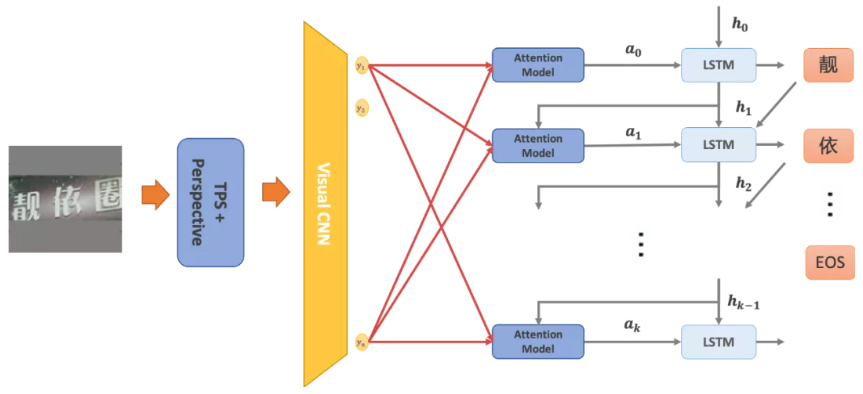

AMAP often needs to recognize short- and medium-length text in real-world settings. Such text often has serious geometric deformation, distortion, and fuzziness. To recognize multidirectional text through a single model, we use the TPS-Inception-BiLSTM-Attention structure for sequence recognition. The following figure shows the structure of the text sequence recognition model.

Structure of the text sequence recognition model

After a text line is detected, the model applies a perspective transformation to the text line based on its corner points and uses thin plate splines (TPS) to obtain text in the horizontal and vertical directions. Then, the model scales the long side to the specified length and pads the text into a square image against a gray background. This preprocessing method preserves the semantic integrity of the input image and allows the image to be rotated and translated freely within the square during the training and testing processes. This effectively improves the recognition performance for bent and deformed text. The preprocessed image is fed into a CNN to extract image features. The image features are encoded into serialized features through BiLSTM, and the encoded features are then decoded one by one through the attention model to obtain prediction results. As shown in the following figure, the text sequence recognition model uses the attention mechanism to assign different weights to image features in different decoding phases in order to implicitly express the alignment relationships between predicted characters and features. This enables the simultaneous prediction of text in multiple directions. The model supports English letters, the level-1 Chinese characters library, and a library of commonly used traditional Chinese characters. It provides good recognition performance for stylized text and fuzzy text.

Sequence recognition performance

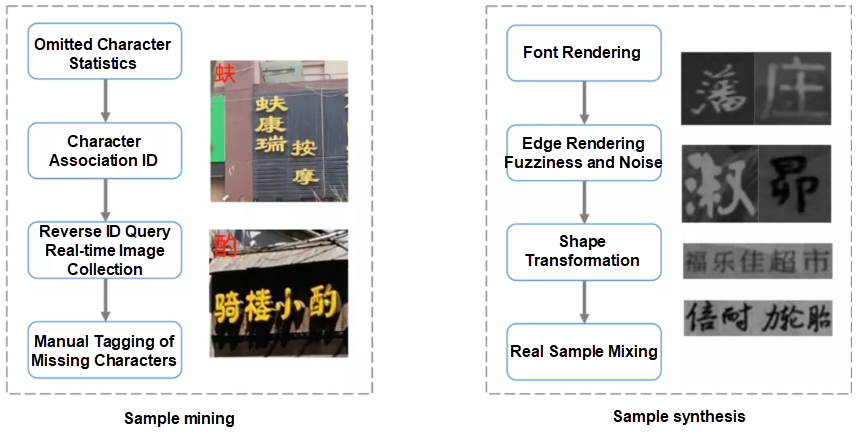
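The preprocessing step that scales the long side and pads the text line into a gray square can be sketched in a few lines. This is a simplified illustration on a grayscale image stored as nested lists. The gray value of 128, the nearest-neighbor resize, the centered placement, and the function names are assumptions and are not taken from AMAP's pipeline.

```python
from typing import List

Image = List[List[int]]  # grayscale rows, pixel values 0..255

GRAY = 128  # assumed padding value for the gray background


def resize_nearest(image: Image, new_h: int, new_w: int) -> Image:
    """Nearest-neighbor resize, enough for a sketch."""
    h, w = len(image), len(image[0])
    return [
        [image[y * h // new_h][x * w // new_w] for x in range(new_w)]
        for y in range(new_h)
    ]


def pad_to_square(image: Image, side: int) -> Image:
    """Scale the long side to `side`, keep the aspect ratio,
    and center the result on a gray square canvas."""
    h, w = len(image), len(image[0])
    scale = side / max(h, w)
    new_h = min(side, max(1, round(h * scale)))
    new_w = min(side, max(1, round(w * scale)))
    resized = resize_nearest(image, new_h, new_w)

    top = (side - new_h) // 2
    left = (side - new_w) // 2
    canvas = [[GRAY] * side for _ in range(side)]
    for y, row in enumerate(resized):
        canvas[top + y][left:left + new_w] = row
    return canvas


if __name__ == "__main__":
    # A 2 x 4 white "text line" placed into an 8 x 8 gray square.
    line = [[255] * 4 for _ in range(2)]
    for row in pad_to_square(line, 8):
        print(row)
```

Because the text keeps its aspect ratio and sits inside a square, rotating the padded image by any angle during training does not cut off any part of the text, whether it is horizontal or vertical.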

In map data production, we often encounter rarely seen place names on road signs, as well as rarely used characters and even traditional Chinese characters on POI signage. Therefore, in addition to optimizing the model, we must supplement the training samples for characters that are missing or underrepresented. To do so, we use real sample mining and artificial sample synthesis. Based on our business characteristics, we analyze the names in the database that contain rarely used characters, trace them back to the images that may contain those characters, and manually tag these images. We also use image rendering technology to artificially synthesize text samples. Real samples and synthetic samples are used together to significantly improve text recognition capabilities.

Sample mining and synthesis solution
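The first half of the mining step, finding database names that contain rarely used characters, can be sketched as follows. This is a hypothetical illustration: the threshold `min_count`, the function names, and the idea of measuring rarity by character frequency in the existing training labels are assumptions, not details from the article.

```python
from collections import Counter
from typing import Dict, Iterable, List


def count_characters(training_labels: Iterable[str]) -> Counter:
    """Count how often each character appears in the existing training labels."""
    counts: Counter = Counter()
    for label in training_labels:
        counts.update(label)
    return counts


def names_with_rare_characters(
    names: Iterable[str], char_counts: Counter, min_count: int = 50
) -> Dict[str, List[str]]:
    """Map each name to the characters it contains that occur fewer than
    `min_count` times in the training labels. Names without such
    characters are left out."""
    result: Dict[str, List[str]] = {}
    for name in names:
        rare = sorted(
            {c for c in name if not c.isspace() and char_counts[c] < min_count}
        )
        if rare:
            result[name] = rare
    return result


if __name__ == "__main__":
    training_labels = ["abc", "abd", "abc"]
    database_names = ["ab", "cab", "xyz"]
    counts = count_characters(training_labels)
    # With min_count=2, "d", "x", "y", and "z" count as rare.
    print(names_with_rare_characters(database_names, counts, min_count=2))
```

The names returned by such a query point to the POIs or roads whose collected images are worth tagging manually, and the same list of rare characters can drive the rendering of synthetic samples.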

With the refined text recognition algorithm and the merging of many recognition results, AMAP's solution can be used in a variety of scenarios. Computer vision, a key technology in the field of text recognition, is widely applied to the automated production of AMAP data. In some collection scenarios, machines produce all data automatically. Machines produce and publish more than 70% of POI data automatically, and more than 90% of road data is automatically updated. This cuts down on the skills required by data process engineers, which reduces training costs and overhead.

The STR approach used by AMAP is based on deep learning. Compared with other languages, the Chinese language contains more characters with complex structures. This requires AMAP to produce more diverse map data to generate satisfying results and makes data insufficiency our primary difficulty. In addition, fuzzy images often affect the performance of automatic identification and efficiency of data production. AMAP has to work out a way to identify and process fuzzy images. The following sections explain how to solve the problems of data insufficiency and fuzzy image recognition from the perspectives of data and model design as well as how to improve text recognition capabilities.

Data is important. A general research topic in the image field is how to automatically expand a data set when we do not have enough manpower and resources for tagging. One approach is to increase the number of samples through data augmentation. Google Brain presented AutoAugment at CVPR 2019. AutoAugment is an algorithm that uses reinforcement learning to search for the optimal data augmentation policy. Another approach is data synthesis. For example, SwapText, developed by Alibaba DAMO Academy, produces data through style transfer.

STR technology often encounters problems of character omission and failed recognition due to fuzzy images. In academia, super resolution (SR) is one of the main methods for fuzzy image recognition. TextSR uses the super-resolution generative adversarial network (SRGAN) to apply SR to text and recover a high-resolution (HR) image from a fuzzy text image. The paper Better to Follow, from Seoul National University and the University of Massachusetts, takes a different route. Unlike TextSR, it uses a GAN to apply SR to features. It does not directly create an image but integrates the SR network with the detection network. This approach produces a result similar to that of TextSR but significantly improves computing efficiency because it works end to end.

We usually rely on prior semantic information when we try to understand complex text. With the development of natural language processing (NLP) technology in recent years, computers have gained the ability to obtain semantic information. It is worthwhile to study how to improve text recognition capabilities based on the relationship between prior semantic information and images, in the same way that people understand complex text. For example, SEED, presented at CVPR 2020, adds a language model to the recognition model to improve text recognition capabilities through a joint evaluation of image features and semantic features.

The movement from the cloud to terminals is another trend in the development of text recognition models. This helps reduce the traffic overhead generated by uploading data to the cloud as well as the workload of cloud servers. When we design a cloud-to-terminal model, we should explore the possibility of a highly accurate, lightweight detection and recognition framework based on the research and optimization work on optical character recognition (OCR) algorithms so that the model can be compressed into a proper size for terminal deployment without any loss of speed.
