As AMAP (also known as AutoNavi) has grown rapidly, and with it the algorithm business behind its navigation services, small and medium-sized business lines are generating a growing number of urgent demands for algorithm strategies. These demands include algorithm services for shared travel and the needs of systems such as risk control, scheduling, and marketing. In the past two years, some new projects took only one week to complete, from algorithm research to application launch. The mature architecture and development method of traditional navigation are built for high traffic and low latency, and they come with a long development cycle. They therefore no longer meet the need for quick trial-and-error and continuous optimization in the initial stage of such businesses, and it is imperative to find a way of delivering algorithm services that suits them.

In the implementation, we adopted an integrated architecture, meaning that algorithm development and engineering are carried out together. Data and systems are connected end to end so that data flows systematically. Algorithm research and engineering service development proceed in parallel, and testing and verification are automated, forming a closed business loop that supports rapid iteration.

In the initial stage of a project, quick trial-and-error and strategy optimization are required. The required Queries Per Second (QPS) are usually modest at this stage, typically below 1K. Traditional application development and deployment methods would therefore slow down the business process and waste a lot of resources.

In this process, we want offline strategy research to be the same work as service logic development, so that completing the offline research means the service is complete. Achieving this significantly reduces the time from algorithm research to strategy launch. Since the requirements for QPS and Response Time (RT) are not demanding at this stage, quick development in Python, reusing the offline code, is feasible.

In the long run, as the business gradually matures, fast algorithm combination, service call volume, and service performance become important evaluation metrics for algorithm services. At that point, targeted optimization is needed, for example reimplementing the core algorithm logic for high performance. The construction of the integrated algorithm engineering platform therefore follows the evolution of the business from its initial stage to its mature stage.

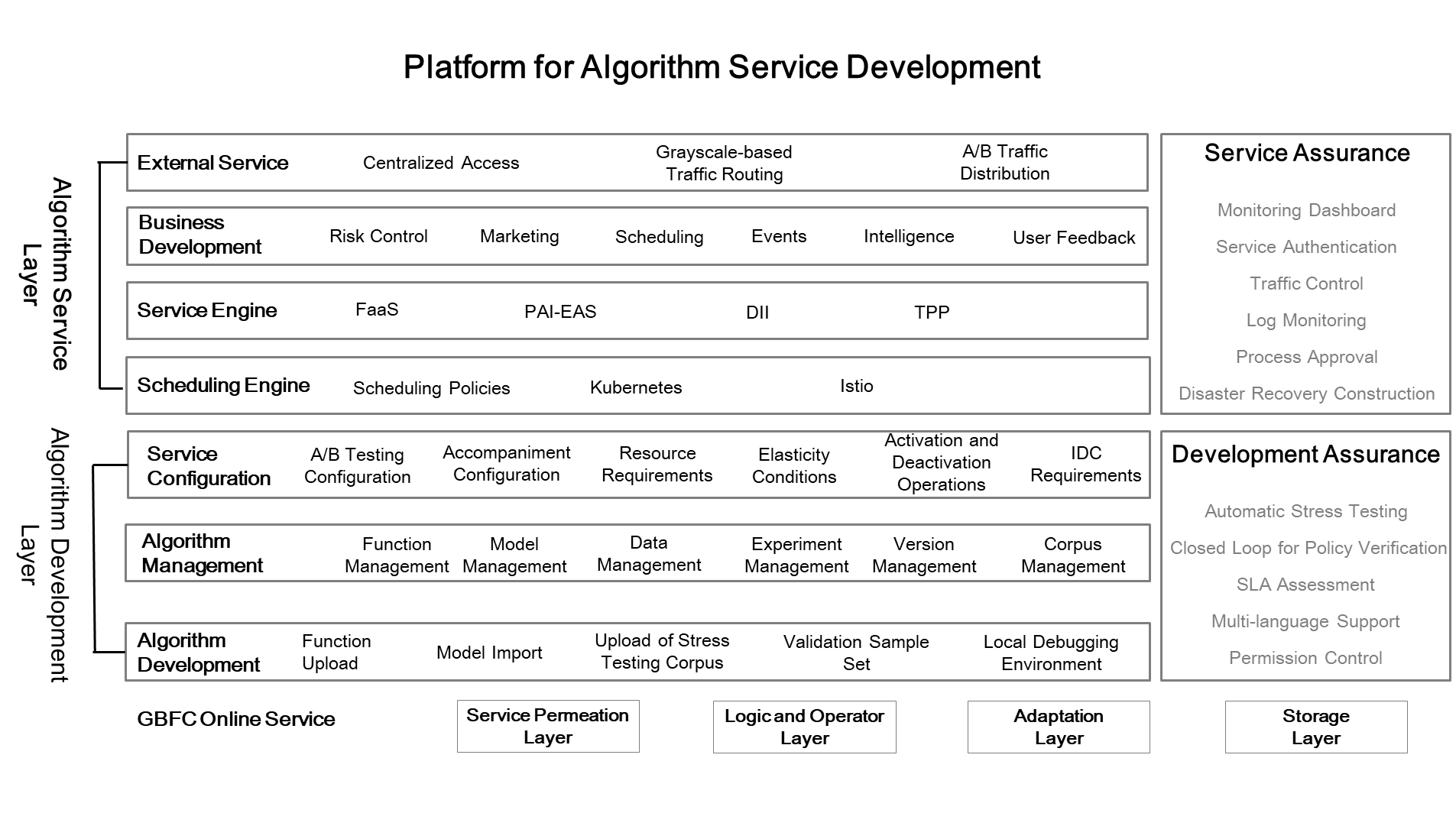

The entire platform consists of several logical components, as shown in the preceding figure.

Note: GBFC is the data service layer. It is mainly used to obtain the data and features required by various algorithms, to ensure that the business algorithm services achieve stateless conditions. It also facilitates data sharing and co-construction in different business lines.
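
The following is a minimal sketch of what "stateless" means for an algorithm that takes its features from a data service layer. The `FeatureStore` interface, the `InMemoryFeatureStore` stand-in, the feature names, and the weights are all hypothetical; the article does not describe the actual GBFC API.

```python
from typing import Dict, Protocol


class FeatureStore(Protocol):
    """Hypothetical interface standing in for a data service layer such as GBFC."""

    def get_features(self, entity_id: str) -> Dict[str, float]:
        ...


class InMemoryFeatureStore:
    """Toy stand-in used only for this illustration."""

    def __init__(self, table: Dict[str, Dict[str, float]]) -> None:
        self._table = table

    def get_features(self, entity_id: str) -> Dict[str, float]:
        # Return a copy so callers cannot mutate the shared data.
        return dict(self._table.get(entity_id, {}))


def risk_score(entity_id: str, store: FeatureStore) -> float:
    """Stateless algorithm: everything it needs arrives through its arguments."""
    features = store.get_features(entity_id)
    cancel_rate = features.get("cancel_rate", 0.0)
    complaint_rate = features.get("complaint_rate", 0.0)
    # Illustrative weights only; they are not taken from the article.
    return round(0.7 * cancel_rate + 0.3 * complaint_rate, 4)


store = InMemoryFeatureStore(
    {"driver_1": {"cancel_rate": 0.2, "complaint_rate": 0.1}}
)
print(risk_score("driver_1", store))
```

Because the function keeps no data of its own, any instance can serve any request, and several business lines can share the same feature source.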

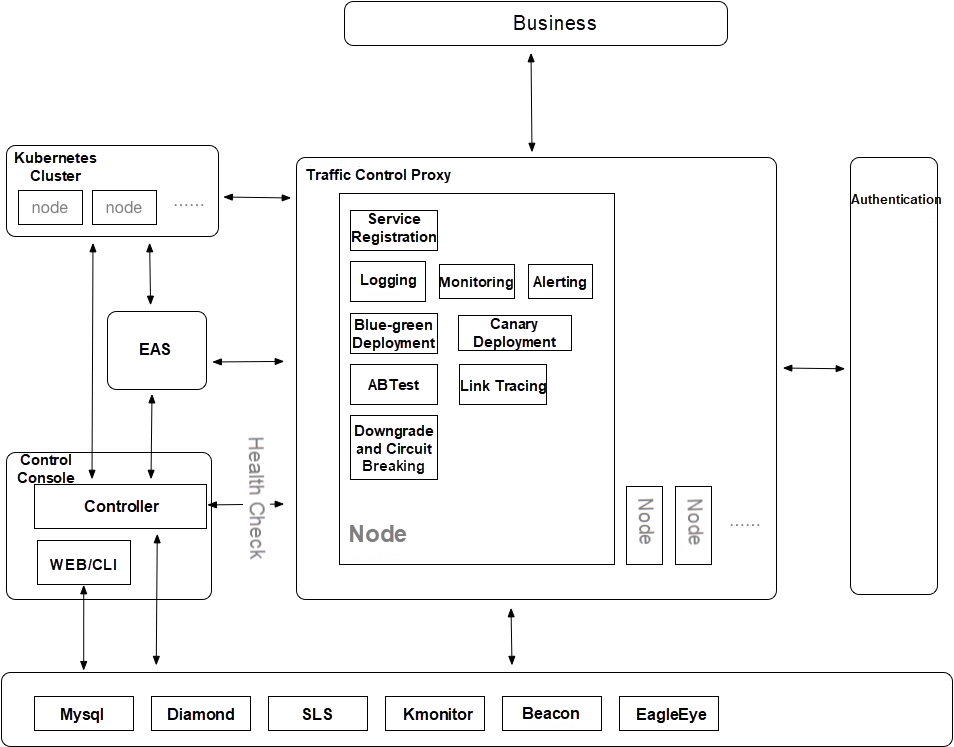

The gateway service isolates various algorithm APIs to provide atomic services and composite services. When the business front needs to call algorithms such as value judgment, risk estimation, and marketing recommendation algorithms, the centralized gateway provides a unified algorithm API for each business to avoid repeated service calls. The algorithm gateway monitors algorithms in a centralized manner, including monitoring service performance and interface availability. Similarly, it also collects data to facilitate data management and feature production, implement real-time online learning, improve the user experience, and ensure algorithm performance.

The gateway service also performs common pre-processing operations, such as authentication, routing, throttling, degradation, circuit breaking, gray release, A/B testing, and accompaniment runs (shadow traffic), to ensure service availability and scalability. In addition, it composes services, such as speech recognition and image processing, into larger workflows. As a result, the algorithm service layer only needs to maintain atomic services, while complex business logic is handled by composition at the gateway.
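
As a rough illustration of routing, throttling, and composition of atomic services, the sketch below registers plain Python functions under names and chains them. The class `AlgorithmGateway`, its method names, and the fixed one-second throttling window are assumptions made for this example and do not describe AMAP's gateway.

```python
import time
from typing import Any, Callable, Dict, List

AlgoFn = Callable[[Dict[str, Any]], Dict[str, Any]]


class AlgorithmGateway:
    """Hypothetical gateway: routes by name, throttles, composes atomic services."""

    def __init__(self, max_calls_per_second: int,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._routes: Dict[str, AlgoFn] = {}
        self._max_calls = max_calls_per_second
        self._clock = clock
        self._window_start = clock()
        self._calls_in_window = 0

    def register(self, name: str, fn: AlgoFn) -> None:
        self._routes[name] = fn

    def compose(self, name: str, steps: List[str]) -> None:
        """Register a composite service that runs atomic services in order."""
        def composite(payload: Dict[str, Any]) -> Dict[str, Any]:
            result = dict(payload)
            for step in steps:
                # Each step sees the outputs of the steps before it.
                result.update(self._routes[step](result))
            return result
        self._routes[name] = composite

    def _allow(self) -> bool:
        # Fixed-window throttle: at most max_calls per one-second window.
        now = self._clock()
        if now - self._window_start >= 1.0:
            self._window_start = now
            self._calls_in_window = 0
        if self._calls_in_window >= self._max_calls:
            return False
        self._calls_in_window += 1
        return True

    def call(self, name: str, payload: Dict[str, Any]) -> Dict[str, Any]:
        if name not in self._routes:
            return {"ok": False, "error": "unknown algorithm"}
        if not self._allow():
            return {"ok": False, "error": "throttled"}
        try:
            return {"ok": True, "data": self._routes[name](payload)}
        except Exception as exc:
            # A failing algorithm must not take the gateway down.
            return {"ok": False, "error": f"algorithm failed: {exc}"}


gateway = AlgorithmGateway(max_calls_per_second=2)
gateway.register("value_judgment", lambda p: {"value": p["distance_km"] * 2.0})
gateway.register("risk_estimation", lambda p: {"risky": p["value"] > 50})
gateway.compose("order_check", ["value_judgment", "risk_estimation"])
print(gateway.call("order_check", {"distance_km": 30}))
```

A production gateway would add authentication, circuit breaking, and gray release on top of this; the point here is only that composition lives in the gateway while each registered function stays atomic.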

Therefore, the gateway service inevitably needs the support of a flexible, elastic, lightweight, and stateless algorithm business layer. In terms of technology selection, the currently popular serverless architecture effectively meets the preceding requirements.

In 2009, researchers at UC Berkeley defined cloud computing from a distinctive perspective. In the last four years, their article has been widely quoted, because its viewpoint matches the technical scenarios at the initial stage of a business: reduce service-oriented work and focus only on business logic or algorithm logic, in order to achieve fast iteration and on-demand computing.

In 2019, Berkeley further proposed that the interfaces provided by serverless architecture simplify cloud programming. In their view, this represents another leap in programmer productivity, comparable to the move from assembly language to high-level languages.

In the current exploration of serverless architecture, Function as a Service (FaaS) is widely regarded as the best example, largely because of its characteristics. A FaaS function is lightweight and stateless, starts and runs quickly once warm, and is therefore especially suitable for stateless applications. Although FaaS has a bottleneck in cold startup, the architecture solves many problems, and the quick practice of algorithms at the initial stage of a business fits its characteristics well.

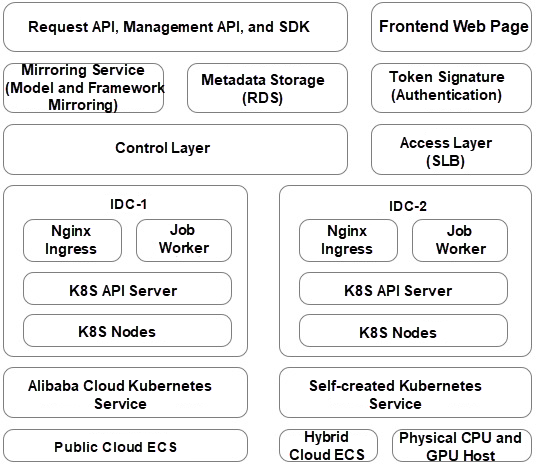

Based on the serverless architecture, we have deployed algorithm services shown in the following figure.

As shown in the preceding figure, after the local development of an algorithm service is completed, the algorithm is released directly as a function. This means that the completion of offline strategy research equals the completion of the service code, as discussed earlier in this article. It also solves the problem of algorithm personnel being disconnected from the business. Many algorithm personnel cannot develop an entire engineering service on their own and instead hand their code to engineering personnel for integration, packaging, and publishing. From the perspective of the entire business chain, this way of collaborating is clearly inefficient.

On the one hand, algorithm personnel cannot provide business support independently. On the other hand, engineering personnel not only need to handle logic calls, but also need to spend time understanding the algorithm's implementation, principles, data requirements, exceptions, and other details. In most cases, the meetings and communication around a relatively simple algorithm service take up most of their valuable work time. The introduction of FaaS therefore not only simplifies this development process but also maximizes the automation of algorithm deployment and makes it easier to assemble and reuse algorithms across businesses.

In our implementation process, we first built a Python runtime environment, so that Python code and related models are directly transformed to services. The models support PMML and TensorFlow, meeting the requirements at the initial business phase, such as fast iteration and reduced trial-and-error costs.
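
To make the idea of turning offline Python code directly into a service concrete, here is a minimal sketch. The entry point name `handler`, the JSON-in/JSON-out contract, and the ETA formula are assumptions for illustration; the article does not specify the platform's actual function signature.

```python
import json
from typing import Any, Dict


def estimate_eta(distance_km: float, avg_speed_kmh: float) -> float:
    """Strategy logic exactly as it might be written during offline research."""
    if avg_speed_kmh <= 0:
        raise ValueError("avg_speed_kmh must be positive")
    return distance_km / avg_speed_kmh * 60.0


def handler(event: str) -> str:
    """Hypothetical FaaS entry point: JSON in, JSON out, no state kept."""
    try:
        params: Dict[str, Any] = json.loads(event)
        minutes = estimate_eta(
            float(params["distance_km"]), float(params["avg_speed_kmh"])
        )
        return json.dumps({"ok": True, "eta_minutes": round(minutes, 1)})
    except (KeyError, ValueError, TypeError) as exc:
        # Malformed input or invalid values are reported, not raised.
        return json.dumps({"ok": False, "error": str(exc)})


print(handler('{"distance_km": 12, "avg_speed_kmh": 36}'))
```

The same `estimate_eta` function can be called from a notebook during research and through `handler` online, which is the sense in which finishing the research finishes the service.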

After this, we gradually added runtime environments based on Golang and Java. We chose Golang because it supports concurrency well: it not only meets the performance requirements of the business but also keeps development relatively simple.

After algorithms are atomized and turned into functions, algorithm personnel are able to manage an online business independently. However, this also raises serious concerns about service stability, project quality, and service correctness. Simply passing these problems on to algorithm personnel is nothing but a shift in workload. Even worse, it may put the entire business at risk of downtime. Building a quality assurance system therefore becomes an important step.

Many people think that quality assurance means handing the service to testing personnel for testing or regression testing. This is a misconception: it merely transfers the labor saved in the previous two steps to testing personnel. Since the engineering capabilities of algorithm personnel are relatively weak, the obvious reaction is to add more testing personnel for verification, but if we hold on to that approach, we will still not be able to accelerate business iteration. So we must ask: can the quality assurance process be completely automated? The answer is yes.

In the process of strategy research, we generally go through the following steps:

1) Data analysis

2) Algorithm design

3) Data verification

4) Manual or automatic evaluation

5) Policy iteration by repeating steps 1 to 4

This process already produces the data required for the quality assurance system: data sets, testing sets, and verification sets. Algorithm personnel use these three sets to run an automated testing process that outputs QPS, RT, consistency rate, and other relevant metrics. Among these sets, the data set is used for stability testing, the testing set is used to verify logical correctness, and the verification set is used for performance testing.
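
A simplified sketch of such an automated check is shown below. It runs one function over a list of cases and reports throughput, response time, consistency rate, and error rate. The function name `run_quality_check`, the metric names, and the sample cases are hypothetical, and the run is sequential in a single process, so the QPS figure is only a rough indicator rather than the result of a real stress test.

```python
import time
from typing import Any, Callable, Dict, List, Tuple

Case = Tuple[Dict[str, Any], Any]  # (request payload, expected output)


def run_quality_check(
    fn: Callable[[Dict[str, Any]], Any], cases: List[Case]
) -> Dict[str, float]:
    """Run fn over all cases and summarize speed, correctness, and stability."""
    if not cases:
        raise ValueError("cases must not be empty")
    latencies: List[float] = []
    matches = 0
    errors = 0
    started = time.perf_counter()
    for payload, expected in cases:
        t0 = time.perf_counter()
        try:
            actual = fn(payload)
            failed = False
        except Exception:
            actual, failed = None, True
        latencies.append(time.perf_counter() - t0)
        if failed:
            errors += 1          # stability: the call raised
        elif actual == expected:
            matches += 1         # correctness: output equals expectation
    elapsed = max(time.perf_counter() - started, 1e-9)
    total = len(cases)
    return {
        "qps": total / elapsed,
        "avg_rt_ms": 1000.0 * sum(latencies) / total,
        "max_rt_ms": 1000.0 * max(latencies),
        "consistency_rate": matches / total,
        "error_rate": errors / total,
    }


def double(payload: Dict[str, Any]) -> int:
    return payload["x"] * 2


# Two cases match, one mismatches, and one raises because "x" is missing.
testing_set: List[Case] = [({"x": 1}, 2), ({"x": 2}, 4), ({"x": 3}, 7), ({}, 0)]
report = run_quality_check(double, testing_set)
print(report)
```

In practice the same routine could be pointed at each of the three sets in turn, reading the error rate from the data set, the consistency rate from the testing set, and QPS and RT from the verification set.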

These measures make up only part of the entire quality assurance system. For fast business iteration, A/B verification or verification against full traffic is generally also required. In our implementation, we carry out full accompaniment verification by diverting traffic through process chains, so that every algorithm proposed for launch receives a comprehensive quality evaluation.
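
One way to read "accompaniment verification" is as a shadow run: the candidate algorithm receives a copy of each live request, its output is recorded and compared, but only the production result is returned to the caller. That reading is an interpretation rather than something the article spells out, and the names `serve_with_shadow` and `agreement_rate` below are hypothetical.

```python
from typing import Any, Callable, Dict, List

Algo = Callable[[Dict[str, Any]], Any]


def serve_with_shadow(
    request: Dict[str, Any],
    production: Algo,
    candidate: Algo,
    log: List[Dict[str, Any]],
) -> Any:
    """Answer with the production result; run the candidate only for comparison."""
    response = production(request)
    try:
        shadow = candidate(request)
        log.append({"request": request, "match": shadow == response, "error": None})
    except Exception as exc:
        # A crashing candidate is recorded but never affects the caller.
        log.append({"request": request, "match": False, "error": repr(exc)})
    return response


def agreement_rate(log: List[Dict[str, Any]]) -> float:
    """Share of mirrored requests where candidate and production agreed."""
    if not log:
        return 0.0
    return sum(1 for entry in log if entry["match"]) / len(log)


def production(req: Dict[str, Any]) -> bool:
    return req["km"] > 10


def candidate(req: Dict[str, Any]) -> bool:
    return req["km"] >= 10  # differs from production only at exactly 10 km


shadow_log: List[Dict[str, Any]] = []
answers = [
    serve_with_shadow({"km": km}, production, candidate, shadow_log)
    for km in (5, 10, 20)
]
print(answers, agreement_rate(shadow_log))
```

A real deployment would run the candidate asynchronously so that it adds no latency to the live path; the synchronous call here keeps the sketch short.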

The centralized construction of algorithm development and engineering has basically fulfilled the demand for fast iteration at the initial stage of the business. In the process of project implementation, the challenges to engineering personnel come not only from engineering practices but also from a clear understanding of the stage where the business operates at that point in time.

Currently, algorithm development and engineering can be independently carried out on the premise that data and algorithms are separate, which implies that the algorithm services are stateless and functionalized. This in turn increases the time spent on the construction of the feature service layer. Likewise, the feature service layer can also be built and shared based on similar logic.

Finally, note that the algorithm-engineering integrated architecture basically meets the demands of business algorithms. For artificial intelligence (AI) projects that require massive computing power, however, frequent data acquisition creates bottlenecks that significantly hinder algorithm innovation. The industry is responding by integrating data storage units with computing units, producing new computing-storage integrated hardware architectures that aim to remove these bottlenecks in AI computing power.

