This article was written jointly by the Alibaba Cloud Data Lake Formation (DLF) Team and the Databricks DataInsight (DDI) Team. It aims to give readers have a deeper understanding of Alibaba Cloud Data Lake Formation and Databricks DataInsight to build a one-stop cloud data lake solution.

Chen Xinwei (Xikang), Technical Expert of Alibaba Cloud Computing Platform

Feng Jialiang (Jialiang), Technical R&D of Alibaba Cloud Computing Platform

Since the development in the data era, the amount of data is growing explosively, and the data forms are becoming more diverse. The traditional data warehouse model has increasingly prominent problems, such as high costs, slow response, and few supported formats. As a result, a data lake with a lower cost of ownership, richer data formats, and more flexible analysis and computing capabilities was created.

As a centralized data warehouse, data lakes support a variety of data types, including structured, semi-structured, and unstructured data. The data sources include database data, incremental binlog data, log data, and inventory data on existing data warehouses. Data lakes can manage the centralized storage of these data from different sources and formats in the cost-effective storage, Object Storage Service (OSS), and provide a unified data catalog to support multiple computing and analysis methods. This effectively solves the problem of data silos faced by enterprises and reduces the cost of storing and using data for enterprises significantly.

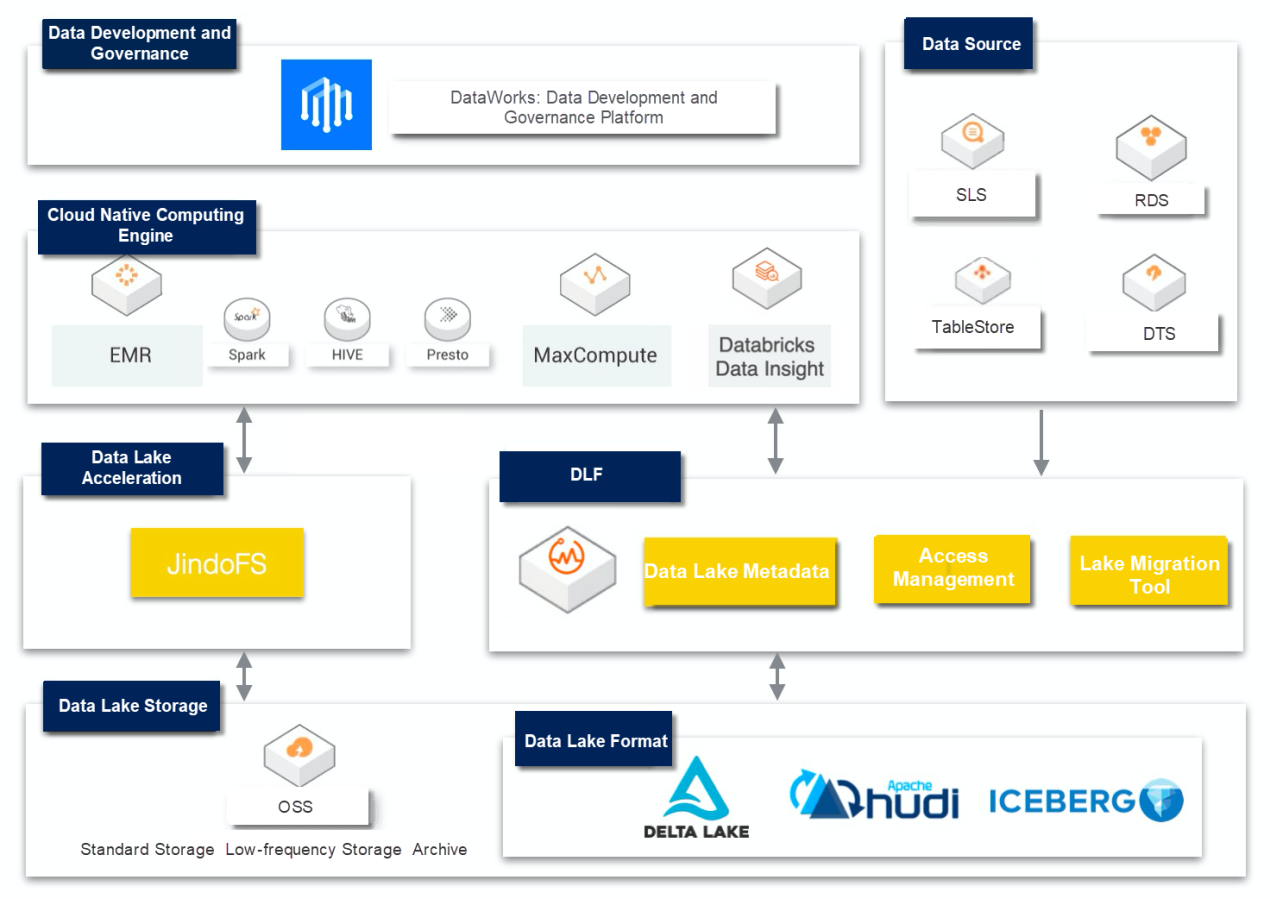

The enterprise-level data lake architecture is pictured below:

Data lake storage mainly uses Object Storage Service as the main medium, which has the advantages of low costs, high stability, and high scalability.

We can use data lake storage formats that support ACID (atomicity, consistency, isolation, durability), such as Delta Lake, Hudi, and Iceberg. These data lake formats have their own data meta management capabilities and can support operations, such as Update and Delete. They solve the problem of real-time data updates in big data scenarios in a batch-stream integrated manner. In the current solution, we mainly introduce the core capabilities and use scenarios of Delta Lake.

Delta Lake is a unified data management system that brings data reliability and fast analysis to data lakes on the cloud. Delta Lake runs on top of an existing data lake and is fully compatible with Apache Spark's API. With Delta Lake, you can speed up the import of high-quality data into your data lake. Teams can also use this data quickly on cloud services, securely and in a scalable way.

The raw data of an enterprise exists in a variety of databases or storage systems, such as the relational database MySQL, the log system SLS, the NoSQL database HBase, and the message database Kafka. Most of the online storage is for online transactional businesses and is not suitable for online analysis scenarios. Therefore, data needs to be synchronized in a non-intrusive manner to Object Storage Service that is lower in cost and more suitable for computing and analysis.

Commonly used data synchronization methods include batch synchronization based on data synchronization tools, such as DataX and Sqoop. At the same time, in scenarios with high real-time requirements, streaming synchronization links, such as Kafka + Spark Streaming / Flink, are used together. Currently, many cloud vendors provide a one-stop solution to enter the lake to help customers realize data entering the lake in a faster and lower-cost way, such as Alibaba Cloud DLF data entering the lake.

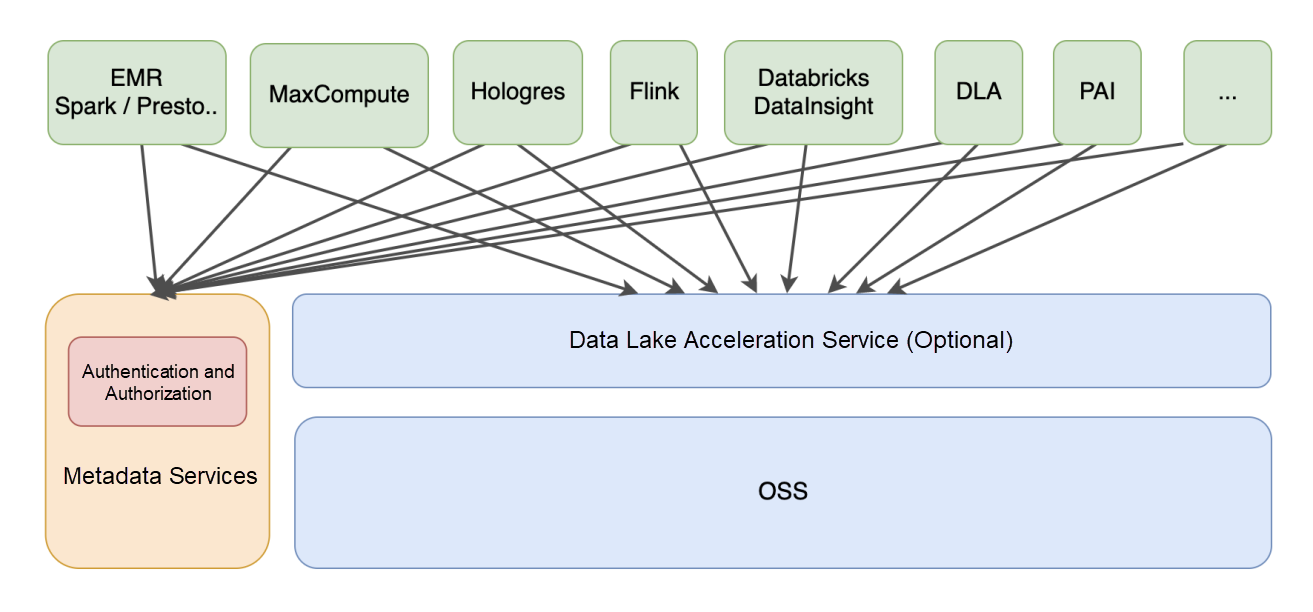

Object Storage Service (OSS) does not have semantics for big data analysis. It needs to combine metadata services, such as Hive Metastore Service to provide metadata information for various upper-layer analysis engines. The design goal of the data lake metadata service is to build a unified metadata view of different storage systems, formats, and different computing engines in an environment of big data engine and storage diversity. It should have unified permissions and metadata. It should also be compatible and expand the open-source big data ecosystem metadata service, support the automatic acquisition of metadata, and achieve the purpose of managing multiple uses at one time so that it can be compatible with the open-source ecosystem. It is also easy to use.

Compared with data warehouses, data lakes connect to a variety of different computing engines in a more open way, such as the traditional open-source big data computing engines Hive, Spark, Presto, and Flink. They also support big data engines developed by cloud service providers, such as Alibaba Cloud MaxCompute and Hologres. Between data lake storage and computing engines, data lake acceleration services are generally provided to improve the performance of computing and analysis, while reducing the cost and pressure of bandwidth.

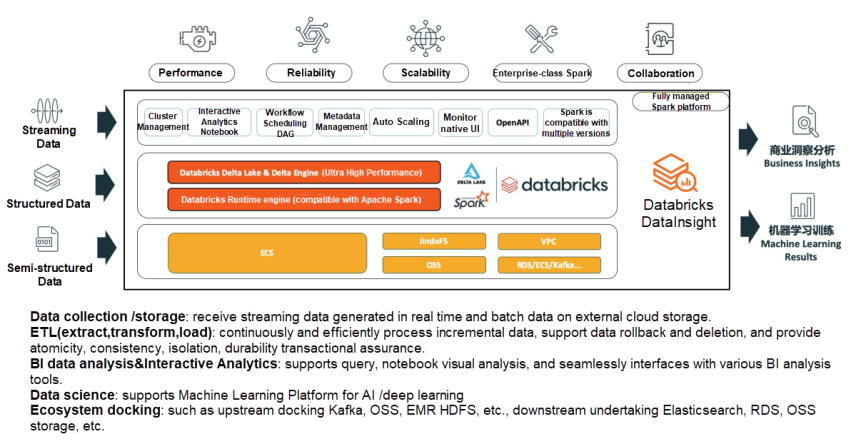

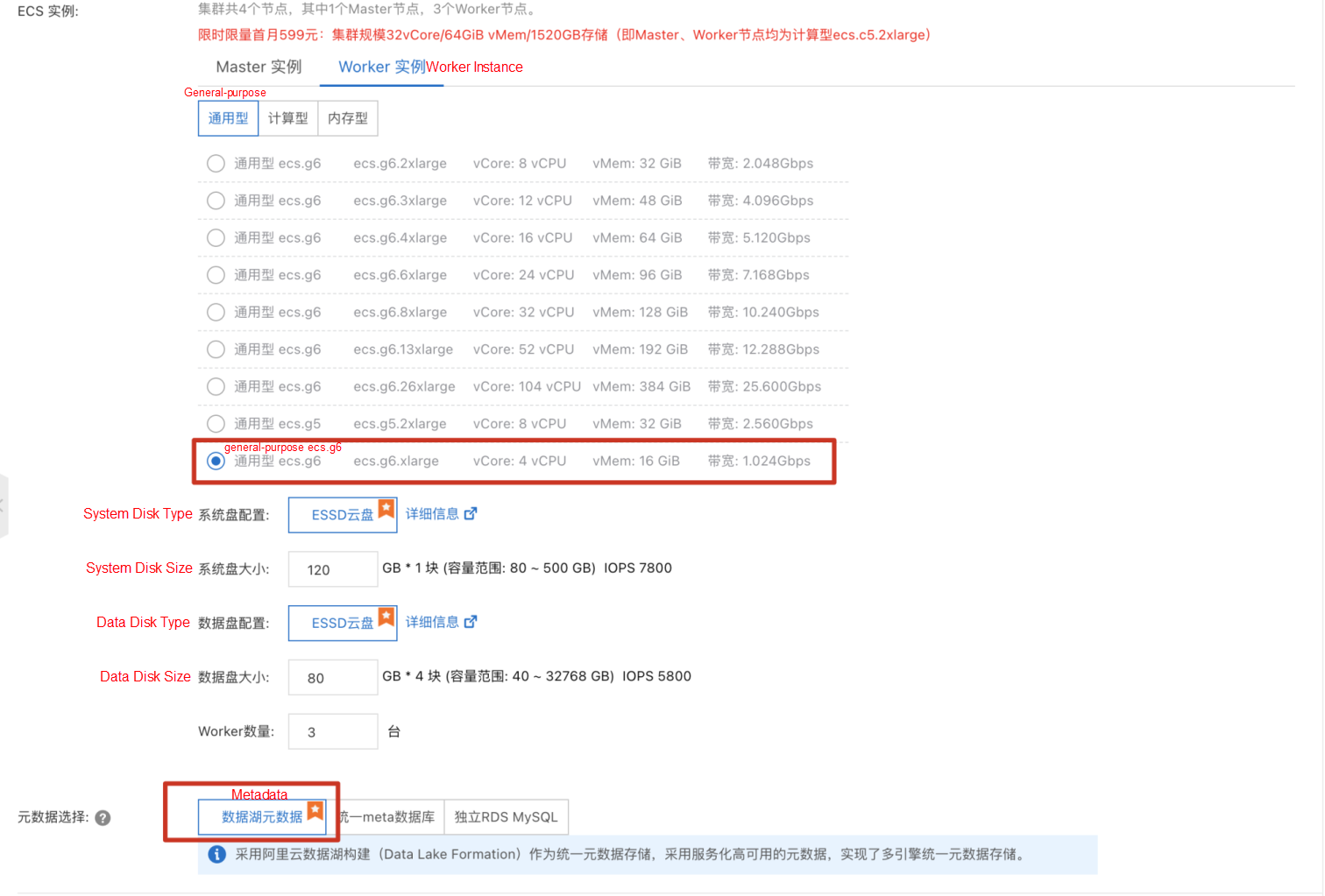

As a fully managed Spark analytics engine on Alibaba Cloud, DataBricks DataInsight helps users calculate and analyze data in a data lake.

When an enterprise builds and uses a data lake, the entire process involves data ingestion, data lake storage and management, and data lake exploration and analytics. This article mainly introduces how to build a one-stop data entry into the lake based on Alibaba Cloud Data Lake Formation (DLF) and Databricks DataInsight (DDI).

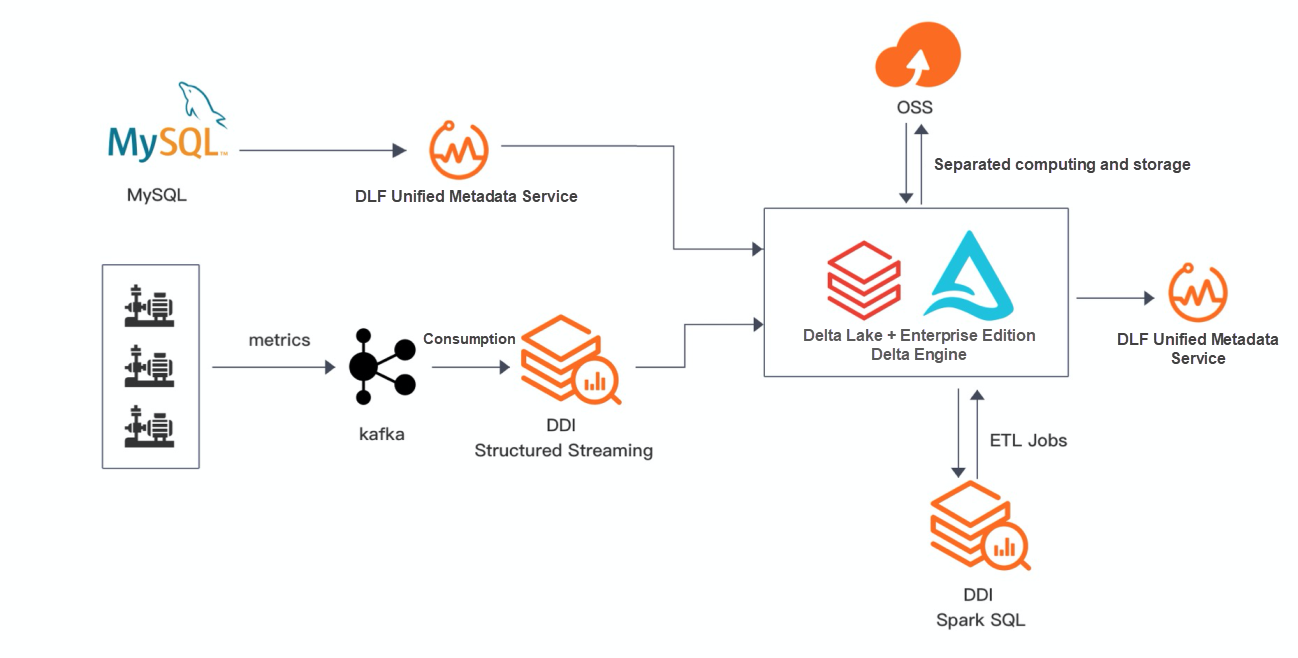

Stream Processing Scenarios:

Real-Time Scenario maintenance updates two Delta tables:

delta_aggregates_func table: RDS data enters the lake in real-time.delta_aggregates_metrics: Industrial metric data is collected to Kafka through the IoT platform and entered into the lake in real-time through the Spark Structured Streaming.Batch Processing Scenarios:

Two DELTAs are generated in real-time scenarios as data sources for data analysis, and the execution of Spark jobs are scheduled through Databrick DataInsight.

Make sure that cloud services, such as DLF, OSS, Kafka, DDI, RDS, and DTS, are activated. Note: DLF, RDS, Kafka, and DDI instances must be in the same region.

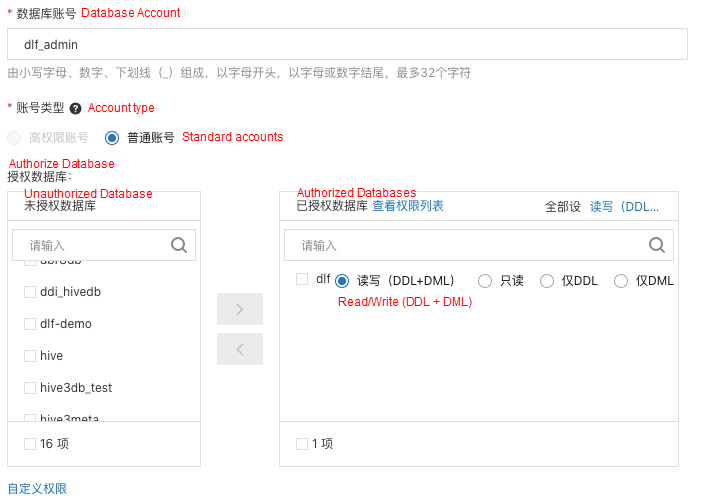

RDS Data Preparation: Create the dlfdb database in RDS. Create a user account that can read engine_funcs databases in the account center, such as dlf_admin.

Log on to the database through DMS, run the following statement to create the engine_funcs table, and insert a small amount of data.

CREATE TABLE `engine_funcs` ( `emp_no` int(11) NOT NULL,

`engine_serial_number` varchar(20) NOT NULL,

`engine_serial_name` varchar(20) NOT NULL,

`target_engine_serial_number` varchar(20) NOT NULL,

`target_engine_serial_name` varchar(20) NOT NULL,

`operator` varchar(16) NOT NULL,

`create_time` DATETIME NOT NULL,

`update_time` DATETIME NOT NULL,

PRIMARY KEY (`emp_no`)

) ENGINE=InnoDB DEFAULT CHARSET=utf8

INSERT INTO `engine_funcs` VALUES (10001,'1107108133','temperature','1107108144','temperature','/', now(), now());

INSERT INTO `engine_funcs` VALUES (10002,'1107108155','temperature','1107108133','temperature','/', now(), now());

INSERT INTO `engine_funcs` VALUES (10003,'1107108155','runTime','1107108166','speed','/', now(), now());

INSERT INTO `engine_funcs` VALUES (10004,'1107108177','pressure','1107108155','electricity','/', now(), now());

INSERT INTO `engine_funcs` VALUES (10005,'1107108188','flow' ,'1107108111','runTime','/', now(), now());

Create a bucket in OSS named databricks-data-source

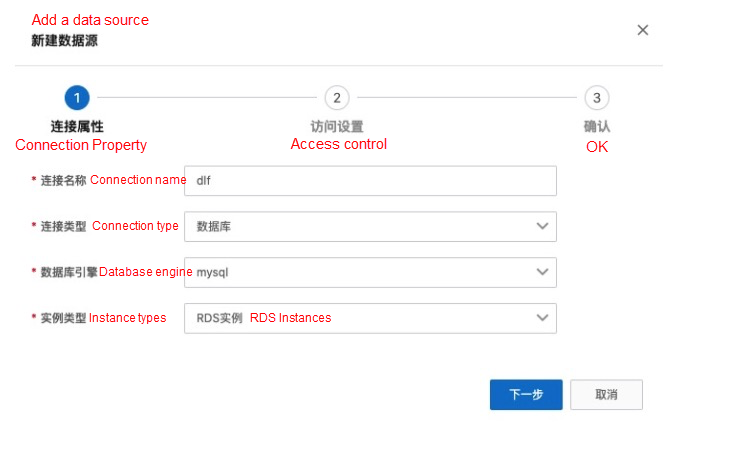

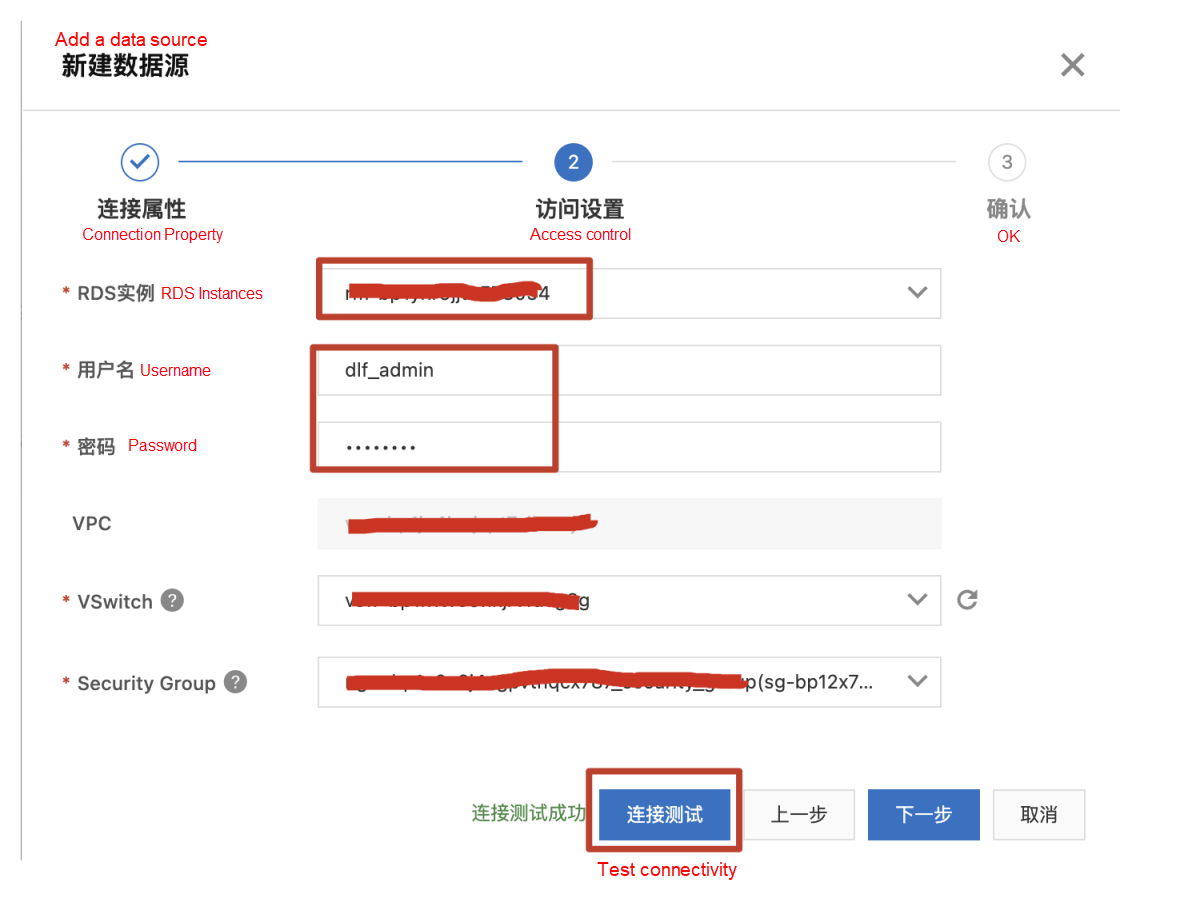

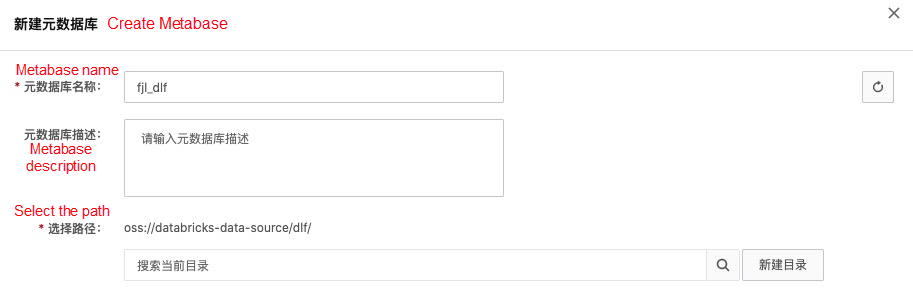

On the left-side navigation pane, choose "metadata management" → "metabase" and click "new metabase." Enter a name, create a new directory dlf/, and choose the directory.

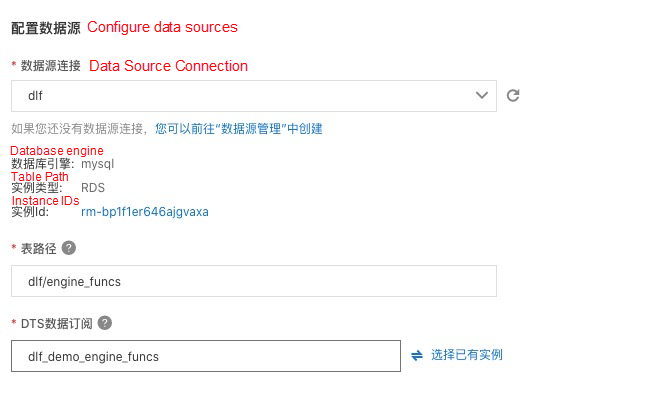

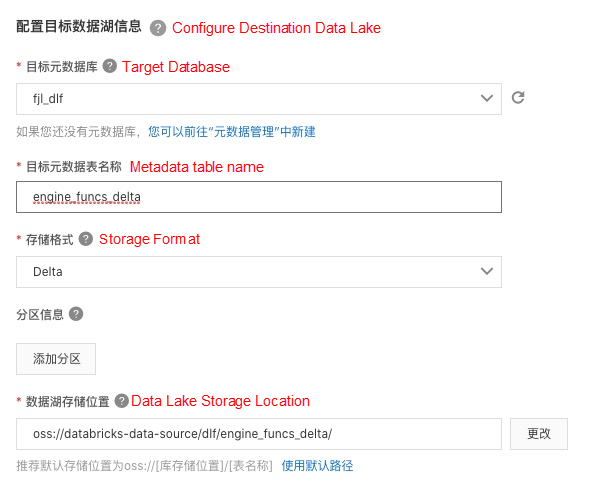

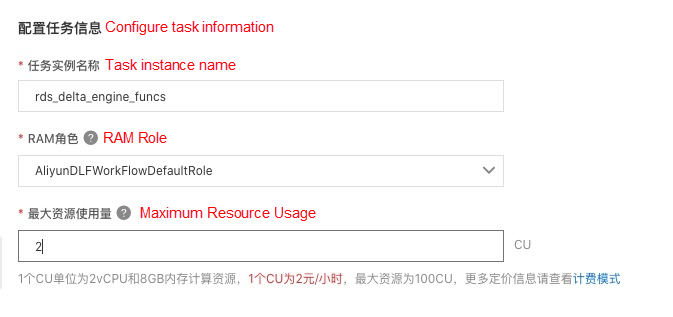

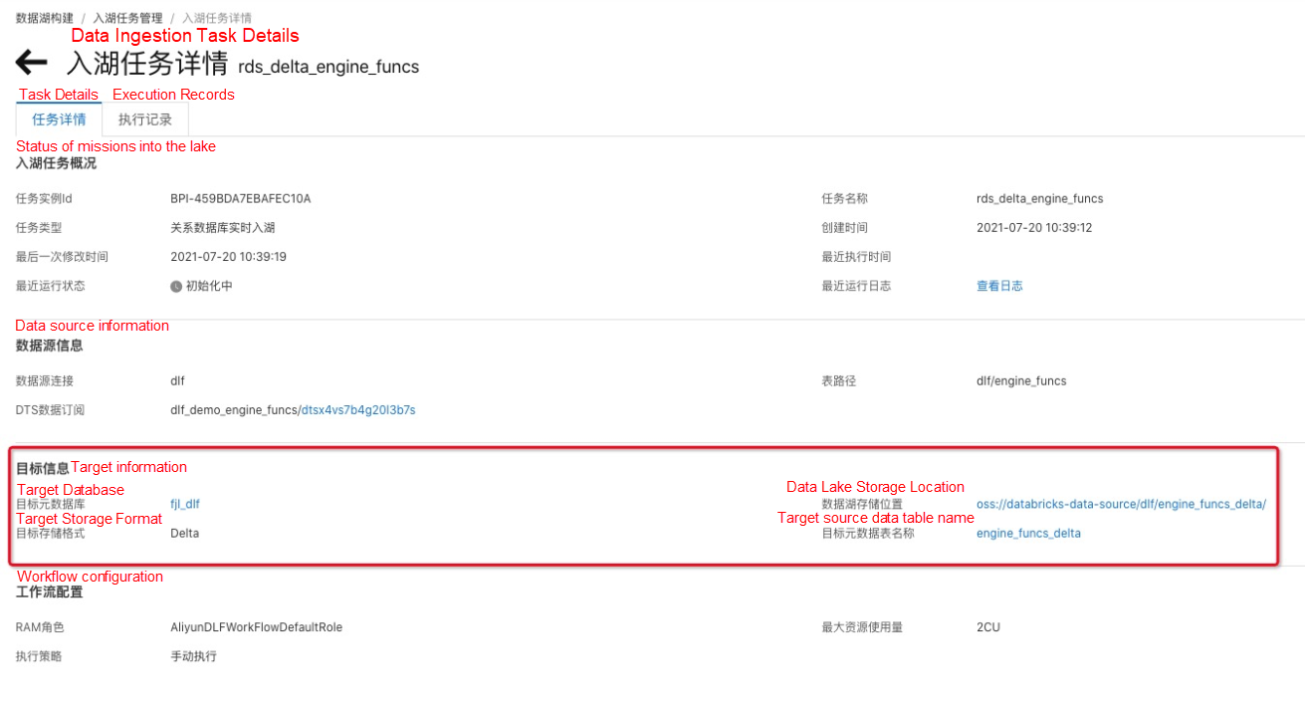

This data entry task belongs to the full and incremental entry task. After about 3-5 minutes, the full data will be imported and enter the real-time monitoring states automatically. If the data is updated, the data is updated to Delta Lake automatically.

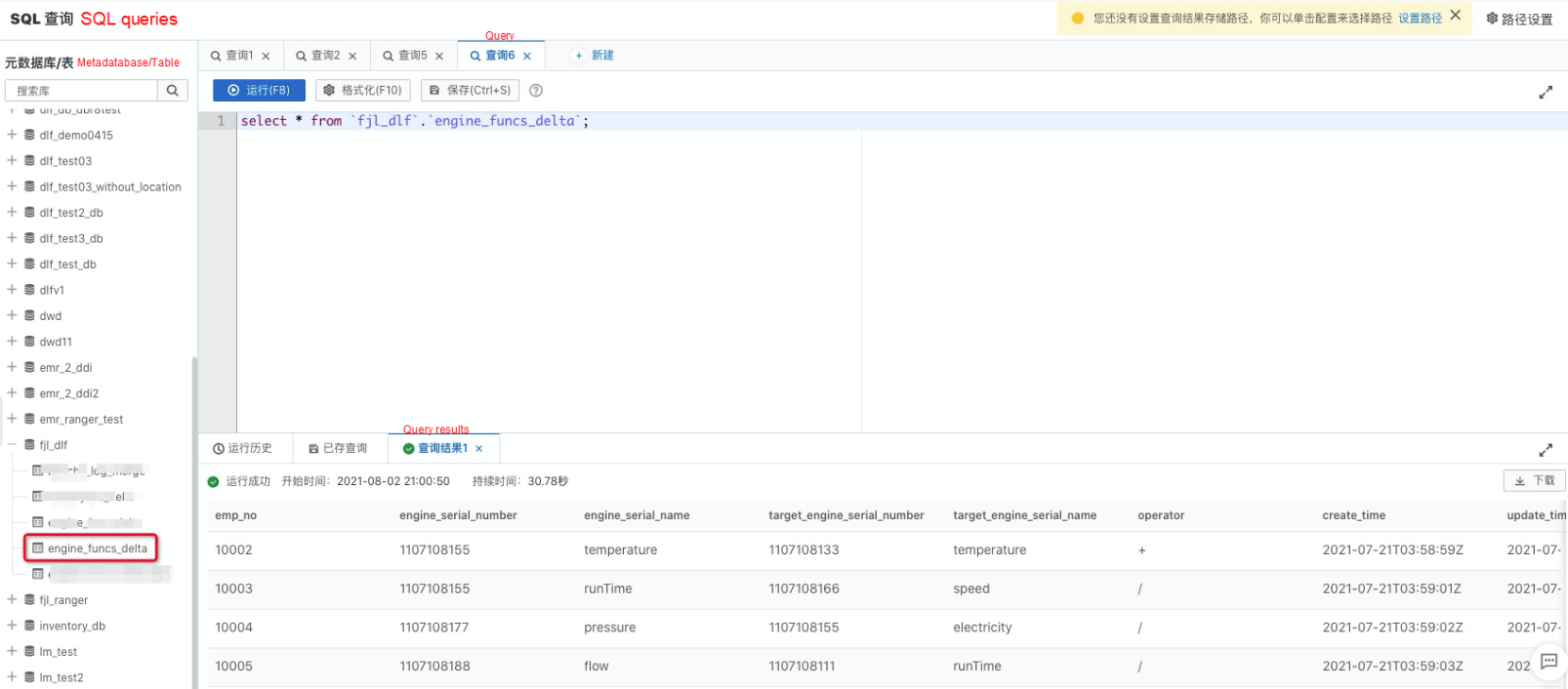

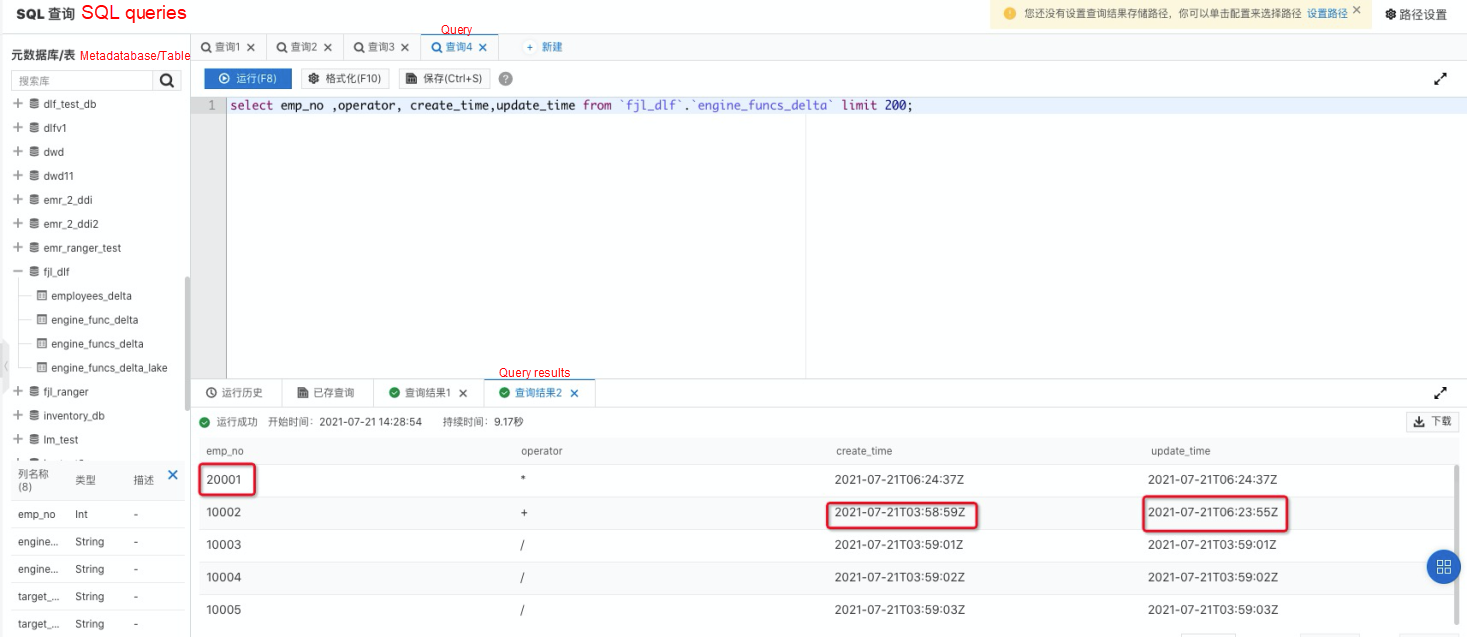

DLF products provide lightweight data preview and exploration functions. Choose "Data Exploration" → "SQL Query" to enter the data query page.

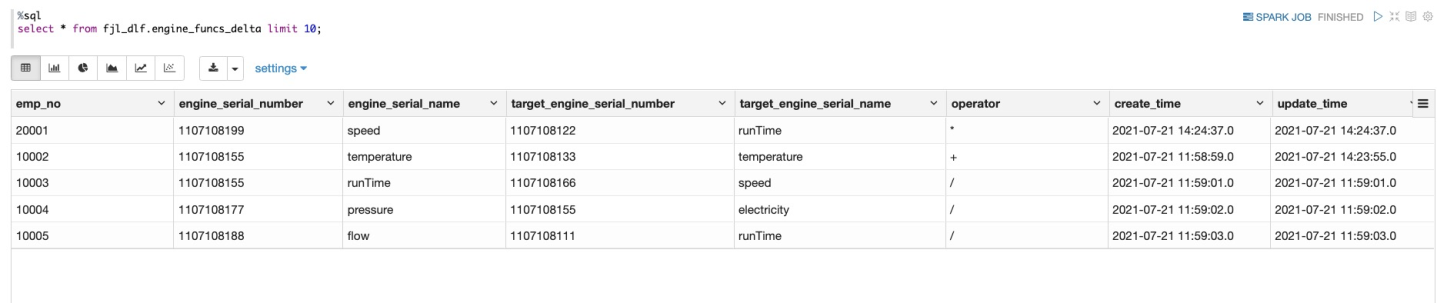

In the metadatabase table, find "fjl_dlf" and expand it to see that the engine_funcs_delta table has been created automatically. Double-click the table name, and the SQL statement for querying the table appears in the SQL edit box on the right. Then, click "run" to obtain the data query result.

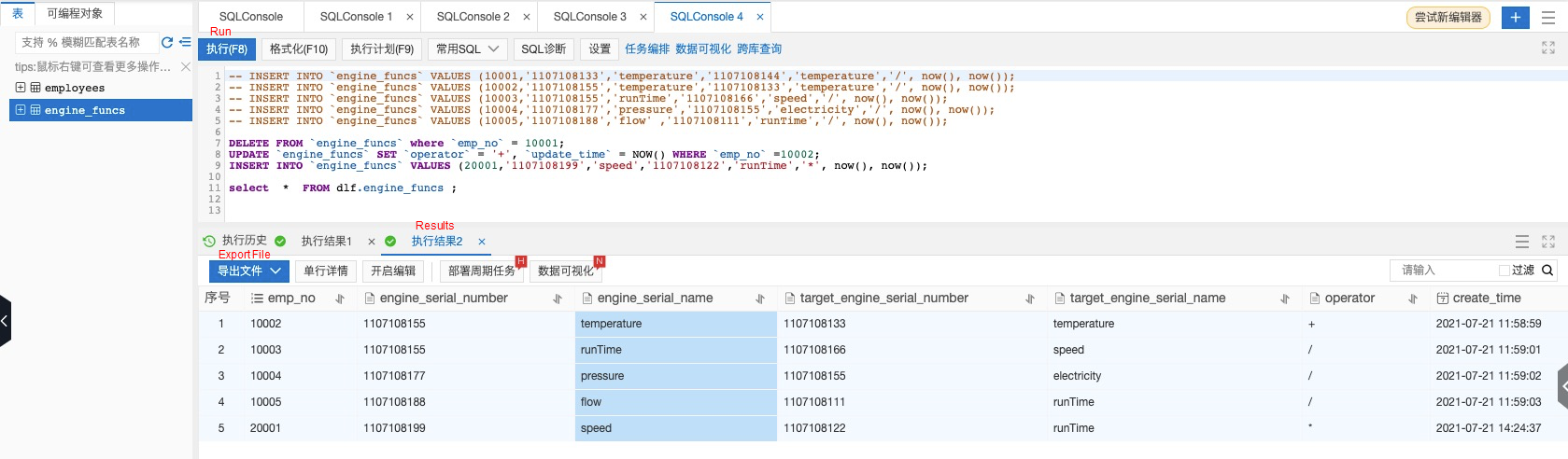

Go back to the DMS console and run the DELETE and INSERT SQL statements below.

DELETE FROM 'engine_funcs' where 'emp_no'=10001;

UPDATE 'engine_funcs' SET 'operator'='+', 'update_time'=NOW () WHERE 'emp_no'=10002;

INSERT INTO 'engine_funcs' VALUES (20001, '1107108199', 'speed', '1107108122', 'runTime', '*', now (), now ());

After about 1-3 minutes, execute the select statement in DLF data exploration again. All data updates have been synchronized to the data lake.

engine_funcs_delta table data synchronized to Delta Lake.

spark-sql-kafka tripartite Dependencies% spark. conf

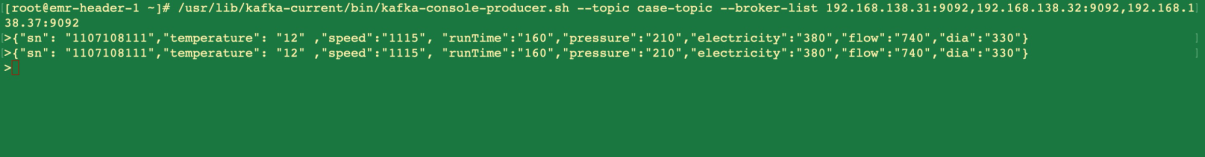

spark. jars. packages org. apache. spark :spark-sql-kafka -0-10_2.12:3.0.1The format of the test data sent to Kafka is listed below:

{"sn": "1107108111","temperature": "12" ,"speed":"1115", "runTime":"160","pressure":"210","electricity":"380","flow":"740","dia":"330"}

{"sn": "1107108122","temperature": "13" ,"speed":"1015", "runTime":"150","pressure":"220","electricity":"390","flow":"787","dia":"340"}

{"sn": "1107108133","temperature": "14" ,"speed":"1215", "runTime":"140","pressure":"230","electricity":"377","flow":"777","dia":"345"}

{"sn": "1107108144","temperature": "15" ,"speed":"1315", "runTime":"145","pressure":"240","electricity":"367","flow":"730","dia":"430"}

{"sn": "1107108155","temperature": "16" ,"speed":"1415", "runTime":"155","pressure":"250","electricity":"383","flow":"750","dia":"345"}

{"sn": "1107108166","temperature": "10" ,"speed":"1515", "runTime":"145","pressure":"260","electricity":"350","flow":"734","dia":"365"}

{"sn": "1107108177","temperature": "12" ,"speed":"1115", "runTime":"160","pressure":"210","electricity":"377","flow":"733","dia":"330"}

{"sn": "1107108188","temperature": "13" ,"speed":"1015", "runTime":"150","pressure":"220","electricity":"381","flow":"737","dia":"340"}

{"sn": "1107108199","temperature": "14" ,"speed":"1215", "runTime":"140","pressure":"230","electricity":"378","flow":"747","dia":"345"}%spark

import org.apache.spark.sql._

import io.delta.tables._

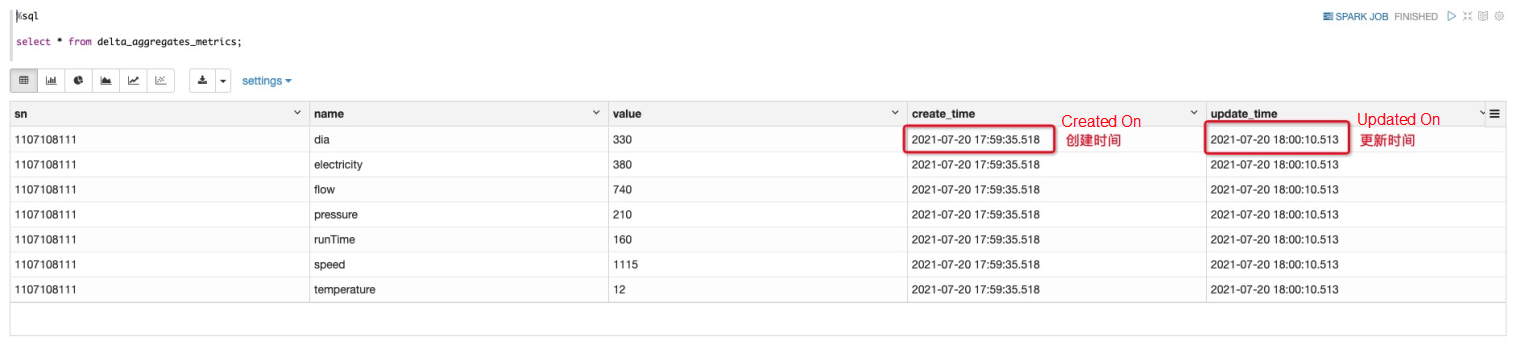

def upsertToDelta(microBatchOutputDF: DataFrame, batchId: Long) {

microBatchOutputDF.createOrReplaceTempView("dataStream")

// Execute the column-to-row operation for streaming data to the DF;

val df=microBatchOutputDF.sparkSession.sql(s"""

select `sn`,

stack(7, 'temperature', `temperature`, 'speed', `speed`, 'runTime', `runTime`, 'pressure', `pressure`, 'electricity', `electricity`, 'flow', `flow` , 'dia', `dia`) as (`name`, `value` )

from dataStream

""")

df.createOrReplaceTempView("updates")

// Update dynamic data in real time and merge the result into the table.

val mergedf=df.sparkSession.sql(s"""

MERGE INTO delta_aggregates_metrics t

USING updates s

ON s.sn = t.sn and s.name=t.name

WHEN MATCHED THEN UPDATE SET

t.value = s.value,

t.update_time=current_timestamp()

WHEN NOT MATCHED THEN INSERT

(t.sn,t.name,t.value ,t.create_time,t.update_time)

values (s.sn,s.name,s.value,current_timestamp(),current_timestamp())

""")

}%spark

import org.apache.spark.sql.functions._

import org.apache.spark.sql.streaming.Trigger

def getquery(checkpoint_dir:String,servers:String,topic:String ){

var streamingInputDF =

spark.readStream

.format("kafka")

.option("kafka.bootstrap.servers", servers)

.option("subscribe", topic)

.option("startingOffsets", "latest")

.option("minPartitions", "10")

.option("failOnDataLoss", "true")

.load()

var streamingSelectDF =

streamingInputDF

.select(

get_json_object(($"value").cast("string"), "$.sn").alias("sn"),

get_json_object(($"value").cast("string"), "$.temperature").alias("temperature"),

get_json_object(($"value").cast("string"), "$.speed").alias("speed"),

get_json_object(($"value").cast("string"), "$.runTime").alias("runTime"),

get_json_object(($"value").cast("string"), "$.electricity").alias("electricity"),

get_json_object(($"value").cast("string"), "$.flow").alias("flow"),

get_json_object(($"value").cast("string"), "$.dia").alias("dia"),

get_json_object(($"value").cast("string"), "$.pressure").alias("pressure")

)

val query = streamingSelectDF

.writeStream

.format("delta")

.option("checkpointLocation", checkpoint_dir)

.trigger(Trigger.ProcessingTime("5 seconds")) // Execute stream processing interval

.foreachBatch(upsertToDelta _) // Reference the upsertToDelta function.

.outputMode("update")

.start()

}% spark

val my_checkpoint_dir="oss://databricks-data-source/checkpoint/ck"

val servers="***.***.***.***:9092"

val topic="your-topic"

getquery(my_checkpoint_dir,servers,topic)

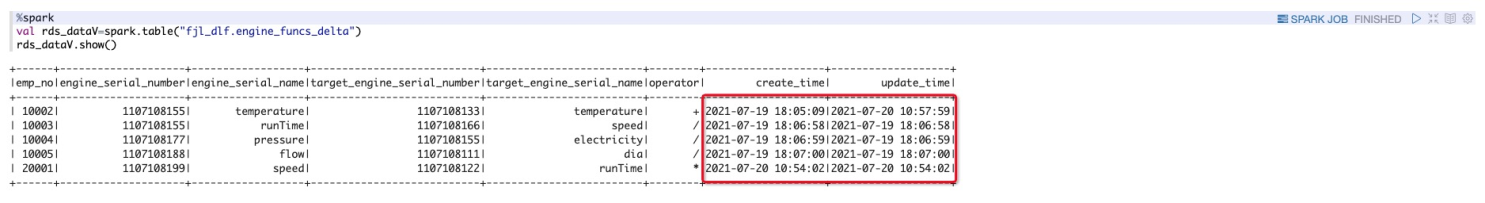

engine_funcs_delta data synchronized from MySQL to the lake in real-time% spark

val rds_dataV=spark. table ("fjl_dlf.engine_funcs_delta")

rds_dataV. show ()

You need to join the Value parameter in the corresponding delta_aggregates_metrics to the engine_funcs_delta table in combination with the business.

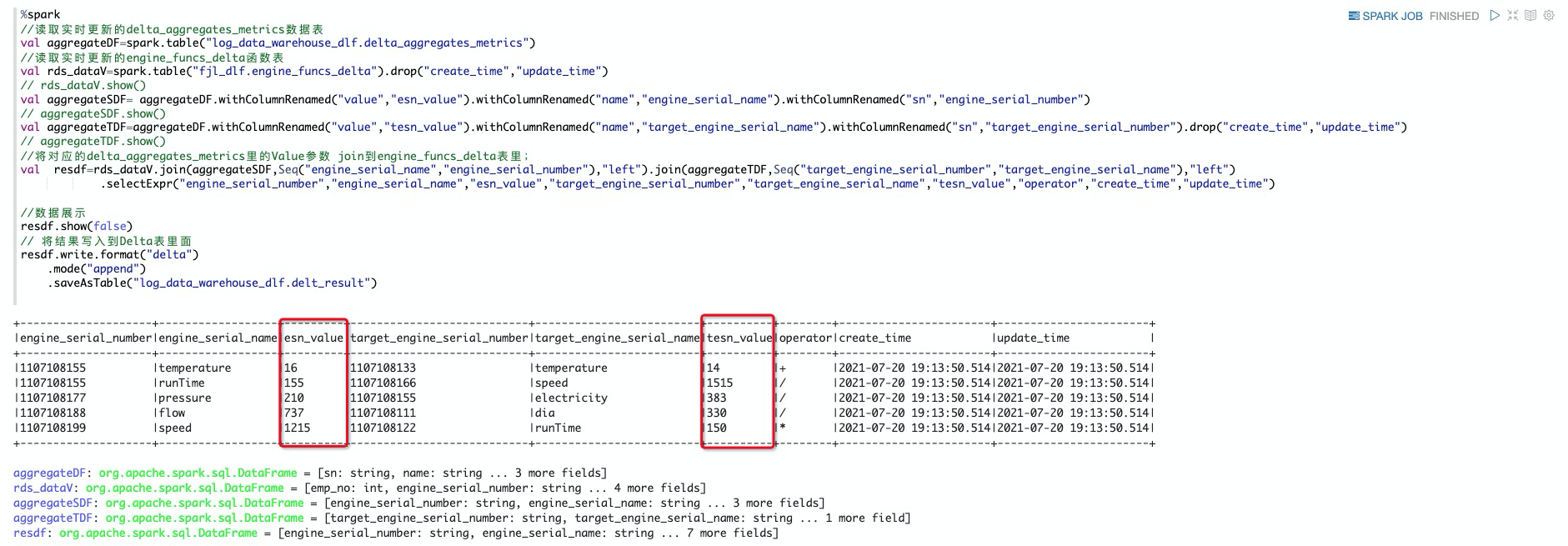

%spark

// Read the updated delta_aggregates_metrics data table in real time.

val aggregateDF=spark.table("log_data_warehouse_dlf.delta_aggregates_metrics")

// Read the engine_funcs_delta function table that is updated in real time.

val rds_dataV=spark.table("fjl_dlf.engine_funcs_delta").drop("create_time","update_time")

// rds_dataV.show()

val aggregateSDF= aggregateDF.withColumnRenamed("value","esn_value").withColumnRenamed("name","engine_serial_name").withColumnRenamed("sn","engine_serial_number")

// aggregateSDF.show()

val aggregateTDF=aggregateDF.withColumnRenamed("value","tesn_value").withColumnRenamed("name","target_engine_serial_name").withColumnRenamed("sn","target_engine_serial_number").drop("create_time","update_time")

// aggregateTDF.show()

// Join the Value parameter in the corresponding delta_aggregates_metrics to the engine_funcs_delta table;

val resdf=rds_dataV.join(aggregateSDF,Seq("engine_serial_name","engine_serial_number"),"left").join(aggregateTDF,Seq("target_engine_serial_number","target_engine_serial_name"),"left")

.selectExpr("engine_serial_number","engine_serial_name","esn_value","target_engine_serial_number","target_engine_serial_name","tesn_value","operator","create_time","update_time")

// Data display

resdf.show(false)

// Write the result to the Delta table.

resdf.write.format("delta")

.mode("append")

.saveAsTable("log_data_warehouse_dlf.delta_result")

In stream processing scenarios, a large number of small files are generated. The existence of a large number of small files seriously affects the read performance of the data system. Delta Lake provides the OPTIMIZE command to merge and compress small files. In addition, for Ad-Hoc query scenarios, we use the Z-Ordering mechanism provided by Delta Lake to improve the query performance because it involves queries on data in multiple dimensions of a single table. This improves the performance of reading tables significantly. Delta Lake provides the Auto Optimize option, but it sacrifices a small amount of write performance and increases the latency for writing data to the delta table. On the contrary, executing the OPTIMIZE command does not affect the write performance because Delta Lake supports MVCC and simultaneous write operations of OPTIMIZE. Therefore, we adopt the scheme of triggering the execution of OPTIMIZE periodically, merging small files through OPTIMIZE every hour, and executing VACCUM to clean up stale data files:

OPTIMIZE log_data_warehouse_dlf. delta_result ZORDER by engine_serial_number;

VACUUM log_data_warehouse_dlf. delta_result RETAIN 1 HOURS;I. Introduction to the Basics and Performance of Delta Lake:

II. Delta Lake Optimization:

Click the link below for more detailed information about Databricks DataInsight:

https://databricks.com/product/alibaba

Click the link below for more detailed information about Data Lake Analytics:

https://www.alibabacloud.com/product/data-lake-analytics

Alibaba Big Data Practices on Cloud-Native – EMR Spark on ACK

62 posts | 7 followers

FollowHologres - December 2, 2022

Alibaba EMR - June 8, 2021

Alibaba EMR - May 10, 2021

Alibaba Cloud Big Data and AI - April 15, 2026

Alibaba EMR - June 2, 2021

Alibaba EMR - February 15, 2023

62 posts | 7 followers

Follow Big Data Consulting for Data Technology Solution

Big Data Consulting for Data Technology Solution

Alibaba Cloud provides big data consulting services to help enterprises leverage advanced data technology.

Learn More Big Data Consulting Services for Retail Solution

Big Data Consulting Services for Retail Solution

Alibaba Cloud experts provide retailers with a lightweight and customized big data consulting service to help you assess your big data maturity and plan your big data journey.

Learn More Message Queue for Apache Kafka

Message Queue for Apache Kafka

A fully-managed Apache Kafka service to help you quickly build data pipelines for your big data analytics.

Learn More Data Lake Storage Solution

Data Lake Storage Solution

Build a Data Lake with Alibaba Cloud Object Storage Service (OSS) with 99.9999999999% (12 9s) availability, 99.995% SLA, and high scalability

Learn MoreMore Posts by Alibaba EMR