According to Wikipedia: "A data lake is a system or repository of data stored in its natural/raw format, usually object blobs or files. A data lake is usually a single store of all enterprise data including raw copies of source system data and transformed data used for tasks such as reporting, visualization, advanced analytics, and machine learning."

By definition, a data lake is a general-purpose data storage service that can store any type of data.

By that definition, even a USB flash drive qualifies as a data lake. A data lake can also take the form of Object Storage Service (OSS), Hadoop Distributed File System (HDFS), or Pangu (an Apsara distributed file system). All of them conform to the definition. When selecting the technology for an enterprise data lake, a top priority is choosing the storage medium or system that will back it. Different storage media and systems have their own advantages and disadvantages. For example, some offer faster response times for random reads or higher throughput for batch reads, while others offer lower storage costs, better scalability, or better-structured data organization. It is therefore crucial to exploit the advantages of each storage system while avoiding its disadvantages.

In theory, no single storage system can resolve these trade-offs once and for all. The practical approach is to provide a logical storage layer that lets you move data with different access characteristics between the underlying storage systems conveniently. With convenient data transport and data format conversion, each storage system can be used where its advantages matter most. In short, a mature data lake must be a logical storage system built on multiple types of storage systems at its bottom layer.
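The idea of a logical storage layer can be illustrated with a minimal sketch. Everything here is an assumption made for illustration: `InMemoryBackend` is a hypothetical stand-in for a real system such as OSS or HDFS, and `LogicalStore`, the tier names `hot` and `cold`, and the object key are invented. It is not how DataWorks or any Alibaba Cloud service is implemented.

```python
from typing import Dict


class InMemoryBackend:
    """Hypothetical stand-in for one physical storage system (e.g. OSS or HDFS)."""

    def __init__(self, name: str) -> None:
        self.name = name
        self._objects: Dict[str, bytes] = {}

    def put(self, key: str, data: bytes) -> None:
        self._objects[key] = data

    def get(self, key: str) -> bytes:
        return self._objects[key]

    def delete(self, key: str) -> None:
        del self._objects[key]

    def has(self, key: str) -> bool:
        return key in self._objects


class LogicalStore:
    """One logical namespace layered over several physical backends."""

    def __init__(self, backends: Dict[str, InMemoryBackend]) -> None:
        self._backends = backends
        self._location: Dict[str, str] = {}  # object key -> tier name

    def put(self, key: str, data: bytes, tier: str) -> None:
        # If the key already lives in another tier, drop the stale copy first.
        old = self._location.get(key)
        if old is not None and old != tier:
            self._backends[old].delete(key)
        self._backends[tier].put(key, data)
        self._location[key] = tier

    def get(self, key: str) -> bytes:
        # Callers never need to know which backend holds the object.
        return self._backends[self._location[key]].get(key)

    def move(self, key: str, tier: str) -> None:
        # Copy to the target tier, then remove the object from the source tier.
        src = self._location[key]
        if src == tier:
            return
        self._backends[tier].put(key, self._backends[src].get(key))
        self._backends[src].delete(key)
        self._location[key] = tier

    def tier_of(self, key: str) -> str:
        return self._location[key]


hot = InMemoryBackend("hot")
cold = InMemoryBackend("cold")
store = LogicalStore({"hot": hot, "cold": cold})

store.put("logs/2021-06-01.csv", b"id,value\n1,42\n", tier="hot")
store.move("logs/2021-06-01.csv", "cold")  # data has cooled down
payload = store.get("logs/2021-06-01.csv")  # same key, different backend
```

The caller addresses data by one logical key throughout, while the layer decides (or is told) which physical system holds it. That is the property that lets data with different access characteristics live on the storage system that suits it.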

Metadata management, data integration, and data development are the three major issues of a data lake. As a general-purpose big data platform, DataWorks solves various problems in data warehouse scenarios and also addresses these core pain points in the data lake scenario.

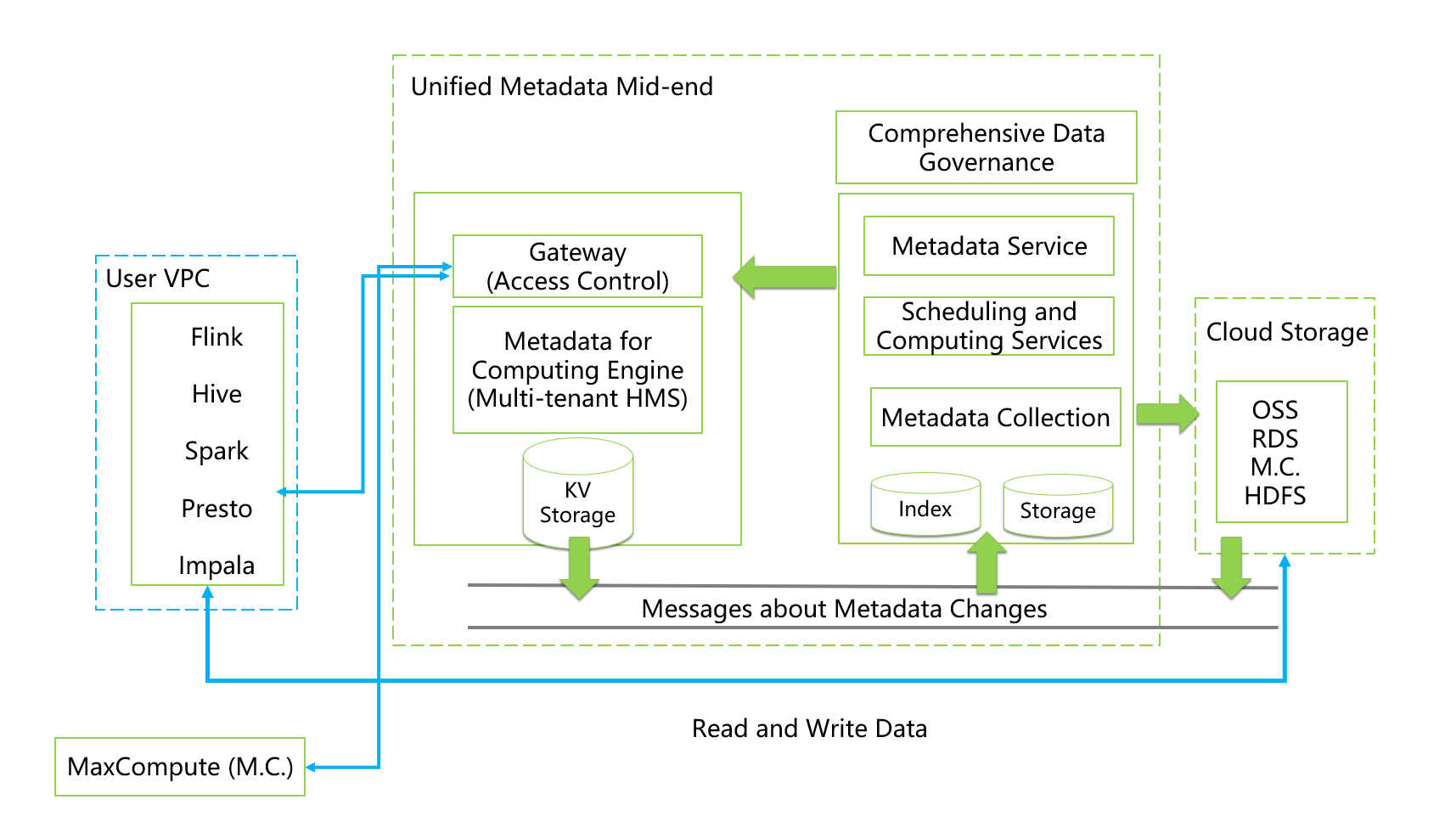

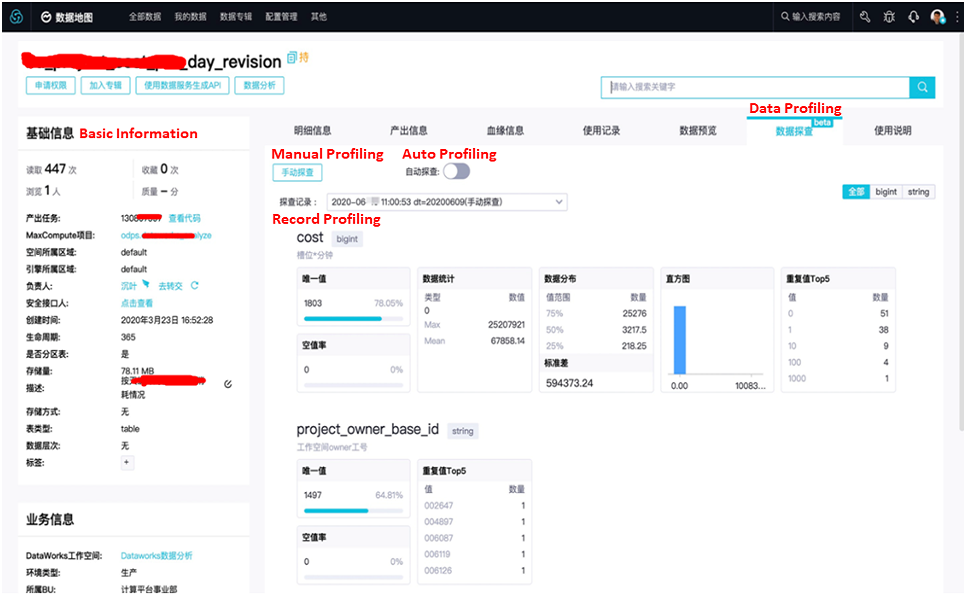

Users need a unified, centralized management capability in the data lake, which is also the first core capability of the data lake. You can use the data governance capability of DataWorks to manage the metadata of the various storage systems in the data lake. It currently manages metadata for 11 types of cloud data sources: OSS, E-MapReduce (EMR), MaxCompute, Hologres, MySQL, PostgreSQL, SQL Server, Oracle, AnalyticDB for PostgreSQL, AnalyticDB for MySQL v2.0, and AnalyticDB for MySQL v3.0. Functionally, DataWorks supports metadata collection, storage and retrieval, online metadata service, data preview, category tagging, data lineage, data exploration, impact analysis, and resource optimization.
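To make metadata retrieval, data lineage, and impact analysis more concrete, here is a minimal sketch of a unified catalog. It is an illustration under stated assumptions, not the DataWorks API: `TableMeta`, `MetadataCatalog`, and the table names `raw_orders`, `dwd_orders`, and `ads_daily_revenue` are all hypothetical.

```python
from collections import defaultdict, deque
from dataclasses import dataclass
from typing import Dict, List, Set, Tuple


@dataclass(frozen=True)
class TableMeta:
    source_type: str          # e.g. "OSS", "MaxCompute", "Hologres"
    name: str                 # hypothetical table name, unique in the catalog
    columns: Tuple[str, ...]


class MetadataCatalog:
    """Hypothetical unified catalog spanning several storage systems."""

    def __init__(self) -> None:
        self._tables: Dict[str, TableMeta] = {}
        self._downstream: Dict[str, Set[str]] = defaultdict(set)

    def register(self, meta: TableMeta) -> None:
        # Metadata collection: record one table from any source system.
        self._tables[meta.name] = meta

    def search(self, keyword: str) -> List[str]:
        # Metadata retrieval: match the keyword against table and column names.
        kw = keyword.lower()
        return sorted(
            name
            for name, meta in self._tables.items()
            if kw in name.lower() or any(kw in c.lower() for c in meta.columns)
        )

    def add_lineage(self, upstream: str, downstream: str) -> None:
        # Data lineage: `downstream` is derived from `upstream`.
        self._downstream[upstream].add(downstream)

    def impact(self, name: str) -> List[str]:
        # Impact analysis: breadth-first walk over everything derived from `name`.
        seen = {name}
        queue = deque([name])
        while queue:
            current = queue.popleft()
            for nxt in self._downstream.get(current, ()):
                if nxt not in seen:
                    seen.add(nxt)
                    queue.append(nxt)
        return sorted(seen - {name})


catalog = MetadataCatalog()
catalog.register(TableMeta("OSS", "raw_orders", ("order_id", "amount")))
catalog.register(TableMeta("MaxCompute", "dwd_orders", ("order_id", "amount_cny")))
catalog.register(TableMeta("Hologres", "ads_daily_revenue", ("day", "revenue")))
catalog.add_lineage("raw_orders", "dwd_orders")
catalog.add_lineage("dwd_orders", "ads_daily_revenue")

affected = catalog.impact("raw_orders")
matches = catalog.search("order")
```

Because all three tables sit in one catalog regardless of where they are stored, a change to the raw OSS data can be traced to every table derived from it, whichever engine or storage system holds that table.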

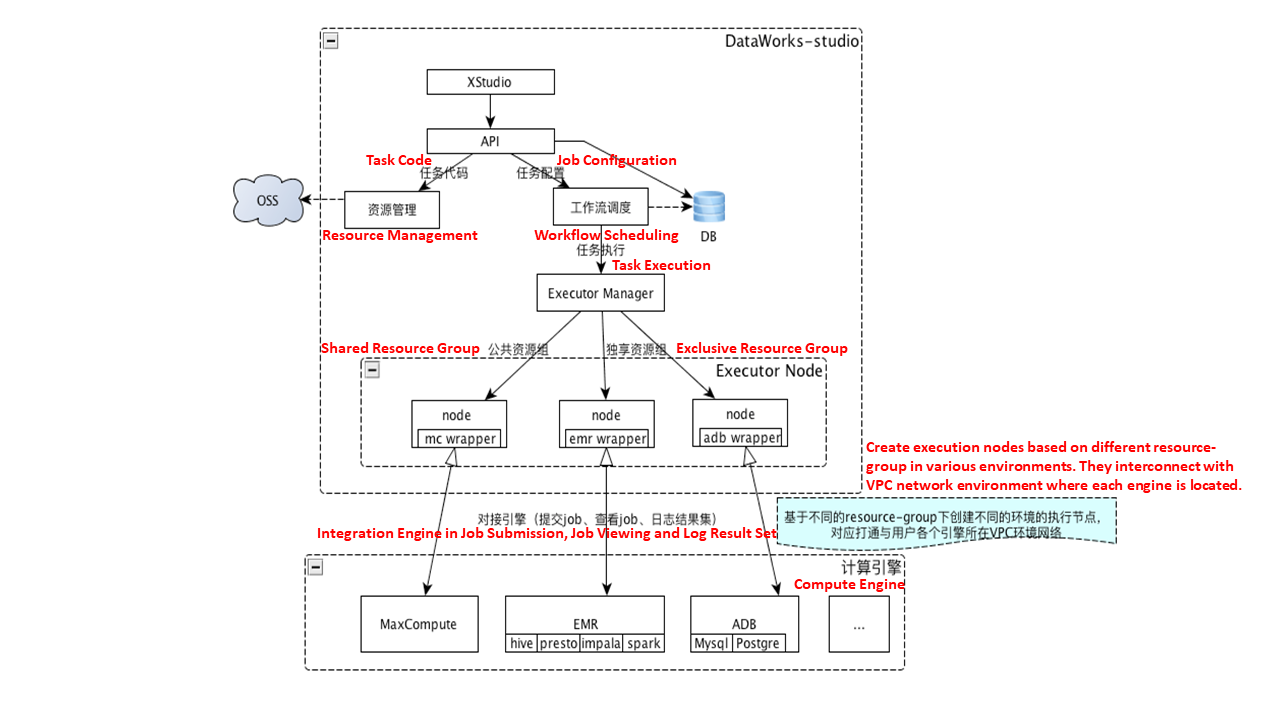

The following figure shows its technical macro-architecture:

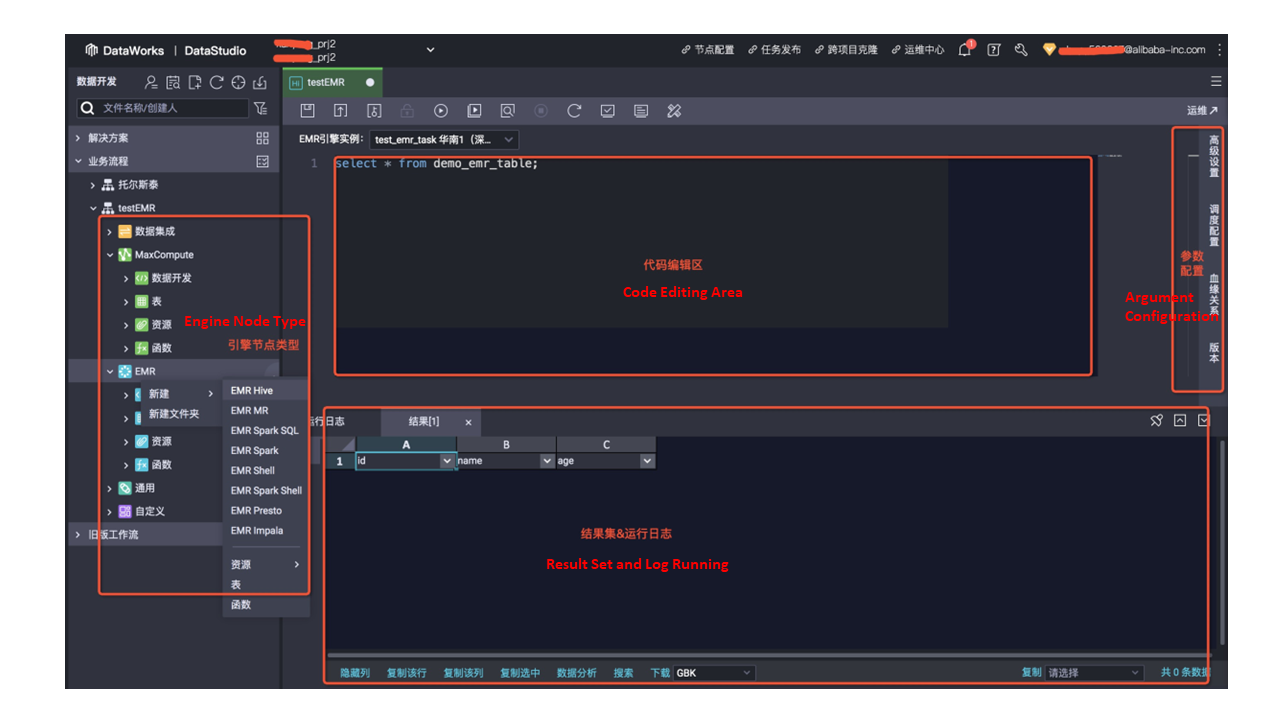

The following figures show its product form:

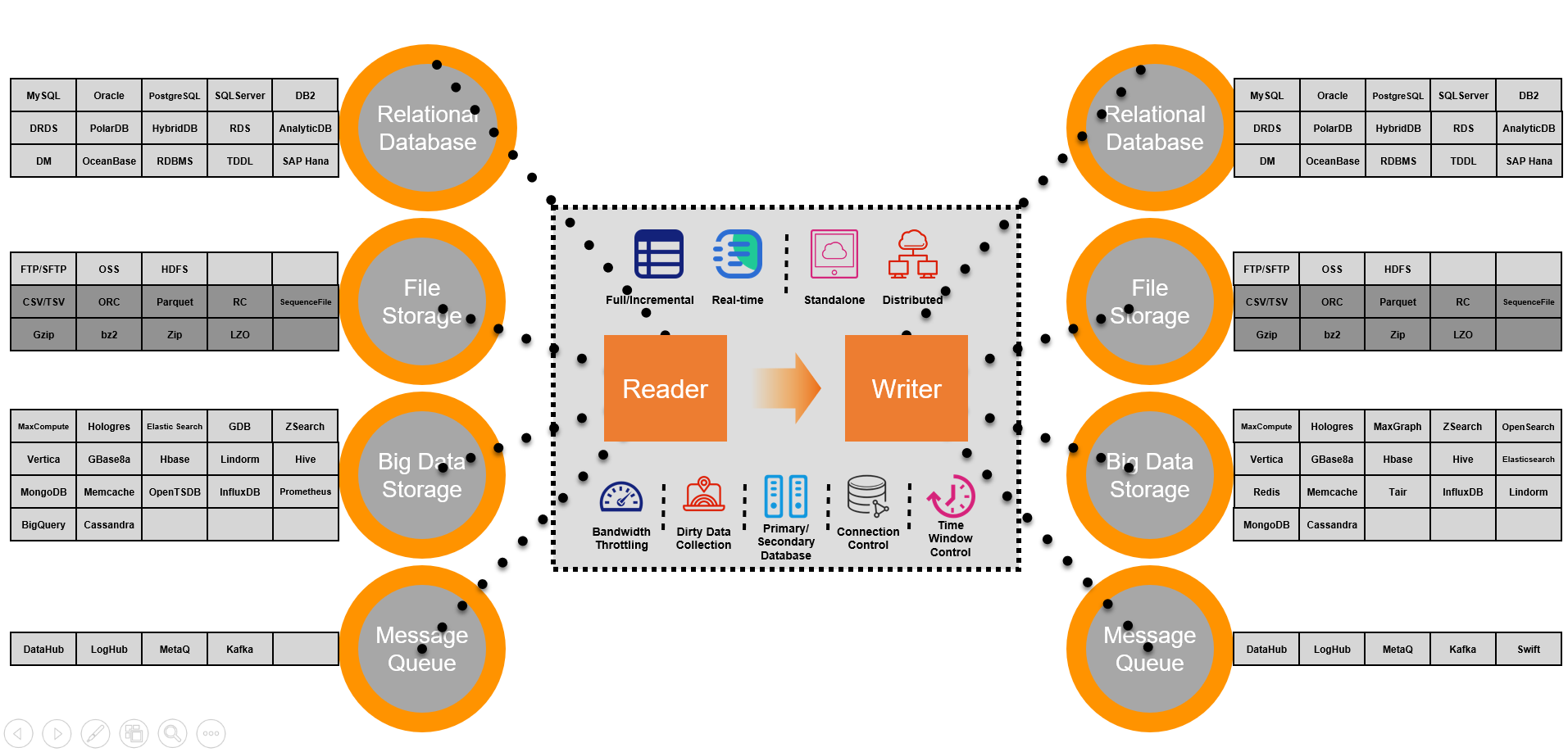

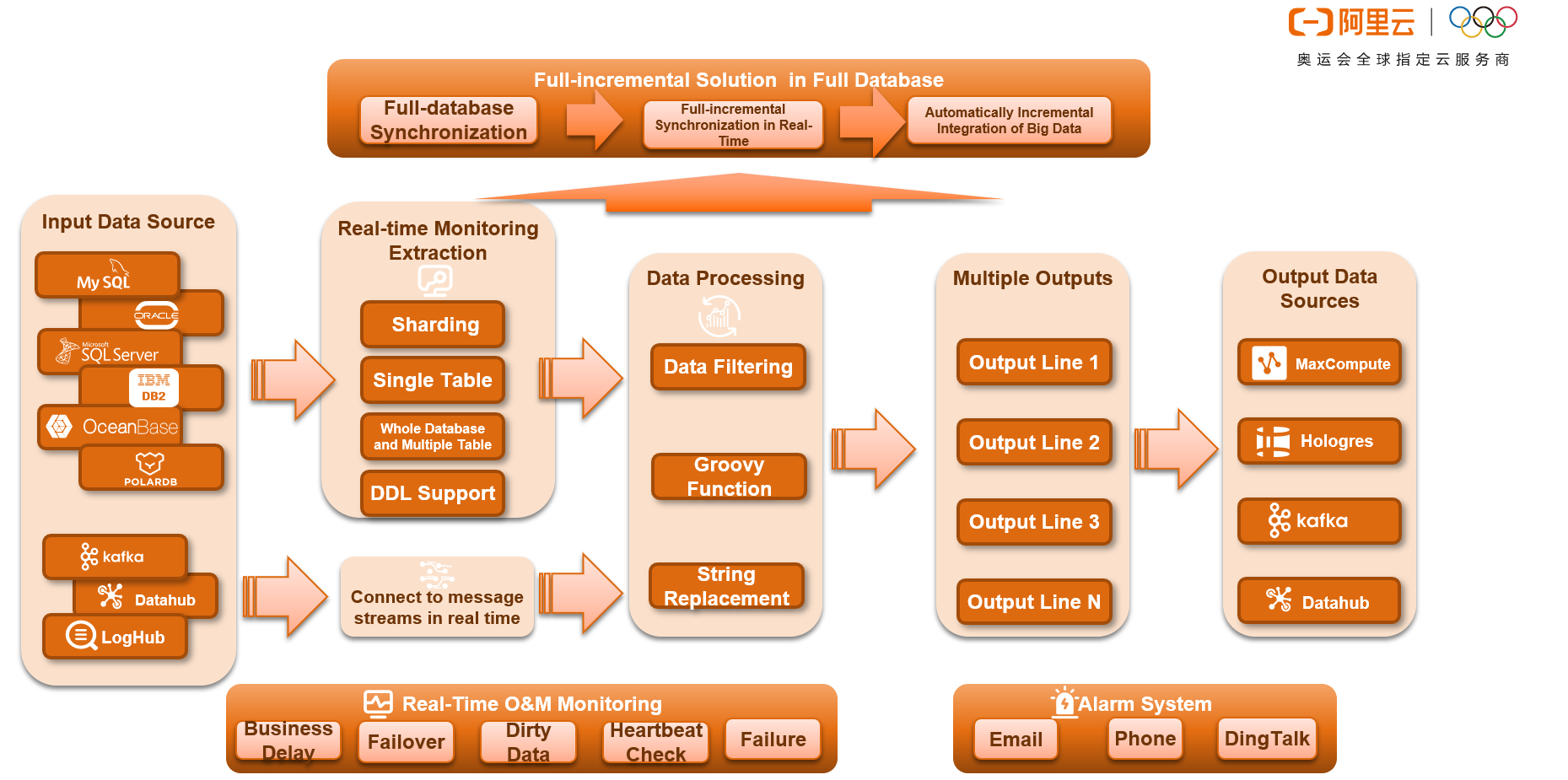

When managing data in a data lake, you face the challenge of transporting and transforming data among various storage systems. To address this, DataWorks provides data integration capabilities that cover data import and export and data format conversion for 40 types of common data sources. It supports both offline and real-time synchronization and handles complex network environments when connecting to external systems.
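As a small illustration of data format conversion during transport, the sketch below converts CSV text to JSON Lines using only the Python standard library. The function name `csv_to_jsonl` and the sample data are assumptions made for this example. It shows the general idea of a conversion step, not how DataWorks data integration works internally.

```python
import csv
import io
import json


def csv_to_jsonl(csv_text: str) -> str:
    """Convert CSV text with a header row into JSON Lines (one object per row)."""
    reader = csv.DictReader(io.StringIO(csv_text))
    # Each CSV row becomes one JSON object; values stay strings because
    # CSV carries no type information.
    return "".join(json.dumps(row) + "\n" for row in reader)


source = "id,value\n1,42\n2,7\n"
converted = csv_to_jsonl(source)
```

A production synchronization job would also need to handle schema mapping, type conversion, and failure recovery, all of which are omitted here.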

The following figure shows the core features of data integration:

The following figure shows the features of offline synchronization:

The following figure shows the features of real-time synchronization:

After implementing storage management and data transport in the data lake, you must find better ways to put the data to work for the business. This requires introducing various computing engines. The computing platform business unit provides open-source engines such as Spark, Presto, Hive, and Flink, as well as proprietary engines such as MaxCompute and Hologres. The difficulty lies in making full use of each engine's advantages while allowing data to be accessed and computed freely across the data lake. To solve this problem, DataWorks provides an all-in-one data development environment in which data can move conveniently between engines. From ad hoc queries to periodic ETL development, DataWorks supports unified development and O&M of computing tasks for each engine.

The following figure shows data development products:

By resolving the differences between the storage systems at the bottom layer of the data lake, DataWorks provides a comprehensive solution for metadata management, data governance, data migration, data conversion, and data computing in the data lake. This allows the Alibaba Cloud data lake to maximize business value for customers.
