By Xiao Changjun (Qionggu) and Sang Jie

ChaosBlade is Alibaba's open-source chaos engineering project, created in 2019 and since accepted into the CNCF Sandbox. It started as a chaos engineering experimental tool for multiple environments and languages and has grown into a multi-cluster, multi-environment, multi-language chaos engineering platform called chaosblade-box. The platform hosts experimental tools, deploys them automatically, and provides a unified experiment interface, so that users can concentrate on solving high-availability problems during cloud-native adoption. This article introduces ChaosBlade in three stages: the abstraction of the chaos experimental model, the open-sourcing of the chaos experimental tool, and the upgrade to a chaos engineering platform.

In this year's trusted cloud evaluation, the Alibaba Cloud fault drill platform passed the highest level (advanced certification required by the trusted cloud chaos engineering platform) with the highest score.

The ChaosBlade project covers chaos experimental scenarios such as basic resources, application services, and container services. From the beginning, the experimental tool was designed around a unified scenario model. This makes scenarios easier to extend and accumulate, and it gives a platform that hosts the tool a common basis for calling every scenario in the same way. All experimental scenarios in the ChaosBlade project follow this model, which is described below through its derivation, introduction, significance, and specific application.

Chaos experiments mainly include fault simulation. We generally describe faults in the following ways:

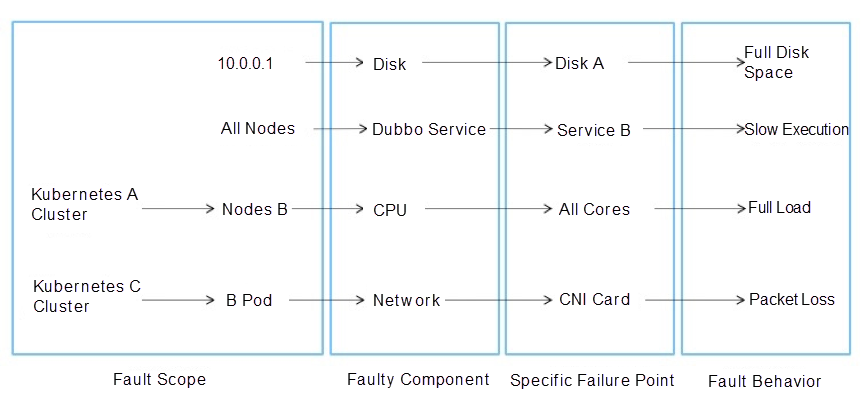

We can use the following sentence to describe the failure: A certain component on a certain machine (or resources in the cluster, such as node and pod) failed, which caused the related impact. We can also view the fault detail by splitting the description, as shown in the following figure:

These four parts can be used to describe the existing fault scenario. Therefore, we have abstracted a fault scenario model, also known as the chaos experimental model.

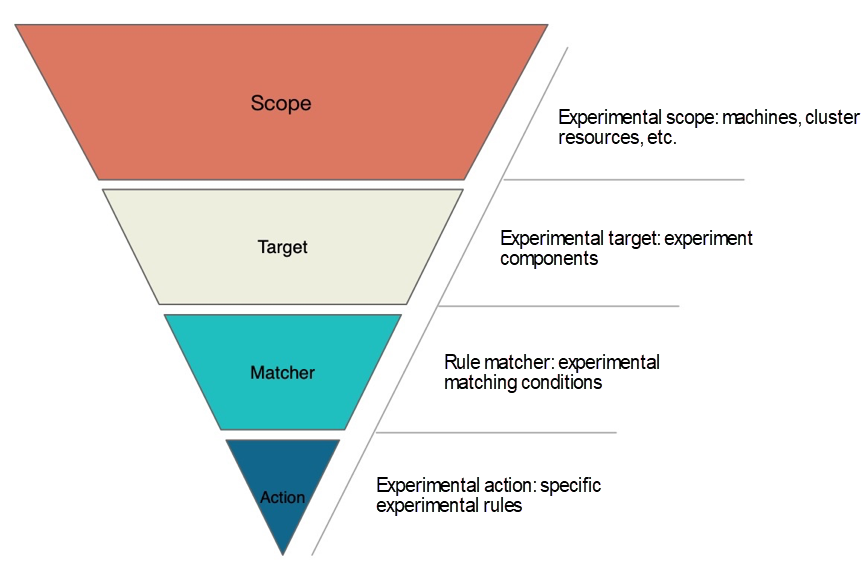

This experimental model is described in detail below:

This model can answer the following questions that need to be clarified in implementing chaos experiments:

The model has the following features:

This model has the following meanings:

The application of the chaos experimental model is summarized below:

The following section focuses on ChaosBlade, a chaos engineering tool implemented based on this model.

Alibaba first introduced chaos engineering to solve the dependency problem of microservices. Later, chaos engineering was used to verify the steady state of business services and cloud services, then to guarantee the business continuity of public and private clouds and to verify the stability of cloud-native systems. Along the way, Alibaba accumulated rich scenarios and practical experience. At that time, open-source chaos engineering tools had several problems: scattered scenario capabilities, difficulty getting started, no standard experimental model, and difficulty extending and accumulating scenarios. These problems made it hard to bring the tools together on one platform. Therefore, Alibaba open-sourced ChaosBlade, its execution tool for chaos engineering experiments. The sections below describe it through its scenarios, usage, architecture design, and an example.

ChaosBlade was designed from the start for ease of use and easy scenario extension, so that everyone can use the tool and add experimental scenarios as needed. Following the chaos experimental model, it provides a unified and concise execution tool. It supports system platforms such as Linux, Windows, Docker, and Kubernetes and covers applications written in Java, Golang, JavaScript, and C++. As of v1.0.0-GA, it includes more than 200 experimental scenarios and more than 3,000 experimental parameters. The following scenarios are included:

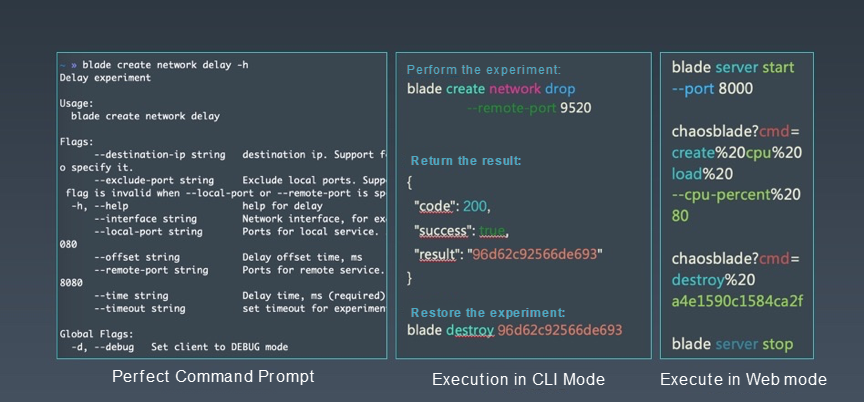

ChaosBlade requires no installation: download it, decompress it, and it is ready to use. One of the calling modes it supports is CLI mode, in which you execute blade commands directly.

For example, for the network delay scenario in the figure above, adding the -h parameter prints detailed command help. Suppose you want to simulate network packet loss on calls to remote port 9520. In terms of the experimental model, the target is network, the action is packet loss, and the matcher is the remote service port 9520. After the command executes successfully, the experiment result is returned. Each experimental scenario is treated as an object and returns a UID, which is used for subsequent experiment management, such as querying and destroying the experiment. To destroy the experiment, that is, to restore the system, execute the blade destroy command.
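The mapping from the experimental model to a blade command line can be sketched as follows. This is a minimal illustration, not ChaosBlade's own code: the `Experiment` class and the helper functions are hypothetical, the flag names (`--percent`, `--interface`, `--remote-port`) and the JSON shape of the result are assumptions based on commonly documented usage, and the sample UID is made up. Check `blade create network loss -h` for the flags your version accepts.

```python
import json
from dataclasses import dataclass, field


@dataclass
class Experiment:
    """Hypothetical holder for one experiment described by the model."""
    target: str                                   # component under test, e.g. "network"
    action: str                                   # fault to simulate, e.g. "loss"
    matchers: dict = field(default_factory=dict)  # conditions that narrow the fault

    def create_command(self) -> list:
        """Build the argv for `blade create <target> <action> --flag value ...`."""
        argv = ["blade", "create", self.target, self.action]
        for flag, value in self.matchers.items():
            argv += [f"--{flag}", str(value)]
        return argv


def parse_uid(output: str) -> str:
    """Extract the experiment UID from a JSON result (assumed shape)."""
    response = json.loads(output)
    if not response.get("success"):
        raise RuntimeError(f"experiment failed: {response}")
    return response["result"]


def destroy_command(uid: str) -> list:
    """Build the argv that restores the system for a given experiment UID."""
    return ["blade", "destroy", uid]


# Packet loss on calls to remote port 9520; flag names are assumptions.
loss = Experiment("network", "loss",
                  {"percent": 50, "interface": "eth0", "remote-port": 9520})
print(" ".join(loss.create_command()))

# Made-up output in the assumed result format.
sample_output = '{"code": 200, "success": true, "result": "example-uid-0001"}'
print(" ".join(destroy_command(parse_uid(sample_output))))
```

Keeping the target, action, and matchers as data rather than as a hand-written string is what lets a platform build any scenario's command in the same way.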

Another way to call ChaosBlade is Web mode, which exposes an HTTP service when you execute the server command. If you build your own chaos experiment platform on top of it, the platform can call ChaosBlade directly through HTTP requests.
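A platform could wrap such HTTP calls as in the sketch below. It is illustrative only: the port `9526`, the `/chaosblade` path, and the `cmd` query parameter are assumptions about the server's interface that should be checked against the version in use, and `chaos-host.example` is a placeholder host. The code only builds the URL; sending the request is left to the caller.

```python
from urllib.parse import quote, urlencode


def blade_url(host: str, command: str, port: int = 9526) -> str:
    """Build the URL for an HTTP call that carries a blade sub-command.

    The path and the `cmd` parameter are assumed, not confirmed by this article.
    """
    # quote_via=quote encodes spaces as %20 rather than "+".
    query = urlencode({"cmd": command}, quote_via=quote)
    return f"http://{host}:{port}/chaosblade?{query}"


url = blade_url("chaos-host.example",
                "create network loss --percent 50 --remote-port 9520")
print(url)
```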

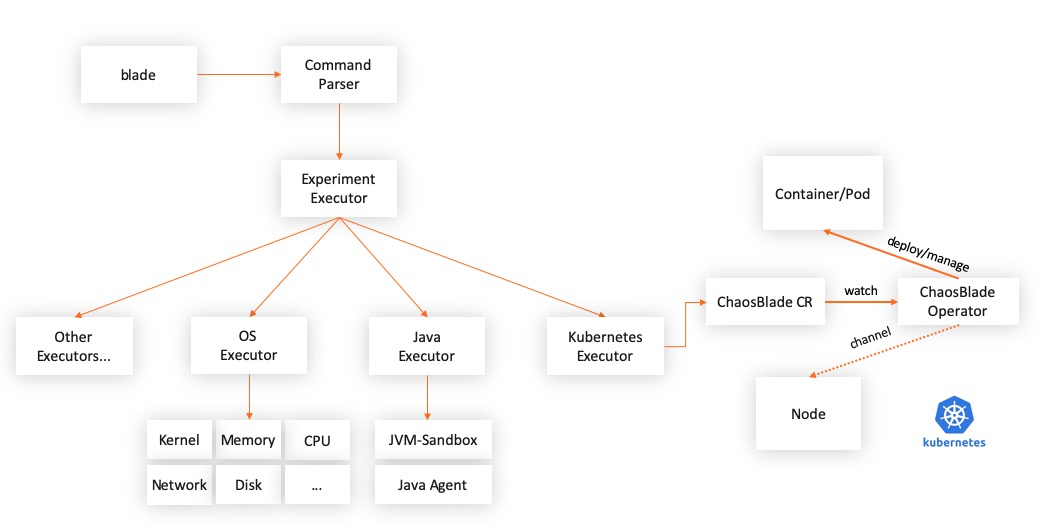

ChaosBlade is split into independent projects by field, and each project follows the best practices of its field, which matches the usage habits of that field. Each project is connected to the chaosblade cli project through the chaos experimental model, so all scenarios can be called uniformly through ChaosBlade. The experimental scenarios in each field are described in YAML files based on the chaos experimental model and exposed to the upper-level chaos experiment platform. The platform automatically senses changes to the experimental scenarios from changes in these description files, so it needs no additional development when scenarios are added. This way, the chaos platform can focus on other parts of chaos engineering. The executor projects currently included are listed below:
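One way a platform could sense scenario changes from description files is to compare the scenarios found in two versions of a description. The sketch below assumes the YAML has already been parsed into plain dictionaries. The field names (`items`, `target`, `actions`, `action`) are hypothetical and do not claim to match ChaosBlade's actual description schema.

```python
def scenario_keys(spec: dict) -> set:
    """Collect (target, action) pairs from a parsed description (hypothetical shape)."""
    return {
        (item["target"], act["action"])
        for item in spec.get("items", [])
        for act in item.get("actions", [])
    }


def added_scenarios(old_spec: dict, new_spec: dict) -> list:
    """Return scenarios present in new_spec but not in old_spec, sorted for stable output."""
    return sorted(scenario_keys(new_spec) - scenario_keys(old_spec))


# Two made-up versions of a description file, already parsed.
old = {"items": [{"target": "network", "actions": [{"action": "delay"}]}]}
new = {"items": [{"target": "network",
                  "actions": [{"action": "delay"}, {"action": "loss"}]},
                 {"target": "cpu", "actions": [{"action": "fullload"}]}]}

print(added_scenarios(old, new))
```

Because the comparison works on the description alone, the platform code stays unchanged when an executor project adds a scenario.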

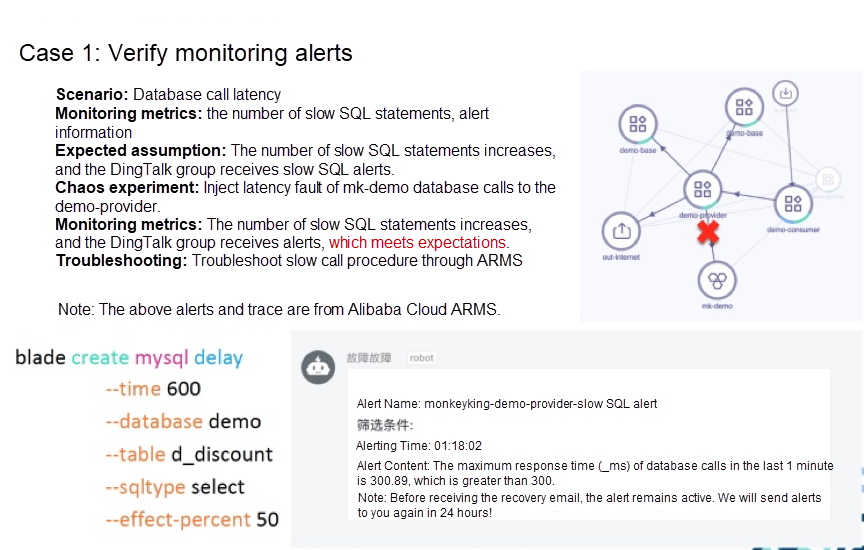

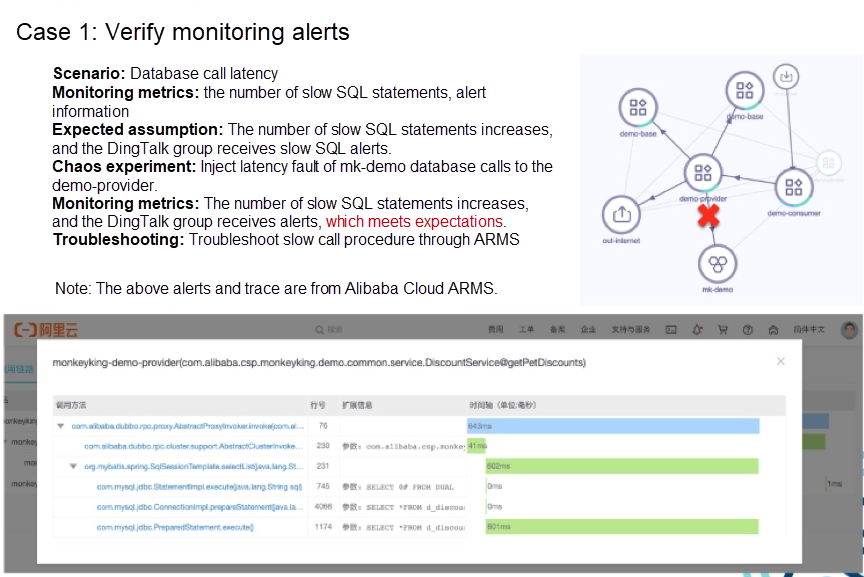

A Dubbo microservice demo is used to introduce the use of ChaosBlade. The demo involves three levels of calls: the consumer calls the provider, and the provider calls the base. The provider also calls the mk-demo database. The provider and the base each have two instances.

The experimental scenario in this example is database call delay. First, we define the monitoring metrics: the number of slow SQL statements and alert information. The expected result is that the number of slow SQL statements increases and the DingTalk group receives slow SQL alerts. Next, we perform the experiment with chaosblade directly, as shown in the lower left part of the figure above. We inject a fault into the MySQL queries issued by demo-provider: when the database is demo and the table is d_discount, 50% of query operations are delayed by 600 milliseconds.
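The matching logic of this scenario can be pictured with a small simulation. It is not how ChaosBlade injects the fault into a Java process. The `DelayRule` class, its fields, and the `d_order` table are hypothetical and only model the rule stated above: queries against database demo and table d_discount are delayed by 600 milliseconds with 50% probability.

```python
import random
from dataclasses import dataclass


@dataclass
class DelayRule:
    """Hypothetical model of a 'delay matching queries' experiment."""
    database: str
    table: str
    delay_ms: int
    effect_percent: int  # share of matching queries to delay, 0-100

    def delay_for(self, database: str, table: str, rng: random.Random) -> int:
        """Return the delay in milliseconds to apply to one query (0 means none)."""
        if (database, table) != (self.database, self.table):
            return 0  # matcher does not apply: leave the query untouched
        if rng.random() * 100 < self.effect_percent:
            return self.delay_ms
        return 0


rule = DelayRule(database="demo", table="d_discount", delay_ms=600, effect_percent=50)
rng = random.Random(42)  # fixed seed so the simulation is repeatable

delays = [rule.delay_for("demo", "d_discount", rng) for _ in range(10_000)]
delayed_share = sum(1 for d in delays if d > 0) / len(delays)
print(f"delayed share of matching queries: {delayed_share:.2f}")

# A query against another (made-up) table is never delayed.
print("other table delay:", rule.delay_for("demo", "d_order", rng))
```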

We use Alibaba Cloud ARMS for monitoring and alerting. As you can see, the DingTalk group receives an alert soon after the chaos experiment starts. Compared with the monitoring metrics defined earlier, the effect meets the expectation. However, a single passing result does not mean the system will meet the expectation in the future, so continuous verification through chaos engineering is needed. If slow SQL statements occur, ARMS tracing analysis can be used to troubleshoot and locate them and to see which statement is slow to execute.

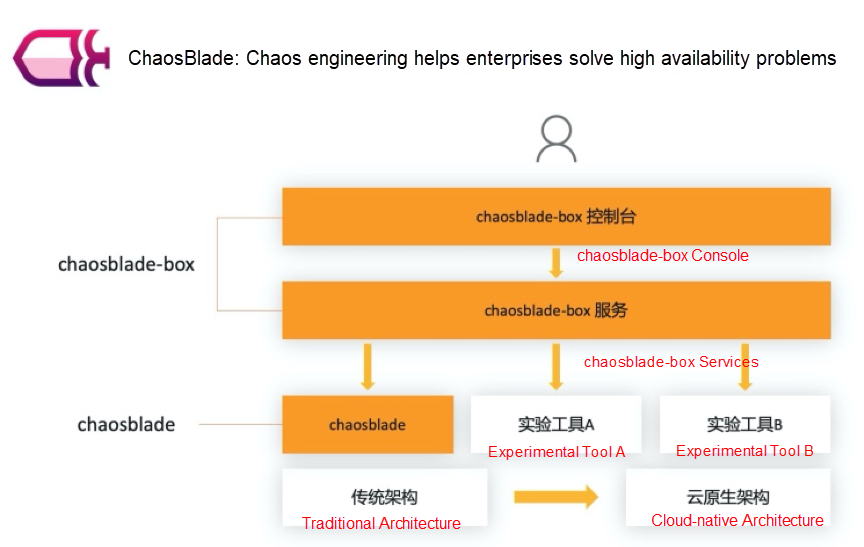

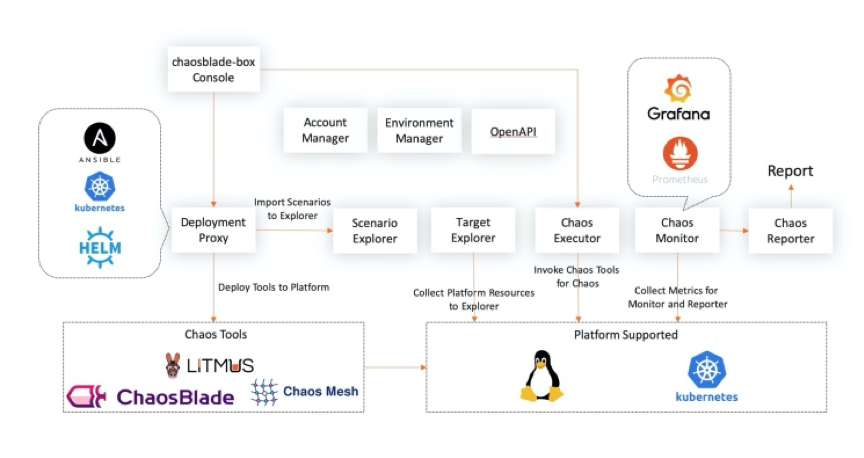

Users expect to focus on solving system high-availability problems through chaos engineering rather than on selecting and deploying experimental tools. Therefore, ChaosBlade was upgraded, and chaosblade-box, an open-source chaos engineering platform, was released. The platform hosts mainstream chaos experimental tools, automates the deployment of tools, and implements chaos engineering through a unified operation page.

The following section describes chaosblade-box through its features, architecture design, and use cases.

It has the following features:

You can use the console page to automate the deployment of managed tools, such as chaosblade and LitmusChaos, and unify the experimental scenarios according to the chaos experimental model established by the community. You can also divide the target resources according to hosts, Kubernetes, and applications and use the target manager to control them. On the experiment creation page, you can select target resources in a visible way. The platform executes experimental scenarios of different tools by calling chaos executor. You can observe the experiment metrics with access to Prometheus monitoring. Rich experiment reports will be provided in the future. The deployment of chaosblade-box is also very simple.

You can see the details here.

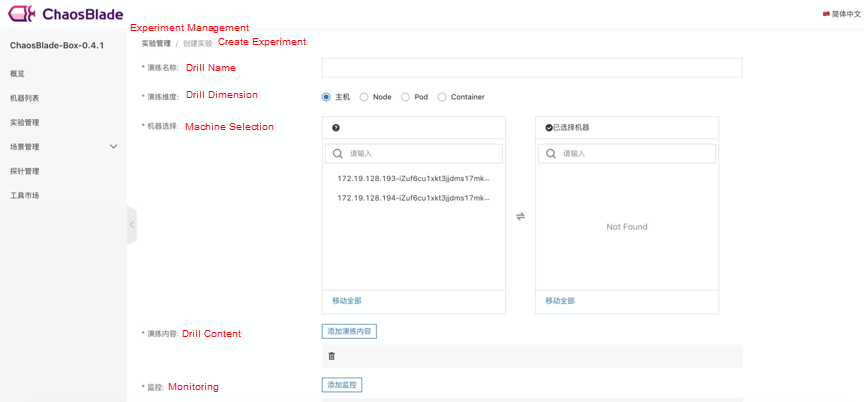

After the installation and deployment are completed, you can configure the Kubernetes cluster or host information to view the cluster or host data on the Machine List page. Click Experiment Management to create the experiment. The drill dimensions include the host, node, pod, and container. After you select the corresponding dimension, the corresponding resource list appears, and you can select it easily. The drill contains all hosted experimental scenarios. After the experiment is created, you are redirected to the drill details page automatically. Click Execute to jump to the task details page.

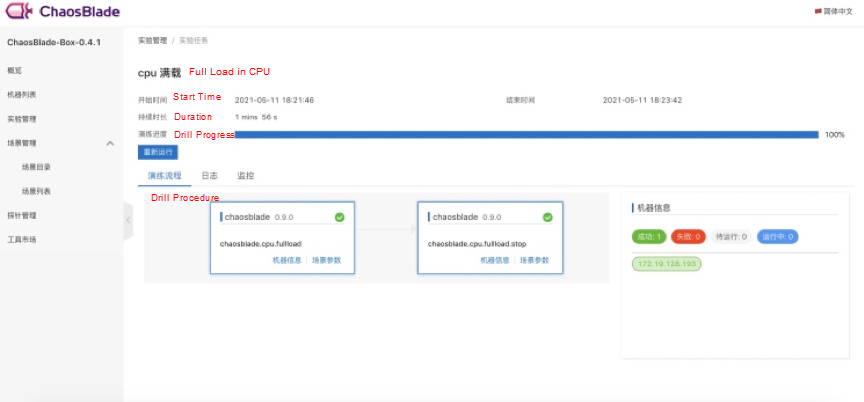

The page of drill task details displays the basic information of the experiment and the status of the experiment task. You can easily control the experiment and clarify the status of the experiment task.

Based on cloud-native, ChaosBlade will provide a chaos engineering platform and chaos engineering experimental tools for multiple clusters, multiple environments, and multiple languages. Experimental tools continue to focus on the richness and stability of experimental scenarios, support more Kubernetes resource scenarios, normalize the experimental scenario standards of application service, and provide a standard implementation for experimental scenarios in multiple languages.

In the future, the core functions of the Alibaba Cloud fault drill platform (with Advanced Certification of Chaos Engineering Platform in Trusted Cloud) will be open-sourced and integrated with the existing chaos engineering platform to open more capabilities and to simplify the deployment and implementation of chaos engineering tools. The platform will also host more chaos experimental tools and be compatible with mainstream platforms. This way, it can recommend scenarios, provide integrated business and system monitoring, output experiment reports, and complete closed-loop chaos engineering operations on an easy-to-use basis.

Xiao Changjun (Qionggu) is an Alibaba Technical Expert, Founder and Maintainer of the open-source project ChaosBlade, Head of Alibaba Cloud fault drill platform, an expert of Trusted Cloud Standards, and a chaos engineering expert with years of experience in distributed system architecture and stability construction.

Sang Jie works in the R&D Center of Agricultural Bank of China and is engaged in big data R&D in financial-related systems.
