Industrial and civic IoT deployments generate continuous, high-velocity data streams from edge devices, junction sensors, environmental monitors, and network nodes. The engineering challenge is not data collection alone. It is the design of an end-to-end pipeline that ingests, validates, transforms, stores, and visualises this data with operational latency low enough to be useful while maintaining the durability and historical queryability that long-term analysis demands.

Most organisations approaching this problem encounter the same structural tension: real-time processing and batch analytics have historically required separate infrastructure, separate tooling, and separate operational teams. Alibaba Cloud resolves this through a native service stack designed to function as a single integrated pipeline. This article documents the architecture, key configuration decisions, and operational considerations for building this pipeline using IoT Platform, Realtime Compute for Apache Flink, MaxCompute, and DataV.

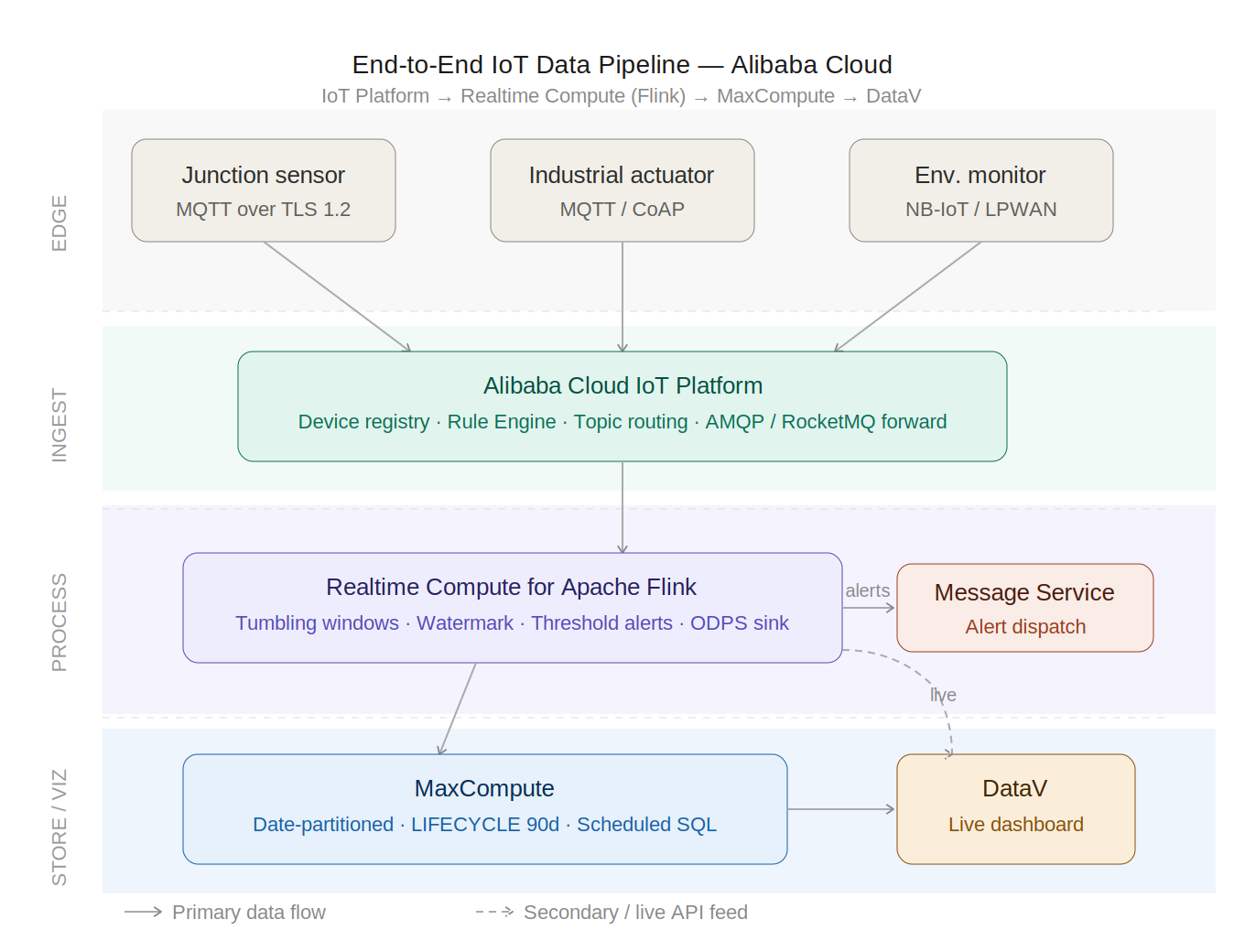

The architecture operates across four successive layers, each handled by a dedicated Alibaba Cloud service. Data flows unidirectionally from the edge to visualisation, with the option to route control signals back to devices where operational feedback loops are required.

IoT Platform handles device registration, authentication, and message routing, and is the entry point through which all sensor telemetry enters the pipeline. Every device is registered under a Product definition that establishes a shared schema, and each device is issued a certificate that combines the product's ProductKey with its own DeviceName and DeviceSecret. Authentication uses this triple-credential model, with HMAC-SHA256 signing applied to derive the MQTT connection password, so the DeviceSecret itself is never transmitted.
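The signing step can be sketched in Python. This is a minimal sketch, assuming the connection-parameter layout described in the IoT Platform MQTT documentation: a client ID suffixed with `securemode`, `signmethod`, and `timestamp`; a username of `DeviceName&ProductKey`; and a password computed as HMAC-SHA256 over the alphabetically ordered parameter names and values, keyed with the DeviceSecret. The function name and the example ProductKey, DeviceName, and secret are hypothetical. Confirm the exact field layout (including the `securemode` value and hex-digest case) against the current documentation before relying on it.

```python
import hashlib
import hmac
from dataclasses import dataclass


@dataclass(frozen=True)
class MqttCredentials:
    client_id: str
    username: str
    password: str


def sign_mqtt_credentials(product_key: str, device_name: str, device_secret: str,
                          client_id: str, timestamp_ms: int,
                          secure_mode: int = 2) -> MqttCredentials:
    """Derive MQTT CONNECT fields from the device triple (sketch; verify against current docs)."""
    ts = str(timestamp_ms)
    # Signing content: parameter names in alphabetical order, each followed by its value,
    # concatenated without separators.
    params = {"clientId": client_id, "deviceName": device_name,
              "productKey": product_key, "timestamp": ts}
    content = "".join(name + params[name] for name in sorted(params))
    # HMAC-SHA256 keyed with the DeviceSecret; only the digest leaves the device.
    password = hmac.new(device_secret.encode(), content.encode(), hashlib.sha256).hexdigest()
    # Assumption: securemode=2 for TLS connections; check the documented values.
    mqtt_client_id = f"{client_id}|securemode={secure_mode},signmethod=hmacsha256,timestamp={ts}|"
    username = f"{device_name}&{product_key}"
    return MqttCredentials(mqtt_client_id, username, password)


if __name__ == "__main__":
    # Hypothetical credentials for illustration only.
    creds = sign_mqtt_credentials("a1ExampleKey", "junction-042", "example-secret",
                                  "junction-042", 1700000000000)
    print(creds.username)  # junction-042&a1ExampleKey
    print(creds.client_id)
```

Because only the digest and the timestamp go over the wire, the connection request does not reveal the DeviceSecret.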

MQTT connections run on port 8883 with TLS 1.2 transport encryption enforced. Port 1883 is available for token-based authentication on constrained devices that cannot support TLS, though this trades transport security for device resource efficiency and should be scoped to isolated network segments.

Message flow is organised through a structured topic hierarchy. IoT Platform's Rule Engine intercepts messages on configured topic patterns and forwards them downstream. Wildcard topic subscriptions enable a single rule to aggregate telemetry from all devices registered under a product, eliminating per-device rule configuration and significantly reducing operational overhead as device fleets scale. The Rule Engine extracts and transforms fields before forwarding them to a Flink source table through an AMQP or RocketMQ connector.
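A single wildcard rule can replace per-device rules because one topic filter matches every device's topic. The sketch below implements standard MQTT topic-filter semantics (`+` matches exactly one level; `#` matches the remaining levels and must come last) to illustrate the idea. The topic layout `/a1ExampleKey/<DeviceName>/user/update` is a hypothetical custom topic, and IoT Platform's Rule Engine may restrict which wildcards it accepts, so check its topic syntax before copying a filter. For brevity, the sketch also ignores the MQTT special case for topics that begin with `$`.

```python
def topic_matches(topic_filter: str, topic: str) -> bool:
    """MQTT-style matching: '+' matches one level, '#' matches this level and all below."""
    f_levels = topic_filter.split("/")
    t_levels = topic.split("/")
    for i, f in enumerate(f_levels):
        if f == "#":
            # '#' is only valid as the final level; it also matches the parent level itself.
            return i == len(f_levels) - 1
        if i >= len(t_levels):
            return False
        if f != "+" and f != t_levels[i]:
            return False
    # Without '#', filter and topic must have the same depth.
    return len(f_levels) == len(t_levels)


if __name__ == "__main__":
    rule = "/a1ExampleKey/+/user/update"  # hypothetical ProductKey and custom topic
    topics = [f"/a1ExampleKey/junction-{n:03d}/user/update" for n in range(3)]
    topics.append("/a1ExampleKey/junction-000/user/get")
    # Only the three .../user/update topics match, whatever the DeviceName.
    print([t for t in topics if topic_matches(rule, t)])
```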

Flink sits at the centre of the pipeline's real-time processing layer. It receives the continuous message stream from IoT Platform and runs two workloads simultaneously: one produces the windowed aggregations that feed operational dashboards, and the other watches for threshold breaches and generates an alert the moment one occurs.

Before Flink can process incoming records, it needs to decide how long to wait for late-arriving data. Sensors do not always deliver readings in the order they were generated: network conditions, device constraints, and transmission delays all introduce out-of-order records. The watermark tolerance setting governs this wait. Set it to 5 seconds, and the pipeline handles most LTE-connected sensors cleanly, with window output arriving well within a 60-second dashboard refresh cycle. Tighten it to 2 seconds, and latency drops, but more records arrive after their window has closed and are discarded. Widen it to 15–30 seconds, and NB-IoT and LPWAN devices get the time they need, but every window takes proportionally longer to close. There is no universal right answer: the correct value comes from measuring the actual P95 delivery latency of the target device network, not from guessing based on device type.
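The sizing rule in this paragraph can be expressed as a short calculation. In Flink SQL, the tolerance is the interval in the source table's watermark clause, for example `WATERMARK FOR event_time AS event_time - INTERVAL '5' SECOND` (the column name `event_time` is hypothetical). The Python sketch below assumes you have collected per-record delivery delays (arrival time minus device timestamp) from the target network. It takes the nearest-rank P95, rounds it up to whole seconds, and estimates what share of records would exceed a candidate tolerance. That share is only an approximation of drop risk: it assumes a steady flow of timely records is advancing the watermark, and a record is actually discarded only if its window has already closed.

```python
import math


def p95(values: list[float]) -> float:
    """Nearest-rank 95th percentile of a non-empty sample."""
    if not values:
        raise ValueError("need at least one delay sample")
    ordered = sorted(values)
    rank = (95 * len(ordered) + 99) // 100  # ceil(0.95 * n) using integer arithmetic
    return ordered[rank - 1]


def recommend_tolerance_s(delays_s: list[float]) -> int:
    """Round the measured P95 delivery delay up to whole seconds."""
    return math.ceil(p95(delays_s))


def share_exceeding(delays_s: list[float], tolerance_s: float) -> float:
    """Fraction of records delayed by more than the tolerance (approximate drop risk)."""
    return sum(d > tolerance_s for d in delays_s) / len(delays_s)


if __name__ == "__main__":
    # Hypothetical sample: mostly prompt deliveries plus a slow tail.
    delays = [0.5] * 90 + [3.2] * 5 + [12.0] * 5
    print(recommend_tolerance_s(delays))  # 4
    print(share_exceeding(delays, 2))     # 0.1
    print(share_exceeding(delays, 4))     # 0.05
```

With this sample, tightening the tolerance from 4 to 2 seconds doubles the share of records at risk. This mirrors the latency-versus-completeness trade-off described above.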

For dashboard consumption, the pipeline uses tumbling windows: fixed, non-overlapping one-minute buckets that start and end at predictable boundaries. This matters because DataV's time-series widgets expect deterministic, regularly spaced data points. Each window produces a per-zone, per-device summary (sample count and average, maximum, and minimum readings) that is written to MaxCompute through the ODPS sink connector.
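The tumbling-window aggregation can be illustrated in batch form. In Flink SQL, this corresponds to grouping by a one-minute `TUMBLE` window plus the zone and device keys. The Python sketch below reproduces only the bucketing and the four summary statistics over an in-memory list, with no watermarks or late-data handling. The `Reading` type and its field names are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Reading:
    zone: str
    device: str
    event_time_s: int  # device timestamp, epoch seconds
    value: float


def tumbling_aggregate(readings: list[Reading], window_s: int = 60) -> dict:
    """Per-window, per-zone, per-device count/avg/max/min over fixed, non-overlapping buckets."""
    buckets: dict[tuple[int, str, str], list[float]] = defaultdict(list)
    for r in readings:
        # Align to a multiple of window_s so every bucket has a predictable start and end.
        window_start = r.event_time_s - r.event_time_s % window_s
        buckets[(window_start, r.zone, r.device)].append(r.value)
    return {
        key: {"count": len(vals), "avg": sum(vals) / len(vals),
              "max": max(vals), "min": min(vals)}
        for key, vals in sorted(buckets.items())
    }


if __name__ == "__main__":
    readings = [
        Reading("zone-a", "junction-042", 0, 10.0),
        Reading("zone-a", "junction-042", 30, 20.0),
        Reading("zone-a", "junction-042", 59, 30.0),
        Reading("zone-a", "junction-042", 60, 40.0),  # first reading of the next window
    ]
    for key, summary in tumbling_aggregate(readings).items():
        print(key, summary)
```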

Anomaly detection runs on a completely separate query against the same source stream. Rather than waiting for a window to close, this query emits an alert record the instant a reading crosses a threshold, keeping detection latency independent of aggregation cycles. Alert records go to a dedicated MaxCompute table and, where downstream notification is needed, to a Message Service queue. Threshold values live in an external configuration store, not in the SQL itself, so they can be adjusted at runtime without touching or redeploying the Flink job.
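The per-record check and the externally held thresholds can be sketched as follows. `ThresholdStore` is a hypothetical stand-in for the external configuration store, holding thresholds as JSON. In a real Flink job, the lookup would typically be a join against a dimension table or a broadcast configuration stream, which this sketch does not model. What it does show is that each reading is compared on arrival, so an alert never waits for a window, and that changing a threshold is a data update rather than a code change.

```python
import json
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Alert:
    device: str
    metric: str
    value: float
    threshold: float
    event_time_s: int


class ThresholdStore:
    """Hypothetical stand-in for an external config store holding {metric: upper_limit}."""

    def __init__(self, raw_json: str) -> None:
        self._limits = json.loads(raw_json)

    def reload(self, raw_json: str) -> None:
        # Swapping the mapping changes behaviour without redeploying the checker.
        self._limits = json.loads(raw_json)

    def limit_for(self, metric: str) -> Optional[float]:
        return self._limits.get(metric)


def check_reading(store: ThresholdStore, device: str, metric: str,
                  value: float, event_time_s: int) -> Optional[Alert]:
    """Return an alert as soon as one reading exceeds its threshold; no windowing involved."""
    limit = store.limit_for(metric)
    if limit is not None and value > limit:
        return Alert(device, metric, value, limit, event_time_s)
    return None


if __name__ == "__main__":
    store = ThresholdStore('{"pm25": 75.0}')
    print(check_reading(store, "junction-042", "pm25", 80.0, 1_700_000_000))
    store.reload('{"pm25": 90.0}')  # threshold raised at runtime
    print(check_reading(store, "junction-042", "pm25", 80.0, 1_700_000_000))  # None
```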

MaxCompute is the pipeline's analytical storage layer: it receives the real-time aggregates produced by Flink and serves the historical queries that trend analysis requires. IoT data is partitioned by date, which enables partition pruning when queries filter on date ranges, the typical access pattern for both dashboards and historical analysis.
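Partition pruning can be illustrated with date-named partitions. The sketch assumes a hypothetical partition column `ds` holding `yyyymmdd` strings, which is a common convention rather than something this pipeline prescribes. MaxCompute performs pruning itself when a query filters on the partition column; the function below only shows which partitions a date-range filter leaves to scan.

```python
from datetime import date, timedelta


def partition_name(day: date) -> str:
    """Hypothetical partition spec: ds=yyyymmdd."""
    return f"ds={day:%Y%m%d}"


def partitions_for_range(existing: list[str], start: date, end: date) -> list[str]:
    """Partitions a filter on ds between start and end would scan; all others are skipped."""
    if end < start:
        return []
    wanted = {partition_name(start + timedelta(days=i)) for i in range((end - start).days + 1)}
    return [p for p in existing if p in wanted]


if __name__ == "__main__":
    # 90 daily partitions, 2025-01-01 through 2025-03-31.
    existing = [partition_name(date(2025, 1, 1) + timedelta(days=i)) for i in range(90)]
    week = partitions_for_range(existing, date(2025, 3, 1), date(2025, 3, 7))
    print(len(existing), len(week))  # 90 7
```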

MaxCompute's table LIFECYCLE setting (specified when the table is created or applied later with `ALTER TABLE ... SET LIFECYCLE`) automatically reclaims partitions that have not been modified within the retention period. This keeps storage costs under control without manual deletion of old partitions. For a typical urban IoT deployment, 90 days is a reasonable retention period.
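Because a partitioned table's lifecycle is judged on each partition's last modification time rather than on the date in its name, a recently backfilled old partition is retained longer than its name suggests. The hypothetical model below makes that rule concrete for reasoning about retention. It is not a call into MaxCompute, and the partition names are illustrative.

```python
from datetime import datetime, timedelta


def partitions_to_reclaim(last_modified: dict[str, datetime], now: datetime,
                          lifecycle_days: int = 90) -> list[str]:
    """Model of the rule: reclaim partitions not modified within lifecycle_days."""
    cutoff = now - timedelta(days=lifecycle_days)
    return sorted(name for name, modified in last_modified.items() if modified < cutoff)


if __name__ == "__main__":
    now = datetime(2025, 6, 30)
    last_modified = {
        "ds=20250101": datetime(2025, 1, 1, 23, 0),  # untouched since it was written
        "ds=20250102": datetime(2025, 6, 20, 9, 0),  # backfilled recently, so retained
        "ds=20250629": datetime(2025, 6, 29, 23, 0),
    }
    print(partitions_to_reclaim(last_modified, now))  # ['ds=20250101']
```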

MaxCompute uses columnar storage, so dashboard queries read only the columns they reference within the selected partitions. Where the same dashboard queries run frequently, scheduled SQL jobs can precompute summary tables that DataV queries directly.
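A summary table built from the one-minute window output has one subtlety: averages cannot simply be averaged. The Python sketch below uses a hypothetical `WindowSummary` type that mirrors the count, average, maximum, and minimum fields the Flink job writes. It rolls minute summaries up to a coarser grain (for example, hourly) by weighting each average by its sample count. A scheduled SQL job would need the same weighting.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WindowSummary:
    count: int
    avg: float
    maximum: float
    minimum: float


def roll_up(summaries: list[WindowSummary]) -> WindowSummary:
    """Merge fine-grained window summaries into one coarser summary."""
    total = sum(s.count for s in summaries)
    if total == 0:
        raise ValueError("no samples to roll up")
    # Weight each window's average by its sample count; a plain mean of averages is biased.
    weighted_avg = sum(s.avg * s.count for s in summaries) / total
    return WindowSummary(
        count=total,
        avg=weighted_avg,
        maximum=max(s.maximum for s in summaries),
        minimum=min(s.minimum for s in summaries),
    )


if __name__ == "__main__":
    minutes = [WindowSummary(10, 20.0, 30.0, 10.0), WindowSummary(30, 40.0, 55.0, 5.0)]
    print(roll_up(minutes))  # WindowSummary(count=40, avg=35.0, maximum=55.0, minimum=5.0)
```

A naive mean of the two averages would give 30.0 instead of 35.0, because it ignores that the second minute holds three times as many samples.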

DataV provides the operational interface through which health, infrastructure, and operations decision-makers interact with pipeline output. A single DataV dashboard can bind to multiple data sources concurrently. In this pipeline, those sources are an API connection to REST endpoints serving live Flink window data and a direct MaxCompute connection for historical trend widgets.

Dashboard widget composition for a production IoT operations environment typically includes real-time numeric indicators for live sensor readings by zone, geographic heat maps displaying current device status and threshold breaches, time-series trend panels showing indicator trajectories against historical baselines, and alert panels surfacing anomaly detection outputs. Refresh intervals are configured per widget: live operational indicators at 10–30 second cycles; trend charts at 60–300 seconds to manage query frequency against MaxCompute.
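The effect of the refresh interval on MaxCompute load is simple arithmetic: a widget refreshing every N seconds issues 3600 / N queries per hour for each open dashboard. The sketch below tabulates that for a hypothetical widget set, assuming every refresh issues one uncached query. Actual load may also scale with the number of open dashboard sessions.

```python
def hourly_queries(refresh_intervals_s: dict[str, int]) -> dict[str, int]:
    """Refreshes per hour per widget, assuming one uncached query per refresh."""
    load = {}
    for widget, interval in refresh_intervals_s.items():
        if interval <= 0:
            raise ValueError(f"{widget}: refresh interval must be positive")
        load[widget] = 3600 // interval
    return load


if __name__ == "__main__":
    # Hypothetical widgets: two live indicators and three MaxCompute-backed trend charts.
    widgets = {
        "live_pm25": 10, "live_noise": 30,
        "trend_pm25": 60, "trend_noise": 300, "trend_traffic": 300,
    }
    load = hourly_queries(widgets)
    print(load)
    trend_total = sum(v for k, v in load.items() if k.startswith("trend_"))
    print(trend_total)  # 84
```

Moving a trend chart from 60 to 300 seconds cuts its MaxCompute queries from 60 to 12 per hour, which is why the slower cycle suits trend panels.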

DataV's direct integration with both API endpoints and MaxCompute eliminates the need for a separate API middleware tier for dashboard data delivery, reducing the operational surface area of the pipeline.

Three operational factors determine whether the pipeline performs reliably under production load.

The four-layer architecture (IoT Platform, Realtime Compute for Apache Flink, MaxCompute, and DataV) provides a complete, production-viable path from edge sensor telemetry to operational visualisation on Alibaba Cloud. Each layer scales horizontally and independently, so the pipeline can accommodate device fleet growth without architectural rework. The Flink SQL interface reduces implementation complexity for windowed aggregation and anomaly detection compared with custom stream processing code, while the MaxCompute ODPS connector provides an operationally straightforward bridge between the real-time and batch analytical layers.

Engineers extending this architecture should evaluate three patterns against their workload. Session windows, used in place of tumbling windows, suit device fleets with irregular or bursty transmission intervals because they close on inactivity gaps rather than at fixed time boundaries. Log Service can act as an intermediate fan-out buffer where multiple downstream consumers need to replay the same IoT stream independently, decoupling Flink job scaling from consumer count. For deployments below 100 messages per second, Function Compute provides a lightweight alternative to a dedicated Flink cluster: it is triggered directly from the IoT Platform Rule Engine and removes infrastructure management overhead where stateful processing is not required.
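Session-window grouping can be sketched over a single device's event times. This is a simplified batch illustration, not Flink's implementation. It sorts the timestamps, extends the current session while the silence between consecutive events is at most `gap_s`, and starts a new session otherwise. In Flink, a session window ends one gap after its last event, and the handling of events exactly `gap_s` apart may differ from this sketch.

```python
def session_windows(event_times_s: list[int], gap_s: int) -> list[tuple[int, int, int]]:
    """Return (first_event, last_event, count) per session for one device."""
    sessions: list[tuple[int, int, int]] = []
    for ts in sorted(event_times_s):
        if sessions and ts - sessions[-1][1] <= gap_s:
            first, _, count = sessions[-1]
            sessions[-1] = (first, ts, count + 1)  # still within the inactivity gap
        else:
            sessions.append((ts, ts, 1))  # silence exceeded gap_s: open a new session
    return sessions


if __name__ == "__main__":
    # Hypothetical burst-transmitting device: three bursts separated by long silences.
    times = [0, 10, 20, 200, 205, 1000]
    print(session_windows(times, gap_s=60))  # [(0, 20, 3), (200, 205, 2), (1000, 1000, 1)]
```

Unlike the one-minute tumbling buckets, each burst here lands in a single window regardless of where it falls relative to minute boundaries.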

More Posts by PM - C2C_Yuan