AnalyticDB for PostgreSQL (previously known as HybridDB for PostgreSQL) is a fast, easy-to-use, and cost-effective data warehousing service that can process petabytes of data. You can use Data Transmission Service (DTS) to synchronize data from a PolarDB for MySQL cluster to an AnalyticDB for PostgreSQL instance. This setup is commonly used for ad hoc queries and analysis, extract, transform, and load (ETL) operations, and data visualization.

Prerequisites

Before you begin, ensure that you have:

- Binary logging enabled for the PolarDB for MySQL cluster. For more information, see How to enable Binlog.
- Primary keys on all tables to be synchronized from the PolarDB for MySQL cluster.
- An AnalyticDB for PostgreSQL instance. For more information, see Create an AnalyticDB for PostgreSQL instance.
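You can check the binary logging and primary key prerequisites before you create the task. The following is a minimal Python sketch. It assumes you have already read the value of the `log_bin` variable. It also assumes you have collected each table's primary key columns, for example from the `information_schema.TABLE_CONSTRAINTS` and `information_schema.KEY_COLUMN_USAGE` views of the PolarDB for MySQL cluster. The function name `check_prerequisites` and its input format are hypothetical.

```python
from typing import Dict, List


def check_prerequisites(log_bin: str, primary_keys: Dict[str, List[str]]) -> List[str]:
    """Return a list of problems that would block synchronization.

    log_bin: value of the log_bin variable, for example "ON" or "OFF" (assumed input).
    primary_keys: maps "database.table" to its primary key column names;
                  an empty list means the table has no primary key.
    """
    problems = []
    if log_bin.upper() != "ON":
        problems.append("binary logging is not enabled")
    # Sort so that the report order is stable regardless of input order.
    for table, pk_columns in sorted(primary_keys.items()):
        if not pk_columns:
            problems.append(f"table {table} has no primary key")
    return problems


if __name__ == "__main__":
    tables = {"shop.orders": ["order_id"], "shop.audit_log": []}
    for problem in check_prerequisites("ON", tables):
        print(problem)  # table shop.audit_log has no primary key
```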

Supported synchronization topologies

- One-way one-to-one synchronization
- One-way one-to-many synchronization
- One-way many-to-one synchronization

Limitations

| Limitation | Applies to |
|---|---|
| Only tables can be selected as objects to be synchronized. | Full load and incremental synchronization |
| The following data types cannot be synchronized: BIT, VARBIT, GEOMETRY, ARRAY, UUID, TSQUERY, TSVECTOR, TXID_SNAPSHOT, and POINT. | Full load and incremental synchronization |
| Prefix indexes cannot be synchronized. If the source database contains prefix indexes, synchronization may fail. | Full load and incremental synchronization |
| Do not use gh-ost or pt-online-schema-change to perform DDL operations on objects during synchronization. Doing so may cause synchronization failures. | Incremental synchronization |
| DML operations supported: INSERT, UPDATE, and DELETE. | Incremental synchronization |
| DDL operation supported: ADD COLUMN only. CREATE TABLE is not supported. To synchronize data from a new table, add the table to the selected objects. For more information, see Add an object to a data synchronization task. | Incremental synchronization |
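You can find problem columns before you select objects by comparing each column's type against the unsupported list. The following is a minimal Python sketch. It assumes you already have a column-name-to-type mapping for each table, and the helper names are hypothetical. It covers only the data type and operation rules in the table above. It does not detect prefix indexes or online DDL tools.

```python
from typing import Dict, List

# Data types listed as unsupported in the limitations table.
UNSUPPORTED_TYPES = {
    "BIT", "VARBIT", "GEOMETRY", "ARRAY", "UUID",
    "TSQUERY", "TSVECTOR", "TXID_SNAPSHOT", "POINT",
}

# Operations replicated during incremental synchronization.
SUPPORTED_DML = {"INSERT", "UPDATE", "DELETE"}
SUPPORTED_DDL = {"ADD COLUMN"}


def unsupported_columns(columns: Dict[str, str]) -> List[str]:
    """Return the names of columns whose type cannot be synchronized.

    columns maps a column name to its type, for example {"id": "INT", "flags": "BIT(8)"}.
    """
    result = []
    for name, col_type in columns.items():
        # Strip length or precision arguments such as BIT(8) before comparing.
        base_type = col_type.split("(", 1)[0].strip().upper()
        if base_type in UNSUPPORTED_TYPES:
            result.append(name)
    return result


def is_replicated(operation: str) -> bool:
    """Return True if an incremental operation is in the supported set."""
    # Normalize case and collapse repeated whitespace, e.g. "add  column".
    op = " ".join(operation.upper().split())
    return op in SUPPORTED_DML or op in SUPPORTED_DDL


if __name__ == "__main__":
    print(unsupported_columns({"id": "INT", "flags": "BIT(8)"}))  # ['flags']
    print(is_replicated("CREATE TABLE"))  # False
```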

Term mappings

| PolarDB for MySQL | AnalyticDB for PostgreSQL |
|---|---|
| Database | Schema |
| Table | Table |
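This mapping means that each source database becomes a schema in the destination database you specify when you configure the channel. The following minimal Python sketch shows that naming rule. `map_object` and the `renames` dictionary are hypothetical stand-ins for the console's object name mapping feature. That feature can also direct several source tables into one destination table, which is how many-to-one synchronization is set up.

```python
from typing import Dict, Optional, Tuple


def map_object(
    database: str,
    table: str,
    renames: Optional[Dict[Tuple[str, str], Tuple[str, str]]] = None,
) -> str:
    """Return the destination "schema.table" for a PolarDB "database.table".

    By default the database name becomes the schema name and the table name
    is kept. renames (hypothetical) maps a source (database, table) pair to a
    destination (schema, table) pair, similar to object name mapping.
    """
    schema, target_table = (renames or {}).get((database, table), (database, table))
    return f"{schema}.{target_table}"


if __name__ == "__main__":
    print(map_object("shop", "orders"))  # shop.orders
    # Many-to-one: two sharded source tables written into one destination table.
    shards = {
        ("shop_01", "orders"): ("shop", "orders"),
        ("shop_02", "orders"): ("shop", "orders"),
    }
    print(map_object("shop_01", "orders", shards))  # shop.orders
    print(map_object("shop_02", "orders", shards))  # shop.orders
```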

Set up a synchronization task

Step 1: Purchase a DTS instance

Purchase a DTS instance. On the buy page, set the following options:

- Source Instance: PolarDB
- Target Instance: AnalyticDB for PostgreSQL
- Synchronization Topology: One-Way Synchronization

Step 2: Configure the synchronization channel

-

Log on to the DTS console.

-

In the left-side navigation pane, click Data Synchronization.

-

At the top of the Synchronization Tasks page, select the region where the destination instance resides.

-

Find the synchronization instance, then click Configure Synchronization Channel in the Actions column.

-

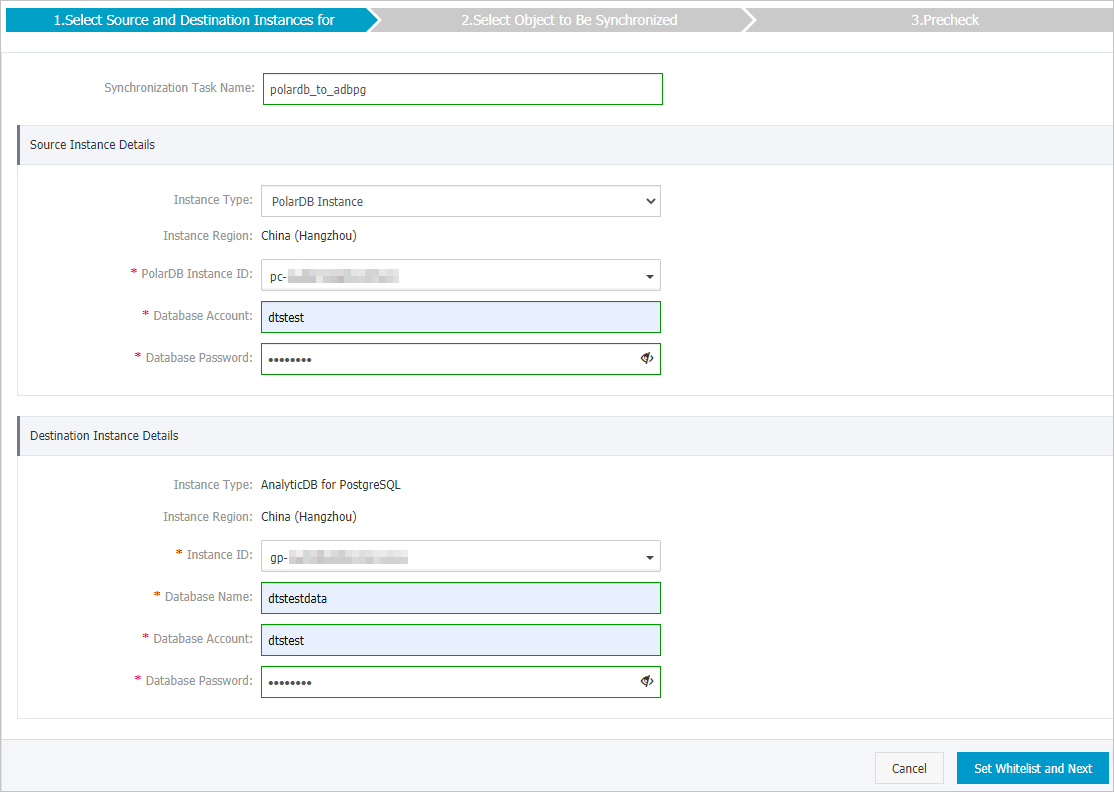

Configure the source and destination instances.

Source instance details

| Parameter | Description |
|---|---|
| Synchronization task name | DTS automatically generates a name. Specify a descriptive name for easy identification. The name does not need to be unique. |
| Instance type | Fixed as PolarDB Instance. |
| Instance region | The source region you selected on the buy page. Read-only. |
| PolarDB instance ID | Select the ID of the PolarDB for MySQL cluster. |
| Database account | Enter the database account of the PolarDB for MySQL cluster. The account must have read permissions on the objects to be synchronized. |
| Database password | Enter the password of the database account. |

Destination instance details

| Parameter | Description |
|---|---|
| Instance type | Fixed as AnalyticDB for PostgreSQL. |
| Instance region | The destination region you selected on the buy page. Read-only. |
| Instance ID | Select the ID of the AnalyticDB for PostgreSQL instance. |
| Database name | Enter the name of the destination database in the AnalyticDB for PostgreSQL instance. |
| Database account | Enter the initial account of the AnalyticDB for PostgreSQL instance. You can also use an account that has the RDS_SUPERUSER permission. For more information, see Create a database account and Manage users and permissions. |
| Database password | Enter the password of the database account. |

- Click Set Whitelist and Next in the lower-right corner.

DTS adds the CIDR blocks of DTS servers to the whitelists of both the PolarDB for MySQL cluster and the AnalyticDB for PostgreSQL instance. This lets DTS servers connect to the source cluster and the destination instance.

-

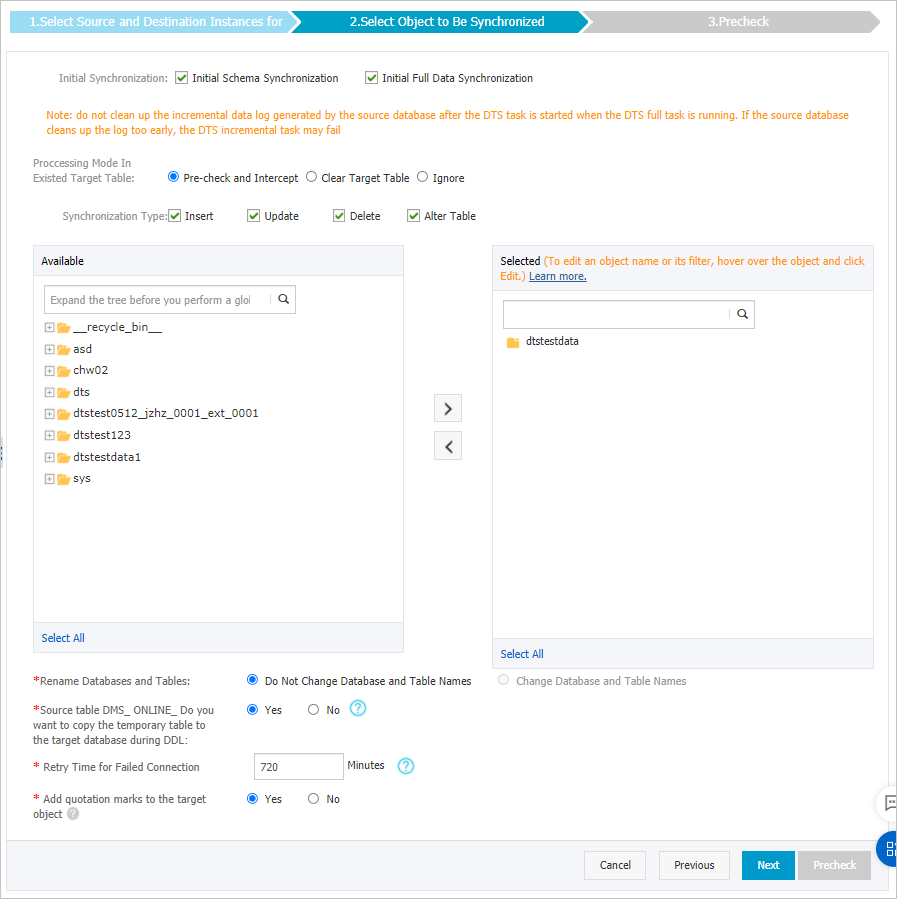

Select the synchronization policy and the objects to be synchronized.

Setting Parameter Description Synchronization policy Initial synchronization Select both Initial Schema Synchronization and Initial Full Data Synchronization in most cases. DTS synchronizes the schemas and data of the required objects from the source to the destination after the precheck. These form the basis for subsequent incremental synchronization. Processing mode of conflicting tables Clear Target Table: Skips the Schema Name Conflict item during the precheck. Clears data in the destination table before initial full data synchronization. Select this mode if you want to synchronize business data after testing. Ignore: Skips the Schema Name Conflict item during the precheck. Adds data to existing data during initial full data synchronization. Select this mode if you want to synchronize data from multiple tables to one table. Synchronization type Select the operation types to synchronize: Insert, Update, Delete, AlterTable. Objects to synchronize N/A Select tables from the Available section and click the  icon to move them to the Selected section. Only tables can be selected.

icon to move them to the Selected section. Only tables can be selected.Rename databases and tables N/A Use the object name mapping feature to rename objects in the destination instance. For more information, see Object name mapping. Replicate temporary tables when DMS performs DDL operations N/A If you use Data Management (DMS) to perform online DDL operations on the source database, choose whether to synchronize the temporary tables generated by those operations. Yes: DTS synchronizes temporary table data. If online DDL operations generate a large amount of data, the synchronization task may be delayed. No: DTS skips temporary tables and synchronizes only the original DDL data from the source. Tables in the destination database may be locked. Retry time for failed connections N/A By default, DTS retries failed connections for up to 720 minutes (12 hours). If DTS reconnects within the specified period, the synchronization task resumes. Otherwise, the task fails. You are charged for the DTS instance during retry attempts. We recommend that you specify the retry time based on your business needs. You can also release the DTS instance at your earliest opportunity after the source and destination instances are released.

-

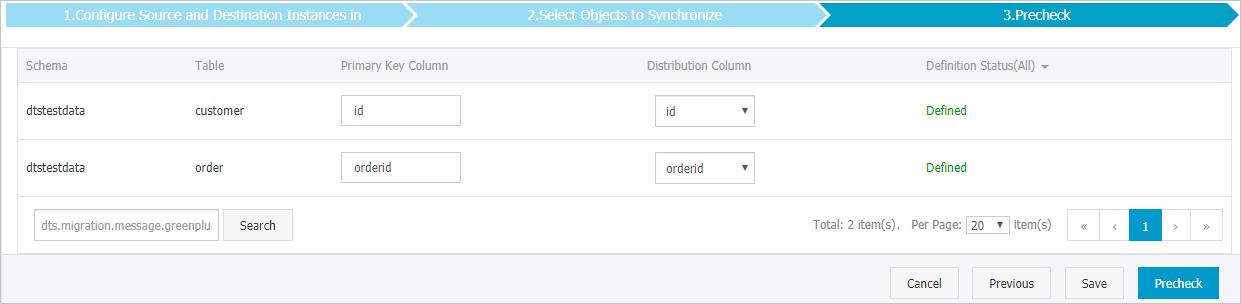

Specify the primary key column and distribution column for each table to be synchronized to the AnalyticDB for PostgreSQL instance.

This step only appears if you selected Initial Schema Synchronization. For more information about primary key columns and distribution columns, see Define constraints and Define table distribution.

-

Click Precheck in the lower-right corner.

DTS runs a precheck before starting the synchronization task. The task starts only after the precheck passes. If any items fail, click the

icon next to the failed item to view details. Fix the issues and run the precheck again. If you choose not to fix certain issues, ignore those failed items and run the precheck again.

icon next to the failed item to view details. Fix the issues and run the precheck again. If you choose not to fix certain issues, ignore those failed items and run the precheck again. -

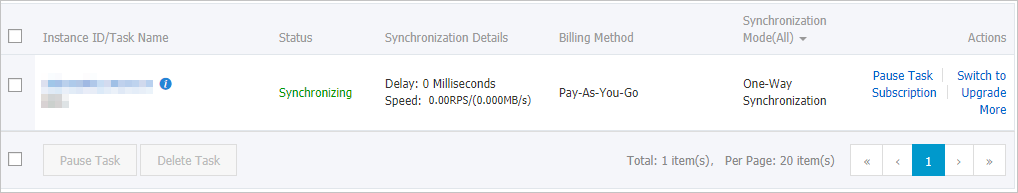

After The precheck is passed. is displayed, close the dialog box. The synchronization task starts automatically.

-

Wait for the initial synchronization to complete. The task status changes to Synchronizing on the Synchronization Tasks page.
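When you click Set Whitelist and Next, DTS adds the CIDR blocks of its servers to the whitelists of the source cluster and the destination instance. If you need to check whether a given server address is covered by a whitelist, you can use Python's standard `ipaddress` module, as in the minimal sketch below. The CIDR blocks and addresses are documentation-only example ranges (RFC 5737). They are not actual DTS server addresses, and the function name is hypothetical.

```python
import ipaddress
from typing import Iterable


def is_whitelisted(address: str, cidr_blocks: Iterable[str]) -> bool:
    """Return True if address falls inside any of the whitelisted CIDR blocks."""
    ip = ipaddress.ip_address(address)
    # strict=False accepts blocks written with host bits set, such as 192.0.2.10/24.
    return any(ip in ipaddress.ip_network(block, strict=False) for block in cidr_blocks)


if __name__ == "__main__":
    # Example whitelist using documentation-only ranges (not real DTS addresses).
    whitelist = ["192.0.2.0/24", "198.51.100.128/25"]
    print(is_whitelisted("192.0.2.45", whitelist))     # True
    print(is_whitelisted("198.51.100.10", whitelist))  # False
```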
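The Retry time for failed connections setting acts as a deadline on reconnection attempts. If a connection succeeds before the window ends, the task resumes. Otherwise, the task fails. The Python sketch below illustrates that behavior in general terms and is not how DTS is implemented. The `connect` callable, the fixed 60-second retry interval, and the injectable clock are assumptions that keep the example self-contained and testable.

```python
import time
from typing import Callable


def retry_until_deadline(
    connect: Callable[[], bool],
    retry_minutes: float = 720,
    interval_seconds: float = 60,
    clock: Callable[[], float] = time.monotonic,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Call connect() until it succeeds or the retry window closes.

    Returns True if the connection is restored within the window (the task
    would resume) and False if the window expires first (the task would fail).
    """
    deadline = clock() + retry_minutes * 60
    while clock() < deadline:
        if connect():
            return True
        sleep(interval_seconds)
    return False


class FakeClock:
    """Simulated clock so the example runs instantly instead of waiting hours."""

    def __init__(self) -> None:
        self.now = 0.0

    def time(self) -> float:
        return self.now

    def sleep(self, seconds: float) -> None:
        self.now += seconds


if __name__ == "__main__":
    # Source becomes reachable after 2 hours: within the default 720-minute window.
    brief_outage = FakeClock()
    ok = retry_until_deadline(lambda: brief_outage.now >= 2 * 3600,
                              clock=brief_outage.time, sleep=brief_outage.sleep)
    print("resumed" if ok else "failed")  # resumed

    # Source becomes reachable after 30 hours: the window expires first.
    long_outage = FakeClock()
    ok = retry_until_deadline(lambda: long_outage.now >= 30 * 3600,
                              clock=long_outage.time, sleep=long_outage.sleep)
    print("resumed" if ok else "failed")  # failed
```

Because DTS charges for the instance during the whole retry window, a shorter window trades tolerance of long outages for lower cost.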
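The primary key column and distribution column you choose for each table determine how the destination table is defined. DTS generates the schema for you. The following Python sketch only shows what such a statement looks like for a hash-distributed table, using the Greenplum-style `DISTRIBUTED BY` clause. It assumes that, as in Greenplum, the distribution columns must be a subset of the primary key columns. The function name is hypothetical, and identifiers are not quoted, for brevity.

```python
from typing import Sequence, Tuple


def build_create_table(
    schema: str,
    table: str,
    columns: Sequence[Tuple[str, str]],
    primary_key: Sequence[str],
    distribution_key: Sequence[str],
) -> str:
    """Build a CREATE TABLE statement with a primary key and a distribution key.

    Raises ValueError if a key is empty, references an unknown column, or if a
    distribution column is not part of the primary key (assumed rule).
    """
    if not primary_key or not distribution_key:
        raise ValueError("primary key and distribution key must not be empty")
    names = [name for name, _ in columns]
    for col in list(primary_key) + list(distribution_key):
        if col not in names:
            raise ValueError(f"unknown column: {col}")
    # Assumption: the distribution key must be a subset of the primary key.
    missing = [col for col in distribution_key if col not in primary_key]
    if missing:
        raise ValueError(f"distribution columns not in primary key: {missing}")
    column_defs = ",\n  ".join(f"{name} {col_type}" for name, col_type in columns)
    return (
        f"CREATE TABLE {schema}.{table} (\n"
        f"  {column_defs},\n"
        f"  PRIMARY KEY ({', '.join(primary_key)})\n"
        f") DISTRIBUTED BY ({', '.join(distribution_key)});"
    )


if __name__ == "__main__":
    print(build_create_table(
        "shop", "orders",
        [("order_id", "bigint"), ("customer_id", "bigint"), ("amount", "numeric(10,2)")],
        primary_key=["order_id"],
        distribution_key=["order_id"],
    ))
```

Choosing a high-cardinality column such as `order_id` as the distribution column spreads rows evenly across compute nodes.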

Performance considerations

DTS uses the read and write resources of both the source and destination databases during initial full data synchronization, which increases the load on both servers. If a database has a low-performance specification or holds a large volume of data, the additional load may cause service disruption. The risk is higher in the following situations:

- The source database has a large number of slow SQL queries.
- Some tables have no primary keys.
- Deadlocks occur in the destination database.

Schedule synchronization during off-peak hours when CPU utilization on both databases is below 30%.
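One way to apply the 30% guideline is to check recent CPU utilization samples from both databases before you start the task. The following is a minimal Python sketch. It assumes you have already exported CPU utilization samples, in percent, from your monitoring tool, and the function name is hypothetical. It requires every sample to be below the threshold, which is a conservative choice. Averaging the samples instead would tolerate short spikes.

```python
from typing import Sequence

CPU_THRESHOLD = 30.0  # percent, from the off-peak guidance above


def is_off_peak(source_cpu: Sequence[float], dest_cpu: Sequence[float],
                threshold: float = CPU_THRESHOLD) -> bool:
    """Return True if every CPU sample on both databases is below the threshold.

    Each sequence holds recent CPU utilization samples (percent) for one
    database, for example the last hour of monitoring data.
    """
    if not source_cpu or not dest_cpu:
        return False  # no data: do not assume it is safe to start
    return max(source_cpu) < threshold and max(dest_cpu) < threshold


if __name__ == "__main__":
    print(is_off_peak([12.5, 18.0, 22.1], [8.0, 9.5, 11.2]))  # True
    print(is_off_peak([12.5, 41.0, 22.1], [8.0, 9.5, 11.2]))  # False
```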

Concurrent INSERT operations during initial full data synchronization cause fragmentation in destination tables. After initial full data synchronization completes, the tablespace of the destination instance is larger than that of the source cluster.