Use Data Transmission Service (DTS) to migrate data from an Amazon Aurora MySQL cluster to a PolarDB for MySQL cluster. DTS supports schema migration, full data migration, and incremental data migration. Combining all three types lets your services continue running during migration with minimal downtime.

When to use DTS for this migration

DTS is the right tool when:

-

The source Amazon Aurora MySQL cluster is publicly accessible or can be reached via VPN

-

You need incremental data synchronization to minimize cutover downtime

-

You want automated precheck, retry, and monitoring without managing custom scripts

If you prefer a tool-based offline migration (for example, using mysqldump for small datasets), see the Amazon Aurora MySQL documentation for native export options.

Prerequisites

Before you begin, make sure you have:

-

Network connectivity between DTS and the source Amazon Aurora MySQL cluster. Set Publicly accessible to Yes in the network and security settings of the source cluster, then set Access Method to Public IP Address when configuring the migration task. DTS connects to the source cluster over the internet.

To connect DTS to Amazon Aurora MySQL through a private network, see Use IPsec-VPN to connect Alibaba Cloud VPCs to Amazon VPCs.

-

A PolarDB for MySQL cluster. See Purchase an Enterprise Edition cluster.

-

Available storage in the PolarDB for MySQL cluster that exceeds the total data size in the Amazon Aurora MySQL cluster.
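To compare the source data size with the available storage in the destination, one option is to sum the table and index sizes that MySQL reports in information_schema. This is a rough sketch, not an exact measurement: the figures are storage engine estimates, and the list of excluded system schemas is an assumption you may need to adjust.

```sql
-- Run on the source Amazon Aurora MySQL cluster.
-- Approximate size per database, in GB (data plus indexes).
SELECT table_schema AS database_name,
       ROUND(SUM(data_length + index_length) / 1024 / 1024 / 1024, 2) AS size_gb
FROM information_schema.tables
WHERE table_schema NOT IN ('mysql', 'information_schema', 'performance_schema', 'sys')
GROUP BY table_schema
ORDER BY size_gb DESC;
```

Leave headroom beyond this total, because the destination tables can end up larger than the source tables after full data migration.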

How it works

DTS supports three migration types that you can combine based on your needs:

Schema migration: Migrates the schemas of tables, views, triggers, stored procedures, and functions. Events are not supported. During migration, DTS changes the SECURITY attribute from DEFINER to INVOKER for views, stored procedures, and stored functions. DTS does not migrate user information, so to call these objects in the destination database you must first grant read and write permissions to INVOKER.

Full data migration: Copies historical data from the Amazon Aurora MySQL cluster to PolarDB for MySQL. Concurrent INSERT operations during this phase cause fragmentation in destination tables, so the destination tablespace size will be larger than the source after migration completes.

Incremental data migration: After full data migration completes, DTS reads binary log files from the Amazon Aurora MySQL cluster and continuously synchronizes changes to PolarDB for MySQL. This keeps the two databases in sync so you can switch workloads with minimal downtime.
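The schema migration behavior described above can be checked with standard information_schema queries. The following is a minimal sketch; it assumes a single database, written as the placeholder <database_name>.

```sql
-- Run on the destination after schema migration.
-- Views: SECURITY_TYPE is expected to be INVOKER.
SELECT table_schema, table_name, security_type
FROM information_schema.views
WHERE table_schema = '<database_name>';

-- Stored procedures and functions: SECURITY_TYPE is expected to be INVOKER.
SELECT routine_schema, routine_name, routine_type, security_type
FROM information_schema.routines
WHERE routine_schema = '<database_name>';

-- Run on the source: events are not migrated, so list any that
-- need to be recreated manually in the destination.
SELECT event_schema, event_name, status
FROM information_schema.events
WHERE event_schema = '<database_name>';
```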

Billing

| Migration type | Task configuration fee | Internet traffic fee |

|---|---|---|

| Schema migration and full data migration | Free | Charged only when data is migrated from Alibaba Cloud over the internet. See Billing overview. |

| Incremental data migration | Charged. See Billing overview. | Charged only when data is migrated from Alibaba Cloud over the internet. See Billing overview. |

Permissions required for database accounts

| Database | Schema migration | Full data migration | Incremental data migration |

|---|---|---|---|

| Amazon Aurora MySQL | SELECT on objects to migrate | SELECT on objects to migrate | SELECT, REPLICATION SLAVE, REPLICATION CLIENT, SHOW VIEW on objects to migrate |

| PolarDB for MySQL | Read and write on objects to migrate | Read and write on objects to migrate | Read and write on objects to migrate |

Grant the required permissions to your Amazon Aurora MySQL account. Replace <dts_user> with your account name and <database_name> with the name of the database to migrate:

-- Required for all migration types

GRANT SELECT ON <database_name>.* TO '<dts_user>'@'%';

-- Additional permissions for incremental data migration

GRANT REPLICATION SLAVE ON *.* TO '<dts_user>'@'%';

GRANT REPLICATION CLIENT ON *.* TO '<dts_user>'@'%';

GRANT SHOW VIEW ON <database_name>.* TO '<dts_user>'@'%';

For detailed setup instructions, see:

-

Amazon Aurora MySQL: Create an account for a self-managed MySQL database and configure binary logging

-

PolarDB for MySQL: Create a database account
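If the source account does not exist yet, it can be created with standard MySQL syntax, and the result of the GRANT statements can be confirmed with SHOW GRANTS. This is a sketch only: <password> is a placeholder introduced here, and the '%' host matches the GRANT statements above.

```sql
-- Run on the source Amazon Aurora MySQL cluster.
-- Create the account before granting permissions, if it does not exist.
CREATE USER '<dts_user>'@'%' IDENTIFIED BY '<password>';

-- After running the GRANT statements, list what the account holds.
SHOW GRANTS FOR '<dts_user>'@'%';
```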

Limitations

General:

-

DTS uses read and write resources on both databases during full data migration, which increases server load. Run migrations during off-peak hours when CPU utilization on both databases is below 30%.

-

The tables to migrate must have PRIMARY KEY or UNIQUE constraints, and all fields must be unique. Without these constraints, the destination database may contain duplicate records. Workaround: Add a primary key or unique index to the source tables before starting migration.

-

If the source database name is invalid, create the database in the PolarDB for MySQL cluster before configuring the migration task. See Database Management for naming conventions.

-

If a migration task fails and DTS resumes it automatically, stop or release the task before switching workloads to the destination database. Otherwise, the resumed task will overwrite destination data with source data.
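To find source tables affected by the PRIMARY KEY or UNIQUE constraint requirement in the list above, a query along the following lines can help. It is a sketch that assumes one database, written as the placeholder <database_name>; the names in the commented ALTER TABLE statement are hypothetical.

```sql
-- Run on the source Amazon Aurora MySQL cluster.
-- Lists base tables that have neither a PRIMARY KEY nor a UNIQUE constraint.
SELECT t.table_schema, t.table_name
FROM information_schema.tables AS t
LEFT JOIN information_schema.table_constraints AS c
       ON c.table_schema = t.table_schema
      AND c.table_name = t.table_name
      AND c.constraint_type IN ('PRIMARY KEY', 'UNIQUE')
WHERE t.table_type = 'BASE TABLE'
  AND t.table_schema = '<database_name>'
  AND c.constraint_name IS NULL;

-- Possible fix for a table returned above (hypothetical names):
-- ALTER TABLE <database_name>.<table_name> ADD PRIMARY KEY (<column_name>);
```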

Data type handling:

-

DTS uses the ROUND(COLUMN,PRECISION) function for FLOAT and DOUBLE columns. The default precision is 38 digits for FLOAT and 308 digits for DOUBLE. Verify these defaults meet your requirements before migrating. Workaround: If your application requires different precision, pre-create the destination tables with explicit column definitions before starting migration.
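To see which columns the FLOAT and DOUBLE handling applies to, you can list them from information_schema before migrating. A minimal sketch, assuming one database written as the placeholder <database_name>:

```sql
-- Run on the source Amazon Aurora MySQL cluster.
-- Lists every FLOAT and DOUBLE column with its declared type.
SELECT table_name, column_name, column_type
FROM information_schema.columns
WHERE table_schema = '<database_name>'
  AND data_type IN ('float', 'double')
ORDER BY table_name, ordinal_position;
```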

Prepare the source database

Configure your Amazon Aurora MySQL cluster to allow DTS connections and enable binary logging for incremental migration.

Add DTS server CIDR blocks to the security group:

-

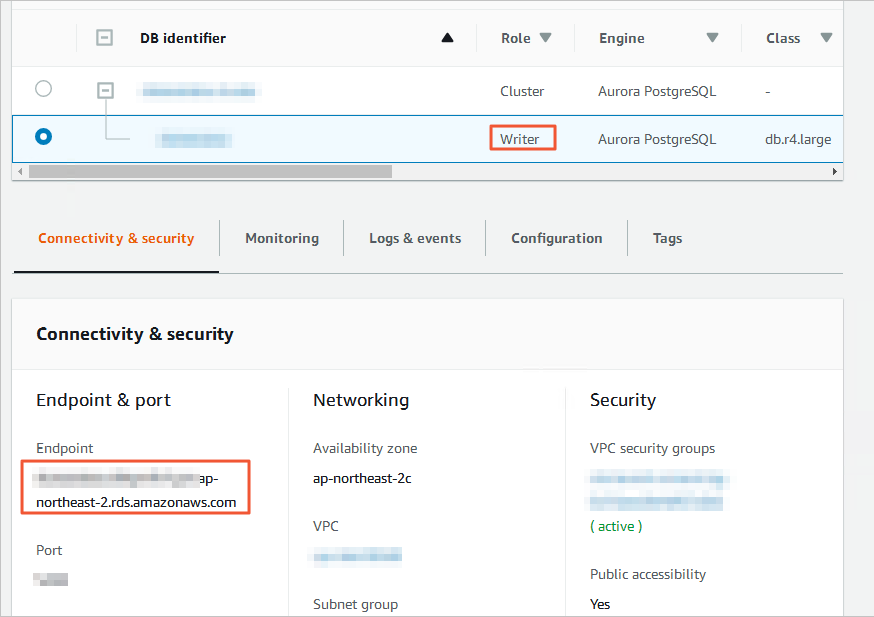

Log on to the Amazon Aurora console and click Databases in the left navigation pane.

-

Click the DB identifier of the node with Role set to Writer instance.

-

On the Connectivity & security tab, click the VPC security group name.

-

On the Security Groups page, click the security group ID.

-

On the Inbound rules tab, click Edit inbound rules.

-

Click Add rule, add the CIDR blocks of DTS servers in the same region as your destination database, then click Save rules. See Add the CIDR blocks of DTS servers for the full list of CIDR blocks.

Add only the CIDR blocks for the region where your destination database resides. For example, if the source is in Singapore and the destination is in China (Hangzhou), add only the China (Hangzhou) CIDR blocks. You can add all required blocks in a single rule.

Set the binary log retention period (required for incremental data migration):

Log on to the source Amazon Aurora MySQL cluster and run:

CALL mysql.rds_set_configuration('binlog retention hours', 24);

This sets the retention period to 24 hours. The maximum is 168 hours (7 days).
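To confirm that the new retention value took effect, the companion stored procedure mysql.rds_show_configuration can be called on the same cluster. This sketch assumes the connected account is allowed to execute it.

```sql
-- Run on the source Amazon Aurora MySQL cluster.
-- Displays the current configuration, including 'binlog retention hours'.
CALL mysql.rds_show_configuration;
```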

Also confirm the following binary logging settings are in place:

| Parameter | Required value | Why |
|---|---|---|
| binlog_format | row | Row-based logging captures the exact data changes needed for accurate replication. |
| binlog_row_image | full (MySQL 5.6 and later) | Full row images ensure DTS can reconstruct all column values, not just changed columns. |

Contact Amazon support or see the Amazon documentation if you need to modify these settings.
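The current values of these settings can be read with a standard SHOW VARIABLES statement. The sketch below also includes log_bin, an addition here that indicates whether binary logging is enabled at all.

```sql
-- Run on the source Amazon Aurora MySQL cluster.
-- Expected: log_bin = ON, binlog_format = ROW, binlog_row_image = FULL.
SHOW VARIABLES
WHERE Variable_name IN ('log_bin', 'binlog_format', 'binlog_row_image');
```

In Aurora, these values are generally changed through the DB cluster parameter group rather than with SET statements, so a mismatch usually means editing the parameter group on the Amazon side.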

Create the migration task

The following steps use the new DTS console. See Create the migration task (old console) if you are using the legacy interface.

-

Go to the Data Migration Tasks page:

-

In the Data Management (DMS) console, click DTS in the top navigation bar, then choose DTS (DTS) > Data Migration in the left navigation pane.

-

Or go directly to Data Migration Tasks.

Navigation options vary by DMS console mode. See Simple mode and Customize the layout and style of the DMS console.

-

From the drop-down list next to Data Migration Tasks, select the region where the migration instance will reside.

-

Click Create Task. Read the Limits displayed at the top of the page before configuring the source and destination databases.

Configure the source database:

| Parameter | Value |
|---|---|
| Select a DMS database instance | Select an existing instance or leave blank to configure manually. |
| Database Type | MySQL |
| Access Method | Public IP Address |
| Instance Region | The region where the Amazon Aurora MySQL cluster resides. If the region isn't listed, select the geographically closest region. |
| Hostname or IP Address | The endpoint of the Amazon Aurora MySQL cluster. Find this on the cluster's basic information page. |
| Port Number | 3306 (default) |
| Database Account | The account with the permissions listed in Permissions required for database accounts. |
| Database Password | The account password. |

Configure the destination database:

| Parameter | Value |
|---|---|
| Select a DMS database instance | Select an existing instance or leave blank to configure manually. |
| Database Type | PolarDB for MySQL |
| Access Method | Alibaba Cloud Instance |
| Instance Region | The region where the PolarDB for MySQL cluster resides. |
| PolarDB Cluster ID | The ID of the destination PolarDB for MySQL cluster. |
| Database Account | The account with the permissions listed in Permissions required for database accounts. |
| Database Password | The account password. |

-

Click Test Connectivity and Proceed.

-

If an IP address whitelist is configured, add the DTS server CIDR blocks to it, then click Test Connectivity and Proceed.

Warning: Adding DTS server CIDR blocks to database whitelists or security group rules carries security risks. Before proceeding, take protective measures such as: using strong credentials, limiting exposed ports, authenticating API calls, auditing whitelist rules regularly, and removing unauthorized CIDR blocks. Alternatively, connect the database to DTS using Express Connect, VPN Gateway, or Smart Access Gateway.

-

Configure the objects to migrate:

| Parameter | Description |
|---|---|
| Migration Types | Select Schema Migration and Full Data Migration for a one-time migration. Select all three types to keep services running during migration. If you omit Incremental Data Migration, stop writing to the source database during migration to maintain data consistency. |
| Processing Mode of Conflicting Tables | Precheck and Report Errors: Fails the precheck if source and destination have tables with identical names. Use object name mapping to rename conflicting tables. Ignore Errors and Proceed: Skips the identical-name check. DTS will skip records with matching primary keys, and schema differences may cause partial migration or task failure. |
| Method to Migrate Triggers in Source Database | Available when Schema Migration is selected. See Synchronize or migrate triggers from the source database. |
| Capitalization of Object Names in Destination Instance | Controls case for database names, table names, and column names in the destination. Default is DTS default policy. See Specify the capitalization of object names in the destination instance. |
| Source Objects | Select objects from the Source Objects section and click the icon to add them to Selected Objects. Selecting tables or columns excludes views, triggers, and stored procedures. |
| Selected Objects | Right-click an object to rename it or set WHERE filter conditions. Click Batch Edit to rename multiple objects at once. Note that renaming objects may break dependent objects. See Map object names and Set filter conditions. |

-

Click Next: Advanced Settings and configure:

| Parameter | Description |
|---|---|
| Data Verification Settings | See Configure data verification. |
| Dedicated Cluster for Task Scheduling | By default, DTS uses a shared cluster. Purchase a dedicated cluster for isolated workloads. See What is a DTS dedicated cluster? |
| Monitoring and Alerting | Set to Yes to receive alerts when the task fails or latency exceeds the threshold. See Configure monitoring and alerting when you create a DTS task. |
| Select the engine type of the destination database | InnoDB (default) or X-Engine (an online transaction processing (OLTP) storage engine). |
| Copy the temporary table of the Online DDL tool that is generated in the source table to the destination database | Controls how DTS handles temporary tables from online DDL tools. Yes: migrates temporary table data (may cause latency for large DDL operations). No, Adapt to DMS Online DDL: migrates only the original DDL from DMS (may lock destination tables). No, Adapt to gh-ost: migrates only the original DDL from gh-ost (may lock destination tables). Note: pt-online-schema-change is not supported and will cause the task to fail. |
| Retry Time for Failed Connections | How long DTS retries after a connection failure. Range: 10–1440 minutes. Default: 720 minutes. Set this to 30 minutes or more. If multiple tasks share a source or destination database, the shortest retry time applies across all tasks. |
| Retry Time for Other Issues | How long DTS retries after DDL or DML failures. Range: 1–1440 minutes. Default: 10 minutes. Set this to 10 minutes or more. This value must be less than Retry Time for Failed Connections. |
| Enable Throttling for Full Data Migration | Set to Yes to limit the queries per second (QPS), requests per second (RPS), or bytes per second (BPS) to the source database. Available when Full Data Migration is selected. |
| Enable Throttling for Incremental Data Migration | Set to Yes to limit the RPS or BPS for incremental migration. Available when Incremental Data Migration is selected. |
| Configure ETL | Set to Yes to enable extract, transform, and load (ETL) processing. Enter data transformation statements in the code editor. See Configure ETL in a data migration or data synchronization task. |
| Whether to delete SQL operations on heartbeat tables of forward and reverse tasks | Yes: DTS does not write heartbeat table operations to the source database. Latency may appear in the DTS instance. No: DTS writes heartbeat table operations, which may affect physical backup and cloning of the source database. |

-

Click Next: Save Task Settings and Precheck. DTS runs a precheck before starting the task. If the precheck fails:

-

Click View Details next to each failed item, fix the issue, then click Precheck Again.

-

For alert items that can be ignored, click Confirm Alert Details, then Ignore, then OK, then Precheck Again. Ignoring alerts may result in data inconsistency.

Click Preview OpenAPI parameters to view the API parameters for this configuration before saving.

-

Wait until the success rate reaches 100%, then click Next: Purchase Instance.

-

Configure the instance class:

| Parameter | Description |
|---|---|
| Resource Group Settings | The resource group for the migration instance. Default: default resource group. See What is Resource Management? |
| Instance Class | The migration speed varies by instance class. See Instance classes of data migration instances. |

-

Select the Data Transmission Service (Pay-as-you-go) Service Terms check box.

-

Click Buy and Start. Monitor progress in the task list.

Create the migration task (old console)

-

Log on to the DTS console.

If you are redirected to the DMS console, click the icon that takes you back to the previous DTS console.

-

In the left navigation pane, click Data Migration.

-

At the top of the Migration Tasks page, select the region where the destination cluster resides.

-

In the upper-right corner, click Create Migration Task.

-

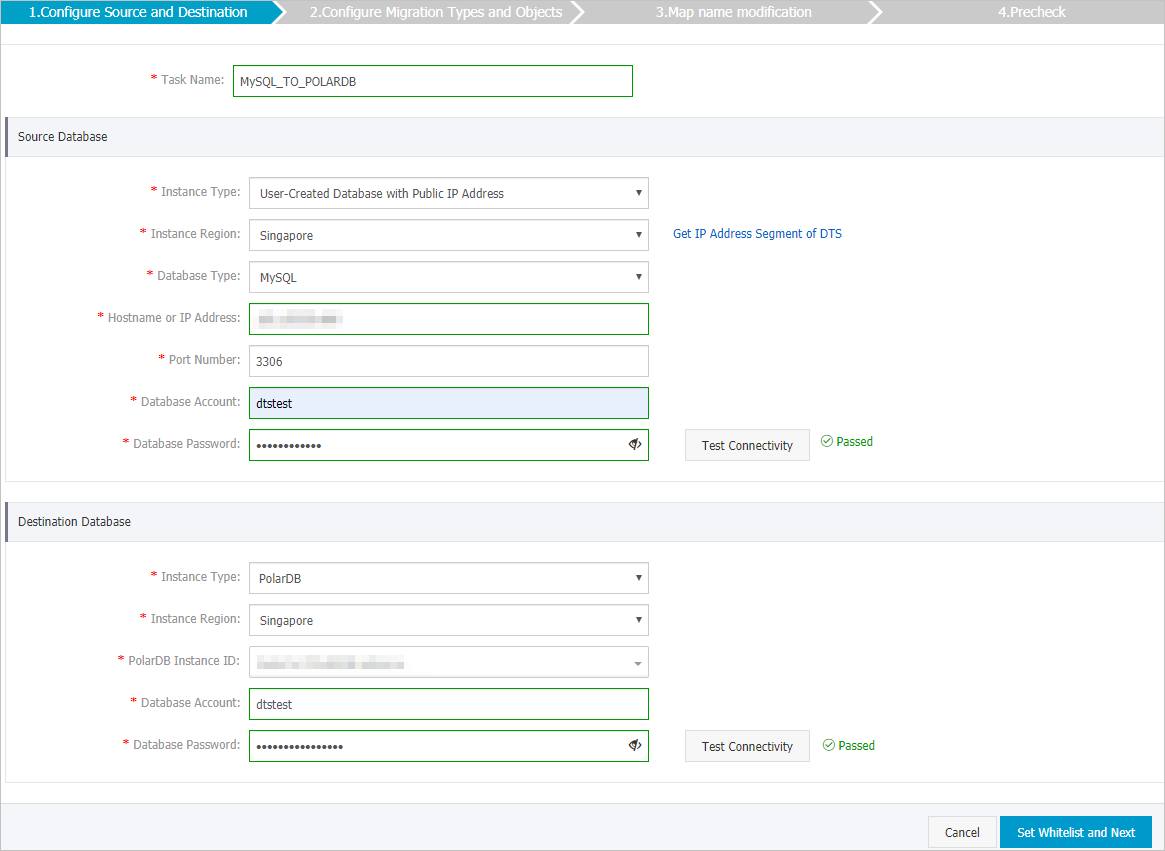

Configure the source and destination databases.

Source database:

| Parameter | Value |
|---|---|
| Task Name | A descriptive name for the task. Names don't need to be unique. |
| Instance Type | User-Created Database with Public IP Address |
| Instance Region | Not required when User-Created Database with Public IP Address is selected. |
| Database Type | MySQL |
| Hostname or IP Address | The endpoint of the Amazon Aurora MySQL cluster. Find this on the cluster's basic information page. |
| Port Number | 3306 (default) |
| Database Account | The account with the permissions listed in Permissions required for database accounts. |
| Database Password | The account password. Click Test Connectivity to verify. A Passed message confirms the connection. |

Destination database:

| Parameter | Value |
|---|---|
| Instance Type | PolarDB |
| Instance Region | The region where the PolarDB for MySQL cluster resides. |
| PolarDB Instance ID | The ID of the destination PolarDB for MySQL cluster. |
| Database Account | The account with the permissions listed in Permissions required for database accounts. |
| Database Password | The account password. Click Test Connectivity to verify. |

-

Click Set Whitelist and Next. DTS automatically adds its CIDR blocks to the whitelist of Alibaba Cloud database instances and ECS security groups. For self-managed databases from other providers, add the CIDR blocks manually. See Add the CIDR blocks of DTS servers.

Warning: Adding DTS server CIDR blocks to database whitelists or ECS security group rules carries security risks. Before proceeding, take protective measures such as: using strong credentials, limiting exposed ports, authenticating API calls, auditing whitelist and security group rules regularly, and removing unauthorized CIDR blocks. Alternatively, connect the database to DTS using Express Connect, VPN Gateway, or Smart Access Gateway.

-

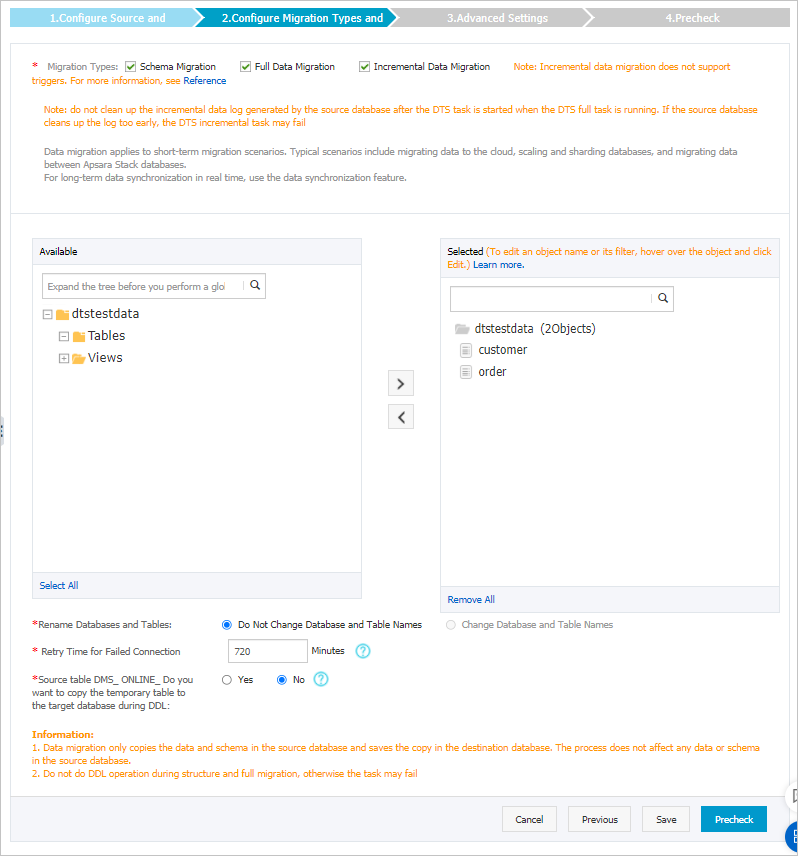

Select the objects to migrate and the migration types.

| Setting | Description |
|---|---|
| Select the migration types | Select Schema Migration and Full Data Migration for a one-time migration. Select all three types to keep services running during migration. If you omit Incremental Data Migration, stop writing to the Amazon Aurora MySQL cluster during migration to maintain data consistency. |
| Select the objects to be migrated | Select objects from Available and click the icon to move them to Selected. You can select columns, tables, or databases. By default, migrated objects keep their original names. Use object name mapping to rename them. Note that renaming objects may break dependent objects. See Object name mapping. |
| Specify whether to rename objects | See Object name mapping. |
| Specify the retry time range for a failed connection | Default: 12 hours. Adjust based on your requirements. If DTS reconnects within the retry window, the task resumes automatically. When DTS retries, you are charged for the instance. |
| Specify whether to copy temporary tables to the destination database when DMS performs online DDL operations | Yes: DTS migrates temporary table data from online DDL operations (may cause latency for large operations). No: DTS migrates only the original DDL data from Data Management (DMS) (may lock tables in the PolarDB for MySQL cluster). |

-

Click Precheck in the lower-right corner. Fix any failed items before proceeding.

-

After the precheck passes, click Next.

-

In the Confirm Settings dialog, configure Channel Specification and select Data Transmission Service (Pay-As-You-Go) Service Terms.

-

Click Buy and Start.

Stop the migration task and switch workloads

How you stop the task depends on the migration types selected.

Schema migration and full data migration only:

Do not manually stop the task. Wait for it to stop automatically. Stopping early may leave the destination database with incomplete data.

Schema migration, full data migration, and incremental data migration:

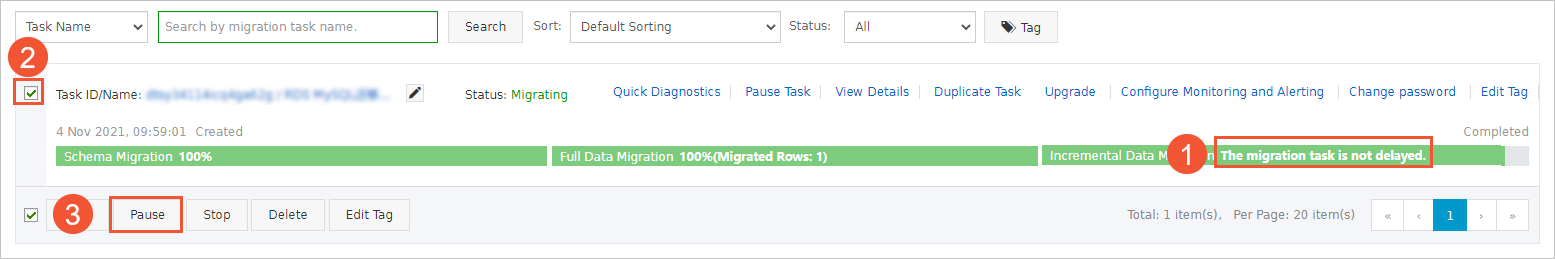

Incremental migration does not stop automatically. Stop it manually at the right time to minimize data loss:

-

Wait until the progress bar shows Incremental Data Migration and The migration task is not delayed.

-

Stop writing data to the source database for a few minutes.

-

Wait until the incremental migration status shows The migration task is not delayed again.

-

Manually stop the migration task.

Stop the task during off-peak hours or immediately before switching workloads to the destination cluster.

After stopping the task, switch your workloads to the PolarDB for MySQL cluster.
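A spot check after writes to the source have stopped can add confidence before the switch. The following sketch compares a table on both sides; <table_name> is a placeholder introduced here, and the checks are illustrative rather than a substitute for DTS data verification.

```sql
-- Run the same statements on the source and on the destination,
-- then compare the results for each table you care about.
SELECT COUNT(*) AS row_count
FROM <database_name>.<table_name>;

-- Optional whole-table checksum. Values can legitimately differ between
-- servers with different versions or row formats, so treat a mismatch
-- as a prompt to investigate rather than proof of data loss.
CHECKSUM TABLE <database_name>.<table_name>;
```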

What's next

-

Database Management — Manage databases in your PolarDB for MySQL cluster

-

Add the CIDR blocks of DTS servers — Reference for DTS server IP ranges

-

Billing overview — DTS pricing details