The database proxy sits between your application and the PolarDB cluster, enabling read/write splitting, connection pooling, and read consistency control without application code changes. This topic explains how to configure proxy settings for a cluster endpoint.

Prerequisites

Before you begin, ensure that you have:

A PolarDB for MySQL Cluster Edition cluster. For details on product editions, see Enterprise Edition.

Usage notes

Only PolarDB for MySQL 8.0 clusters support enabling parallel query and setting the degree of parallelism in the proxy configuration.

Configure proxy settings

Log on to the PolarDB console. In the left navigation pane, click Clusters. Select the region where your cluster is located, then click the cluster ID.

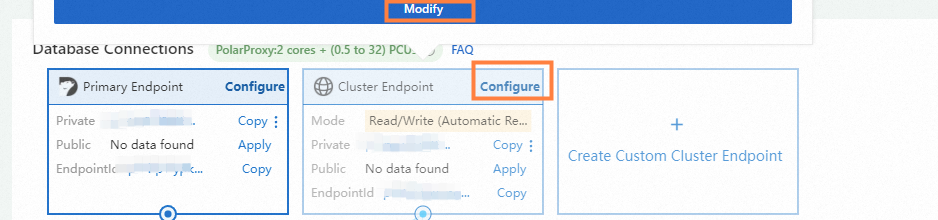

On the Basic Information page, scroll to the Database Connection section. Find the cluster endpoint you want to configure, then click Configure next to the endpoint name.

In the dialog box, modify the settings as needed. The following tables describe each configuration item.

Network Information

| Configuration item | Description |
|---|---|
| Network Information | PolarDB provides a private endpoint for each cluster endpoint by default. To modify the endpoint or request a public endpoint, see Manage endpoints. |

Cluster Settings

| Configuration item | Description |
|---|---|
| Read/Write | The read/write mode of the cluster endpoint. Valid values: Read-only and Read/Write (Automatic Read/Write Splitting). <br>Note: If you change the read/write mode after creating a custom endpoint, the change applies to new connections only. Existing connections retain their original mode. |
| Endpoint Name | The name of the cluster endpoint. |

Node Settings

| Configuration item | Description |
|---|---|
| Available Nodes and Selected Nodes | Select nodes from the Available Nodes list (which includes the primary node and all read-only nodes), then click the arrow icon to move them to the Selected Nodes list. Node selection controls which nodes process read requests, but does not override the routing behavior of the read/write mode: <br>- Read/Write (Automatic Read/Write Splitting) mode: Write requests always go to the primary node, regardless of whether it is in Selected Nodes. <br>- Read-only mode: Read requests are distributed to read-only nodes based on the load balancing policy, even if the primary node is in Selected Nodes. <br>- Multi-master Cluster (Limitless) Edition: Because routing is based on database and table partitioning, add the primary node of the target database and table to the endpoint, or select all primary nodes. Evaluate the routing impact before selecting nodes. |
| Automatically Associate New Nodes | Specifies whether to automatically add new nodes to this endpoint. |

Load Balancing Settings

| Configuration item | Description |
|---|---|
| Load Balancing Policy | The policy used to distribute read requests across nodes when read/write splitting is enabled. <br>- Active Request-based Load Balancing: Routes each request to the node with the fewest active requests. When you reduce a node's weight to 0, new requests stop going to that node immediately. Use this policy when you need to drain a node safely before removing it. <br>- Connections-based Load Balancing: Balances load only at connection establishment. Existing connections remain pinned to their current node; reducing a node's weight to 0 does not reroute existing connections. <br>For details, see Load balancing policy. |
| Primary Node Accepts Read Requests | Controls whether query statements can be sent to the primary node. <br>- No: Query statements go to read-only nodes only, reducing load on the primary node. Select this option to protect primary node stability in write-heavy workloads. <br>- Yes: Query statements can go to both the primary node and read-only nodes. <br>For details, see Primary node accepts read requests. <br>Note: This setting applies only in Read/Write (Automatic Read/Write Splitting) mode. |
| Transaction Splitting | Enable or disable transaction splitting. For details, see Transaction splitting. <br>Note: This setting applies only in Read/Write (Automatic Read/Write Splitting) mode. |
| On-demand Connection Establishment | Enable or disable on-demand connection establishment. For details, see On-demand connection establishment. <br>Note: This setting applies only when Load Balancing Policy is set to Active Request-based Load Balancing. |
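The routing rules described under Node Settings and Load Balancing Settings can be sketched in a few lines of Python. This is an illustrative model only, not the proxy's implementation: the `Node` class, the `route` function, and the mode strings are hypothetical names. Rejecting writes on a Read-only endpoint is an assumption of the sketch, and neither Connections-based Load Balancing nor any fallback when no read-only node is available is modeled.

```python
from dataclasses import dataclass


@dataclass
class Node:
    name: str
    is_primary: bool = False
    weight: int = 1            # a weight of 0 means "send no new requests here"
    active_requests: int = 0


def read_candidates(selected, mode, primary_accepts_reads):
    """Return the nodes in Selected Nodes that may serve a read request."""
    candidates = [n for n in selected if n.weight > 0]
    if mode == "read-only" or not primary_accepts_reads:
        # Reads go to read-only nodes, even if the primary is selected.
        candidates = [n for n in candidates if not n.is_primary]
    return candidates


def route(kind, cluster, selected, mode, primary_accepts_reads=False):
    """Pick a node for one request using active-request-based balancing."""
    if kind == "write":
        if mode != "read/write":
            # Assumption of this sketch: a Read-only endpoint rejects writes.
            raise ValueError("writes require Read/Write mode")
        # Writes always go to the primary, whether or not it is selected.
        return next(n for n in cluster if n.is_primary)
    candidates = read_candidates(selected, mode, primary_accepts_reads)
    if not candidates:
        raise LookupError("no node available for reads")
    # Route to the node with the fewest active requests.
    return min(candidates, key=lambda n: n.active_requests)
```

Setting a node's `weight` to 0 removes it from `read_candidates` on the very next call, which mirrors how the active request-based policy drains a node.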

Consistency Settings

| Configuration item | Description |
|---|---|
| Consistency Level | The read consistency guarantee for this endpoint. <br>- Read/Write (Automatic Read/Write Splitting) mode: Choose Eventual Consistency (Weak), Session Consistency (Medium), or Global Consistency (Strong). Use Eventual Consistency (Weak) for highest throughput; use Global Consistency (Strong) when reads must reflect the latest writes. <br>- Read-only mode: Fixed at Eventual Consistency (Weak); cannot be changed. <br>For details, see Consistency level. <br>Important: Changes to the consistency level take effect immediately for all connections. Global Consistency (High-performance Mode) must take effect on all endpoints in the cluster at the same time. If you switch away from Global Consistency (High-performance Mode), all other endpoints in the cluster revert to their previous consistency state. |
| Global Consistency Timeout | How long a read-only node waits for the latest data before falling back to the timeout policy. Range: 0–60,000 ms. Default: 20 ms. Increase this value if replication lag is consistently high; decrease it to trigger the timeout policy sooner (by default, the read is routed to the primary node). <br>Note: This setting applies only when Consistency Level is set to Global Consistency (Strong) and Global Consistency Mode is set to Traditional Mode. |
| Global Consistency Timeout Policy | The action taken when a read-only node times out waiting for replication. <br>- Send Requests to Primary Node (Default): Routes the timed-out read to the primary node. Use this option to avoid read errors at the cost of slightly higher primary node load. <br>- SQL Exception: Returns the error "Wait replication complete timeout, please retry." to the client. Use this option when you want the application to handle timeouts explicitly. <br>Note: This setting applies only when Consistency Level is set to Global Consistency (Strong) and Global Consistency Mode is set to Traditional Mode. |
| Global Consistency Read Timeout (High-performance Mode) | How long a read-only node waits for replication in high-performance mode. Range: 1–1,000,000 ms. Default: 100 ms. <br>Important: Enabling Global Consistency (High-performance Mode) on one endpoint enables it on all endpoints in the cluster simultaneously. This setting applies only when Consistency Level is Global Consistency (Strong) and Global Consistency Mode is High-performance Mode. |
| Global Consistency Read Timeout Policy (High-performance Mode) | The action taken when a read-only node times out in high-performance mode. <br>- 0 (default): Route the request to the primary node. <br>- 1: Return a timeout error to the client. <br>- 2: Downgrade to a regular read request and return results without an error. <br>Note: This setting applies only when Consistency Level is Global Consistency (Strong) and Global Consistency Mode is High-performance Mode. |
| Session Consistency Read Timeout | How long a read-only node waits for replication under session consistency. Range: 0–60,000 ms. Default: 0 ms (no timeout). <br>Important: This setting applies only when Consistency Level is Session Consistency (Medium). If Global Consistency (High-performance Mode) is currently enabled, switching to session consistency reverts all endpoints in the cluster to their previous consistency state. |
| Session Consistency Read Timeout Policy | The action taken when the session consistency read timeout is reached. <br>- 0 (default): Route the request to the primary node. <br>- 1: Return the SQL error "Wait replication complete timeout, please retry." <br>Note: This setting applies only when Consistency Level is Session Consistency (Medium). |
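The timeout ranges and timeout policies above can be summarized as a small lookup, which may help when validating values before entering them in the console. This is a minimal sketch: the setting keys (`"global-traditional"`, `"global-high-performance"`, `"session"`), the `Action` enum, and both function names are hypothetical and are not part of any PolarDB API. Only the ranges and policy values come from the descriptions above.

```python
from enum import Enum


class Action(Enum):
    ROUTE_TO_PRIMARY = "route the read to the primary node"
    RETURN_ERROR = "return a timeout error to the client"
    DOWNGRADE = "serve a regular read without an error"


# Allowed timeout ranges in milliseconds, as listed for each setting.
TIMEOUT_RANGE_MS = {
    "global-traditional": (0, 60_000),
    "global-high-performance": (1, 1_000_000),
    "session": (0, 60_000),
}

# Policy options per setting; traditional mode uses named options.
POLICIES = {
    "global-traditional": {
        "Send Requests to Primary Node": Action.ROUTE_TO_PRIMARY,
        "SQL Exception": Action.RETURN_ERROR,
    },
    "global-high-performance": {
        0: Action.ROUTE_TO_PRIMARY,
        1: Action.RETURN_ERROR,
        2: Action.DOWNGRADE,
    },
    "session": {0: Action.ROUTE_TO_PRIMARY, 1: Action.RETURN_ERROR},
}


def validate_timeout(setting, timeout_ms):
    """Raise ValueError if timeout_ms is outside the documented range."""
    low, high = TIMEOUT_RANGE_MS[setting]
    if not low <= timeout_ms <= high:
        raise ValueError(f"{setting}: expected {low}-{high} ms, got {timeout_ms}")
    return timeout_ms


def on_timeout(setting, policy):
    """Return the action taken once the read timeout is reached."""
    try:
        return POLICIES[setting][policy]
    except KeyError:
        raise ValueError(f"unknown policy {policy!r} for {setting}") from None
```

For example, `on_timeout("global-high-performance", 2)` yields `Action.DOWNGRADE`, while `validate_timeout("global-high-performance", 0)` raises `ValueError` because the high-performance range starts at 1 ms.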

Connection Pool Settings

| Configuration item | Description |
|---|---|
| Connection Pool | The connection pooling mode. Valid values: Off (default), Session-level, and Transaction-level. For details, see Connection pool. <br>Note: This setting applies only in Read/Write (Automatic Read/Write Splitting) mode. Changes take effect for new connections only. To apply a change, restart your application or re-establish its database connections. |

HTAP Optimization

| Configuration item | Description |
|---|---|
| Parallel Query | Enable or disable elastic parallel query (ePQ) and set the degree of parallelism. ePQ uses idle CPU cores across the cluster to accelerate complex analytical queries. For details, see Elastic parallel query. <br>Note: Since April 1, 2023, ePQ is enabled by default with a degree of parallelism of 2 in two cases: on new clusters with 8 or more CPU cores, and on new custom endpoints created in existing clusters with 8 or more CPU cores. Only PolarDB for MySQL 8.0 clusters support this setting. |
| Transactional/Analytical Processing Splitting | Enable or disable automatic request routing between row store and column store nodes. For details, see Configure automatic request distribution between row store and column store nodes. <br>Note: This setting applies only to PolarDB for MySQL 8.0.1 clusters with minor engine version 8.0.1.1.22 or later, in Read/Write (Automatic Read/Write Splitting) mode, with at least one read-only column store node in Selected Nodes. |
| Column Store Node Accepts OLTP Requests | Enable or disable OLTP request processing on column store nodes. When enabled, column store nodes accept both OLAP and OLTP requests, and the proxy routes OLTP read requests to column store nodes based on active request counts. This increases the load on column store nodes. <br>Note: This setting applies only when Transactional/Analytical Processing Splitting is enabled. |

Security Protection

| Configuration item | Description |
|---|---|
| Overload Protection | Enable or disable overload protection. For details, see Overload protection. |

Click OK.
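Several settings in this topic apply only under certain conditions (for example, only in Read/Write mode, or only at a particular consistency level). The following sketch collects those applicability notes into one check. All dictionary keys and string values are hypothetical names chosen for illustration, not console or API identifiers, and the engine-version and column-store-node conditions for Transactional/Analytical Processing Splitting are not modeled.

```python
def inapplicable_settings(config):
    """Return the settings present in config that do not apply to it."""
    rw = config.get("read_write_mode") == "read/write"
    level = config.get("consistency_level")
    mode = config.get("global_consistency_mode")
    traditional = level == "global" and mode == "traditional"
    high_perf = level == "global" and mode == "high-performance"

    # Each entry maps a setting to whether its applicability note is satisfied.
    applies = {
        "primary_accepts_reads": rw,
        "transaction_splitting": rw,
        "connection_pool": rw,
        "tp_ap_splitting": rw,
        "on_demand_connection":
            config.get("load_balancing_policy") == "active-request",
        "global_consistency_timeout": traditional,
        "global_consistency_timeout_policy": traditional,
        "global_consistency_read_timeout_hp": high_perf,
        "global_consistency_read_timeout_policy_hp": high_perf,
        "session_consistency_read_timeout": level == "session",
        "session_consistency_read_timeout_policy": level == "session",
        "column_store_accepts_oltp": bool(config.get("tp_ap_splitting")),
    }
    return sorted(k for k, ok in applies.items() if k in config and not ok)
```

An empty result means every conditional setting in the dictionary is applicable; any returned name points to a setting whose note is not satisfied by the rest of the configuration.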

Related API operations

| API | Description |
|---|---|
| Query the endpoint information of a PolarDB cluster | Query cluster endpoints. |
| Modify the attributes of a PolarDB cluster endpoint | Modify a cluster endpoint. |
| Release a custom PolarDB cluster endpoint | Release a custom cluster endpoint. |