When drivers like Java Database Connectivity (JDBC) wrap requests in transactions, or when your cluster has many read-only nodes, most read traffic lands on the primary node while read-only nodes sit idle. PolarDB's load balancing distributes read requests across read-only nodes using two policies and three complementary features—transaction splitting, weight-based routing, and on-demand connections—so you can reduce primary node load without changing application code.

Choose a load balancing policy

| Policy | How routing works | Connection count | Advanced features | Best for |

|---|---|---|---|---|

| Connections-based | Routes a new connection to the read-only node with the fewest existing connections. The connection stays on that node for its entire lifetime—requests within the session are not redistributed. | Sum of the maximum connections across all read-only nodes | Not supported (no consistency level, transaction splitting, persistent connections, or automatic row store/column store routing) | Maximizing throughput and connection count when you don't need per-request balancing or advanced features |

| Active request-based | Routes each request to the node with the fewest active requests at that moment | Minimum of the maximum connections across all read-only nodes | Supported | Clusters with mixed node specs, real-time load balancing, or any advanced feature (transaction splitting, consistency levels, on-demand connections) |

A cluster endpoint in Read-only mode supports both policies. A cluster endpoint in Read/Write (Automatic Read/Write Splitting) mode supports only Active Request-based Load Balancing.

For Read-only mode cluster endpoints, read requests are never forwarded to the primary node, regardless of which policy is configured.

Primary node accepts read requests

By default, a Read/Write (Automatic Read/Write Splitting) cluster endpoint may forward read requests to the primary node. Set Primary Node Accepts Read Requests to No to stop this.

When set to No, the following requests are still forwarded to the primary node:

High-consistency reads within transactions

Broadcast requests such as

SETandPREPAREAny reads when all read-only nodes are unavailable

To further reduce reads on the primary node, lower the consistency level to eventual consistency or enable transaction splitting.

This parameter is available only when Read/Write is set to Read/Write (Automatic Read/Write Splitting). For configuration steps, see Configure PolarProxy.

When the new value takes effect:

| PolarProxy version | Connection type | When it takes effect |

|---|---|---|

| 1.x.x or 2.5.1 and later | Any | Immediately |

| 2.x.x earlier than 2.5.1 | Persistent connection | After re-establishing the connection |

| 2.x.x earlier than 2.5.1 | Short-lived connection | Immediately |

Transaction splitting

Best for: Clusters where JDBC or similar drivers wrap all requests in transactions, causing heavy primary node load while read-only nodes are underutilized.

How it works

Drivers such as JDBC wrap requests in transactions automatically. Because PolarProxy must preserve data consistency within a session, it routes all in-transaction requests to the primary node—even read requests. This can overload the primary node while read-only nodes sit idle.

Transaction splitting sends read requests within a transaction to read-only nodes without requiring code or configuration changes on the application side.

PolarDB for MySQL supports two splitting levels:

Read request splitting before first write request (default)

PolarProxy forwards read requests in a transaction to read-only nodes up until the first write request. All subsequent requests in that transaction go to the primary node.

Full transaction splitting (read request splitting before and after first write request)

All read requests in a transaction—both before and after the first write—are routed to read-only nodes. Before routing a split read request to a read-only node, PolarProxy checks whether all prior writes in the session (for session consistency) or across all sessions (for global consistency) have replicated to that node. If not, the read is routed to the primary node instead. Full transaction splitting is not available with eventual consistency.

Prerequisites

Transaction splitting requires:

One of the following engine versions:

PolarDB for MySQL 5.6: revision 5.6.1.0.29 or later

PolarDB for MySQL 5.7: revision 5.7.1.0.9 or later

PolarDB for MySQL 8.0.1: revision 8.0.1.1.18 or later

PolarDB for MySQL 8.0.2: any revision

loose_query_cache_typeset toOFF. This is the default for 5.6, 5.7, and 8.0.1 clusters. For 8.0.2 clusters, the default isON—change it and restart the cluster.

Usage notes

Only transactions at the Read Committed isolation level can be split. Transaction splitting is enabled by default.

If a read-only node doesn't meet the configured consistency requirements, reads are routed to the primary node instead.

PolarProxy earlier than 2.4.14 supports only read request splitting before write operations, not full transaction splitting.

If PolarProxy is 2.4.14 or later and a persistent connection is in use, re-establish the connection to activate transaction splitting. Short-lived connections use transaction splitting immediately.

Disable transaction splitting

When transaction splitting is disabled, all requests in a transaction are routed to the primary node.

For configuration steps, see Configure PolarProxy.

Weight-based load balancing

Best for: Clusters with nodes of different specifications, such as directing report or analytics queries to larger nodes while keeping standard OLTP traffic on smaller ones.

By default, PolarProxy routes each request to the node with the fewest concurrent requests. Weight-based load balancing adds a custom weight to that decision, letting you steer more traffic toward specific nodes.

The routing formula is:

Dynamic weight = Custom weight / Number of concurrent requests

A higher dynamic weight makes a node more likely to receive the next request. Traffic gradually shifts to match the configured ratio; results converge over time rather than switching instantly.

PolarProxy 2.8.3 or later is required.

Configure weights

Weights can be configured at two levels:

Global dimension: applies to all endpoints

Endpoint dimension: applies to one endpoint and overrides the global setting

Usage notes

All nodes start with a weight of 1.

Weights range from 0 to 100. A weight of 0 means no requests are routed to the node as long as any other node is available.

Serverless clusters do not support weight configuration at the endpoint dimension.

For clusters with multiple read-only column store nodes, In-Memory Column Index (IMCI) requests are balanced based on the weights of those nodes.

The actual traffic ratio may differ slightly from the specified ratio and converges gradually.

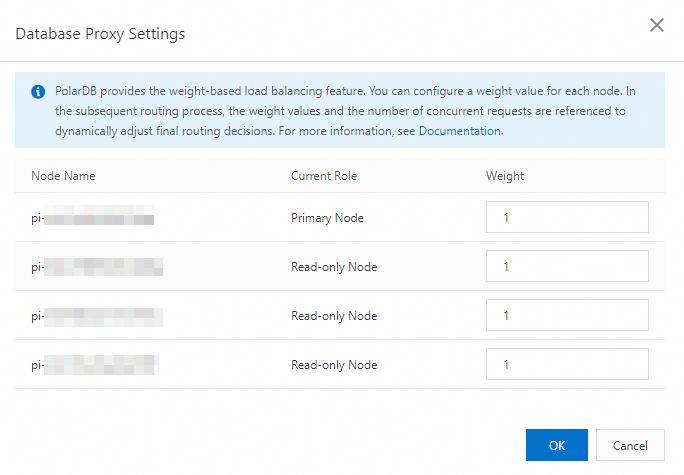

Configure weights in the global dimension

Log on to the PolarDB console.

In the upper-left corner, select the region where the cluster is deployed.

Find the target cluster and click its ID.

In the Standard Enterprise Edition or Dedicated Enterprise Edition section of the Basic Information page, click Database Proxy Settings.

In the Database Proxy Settings dialog box, set a weight for each node.

Click OK.

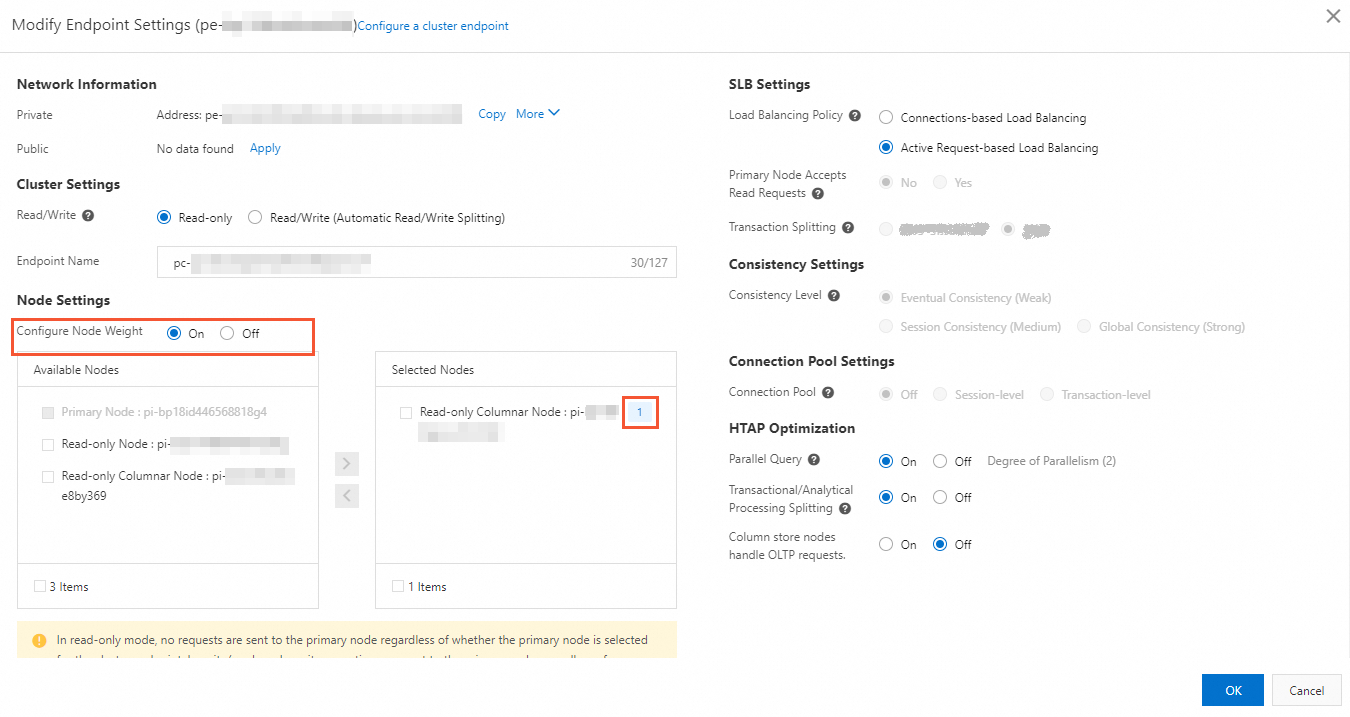

Configure weights in the endpoint dimension

Log on to the PolarDB console.

In the upper-left corner, select the region where the cluster is deployed.

Find the target cluster and click its ID.

In the Standard Enterprise Edition or Dedicated Enterprise Edition section of the Basic Information page, click Configure in the upper-right corner of the cluster endpoint or custom endpoint.

In the Node Settings section of the Modify Endpoint Settings dialog box, select Configure Node Weight and set a weight for the node.

Click OK.

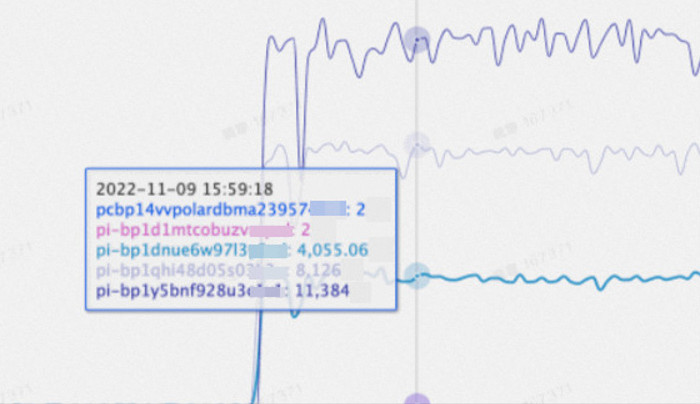

Test data

The following figure shows test results using sysbench oltp_read_only with a weight ratio of 1:2:3 (1 for the primary node).

The internal nodes _pi-bp1d1mtcobuzv\*\*\*\*_ and _pcbp14vvpolardbma23957\*\*\*\*_ are not involved in routing requests. Their metrics can be ignored.

On-demand connections

Best for: Clusters with many read-only nodes, short-lived connections, sessions with many broadcast statements (for example, PHP applications where the first statement is SET NAMES utf8mb4), or workloads where most queries use short PREPARE statements.

How it works

With Active Request-based Load Balancing, PolarProxy uses full connections by default: when a client opens a session, PolarProxy establishes a backend connection to every node in the endpoint. Broadcast requests such as SET statements are forwarded to all nodes. As the number of nodes increases, this overhead grows.

On-demand connections change this behavior: PolarProxy connects to backend nodes only when needed. In most cases, a session uses one primary node connection and one read-only node connection (eventual consistency is provided only). Read-only sessions typically connect to one read-only node only.

The following table compares routing and data reading efficiency for a cluster with one primary node and three read-only nodes:

| Scenario | Connections per session | Broadcast statement routing |

|---|---|---|

| Non-on-demand connections | 4 (all nodes) | Routed to all 4 nodes |

| On-demand connections for read-only sessions | 1 (one read-only node) | Routed to that read-only node only |

| On-demand connections for read/write sessions | 2 (one primary, one read-only) | Routed to the 2 connected nodes only |

Limitations

PolarProxy 2.8.34 or later is required. For steps to check the PolarProxy version, see Check the version number.

SHOW PROCESSLISTSmay not display all active connections.KILLstatements may not terminate connections to all nodes.

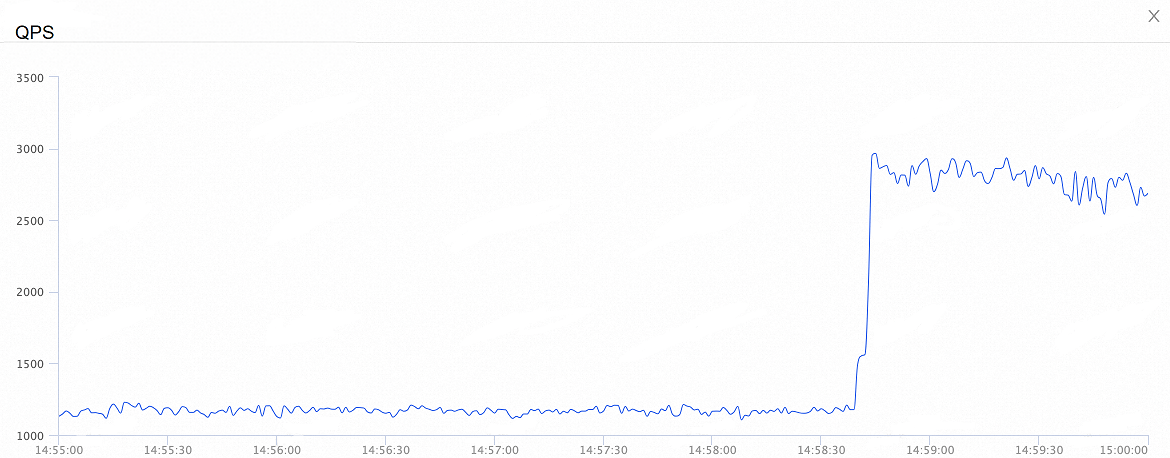

Performance test results

Test environment: 1 primary node and 7 read-only nodes; SQL: SET NAMES utf8mb4 and SELECT 1; tool: Sysbench

| Scenario | CPU utilization | Total connections | QPS |

|---|---|---|---|

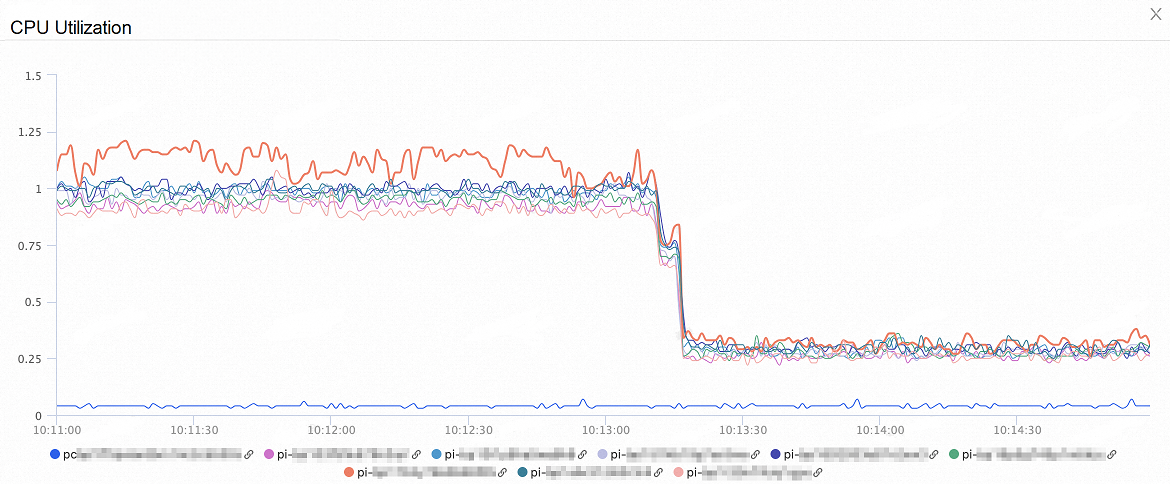

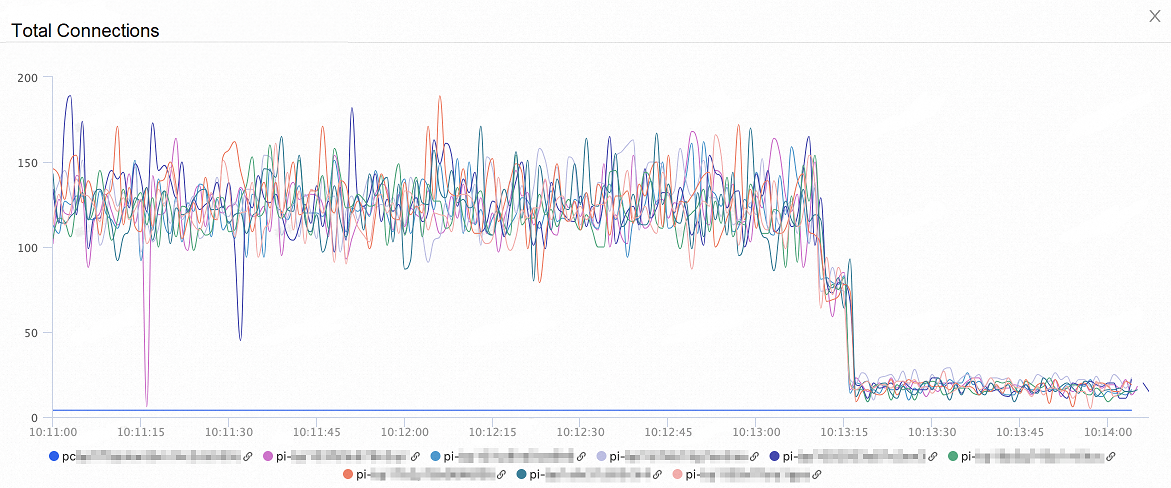

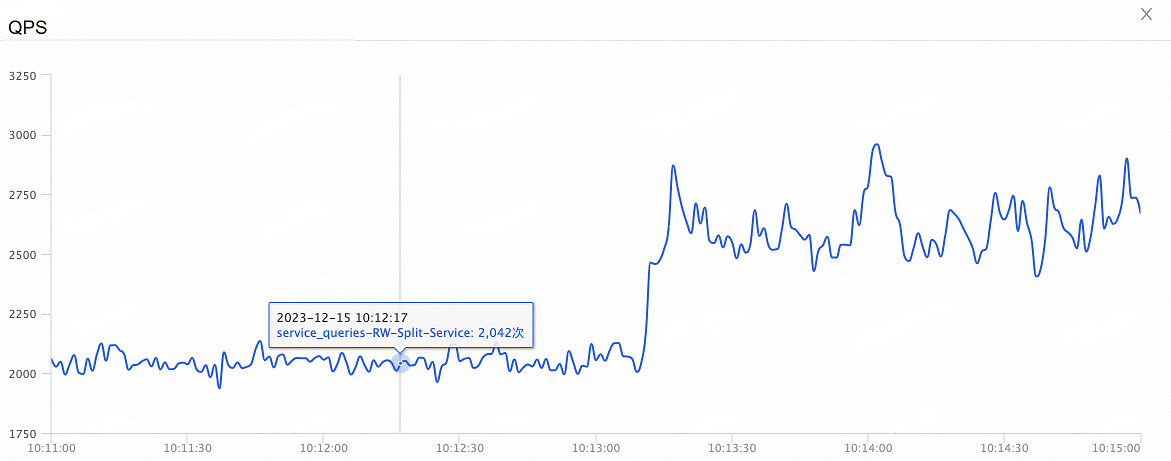

| No connection pool | Decreases >60% | Decreases >80% | Increases 35% |

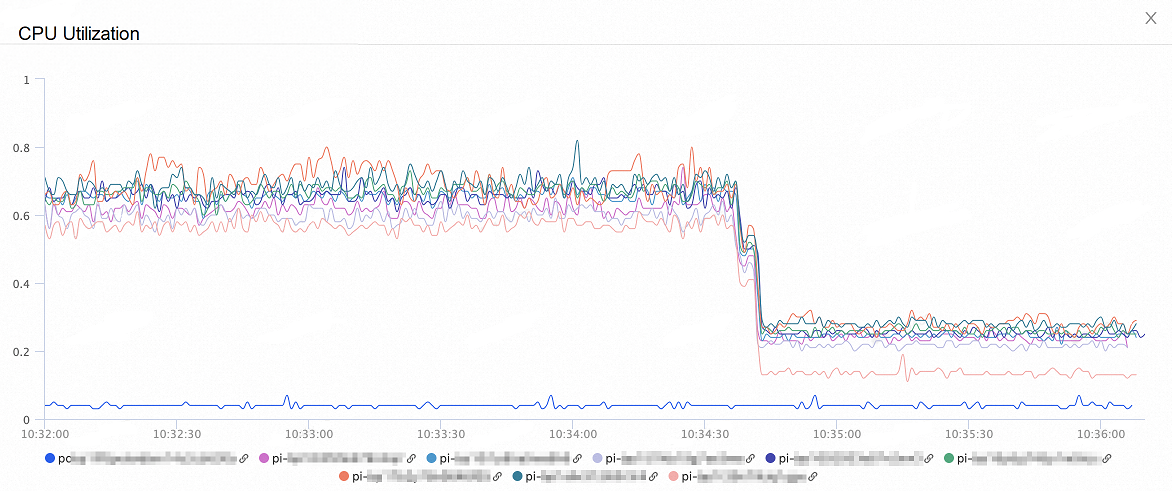

| Session-level connection pool | Decreases 50%–60% | Decreases 60% | Increases 30% |

| Transaction-level connection pool | Decreases 60% | Decreases 50% | Increases 260% |

No connection pool — CPU utilization

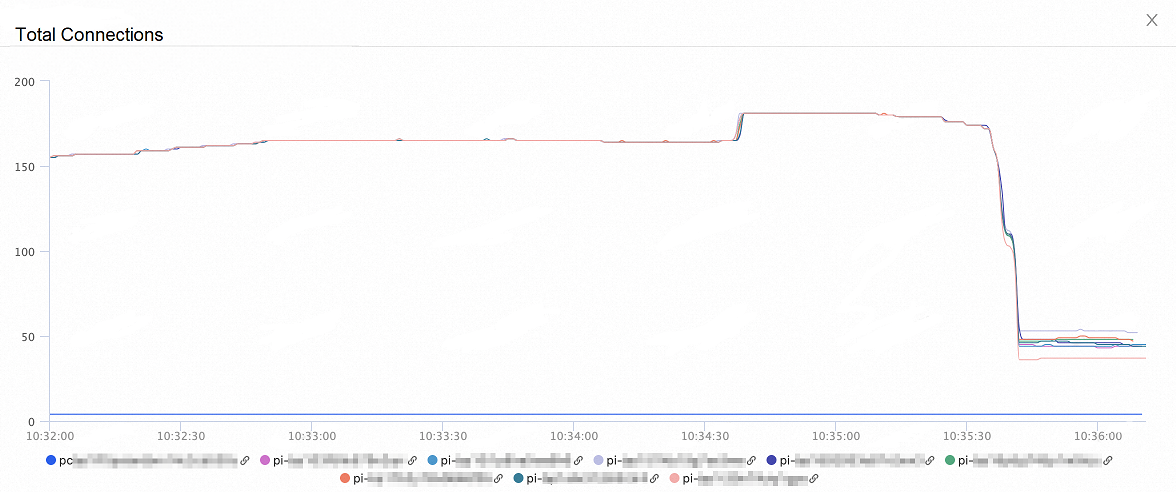

No connection pool — total connections

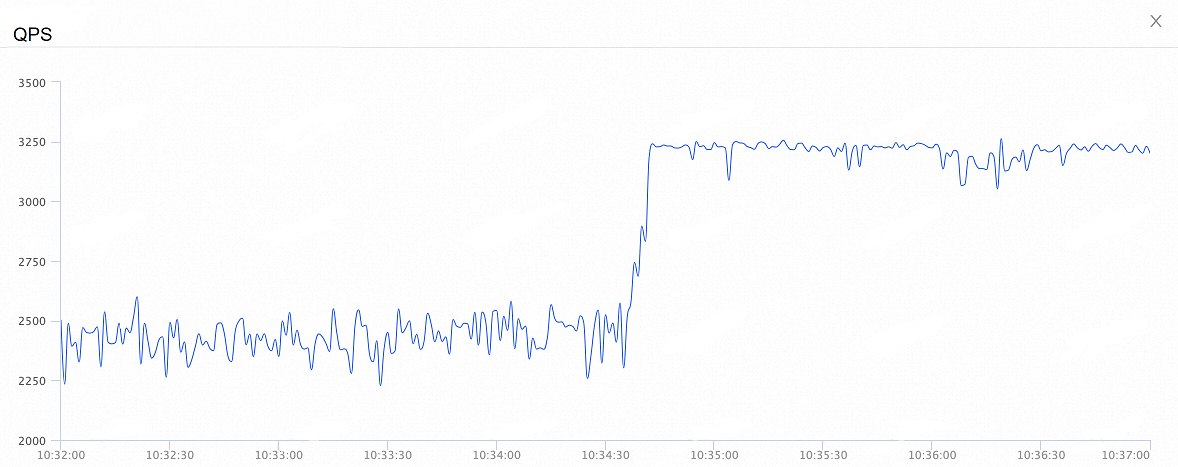

No connection pool — QPS

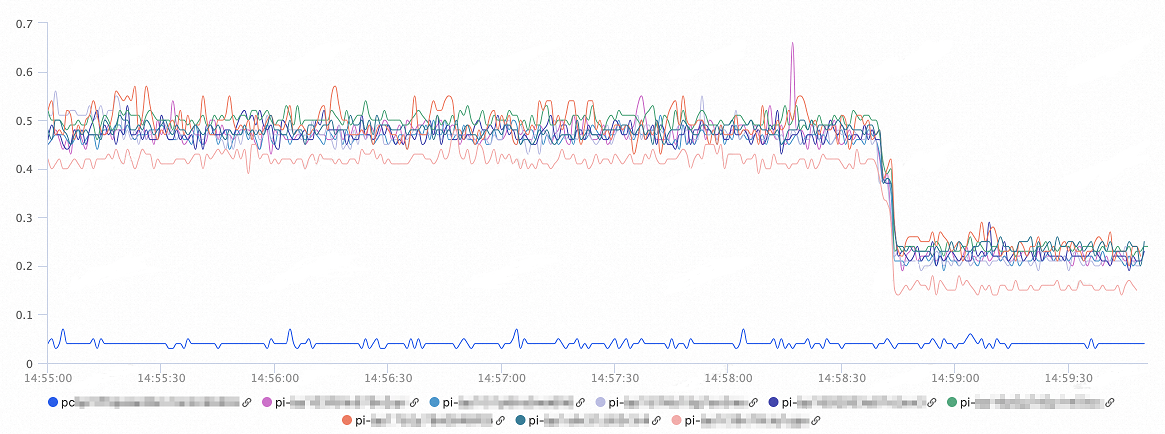

Session-level connection pool — CPU utilization

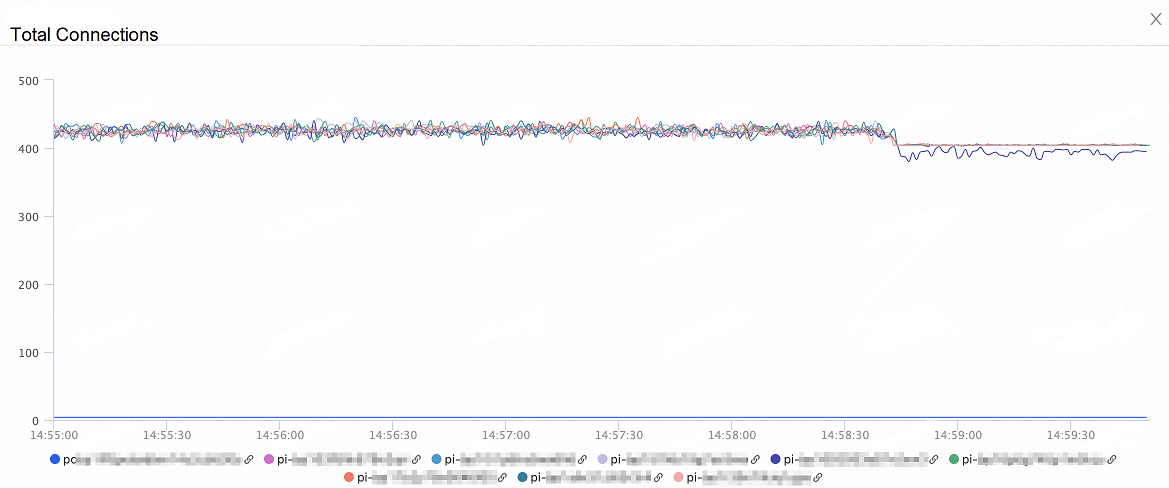

Session-level connection pool — total connections

Session-level connection pool — QPS

Transaction-level connection pool — CPU utilization

Transaction-level connection pool — total connections

Transaction-level connection pool — QPS