This topic describes how to use the Stable Diffusion WebUI tool.

Log on to the PAI ArtLab console.

Prerequisites

You have activated PAI ArtLab and granted the required permissions. For more information, see Activate PAI ArtLab and grant permissions.

(Optional) You have claimed free trial resources or coupons, or purchased a resource plan. For more information, see PAI ArtLab billing.

The resources, coupons, or resource plan must be used within their validity period. For more information, see View usage and validity period.

Procedure

The following example generates an image with the text-to-image feature of Stable Diffusion (Shared Edition) and then generates a new image from that result.

Step 1: Generate an image from text

Log on to PAI ArtLab. In the upper-right corner, hover over the icon and select the China (Shanghai) region.

On the Toolbox page, click Stable Diffusion (Shared Edition) to start the tool.

For Stable Diffusion Model, select a Checkpoint model.

On the tab, configure the following parameters.

| Parameter | Description |
| --- | --- |
| Prompt area: positive prompt (Summer) | `Green, Spring, Bamboo Forest, River:1.2, Flowing Water, Nature, Poetic Atmosphere, Green Theme, jade, light green, Masterpiece:1.2, Best Picture Quality, High Definition, Original, Extremely Good Wallpaper, Perfect Light, Extremely Good CG:1.2, Best Picture Quality, Magical Light Effect, super rich, super detailed, 32k, Abstract, 3D <lora:shuimo:0.5> <lora:green:0.1> <lora:guofeng:0.8>` |
| Prompt area: negative prompt | `lowres, bad anatomy, bad hands, text, error, missing fingers, extra digit, fewer digits, cropped, worst quality, low quality, normal quality, jpeg artifacts, signature, watermark, username, blurry` |
| Steps | Set to 30. |
| Sampler | Select DPM++ 2M Karras. |
| Width | Set to 512. |
| Height | Set to 768. |
| Seed | Select one of the following values: -1, 994414790, 2897581471, 4112712676, or 460787179. |
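The parameter values above map onto a request body for the HTTP API of the open-source Stable Diffusion WebUI. The following is a minimal sketch, assuming a WebUI instance started with the `--api` flag and its `/sdapi/v1/txt2img` endpoint. This topic does not state whether the PAI ArtLab Shared Edition exposes that endpoint, `build_txt2img_payload` is a hypothetical helper name, and sampler naming can differ between WebUI versions. The prompts are abbreviated for readability.

```python
import json


def build_txt2img_payload(prompt: str, negative_prompt: str, seed: int = -1) -> dict:
    """Build a txt2img request body from the settings used in this step.

    Assumption: the field names follow the open-source WebUI API
    (/sdapi/v1/txt2img); verify them against your WebUI version.
    """
    return {
        "prompt": prompt,
        "negative_prompt": negative_prompt,
        "steps": 30,
        "sampler_name": "DPM++ 2M Karras",
        "width": 512,
        "height": 768,
        "seed": seed,  # -1 asks the WebUI to choose a random seed
    }


payload = build_txt2img_payload(
    prompt="Green, Spring, Bamboo Forest, River:1.2 <lora:shuimo:0.5>",  # abbreviated
    negative_prompt="lowres, bad anatomy, bad hands, text, error",  # abbreviated
    seed=994414790,
)
print(json.dumps(payload, indent=2))
```

Using a fixed seed such as 994414790 makes a run repeatable with the same model and settings, while -1 produces a different image on each run.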

On the tab, configure the following parameters.

| Parameter | Description |
| --- | --- |
| Single Image | Upload the sample image and select Enable, Pixel Perfect, and Allow Preview. |
| Control Type | Select Lineart. |
| Preprocessor | Select lineart_coarse. |
| Model | Select control_v11p_sd15_lineart[3ead383e]. |
| Control Weight | Set to 0.8. |
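For readers who script the open-source WebUI, these ControlNet settings can be expressed as one "unit" attached to a generation request. This is a sketch under assumptions: the `alwayson_scripts` / `controlnet` / `args` layout and the field names (`enabled`, `pixel_perfect`, `module`, `model`, `weight`, `image`) reflect the open-source ControlNet extension API as commonly documented and may differ by extension version (some versions use `input_image` instead of `image`). The helper names are hypothetical, and this topic does not confirm that PAI ArtLab exposes this API.

```python
import base64


def build_controlnet_unit(image_bytes: bytes) -> dict:
    """Describe one ControlNet unit with the Lineart settings from this step."""
    return {
        "enabled": True,
        "pixel_perfect": True,
        "module": "lineart_coarse",  # the preprocessor
        "model": "control_v11p_sd15_lineart[3ead383e]",  # name as shown in this topic
        "weight": 0.8,
        # Assumption: the control image is passed as a base64 string.
        "image": base64.b64encode(image_bytes).decode("ascii"),
    }


def attach_controlnet(payload: dict, unit: dict) -> dict:
    """Return a copy of a generation payload with the ControlNet unit attached."""
    merged = dict(payload)
    merged["alwayson_scripts"] = {"controlnet": {"args": [unit]}}
    return merged


unit = build_controlnet_unit(b"placeholder image bytes")
request_body = attach_controlnet({"prompt": "bamboo forest", "steps": 30}, unit)
print(sorted(request_body))
```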

Click the icon to preview the preprocessing effect.

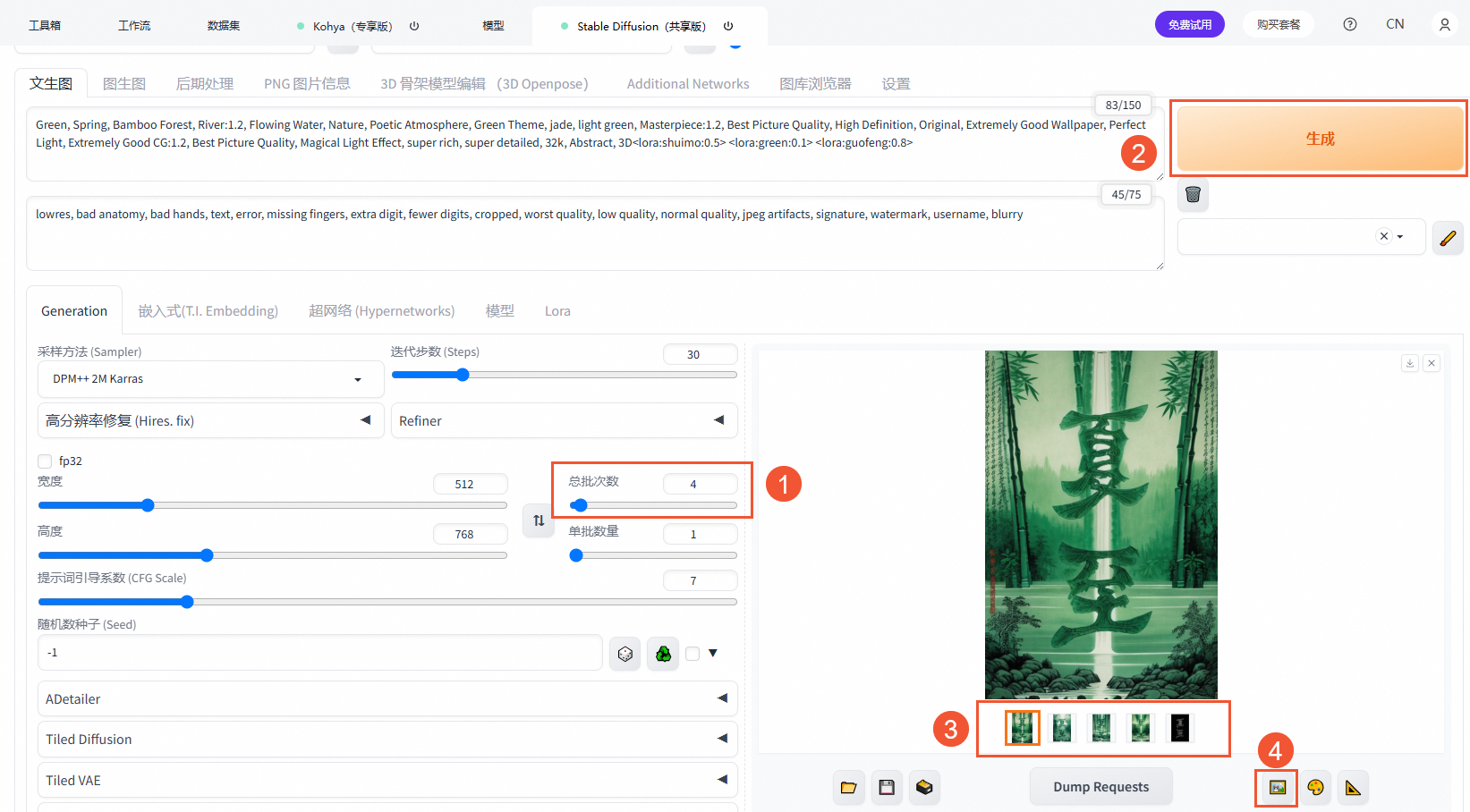

Click Generate.

You can use different combinations of prompts and models to generate images in various styles without changing other parameters.
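One way to explore prompt variations systematically is to change the weights of the `<lora:name:weight>` tags that appear in the positive prompt. The sketch below parses those tags and rewrites one weight. The helper names `list_loras` and `set_lora_weight` are hypothetical, and the prompt is abbreviated.

```python
import re

# Matches tags of the form <lora:name:weight>, for example <lora:shuimo:0.5>.
LORA_TAG = re.compile(r"<lora:([^:>]+):([0-9]*\.?[0-9]+)>")


def list_loras(prompt: str) -> list:
    """Return (name, weight) pairs for every LoRA tag in the prompt."""
    return [(m.group(1), float(m.group(2))) for m in LORA_TAG.finditer(prompt)]


def set_lora_weight(prompt: str, name: str, weight: float) -> str:
    """Return the prompt with the weight of the named LoRA tag replaced."""

    def swap(match):
        if match.group(1) == name:
            return f"<lora:{name}:{weight}>"
        return match.group(0)  # leave other tags untouched

    return LORA_TAG.sub(swap, prompt)


base = "Bamboo Forest, River:1.2 <lora:shuimo:0.5> <lora:green:0.1> <lora:guofeng:0.8>"
print(list_loras(base))
variant = set_lora_weight(base, "shuimo", 0.8)
print(variant)
```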

Step 2: Generate an image from an image

You can send the generated image to the image-to-image feature using one of the following methods. The image is sent with all the information that was used to generate it.

Method 1: On the Text-to-image tab, set Batch Count to 4 and click Generate. Select your preferred image and click the icon to send it to the image-to-image feature.

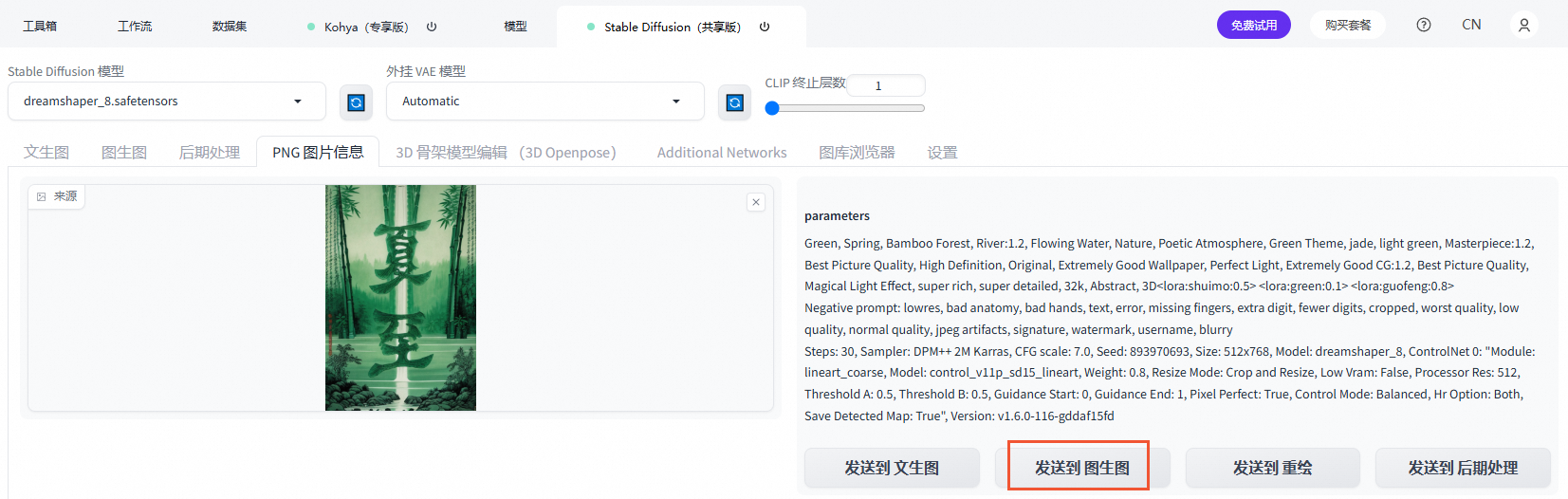

Method 2: On the PNG Info tab, upload the generated image and click Send To Img2img.
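The PNG Info tab works because the generation settings travel inside the image file. The open-source WebUI writes them to a PNG text chunk under the keyword `parameters`. The sketch below reads uncompressed `tEXt` chunks with the standard library only. It is an illustration, not the WebUI's own reader: it ignores `iTXt` and `zTXt` chunks and does not verify checksums, and the demo input is a synthetic chunk sequence, not a real image.

```python
import struct
import zlib

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"


def read_png_text_chunks(data: bytes) -> dict:
    """Return {keyword: text} for every tEXt chunk in a PNG byte string."""
    if not data.startswith(PNG_SIGNATURE):
        raise ValueError("not a PNG file")
    texts = {}
    offset = len(PNG_SIGNATURE)
    while offset + 8 <= len(data):
        # Each chunk starts with a 4-byte big-endian length and a 4-byte type.
        length, chunk_type = struct.unpack(">I4s", data[offset:offset + 8])
        body = data[offset + 8:offset + 8 + length]
        if chunk_type == b"tEXt":
            keyword, _, text = body.partition(b"\x00")
            texts[keyword.decode("latin-1")] = text.decode("latin-1")
        elif chunk_type == b"IEND":
            break
        offset += 8 + length + 4  # header + data + CRC
    return texts


def _chunk(chunk_type: bytes, body: bytes) -> bytes:
    """Encode one PNG chunk (used only to build the demo input)."""
    crc = zlib.crc32(chunk_type + body) & 0xFFFFFFFF
    return struct.pack(">I", len(body)) + chunk_type + body + struct.pack(">I", crc)


demo = (
    PNG_SIGNATURE
    + _chunk(b"tEXt", b"parameters\x00bamboo forest\nSteps: 30, Seed: 994414790")
    + _chunk(b"IEND", b"")
)
info = read_png_text_chunks(demo)
print(info["parameters"])
```

To inspect a downloaded image, pass the file contents instead of `demo`, for example `read_png_text_chunks(open("output.png", "rb").read())`, where `output.png` is a placeholder file name.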

On the tab, configure the following parameters.

| Parameter | Description |
| --- | --- |
| Single Image | Upload the generated image and select Enable, Pixel Perfect, Allow Preview, and Upload independent control image. |
| Control Type | Select Tile. Keep the default settings for Preprocessor and Model. |
| Control Weight | Set to 0.8. |

On the tab, configure the following parameters.

| Parameter | Description |
| --- | --- |
| Script | Select Ultimate SD upscale. |
| Target size type | Select Scale from image size. |
| Upscaler | Select 4x-UltraSharp. |

Click Generate.
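With Scale from image size, the output dimensions are the input dimensions multiplied by a scale factor, and the upscale script processes the enlarged image in tiles. The arithmetic can be sketched as follows. The scale factor of 2 and the 512-pixel tile size are assumptions chosen for illustration, because this topic does not specify them, and `upscale_plan` is a hypothetical helper.

```python
import math


def upscale_plan(width: int, height: int, scale: float, tile: int = 512) -> tuple:
    """Return (target_width, target_height, tile_count) for a tiled upscale.

    Assumption: tiles are square and laid out on a simple grid, so the
    count is ceil(target_width / tile) * ceil(target_height / tile).
    """
    target_w = int(width * scale)
    target_h = int(height * scale)
    cols = math.ceil(target_w / tile)
    rows = math.ceil(target_h / tile)
    return target_w, target_h, cols * rows


# The image from Step 1 is 512 x 768; a 2x scale is a hypothetical choice.
print(upscale_plan(512, 768, 2))
```

Under these assumptions, the 512 x 768 image becomes 1024 x 1536 and is processed as a grid of 2 x 3 tiles. Higher scale factors increase the tile count, and with it the generation time.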

Related operations

Install Stable Diffusion WebUI plugins

Only the Exclusive Edition of Stable Diffusion WebUI supports installing plugins. For more information, see Upload local model plugins to the PAI ArtLab platform.

Add models from the Model Gallery

For more information, see Use models from the Model Gallery.

Appendix: Differences between the PAI ArtLab Stable Diffusion WebUI tool and the open-source Stable Diffusion WebUI

PAI ArtLab's Stable Diffusion is based on PAI-EAS (Elastic Algorithm Service) and lets you quickly deploy and use the open-source Stable Diffusion project in the cloud.

To meet the specific needs of cloud deployment and enterprise users, the PAI team has customized the open-source project. Unlike the open-source project, which recommends a single-PC deployment, the PAI Stable Diffusion WebUI solution is tailored for Alibaba Cloud services and enterprise requirements while ensuring compatibility. The solution provides the following features:

Agile deployment

After you obtain an Alibaba Cloud account, you can complete the deployment in less than 5 minutes and obtain a WebUI access link. The user experience is comparable to a local deployment.

The solution includes pre-installed common plugins and provides accelerated downloads for popular open-source models.

It provides a wide range of GPU resources on the cloud, including A10, GU60, and GU100 specifications. Video memory options include 24 GB, 48 GB, 80 GB, and 96 GB, which helps you run tasks of varying complexity.

Enterprise-grade features

It features backend and frontend decoupling and supports multi-user, multi-GPU cluster scheduling. For example, 10 users can share 3 GPUs.

It supports authentication for Alibaba Cloud RAM users. This ensures that models and outputs are isolated while access portals and GPU resources are shared.

The billing system supports bill splitting at the studio and cluster levels, which simplifies financial management.
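The idea of several users sharing a smaller pool of GPUs can be illustrated with a toy queue simulation. This sketch is not PAI's scheduler, whose design is not described in this topic. It assumes that each job runs on exactly one GPU, that jobs are served in arrival order, and that every job takes a hypothetical 10 seconds.

```python
import heapq


def schedule(job_durations: list, gpu_count: int) -> list:
    """Assign jobs in arrival order to whichever GPU becomes free first.

    Returns a list of (job_id, gpu_id, start_time, end_time) tuples.
    """
    # Heap of (time_when_free, gpu_id); all GPUs are idle at time 0.
    free_at = [(0.0, gpu) for gpu in range(gpu_count)]
    heapq.heapify(free_at)
    plan = []
    for job_id, duration in enumerate(job_durations):
        start, gpu = heapq.heappop(free_at)
        end = start + duration
        plan.append((job_id, gpu, start, end))
        heapq.heappush(free_at, (end, gpu))
    return plan


# Ten users each submit one 10-second job to a pool of three GPUs.
plan = schedule([10.0] * 10, gpu_count=3)
makespan = max(end for _, _, _, end in plan)
print(makespan)
```

In this toy model the last of the ten jobs finishes after 40 seconds, compared with 100 seconds on a single GPU. Real workloads vary in duration and arrive at different times, so actual waiting times depend on usage patterns.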

Deep optimization and plugin ecosystem

It integrates PAI-Blade to significantly accelerate image generation. Generation is 2 to 3 times faster than in the original version and 20% to 60% faster than with the xFormers optimization, without affecting the quality of the generated images.

It provides a filebrowser plugin to facilitate the transfer of models and images in the cloud.

It provides a self-developed modelzoo plugin that accelerates open-source model downloads and enables you to manage prompts, image results, and parameters on a per-model basis.

For enterprise users, it supports centralized plugin management and personalized user configurations.