Use Model Gallery to deploy, fine-tune, evaluate, and compress Qwen2.5-Coder models (0.5B to 32B), which are optimized for code generation, completion, and repair across 92 programming languages.

Qwen2.5-Coder capabilities

Qwen2.5-Coder is Alibaba Cloud's series of code models, available in six sizes (0.5B, 1.5B, 3B, 7B, 14B, 32B). It supports a context length of up to 128K tokens and 92 programming languages. Qwen2.5-Coder-Instruct is the instruction-tuned variant, with improved performance on downstream tasks.

- Multilingual coding support: Performs well across more than 40 programming languages, including niche ones, as measured on the McEval benchmark.
- Code reasoning: Achieves strong results on the CRUXEval benchmark. Better code reasoning also improves the execution of complex instructions.
- Mathematical ability: Performs well on both math and coding tasks, demonstrating solid scientific and technical competence.
- General capabilities: Retains the general-purpose capabilities of Qwen2.5, so the models remain usable beyond coding tasks.

Prerequisites

- Supported regions: China (Beijing), China (Shanghai), China (Shenzhen), China (Hangzhou), China (Ulanqab), and Singapore.

- GPU resource requirements by model size:
  - Qwen2.5-Coder-0.5B/1.5B
    - Training: GPUs with ≥16 GB VRAM (T4, P100, or V100)
    - Deployment: minimum a single P4; recommended a single GU30, A10, V100, or T4
  - Qwen2.5-Coder-3B/7B
    - Training: GPUs with ≥24 GB VRAM (for example, A10)
    - Deployment: minimum a single P100, T4, or V100 (gn6v); recommended a single GU30 or A10
  - Qwen2.5-Coder-14B
    - Training: GPUs with ≥32 GB VRAM (for example, V100)
    - Deployment: minimum a single L20, a single GU60, or two GU30; recommended two GU60 or two L20
  - Qwen2.5-Coder-32B
    - Training: GPUs with ≥80 GB VRAM (for example, A800 or H800)
    - Deployment: minimum two GU60, two L20, or four A10; recommended four GU60, four L20, or eight V100-32G
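As a rough sanity check on these requirements, the memory needed just to hold the model weights is the parameter count multiplied by the bytes per parameter. The sketch below is an illustration under stated assumptions, not how PAI sizes resources: it uses the nominal parameter counts from the model names and ignores the KV cache, activations, optimizer state, and runtime overhead, so real usage is higher.

```python
# Rough estimate of the memory needed to store model weights only.
# Assumptions: nominal parameter counts taken from the model names; the
# KV cache, activations, optimizer state, and runtime overhead are ignored.

BYTES_PER_PARAM = {
    "fp32": 4.0,
    "bf16": 2.0,  # same size as fp16
    "int8": 1.0,
    "int4": 0.5,
}

NOMINAL_PARAMS_BILLIONS = {
    "Qwen2.5-Coder-0.5B": 0.5,
    "Qwen2.5-Coder-1.5B": 1.5,
    "Qwen2.5-Coder-3B": 3.0,
    "Qwen2.5-Coder-7B": 7.0,
    "Qwen2.5-Coder-14B": 14.0,
    "Qwen2.5-Coder-32B": 32.0,
}


def weight_memory_gb(params_billions: float, precision: str = "bf16") -> float:
    """Return the weight size in GB (10**9 bytes) at the given precision."""
    if precision not in BYTES_PER_PARAM:
        raise ValueError(f"unknown precision: {precision}")
    # 1e9 parameters * N bytes each = N * 1e9 bytes = N GB
    return params_billions * BYTES_PER_PARAM[precision]


if __name__ == "__main__":
    for name, params in NOMINAL_PARAMS_BILLIONS.items():
        print(f"{name}: ~{weight_memory_gb(params):.1f} GB of bf16 weights")
```

For example, the nominal 32B model needs about 64 GB for bf16 weights alone, which helps explain why every 32B deployment option above spans several GPUs.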

-

Deploy, train, evaluate, and compress models

Deploy and invoke the model

- Go to Model Gallery.
  - Log on to the PAI console.
  - In the upper-left corner, select your region.
  - In the left-side navigation pane, click Workspaces, then click the name of your workspace.
  - In the left-side navigation pane, choose Getting Started > Model Gallery.
- Click the Qwen2.5-Coder-32B-Instruct model card to open the model details page.
- Click Deploy in the upper-right corner. Configure the deployment method, inference service name, and resource settings, and set the deployment method to vLLM accelerated deployment.
- Use the inference service. After deployment completes, call the model service by using the invocation method shown on the model details page.
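The authoritative invocation code is the one shown on the model details page. As a hedged sketch, assuming the vLLM-accelerated service exposes an OpenAI-compatible chat completions route at `<endpoint>/v1/chat/completions` and accepts the service token in the `Authorization` header, a request can be built with only the Python standard library. The endpoint, token, and served model name are placeholders, and `build_chat_request` and `ask` are hypothetical helper names.

```python
import json
import urllib.request

# Placeholders (assumptions): copy the real values from the service details.
ENDPOINT = "http://<your-service-endpoint>"
TOKEN = "<your-service-token>"
MODEL = "Qwen2.5-Coder-32B-Instruct"  # hypothetical served model name


def build_chat_request(endpoint: str, token: str, model: str, prompt: str,
                       max_tokens: int = 512) -> urllib.request.Request:
    """Build an OpenAI-style chat completions request (assumed API shape)."""
    payload = {
        "model": model,
        "messages": [
            {"role": "system", "content": "You are a helpful assistant."},
            {"role": "user", "content": prompt},
        ],
        "max_tokens": max_tokens,
        "temperature": 0.2,
    }
    return urllib.request.Request(
        url=endpoint.rstrip("/") + "/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            # Assumption: the service token is sent as-is in this header.
            "Authorization": token,
        },
        method="POST",
    )


def ask(prompt: str) -> str:
    """Send the request and return the text of the first completion."""
    req = build_chat_request(ENDPOINT, TOKEN, MODEL, prompt)
    with urllib.request.urlopen(req, timeout=120) as resp:
        body = json.loads(resp.read().decode("utf-8"))
    return body["choices"][0]["message"]["content"]


# Usage, after filling in the placeholders:
# print(ask("Write a Python function that reverses a linked list."))
```

A lower `temperature` is a common choice for code generation because it makes completions more deterministic; adjust it to your use case.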

Fine-tune the model

Model Gallery includes built-in supervised fine-tuning (SFT) and direct preference optimization (DPO) algorithms for Qwen2.5-Coder-32B-Instruct.

SFT supervised fine-tuning

SFT training accepts input in JSON format. Each sample consists of an instruction and the expected output, stored in the "instruction" and "output" fields. Example:

[
  {
    "instruction": "Create a function to calculate the sum of a sequence of integers.",
    "output": "# Python code\ndef sum_sequence(sequence):\n    sum = 0\n    for num in sequence:\n        sum += num\n    return sum"
  },
  {
    "instruction": "Generate a Python code for crawling a website for a specific type of data.",
    "output": "import requests\nimport re\n\ndef crawl_website_for_phone_numbers(website):\n    response = requests.get(website)\n    phone_numbers = re.findall(r'\\d{3}-\\d{3}-\\d{4}', response.text)\n    return phone_numbers\n\nif __name__ == '__main__':\n    print(crawl_website_for_phone_numbers('https://www.example.com'))"
  }
]

DPO direct preference optimization

DPO training accepts input in JSON format. Each sample contains a prompt, a preferred response, and a rejected response, stored in the "prompt", "chosen", and "rejected" fields. Example:

[
  {
    "prompt": "Create a function to calculate the sum of a sequence of integers.",
    "chosen": "# Python code\ndef sum_sequence(sequence):\n    sum = 0\n    for num in sequence:\n        sum += num\n    return sum",
    "rejected": "[x*x for x in [1, 2, 3, 5, 8, 13]]"
  },
  {
    "prompt": "Generate a Python code for crawling a website for a specific type of data.",
    "chosen": "import requests\nimport re\n\ndef crawl_website_for_phone_numbers(website):\n    response = requests.get(website)\n    phone_numbers = re.findall(r'\\d{3}-\\d{3}-\\d{4}', response.text)\n    return phone_numbers\n\nif __name__ == '__main__':\n    print(crawl_website_for_phone_numbers('https://www.example.com'))",
    "rejected": "def remove_duplicates(string):\n    result = \"\"\n    prev = ''\n\n    for char in string:\n        if char != prev:\n            result += char\n        prev = char\n    return result\n\nresult = remove_duplicates(\"AAABBCCCD\")\nprint(result)"
  }
]
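Before uploading a dataset, it can help to check that every sample has the fields required by the chosen training strategy. The sketch below is a local pre-flight check, not a PAI tool; it assumes the dataset is a single JSON array like the examples above, and `validate_dataset` is a hypothetical helper name.

```python
import json

# Required string fields per training strategy, as described above.
REQUIRED_FIELDS = {
    "sft": ("instruction", "output"),
    "dpo": ("prompt", "chosen", "rejected"),
}


def validate_dataset(text: str, strategy: str) -> list[str]:
    """Return a list of problems; an empty list means the data looks valid."""
    if strategy not in REQUIRED_FIELDS:
        return [f"unknown training strategy: {strategy}"]
    try:
        samples = json.loads(text)
    except json.JSONDecodeError as exc:
        return [f"invalid JSON: {exc}"]
    if not isinstance(samples, list):
        return ["top-level value must be a JSON array of samples"]

    problems = []
    for i, sample in enumerate(samples):
        if not isinstance(sample, dict):
            problems.append(f"sample {i}: expected a JSON object")
            continue
        # Every required field must be a non-blank string.
        for field in REQUIRED_FIELDS[strategy]:
            value = sample.get(field)
            if not isinstance(value, str) or not value.strip():
                problems.append(f"sample {i}: missing or empty '{field}'")
    return problems


# Usage (hypothetical file name):
# with open("train.json", encoding="utf-8") as f:
#     print(validate_dataset(f.read(), "sft") or "OK")
```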

- Click the Qwen2.5-Coder-32B-Instruct model card to open the model details page.
- Click Train in the upper-right corner and configure the following settings:
  - Dataset: Upload your data to an OSS bucket, select data stored in NAS or CPFS, or use a PAI public dataset for testing.
  - Compute resources: Training requires GPUs with ≥80 GB VRAM. Make sure that your resource quota is sufficient. For other model sizes, see Prerequisites.
  - Hyperparameters: The supported hyperparameters are listed below. Adjust them based on your dataset and compute resources, or keep the default values.

    - training_strategy (string, default: sft, required): Training algorithm. Valid values: sft and dpo.
    - learning_rate (float, default: 5e-5, required): Learning rate, which controls how much the model weights change at each update.
    - num_train_epochs (int, default: 1, required): Number of passes over the training dataset.
    - per_device_train_batch_size (int, default: 1, required): Number of samples that each GPU processes in one training step. Larger batches improve throughput but use more VRAM.
    - seq_length (int, default: 128, required): Maximum sequence length, in tokens, processed in one training step.
    - lora_dim (int, default: 32, optional): LoRA rank. If lora_dim > 0, lightweight LoRA or QLoRA training is used.
    - lora_alpha (int, default: 32, optional): LoRA scaling factor (alpha). Takes effect only when lora_dim > 0.
    - dpo_beta (float, default: 0.1, optional): The DPO β parameter, which sets how strongly the preference signal is weighted during training.
    - load_in_4bit (bool, default: false, optional): Loads the model in 4-bit precision. If lora_dim > 0, load_in_4bit is true, and load_in_8bit is false, 4-bit QLoRA training is used.
    - load_in_8bit (bool, default: false, optional): Loads the model in 8-bit precision. If lora_dim > 0, load_in_4bit is false, and load_in_8bit is true, 8-bit QLoRA training is used.
    - gradient_accumulation_steps (int, default: 8, optional): Number of steps over which gradients are accumulated before the weights are updated.
    - apply_chat_template (bool, default: true, optional): Whether to apply the model's default chat template to the training data. For Qwen2-series models, the template is:
      - Prompt: <|im_start|>system\n + system_prompt + <|im_end|>\n<|im_start|>user\n + instruction + <|im_end|>\n
      - Response: <|im_start|>assistant\n + output + <|im_end|>\n
    - system_prompt (string, default: You are a helpful assistant, optional): System prompt used during training.

-

-

-

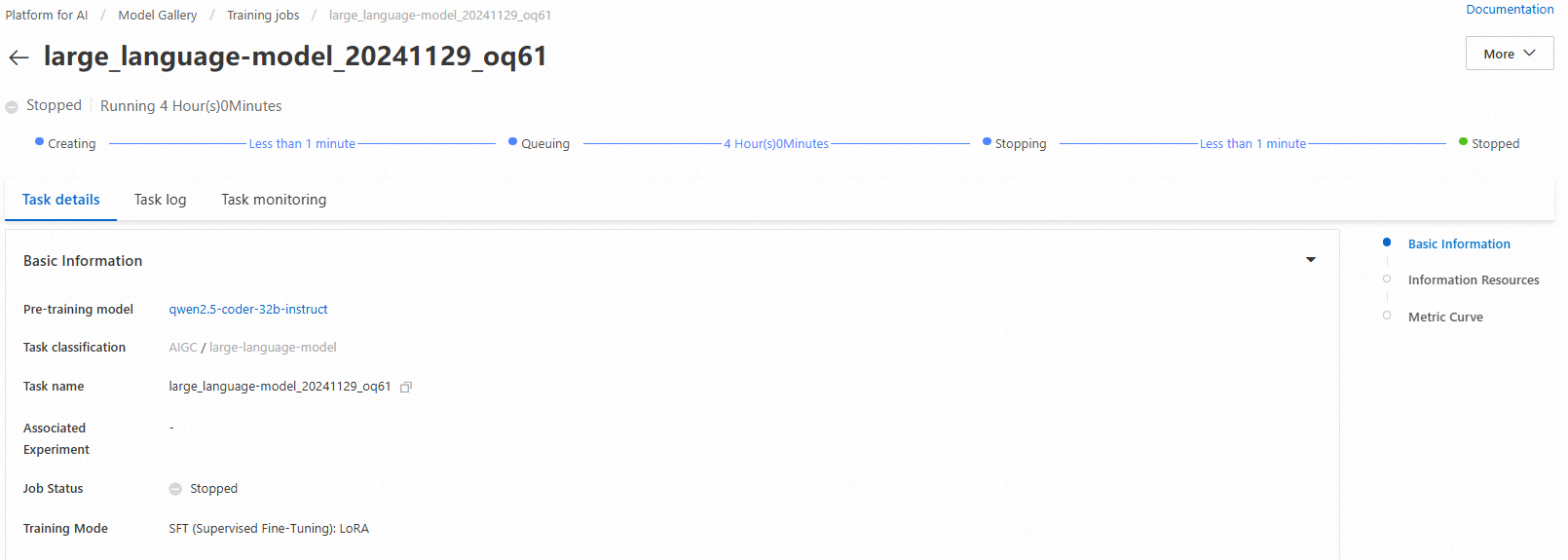

Click Train. Model Gallery opens the task details page and starts training. Monitor training job status and logs.
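The rules for lora_dim, load_in_4bit, and load_in_8bit, and the effect of gradient_accumulation_steps, can be summarized in a few lines. This is a minimal sketch of the rules stated in the hyperparameter list, not PAI's training code. The function names are hypothetical, and three points are assumptions: full-parameter training when lora_dim is 0, the effective batch size formula (which assumes data-parallel training across `num_gpus` GPUs), and the alpha/rank scaling from the standard LoRA formulation.

```python
def resolve_training_mode(lora_dim: int, load_in_4bit: bool = False,
                          load_in_8bit: bool = False) -> str:
    """Map the three hyperparameters to a training mode, per the rules above."""
    if lora_dim <= 0:
        # Assumption: without LoRA, all model parameters are trained.
        return "full"
    if load_in_4bit and load_in_8bit:
        # This combination is not covered by the documented rules.
        raise ValueError("set at most one of load_in_4bit and load_in_8bit")
    if load_in_4bit:
        return "qlora-4bit"
    if load_in_8bit:
        return "qlora-8bit"
    return "lora"


def effective_batch_size(per_device_train_batch_size: int,
                         gradient_accumulation_steps: int,
                         num_gpus: int = 1) -> int:
    """Samples that contribute to one weight update (assumes data parallelism)."""
    return per_device_train_batch_size * gradient_accumulation_steps * num_gpus


def lora_scaling(lora_alpha: int, lora_dim: int) -> float:
    """Scale applied to the LoRA update in the standard formulation: alpha / rank."""
    if lora_dim <= 0:
        raise ValueError("LoRA is disabled when lora_dim <= 0")
    return lora_alpha / lora_dim


# With the default values: LoRA training, 8 samples per update on one GPU,
# and a LoRA scale of 1.0.
print(resolve_training_mode(32), effective_batch_size(1, 8), lora_scaling(32, 32))
```

Raising gradient_accumulation_steps increases the effective batch size without the extra VRAM cost of a larger per_device_train_batch_size, at the price of fewer weight updates per epoch.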

Trained models are automatically registered in AI Assets > Model Management, where you can view or deploy them. For details, see Register and manage models.
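When apply_chat_template is true, each SFT sample is wrapped in the Qwen2 chat format described for that hyperparameter above. The sketch below renders one sample as plain strings so you can inspect the layout. It assumes the system turn precedes the user turn, as in the standard Qwen2 chat template, and `render_qwen2_sample` is a hypothetical helper name; the model tokenizer's own chat template remains the authoritative source.

```python
def render_qwen2_sample(instruction: str, output: str,
                        system_prompt: str = "You are a helpful assistant") -> tuple[str, str]:
    """Return (prompt, response) strings in the Qwen2 chat format.

    Assumption: the system turn comes first, as in the standard Qwen2 template.
    """
    prompt = (
        f"<|im_start|>system\n{system_prompt}<|im_end|>\n"
        f"<|im_start|>user\n{instruction}<|im_end|>\n"
    )
    response = f"<|im_start|>assistant\n{output}<|im_end|>\n"
    return prompt, response


prompt, response = render_qwen2_sample(
    "Create a function to calculate the sum of a sequence of integers.",
    "def sum_sequence(sequence):\n    return sum(sequence)",
)
print(prompt + response)
```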

Evaluate the model

Model evaluation measures and compares model performance, which helps you choose between models and decide where to optimize them.

Model Gallery includes built-in evaluation algorithms for Qwen2.5-Coder-32B-Instruct. You can evaluate either the original model or your fine-tuned versions. For full instructions, see Model evaluation and Large Language Model Evaluation Best Practices.

Compress the model

Before deployment, you can quantize and compress trained models to reduce storage and compute usage. For full instructions, see Model compression.
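Quantization stores weights in fewer bits together with a scale factor. As a conceptual illustration only (this is not the algorithm Model Gallery uses, which is covered in Model compression), the sketch below applies symmetric per-tensor int8 quantization to a small list of weights and measures the round-trip error. Production LLM quantizers typically use finer-grained scales and calibration data.

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor int8 quantization: w ≈ q * scale, q in [-127, 127]."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the int8 range.
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize_int8(q: list[int], scale: float) -> list[float]:
    """Map the int8 codes back to approximate float weights."""
    return [v * scale for v in q]


weights = [0.12, -0.5, 0.33, 0.0, 0.49]
q, scale = quantize_int8(weights)
restored = dequantize_int8(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, scale, max_error)
```

Each int8 code takes 1 byte instead of the 2 bytes of a bf16 weight, and with round-to-nearest the per-weight error is at most half of `scale`.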