Evaluate Large Language Models using custom datasets, public benchmarks, or LLM-as-a-Judge scoring.

Available evaluation methods

Model evaluation assesses LLMs using two approaches:

- Custom dataset evaluation
  - Rule-based evaluation: Uses ROUGE and BLEU metrics to measure similarity between model predictions and ground truth answers.
  - LLM-as-a-Judge evaluation: Uses the PAI Qwen2-based judge model to score each model output individually. Works well for open-ended and complex Q&A scenarios.
- Public dataset evaluation
  - Evaluates models on industry-standard public datasets such as MMLU, TriviaQA, HellaSwag, GSM8K, C-Eval, and TruthfulQA.
  - Provides benchmark scores aligned with industry evaluation standards.

Supported models: All HuggingFace AutoModelForCausalLM model types.

LLM-as-a-Judge scoring uses a Qwen2-based large language model to score model outputs. It works well for open-ended and complex Q&A scenarios. Try it for free in Expert Mode under Model Evaluation. [2024.09.01]

Use cases

Common use cases:

- Model benchmarking
  - Evaluates general model capabilities using public datasets.
  - Compares your model against industry benchmarks or other models.
- Domain-specific capability evaluation
  - Applies the model to a specific domain.
  - Compares performance of pre-trained and fine-tuned models across domains.
  - Evaluates how well the model applies domain knowledge.
- Model regression testing
  - Builds a regression test set.
  - Evaluates model performance in real business scenarios.
  - Determines whether the model meets production standards.

Billing

- OSS storage fee: Covers storage of evaluation datasets and results. For more information, see OSS billing information.
- DLC evaluation task fee: Covers running evaluation tasks. For more information, see DLC billing information.

Data preparation

Model evaluation supports evaluations using custom datasets or public datasets such as C-Eval.

- Public datasets: PAI currently maintains the following public datasets: MMLU, TriviaQA, HellaSwag, GSM8K, C-Eval, and TruthfulQA. Use them directly. More public datasets will be added.
- Custom datasets: Provide a JSONL-formatted evaluation file. Upload it to OSS and create a custom dataset. For more information, see Upload an OSS file and Create and manage datasets. Required format:

  Use `question` to label the question column and `answer` to label the answer column. Alternatively, select specific columns on the evaluation page. If you run only LLM-as-a-Judge evaluation on the custom dataset, the `answer` column is optional.

      {"question": "Did China invent papermaking? Is this correct?", "answer": "Correct"}
      {"question": "Did China invent gunpowder? Is this correct?", "answer": "Correct"}

  Example file: eval.jsonl
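Before uploading a custom dataset to OSS, it can help to check the file locally. The following is a minimal sketch, not part of PAI: the helper names `write_eval_file` and `validate_eval_file` are hypothetical, and the check assumes the default `question` and `answer` column names described above. The `judge_only` flag models the case where only LLM-as-a-Judge evaluation is used, so `answer` may be omitted.

```python
import json


def write_eval_file(path, records):
    """Write one JSON object per line (JSONL), keeping non-ASCII text readable."""
    with open(path, "w", encoding="utf-8") as f:
        for record in records:
            f.write(json.dumps(record, ensure_ascii=False) + "\n")


def validate_eval_file(path, judge_only=False):
    """Return a list of (line_number, problem) tuples for a JSONL evaluation file.

    Assumption: the default column names `question` and `answer` are used.
    With judge_only=True, only `question` is required.
    """
    required = ["question"] if judge_only else ["question", "answer"]
    problems = []
    with open(path, encoding="utf-8") as f:
        for number, line in enumerate(f, start=1):
            if not line.strip():
                continue  # tolerate blank lines
            try:
                record = json.loads(line)
            except json.JSONDecodeError as exc:
                problems.append((number, f"invalid JSON: {exc.msg}"))
                continue
            if not isinstance(record, dict):
                problems.append((number, "record is not a JSON object"))
                continue
            for key in required:
                value = record.get(key)
                if not isinstance(value, str) or not value.strip():
                    problems.append((number, f"missing or empty '{key}'"))
    return problems


samples = [
    {"question": "Did China invent papermaking? Is this correct?", "answer": "Correct"},
    {"question": "Did China invent gunpowder? Is this correct?", "answer": "Correct"},
]
write_eval_file("eval.jsonl", samples)
print(validate_eval_file("eval.jsonl"))  # an empty list means no problems were found
```

If you map other columns on the evaluation page instead of using the default names, adjust the `required` list accordingly.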

Workflow

Step 1: Select a model

-

Go to the Model Gallery page.

-

Log on to the PAI console.

-

In the navigation pane on the left, click Workspaces. Then, select and enter your target workspace.

-

In the navigation pane on the left, choose QuickStart > Model Gallery to go to the Model Gallery page.

-

-

Find models that support evaluation.

-

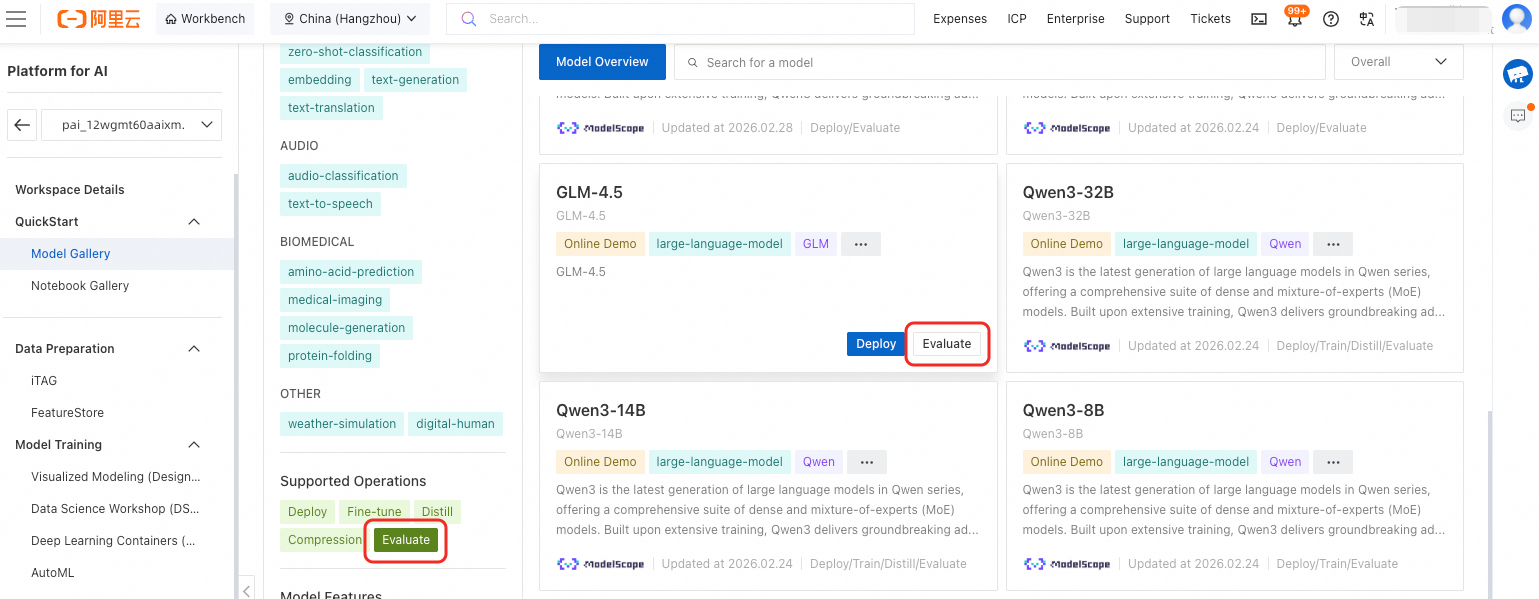

Filter for evaluatable models in the Model Gallery. In the Supported Operations filter section, select Evaluate to display only models that support evaluation.

-

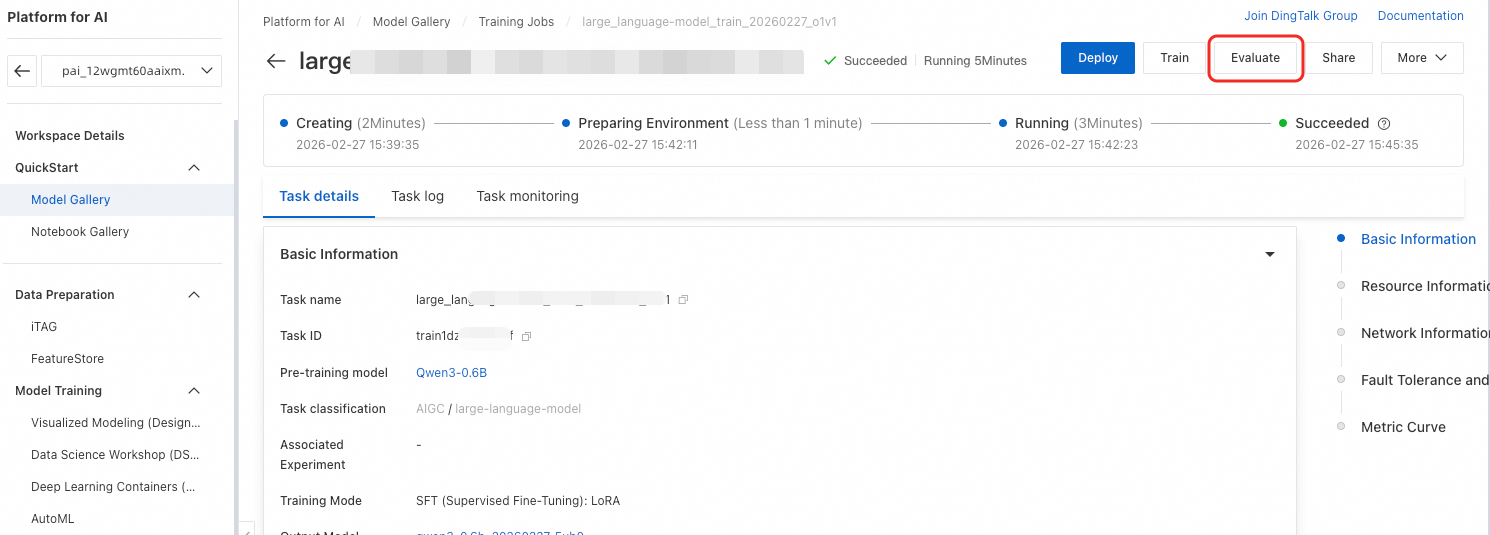

Evaluate a fine-tuned model. If a model supports evaluation, its fine-tuned version also supports evaluation. On the Model Gallery page, click Job Management > Training Jobs in the top-left corner. Click the target job name to open its details page. Click Evaluate in the top-right corner.

-

Step 2: Configure the evaluation task

An evaluation task can use public datasets, custom datasets, or both. You can also set hyperparameters, use LLM-as-a-Judge evaluation, and select multiple public datasets. Configure the task as follows:

- Configure basic parameters:
  - Job Name: An automatically generated unique name.
  - Result Output Path: OSS path where evaluation results are stored.
  - Label: Tags enable searching, locating, batch operations, and cost allocation across multiple dimensions.
- Configure evaluation method:
  - Evaluation Method: Choose one of the following:
    - Set Dataset to Public: Select multiple datasets.
    - Custom Dataset: Specify the question and reference answer columns. If using only LLM-as-a-Judge evaluation, the reference answer column is optional.
      - Dataset Source: Choose Select OSS File or Select an existing dataset.
      - Evaluation Method: Choose Judge Model Evaluation or General Metric Evaluation.
      - PAI-Judge Model Service Token: Auto-configured when you use LLM-as-a-Judge evaluation. You can obtain the token from the LLM-as-a-Judge page.
- Configure compute resources:
  - Resource Group Type: Select a pay-as-you-go public resource group or a subscription resource quota that you have created.

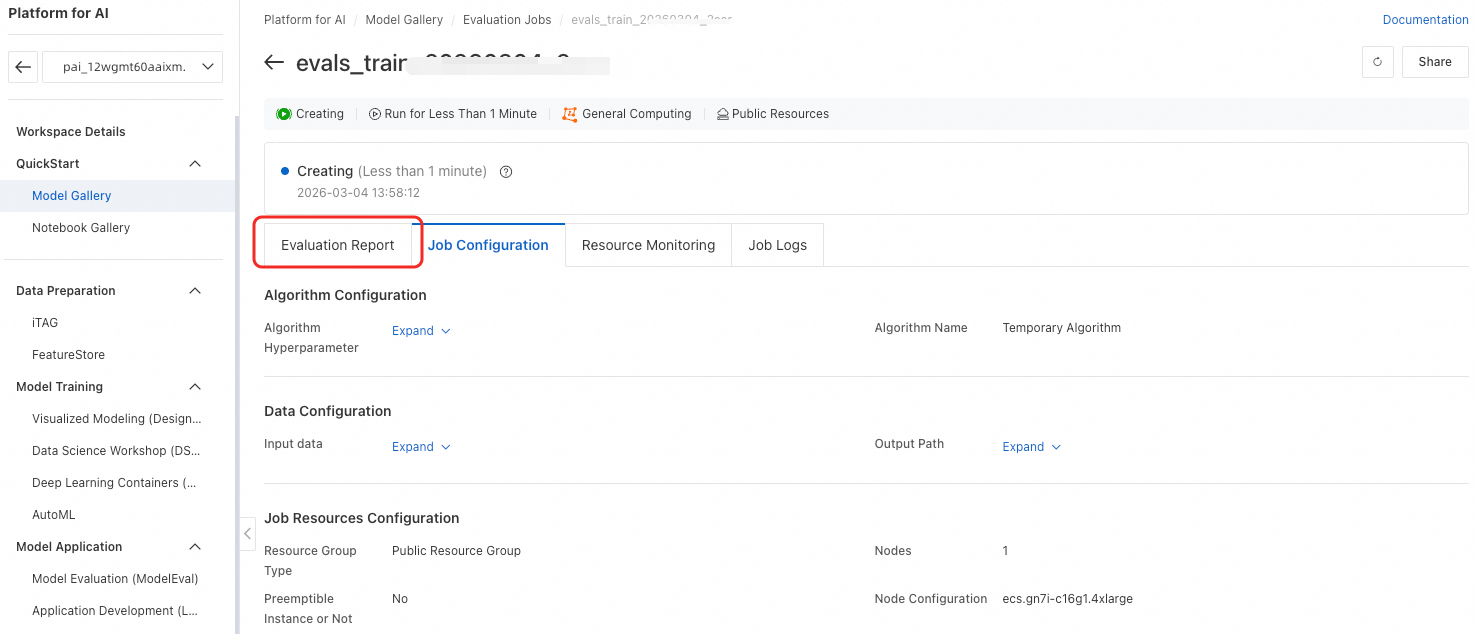

After configuring parameters, click OK to submit the task. The page automatically redirects to the task details page. Wait for the task to complete. Click Evaluation Report to view the report.

View evaluation results

Evaluation task list

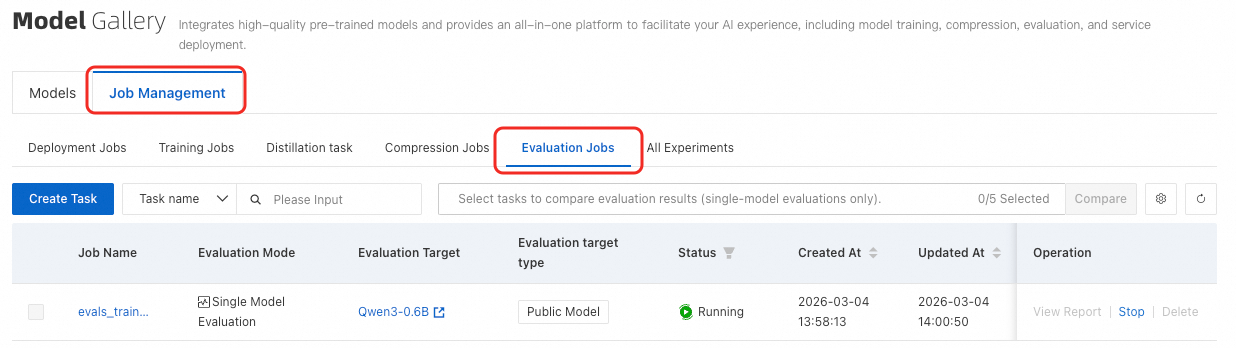

On the Model Gallery page, click Job Management. Then, switch to the Evaluation Jobs tab.

Single-task results

On the evaluation task list page, click View Report in the Actions column for the target evaluation task. This opens the task details page. Under Evaluation Report, view scores for both custom and public datasets.

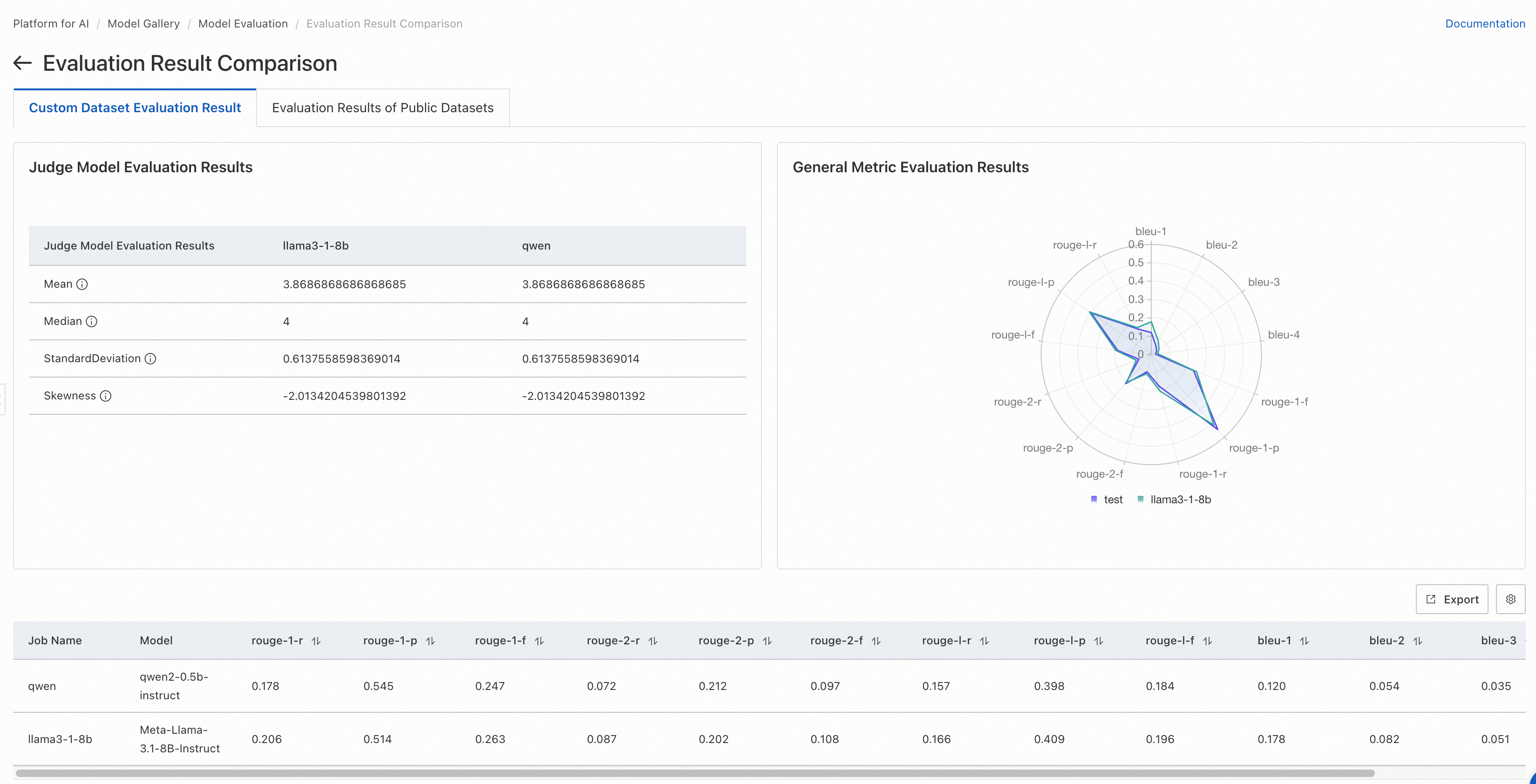

Custom dataset evaluation results page

- If General Metric Evaluation is selected, a radar chart shows scores for ROUGE and BLEU metrics. Default metrics for custom datasets include: rouge-1-f, rouge-1-p, rouge-1-r, rouge-2-f, rouge-2-p, rouge-2-r, rouge-l-f, rouge-l-p, rouge-l-r, bleu-1, bleu-2, bleu-3, and bleu-4.
  - ROUGE metrics:
    - ROUGE-n metrics measure N-gram overlap. ROUGE-1 and ROUGE-2 are most common, corresponding to unigrams and bigrams:
      - rouge-1-p (Precision): Ratio of unigrams in the system summary that match unigrams in the reference summary.
      - rouge-1-r (Recall): Ratio of unigrams in the reference summary that appear in the system summary.
      - rouge-1-f (F-score): Harmonic mean of precision and recall.
      - rouge-2-p (Precision): Ratio of bigrams in the system summary that match bigrams in the reference summary.
      - rouge-2-r (Recall): Ratio of bigrams in the reference summary that appear in the system summary.
      - rouge-2-f (F-score): Harmonic mean of precision and recall.
    - ROUGE-L uses the longest common subsequence (LCS):
      - rouge-l-p (Precision): Precision based on LCS match between system and reference summaries.
      - rouge-l-r (Recall): Recall based on LCS match between system and reference summaries.
      - rouge-l-f (F-score): F-score based on LCS match between system and reference summaries.
  - BLEU metrics: BLEU (Bilingual Evaluation Understudy) measures machine translation quality by evaluating N-gram overlap with reference translations.
    - bleu-1: Measures unigram overlap.
    - bleu-2: Measures bigram overlap.
    - bleu-3: Measures trigram (three consecutive words) overlap.
    - bleu-4: Measures 4-gram overlap.

- If LLM-as-a-Judge evaluation is selected, the page lists statistical metrics from judge model scores.
  - The judge model is a Qwen2-based LLM fine-tuned by PAI. Its performance matches GPT-4 on open-source benchmarks such as AlignBench. In some scenarios, it outperforms GPT-4.
  - The page shows four statistical metrics from judge model scores:
    - Mean: Average score given by the judge LLM (excluding invalid scores). Scores range from 1 to 5. Higher values indicate better model responses.
    - Median: Median score given by the judge LLM (excluding invalid scores). Scores range from 1 to 5. Higher values indicate better model responses.
    - Standard Deviation: Standard deviation of scores given by the judge LLM (excluding invalid scores). When two models have equal mean and median scores, the one with the smaller standard deviation scores more consistently and performs better.
    - Skewness: Skewness of the judge LLM score distribution (excluding invalid scores). Positive skew means a longer tail on the right (high-score side). Negative skew means a longer tail on the left (low-score side).
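The four judge-score statistics can be reproduced from a list of per-sample scores. The sketch below is illustrative only and makes several assumptions that this page does not specify: an "invalid" score is taken to mean a missing value or a number outside the 1 to 5 range, the standard deviation is the population form, and skewness is the population (Fisher-Pearson) coefficient. The function name `summarize_judge_scores` is hypothetical.

```python
import statistics


def summarize_judge_scores(raw_scores, low=1, high=5):
    """Compute mean, median, standard deviation, and skewness of judge scores.

    Assumption: a score is invalid if it is not a number or falls outside
    [low, high]; invalid scores are dropped before any statistic is computed.
    """
    valid = [
        s for s in raw_scores
        if isinstance(s, (int, float)) and not isinstance(s, bool) and low <= s <= high
    ]
    if not valid:
        raise ValueError("no valid judge scores")

    mean = statistics.fmean(valid)
    std = statistics.pstdev(valid)  # population standard deviation (assumption)
    if std == 0:
        skewness = 0.0  # all scores identical, so the distribution is symmetric
    else:
        # Third standardized moment: negative when the low-score tail is longer.
        skewness = sum(((s - mean) / std) ** 3 for s in valid) / len(valid)

    return {
        "count": len(valid),
        "mean": mean,
        "median": statistics.median(valid),
        "std": std,
        "skewness": skewness,
    }


# Made-up scores for illustration; None stands for a response the judge could not score.
scores = [5, 4, 4, 3, None, 5, 1]
summary = summarize_judge_scores(scores)
print(summary)
```

In this made-up sample, the single low score pulls the mean below the median and produces a negative skewness, which matches the "longer tail on the left" reading described above.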

The page also shows evaluation details for each line in the evaluation file.

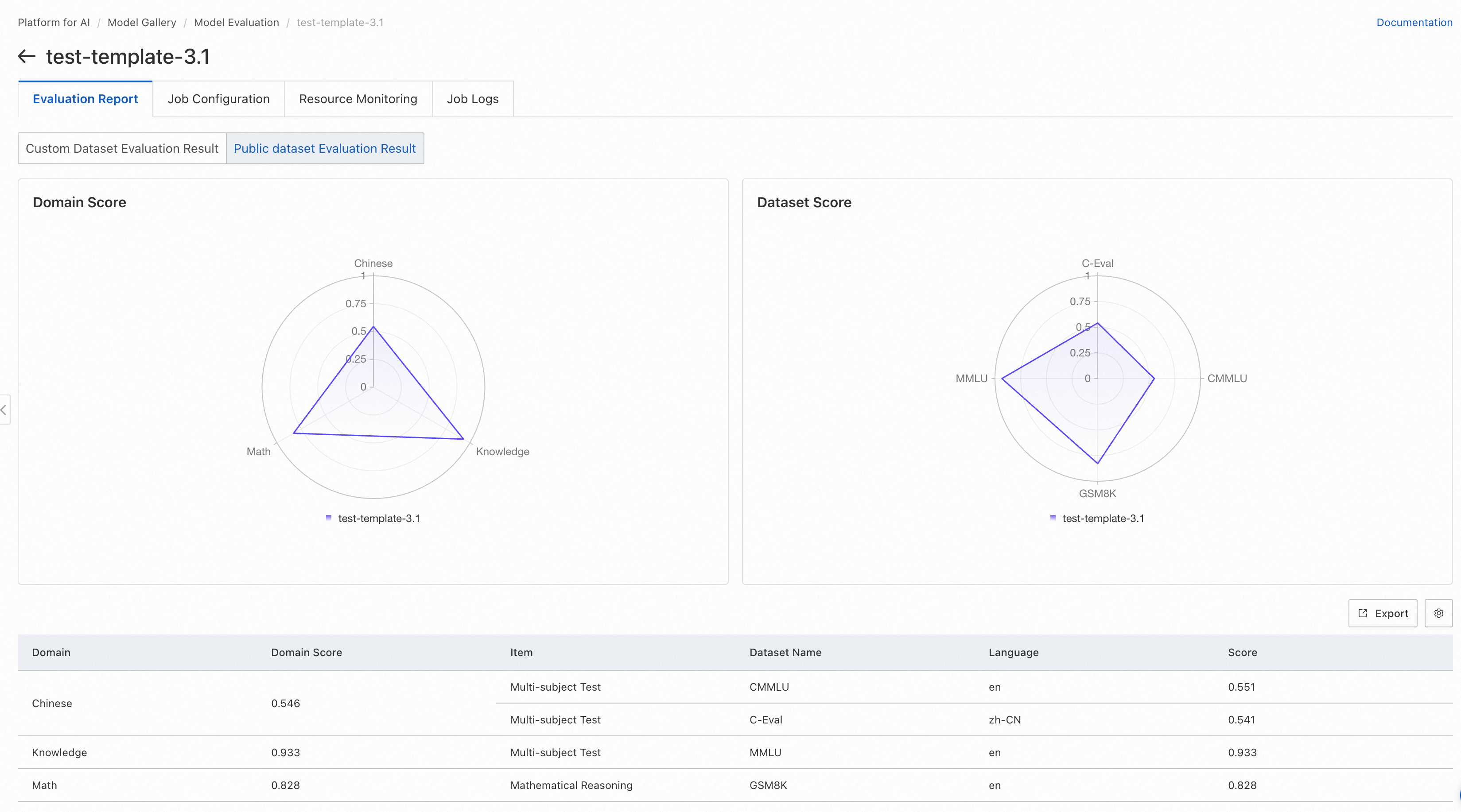

Public dataset evaluation results page

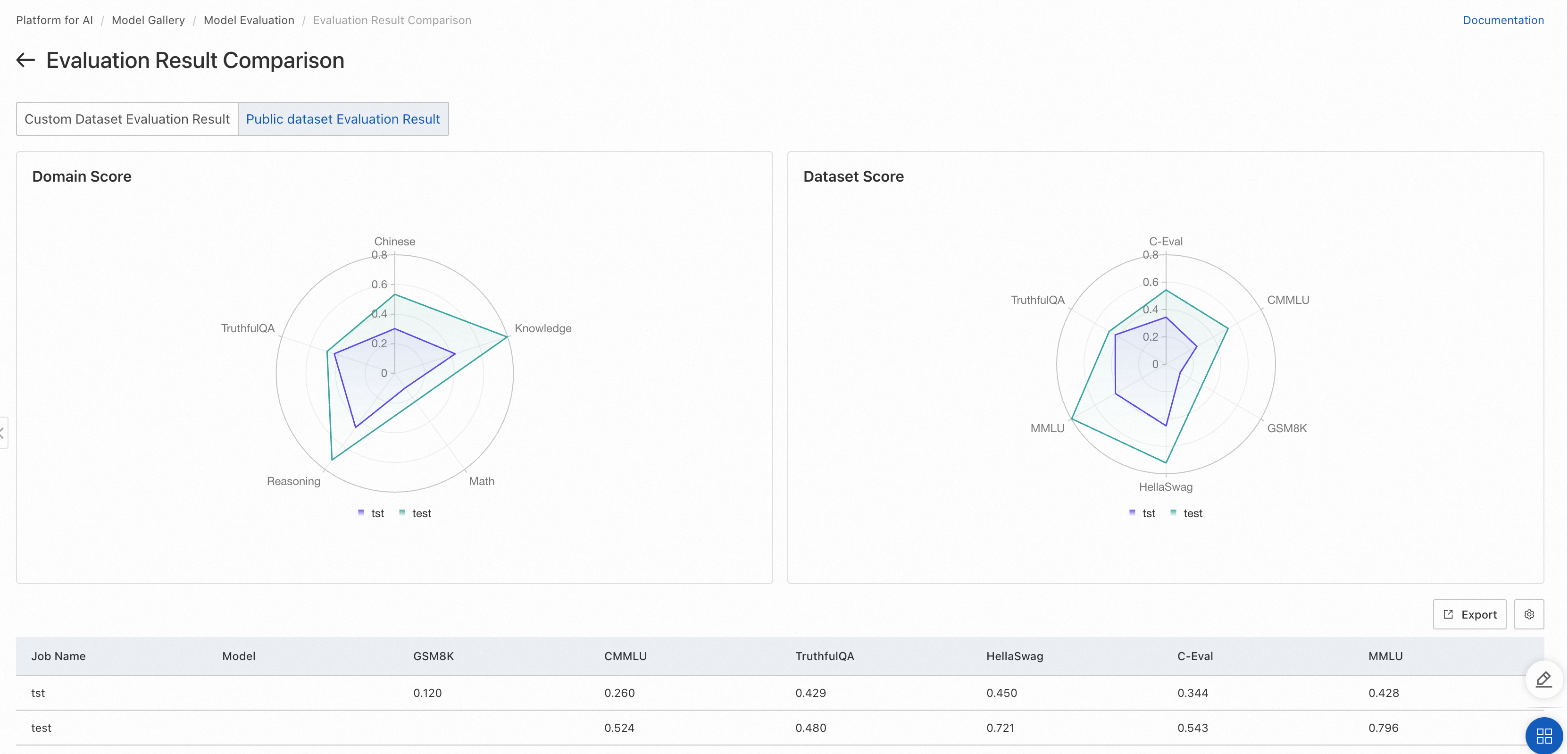

If the evaluation task used a public dataset, a radar chart displays scores across those datasets.

- The left chart displays scores across domains. Each domain may have multiple related datasets. For datasets in the same domain, model scores are averaged to obtain a single domain score.
- The right chart displays scores for each public dataset. See each dataset's official documentation for its evaluation scope.
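The domain-level averaging behind the left chart can be sketched as follows. This is an illustration under stated assumptions: the dataset names, the dataset-to-domain mapping, and the scores are placeholders, because this page does not list which public dataset belongs to which domain. The function name `domain_scores` is hypothetical.

```python
from collections import defaultdict
from statistics import fmean


def domain_scores(dataset_scores, dataset_domain):
    """Average per-dataset scores into one score per domain."""
    grouped = defaultdict(list)
    for dataset, score in dataset_scores.items():
        grouped[dataset_domain[dataset]].append(score)
    return {domain: fmean(scores) for domain, scores in grouped.items()}


# Placeholder mapping and scores, for illustration only.
dataset_domain = {
    "dataset_a": "domain_x",
    "dataset_b": "domain_x",
    "dataset_c": "domain_y",
}
dataset_scores = {"dataset_a": 60.0, "dataset_b": 80.0, "dataset_c": 50.0}

per_domain = domain_scores(dataset_scores, dataset_domain)
print(per_domain)
```

Because each domain score is a plain average, a domain backed by a single dataset simply repeats that dataset's score.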

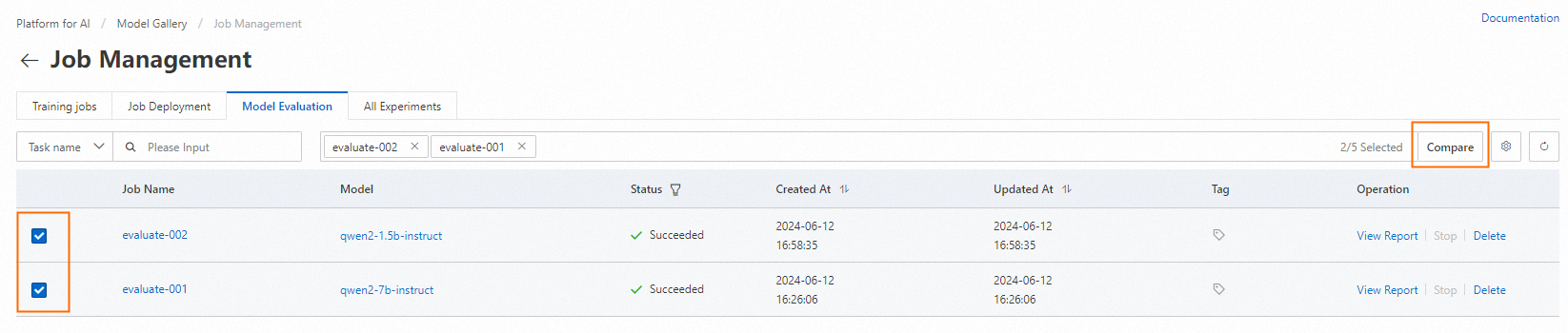

Compare multiple evaluation tasks

To compare results from multiple models, group them on a single page. On the evaluation task list page, select the tasks to compare on the left. Click Compare in the top-right corner to open the comparison page:

Custom dataset comparison results

Public dataset comparison results

Result analysis

Custom dataset evaluation

General metric evaluation: Uses standard NLP text-matching methods to calculate similarity between model output and ground truth. Higher scores indicate better model performance. Best for evaluating how well a model fits a specific scenario using domain-specific data.
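To make the text-matching metrics concrete, here is a minimal sketch of ROUGE-n, ROUGE-L, and the clipped n-gram precision that BLEU builds on. It is not the platform's implementation: whitespace tokenization, a single reference answer, and an unweighted harmonic mean for the F-score are all assumptions, and the platform's exact bleu-n computation (for example smoothing or a brevity penalty) is not described on this page. All function names are hypothetical.

```python
from collections import Counter


def ngrams(tokens, n):
    """Count the n-grams in a token list."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def f_score(precision, recall):
    """Harmonic mean of precision and recall (0.0 when both are 0)."""
    total = precision + recall
    return 2 * precision * recall / total if total else 0.0


def rouge_n(prediction, reference, n):
    """ROUGE-n precision, recall, and F-score from clipped n-gram overlap."""
    pred, ref = ngrams(prediction.split(), n), ngrams(reference.split(), n)
    overlap = sum((pred & ref).values())  # '&' keeps the minimum count per n-gram
    p = overlap / sum(pred.values()) if pred else 0.0
    r = overlap / sum(ref.values()) if ref else 0.0
    return {"p": p, "r": r, "f": f_score(p, r)}


def lcs_length(a, b):
    """Length of the longest common subsequence of two token lists."""
    prev = [0] * (len(b) + 1)
    for x in a:
        curr = [0]
        for j, y in enumerate(b, start=1):
            curr.append(prev[j - 1] + 1 if x == y else max(prev[j], curr[j - 1]))
        prev = curr
    return prev[-1]


def rouge_l(prediction, reference):
    """ROUGE-L precision, recall, and F-score based on the LCS length."""
    pred, ref = prediction.split(), reference.split()
    lcs = lcs_length(pred, ref)
    p = lcs / len(pred) if pred else 0.0
    r = lcs / len(ref) if ref else 0.0
    return {"p": p, "r": r, "f": f_score(p, r)}


def bleu_n_precision(prediction, reference, n):
    """Clipped n-gram precision, the per-order building block of BLEU."""
    pred, ref = ngrams(prediction.split(), n), ngrams(reference.split(), n)
    total = sum(pred.values())
    return sum((pred & ref).values()) / total if total else 0.0


prediction = "the cat sat on the mat"
reference = "the cat is on the mat"
print("rouge-1:", rouge_n(prediction, reference, 1))
print("rouge-2:", rouge_n(prediction, reference, 2))
print("rouge-l:", rouge_l(prediction, reference))
print("bleu-2 precision:", bleu_n_precision(prediction, reference, 2))
```

With a single reference, the clipped n-gram precision coincides with ROUGE-n precision. The two metric families differ mainly in that ROUGE also reports recall, while full BLEU combines several n-gram orders. Whitespace splitting does not suit languages written without spaces, so a real evaluation would need a tokenizer appropriate to the data.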

LLM-as-a-Judge evaluation: Leverages LLM strengths to evaluate output quality at the semantic level. Higher mean and median scores and lower standard deviation indicate better model performance. Compared with simple text matching, this method gives a more accurate assessment of output quality, especially for open-ended answers.

Public dataset evaluation

Uses open-source evaluation datasets covering many domains, such as math and coding, to provide a comprehensive capability assessment. Higher scores indicate better model performance.

References

You can also run model evaluation through the PAI Python SDK. For more information, see these notebooks: