MaxCompute provides built-in functions for common data processing tasks. When built-in functions cannot express your logic, extend MaxCompute with user-defined functions (UDFs).

Built-in functions outperform UDFs. Use built-in functions whenever possible, and write a UDF only when they cannot cover your logic.

When to use a UDF

Use a UDF when your transformation logic cannot be expressed with built-in functions. Common scenarios include:

- Custom string parsing or business-specific transformations
- Spatial data analysis
- Cross-project data processing pipelines

For standard aggregations, string operations, and arithmetic, use built-in functions instead. They require no deployment and perform significantly better at scale.

If a UDF and a built-in function share the same name, MaxCompute calls the UDF. To call the built-in function instead, prefix its name with ::. For example: select ::concat('ab', 'c');

UDF types

In the broad sense, UDFs include user-defined scalar functions (UDF), user-defined aggregate functions (UDAF), and user-defined table-valued functions (UDTF). In the narrow sense, UDFs refer only to user-defined scalar functions.

MaxCompute supports three core UDF types.

| Type | Input-to-output mapping | Behavior |
|---|---|---|
| UDF (user-defined scalar function) | One-to-one | Each input row returns one output value |
| UDTF (user-defined table-valued function) | One-to-many | Each input row returns multiple values as a table |
| UDAF (user-defined aggregate function) | Many-to-one | Multiple input rows are aggregated into one output value |
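The three mapping shapes in the table can be illustrated with ordinary Python functions. This is only a sketch of the row-level semantics, not MaxCompute API code. The function names and sample rows are hypothetical and chosen for illustration.

```python
# Plain-Python illustration of the three mapping shapes; not MaxCompute API code.
from typing import Iterable, Iterator, List


def scalar_upper(value: str) -> str:
    """UDF shape: one input row produces one output value."""
    return value.upper()


def explode_csv(value: str) -> Iterator[str]:
    """UDTF shape: one input row produces zero or more output rows."""
    for part in value.split(","):
        yield part.strip()


def total_length(values: Iterable[str]) -> int:
    """UDAF shape: many input rows are reduced to one output value."""
    return sum(len(v) for v in values)


rows: List[str] = ["a,b", "c"]

udf_out = [scalar_upper(r) for r in rows]             # one output per input row
udtf_out = [p for r in rows for p in explode_csv(r)]  # input rows fan out
udaf_out = total_length(rows)                         # input rows collapse to one value
```

With two input rows, the scalar function yields two values, the table-valued function yields three rows, and the aggregate yields a single number.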

MaxCompute also provides specialized UDF types for specific scenarios.

| Type | Use case |
|---|---|
| Code-embedded UDFs | Embed Java or Python code directly in SQL scripts to simplify development and improve readability |
| SQL UDFs | Encapsulate reusable SQL logic to reduce code duplication |
| Geospatial UDFs | Apply Hive geospatial functions to analyze spatial data in MaxCompute |

Constraints and considerations

Hard limits

Internet access — UDFs cannot access the Internet by default. To enable Internet access, submit a network connection application. After approval, the MaxCompute technical support team will contact you to establish the connection. For details, see Network Connection Request Form and Network connection process.

VPC access — UDFs cannot access resources in a virtual private cloud (VPC) by default. To enable VPC access, establish a network connection between MaxCompute and the VPC. For details, see Use UDFs to access resources in VPCs.

Unsupported table types — UDFs, UDAFs, and UDTFs cannot read data from the following table types:

- Tables on which schema evolution has been performed
- Tables that contain complex data types
- Tables that contain JSON data types
- Transactional tables

Considerations

Memory usage — When a UDF processes large datasets with data skew, the computing job may exceed the default JVM memory allocation. To increase the memory limit, run the following command at the session level:

set odps.sql.udf.joiner.jvm.memory=xxxx;

For more information, see FAQ about MaxCompute UDFs.

Develop a UDF

The development process for code-embedded UDFs, SQL UDFs, and Geospatial UDFs differs from the standard UDF, UDTF, and UDAF workflow. See the linked documentation for those types.

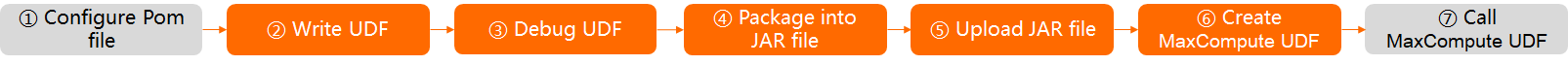

Java

The following figure shows the Java UDF development workflow.

| Step | Required | Action | Platform | Notes |
|---|---|---|---|---|
| 1 | Optional | Add the odps-sdk-udf dependency to your POM file | IntelliJ IDEA (Maven) | Search for odps-sdk-udf on Maven repositories to get the latest version. Example: 0.29.10-public |
| 2 | Required | Write UDF code | IntelliJ IDEA (Maven), MaxCompute Studio | Follow UDF development specifications and general process (Java) |
| 3 | Required | Debug on your local machine or run unit tests | — | Verify that the output matches expectations |
| 4 | Required | Package the code into a JAR file | — | — |
| 5 | Required | Upload the JAR as a resource to your MaxCompute project | DataWorks console, MaxCompute client, MaxCompute Studio | Three methods available: DataWorks console (visual), MaxCompute client (SQL), or MaxCompute Studio (code). See the platform-specific guides linked. |
| 6 | Required | Create the UDF from the uploaded JAR | — | — |
| 7 | Optional | Call the UDF in queries | — | — |

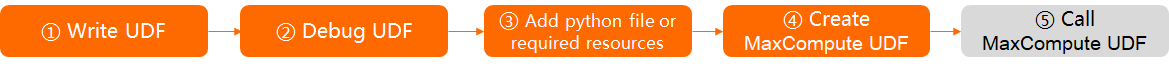

Python

The following figure shows the Python UDF development workflow.

| Step | Required | Action | Platform | Notes |
|---|---|---|---|---|
| 1 | Required | Write UDF code | MaxCompute Studio | Follow the UDF development specifications and general process for Python 3 or Python 2 |
| 2 | Required | Debug on your local machine or run unit tests | — | Verify that the output matches expectations |
| 3 | Required | Upload Python files and any required resources (file resources, table resources, third-party packages) to your MaxCompute project | DataWorks console, MaxCompute client, MaxCompute Studio | Three methods available: DataWorks console (visual), MaxCompute client (SQL), or MaxCompute Studio (code). See the platform-specific guides linked. |
| 4 | Required | Create the UDF from the uploaded files | — | — |
| 5 | Optional | Call the UDF in queries | — | — |
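Steps 1 and 2 of the Python workflow might look like the sketch below: a scalar UDF class with an evaluate method, plus a small local check. The class name MaskPhone, its masking rule, and the run_local_checks helper are hypothetical. The odps.udf.annotate import is assumed to be available in the MaxCompute Python runtime (or locally if PyODPS is installed). The fallback decorator is only a local stand-in so the class can be exercised without MaxCompute.

```python
# Sketch of a scalar Python UDF plus a local check (workflow steps 1 and 2).
try:
    # Assumed to be provided by the MaxCompute runtime or a local PyODPS install.
    from odps.udf import annotate
except ImportError:
    # Local stand-in: records the signature string and returns the class unchanged.
    def annotate(signature):
        def wrap(cls):
            cls.signature = signature
            return cls
        return wrap


@annotate("string->string")
class MaskPhone(object):
    """Hypothetical UDF: keep the last four characters and mask the rest."""

    def evaluate(self, phone):
        if phone is None:
            return None          # pass NULL input through as NULL
        if len(phone) <= 4:
            return phone         # too short to mask
        return "*" * (len(phone) - 4) + phone[-4:]


def run_local_checks():
    """Call evaluate directly, as a unit test would, before uploading the file."""
    udf = MaskPhone()
    assert udf.evaluate("13800001234") == "*******1234"
    assert udf.evaluate("123") == "123"
    assert udf.evaluate(None) is None
    return True
```

Calling evaluate directly only exercises the Python logic. Uploading the file, registering the function, and calling it from SQL (steps 3 to 5) still have to be verified in a MaxCompute project.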

SDK reference

The following SDKs support Java UDF development. For package and class details, see MaxCompute SDK.

| SDK | Description |
|---|---|
| odps-sdk-core | Manages basic MaxCompute resources |
| odps-sdk-commons | Common utilities for Java |
| odps-sdk-udf | UDF APIs |
| odps-sdk-mapred | MapReduce API |
| odps-sdk-graph | Graph API |

Call a UDF

After registering a UDF, call it the same way as a built-in function.

Within a project — Call the UDF directly, like any built-in function.

Across projects — To use a UDF from project B in project A, reference it with the project prefix:

select B:udf_in_other_project(arg0, arg1) as res from table_t;

For cross-project sharing setup, see Cross-project resource access based on packages.

What's next

Explore end-to-end examples to see UDF development in practice: