When MaxCompute's built-in functions don't cover your use case, write a user-defined function (UDF) in Java to extend SQL with custom logic. This topic covers the UDF code structure, data type mappings, and step-by-step development workflows using MaxCompute Studio, DataWorks, or the MaxCompute client (odpscmd).

Prerequisites

Before you begin, ensure that you have:

- A MaxCompute project
- One of the following development tools set up and connected to your project:
  - MaxCompute Studio (IntelliJ IDEA plugin)
  - A DataWorks workspace associated with a MaxCompute project
  - The MaxCompute client (odpscmd) configured with a config file

UDF code structure

A Java UDF is a class that extends com.aliyun.odps.udf.UDF and implements an evaluate method. The following table describes the components of a valid UDF.

| Component | Required | Description |
|---|---|---|
| Java package | Optional | Groups your classes into a JAR file for reuse. |
| Base class com.aliyun.odps.udf.UDF | Required | All Java UDFs must inherit this class. To use complex data types such as STRUCT, import the corresponding class (e.g., com.aliyun.odps.data.Struct). See Overview for the full list. |
| @Resolve annotation | Optional | Defines input and return types in the format @Resolve(<signature>). Required when the UDF uses the STRUCT type, because reflection cannot retrieve field names and types from com.aliyun.odps.data.Struct. The annotation applies only to evaluate overloads whose input parameters or return value contain com.aliyun.odps.data.Struct. Example: @Resolve("struct<a:string>,string->string"). |
| Custom Java class | Required | The organizational unit of your UDF code. |
| evaluate method | Required | A non-static public method whose parameter and return types define the UDF's SQL signature. Implement multiple evaluate overloads to support different input types; MaxCompute selects the matching overload at call time. |
| setup method | Optional | void setup(ExecutionContext ctx): called once before the first evaluate invocation. Use it to initialize shared resources. |
| close method | Optional | void close(): called after the last evaluate invocation. Use it to clean up resources such as open files. |
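The following plain-Java sketch illustrates how setup, overloaded evaluate methods, and close fit together. It is a hypothetical stand-in, not MaxCompute's runtime: PrefixUdfSketch does not extend com.aliyun.odps.udf.UDF, its setup() takes no ExecutionContext, and FakeRuntime only mimics the call order described in the table (setup once, evaluate once per row, close after the last row). These simplifications let the sketch compile without the MaxCompute SDK. The class names and the id_ prefix are illustrative only.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical stand-in for a UDF. A real MaxCompute UDF would extend
// com.aliyun.odps.udf.UDF and declare setup(ExecutionContext ctx); both are
// omitted so this sketch compiles without the MaxCompute SDK.
class PrefixUdfSketch {
    private String prefix;                         // shared state initialized once in setup()
    final List<String> calls = new ArrayList<>();  // records call order for the demo

    void setup() {
        prefix = "id_";                            // e.g. read a config value or open a file
        calls.add("setup");
    }

    // Overload for STRING arguments: (String) -> String
    public String evaluate(String s) {
        calls.add("evaluate(String)");
        return s == null ? null : prefix + s;      // SQL NULL in, SQL NULL out
    }

    // Overload for BIGINT arguments: (Long) -> String
    public String evaluate(Long n) {
        calls.add("evaluate(Long)");
        return n == null ? null : prefix + n;
    }

    void close() {
        prefix = null;                             // release what setup() acquired
        calls.add("close");
    }
}

// Hypothetical driver that mimics the documented order:
// setup() once, evaluate() once per row, close() after the last row.
class FakeRuntime {
    static List<String> runOverStrings(PrefixUdfSketch udf, List<String> rows) {
        List<String> out = new ArrayList<>();
        udf.setup();
        try {
            for (String row : rows) {
                out.add(udf.evaluate(row));
            }
        } finally {
            udf.close();
        }
        return out;
    }
}
```

In a registered UDF, the overload that is invoked would be chosen from the SQL argument types. For example, a BIGINT argument would be expected to match evaluate(Long) and a STRING argument evaluate(String).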

Sample code

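The following examples show two UDFs: a Lower UDF that converts a string to lowercase, written with Java data types, and a MyConcat UDF that concatenates two strings, written with Java writable data types.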

Using Java data types:

```java
// Package: org.alidata.odps.udf.examples
package org.alidata.odps.udf.examples;

// Base class that every Java UDF extends
import com.aliyun.odps.udf.UDF;

public final class Lower extends UDF {
    // evaluate defines the SQL signature: (String) -> String
    public String evaluate(String s) {
        if (s == null) {
            return null;
        }
        return s.toLowerCase();
    }
}
```

Using Java writable data types:

```java
// Package: com.aliyun.odps.udf.example
package com.aliyun.odps.udf.example;

import com.aliyun.odps.io.Text; // Writable type for STRING
import com.aliyun.odps.udf.UDF;

public class MyConcat extends UDF {
    // Output object reused across evaluate calls instead of allocating a new Text per row
    private Text ret = new Text();

    // evaluate defines the SQL signature: (Text, Text) -> Text
    public Text evaluate(Text a, Text b) {
        if (a == null || b == null) {
            return null;
        }
        ret.clear();
        ret.append(a.getBytes(), 0, a.getLength());
        ret.append(b.getBytes(), 0, b.getLength());
        return ret;
    }
}
```

MaxCompute also supports Hive UDFs whose Hive version is compatible with MaxCompute. For details, see Hive UDF compatibility.

Constraints

Duplicate class names across JAR files

JAR files for different UDFs must not contain classes with the same fully qualified name but different logic. For example, if udf1.jar and udf2.jar both contain com.aliyun.UserFunction.class with different implementations, calling both UDFs in the same SQL statement causes MaxCompute to load only one version — resulting in unexpected behavior or a compilation error.

Object types, not primitives

The input parameters and return value of an evaluate method must use Java object types (e.g., String, Long, Integer). Primitive types such as int or boolean are not supported.
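The plain-Java sketch below shows why a primitive parameter breaks. A null Long stands in for SQL NULL. When that value is passed where a long is expected, Java's auto-unboxing throws NullPointerException. A Long parameter, by contrast, can receive null and return it. The class names are hypothetical, and the classes deliberately do not extend com.aliyun.odps.udf.UDF. The sketch shows ordinary Java behavior, not how MaxCompute itself reports an unsupported signature.

```java
// Hypothetical classes illustrating why evaluate signatures use boxed types.
// Neither extends com.aliyun.odps.udf.UDF, so the sketch compiles without the SDK.
class AddOneBoxed {
    // (Long) -> Long: a SQL NULL arrives as Java null and is passed through.
    public Long evaluate(Long n) {
        if (n == null) {
            return null;
        }
        return n + 1;
    }
}

class AddOnePrimitive {
    // Not a supported UDF signature: a primitive long cannot hold null.
    public long evaluate(long n) {
        return n + 1;
    }
}

class NullDemo {
    // Passes a possibly-null Long (standing in for SQL NULL) to the primitive
    // version. Auto-unboxing a null Long throws NullPointerException.
    static String callPrimitive(Long sqlValue) {
        try {
            return String.valueOf(new AddOnePrimitive().evaluate(sqlValue));
        } catch (NullPointerException e) {
            return "NullPointerException";
        }
    }
}
```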

NULL handling

MaxCompute SQL NULL values map to Java null. Java primitive types (int, boolean, float, and so on) cannot hold null, so they cannot represent SQL NULL. This is why evaluate signatures must use the boxed equivalents (Integer, Boolean, Float, and so on). Always check for null explicitly before processing an argument.

```java
// Correct: uses String (object type) and handles null
public String evaluate(String s) {
    if (s == null) {
        return null;
    }
    return s.toLowerCase();
}

// Wrong: uses primitive int, which cannot represent SQL NULL
// public int evaluate(int n) { ... }
```

Internet access

MaxCompute does not allow UDFs to access the Internet by default. To enable Internet access, fill in the network connection application form based on your business requirements and submit it. After your application is approved, the MaxCompute technical support team helps you establish the connection. For details, see Network connection process.

VPC access

MaxCompute does not allow UDFs to access resources in virtual private clouds (VPCs) by default. To access VPC resources from a UDF, establish a network connection between MaxCompute and the VPC. For details, see Use UDFs to access resources in VPCs.

Table read restrictions

UDFs, user-defined aggregate functions (UDAFs), and user-defined table-valued functions (UDTFs) cannot read data from the following table types:

- Tables on which schema evolution is performed
- Tables that contain complex data types
- Tables that contain JSON data types
- Transactional tables

Data type mappings

Write Java UDFs based on the following type mappings to ensure consistency between MaxCompute SQL types and Java types.

MaxCompute V2.0 and later support additional data types, including complex types such as ARRAY, MAP, and STRUCT. For details on data type editions, see Data type editions.

| MaxCompute type | Java type | Java writable type | Notes |
|---|---|---|---|
| TINYINT | java.lang.Byte | ByteWritable | |
| SMALLINT | java.lang.Short | ShortWritable | |
| INT | java.lang.Integer | IntWritable | |
| BIGINT | java.lang.Long | LongWritable | |
| FLOAT | java.lang.Float | FloatWritable | |
| DOUBLE | java.lang.Double | DoubleWritable | |
| DECIMAL | java.math.BigDecimal | BigDecimalWritable | |
| BOOLEAN | java.lang.Boolean | BooleanWritable | |
| STRING | java.lang.String | Text | |
| VARCHAR | com.aliyun.odps.data.Varchar | VarcharWritable | |
| BINARY | com.aliyun.odps.data.Binary | BytesWritable | |
| DATE | java.sql.Date | DateWritable | |
| DATETIME | java.util.Date | DatetimeWritable | |
| TIMESTAMP | java.sql.Timestamp | TimestampWritable | |
| INTERVAL_YEAR_MONTH | N/A | IntervalYearMonthWritable | No Java type equivalent; use the writable type only |
| INTERVAL_DAY_TIME | N/A | IntervalDayTimeWritable | No Java type equivalent; use the writable type only |
| ARRAY | java.util.List | N/A | |
| MAP | java.util.Map | N/A | |
| STRUCT | com.aliyun.odps.data.Struct | N/A | Requires the @Resolve annotation; field names and types cannot be retrieved via reflection |
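To illustrate the mapping table, the hypothetical class below declares evaluate overloads whose parameter types follow the table's mappings:

- ARRAY<STRING> maps to java.util.List<String>.
- MAP<STRING,STRING> maps to java.util.Map<String, String>.
- DECIMAL maps to java.math.BigDecimal.

This is a sketch under assumptions. The class does not extend com.aliyun.odps.udf.UDF, so it compiles without the SDK. The behaviors (counting non-null elements, looking up a key, adding two decimals) are chosen only for demonstration. STRUCT is left out because it also requires the @Resolve annotation.

```java
import java.math.BigDecimal;
import java.util.List;
import java.util.Map;

// Hypothetical evaluate overloads following the type mapping table.
// A real UDF would extend com.aliyun.odps.udf.UDF; that is omitted here.
class TypeMappingSketch {
    // ARRAY<STRING> -> BIGINT: counts the non-null elements.
    public Long evaluate(List<String> items) {
        if (items == null) {
            return null;
        }
        long count = 0;
        for (String item : items) {
            if (item != null) {
                count++;
            }
        }
        return count;
    }

    // MAP<STRING,STRING>, STRING -> STRING: returns the value for key, or null.
    public String evaluate(Map<String, String> map, String key) {
        if (map == null || key == null) {
            return null;
        }
        return map.get(key);
    }

    // DECIMAL, DECIMAL -> DECIMAL: exact decimal addition.
    public BigDecimal evaluate(BigDecimal a, BigDecimal b) {
        if (a == null || b == null) {
            return null;
        }
        return a.add(b);
    }
}
```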

Develop a Java UDF

All three tools follow the same four-step workflow: prepare your environment, write UDF code, upload the JAR file and register the UDF, then debug.

Use MaxCompute Studio

This example creates a Lower UDF that converts strings to lowercase.

Step 1: Prepare your environment

Install MaxCompute Studio and connect it to your MaxCompute project.

Step 2: Write UDF code

-

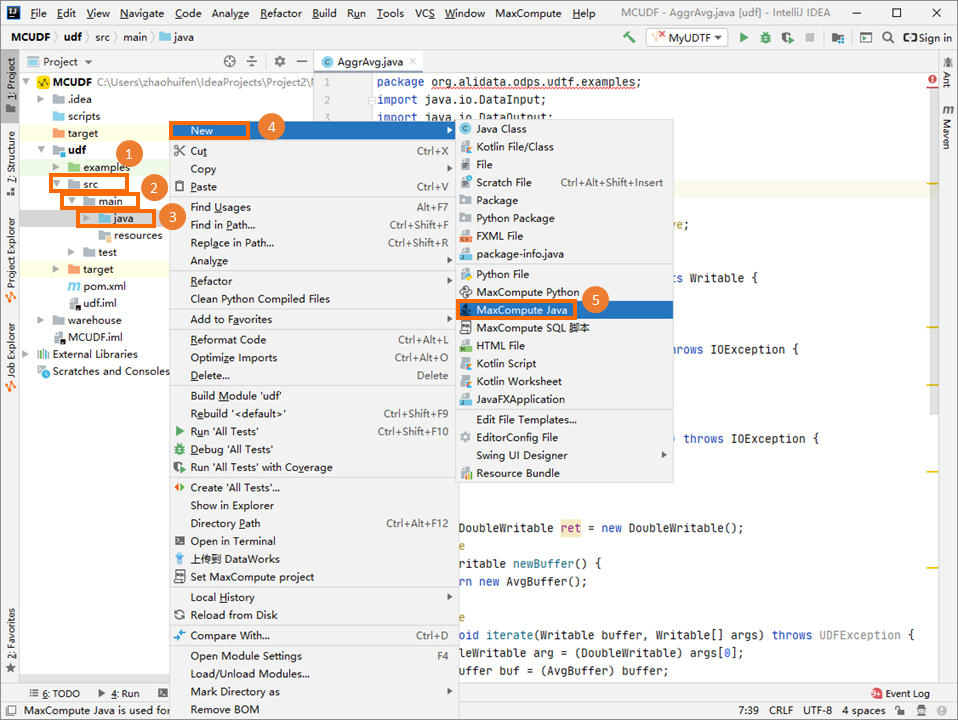

In the Project tab, navigate to src > main > java, right-click java, and choose New > MaxCompute Java.

-

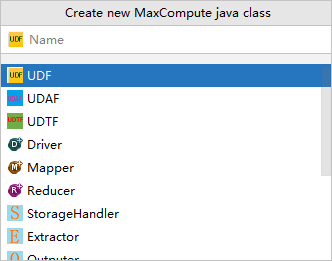

In the Create new MaxCompute java class dialog box, click UDF, enter a class name in the Name field, and press Enter. Enter the name in

PackageName.ClassNameformat. The system creates the package automatically. This example uses the class name Lower.

-

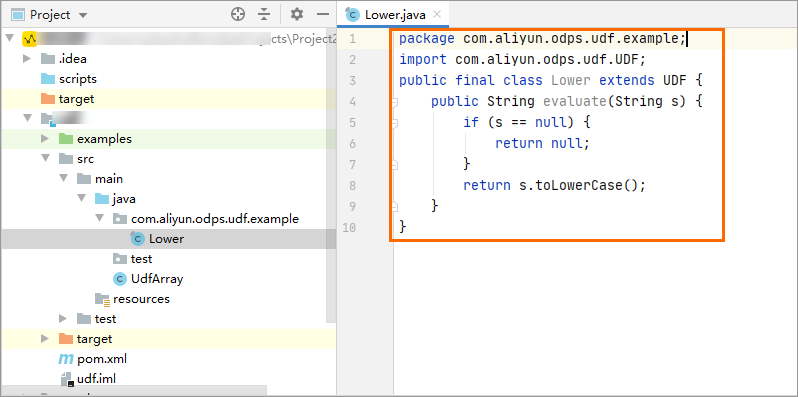

Write your UDF code in the editor.

To debug the UDF locally before uploading, see Develop and debug UDFs.

package com.aliyun.odps.udf.example; import com.aliyun.odps.udf.UDF; public final class Lower extends UDF { public String evaluate(String s) { if (s == null) { return null; } return s.toLowerCase(); } }
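Because evaluate is an ordinary Java method, you can check its logic in plain Java before deploying the JAR. The sketch below moves the conversion into a hypothetical helper, LowerLogic, so that it can run without the MaxCompute SDK. The helper passes Locale.ROOT explicitly. String.toLowerCase() with no argument follows the JVM's default locale; in a Turkish locale, for example, "I" becomes the dotless "ı". Pinning the locale is a design choice the sample above does not make. Adopt it only if you need results that do not depend on the JVM's locale.

```java
import java.util.Locale;

// Hypothetical helper holding the Lower UDF's conversion logic so it can be
// checked without the MaxCompute SDK. Lower.evaluate(s) could delegate to it.
final class LowerLogic {
    private LowerLogic() {}

    static String lower(String s) {
        if (s == null) {
            return null;                 // SQL NULL in, SQL NULL out
        }
        // Locale.ROOT gives the same result regardless of the JVM's default locale.
        return s.toLowerCase(Locale.ROOT);
    }
}
```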

Step 3: Upload the JAR file and register the UDF

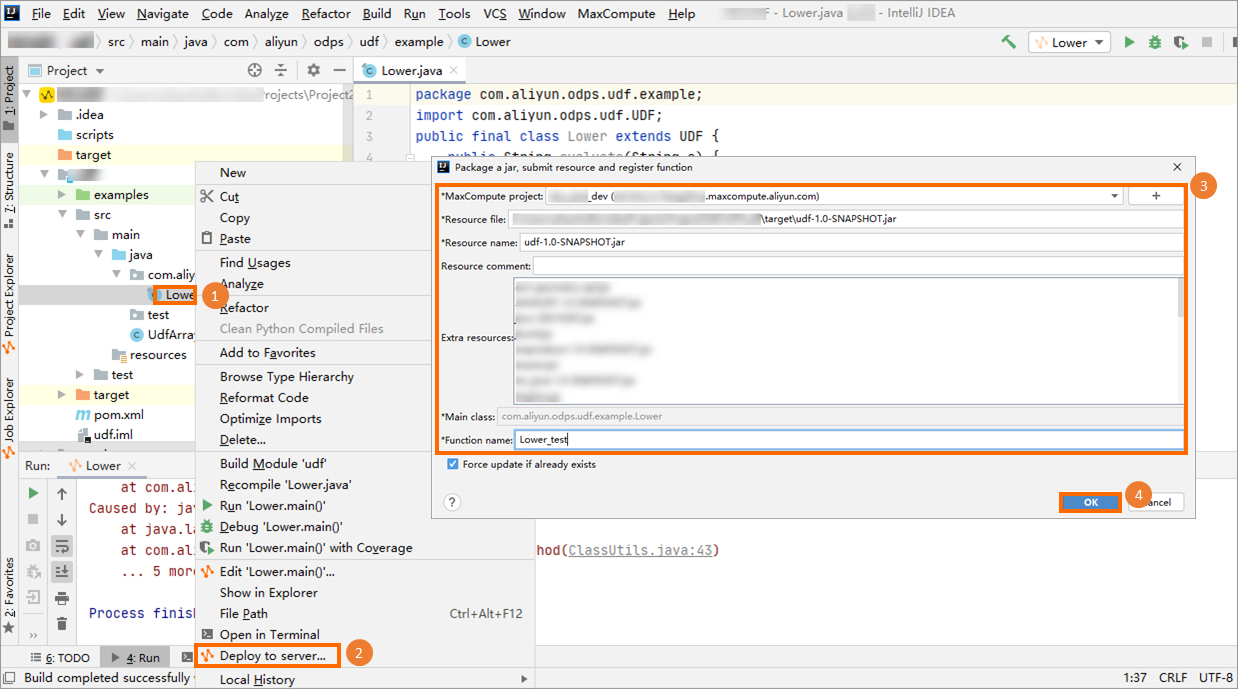

Right-click the JAR file and select Deploy to server.... In the Package a jar, submit resource and register function dialog box, configure the following parameters and click OK.

| Parameter | Description |
|---|---|
| MaxCompute project | The target project. Defaults to the project connected in Step 1. |
| Resource file | Path of the resource file the UDF depends on. Keep the default value. |
| Resource name | Name of the resource. Keep the default value. |
| Function name | The SQL function name used to call the UDF. Example: Lower_test. |

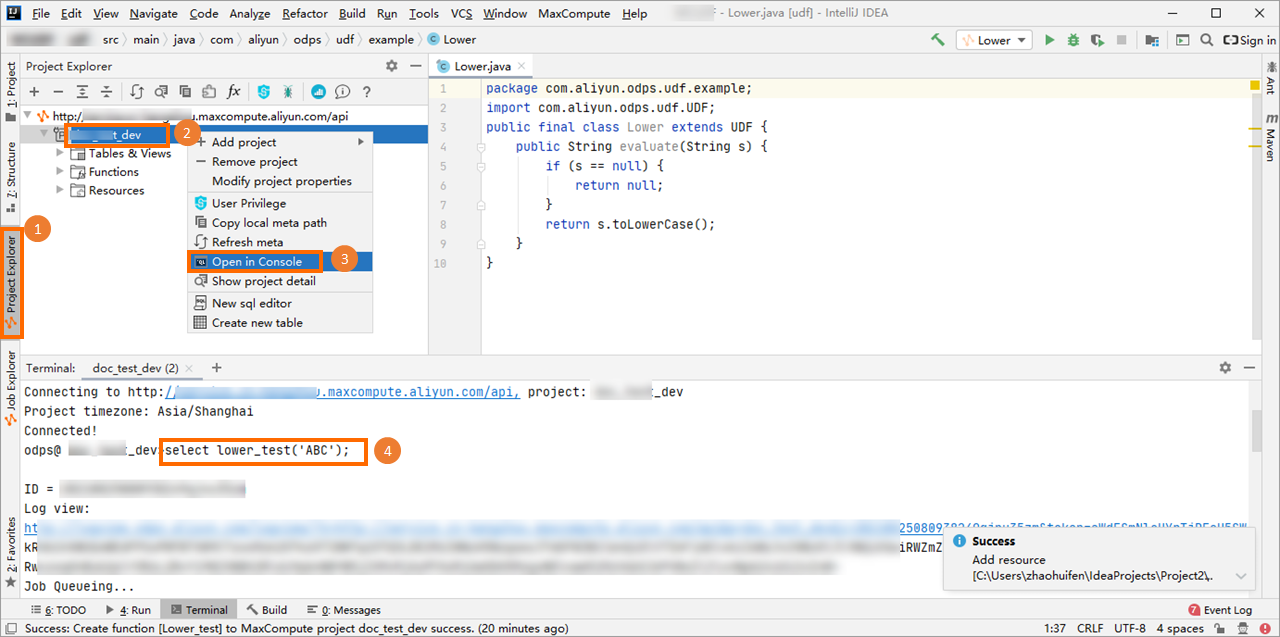

Step 4: Debug the UDF

In the left-side navigation pane, click the Project Explorer tab. Right-click your MaxCompute project, select Open Console, and run the following statement:

```sql
select lower_test('ABC');
```

Expected output:

```
+-----+
| _c0 |
+-----+
| abc |
+-----+
```

Use DataWorks

Step 1: Prepare your environment

Activate DataWorks and associate a DataWorks workspace with your MaxCompute project. For details, see DataWorks.

Step 2: Write UDF code

Write your UDF in a Java development tool and package it as a JAR file. Sample code:

```java
package com.aliyun.odps.udf.example;

import com.aliyun.odps.udf.UDF;

public final class Lower extends UDF {
    public String evaluate(String s) {
        if (s == null) {
            return null;
        }
        return s.toLowerCase();
    }
}
```

Step 3: Upload the JAR file and register the UDF

Upload the JAR file as a resource and register the UDF in the DataWorks console.

Step 4: Debug the UDF

Create an ODPS SQL node in the DataWorks console and run the following SQL statement to verify the UDF. For details on creating an ODPS SQL node, see Create an ODPS SQL node.

```sql
select lower_test('ABC');
```

Expected output:

```
+-----+
| _c0 |
+-----+
| abc |
+-----+
```

Use the MaxCompute client (odpscmd)

Step 1: Prepare your environment

Download the MaxCompute client installation package from GitHub, install the client, and configure the config file to connect to your MaxCompute project. For details, see MaxCompute client (odpscmd).

Step 2: Write UDF code

Write your UDF in a Java development tool and package it as a JAR file. Sample code:

```java
package com.aliyun.odps.udf.example;

import com.aliyun.odps.udf.UDF;

public final class Lower extends UDF {
    public String evaluate(String s) {
        if (s == null) {
            return null;
        }
        return s.toLowerCase();
    }
}
```

Step 3: Upload the JAR file and register the UDF

In odpscmd, complete the following operations:

- Upload the JAR file as a resource. For details, see ADD JAR.
- Register the UDF. For details, see CREATE FUNCTION.

Step 4: Debug the UDF

Run the following SQL statement to verify the UDF:

```sql
select lower_test('ABC');
```

Expected output:

```
+-----+
| _c0 |
+-----+
| abc |
+-----+
```

Call a UDF

After registering a UDF, call it from MaxCompute SQL in one of the following ways:

- Within the same project: call the UDF the same way you call a built-in function.
- Across projects: to use a UDF from project B in project A, use the cross-project syntax:

  ```sql
  select B:udf_in_other_project(arg0, arg1) as res from table_t;
  ```

  For details on cross-project sharing, see Cross-project resource access based on packages.

Hive UDF compatibility

If your MaxCompute project uses the MaxCompute V2.0 data type edition and has Hive UDF support enabled, you can use Hive UDFs directly, provided that their Hive version is compatible with MaxCompute.

The compatible Hive version is 2.1.0, which corresponds to Hadoop 2.7.2. If your UDF was compiled against a different Hive or Hadoop version, recompile the JAR file using Hive 2.1.0 or Hadoop 2.7.2.

For a complete walkthrough, see Write a Hive UDF in Java.

What's next

Explore more Java UDF development examples: