EMR Serverless Spark supports interactive development through notebooks. This guide walks you through creating a notebook, loading sample data, running PySpark code, visualizing results, and publishing your first notebook.

Prerequisites

Before you begin, ensure that you have:

-

An Alibaba Cloud account — see Alibaba Cloud account registration

-

The required roles granted to your account — see Grant roles to an Alibaba Cloud account

-

A workspace created — see Create a workspace

-

A notebook session instance running — see Manage notebook sessions

Step 1: Prepare a test file

Download employee.csv to use in this guide. The file contains employee names, departments, and salaries.
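If you cannot download the file, the following minimal sketch writes a stand-in employee.csv for trying out the steps. The column names `name`, `department`, and `salary`, the helper name `write_sample_csv`, and every row are hypothetical placeholders, not the contents of the official file. The PySpark code in Step 3 only relies on a header row that contains `department` and `salary`.

```python
import csv
from pathlib import Path

# Hypothetical placeholder rows; these are NOT the contents of the official employee.csv.
SAMPLE_ROWS = [
    {"name": "Alice", "department": "Engineering", "salary": 12000},
    {"name": "Bob", "department": "Engineering", "salary": 11000},
    {"name": "Carol", "department": "Sales", "salary": 9000},
    {"name": "Dave", "department": "Finance", "salary": 10000},
]


def write_sample_csv(path: str = "employee.csv") -> Path:
    """Write a comma-delimited CSV with a header row, matching the reader options used in Step 3."""
    out = Path(path)
    with out.open("w", newline="", encoding="utf-8") as f:
        writer = csv.DictWriter(f, fieldnames=["name", "department", "salary"])
        writer.writeheader()
        writer.writerows(SAMPLE_ROWS)
    return out
```

Calling `write_sample_csv()` creates employee.csv in the current directory, which you can then upload in Step 2.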

Step 2: Upload the test file to OSS

In this guide, the notebook reads the test data from Object Storage Service (OSS). Upload employee.csv to an OSS bucket so that the notebook can access it. See Upload files for instructions.

Note the OSS path of the uploaded file — you will use it in the next step.
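Uploading through the console as described in Upload files is sufficient. If you prefer to script the upload, the sketch below assumes the OSS Python SDK (`oss2`, installed with `pip install oss2`) is available on your machine. The helper names `upload_to_oss` and `oss_uri` are hypothetical, and the bucket name, object key, endpoint, and credentials are values you supply. The helper returns the `oss://bucket/key` form of the path, the same form used in the PySpark code in Step 3.

```python
def oss_uri(bucket_name: str, object_key: str) -> str:
    """Return the oss://bucket/key path to pass to spark.read...csv()."""
    key = object_key.lstrip("/")
    if not bucket_name or not key:
        raise ValueError("bucket_name and object_key must be non-empty")
    return f"oss://{bucket_name}/{key}"


def upload_to_oss(local_path: str, bucket_name: str, object_key: str,
                  endpoint: str, access_key_id: str, access_key_secret: str) -> str:
    """Upload a local file with the oss2 SDK and return its oss:// path (hypothetical helper)."""
    import oss2  # assumption: the OSS Python SDK is installed locally

    auth = oss2.Auth(access_key_id, access_key_secret)
    bucket = oss2.Bucket(auth, endpoint, bucket_name)
    bucket.put_object_from_file(object_key.lstrip("/"), local_path)
    return oss_uri(bucket_name, object_key)
```

Avoid hardcoding credentials in the script; pass them in from a secure source instead.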

Step 3: Create and run a notebook

Create a notebook

-

On the EMR Serverless Spark page, click Development in the left navigation pane.

-

On the Development tab, click the icon.

-

In the dialog box, enter a name, select Interactive Analytic > Notebook as the type, and then click OK.

Attach a notebook session

In the upper-right corner, select a running notebook session instance from the drop-down list.

Multiple notebooks can share a single notebook session instance, so several notebooks can use the same session resources at the same time without a separate session for each. To create a new session instead, select Create Notebook Session from the drop-down list. For details, see Manage notebook sessions.

Run a PySpark job

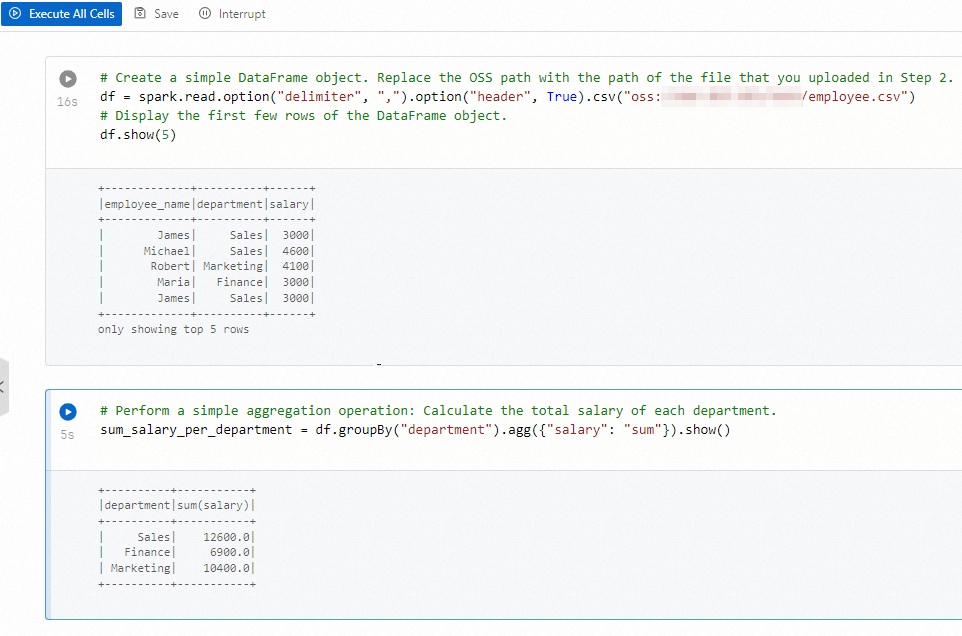

The following code reads the CSV file from OSS, displays the first five rows, and calculates the total salary per department.

-

Copy the following code into the Python cell of the notebook. Replace `oss://path/to/file` with the OSS path of the file you uploaded in Step 2.

```python
# Read the CSV file from OSS; the first row contains the column names
df = spark.read.option("delimiter", ",").option("header", True).csv("oss://path/to/file")

# Display the first five rows
df.show(5)

# Calculate total salary by department, then display the result
sum_salary_per_department = df.groupBy("department").agg({"salary": "sum"})
sum_salary_per_department.show()
```

-

Click Execute All Cells to run the notebook. To run a single cell, click the icon next to that cell.

-

(Optional) To inspect job execution, hover over the icon for the current session in the session drop-down list and click Spark UI. The Spark Jobs page opens, where you can view detailed Spark job information.

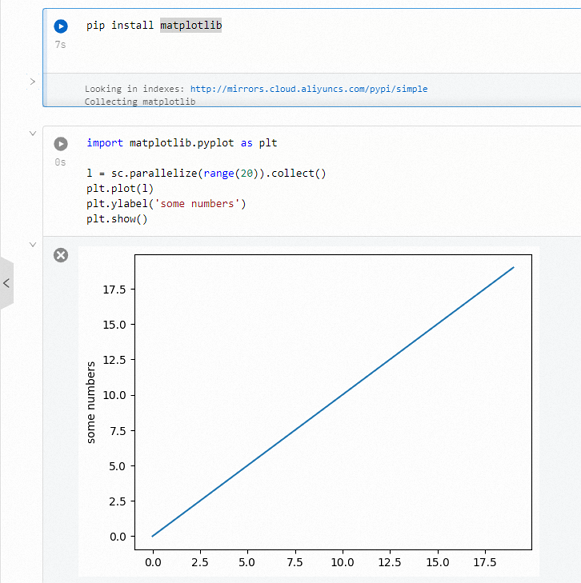
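Because the test file is small, you can sanity-check the Spark output by recomputing the same totals locally. The sketch below uses only the Python standard library. `salary_totals` is a hypothetical helper name, and it assumes the `department` and `salary` header names used in the PySpark code. Unlike Spark, which ignores null values when summing, this helper raises an error if a salary cell is empty.

```python
from __future__ import annotations

import csv
from collections import defaultdict
from decimal import Decimal


def salary_totals(path: str) -> dict[str, Decimal]:
    """Sum the salary column per department, like df.groupBy("department").agg({"salary": "sum"})."""
    totals: dict[str, Decimal] = defaultdict(Decimal)
    with open(path, newline="", encoding="utf-8") as f:
        # Same delimiter and header handling as the Spark reader options above
        for row in csv.DictReader(f, delimiter=","):
            totals[row["department"]] += Decimal(row["salary"])
    return dict(totals)
```

Spark returns the sums as floating-point values, so compare the two results up to rounding. `Decimal` keeps the local totals exact.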

Visualize data with third-party libraries

Notebook sessions come with matplotlib, numpy, and pandas pre-installed. The following example uses matplotlib to plot a simple line chart.

For other third-party libraries, see Use third-party Python libraries in a notebook.

-

Copy the following code into a cell.

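```python
import matplotlib.pyplot as plt

# Distribute the numbers 0-19 with the session's SparkContext (sc), then collect them back to the driver as a list
numbers = sc.parallelize(range(20)).collect()

# Plot the values as a line chart
plt.plot(numbers)
plt.ylabel('some numbers')
plt.show()
```

-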

Click Execute All Cells to run the notebook. To run a single cell, click the icon next to that cell.
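The line chart above plots generated numbers. To chart the per-department totals from the PySpark job instead, the following sketch draws a bar chart with matplotlib from a small list of (department, total) pairs. `to_bar_series` and `plot_department_totals` are hypothetical helper names, and sorting by total is a presentation choice rather than something the notebook requires.

```python
def to_bar_series(totals):
    """Sort (department, total) pairs by total, descending, into parallel label and value lists."""
    ordered = sorted(totals, key=lambda pair: pair[1], reverse=True)
    labels = [str(dept) for dept, _ in ordered]
    values = [float(total) for _, total in ordered]
    return labels, values


def plot_department_totals(totals):
    """Draw a bar chart of total salary per department."""
    import matplotlib.pyplot as plt  # pre-installed in notebook sessions

    labels, values = to_bar_series(totals)
    fig, ax = plt.subplots()
    ax.bar(labels, values)
    ax.set_xlabel("department")
    ax.set_ylabel("total salary")
    plt.show()
```

In the notebook, you could build the pairs from the aggregated DataFrame, for example `[(r["department"], r["sum(salary)"]) for r in df.groupBy("department").agg({"salary": "sum"}).collect()]`. `sum(salary)` is the column name Spark generates for a dictionary-style `agg`, and `collect()` is reasonable here only because the aggregated result is small.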

Step 4: Publish the notebook

After the notebook finishes running, click Publish in the upper-right corner. In the Publish dialog box, configure the parameters and click OK to save the notebook as a new version.

What's next

-

Manage notebook sessions — stop your session to avoid unnecessary charges, or adjust session resources.

-

Use third-party Python libraries in a notebook — install additional libraries beyond the pre-installed matplotlib, numpy, and pandas.