A notebook session is a Spark session in an EMR Serverless Spark workspace. You can use notebook sessions for interactive notebook development. After you create a notebook session, you can select this session for any notebook in the workspace.

Create a notebook session

Prerequisites

Before you begin, ensure that you have:

An EMR Serverless Spark workspace

A resource queue with enough concurrency for the session (a development queue, or a queue shared by development and production environments)

Procedure

Go to the Notebook Session page.

Log on to the EMR console.

In the left-side navigation pane, choose EMR Serverless > Spark.

On the Spark page, click the name of the target workspace.

On the EMR Serverless Spark page, choose Sessions in the left-side navigation pane.

Click the Notebook Session tab.

Click Create Notebook Session.

Configure the session parameters described in the following sections, then click Create.

Make sure that the maximum concurrency of the selected resource queue is greater than or equal to the resource size required by the notebook session. The console displays the required value.

Basic settings

| Parameter | Description |
|---|---|
| Name | A name for the notebook session. The name must be 1 to 64 characters in length and can contain letters, digits, hyphens (-), underscores (_), and spaces. |
| Resource Queue | The queue used to deploy the session. Select a development queue or a queue shared by development and production environments. For details, see Manage resource queues. |
| Engine Version | The Spark engine version for this session. For details, see Engine versions. |
| Use Fusion Acceleration | Enables Fusion Engine, which accelerates Spark workloads and reduces task costs. For billing details, see Billing. |
| Automatic Stop | Enabled by default, with a default idle timeout of 120 minutes. You can set a custom idle timeout. A session that stays inactive for that period stops automatically. |
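
The Name constraint and the Automatic Stop rule can be sketched as two small Python helpers. This is a minimal sketch, assuming that "letters" means ASCII letters and that the idle period is measured from the last activity on the session. The functions `is_valid_session_name` and `should_auto_stop` are hypothetical and are not part of any EMR API.

```python
import re
from datetime import datetime, timedelta

# Assumption: "letters" means ASCII letters; the console may accept more.
_NAME_PATTERN = re.compile(r"[A-Za-z0-9_\- ]{1,64}")

# Documented default idle timeout for Automatic Stop.
DEFAULT_IDLE_TIMEOUT = timedelta(minutes=120)


def is_valid_session_name(name: str) -> bool:
    """Return True if name is 1-64 letters, digits, hyphens, underscores, or spaces."""
    return _NAME_PATTERN.fullmatch(name) is not None


def should_auto_stop(last_activity: datetime, now: datetime,
                     idle_timeout: timedelta = DEFAULT_IDLE_TIMEOUT) -> bool:
    """Hypothetical check: a session stops once it has been idle for idle_timeout."""
    return now - last_activity >= idle_timeout


if __name__ == "__main__":
    print(is_valid_session_name("dev session_01"))  # True
    print(is_valid_session_name("bad/name"))        # False
    start = datetime(2024, 1, 1, 9, 0)
    print(should_auto_stop(start, start + timedelta(minutes=119)))  # False
    print(should_auto_stop(start, start + timedelta(minutes=120)))  # True
```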

Resource Configuration

Configure the Spark driver and executor resources.

| Parameter | Description | Default |
|---|---|---|
| spark.driver.cores | CPU cores for the driver process. Valid values: 1 to 32. | 2 cores |
| spark.driver.memory | Memory for the driver process. Valid values: 2 to 128 GB. | 8 GB |
| spark.executor.cores | CPU cores per executor process. Valid values: 1 to 32. | 1 core |
| spark.executor.memory | Memory per executor process. Valid values: 1 to 64 GB. | 4 GB |
| spark.executor.instances | Number of executors to allocate. | 2 |
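
The valid ranges and defaults in the table can be checked before you submit the form. The following Python sketch mirrors only the documented ranges and assumes that memory values are given as whole GB numbers. The `validate_resources` helper is hypothetical; the console performs its own validation. No range is documented for spark.executor.instances, so the sketch does not check it.

```python
# Documented valid ranges (inclusive) for the Resource Configuration parameters.
VALID_RANGES = {
    "spark.driver.cores": (1, 32),
    "spark.driver.memory": (2, 128),   # GB
    "spark.executor.cores": (1, 32),
    "spark.executor.memory": (1, 64),  # GB
}

# Documented defaults (memory in GB).
DEFAULTS = {
    "spark.driver.cores": 2,
    "spark.driver.memory": 8,
    "spark.executor.cores": 1,
    "spark.executor.memory": 4,
    "spark.executor.instances": 2,
}


def validate_resources(overrides: dict) -> list:
    """Merge overrides onto the defaults and return a list of range violations."""
    config = {**DEFAULTS, **overrides}
    errors = []
    for key, (low, high) in VALID_RANGES.items():
        value = config[key]
        if not low <= value <= high:
            errors.append(f"{key}={value} is outside {low}-{high}")
    return errors


if __name__ == "__main__":
    print(validate_resources({}))                          # []
    print(validate_resources({"spark.driver.memory": 1}))  # ['spark.driver.memory=1 is outside 2-128']
```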

Dynamic resource allocation

Disabled by default. Enable this feature to let Spark scale the number of executors automatically based on workload demand.

| Parameter | Description | Default |
|---|---|---|
| Minimum Number of Executors | The fewest executors the session can scale down to. | 2 |
| Maximum Number of Executors | The most executors the session can scale up to. If spark.executor.instances is not set, this defaults to 10. | 10 |
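
In open-source Apache Spark, dynamic allocation is controlled by the `spark.dynamicAllocation.enabled`, `spark.dynamicAllocation.minExecutors`, and `spark.dynamicAllocation.maxExecutors` properties. The following Python sketch assumes that the console settings correspond to these properties; this page does not confirm that mapping. It also shows, in simplified form, how the executor count stays within the configured bounds. Both helper names are hypothetical.

```python
def dynamic_allocation_conf(min_executors: int = 2, max_executors: int = 10) -> dict:
    """Build open-source Spark dynamic allocation properties (assumed mapping)."""
    if min_executors > max_executors:
        raise ValueError("min_executors must not exceed max_executors")
    return {
        "spark.dynamicAllocation.enabled": "true",
        "spark.dynamicAllocation.minExecutors": str(min_executors),
        "spark.dynamicAllocation.maxExecutors": str(max_executors),
    }


def target_executors(requested: int, min_executors: int = 2, max_executors: int = 10) -> int:
    """Simplified model: clamp the executors the workload asks for to [min, max]."""
    return max(min_executors, min(requested, max_executors))


if __name__ == "__main__":
    print(dynamic_allocation_conf())
    print(target_executors(0))   # 2: an idle session keeps the minimum
    print(target_executors(50))  # 10: a heavy workload is capped at the maximum
```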

More

The following parameters are located under the More collapsible section of the creation form.

| Parameter | Description |
|---|---|
| Runtime Environment | A custom environment created on the Runtime Environments page. The system pre-installs the libraries defined in the selected environment when the session starts. Only environments in the Ready state are available. |
| Network Connection | Select an existing network connection to access data sources or services in a VPC. For setup instructions, see Establish network connectivity between EMR Serverless Spark and other VPCs. |
| Mount Integrated File Directory | Disabled by default. To use this feature, first add a file directory on the Integrated File Directory tab of the Files page. For details, see Integrated file directory. When enabled, the system mounts the directory to the session driver so you can read and write files directly from the notebook. For resource consumption details, see Resource cost of mounting. |
| Mount to Executor | When enabled, the system also mounts the integrated file directory to executors. The percentage of executor resources consumed varies based on file usage in the mounted directory. |

If you mount an integrated NAS file directory, configure a network connection. The VPC of the network connection must match the VPC where the NAS mount target resides.

Resource cost of mounting

Mounting the integrated file directory consumes driver compute resources. For each resource type (CPU and memory), the actual cost is the greater of these two values:

Fixed resources: 0.3 CPU core + 1 GB memory

Dynamic resources: 10% of the spark.driver resources (0.1 x spark.driver cores and 0.1 x spark.driver memory)

Example: If spark.driver is configured with 4 CPU cores and 8 GB of memory, the dynamic resources are 0.4 CPU core + 0.8 GB memory. The actual consumed resources are max(0.3, 0.4) CPU core + max(1 GB, 0.8 GB) memory = 0.4 CPU core + 1 GB memory.
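
The cost rule above compares CPU and memory independently, as the example shows. A minimal Python sketch of that calculation follows; the `mount_cost` function is hypothetical, and the constants are the documented fixed amounts and the 10% ratio.

```python
FIXED_CORES = 0.3        # documented fixed mount cost, CPU cores
FIXED_MEMORY_GB = 1.0    # documented fixed mount cost, GB
DYNAMIC_RATIO = 0.1      # documented dynamic cost: 10% of driver resources


def mount_cost(driver_cores: float, driver_memory_gb: float) -> tuple:
    """Return (cores, memory_gb) consumed on the driver by the mount.

    Each dimension takes the larger of the fixed amount and 10% of the
    driver's allocation, independently of the other dimension.
    """
    cores = max(FIXED_CORES, DYNAMIC_RATIO * driver_cores)
    memory_gb = max(FIXED_MEMORY_GB, DYNAMIC_RATIO * driver_memory_gb)
    return cores, memory_gb


if __name__ == "__main__":
    print(mount_cost(4, 8))  # (0.4, 1.0), matching the example above
```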

By default, the directory is mounted only to the driver. To also mount it to executors, enable Mount to Executor.

More Memory Configurations

| Parameter | Description | Default |
|---|---|---|
| spark.driver.memoryOverhead | Non-heap memory for each driver. If not set, Spark calculates this as max(384 MB, 10% x spark.driver.memory). | Auto-calculated |
| spark.executor.memoryOverhead | Non-heap memory for each executor. If not set, Spark calculates this as max(384 MB, 10% x spark.executor.memory). | Auto-calculated |
| spark.memory.offHeap.size | Off-heap memory for Spark. Takes effect only when spark.memory.offHeap.enabled is set to true. With Fusion Engine, off-heap memory is enabled by default. | 1 GB |
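
The default overhead formula in the table can be written as a small Python function. This sketch assumes memory is given in MB and that the 10% value is truncated to a whole MB, as open-source Spark does; `default_memory_overhead_mb` is a hypothetical name.

```python
OVERHEAD_MIN_MB = 384    # documented lower bound
OVERHEAD_FACTOR = 0.10   # documented 10% factor


def default_memory_overhead_mb(memory_mb: int) -> int:
    """Default memoryOverhead when unset: max(384 MB, 10% of the heap memory).

    The 10% value is truncated to a whole MB (assumption based on open-source Spark).
    """
    return max(OVERHEAD_MIN_MB, int(OVERHEAD_FACTOR * memory_mb))


if __name__ == "__main__":
    print(default_memory_overhead_mb(8 * 1024))  # 819: 10% of 8 GB exceeds 384 MB
    print(default_memory_overhead_mb(2 * 1024))  # 384: 10% of 2 GB is below the minimum
```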

Custom Configuration

| Parameter | Description |
|---|---|
| Spark configuration | Additional Spark configuration entries. In each entry, separate the configuration key from its value with a space. Example: spark.sql.catalog.paimon.metastore dlf. |
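
A minimal Python sketch of how such entries could be parsed into key-value pairs, assuming one entry per line with the key and value separated by whitespace. The `parse_spark_conf` function is hypothetical; the console performs its own parsing and may accept a different layout.

```python
def parse_spark_conf(text: str) -> dict:
    """Parse 'key value' entries, one per line (assumed layout), into a dict.

    Blank lines are skipped. The value is everything after the first run of
    whitespace, so values that contain spaces are kept intact.
    """
    conf = {}
    for line_no, raw in enumerate(text.splitlines(), start=1):
        line = raw.strip()
        if not line:
            continue
        parts = line.split(maxsplit=1)
        if len(parts) != 2:
            raise ValueError(f"line {line_no}: expected 'key value', got {raw!r}")
        key, value = parts
        conf[key] = value
    return conf


if __name__ == "__main__":
    print(parse_spark_conf("spark.sql.catalog.paimon.metastore dlf"))
    # {'spark.sql.catalog.paimon.metastore': 'dlf'}
```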

View execution records

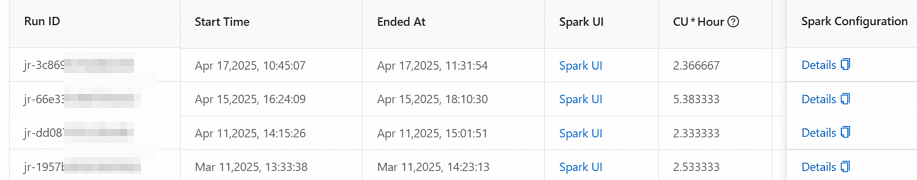

After a notebook session runs tasks, review the execution history for details such as the run ID, start time, Spark UI, CU*Hours, resource queue, engine version, Fusion Acceleration status, and Spark configuration.

On the session list page, click the session name.

Click the Execution Records tab.

References

Manage resource queues -- Queue operations and configuration

Manage users and roles -- Session permissions and role management

Quick start for notebook development -- End-to-end notebook development walkthrough