A node group is the core unit for managing cluster nodes in Alibaba Cloud E-MapReduce (EMR). Each node group consists of Elastic Compute Service (ECS) instances that typically share the same instance type, letting you manage nodes in bulk and mix workload-specific configurations in a single cluster. For example, use memory-optimized instances (1 vCPU:8 GiB) for offline big data processing and compute-optimized instances (1 vCPU:2 GiB) for model training.

For node group management in Hadoop, Data Science, and EMR Studio clusters, see Manage node groups (Hadoop, Data Science, and EMR Studio clusters).

Limitations

- The operations in this topic apply only to DataLake, DataFlow, OLAP, DataServing, and Custom clusters.
- Task node groups with a Pay-as-you-go or Preemptible Instance billing method are not eligible for configuration upgrades. For details, see Upgrade node configurations.

Add a node group

Step 1: Go to the Nodes tab

- Log on to the EMR console. In the left-side navigation pane, click EMR on ECS.
- In the top navigation bar, select the region where your cluster resides and select a resource group.
- On the EMR on ECS page, find the cluster and click Nodes in the Actions column.

Step 2: Configure the node group

On the Nodes page, click Add Node Group. In the Add Node Group pane, configure the following parameters.

Zone

The cluster's zone is selected by default. Click View Zones to select a different zone in the same region.

- You can only add task node groups in other zones.
- After adding a cross-zone node group, enable the YARN Node Label feature to partition the cluster into zones. This reduces the impact of cross-zone network bandwidth variance on task performance, particularly during the shuffle phase. For details, see Use Node Labels for node partitioning.

Node group type

| Type | Description | Suited for |
|---|---|---|
| Core | Core node group | Small-to-medium data volumes: log analysis, website traffic statistics |
| Task | Task node group | Temporary compute bursts: batch processing, data cleaning |
| Gateway | Gateway node group. Requires DataLake or DataFlow clusters running EMR V5.10.1 or later. | High-frequency job submission: data scientist model training, data engineer pipelines |
| Master-Extend | Load extension group. Requires high availability clusters running EMR V3.51.1 or later (V3 line) or EMR V5.17.1 or later (V5 line). | Large-scale clusters with high master node load |

Only Task node groups support spot instances (also referred to as preemptible instances in this topic).

About the Master-Extend node group: If the master node carries a heavy load, add a Master-Extend node group to offload services from the master node. Services are not deployed to the Master-Extend node group automatically after it is created. Select the services to deploy when you create the node group.

Billing method

| Billing method | Supported node group types |
|---|---|
| Pay-as-you-go | Core, Task, Gateway, Master-Extend |
| Spot instance | Task only |
| Subscription | Core, Task, Gateway, Master-Extend |
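
The billing table above can be read as a simple lookup. The following is a minimal sketch, not an EMR API: the dictionary and the function name `billing_method_allowed` are hypothetical and only restate the table.

```python
# Illustrative lookup that restates the billing method table.
# The names here are hypothetical and do not correspond to an EMR API.
SUPPORTED_NODE_GROUP_TYPES = {
    "Pay-as-you-go": {"Core", "Task", "Gateway", "Master-Extend"},
    "Spot instance": {"Task"},
    "Subscription": {"Core", "Task", "Gateway", "Master-Extend"},
}


def billing_method_allowed(node_group_type: str, billing_method: str) -> bool:
    """Return True if the table lists this node group type for the billing method."""
    return node_group_type in SUPPORTED_NODE_GROUP_TYPES.get(billing_method, set())


if __name__ == "__main__":
    print(billing_method_allowed("Task", "Spot instance"))  # Task is listed for spot
    print(billing_method_allowed("Core", "Spot instance"))  # Core is not
```

A check like this can be useful in a provisioning script to reject an unsupported combination before you open the console.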

Node group name

The name must be unique within the cluster.

Components

Only Master-Extend node groups support custom service deployment. The following services can be deployed:

- Hive: HiveMetaStore, HiveServer
- Kyuubi: KyuubiServer
- Spark: SparkHistoryServer, SparkThriftServer

Assign Public Network IP

Enable this option to connect all nodes in the node group to the Internet.

vSwitch

Select a vSwitch in the same VPC as the cluster when you create the node group.

The vSwitch cannot be changed after the node group is created. The vSwitch must be in the same zone as the cluster. Choose carefully before clicking OK.

Additional security group

(Optional) Associate up to four additional security groups with the node group.

Instance type

- Subscription: select one instance type.
- Pay-as-you-go or spot instance (Task node groups only): select up to 10 instance types with the same vCPU count and memory size as fallback options.
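
The selection rules above can be sketched as a small validator. This is an illustration under stated assumptions: the `InstanceType` class, the function name, and the instance type names used with it are hypothetical, and the multi-type branch assumes the billing method has already been confirmed as valid for the node group.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InstanceType:
    name: str        # hypothetical placeholder name, not a real ECS instance type
    vcpus: int
    memory_gib: int


def validate_instance_types(billing_method: str, types: list[InstanceType]) -> list[str]:
    """Return rule violations; an empty list means the selection follows the rules."""
    if not types:
        return ["select at least one instance type"]
    errors = []
    if billing_method == "Subscription":
        if len(types) != 1:
            errors.append("Subscription allows exactly one instance type")
    else:
        if len(types) > 10:
            errors.append("select at most 10 instance types")
        # Fallback types must match in both vCPU count and memory size.
        shapes = {(t.vcpus, t.memory_gib) for t in types}
        if len(shapes) > 1:
            errors.append("all instance types must share the same vCPU count and memory size")
    return errors


if __name__ == "__main__":
    same_shape = [InstanceType("type-a", 4, 16), InstanceType("type-b", 4, 16)]
    print(validate_instance_types("Pay-as-you-go", same_shape))
```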

Storage configuration

| Disk type | Options | Size range | Recommended minimum |
|---|---|---|---|
| System disk | Enterprise SSD (ESSD) or ultra disk | 60 GiB–500 GiB | 120 GiB |
| Data disk | Enterprise SSD (ESSD) or ultra disk | 40 GiB–32,768 GiB | 80 GiB |

For ESSDs, specify a performance level (PL) based on disk capacity:

- System disk: PL0, PL1, PL2 (default: PL1)
- Data disk: PL0, PL1, PL2, PL3 (default: PL1)

For more information, see Disks.
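
As a rough illustration of the limits in this section, the sketch below checks a disk size and performance level against the ranges listed above. The `DISK_RULES` table and the `check_disk` function are hypothetical names. Any capacity-dependent restrictions on performance levels are not modeled here, because this topic does not list them.

```python
# Size ranges and performance levels copied from the storage table and list above.
DISK_RULES = {
    "system": {"min_gib": 60, "max_gib": 500, "levels": {"PL0", "PL1", "PL2"}},
    "data": {"min_gib": 40, "max_gib": 32768, "levels": {"PL0", "PL1", "PL2", "PL3"}},
}


def check_disk(role, size_gib, category="ESSD", performance_level="PL1"):
    """Return a list of problems for a 'system' or 'data' disk (empty if none)."""
    rule = DISK_RULES[role]
    problems = []
    if not rule["min_gib"] <= size_gib <= rule["max_gib"]:
        problems.append(
            f"{role} disk size must be {rule['min_gib']}-{rule['max_gib']} GiB, got {size_gib}"
        )
    # Performance levels apply only to ESSDs.
    if category == "ESSD" and performance_level not in rule["levels"]:
        problems.append(f"{performance_level} is not offered for the {role} disk")
    return problems


if __name__ == "__main__":
    print(check_disk("system", 120))                          # within range, default PL1
    print(check_disk("system", 120, performance_level="PL3"))  # PL3 is data-disk only
```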

Resource reservation policy

This parameter is available only for Task node groups with Pay-as-you-go billing.

This policy associates the node group with your private ECS capacity pools. To reserve capacity, go to the ECS console first. For details, see Resource Butler overview.

| Option | Behavior |
|---|---|
| Public Pool Only (default) | Fulfills requests directly from public resource pools |
| Private Pool First | Draws from your specified private pool first; falls back to public pools if the private pool lacks capacity |
| Specified Private Pool | Locks the node group to a specific private pool |
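
The three options differ mainly in where capacity comes from when the private pool runs short. The sketch below models that difference. It rests on two assumptions that this topic does not state: the public pool always has capacity, and with Specified Private Pool any demand beyond the private pool is left unfulfilled. The function name `plan_allocation` is hypothetical.

```python
def plan_allocation(policy: str, requested: int, private_available: int):
    """Return (from_private, from_public, unfulfilled) node counts.

    Assumptions (not stated in this topic): the public pool is never exhausted,
    and Specified Private Pool never falls back to the public pool.
    """
    if policy == "Public Pool Only":
        return (0, requested, 0)
    from_private = min(requested, private_available)
    if policy == "Private Pool First":
        # Use the private pool first, then fall back to public pools.
        return (from_private, requested - from_private, 0)
    if policy == "Specified Private Pool":
        # Locked to the private pool: the shortfall is not served elsewhere.
        return (from_private, 0, requested - from_private)
    raise ValueError(f"unknown policy: {policy}")


if __name__ == "__main__":
    for policy in ("Public Pool Only", "Private Pool First", "Specified Private Pool"):
        print(policy, plan_allocation(policy, requested=10, private_available=6))
```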

Automatic compensation

This parameter is available only for Task node groups.

When enabled, EMR monitors nodes continuously. If an abnormal node is detected, EMR releases it and scales out the same number of replacement nodes automatically. For details, see Node compensation.

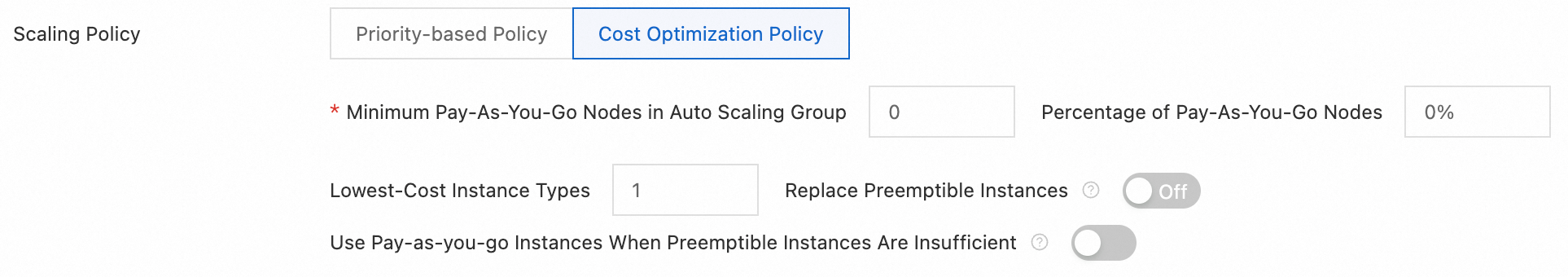

Scaling policy

This parameter is available only when Billing Method is set to Preemptible Instance.

This policy controls how EMR provisions and reclaims instances when spot capacity fluctuates. Choose a policy based on your priorities:

| Policy | When to use | Behavior |
|---|---|---|
| Priority-based Policy (default) | Instance type consistency matters more than cost | During scale-out, the system tries instance types in list order until one succeeds. The final instance type may vary based on inventory. |
| Cost Optimization Policy | Cost reduction is the priority and you can tolerate variable instance types | During scale-out, Auto Scaling creates instances in ascending order of vCPU unit price. During scale-in, instances are removed in descending order of vCPU unit price. If spot instances cannot be created due to insufficient inventory or price thresholds, the system falls back to pay-as-you-go instances. |

For detailed cost optimization parameters, see Cost optimization mode.
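
To make the ordering rules concrete, the sketch below ranks candidate instance types the way the two policies describe. The instance type names and prices are made up, the function names are hypothetical, and the vCPU unit price is assumed to be the hourly price divided by the vCPU count.

```python
def unit_price(candidate):
    """vCPU unit price, assumed here to be hourly price divided by vCPU count."""
    _, vcpus, hourly_price = candidate
    return hourly_price / vcpus


def scale_out_order(policy, candidates):
    """candidates: (name, vcpus, hourly_price) tuples in the configured list order."""
    if policy == "priority":
        # Priority-based: try instance types in the order they were listed.
        return [name for name, _, _ in candidates]
    if policy == "cost":
        # Cost optimization: cheapest vCPU unit price first.
        return [c[0] for c in sorted(candidates, key=unit_price)]
    raise ValueError(f"unknown policy: {policy}")


def cost_scale_in_order(candidates):
    """Cost optimization scale-in: most expensive vCPU unit price is removed first."""
    return [c[0] for c in sorted(candidates, key=unit_price, reverse=True)]


if __name__ == "__main__":
    # Hypothetical instance types and prices for illustration only.
    candidates = [("type-a", 4, 0.40), ("type-b", 4, 0.20), ("type-c", 8, 0.48)]
    print(scale_out_order("priority", candidates))
    print(scale_out_order("cost", candidates))
    print(cost_scale_in_order(candidates))
```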

Graceful shutdown

This parameter is available only for clusters where YARN is deployed.

When enabled, EMR waits for running tasks on a node to finish — or for the timeout to elapse — before scaling in that node. Configure the timeout via the yarn.resourcemanager.nodemanager-graceful-decommission-timeout-secs parameter on the YARN service page.
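
The wait rule above amounts to taking whichever comes first: the last running task finishing, or the timeout elapsing. A minimal sketch of that arithmetic follows. The function name is hypothetical and the timeout value of 3600 seconds is only an example.

```python
def seconds_until_removal(task_remaining_secs, timeout_secs):
    """Return how long a node is kept before scale-in under graceful shutdown.

    task_remaining_secs: remaining run time of each task on the node, in seconds.
    timeout_secs: the configured graceful decommission timeout, in seconds.
    """
    longest_task = max(task_remaining_secs, default=0)  # idle node: no wait
    return min(longest_task, timeout_secs)


if __name__ == "__main__":
    print(seconds_until_removal([120, 300], timeout_secs=3600))  # tasks finish first
    print(seconds_until_removal([7200], timeout_secs=3600))      # timeout elapses first
```

A task that outlasts the timeout is interrupted when the node is removed, so choose a timeout that covers your typical task duration.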

Step 3: Confirm

Click OK. The node group appears on the Nodes page after it is created.

Modify a node group

- On the Nodes page, click the Node Group Name of the target group.
- In the Node Group Attributes dialog box, modify the parameters and click Save.

What you can modify depends on the node group type:

| Node group type | Modifiable attributes |
|---|---|
| Master, Core, Gateway, Master-Extend | Node group name, additional security groups |
| Task | Node group name, node specifications, additional security groups, and settings in the Advanced Information section |

Delete a node group

To delete a Task or Core node group, its Operation Status must be Running and its Number Of Nodes must be 0.

- On the Nodes page, find the node group and click Delete Node Group in the Actions column.
- In the dialog box, click Delete.

Cost optimization mode

This mode is available only when adding a Task node group with the Preemptible Instance billing method.

Cost optimization mode lets you define detailed policies to balance cost against stability.

| Parameter | Description |
|---|---|
| Minimum Pay-As-You-Go Nodes in Auto Scaling Group | The minimum number of pay-as-you-go instances in the scaling group. If the current count falls below this value, pay-as-you-go instances are provisioned first. |
| Percentage of Pay-As-You-Go Nodes | The share of pay-as-you-go instances to create once the minimum count is met. |
| Lowest-Cost Instance Types | The number of lowest-cost instance types to use (maximum: 3). Spot instances are distributed evenly across the selected types. |
| Preemptible Instance Compensation | When enabled, the system proactively replaces a spot instance approximately five minutes before it is reclaimed. |
| Use Pay-as-you-go Instances When Preemptible Instances Are Insufficient | When enabled, if spot capacity cannot be met due to price or inventory constraints, pay-as-you-go instances fill the gap. |

General vs. mixed-instance scaling groups

Whether you set the Minimum Pay-As-You-Go Nodes, Percentage of Pay-As-You-Go Nodes, and Lowest-Cost Instance Types parameters determines the scaling group type:

- General cost optimization scaling group: leave all three parameters unset.
- Mixed-instance cost optimization scaling group: set all three parameters. This gives finer-grained control over the split between pay-as-you-go and spot instances.

Both types are fully compatible in terms of interfaces and features. Use mixed-instance settings to replicate any general scaling group behavior:

| Goal | Minimum Pay-As-You-Go Nodes | Percentage of Pay-As-You-Go Nodes | Lowest-Cost Instance Types |
|---|---|---|---|
| Run only pay-as-you-go instances | 0 | 100 | 1 |
| Prefer spot instances, fall back to pay-as-you-go | 0 | 0 | 1 |
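
The arithmetic behind these settings can be sketched as follows. This is an approximation, not the Auto Scaling implementation: the function names are hypothetical, the percentage is assumed to apply only to nodes above the minimum, fractional results are assumed to round up, and the choice of which types receive leftover spot instances is arbitrary.

```python
def split_capacity(target: int, min_payg: int, payg_percent: int):
    """Return (pay_as_you_go, spot) node counts for a target group size.

    Assumptions: the percentage applies to nodes above the minimum, and
    fractional pay-as-you-go counts round up.
    """
    base = min(target, min_payg)              # the minimum is filled first
    above = target - base
    payg_above = (above * payg_percent + 99) // 100  # integer ceiling
    payg = base + payg_above
    return payg, target - payg


def spread_spot(spot: int, type_count: int):
    """Distribute spot nodes as evenly as possible across the lowest-cost types."""
    share, extra = divmod(spot, type_count)
    return [share + (1 if i < extra else 0) for i in range(type_count)]


if __name__ == "__main__":
    print(split_capacity(10, 0, 100))  # first table row: pay-as-you-go only
    print(split_capacity(10, 0, 0))    # second table row: spot preferred
    print(split_capacity(10, 2, 50))   # a mixed group
    print(spread_spot(4, 3))
```

With the values from the first table row, every node is pay-as-you-go. With the values from the second row, every node is requested as a spot instance, and pay-as-you-go is used only as the fallback.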

What's next

- Scale out a node group: Scale out an EMR cluster
- Scale in a node group: Scale in a cluster
- Expand disk capacity: Expand a disk
- Set up auto scaling rules: Configure custom auto scaling rules
- View scaling history: View auto scaling activities