Node labels let YARN isolate jobs at the physical layer by routing them to specific node partitions. This topic walks through configuring YARN Capacity Scheduler queues and node label partitions in E-MapReduce (EMR) to achieve both logical and physical job isolation.

Background

YARN provides two complementary mechanisms for resource management: queues and node labels.

Queues handle logical resource allocation. They divide cluster compute resources among user groups or applications at a preset ratio, so different teams and workload types get fair shares in a shared cluster.

Node labels group physical nodes into partitions. By tagging nodes, you can route jobs to specific hardware—for example, directing memory-intensive real-time jobs to memory-optimized instances, and batch jobs to standard compute instances.

Using queues and node labels together gives you both logical and physical isolation: queues manage who gets how much, and node labels control where jobs actually run.

Partition types

When you create a partition, you can select Exclusive as the partition type. With an Exclusive partition, containers are allocated to nodes in the partition only when the request names that partition exactly. Jobs that do not request the partition never run on its nodes, which keeps workloads physically isolated on their designated nodes.

For workload isolation—the primary use case of this topic—use Exclusive partitions.
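For reference, stock YARN expresses the same exclusivity setting in the label spec passed to its admin CLI. The sketch below only builds and prints that command for the two partitions used later in this topic. The helper name node_label_spec is hypothetical, and on EMR the console normally manages partitions for you, so treat this as an illustration rather than a required step.

```shell
# node_label_spec is a hypothetical helper (not part of YARN or EMR).
# It prints one label in the form that `yarn rmadmin -addToClusterNodeLabels` accepts.
node_label_spec() {
  local name="$1" exclusive="${2:-true}"
  printf '%s(exclusive=%s)' "$name" "$exclusive"
}

# Print (do not run) the admin command for the batch and streaming partitions.
spec="$(node_label_spec batch),$(node_label_spec streaming)"
echo "yarn rmadmin -addToClusterNodeLabels \"$spec\""
```

Printing the command instead of running it keeps the sketch safe to try on a cluster whose partitions are managed through the console.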

Prerequisites

Before you begin, make sure that you have:

-

An EMR cluster with the YARN service running

-

Capacity Scheduler configured as the YARN scheduler (only Capacity Scheduler supports node labels)

Configure queues and node labels

This example uses a DataLake cluster running EMR V5.16.0. The same steps apply to other EMR cluster types and versions.

Scenario: An enterprise needs two child queues (warehouse and analysis) and two partitions (batch and streaming) to isolate offline batch jobs from real-time streaming jobs.

Resource allocation targets:

The configuration covers four steps:

-

Edit resource queues

-

Add partitions and associate nodes

-

Enable partition-queue association management

-

Submit jobs to specific partitions

Step 1: Edit resource queues

-

Log on to the EMR console. On the EMR on ECS page, find your cluster and click Services in the Actions column. On the Services tab, click YARN.

-

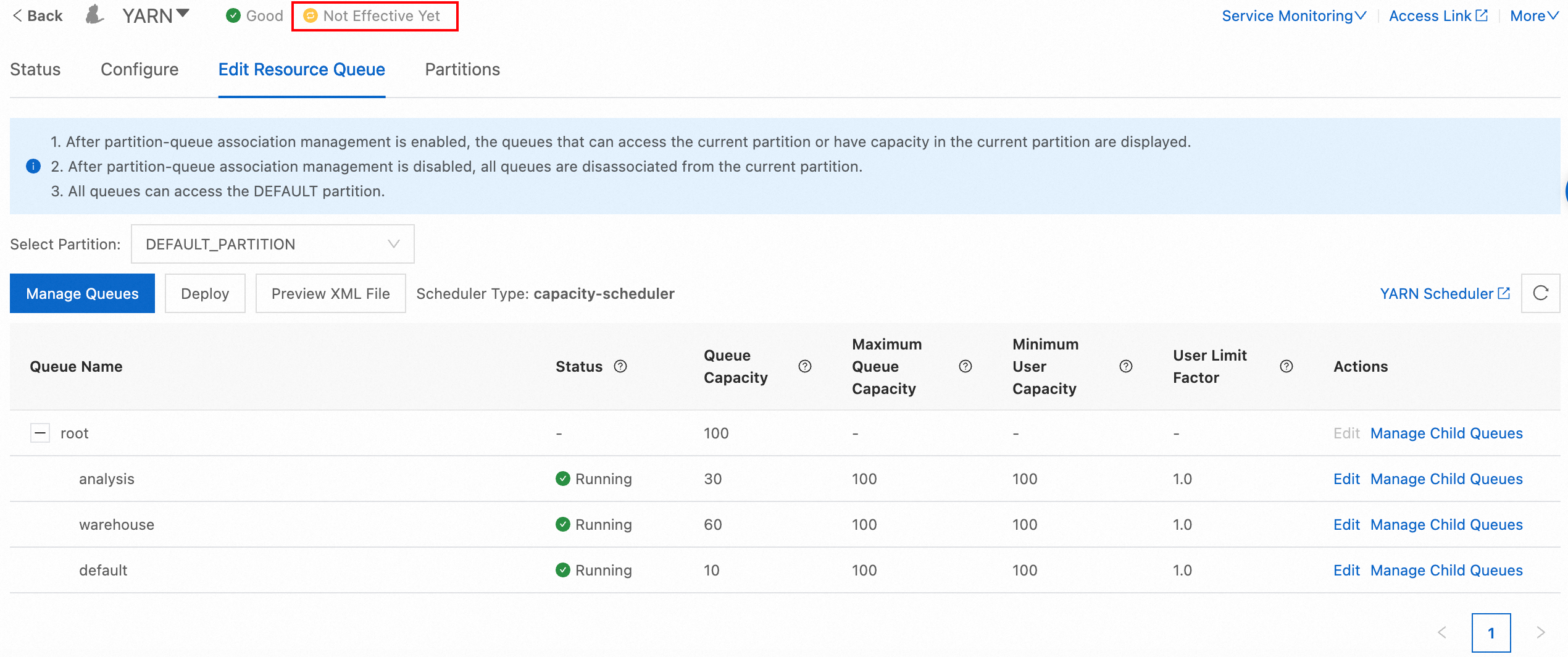

On the YARN service page, click the Edit Resource Queue tab, then click Manage Queues.

-

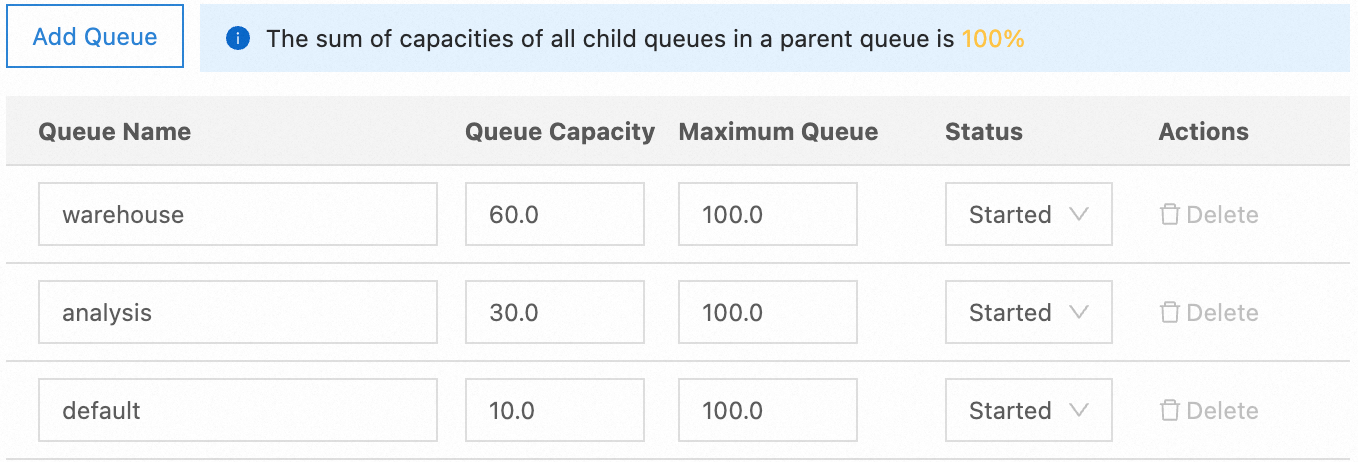

In the Manage Child Queues dialog box, click Add Queue to create the warehouse and analysis queues. Set the Queue Capacity for each queue, and set the Status to Started:

| Queue | Queue capacity |
| --- | --- |
| warehouse | 60 |
| analysis | 30 |
| default | 10 |

-

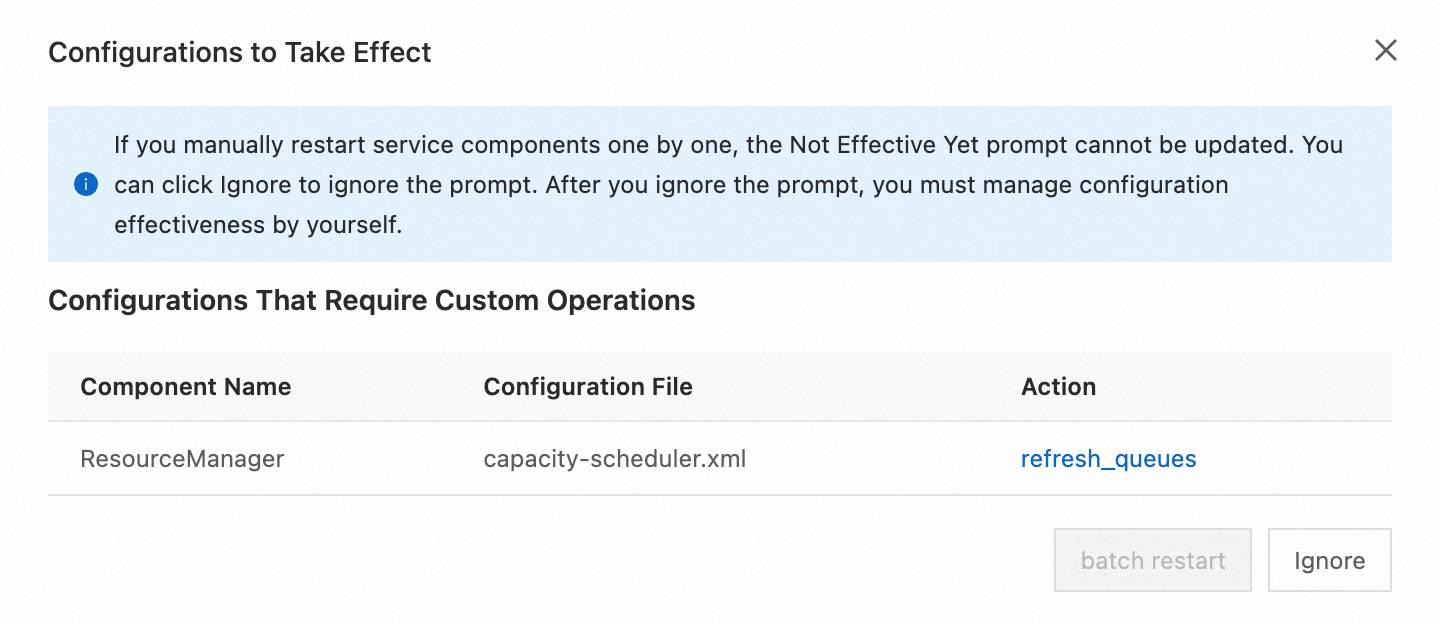

Click Not Effective Yet. In the Configurations to Take Effect dialog box, click refresh_queues in the Actions column.
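Capacity Scheduler expects the percentage capacities of sibling queues to add up to 100, so it can help to check the numbers before you refresh the queues. The following is a minimal sketch of that arithmetic; capacity_sum is a hypothetical helper, and the values are the ones used in this step.

```shell
# capacity_sum is a hypothetical helper: it adds integer capacity percentages.
capacity_sum() {
  local total=0 c
  for c in "$@"; do
    total=$((total + c))
  done
  echo "$total"
}

# Capacities from this step: warehouse=60, analysis=30, default=10.
total="$(capacity_sum 60 30 10)"
if [ "$total" -eq 100 ]; then
  echo "OK: sibling queue capacities sum to 100"
else
  echo "Check capacities: they sum to $total, expected 100"
fi
```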

Step 2: Add partitions and associate nodes

-

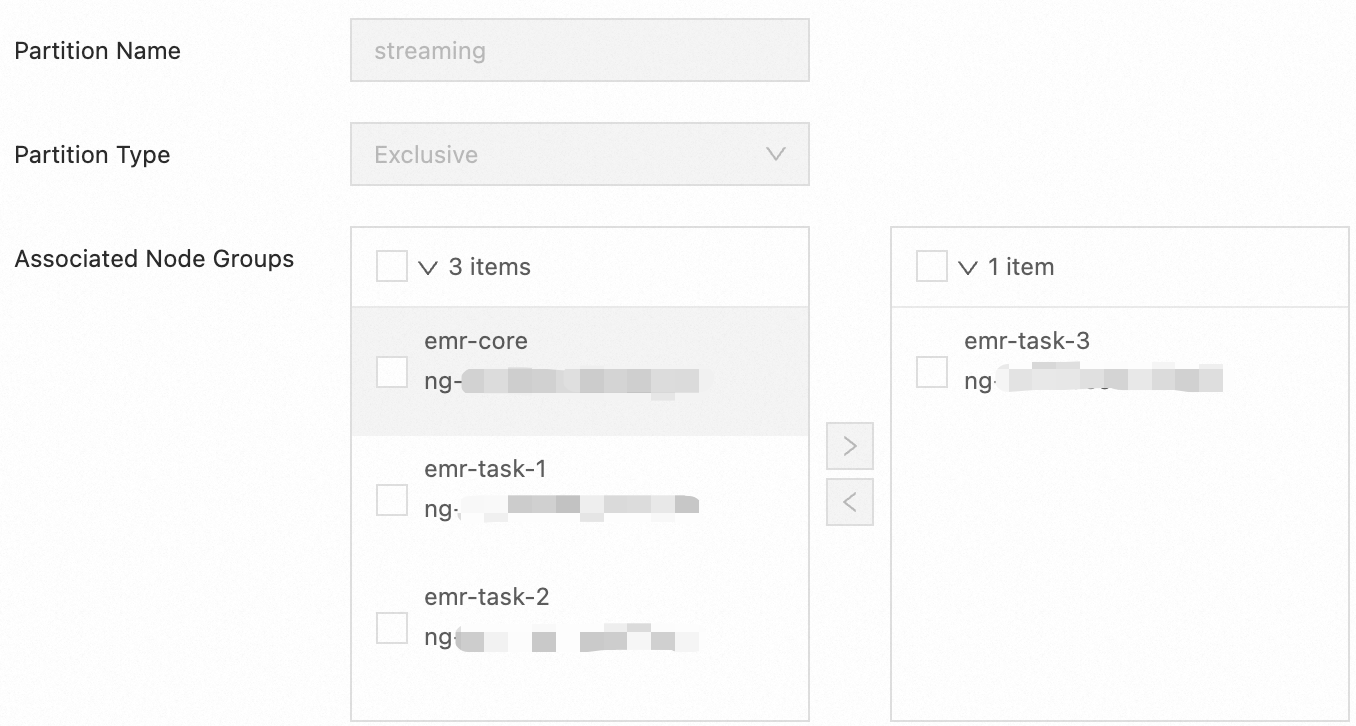

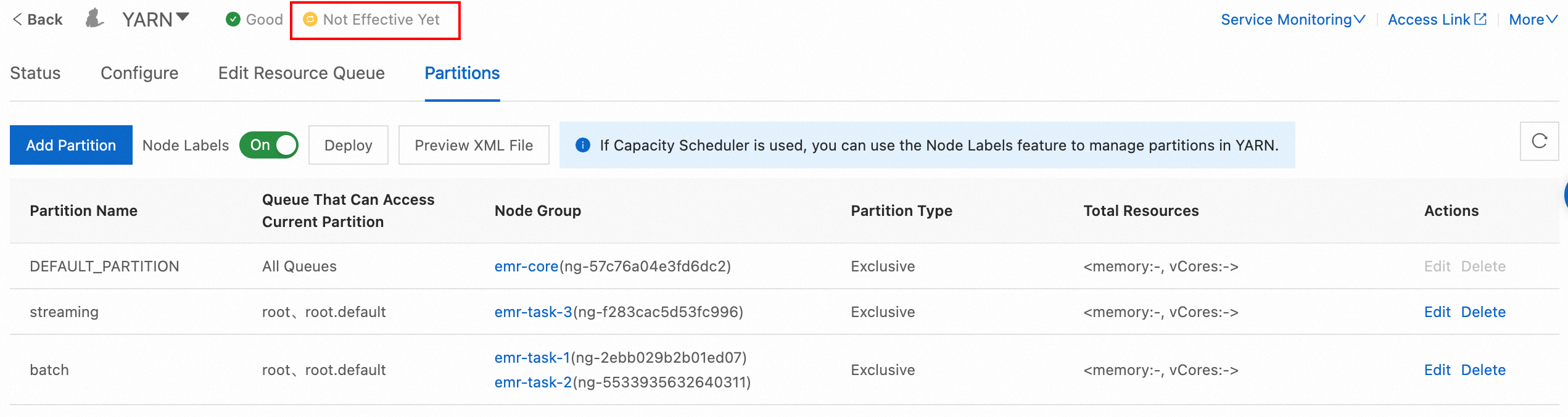

Click the Partitions tab, then click Add Partition to create the streaming partition. In the Add Partition dialog box, configure the following parameters:

-

Partition Name: streaming

-

Partition Type: Exclusive. Containers are allocated to nodes in this partition only when the partition is an exact match.

-

Associated Node Groups: Select the node group to associate with this partition. In this example, emr-task-3 is selected. The emr-task-3 node group contains memory-optimized instances suited for real-time jobs that hold large amounts of intermediate data and require low latency. Choose a node group based on your instance type and workload requirements.

-

Repeat the preceding steps to add the batch partition.

-

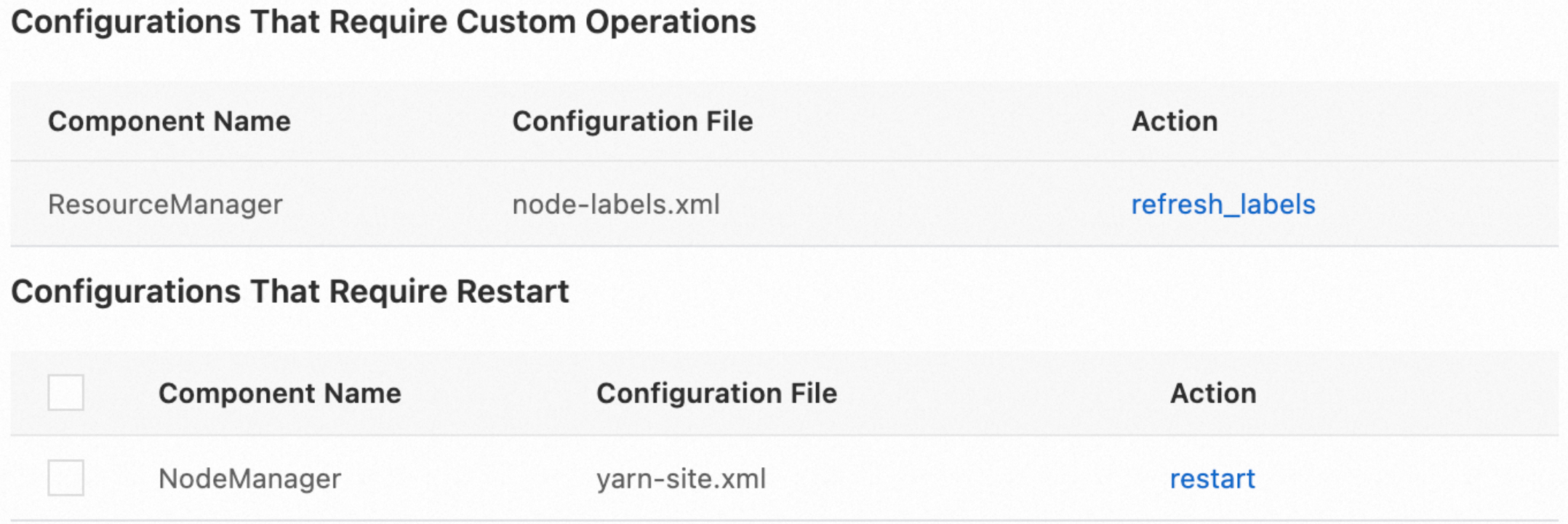

Click Not Effective Yet. In the Configurations to Take Effect dialog box, click refresh_labels in the Actions column.

-

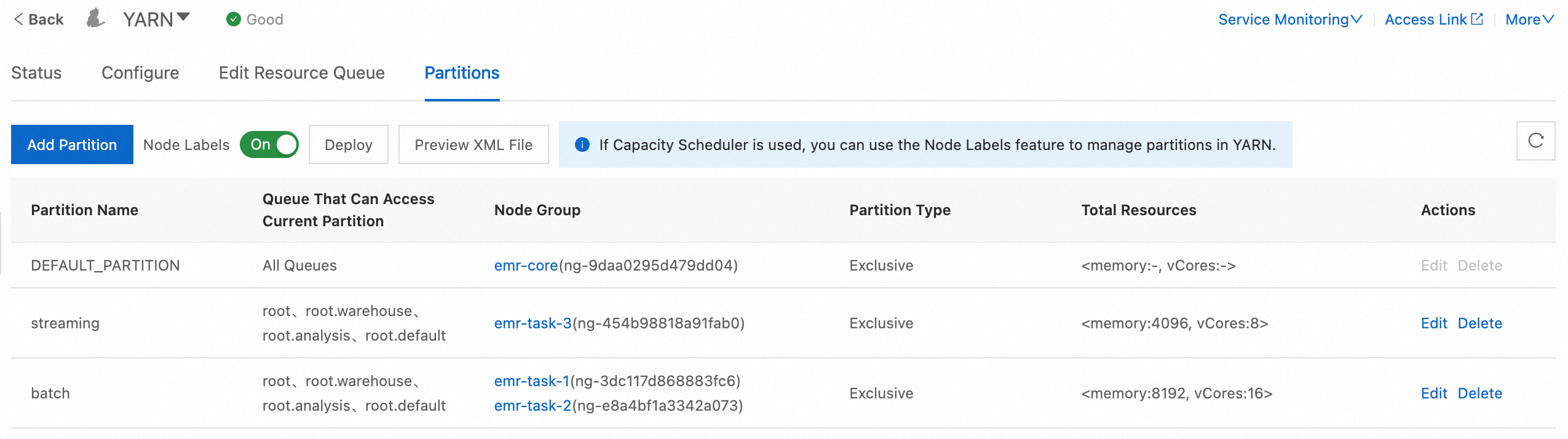

After the configuration takes effect, confirm the total resources for each partition in the Total Resources column.
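Besides the console, you can confirm the partitions from the master node with the stock YARN CLI. The sketch below assumes that yarn cluster --list-node-labels prints each label as <name:exclusivity=true|false>, which is the format used by recent Hadoop releases. labels_present is a hypothetical helper, and the live check runs only when the yarn command is on the PATH.

```shell
# labels_present is a hypothetical helper: it checks that every expected
# partition name appears in the text printed by `yarn cluster --list-node-labels`.
labels_present() {
  local listing="$1" label
  shift
  for label in "$@"; do
    # Assumed CLI format: <batch:exclusivity=true>,<streaming:exclusivity=true>
    case "$listing" in
      *"<${label}:"*) ;;
      *) echo "missing partition: ${label}"; return 1 ;;
    esac
  done
  echo "all partitions present"
}

# Query the live cluster only when the yarn CLI is available.
if command -v yarn >/dev/null 2>&1; then
  labels_present "$(yarn cluster --list-node-labels 2>/dev/null)" batch streaming || true
fi
```

To see which nodes carry which label, yarn node -list -showDetails includes the node labels in its per-node output.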

Step 3: Enable partition-queue association management

-

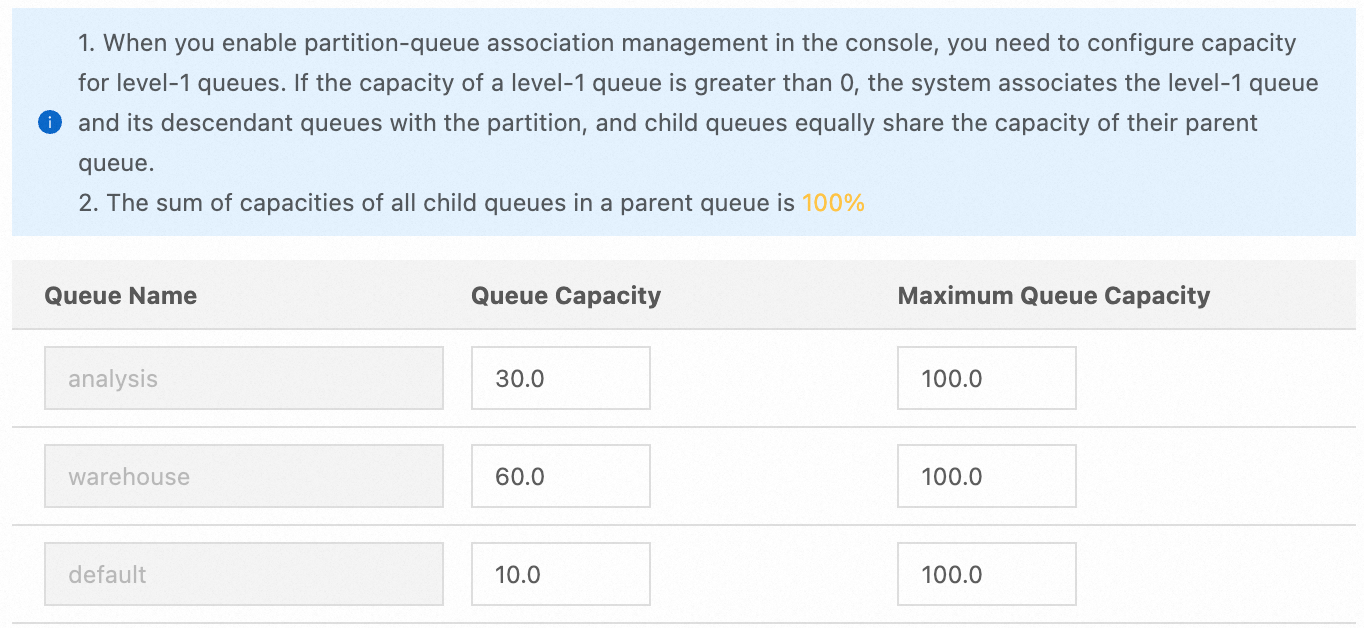

On the Edit Resource Queue tab, select the batch partition from the Select Partition drop-down list, then turn on Enable Partition-queue Association Management.

-

In the Enable Partition-queue Association Management dialog box, set the Queue Capacity for each queue, then click OK. Repeat this step for the streaming partition.

| Queue | Capacity in batch partition | Capacity in streaming partition |
| --- | --- | --- |
| warehouse | 60 | 70 |
| analysis | 30 | 20 |
| default | 10 | 10 |

In this example, all queues have access to both partitions. To restrict a partition to jobs from specific queues only, set the capacity of other queues to 0.

-

Click Not Effective Yet. In the Configurations to Take Effect dialog box, click refresh_queues in the Actions column.

-

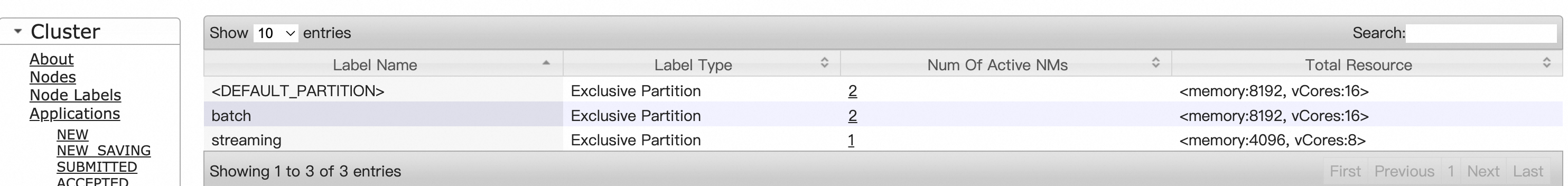

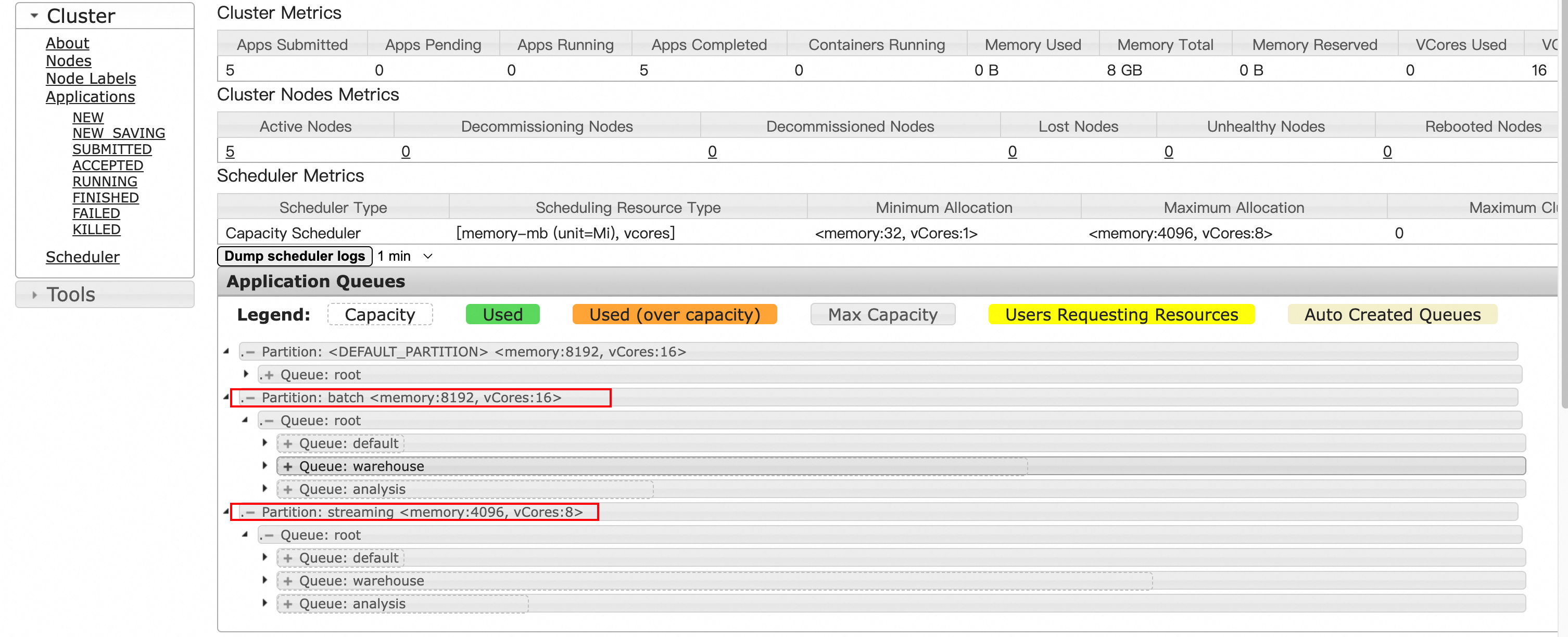

Verify that the node labels are active on the YARN web UI.

-

Node Labels page: confirms the partitions and their types.

-

Scheduler page: confirms the queue-partition capacity assignments.
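The per-partition capacities set in this step correspond to Capacity Scheduler properties of the form yarn.scheduler.capacity.<queue-path>.accessible-node-labels.<label>.capacity. The sketch below prints those properties for the values in this example so that you can compare them with what the Scheduler page reports. It assumes that the three queues are direct children of root, and partition_capacity_prop is a hypothetical helper.

```shell
# partition_capacity_prop is a hypothetical helper that prints the
# Capacity Scheduler property for one queue/partition pair.
# Assumption: every queue sits directly under the root queue.
partition_capacity_prop() {
  local queue="$1" label="$2" capacity="$3"
  printf 'yarn.scheduler.capacity.root.%s.accessible-node-labels.%s.capacity=%s\n' \
    "$queue" "$label" "$capacity"
}

# Columns: queue, capacity in batch partition, capacity in streaming partition.
while read -r queue batch streaming; do
  partition_capacity_prop "$queue" batch "$batch"
  partition_capacity_prop "$queue" streaming "$streaming"
done <<'EOF'
warehouse 60 70
analysis 30 20
default 10 10
EOF
```

In stock YARN, a queue must also list a partition in its accessible-node-labels property before a capacity for that partition has any effect. The console presumably handles this when you enable partition-queue association management.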


Step 4: Submit jobs to specific partitions

With queues and node labels configured, submit jobs to a specific queue and partition using the spark.yarn.am.nodeLabelExpression and spark.yarn.executor.nodeLabelExpression parameters.

-

Log on to the master node and go to the Spark installation directory:

    cd /opt/apps/SPARK3/spark-3.3.1-hadoop3.2-1.1.1

-

Submit a Spark job targeting the warehouse queue and batch partition:

    ./bin/spark-submit \
      --class org.apache.spark.examples.SparkLR \
      --master yarn \
      --deploy-mode cluster \
      --driver-memory 1g \
      --executor-memory 2g \
      --conf spark.yarn.am.nodeLabelExpression=batch \
      --conf spark.yarn.executor.nodeLabelExpression=batch \
      --queue=warehouse \
      examples/jars/spark-examples_2.12-3.3.1.jar

| Parameter | Description | Value in this example |
| --- | --- | --- |
| --class | Main class of the application | org.apache.spark.examples.SparkLR |
| --master | Resource manager | yarn |
| --deploy-mode | Location where the driver starts | cluster |
| --driver-memory | Driver memory | 1g |
| --executor-memory | Memory per executor | 2g |
| --conf spark.yarn.am.nodeLabelExpression | Partition for the AM | batch |
| --conf spark.yarn.executor.nodeLabelExpression | Partition for executors | batch |
| --queue | Target queue | warehouse |

Both spark.yarn.am.nodeLabelExpression and spark.yarn.executor.nodeLabelExpression must be set to route the ApplicationMaster (AM) and executors to the target partition.

If you specify only a queue without a node label expression, the job runs on the queue's default partition. To change a queue's default partition, click Edit in the Actions column of the queue on the Edit Resource Queue tab.

-

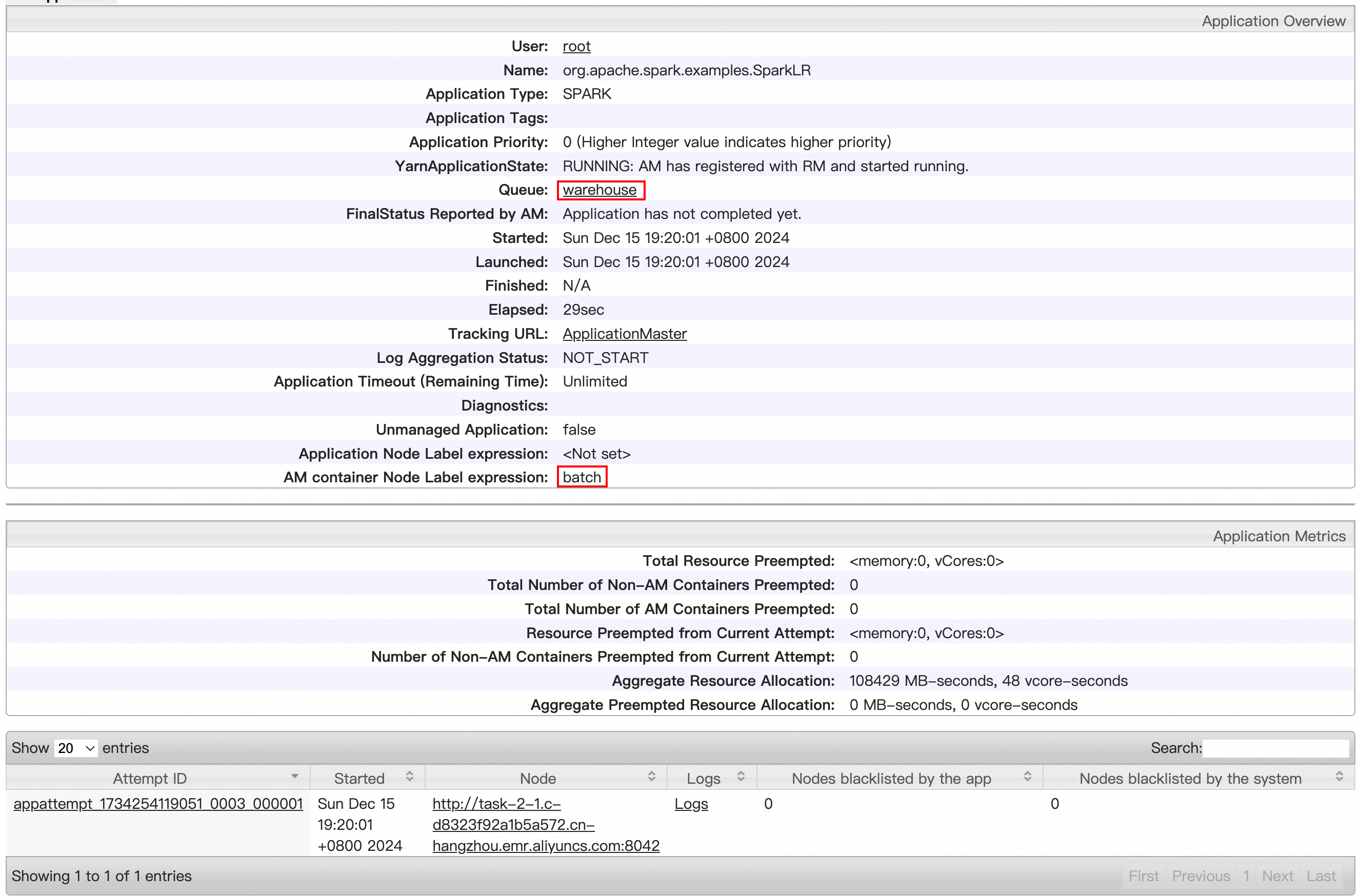

On the YARN web UI, confirm that the SparkLR job is running in the warehouse queue on the batch partition.
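Because the queue and both node label expressions have to be set together, a small wrapper can keep them consistent across submissions. The following is a sketch only: build_submit_cmd is a hypothetical helper that prints the command line instead of running it, and the analysis queue with the streaming partition is used purely as a second illustration of the scenario.

```shell
# build_submit_cmd is a hypothetical helper: it prints a spark-submit command
# that targets one queue and sends both the AM and executors to one partition.
build_submit_cmd() {
  local queue="$1" label="$2"
  shift 2
  printf '%s --queue %s --conf %s=%s --conf %s=%s %s\n' \
    "./bin/spark-submit --master yarn --deploy-mode cluster" \
    "$queue" \
    "spark.yarn.am.nodeLabelExpression" "$label" \
    "spark.yarn.executor.nodeLabelExpression" "$label" \
    "$*"
}

# Illustration: the same example job, sent to the analysis queue
# and the streaming partition.
build_submit_cmd analysis streaming \
  --class org.apache.spark.examples.SparkLR \
  examples/jars/spark-examples_2.12-3.3.1.jar
```

Review the printed command before running it from the Spark installation directory.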

FAQ

Jobs aren't running on the expected partition when using spark-submit.

Check that both spark.yarn.am.nodeLabelExpression and spark.yarn.executor.nodeLabelExpression are included in the submit command. If only the queue is specified, the job runs on the queue's default partition, not a named partition. Also verify that the queue and partition configurations have taken effect by refreshing them.
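A quick way to rule out the first cause is to scan the submit command for both properties. The sketch below does this with plain string matching; check_label_confs is a hypothetical helper and only inspects the text you pass to it.

```shell
# check_label_confs is a hypothetical helper: it reports which of the two
# node label properties are absent from a submit command string.
check_label_confs() {
  local cmd="$1" key missing=0
  for key in spark.yarn.am.nodeLabelExpression spark.yarn.executor.nodeLabelExpression; do
    case "$cmd" in
      *"${key}="*) ;;
      *) echo "missing: ${key}"; missing=1 ;;
    esac
  done
  if [ "$missing" -eq 0 ]; then
    echo "both node label expressions are set"
  fi
  return "$missing"
}

# Example: a command that sets only the executor expression.
check_label_confs "./bin/spark-submit --queue=warehouse --conf spark.yarn.executor.nodeLabelExpression=batch" || true
```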

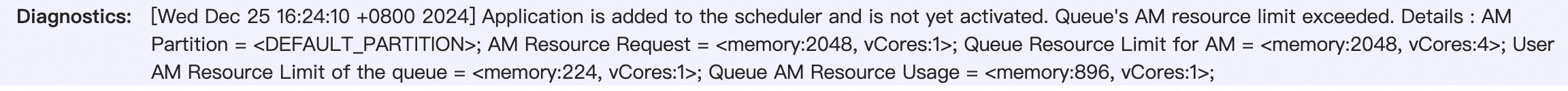

Jobs stay in ACCEPTED status and never start.

First, check whether the target queue has enough resources. If other jobs are consuming the queue's capacity, wait for them to complete before resubmitting.

If the queue appears to have sufficient resources, open the job on the YARN web UI and check the Diagnostics field. If it shows Queue's AM resource limit exceeded, the queue has resources but the ApplicationMaster resource percentage is too low. The share of a queue that AMs can use is capped separately from the queue's overall capacity. When the AMs of running jobs reach that cap, new jobs cannot start their AM and stay in ACCEPTED status.

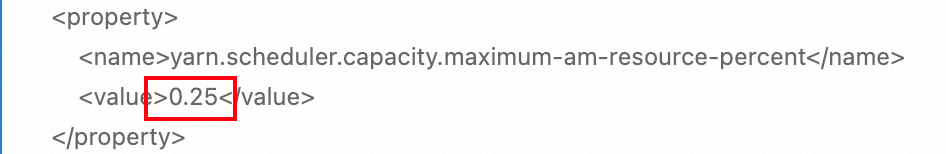

To increase the AM resource percentage, find capacity_scheduler.xml on the Configure tab of the YARN service page and raise the value of yarn.scheduler.capacity.maximum-am-resource-percent. For example, change it from 0.25 to 0.5.

Tune the AM resource percentage based on your workload pattern. Clusters running many small, short-lived jobs typically benefit from a higher AM resource percentage.
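To get a feel for the effect of this setting, you can estimate the AM budget of a queue as partition memory × queue capacity × AM resource percentage. The sketch below is a rough approximation only: the scheduler's actual limit also depends on factors such as the queue's maximum capacity, user limits, and the Hadoop version. The 102400 MB partition size and the 2048 MB AM container are hypothetical numbers, and percentages are passed as integers (25 for 0.25) because shell arithmetic has no floating point.

```shell
# am_limit_mb is a hypothetical helper: it estimates the memory (in MB) that
# ApplicationMasters may use in one queue of one partition.
# Arguments: partition memory in MB, queue capacity in %, AM resource percent in %.
am_limit_mb() {
  local partition_mb="$1" queue_pct="$2" am_pct="$3"
  echo $(( partition_mb * queue_pct * am_pct / 10000 ))
}

# Hypothetical 102400 MB partition, queue capacity 60.
before="$(am_limit_mb 102400 60 25)"   # AM resource percent 0.25
after="$(am_limit_mb 102400 60 50)"    # AM resource percent 0.5

# Hypothetical 2048 MB AM container: how many AMs fit in each budget.
echo "0.25 -> ${before} MB, about $(( before / 2048 )) concurrent AMs"
echo "0.5  -> ${after} MB, about $(( after / 2048 )) concurrent AMs"
```

Under these assumptions, doubling the percentage doubles the AM budget from 15360 MB to 30720 MB, which roughly doubles the number of jobs that can leave ACCEPTED status at the same time.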