When the vCPUs or memory of Elastic Compute Service (ECS) instances in a node group can no longer meet your workload requirements, upgrade the instance type to a higher specification.

Prerequisites

Before you begin, ensure that you have:

An E-MapReduce (EMR) cluster. For more information, see Create a cluster.

Limitations

ECS instances in big data instance families or instance families that use local SSDs do not support configuration upgrades.

Only upgrades are supported. Downgrading ECS instance configurations in a node group is not supported.
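Before you open the upgrade dialog box, you can sanity-check a planned change against these limitations. The following Python sketch is illustrative only: `InstanceSpec` and `check_upgrade` are hypothetical names, and the family prefixes are assumed examples of big data and local SSD families, not a complete list. Confirm the actual families in the ECS documentation.

```python
from dataclasses import dataclass

# Assumed, non-exhaustive examples of instance families that do not support
# configuration upgrades. Verify against the ECS documentation.
BIG_DATA_FAMILIES = {"ecs.d1", "ecs.d1ne", "ecs.d2s", "ecs.d3s"}
LOCAL_SSD_FAMILIES = {"ecs.i2", "ecs.i2g", "ecs.i3", "ecs.i4"}


@dataclass(frozen=True)
class InstanceSpec:
    family: str       # hypothetical example: "ecs.g7"
    vcpus: int
    memory_gib: int


def check_upgrade(current: InstanceSpec, target: InstanceSpec) -> list[str]:
    """Return the reasons a change is not a supported upgrade (empty list if it is)."""
    problems = []
    # Instances in these families cannot have their configuration upgraded.
    if current.family in BIG_DATA_FAMILIES | LOCAL_SSD_FAMILIES:
        problems.append(f"{current.family} does not support configuration upgrades")
    # Reducing either vCPUs or memory counts as a downgrade.
    if target.vcpus < current.vcpus or target.memory_gib < current.memory_gib:
        problems.append("downgrading vCPUs or memory is not supported")
    # An identical specification is not an upgrade either.
    if target.vcpus == current.vcpus and target.memory_gib == current.memory_gib:
        problems.append("target type is not a higher specification")
    return problems
```

For example, `check_upgrade(InstanceSpec("ecs.g7", 8, 32), InstanceSpec("ecs.g7", 16, 64))` returns an empty list, while swapping the two arguments reports a downgrade.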

Impact of upgrading node configurations

Upgrading node configurations restarts all nodes in the affected node group, which can disrupt running jobs. Before you proceed:

Schedule the upgrade during off-peak hours.

If YARN is deployed, restarting the ResourceManager component may cause running jobs to fail.

The Rolling Restart setting in the upgrade dialog box determines how the nodes restart:

| Rolling Restart | Behavior |
|---|---|
| Off (default) | Restarts all ECS instances in the node group simultaneously. |
| On | Restarts one instance at a time, waiting for the instance and its big data services to recover before proceeding to the next. |
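The console performs the restart for you; this Python sketch only illustrates what the rolling mode does differently. It assumes two hypothetical callables, `restart` and `is_healthy`, standing in for whatever restarts an instance and checks that its big data services have recovered. Neither is an EMR API, and the timeout and polling values are arbitrary.

```python
import time
from typing import Callable, Iterable


def rolling_restart(
    instances: Iterable[str],
    restart: Callable[[str], None],      # hypothetical: restarts one instance
    is_healthy: Callable[[str], bool],   # hypothetical: instance and services recovered?
    poll_seconds: float = 5.0,
    timeout_seconds: float = 1800.0,
) -> list[str]:
    """Restart instances one at a time; return them in the order they recovered."""
    recovered = []
    for instance in instances:
        restart(instance)
        deadline = time.monotonic() + timeout_seconds
        # Do not touch the next instance until this one reports healthy.
        while not is_healthy(instance):
            if time.monotonic() > deadline:
                raise TimeoutError(f"{instance} did not recover in time")
            time.sleep(poll_seconds)
        recovered.append(instance)
    return recovered
```

With Rolling Restart off, the equivalent would be calling `restart` on every instance at once, which is faster but takes the whole node group offline at the same time.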

Upgrade the instance type

Step 1: Open the Nodes tab

Log on to the EMR console. In the left-side navigation pane, click EMR on ECS.

In the top navigation bar, select the region where your cluster resides and select a resource group.

On the EMR on ECS page, find the cluster and click Nodes in the Actions column.

Step 2: Start the upgrade

On the Nodes tab, find the node group, move the pointer over the icon in the Actions column, and then select Upgrade Configuration.


Step 3: Configure and confirm the upgrade

In the Upgrade Configuration dialog box, set the parameters.

| Parameter | Description |
|---|---|
| Instance Type | Select a higher-specification instance type. The available types are shown in the EMR console. |
| Rolling Restart | Off by default: all ECS instances in the node group restart simultaneously. Turn on Rolling Restart to restart instances one at a time, waiting for each instance and its big data services to fully recover before proceeding to the next. |

Click OK. An order is generated after a short period.

Complete the payment.

Important: After payment, the system restarts all nodes in the current node group. The new instance type appears in the ECS console after payment but does not take effect until the upgrade process completes.

(Optional) Update YARN configurations

If YARN is deployed in your cluster, update the YARN resource parameters so the NodeManager can use the new instance's vCPUs and memory.

Step 1: Open YARN configuration

On the Services tab, find YARN and click Configure.

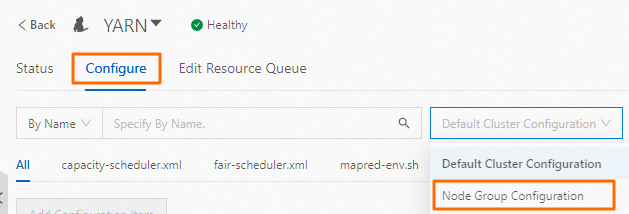

From the Default Cluster Configuration drop-down list, select Node Group Configuration.

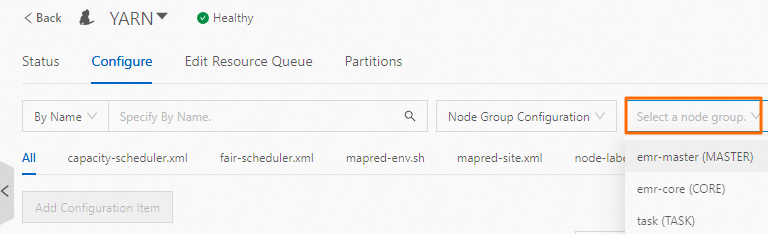

From the drop-down list, select the node group whose configurations you upgraded.

Step 2: Set vCPU and memory parameters

vCPU — yarn.nodemanager.resource.cpu-vcores

Set this parameter based on your workload type:

| Workload type | Recommended value | Example (32-vCPU instance) |
|---|---|---|
| Compute-intensive | Number of vCPUs per instance | 32 |
| Compute-intensive and I/O-intensive | 1x to 2x the number of vCPUs per instance | 32–64 |
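The vCPU recommendation in the table can be expressed as a small helper. In this Python sketch, the function name and the workload labels `"compute"` and `"compute+io"` are hypothetical names for the two table rows; the function returns the lower and upper bounds of the recommended range.

```python
def recommended_vcores(instance_vcpus: int, workload: str) -> tuple[int, int]:
    """Return (min, max) for yarn.nodemanager.resource.cpu-vcores."""
    if instance_vcpus <= 0:
        raise ValueError("instance_vcpus must be positive")
    if workload == "compute":
        # Compute-intensive: one YARN vcore per instance vCPU.
        return (instance_vcpus, instance_vcpus)
    if workload == "compute+io":
        # Compute- and I/O-intensive: 1x to 2x the instance vCPUs.
        return (instance_vcpus, 2 * instance_vcpus)
    raise ValueError(f"unknown workload type: {workload!r}")
```

For the 32-vCPU example, `recommended_vcores(32, "compute+io")` returns `(32, 64)`.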

Memory — yarn.nodemanager.resource.memory-mb

Set this parameter to 80% of the memory size of each ECS instance, in MiB.

For example, for an instance with 32 GiB of memory, set yarn.nodemanager.resource.memory-mb to 26214.
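The memory calculation converts GiB to MiB (1 GiB = 1,024 MiB) and takes 80% of the result. This Python sketch uses integer arithmetic, which rounds down, so 32 GiB gives 32,768 MiB × 0.8 = 26,214.4, truncated to 26214, matching the example above. The function name is hypothetical.

```python
def recommended_memory_mb(instance_memory_gib: int, percent: int = 80) -> int:
    """Return yarn.nodemanager.resource.memory-mb as a percentage of instance memory, in MiB."""
    memory_mib = instance_memory_gib * 1024  # 1 GiB = 1,024 MiB
    # Integer math avoids float rounding; // rounds down so YARN never gets more than 80%.
    return memory_mib * percent // 100
```

For a 64 GiB instance, the same rule gives `recommended_memory_mb(64)`, which is 52428.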

Step 3: Save and apply the configurations

Click Save. In the dialog box, enter an Execution Reason and click Save.

Choose More > Configure. In the dialog box, enter an Execution Reason and click OK. In the confirmation dialog box, click OK.

Click Operation History in the upper-right corner to monitor the task. When the task reaches the Complete state, proceed to restart YARN.

Step 4: Restart the YARN service

Restarting the ResourceManager component may cause running jobs to fail. Perform this step during off-peak hours.

Choose More > Restart. In the dialog box, enter an Execution Reason and click OK. In the confirmation dialog box, click OK.

Click Operation History to monitor the restart. The updated configurations take effect when the task reaches the Complete state.