Use Data Transmission Service (DTS) to continuously replicate row-level changes from an ApsaraDB RDS for MySQL instance to a DataHub project. This lets downstream stream computing and real-time analytics pipelines consume change events as they occur.

Prerequisites

Before you begin, make sure that you have:

An ApsaraDB RDS for MySQL instance. To create one, see Create an RDS for MySQL instance. For supported MySQL versions, see Overview of data synchronization scenarios.

DataHub service activated and a destination project created. See Quick Start and Manage projects.

Binary logging enabled and correctly configured on the source instance. See Binary logging requirements below.

Supported synchronization topologies

One-way one-to-one synchronization

One-way one-to-many synchronization

One-way many-to-one synchronization

For details, see Data synchronization topologies.

Supported SQL operations

| Operation type | SQL operations |

|---|---|

| DML | INSERT, UPDATE, DELETE |

| DDL | ADD COLUMN |

Usage notes

What DTS does not synchronize

DTS does not synchronize foreign keys, so cascade and delete operations on the source are not replicated to the destination. The following object types are also excluded: indexes, partitions, views, procedures, functions, triggers, and foreign keys.

Source database requirements

| Requirement | Details |

|---|---|

| Primary key or unique constraint | Tables must have a PRIMARY KEY or UNIQUE constraint with all fields unique. Without this, the destination may contain duplicate records. |

| Table count limit when renaming | If you select individual tables and need to rename objects, a single task supports up to 1,000 tables. For larger sets, split across multiple tasks or synchronize the entire database. |

| No DDL during synchronization | Do not run DDL statements that change database or table schemas during schema synchronization or full data synchronization. Doing so causes the task to fail. |

| Physical backup or cascade changes | Change data generated by physical backup restoration or cascade operations is not captured during an active synchronization. To include this data, remove the affected tables from the task scope, then re-add them. See Modify the objects to be synchronized. |

| Invisible columns (MySQL 8.0.23+) | Invisible columns cannot be captured, which causes data loss. To make a column visible, run: ALTER TABLE <table_name> ALTER COLUMN <column_name> SET VISIBLE; Tables without explicit primary keys automatically generate invisible primary keys — make these visible too. See Invisible Columns and Generated Invisible Primary Keys. |

Destination DataHub limits

A single string field cannot exceed 2 MB.

If a source table has a column named

record_id, use the object name mapping feature to rename it in the destination. Otherwise, an error occurs.

Encryption and EncDB

TDE (Transparent Data Encryption): Fully supported — schema synchronization, full data synchronization, and incremental data synchronization all work.

EncDB: Full data synchronization is not supported. No workaround is available; use a non-EncDB source if full data synchronization is required.

Data consistency

Writing data to the destination from sources other than DTS while a task is running causes data inconsistency. This includes running online DDL statements via DMS while other writes occur.

Task recovery

If a DTS task fails, DTS technical support restores it within 8 hours. During recovery, the task may be restarted and task-level parameters may be adjusted. Source and destination database parameters are not modified.

Binary logging requirements

Configure binary logging on the source database before starting the task.

ApsaraDB RDS for MySQL instances

Binary logging is enabled by default. Verify and set the following parameters:

| Parameter | Required value | Consequence if not set |

|---|---|---|

binlog_row_image | full | DTS fails the precheck and cannot start the task. |

| Binary log retention period | At least 3 days (7 days recommended) | Logs purged too early cause task failure or data loss during task startup and recovery. |

To set the retention period, see Delete binary log files.

To set

binlog_row_image, see Modify instance parameters.

Read-only RDS for MySQL 5.6 instances do not record transaction logs and cannot be used as a source.

DTS executes the CREATE DATABASE IF NOT EXISTS 'test' statement in the source database as scheduled to move forward the binary log file position.Self-managed MySQL databases

Enable binary logging and add the following to my.cnf:

binlog_format = ROW

binlog_row_image = FULLFor dual-primary clusters, also set:

log_slave_updates = ONWithout log_slave_updates, DTS may miss binary logs that were applied on the secondary primary node before the logs reached the first primary.

Retain binary logs for at least 7 days.

For a complete setup guide, see Create an account for a self-managed MySQL database and configure binary logging.

If you perform a primary/secondary switchover on a self-managed source while a task is running, the task fails.

Synchronization latency on self-managed sources

DTS calculates synchronization latency based on the timestamp of the latest synchronized record versus the current source timestamp. If no DML operations occur on the source for an extended period, the displayed latency may be inaccurate. To refresh the latency metric, run any DML operation on the source.

If you synchronize an entire database, create a heartbeat table that receives a write every second. DTS also executes CREATE DATABASE IF NOT EXISTS 'test' periodically to advance the binary log position.

Create a synchronization task

Step 1: Go to the Data Synchronization page

Use either console to access the Data Synchronization page.

DTS console

Log on to the DTS console.DTS console

In the left-side navigation pane, click Data Synchronization.

In the upper-left corner, select the region where the synchronization instance will reside.

DMS console

NoteSteps may vary based on your DMS console mode and layout. See Simple mode and Customize the layout and style of the DMS console.

Log on to the DMS console.DMS console

In the top navigation bar, move your pointer over Data + AI and choose DTS (DTS) > Data Synchronization.

From the drop-down list next to Data Synchronization Tasks, select the region.

Step 2: Configure source and destination databases

Click Create Task, then configure the parameters below.

After configuring databases, read the Limits displayed on the page. Skipping this step may cause task failures or data inconsistency.

Set the Task Name field. DTS generates a name automatically — replace it with a descriptive name that makes the task easy to identify.

Source Database

| Parameter | Value |

|---|---|

| Select DMS Database Instance | Select an existing instance, or leave blank and fill in the fields below. |

| Database Type | MySQL |

| Connection Type | Cloud Instance |

| Instance Region | Region of the source RDS for MySQL instance |

| Cross-account | No (for same-account synchronization) |

| RDS instance ID | ID of the source RDS for MySQL instance |

| Database Account | An account with read permissions on the objects to synchronize |

| Database Password | Password for the database account |

| Encryption | Non-encrypted or SSL-encrypted. To use SSL encryption, enable SSL on the RDS instance before configuring the task. |

Destination Database

| Parameter | Value |

|---|---|

| Select DMS Database Instance | Select an existing instance, or leave blank and fill in the fields below. |

| Database Type | DataHub |

| Connection Type | Cloud Instance |

| Instance Region | Region of the destination DataHub instance |

| Project | The DataHub project that receives the synchronized data |

Step 3: Test connectivity

Click Test Connectivity and Proceed at the bottom of the page.

DTS server CIDR blocks must be added to the security settings of both source and destination databases. See Add the CIDR blocks of DTS servers. For self-managed databases not using Alibaba Cloud Instance access, click Test Connectivity in the CIDR Blocks of DTS Servers dialog.

Step 4: Configure objects to synchronize

In the Configure Objects step, set the following options.

Synchronization Type

Select Schema Synchronization, Full Data Synchronization, and Incremental Data Synchronization. Full data synchronization copies historical data to the destination first, establishing the baseline for incremental synchronization.

Naming rules for additional columns

DTS adds metadata columns to each DataHub topic. If these column names conflict with existing columns in the destination, the task fails. Select New Rule or Old Rule based on your destination schema.

Check for name conflicts before selecting a rule. Conflicts cause data loss or task failure. For column names and definitions, see Names and definitions of additional columns.

Processing mode of conflicting tables

| Mode | Behavior |

|---|---|

| Precheck and report errors | Fails the precheck if source and destination have tables with identical names. Use object name mapping to rename destination tables and resolve conflicts. |

| Ignore errors and proceed | Skips the precheck for name conflicts. During full data synchronization, records with matching primary or unique keys in the destination are retained. During incremental synchronization, they are overwritten. Schema mismatches may cause partial data loss. |

Case Policy for Destination Object Names

By default, DTS default policy is used. Adjust this to match your source or destination casing requirements. See Specify the capitalization of object names in the destination instance.

Select objects

In the Source Objects section, select the tables to synchronize and click the arrow icon to move them to Selected Objects.

You can select tables as the objects to be synchronized.

To rename objects, right-click a table in Selected Objects. For bulk renaming, click Batch Edit. See Map object names.

To filter rows using SQL conditions, right-click a table in Selected Objects and configure WHERE conditions. See Set filter conditions.

To set a Shardkey for partitioning, right-click a table in Selected Objects, clear Synchronize All Tables, then set the Shardkey.

Step 5: Configure advanced settings

Click Next: Advanced Settings and configure the following options.

| Setting | Description |

|---|---|

| Dedicated cluster for task scheduling | By default, tasks run on the shared cluster. For higher stability, purchase a dedicated cluster. |

| Retry time for failed connections | How long DTS retries if the source or destination is unreachable after the task starts. Range: 10–1,440 minutes. Default: 720 minutes. Set to at least 30 minutes. If multiple tasks share the same source or destination, the shortest retry time applies. |

| Retry time for other issues | How long DTS retries if DDL or DML operations fail. Range: 1–1,440 minutes. Default: 10 minutes. Must be less than the retry time for failed connections. |

| Enable throttling for full data synchronization | Limits QPS to the source, RPS, and data migration speed to reduce load during full data synchronization. Available only when Full Data Synchronization is selected. |

| Enable throttling for incremental data synchronization | Limits RPS and data synchronization speed during incremental synchronization. |

| Environment tag | Optional label to identify the instance. |

| Whether to delete SQL operations on heartbeat tables of forward and reverse tasks | Yesalert notification settingsalert notification settings: DTS does not write heartbeat SQL to the source (a task latency indicator may appear). No: DTS writes heartbeat SQL to the source (may affect physical backup and cloning). |

| Configure ETL | Enable extract, transform, and load (ETL) to apply data transformations during synchronization. See What is ETL? and Configure ETL. |

| Monitoring and alerting | Configure alerts for task failures or latency exceeding a threshold. See Configure monitoring and alerting. |

Step 6: Run the precheck

Click Next: Save Task Settings and Precheck.

To view the OpenAPI parameters for this task configuration, hover over Next: Save Task Settings and Precheck and click Preview OpenAPI parameters.

DTS runs a precheck before starting the task. Address the results as follows:

Failed items: Click View Details next to the failed item, fix the underlying issue, then click Precheck Again.

Alert items that cannot be ignored: Fix the issue and rerun the precheck.

Alert items that can be ignored: Click Confirm Alert Details > Ignore > OK, then click Precheck Again. Ignoring alerts may lead to data inconsistency.

Step 7: Purchase and start the instance

Wait until the Success Rate reaches 100%, then click Next: Purchase Instance.

On the purchase page, configure the billing and instance settings.

| Parameter | Description |

|---|---|

| Billing method | Subscription: pay upfront for a fixed term; more cost-effective for long-term use. Pay-as-you-go: billed hourly; release the instance when no longer needed to stop charges. |

| Resource group | The resource group for this instance. Default: default resource group. See What is Resource Management? |

| Instance class | Determines synchronization speed. See Instance classes of data synchronization instances. |

| Subscription duration | Available for subscription billing: 1–9 months, or 1, 2, 3, or 5 years. |

Read and accept Data Transmission Service (Pay-as-you-go) Service Terms.

Click Buy and Start, then click OK in the confirmation dialog.

The task appears in the task list. Monitor its progress there.

DataHub topic schema

When DTS writes change events to a DataHub topic, it adds metadata columns alongside the business data columns. The naming of these columns depends on which naming rule you selected in Step 4.

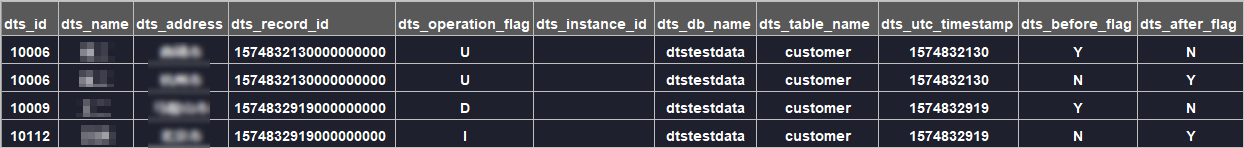

The following table lists all metadata columns under both naming conventions. In the example below, the business fields are id, name, and address, with the old naming rule in effect (DTS adds a dts_ prefix to business fields).

| Old column name | New column name | Data type | Description |

|---|---|---|---|

dts_record_id | new_dts_sync_dts_record_id | String | Unique ID of the incremental log record. Auto-increments by default. In disaster recovery scenarios, the ID may not be monotonically increasing or unique. For UPDATE operations, the two split records share the same dts_record_id. |

dts_operation_flag | new_dts_sync_dts_operation_flag | String | Operation type: I (INSERT), D (DELETE), U (UPDATE), F (full data synchronization). |

dts_instance_id | new_dts_sync_dts_instance_id | String | Server ID of the source database. |

dts_db_name | new_dts_sync_dts_db_name | String | Source database name. |

dts_table_name | new_dts_sync_dts_table_name | String | Source table name. |

dts_utc_timestamp | new_dts_sync_dts_utc_timestamp | String | Binary log timestamp of the operation (UTC). |

dts_before_flag | new_dts_sync_dts_before_flag | String | Y if the record contains pre-update column values; N otherwise. |

dts_after_flag | new_dts_sync_dts_after_flag | String | Y if the record contains post-update column values; N otherwise. |

How DTS represents change events

DTS uses dts_before_flag and dts_after_flag to indicate whether each record holds the state before or after a change.

INSERT

An INSERT produces one record. All column values are the newly inserted values (post-insert state).

dts_operation_flag | dts_before_flag | dts_after_flag |

|---|---|---|

I | N | Y |

UPDATE

DTS represents an UPDATE as two separate records with the same dts_record_id, dts_operation_flag, and dts_utc_timestamp. The first record holds the pre-update values; the second holds the post-update values.

| Record | dts_operation_flag | dts_before_flag | dts_after_flag | Contents |

|---|---|---|---|---|

| 1 (before) | U | Y | N | Column values before the update |

| 2 (after) | U | N | Y | Column values after the update |

To reconstruct the full change, join the two records on dts_record_id. For example, if a row changes name from "Alice" to "Alice Smith":

Record 1: dts_record_id=1001, dts_operation_flag=U, dts_before_flag=Y, dts_after_flag=N, id=42, name="Alice", address="..."

Record 2: dts_record_id=1001, dts_operation_flag=U, dts_before_flag=N, dts_after_flag=Y, id=42, name="Alice Smith", address="..."DELETE

A DELETE produces one record. All column values are from the deleted row (pre-delete state).

dts_operation_flag | dts_before_flag | dts_after_flag |

|---|---|---|

D | Y | N |