Data Transmission Service (DTS) continuously replicates data from a self-managed PostgreSQL database to an ApsaraDB RDS for PostgreSQL instance, keeping the destination in sync with minimal latency. This topic covers prerequisites, source database setup, and task configuration.

Overview

To set up the synchronization, complete the following steps:

Verify prerequisites and review limitations.

Configure the source PostgreSQL database.

Create and configure the DTS synchronization task.

Purchase the DTS instance and monitor progress.

Prerequisites

Before you begin, make sure that you have:

A self-managed PostgreSQL database (source) and an ApsaraDB RDS for PostgreSQL instance (destination). To create the destination instance, see Create an RDS for PostgreSQL instance.

A destination database version that is the same as or later than the source database version. For supported version combinations, see Overview of data synchronization solutions.

Enough available storage in the destination instance to accommodate the data in the source database.

Billing

| Synchronization type | Cost |

|---|---|

| Schema synchronization and full data synchronization | Free |

| Incremental data synchronization | Charged. See Billing overview. |

Supported synchronization topologies

One-way one-to-one synchronization

One-way one-to-many synchronization

One-way cascade synchronization

One-way many-to-one synchronization

For details, see Synchronization topologies.

Supported synchronization objects

| Object type | Details |

|---|---|

SCHEMA, TABLE | Includes PRIMARY KEY, UNIQUE KEY, FOREIGN KEY, built-in data types (DATATYPE), and DEFAULT CONSTRAINT |

VIEW, PROCEDURE (PostgreSQL 11+), FUNCTION, RULE, SEQUENCE, EXTENSION, TRIGGER, AGGREGATE, INDEX, OPERATOR, DOMAIN | — |

SQL operations that can be synchronized

DML: INSERT, UPDATE, DELETE

DDL: Supported only for tasks created after May 12, 2023 (UTC+8).

For tasks created before May 12, 2023 (UTC+8), you must create triggers and functions in the source database to capture DDL changes before configuring the task. See Use triggers and functions to implement incremental DDL migration for PostgreSQL databases.

Supported DDL statements (requires a privileged account and self-managed PostgreSQL minor version 20210228 or later):

CREATE TABLE,DROP TABLEALTER TABLE(includingRENAME TABLE,ADD COLUMN,ADD COLUMN DEFAULT,ALTER COLUMN TYPE,DROP COLUMN,ADD CONSTRAINT,ADD CONSTRAINT CHECK,ALTER COLUMN DROP DEFAULT)TRUNCATE TABLE(source PostgreSQL 11 or later)CREATE INDEX ON TABLE

The following DDL statements and scenarios are not supported:

CASCADEorRESTRICTclauses in DDL statementsDDL from sessions that run

SET session_replication_role = replicaDDL executed by calling a

FUNCTIONMixed commits containing both DML and DDL statements — the DDL is not synchronized

DDL for objects that are not selected for synchronization

DDL executed through the Server Programming Interface (SPI)

For data synchronization tasks created before May 12, 2023 (UTC+8), you must create triggers and functions in the source database to capture DDL information before you configure the task. For more information, see Use triggers and functions to implement incremental DDL migration for PostgreSQL databases.

BITtype data during incremental data synchronization

Required database account permissions

| Database | Required permissions | Reference |

|---|---|---|

| Self-managed PostgreSQL | superuser | CREATE USER and GRANT |

| ApsaraDB RDS for PostgreSQL | Owner permission on the schema | Create an account |

Limitations

Source database limitations

Primary key or UNIQUE constraint required. Tables to be synchronized must have a primary key or a UNIQUE constraint with unique field values. Without this, duplicate data may appear in the destination database. If the target table is not created by DTS (that is, Schema synchronization is not selected), the table must have the same primary key or non-null UNIQUE constraint as the source table.

Database name cannot contain hyphens. Names like

dts-testdataare invalid.Table-level sync with 5,000+ tables. If you need to edit objects during synchronization (such as mapping column names) and a single task includes more than 5,000 tables, split the task or synchronize the entire database instead.

Unsupported objects. DTS does not synchronize temporary tables, internal triggers, C language functions, or internal functions for

PROCEDUREandFUNCTION. DTS does synchronize custom data types (COMPOSITE,ENUM,RANGE) and the following constraints: primary key, foreign key, unique, and CHECK.Write-Ahead Logging (WAL) requirements: If DTS cannot read WAL logs because the retention period is too short, the task fails and is not covered by the DTS Service-Level Agreement (SLA).

Parameter Required value Why wal_levellogicalEnables logical replication, which DTS requires to read change events WAL retention (incremental sync only) More than 24 hours Ensures DTS can access logs if connectivity is briefly interrupted WAL retention (full + incremental sync) At least 7 days Covers the full sync phase; reduce to more than 24 hours after full sync completes max_wal_sendersGreater than (used replication slots + DTS instances using this source) Each DTS instance occupies one sender slot; slots remain open even when a task is not running max_replication_slotsGreater than (used replication slots + DTS instances using this source) Each DTS instance occupies one replication slot; slots remain open even when a task is not running Primary/secondary failover. If a primary/secondary failover occurs in the source database, the synchronization task fails.

Long-running transactions. If the source database has long-running transactions, WAL logs may accumulate before those transactions commit, which can exhaust disk space.

Data size limit. If a single incremental change exceeds 256 MB, the synchronization instance fails and cannot be recovered. Reconfigure the synchronization instance to resolve this.

Google Cloud SQL for PostgreSQL. When the source is Google Cloud Platform Cloud SQL for PostgreSQL, the database account must have

cloudsqlsuperuserpermissions. Select only objects that this account can manage, or grant the owner permission for objects to be synced using:A

cloudsqlsuperuseraccount cannot manage data owned by anothercloudsqlsuperuseraccount.GRANT <owner of the object to be synced> TO <source database account used by the task>DDL during initial synchronization. Do not run DDL operations that change schemas or tables while initial schema synchronization or full data synchronization is in progress. DTS creates metadata locks during full sync, which may block DDL operations on the source.

Major version upgrade. If you upgrade the source database to a major version while the synchronization instance is running, the instance fails and cannot be recovered. Reconfigure the instance.

Other limitations

One database per task. A single synchronization task can sync only one database. Configure separate tasks for multiple databases.

Unsupported table types. TimescaleDB extension tables and tables with cross-schema inheritance are not supported.

SERIAL type fields. If a table contains SERIAL type fields and Schema synchronization is selected, also select Sequence or synchronize the entire schema. Otherwise, the task may fail.

REPLICA IDENTITY FULL requirement. Run

ALTER TABLE schema.table REPLICA IDENTITY FULL;on tables before writing data to them in these three scenarios: Replaceschemaandtablewith the actual schema and table names. Run this command during off-peak hours. If you skip the related precheck item, DTS runs this command automatically during initialization.When the instance runs for the first time.

When synchronizing at the schema level and a new table is created, or an existing table is rebuilt with

RENAME.When using the modify synchronization objects feature.

Sequence validation. DTS validates data content but not metadata such as sequences. Validate sequence metadata yourself. After switching your business to the destination, new sequences do not increment from the maximum source sequence values — update sequence values in the destination before the switch. See Update sequence values in the destination database.

DTS temporary tables. DTS creates the following temporary tables in the source database to support incremental sync. Do not delete them during synchronization — the task will fail. DTS removes them automatically after the instance is released:

public.dts_pg_class,public.dts_pg_attribute,public.dts_pg_type,public.dts_pg_enum,public.dts_postgres_heartbeat,public.dts_ddl_command,public.dts_args_session,public.aliyun_dts_instanceHeartbeat table. DTS adds a heartbeat table named

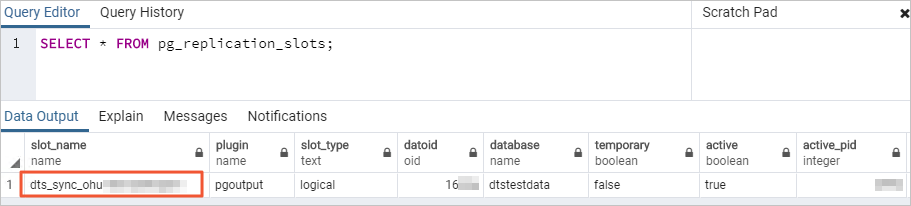

dts_postgres_heartbeatto the source database to ensure accurate synchronization latency display.Replication slot. DTS creates a replication slot prefixed with

dts_sync_in the source database. This slot provides access to incremental logs from the last 15 minutes. DTS attempts to clean up the slot when the task fails or the instance is released. If cleanup fails (because the source account password changed or the DTS IP whitelist was removed from the source), manually clean up the slot to prevent disk space exhaustion. If a failover occurred in the source database, log in to the secondary database to perform the cleanup.

Performance impact. Initial full data synchronization runs concurrent

INSERToperations, which increases load on both the source and destination. The destination tablespace will be larger than the source after initialization due to fragmentation. Run synchronization during off-peak hours.Writes from other sources. During synchronization, do not allow data from sources other than DTS to be written to the destination database. Data from other sources causes inconsistency. This applies when using Data Management (DMS) for online DDL — if other sources write to the destination simultaneously, data may be lost. For lock-free DDL using DMS, see Change schemas without locking tables.

Foreign keys, triggers, and event triggers. If the tables to be synchronized contain foreign keys, triggers, or event triggers, DTS sets

session_replication_roletoreplicaat the session level if the destination account is privileged or has superuser permissions. If the account does not have these permissions, manually setsession_replication_roletoreplicain the destination. During this period, cascade updates or deletes in the source may cause data inconsistency. After the DTS task is released, resetsession_replication_roletoorigin.Instance failure recovery. If a DTS instance fails, the DTS helpdesk attempts to recover it within 8 hours. During recovery, the instance may be restarted or have its parameters adjusted. Only DTS instance parameters are modified — database parameters are not changed. See Modify instance parameters for parameters that may be modified.

Prepare the source database

The following steps use Linux as an example.

All versions

Log in to the server hosting the self-managed PostgreSQL database.

Check the number of replication slots currently in use:

SELECT count(1) FROM pg_replication_slots;Edit

postgresql.confto configure WAL parameters. Setwal_leveltological. Setmax_wal_sendersandmax_replication_slotsto values greater than the sum of currently used replication slots and the number of DTS instances that will use this source:wal_level = logical # enables logical replication max_wal_senders = 10 # adjust based on: used slots + planned DTS instances max_replication_slots = 10 # adjust based on: used slots + planned DTS instancesRestart the database for these changes to take effect.

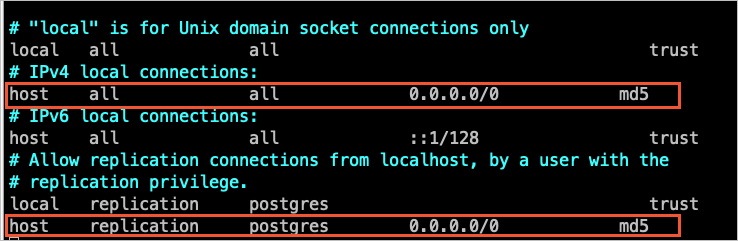

Add the DTS server CIDR blocks for the destination region to

pg_hba.conf. For the full list of CIDR blocks, see Add the CIDR blocks of DTS servers to the whitelist of a self-managed database. After editingpg_hba.conf, runSELECT pg_reload_conf();or restart the database to apply the changes. If you have already set the trusted address to0.0.0.0/0, skip this step.

In the destination ApsaraDB RDS for PostgreSQL instance, create a database and schema that match the source. Schema names must be identical. See Create a database and Manage schemas.

PostgreSQL 9.4.8 to 10.0 only

If your source PostgreSQL version is between 9.4.8 and 10.0, install the ali_decoding extension before configuring the task.

Download and compile the PostgreSQL source code:

Log in to the source database server.

Download the source code for your PostgreSQL version from the PostgreSQL website.

Run the following commands to compile and install:

sudo ./configure sudo make sudo make installImportantThe operating system version must be compatible with the GCC version.

If

sudo ./configurefails with areadline library not founderror, runsudo ./configure --without-readlineinstead.If you use a different installation method, compile

ali_decodingin an environment that matches your production OS and GCC versions.

Download and install the

ali_decodingextension:Download ali_decoding.

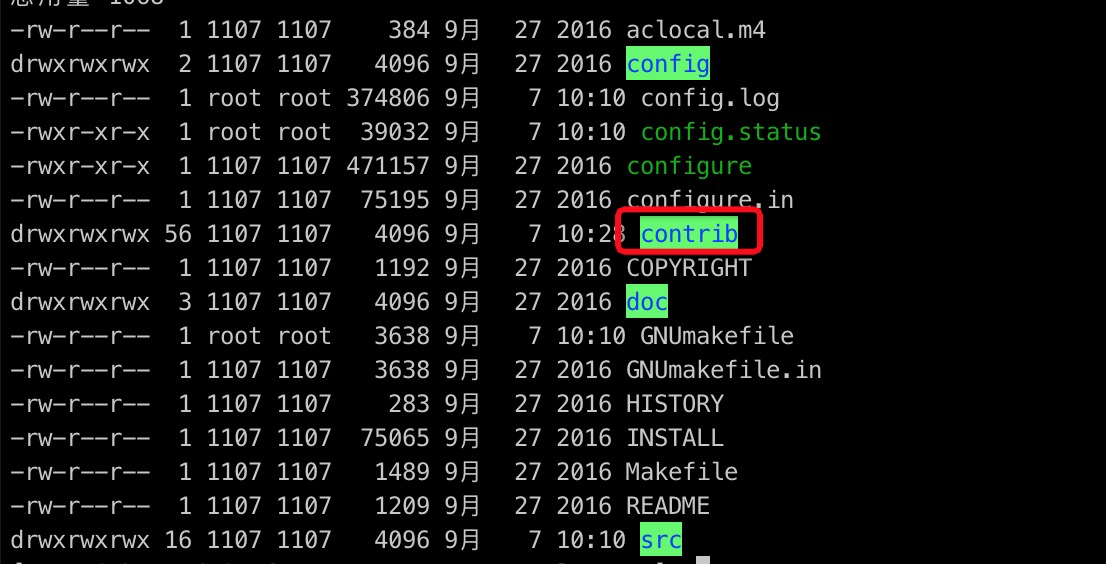

Copy the entire

ali_decodingdirectory to thecontribdirectory of the compiled PostgreSQL installation.

In the

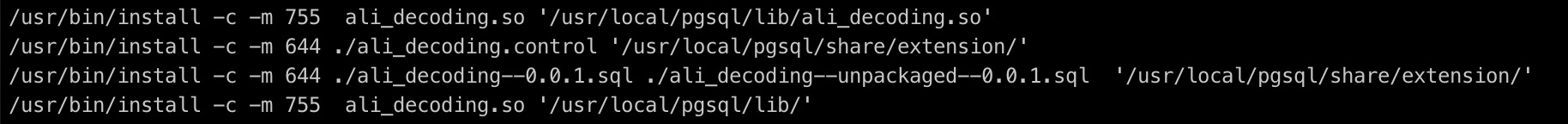

ali_decodingdirectory, replace theMakefilecontent with:# contrib/ali_decoding/Makefile MODULE_big = ali_decoding MODULES = ali_decoding OBJS = ali_decoding.o DATA = ali_decoding--0.0.1.sql ali_decoding--unpackaged--0.0.1.sql EXTENSION = ali_decoding NAME = ali_decoding #subdir = contrib/ali_decoding #top_builddir = ../.. #include $(top_builddir)/src/Makefile.global #include $(top_srcdir)/contrib/contrib-global.mk #PG_CONFIG = /usr/pgsql-9.6/bin/pg_config #pgsql_lib_dir := $(shell $(PG_CONFIG) --libdir) #PGXS := $(shell $(PG_CONFIG) --pgxs) #include $(PGXS) # Use the following for source code installation ifdef USE_PGXS PG_CONFIG = pg_config PGXS := $(shell $(PG_CONFIG) --pgxs) include $(PGXS) else subdir = contrib/ali_decoding top_builddir = ../.. include $(top_builddir)/src/Makefile.global include $(top_srcdir)/contrib/contrib-global.mk endifIn the

ali_decodingdirectory, compile and install: ``bash sudo make sudo make install``Copy the output files to the locations shown below:

Create the database and schema in the destination instance. Schema names must match the source. See Create a database and Manage schemas.

Configure the synchronization task

Step 1: Open the Data Synchronization page

Use one of the following methods:

DTS console

Log in to the DTS console.DTS console

In the left navigation pane, click Data Synchronization.

In the upper-left corner, select the region where the synchronization instance resides.

DMS console

The DMS console layout may vary. See Simple mode and Customize the layout and style of the DMS console.

Log in to the DMS console.DMS console

In the top navigation bar, move the pointer over Data + AI and choose DTS (DTS) > Data Synchronization.

From the drop-down list next to Data Synchronization Tasks, select the region where the instance resides.

Step 2: Configure source and destination databases

Click Create Task, then configure the parameters described below.

Read the Limits section displayed on the page after filling in source and destination details. Skipping this may cause the task to fail or result in data inconsistency.

| Category | Parameter | Description |

|---|---|---|

| General | Task Name | A descriptive name for the task. DTS generates a default name — uniqueness is not required. |

| Source Database | Database Type | Select PostgreSQL. |

| Access Method | Select Cloud Enterprise Network (CEN). | |

| Instance Region | Select the region where the self-managed PostgreSQL database resides. | |

| CEN Instance ID | Select the Cloud Enterprise Network (CEN) instance connected to the source database. | |

| Connected VPC | Select the VPC connected to the source database. | |

| Domain Name or IP | Enter the IP address of the source database server. | |

| Port Number | Enter the service port. Default: 3433. | |

| Database Name | Enter the name of the source database containing the objects to sync. | |

| Database Account | Enter the source database account. See Required database account permissions. | |

| Database Password | Enter the password for the source database account. | |

| Encryption | Select Non-encrypted or SSL-encrypted. For SSL, upload the CA Certificate and, if using client certificates, the Client Certificate, Private Key of Client Certificate, and Private Key Password of Client Certificate. For RDS SSL configuration, see SSL encryption. | |

| Destination Database | Database Type | Select PostgreSQL. |

| Access Method | Select Alibaba Cloud Instance. | |

| Instance Region | Select the region where the destination RDS for PostgreSQL instance resides. | |

| Instance ID | Select the destination ApsaraDB RDS for PostgreSQL instance. | |

| Database Name | Enter the name of the destination database to receive the synced objects. | |

| Database Account | Enter the destination database account. See Required database account permissions. | |

| Database Password | Enter the password for the destination database account. | |

| Encryption | Select Non-encrypted or SSL-encrypted. Configuration is the same as the source Encryption field above. |

Click Test Connectivity and Proceed at the bottom of the page.

DTS server CIDR blocks must be added to the security settings of both source and destination databases. See Add DTS server IP addresses to a whitelist.

If the source or destination is a self-managed database (not Alibaba Cloud Instance), click Test Connectivity in the CIDR Blocks of DTS Servers dialog.

Step 3: Select objects to synchronize

In the Configure Objects step, configure the following settings:

| Setting | Description |

|---|---|

| Synchronization Types | By default, Incremental Data Synchronization is selected. Also select Schema Synchronization and Full Data Synchronization. DTS synchronizes historical data from the source first, then switches to incremental sync. Selecting Schema Synchronization also syncs foreign keys. |

| Processing Mode of Conflicting Tables | Precheck and Report Errors (default): The precheck fails if source and destination have tables with identical names, blocking the task from starting. If tables with the same names exist in the destination and cannot be deleted, use object name mapping to rename them. See Map object names. Ignore Errors and Proceed: Skips the name conflict check. During full sync, DTS retains existing destination records with the same primary or unique key. During incremental sync, DTS overwrites them. Use with caution — schema differences may cause initialization failures. |

| Synchronization Topology | Select One-way Synchronization. |

| Capitalization of Object Names in Destination Instance | Specifies the casing for database, table, and column names in the destination. Default: DTS default policy. See Specify the capitalization of object names in the destination instance. |

| Source Objects | Select schemas or tables, then click |

| Selected Objects | Right-click an object to rename it or filter its SQL operations. Click Batch Edit to rename multiple objects at once. To filter data by row, right-click a table and specify WHERE conditions. See Set filter conditions. If you rename an object, other objects that depend on it may fail to sync. |

Step 4: Configure advanced settings

Click Next: Advanced Settings and configure the following:

| Setting | Description |

|---|---|

| Dedicated Cluster for Task Scheduling | By default, DTS uses the shared cluster. For improved stability, purchase a dedicated cluster. See What is a DTS dedicated cluster. |

| Retry Time for Failed Connections | How long DTS retries if the source or destination becomes unreachable after the task starts. Range: 10–1440 minutes. Default: 720 minutes. Set to more than 30 minutes. If multiple tasks share the same source or destination, the shortest retry time across those tasks takes effect. Note that you are billed for the DTS instance during retries. |

| Retry Time for Other Issues | How long DTS retries if DDL or DML operations fail. Range: 1–1440 minutes. Default: 10 minutes. Set to more than 10 minutes. This value must be less than Retry Time for Failed Connections. |

| Enable Throttling for Full Data Synchronization | Limits read/write throughput during full sync to reduce source and destination database load. Configure Queries per second (QPS) to the source database, RPS of Full Data Migration, and Data migration speed for full migration (MB/s). Available only when Full Data Synchronization is selected. |

| Enable Throttling for Incremental Data Synchronization | Limits throughput during incremental sync. Configure RPS of Incremental Data Synchronization and Data synchronization speed for incremental synchronization (MB/s). |

| Environment Tag | An optional tag to identify the instance environment. |

| Configure ETL | Enable the extract, transform, and load (ETL) feature to apply data transformations during sync. Select Yesalert notification settings and enter processing statements, or select No to skip. See What is ETL? and Configure ETL in a data migration or data synchronization task. |

| Monitoring and Alerting | Receive notifications if the task fails or synchronization latency exceeds a threshold. Select Yes to configure alert thresholds and contacts. See Configure monitoring and alerting when you create a DTS task. |

Step 5: Configure data verification

Click Next Step: Data Verification to set up data verification. See Configure a data verification task.

Step 6: Save settings and run the precheck

Click Next: Save Task Settings and Precheck.

To preview the API parameters for this task configuration, hover over the button and click Preview OpenAPI parameters before clicking through.

The task cannot start until it passes the precheck.

If any check item fails, click View Details to see the cause, fix the issue, and click Precheck Again.

If a check item triggers an alert:

If the alert cannot be ignored, fix the issue and rerun the precheck.

If the alert can be ignored, click Confirm Alert Details, then click Ignore in the dialog, click OK, and then click Precheck Again. Ignoring alerts may result in data inconsistency.

Step 7: Purchase the instance

Wait for the Success Rate to reach 100%, then click Next: Purchase Instance.

On the purchase page, configure the following:

Section Parameter Description New Instance Class Billing Method Subscription: Pay upfront for a fixed term. More cost-effective for long-term use. Pay-as-you-go: Billed by the hour. Release the instance when no longer needed to stop charges. Resource Group Settings The resource group for the instance. Default: default resource group. See What is Resource Management? Instance Class The synchronization speed tier. See Instance classes of data synchronization instances. Subscription Duration Available for the Subscription billing method. Options: 1–9 months, or 1, 2, 3, or 5 years. Read and select Data Transmission Service (Pay-as-you-go) Service Terms.

Click Buy and Start, then click OK in the dialog.

The task appears in the task list. You can monitor its progress there.