This topic describes how to create a Function Compute sink connector to synchronize data from a source topic in your ApsaraMQ for Kafka instance to a function in Function Compute.

Prerequisites

The following requirements are met:

ApsaraMQ for Kafka

The connector feature is enabled for the ApsaraMQ for Kafka instance. For more information, see Enable the connector feature.

A topic is created in the ApsaraMQ for Kafka instance. For more information, see Step 1: Create a topic.

In this example, the topic is named fc-test-input.

Function Compute

A function is created in Function Compute. For more information, see Quickly create a function.

Important: The function that you create must be an event function.

In this example, an event function named hello_world is created for the guide-hello_world service that runs in the Python runtime environment. Function code:

# -*- coding: utf-8 -*-
import logging

# To enable the initializer feature
# please implement the initializer function as below:
# def initializer(context):
#     logger = logging.getLogger()
#     logger.info('initializing')

def handler(event, context):
    logger = logging.getLogger()
    logger.info('hello world:' + bytes.decode(event))
    return 'hello world:' + bytes.decode(event)
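Before wiring up the connector, it can help to call the handler locally with a bytes payload, because the example decodes the event with bytes.decode. The sketch below repeats the handler from this example and invokes it with a hypothetical payload. Passing None as the context is an assumption that only works because this handler never reads context; Function Compute supplies the real object at run time.

```python
import logging


def handler(event, context):
    # Same logic as the example function: the event arrives as bytes.
    logger = logging.getLogger()
    text = bytes.decode(event)
    logger.info('hello world:' + text)
    return 'hello world:' + text


if __name__ == '__main__':
    # Hypothetical local invocation with a sample payload.
    print(handler(b'{"key": "test"}', None))
```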

Optional: EventBridge

EventBridge is activated. For more information about how to activate EventBridge, see Activate EventBridge and grant permissions to a RAM user.

Note: You need to activate EventBridge only if the instance to which the Function Compute sink connector belongs is in the China (Hangzhou) or China (Chengdu) region.

Usage notes

You can synchronize data from a source topic in an ApsaraMQ for Kafka instance to a function in Function Compute only within the same region. For more information about the limits on connectors, see Limits.

If the instance to which the Function Compute sink connector belongs is in the China (Hangzhou) or China (Chengdu) region, the connector is deployed to EventBridge.

You can use EventBridge free of charge. For more information, see Billing.

When you create a connector, EventBridge automatically creates the following service-linked roles: AliyunServiceRoleForEventBridgeSourceKafka and AliyunServiceRoleForEventBridgeConnectVPC.

If these service-linked roles are unavailable, EventBridge automatically creates them so that EventBridge can use these roles to access ApsaraMQ for Kafka and Virtual Private Cloud (VPC).

If these service-linked roles are available, EventBridge does not create them again.

For more information about the service-linked roles, see Service-linked roles.

You cannot view the operation logs of tasks that are deployed to EventBridge. After a connector is run, you can check the progress of the synchronization task by viewing the consumption details of the consumer group that subscribes to the source topic. For more information, see View consumer details.

Procedure

To use a Function Compute sink connector to synchronize data from a source topic in an ApsaraMQ for Kafka instance to a function in Function Compute, perform the following steps:

Optional: Allow Function Compute sink connectors to access Function Compute across regions.

Important: If you do not need to use Function Compute sink connectors to access Function Compute across regions, skip this step.

Optional: Allow Function Compute sink connectors to access Function Compute across Alibaba Cloud accounts.

Important: If you do not need to use Function Compute sink connectors to access Function Compute across Alibaba Cloud accounts, skip this step.

Optional: Create the topics and consumer group that are required by a Function Compute sink connector.

Important: If you do not need to customize the names of the topics and consumer group, skip this step.

Specific topics that are required by a Function Compute sink connector must use the local storage engine. If the major version of your ApsaraMQ for Kafka instance is V0.10.2, you cannot manually create topics that use the local storage engine. In this case, these topics must be automatically created.

Verify the result.

Enable Internet access for Function Compute sink connectors

If you need to use Function Compute sink connectors to access other Alibaba Cloud services across regions, enable Internet access for Function Compute sink connectors. For more information, see Enable Internet access for a connector.

Create a custom policy

Create a custom policy to grant access to Function Compute within the Alibaba Cloud account to which you want to synchronize data.

Log on to the RAM console.

In the left-side navigation pane, go to the Policies page.

On the Policies page, click Create Policy.

On the Create Policy page, create a custom policy.

Click the JSON tab, enter the script of the custom policy in the code editor, and then click Next Step.

Sample script:

{
    "Version": "1",
    "Statement": [
        {
            "Action": [
                "fc:InvokeFunction",
                "fc:GetFunction"
            ],
            "Resource": "*",
            "Effect": "Allow"
        }
    ]
}

In the Basic Information section, enter KafkaConnectorFcAccess in the Name field.

Click OK.

Create a RAM role

Create a RAM role within the Alibaba Cloud account to which you want to synchronize data. You cannot select ApsaraMQ for Kafka as the trusted service when you create a RAM role. Therefore, select a supported service as the trusted service first. Then, modify the trust policy of the created RAM role.

In the left-side navigation pane, go to the Roles page.

On the Roles page, click Create Role.

In the Create Role panel, create a RAM role.

Set the Select Trusted Entity parameter to Alibaba Cloud Service and click Next.

Set the Role Type parameter to Normal Service Role. In the RAM Role Name field, enter AliyunKafkaConnectorRole. From the Select Trusted Service drop-down list, select Function Compute. Then, click OK.

On the Roles page, find and click AliyunKafkaConnectorRole.

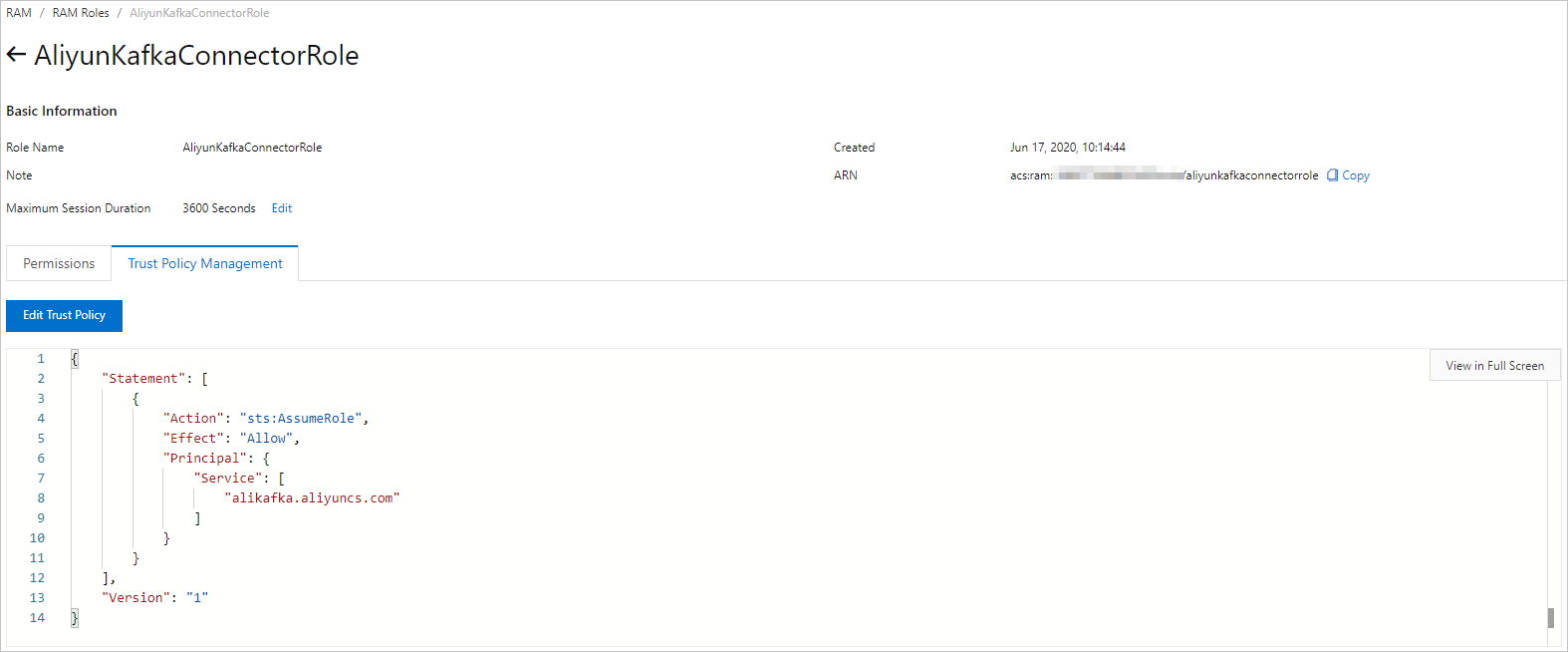

On the AliyunKafkaConnectorRole page, click the Trust Policy Management tab. On this tab, click Edit Trust Policy.

In the Edit Trust Policy panel, replace fc in the script with alikafka and click OK.
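The edit in the last step amounts to changing the trusted service in the trust policy of the role. The following sketch applies that change to a sample document. The policy shape and the service names fc.aliyuncs.com and alikafka.aliyuncs.com are assumptions based on the usual RAM trust policy layout, and retarget_trust_policy is a hypothetical helper; compare the result with the script that the console actually displays.

```python
import json

# Assumed shape of the trust policy generated for a role that trusts Function Compute.
trust_policy = {
    "Statement": [
        {
            "Action": "sts:AssumeRole",
            "Effect": "Allow",
            "Principal": {"Service": ["fc.aliyuncs.com"]},
        }
    ],
    "Version": "1",
}


def retarget_trust_policy(policy, old="fc", new="alikafka"):
    """Return a copy of the policy with the trusted service prefix replaced."""
    updated = json.loads(json.dumps(policy))  # deep copy via a JSON round trip
    for statement in updated["Statement"]:
        services = statement["Principal"]["Service"]
        statement["Principal"]["Service"] = [
            new + s[len(old):] if s.startswith(old + ".") else s
            for s in services
        ]
    return updated


print(json.dumps(retarget_trust_policy(trust_policy), indent=2))
```

The helper leaves the input untouched, so the original policy can be kept for comparison.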

Grant permissions to the RAM role

Grant the created RAM role the permissions to access Function Compute within the Alibaba Cloud account to which you want to synchronize data.

In the left-side navigation pane, go to the Roles page.

On the Roles page, find AliyunKafkaConnectorRole and click Add Permissions in the Actions column.

In the Add Permissions panel, attach the KafkaConnectorFcAccess policy to the RAM role.

In the Select Policy section, click Custom Policy.

In the Authorization Policy Name column, find and click KafkaConnectorFcAccess.

Click OK.

Click Complete.

Create the topics that are required by a Function Compute sink connector

In the ApsaraMQ for Kafka console, create the following topics that are required by a Function Compute sink connector: task offset topic, task configuration topic, task status topic, dead-letter queue topic, and error data topic. These topics differ in the partition count and storage engine. For more information, see Parameters in the Configure Source Service step.

Log on to the ApsaraMQ for Kafka console.

In the Resource Distribution section of the Overview page, select the region where the ApsaraMQ for Kafka instance that you want to manage resides.

Important: You must create topics in the region where your Elastic Compute Service (ECS) instance is deployed. A topic cannot be used across regions. For example, if the producers and consumers of messages run on an ECS instance that is deployed in the China (Beijing) region, the topic must also be created in the China (Beijing) region.

On the Instances page, click the name of the instance that you want to manage.

In the left-side navigation pane, click Topics.

On the Topics page, click Create Topic.

In the Create Topic panel, specify the properties of the topic and click OK.

Parameter

Description

Example

Name

The topic name.

demo

Description

The topic description.

demo test

Partitions

The number of partitions in the topic.

12

Storage Engine

Note: You can specify the storage engine type only if you use a Professional Edition instance. If you use a Standard Edition instance, cloud storage is selected by default.

The type of the storage engine that is used to store messages in the topic.

ApsaraMQ for Kafka supports the following types of storage engines:

Cloud Storage: If you select this value, the system uses Alibaba Cloud disks for the topic and stores data in three replicas in distributed mode. This storage engine features low latency, high performance, long durability, and high reliability. If you set the Instance Edition parameter to Standard (High Write) when you created the instance, you can set this parameter only to Cloud Storage.

Local Storage: If you select this value, the system uses the in-sync replicas (ISR) algorithm of open source Apache Kafka and stores data in three replicas in distributed mode.

Cloud Storage

Message Type

The message type of the topic. Valid values:

Normal Message: By default, messages that have the same key are stored in the same partition in the order in which the messages are sent. If a broker in the cluster fails, the order of messages that are stored in the partitions may not be preserved. If you set the Storage Engine parameter to Cloud Storage, this parameter is automatically set to Normal Message.

Partitionally Ordered Message: By default, messages that have the same key are stored in the same partition in the order in which the messages are sent. If a broker in the cluster fails, messages are still stored in the partitions in the order in which the messages are sent. Messages in some partitions cannot be sent until the partitions are restored. If you set the Storage Engine parameter to Local Storage, this parameter is automatically set to Partitionally Ordered Message.

Normal Message

Log Cleanup Policy

The log cleanup policy that is used by the topic.

If you set the Storage Engine parameter to Local Storage, you must configure the Log Cleanup Policy parameter. You can set the Storage Engine parameter to Local Storage only if you use an ApsaraMQ for Kafka Professional Edition instance.

ApsaraMQ for Kafka provides the following log cleanup policies:

Delete: the default log cleanup policy. If sufficient storage space is available in the system, messages are retained based on the maximum retention period. After the storage usage exceeds 85%, the system deletes the earliest stored messages to ensure service availability.

Compact: the log compaction policy that is used in Apache Kafka. Log compaction ensures that the latest values are retained for messages that have the same key. This policy is suitable for scenarios such as restoring a failed system or reloading the cache after a system restarts. For example, when you use Kafka Connect or Confluent Schema Registry, you must store the information about the system status and configurations in a log-compacted topic.

Important: You can use log-compacted topics only in specific cloud-native components, such as Kafka Connect and Confluent Schema Registry. For more information, see aliware-kafka-demos.

Compact

Tag

The tags that you want to attach to the topic.

demo

After a topic is created, you can view the topic on the Topics page.

Create the consumer group that is required by a Function Compute sink connector

In the ApsaraMQ for Kafka console, create the consumer group that is required by a Function Compute sink connector. The name of the consumer group must be in the connect-Task name format. For more information, see Parameters in the Configure Source Service step.

Log on to the ApsaraMQ for Kafka console.

In the Resource Distribution section of the Overview page, select the region where the ApsaraMQ for Kafka instance that you want to manage resides.

On the Instances page, click the name of the instance that you want to manage.

In the left-side navigation pane, click Groups.

On the Groups page, click Create Group.

In the Create Group panel, enter a group name in the Group ID field and a group description in the Description field, attach tags to the group, and then click OK.

After a consumer group is created, you can view the consumer group on the Groups page.

Create and deploy a Function Compute sink connector

Create and deploy a Function Compute sink connector that synchronizes data from ApsaraMQ for Kafka to Function Compute.

Log on to the ApsaraMQ for Kafka console.

In the Resource Distribution section of the Overview page, select the region where the ApsaraMQ for Kafka instance that you want to manage resides.

In the left-side navigation pane, click Connectors.

On the Connectors page, select the instance to which the connector belongs from the Select Instance drop-down list and click Create Connector.

Complete the Create Connector wizard.

In the Configure Basic Information step, set the parameters that are described in the following table and click Next.

Parameter

Description

Example

Name

The name of the connector. Specify a connector name based on the following rules:

The connector name must be 1 to 48 characters in length. It can contain digits, lowercase letters, and hyphens (-), but cannot start with a hyphen (-).

Each connector name must be unique within an ApsaraMQ for Kafka instance.

The data synchronization task of the connector must use a consumer group that is named in the connect-Task name format. If you have not created such a consumer group, ApsaraMQ for Kafka automatically creates one for you.

kafka-fc-sink

Instance

The information about the ApsaraMQ for Kafka instance. By default, the name and ID of the instance are displayed.

demo alikafka_post-cn-st21p8vj****
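The naming rules for the Name parameter can be checked before you open the wizard. The sketch below encodes the length and character rules as a regular expression and derives the consumer group name in the connect-Task name format. The function names are hypothetical, and uniqueness within the instance is not checked because that requires a lookup against the instance.

```python
import re

# 1 to 48 characters; digits, lowercase letters, and hyphens; no leading hyphen.
CONNECTOR_NAME = re.compile(r"[a-z0-9][a-z0-9-]{0,47}")


def is_valid_connector_name(name):
    """Check the length and character rules for a connector name."""
    return CONNECTOR_NAME.fullmatch(name) is not None


def consumer_group_for(name):
    """Build the consumer group name in the connect-<task name> format."""
    if not is_valid_connector_name(name):
        raise ValueError(f"invalid connector name: {name!r}")
    return f"connect-{name}"


print(consumer_group_for("kafka-fc-sink"))
```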

In the Configure Source Service step, select Message Queue for Apache Kafka as the source service, set the parameters that are described in the following table, and then click Next.

Note: If you have created the topics and consumer group in advance, set the Resource Creation Method parameter to Manual and enter the names of the created resources in the fields below. Otherwise, set the Resource Creation Method parameter to Auto.

Table 1. Parameters in the Configure Source Service step

Parameter

Description

Example

Data Source Topic

The name of the source topic from which data is to be synchronized.

fc-test-input

Consumer Thread Concurrency

The number of concurrent consumer threads used to synchronize data from the source topic. By default, six concurrent consumer threads are used. Valid values:

1

2

3

6

12

6

Consumer Offset

The offset from which consumption starts. Valid values:

Earliest Offset: Consumption starts from the earliest offset.

Latest Offset: Consumption starts from the latest offset.

Earliest Offset

VPC ID

The ID of the VPC in which the data synchronization task runs. Click Configure Runtime Environment to display the parameter. The default value is the VPC ID that you specified when you deployed the ApsaraMQ for Kafka instance. You do not need to change the value.

vpc-bp1xpdnd3l***

vSwitch ID

The ID of the vSwitch in which the data synchronization task runs. Click Configure Runtime Environment to display the parameter. The vSwitch must be deployed in the same VPC as the ApsaraMQ for Kafka instance. The default value is the vSwitch ID that you specified when you deployed the ApsaraMQ for Kafka instance.

vsw-bp1d2jgg81***

Failure Handling Policy

Specifies whether to retain the subscription to the partition in which an error occurs after the relevant message fails to be sent. Click Configure Runtime Environment to display the parameter. Valid values:

Continue Subscription: retains the subscription to the partition in which an error occurs and returns the logs.

Stop Subscription: stops the subscription to the partition in which an error occurs and returns the logs.

Note: For more information, see Manage a connector.

For more information about how to troubleshoot errors based on error codes, see Error codes.

Continue Subscription

Resource Creation Method

The method used to create the topics and consumer group that are required by the Function Compute sink connector. Click Configure Runtime Environment to display the parameter. Valid values:

Auto

Manual

Auto

Connector Consumer Group

The consumer group that is used by the data synchronization task of the connector. Click Configure Runtime Environment to display the parameter. The name of this consumer group must be in the connect-Task name format.

connect-kafka-fc-sink

Task Offset Topic

The topic that is used to store consumer offsets. Click Configure Runtime Environment to display the parameter.

Topic: We recommend that you start the topic name with connect-offset.

Partitions: The number of partitions in the topic must be greater than 1.

Storage Engine: The storage engine of the topic must be set to Local Storage.

cleanup.policy: The log cleanup policy for the topic must be set to Compact.

connect-offset-kafka-fc-sink

Task Configuration Topic

The topic that is used to store task configurations. Click Configure Runtime Environment to display the parameter.

Topic: We recommend that you start the topic name with connect-config.

Partitions: The topic can contain only one partition.

Storage Engine: The storage engine of the topic must be set to Local Storage.

cleanup.policy: The log cleanup policy for the topic must be set to Compact.

connect-config-kafka-fc-sink

Task Status Topic

The topic that is used to store the task status. Click Configure Runtime Environment to display the parameter.

Topic: We recommend that you start the topic name with connect-status.

Partitions: We recommend that you set the number of partitions in the topic to 6.

Storage Engine: The storage engine of the topic must be set to Local Storage.

cleanup.policy: The log cleanup policy for the topic must be set to Compact.

connect-status-kafka-fc-sink

Dead-letter Queue Topic

The topic that is used to store the error data of the Kafka Connect framework. Click Configure Runtime Environment to display the parameter. To save topic resources, you can create a topic and use the topic as both the dead-letter queue topic and the error data topic.

Topic: We recommend that you start the topic name with connect-error.

Partitions: We recommend that you set the number of partitions in the topic to 6.

Storage Engine: The storage engine of the topic can be set to Local Storage or Cloud Storage.

connect-error-kafka-fc-sink

Error Data Topic

The topic that is used to store the error data of the connector. Click Configure Runtime Environment to display the parameter. To save topic resources, you can create a topic and use the topic as both the dead-letter queue topic and the error data topic.

Topic: We recommend that you start the topic name with connect-error.

Partitions: We recommend that you set the number of partitions in the topic to 6.

Storage Engine: The storage engine of the topic can be set to Local Storage or Cloud Storage.

connect-error-kafka-fc-sink
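If you create the topics manually, the hard requirements in Table 1 can be checked with a small script before you enter the topic names in the wizard. The sketch below is one way to express them. The Topic class, the role names, and check_topic are hypothetical, and only the "must" rules are enforced; the recommended partition count of 6 is left out on purpose.

```python
from dataclasses import dataclass


@dataclass
class Topic:
    name: str
    partitions: int
    storage_engine: str        # "Local Storage" or "Cloud Storage"
    cleanup_policy: str = ""   # "Compact" or "Delete"; set for Local Storage topics


def check_topic(role, topic):
    """Return the violated hard requirements for a topic used in the given role."""
    problems = []
    # Offset, configuration, and status topics must be local, log-compacted topics.
    if role in ("offset", "config", "status"):
        if topic.storage_engine != "Local Storage":
            problems.append("storage engine must be Local Storage")
        if topic.cleanup_policy != "Compact":
            problems.append("cleanup policy must be Compact")
    if role == "offset" and topic.partitions <= 1:
        problems.append("partition count must be greater than 1")
    if role == "config" and topic.partitions != 1:
        problems.append("partition count must be exactly 1")
    # Dead-letter and error data topics accept either storage engine.
    return problems


print(check_topic("config", Topic("connect-config-kafka-fc-sink", 6, "Cloud Storage")))
```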

In the Configure Destination Service step, select Function Compute as the destination service, set the parameters that are described in the following table, and then click Create.

Note: If the instance to which the Function Compute sink connector belongs is in the China (Hangzhou) or China (Chengdu) region, the Service Authorization message appears when you select Function Compute as the destination service. The following service-linked roles are automatically created if you click OK: AliyunServiceRoleForEventBridgeSourceKafka and AliyunServiceRoleForEventBridgeConnectVPC. Click OK in the Service Authorization message, set the parameters that are described in the following table, and then click Create. If the service-linked roles have been created, the Service Authorization message does not appear.

Parameter

Description

Example

Cross-account/Cross-region

Specifies whether the Function Compute sink connector synchronizes data to Function Compute across Alibaba Cloud accounts or regions. By default, this parameter is set to No. Valid values:

No: The Function Compute sink connector synchronizes data to Function Compute within the same region and the same Alibaba Cloud account.

Yes: The Function Compute sink connector synchronizes data to Function Compute across regions but within the same Alibaba Cloud account, within the same region but across Alibaba Cloud accounts, or across regions and Alibaba Cloud accounts.

No

Region

The region in which Function Compute is activated. By default, the region in which the Function Compute sink connector resides is selected. To synchronize data across regions, enable Internet access for the connector and select the destination region. For more information, see Enable Internet access for Function Compute sink connectors.

Important: The Region parameter is displayed only if you set the Cross-account/Cross-region parameter to Yes.

cn-hangzhou

Service Endpoint

The endpoint of Function Compute. In the Function Compute console, you can view the endpoints of Function Compute in the Common Info section of the Overview page.

Internal endpoint: We recommend that you use the internal endpoint for lower latency. The internal endpoint can be used if the ApsaraMQ for Kafka instance and Function Compute are in the same region.

Public endpoint: We recommend that you do not use the public endpoint due to a higher latency. The public endpoint can be used if the ApsaraMQ for Kafka instance and Function Compute are in different regions. To use the public endpoint, you must enable Internet access for the connector. For more information, see Enable Internet access for Function Compute sink connectors.

Important: The Service Endpoint parameter is displayed only if you set the Cross-account/Cross-region parameter to Yes.

http://188***.cn-hangzhou.fc.aliyuncs.com

Alibaba Cloud Account

The ID of the Alibaba Cloud account that is used to log on to Function Compute. In the Function Compute console, you can view the ID of the Alibaba Cloud account in the Common Info section of the Overview page.

Important: The Alibaba Cloud Account parameter is displayed only if you set the Cross-account/Cross-region parameter to Yes.

188***

RAM Role Name

The name of the RAM role that ApsaraMQ for Kafka assumes to access Function Compute.

If you do not need to synchronize data across Alibaba Cloud accounts, you must create a RAM role and grant the RAM role the specific permissions within the current Alibaba Cloud account. Then, enter the name of the RAM role. For more information, see Create a custom policy, Create a RAM role, and Grant permissions to the RAM role.

If you need to synchronize data across Alibaba Cloud accounts, you must create a RAM role by using the Alibaba Cloud account to which you want to synchronize data. Then, grant the RAM role the specific permissions and enter the name of the RAM role. For more information, see Create a custom policy, Create a RAM role, and Grant permissions to the RAM role.

Important: The RAM Role Name parameter is displayed only if you set the Cross-account/Cross-region parameter to Yes.

AliyunKafkaConnectorRole

Service Name

The name of the service in Function Compute.

guide-hello_world

Function Name

The name of the function in the service in Function Compute.

hello_world

Version or Alias

The version or alias of the service in Function Compute.

Important: You must set this parameter to Specified Version or Specified Alias if you set the Cross-account/Cross-region parameter to No.

You must specify a service version or alias if you set the Cross-account/Cross-region parameter to Yes.

LATEST

Service Version

The version of the service in Function Compute.

Important: The Service Version parameter is displayed only if you set the Cross-account/Cross-region parameter to No and the Version or Alias parameter to Specified Version.

LATEST

Service Alias

The alias of the service in Function Compute.

Important: The Service Alias parameter is displayed only if you set the Cross-account/Cross-region parameter to No and the Version or Alias parameter to Specified Alias.

jy

Transmission Mode

The mode in which messages are sent. Valid values:

Asynchronous: recommended.

Synchronous: not recommended. In synchronous mode, if Function Compute processes messages for a long period of time, ApsaraMQ for Kafka waits for Function Compute to complete the processing. If a batch of messages fails to be processed within 5 minutes, the ApsaraMQ for Kafka client rebalances the traffic.

Asynchronous

Data Size

The maximum number of messages that can be sent at a time. Default value: 20. The connector aggregates messages to be concurrently sent based on the maximum number of messages and the maximum message size allowed in a request. The size of an aggregate message cannot exceed 6 MB in synchronous mode and 128 KB in asynchronous mode. For example, messages are sent in asynchronous mode, and up to 20 messages can be sent at a time. You want to send 18 messages, among which 17 messages have a total size of 127 KB, and one message is 200 KB in size. In this case, the connector aggregates the 17 messages into a single batch and sends this batch first, and then sends the remaining message whose size is more than 128 KB.

Note: If you set the key parameter to null when you send a message, the request does not contain the key parameter. If you set the value parameter to null, the request does not contain the value parameter.

If the size of messages in a batch does not exceed the maximum message size allowed in a request, the request contains all content of the messages. Sample request:

[
    {
        "key": "this is the message's key2",
        "offset": 8,
        "overflowFlag": false,
        "partition": 4,
        "timestamp": 1603785325438,
        "topic": "Test",
        "value": "this is the message's value2",
        "valueSize": 28
    },
    {
        "key": "this is the message's key9",
        "offset": 9,
        "overflowFlag": false,
        "partition": 4,
        "timestamp": 1603785325440,
        "topic": "Test",
        "value": "this is the message's value9",
        "valueSize": 28
    },
    {
        "key": "this is the message's key12",
        "offset": 10,
        "overflowFlag": false,
        "partition": 4,
        "timestamp": 1603785325442,
        "topic": "Test",
        "value": "this is the message's value12",
        "valueSize": 29
    },
    {
        "key": "this is the message's key38",
        "offset": 11,
        "overflowFlag": false,
        "partition": 4,
        "timestamp": 1603785325464,
        "topic": "Test",
        "value": "this is the message's value38",
        "valueSize": 29
    }
]

If the size of a single message exceeds the maximum message size allowed in a request, the request does not contain the content of the message. Sample request:

[
    {
        "key": "123",
        "offset": 4,
        "overflowFlag": true,
        "partition": 0,
        "timestamp": 1603779578478,
        "topic": "Test",
        "value": "1",
        "valueSize": 272687
    }
]

Note: To obtain the content of the message, you must pull the message based on its offset.

50

Retries

The maximum number of retries allowed after a message fails to be sent. Default value: 2. Valid values: 1 to 3. In specific cases when a message fails to be sent, retries are not supported. The following rules describe the types of error codes and whether retries are supported. For more information, see Error codes.

4XX: Retries are not supported except in the case when 429 is returned.

5XX: Retries are supported.

Note: The connector calls the InvokeFunction operation to send messages to Function Compute.

If a message still fails to be sent to Function Compute after the maximum number of retries, the message is sent to the dead-letter queue topic. Messages in the dead-letter queue topic cannot be synchronized to Function Compute by using the connector. We recommend that you configure an alert rule for the topic to monitor the topic resources in real time. This way, you can troubleshoot issues at the earliest opportunity.

2
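The aggregation rule in the Data Size description can be illustrated with a greedy grouping over message sizes. The sketch below is not the connector's actual implementation, which this topic does not show. It assumes that messages are packed in order, that a batch is closed when either the message count or the byte limit would be exceeded, and that an oversized message is sent on its own. The function and constant names are hypothetical.

```python
ASYNC_LIMIT = 128 * 1024       # bytes per request in asynchronous mode
SYNC_LIMIT = 6 * 1024 * 1024   # bytes per request in synchronous mode


def make_batches(sizes, max_messages=20, max_bytes=ASYNC_LIMIT):
    """Greedily group message sizes (in bytes) into batches, preserving order."""
    batches, current, current_bytes = [], [], 0
    for size in sizes:
        if size > max_bytes:
            # Oversized message: flush the open batch, then send it alone.
            if current:
                batches.append(current)
                current, current_bytes = [], 0
            batches.append([size])
            continue
        if len(current) == max_messages or current_bytes + size > max_bytes:
            batches.append(current)
            current, current_bytes = [], 0
        current.append(size)
        current_bytes += size
    if current:
        batches.append(current)
    return batches


# The example from the description: 17 messages totalling 127 KB plus one 200 KB message.
sizes = [7 * 1024] * 16 + [15 * 1024] + [200 * 1024]
print([len(batch) for batch in make_batches(sizes)])
```

With these inputs the first batch holds the 17 small messages and the second holds only the 200 KB message, which matches the behavior described for asynchronous mode.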

After the connector is created, you can view the connector on the Connectors page.

Go to the Connectors page, find the connector that you created, and then click Deploy in the Actions column.

To configure Function Compute resources, use the corresponding option in the Actions column to go to the Function Compute console and complete the configuration.
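The retry rules described for the Retries parameter can be summarized in a few lines of code. The sketch below is illustrative only. The send callable is hypothetical and is assumed to return an HTTP status code. The "failed" outcome for non-retryable errors is also an assumption, because this topic states only that messages go to the dead-letter queue topic after the maximum number of retries.

```python
def should_retry(status_code):
    """4XX responses are not retried except 429; 5XX responses are retried."""
    if status_code == 429:
        return True
    return 500 <= status_code <= 599


def deliver(send, message, max_retries=2):
    """Call send(message) until it succeeds or the retries are used up.

    Returns "ok" on success, "dead-letter" when retries are exhausted,
    and "failed" when the error is not retryable (assumed outcome).
    """
    if not 1 <= max_retries <= 3:
        raise ValueError("max_retries must be between 1 and 3")
    for _ in range(1 + max_retries):   # first attempt plus the retries
        status = send(message)
        if 200 <= status < 300:
            return "ok"
        if not should_retry(status):
            return "failed"
    return "dead-letter"
```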

Send a test message

After you deploy the Function Compute sink connector, you can send a message to the source topic in the ApsaraMQ for Kafka instance to test whether the message can be synchronized to Function Compute.

On the Connectors page, find the connector that you want to manage and click Test in the Actions column.

In the Send Message panel, configure the parameters to send a message for testing.

If you set the Sending Method parameter to Console, perform the following steps:

In the Message Key field, enter the message key. Example: demo.

In the Message Content field, enter the message content. Example: {"key": "test"}.

Configure the Send to Specified Partition parameter to specify whether to send the test message to a specific partition.

If you want to send the test message to a specific partition, click Yes and enter the partition ID in the Partition ID field. Example: 0. For information about how to query partition IDs, see View partition status.

If you do not want to send the test message to a specific partition, click No.

If you set the Sending Method parameter to Docker, run the Docker command in the Run the Docker container to produce a sample message section to send the test message.

If you set the Sending Method parameter to SDK, select an SDK for the required programming language or framework and an access method to send and subscribe to the test message.

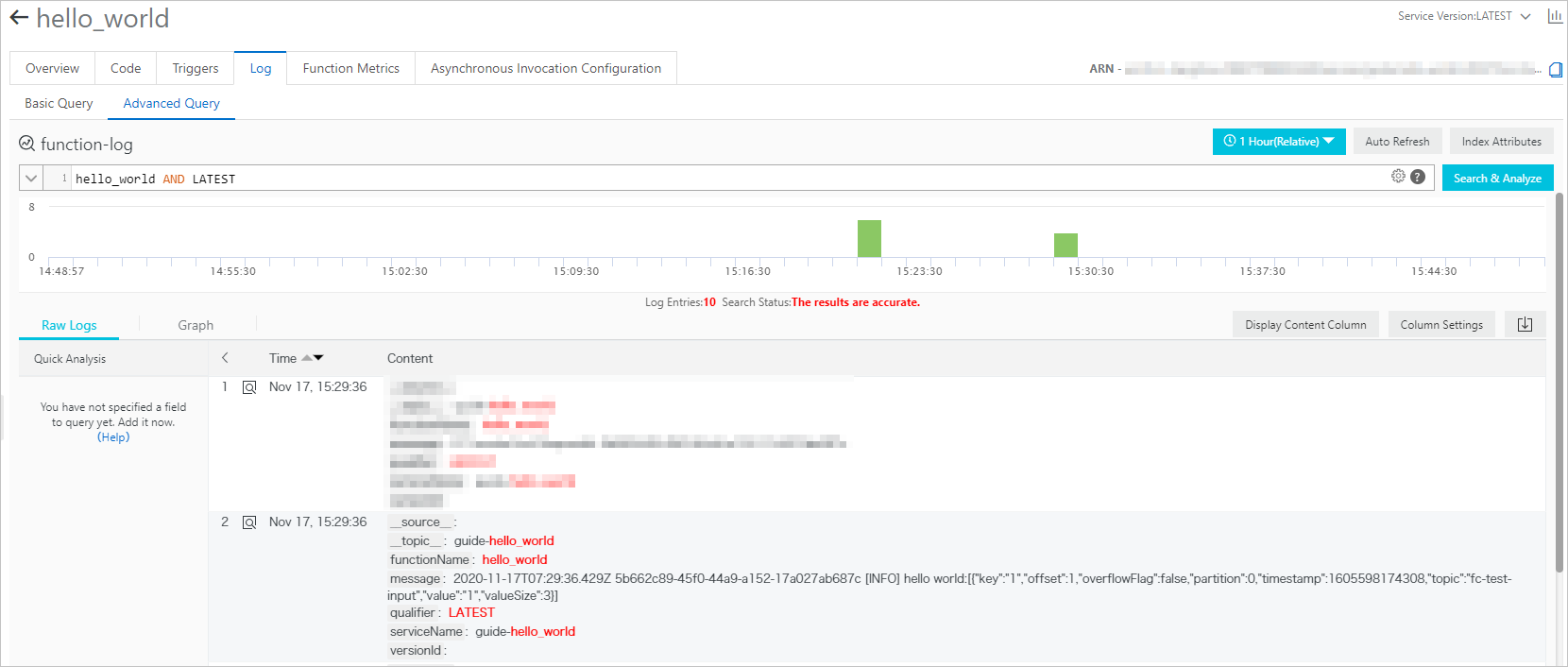

View function logs

After you send a message to the source topic in the ApsaraMQ for Kafka instance, you can view the function logs to check whether the message is received. For more information, see Configure logging.
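The sample requests in the Data Size description suggest that the function receives a JSON array of records. A handler along the following lines would log each record so that test messages are easy to find in the function logs. This is a sketch under that assumption, not the function used in this example. The field names are taken from the sample requests, and the returned summary is a hypothetical addition.

```python
import json
import logging


def handler(event, context):
    """Log each Kafka record in the batch and count the oversized ones."""
    logger = logging.getLogger()
    records = json.loads(event)   # event is bytes that hold a JSON array
    overflowed = 0
    for record in records:
        where = (record["topic"], record["partition"], record["offset"])
        if record.get("overflowFlag"):
            # Content was left out of the request; pull it by offset separately.
            overflowed += 1
            logger.info("oversized message at %s (%d bytes)", where, record["valueSize"])
        else:
            # key or value is absent when the producer sent null.
            logger.info("message at %s: key=%r value=%r", where,
                        record.get("key"), record.get("value"))
    return json.dumps({"received": len(records), "overflowed": overflowed})
```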

If the test message that you sent appears in the logs as shown in the following figure, the data synchronization task is successful.