Data Integration supports the real-time synchronization of entire databases from sources such as MySQL to Elasticsearch. This topic uses a MySQL-to-Elasticsearch scenario to describe how to perform full and incremental real-time synchronization of an entire MySQL database.

Prerequisites

-

Data source preparation

-

You have created a MySQL data source and an Elasticsearch data source. For more information, see Data source configuration.

-

Binlog must be enabled for the MySQL data source. For more information, see Prerequisites.

-

-

Resource group: You have purchased a serverless resource group.

-

Network connectivity: Network connectivity is established between the resource group and the data sources. For more information, see Overview of network connectivity solutions.

Configure the task

Step 1: Create a synchronization task

Go to the Data Integration page.

Log on to the DataWorks console. In the top navigation bar, select the desired region. In the left-side navigation pane, choose . On the page that appears, select the desired workspace from the drop-down list and click Go to Data Integration.

-

In the left-side navigation pane, click Synchronization Task, and then click Create Synchronization Task at the top of the page. Configure the task information:

-

Source Type:

MySQL. -

Destination Type:

Elasticsearch. -

Specific Type:

Real-time migration of entire database. -

Synchronization Mode:

-

Schema Migration: Creates a matching index structure, such as indexes and field mappings, in the destination. This step does not migrate data.

-

Full Synchronization (Optional): Copies all historical data from specified source objects, like tables, to the destination in a single operation. This is typically used for initial data migration or data initialization.

-

Incremental Sync (Optional): After the full synchronization is complete, continuously captures data changes (inserts, updates, and deletes) from the source and synchronizes them to the destination.

-

-

Step 2: Configure data sources and resources

-

For Source, select your added

MySQLdata source. For Destination, select your addedElasticsearchdata source. -

In the Running Resources section, select the Resource Group for the synchronization task and allocate CU for the task.

NoteWhen the task log shows a message about insufficient resources, such as

Please confirm whether there are enough resources..., it means that the available Computing Units (CUs) in the current resource group are insufficient to start or run the task. You can increase the number of CUs that the task uses in the Configure Resource Group panel to allocate more computing resources.For recommended resource settings, see Recommended CUs for Data Integration. Adjust the settings based on your actual workload.

-

Ensure that both the source and destination data sources pass the Connectivity Check.

Step 3: Configure synchronization plan

1. Configure the data source

-

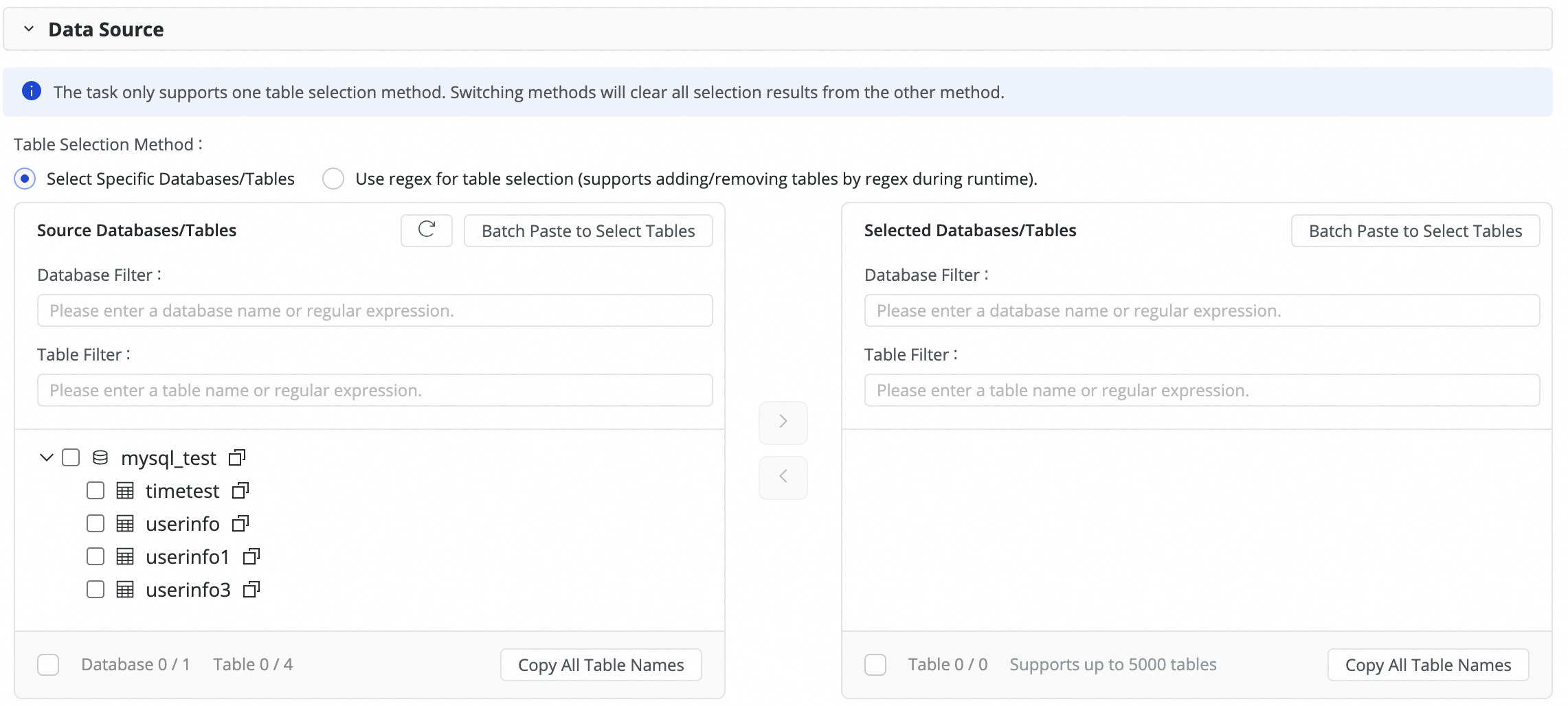

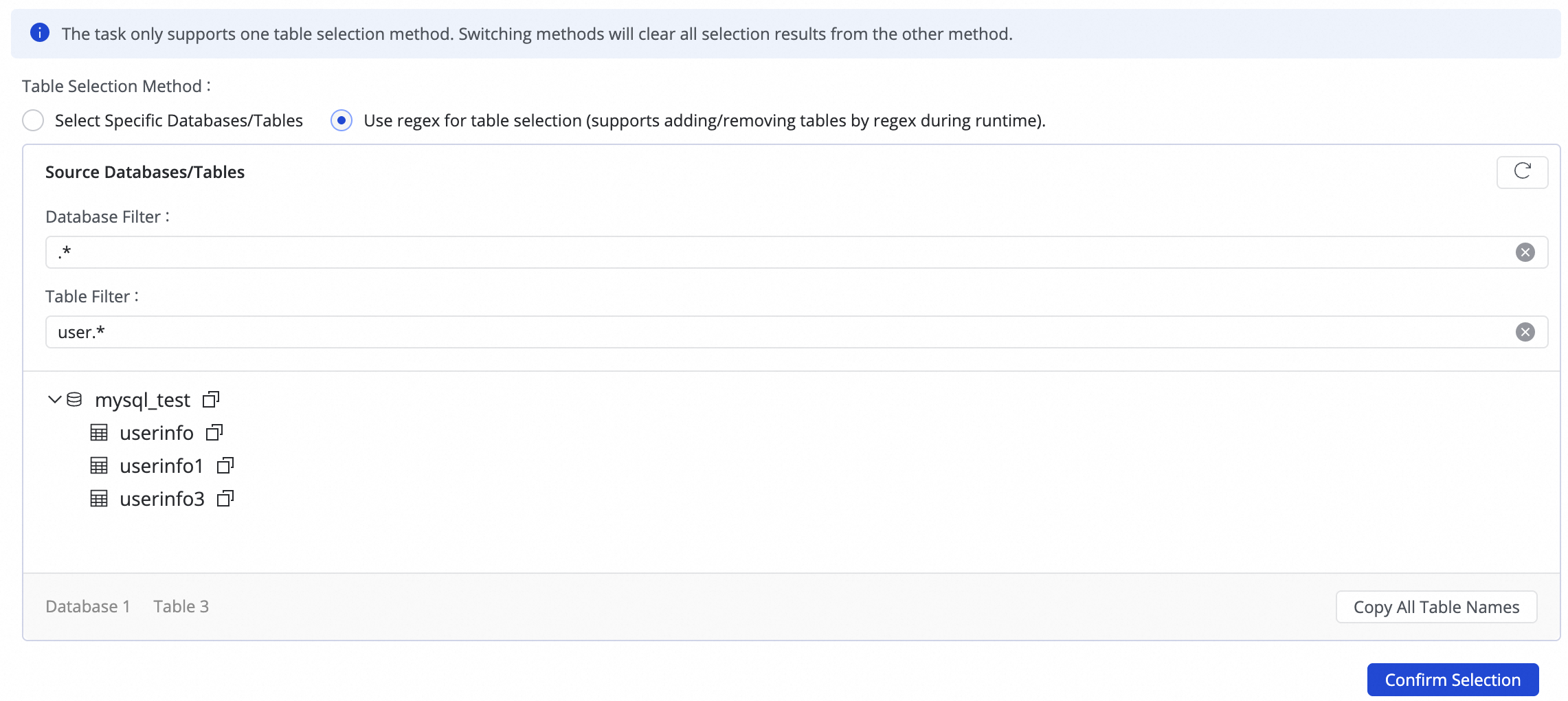

In this step, select the tables to synchronize from the Source Tables list and click the

icon to move them to the Selected Tables list. If you have many tables, you can use Database Filtering or Table filtering to select tables by configuring regular expressions.

icon to move them to the Selected Tables list. If you have many tables, you can use Database Filtering or Table filtering to select tables by configuring regular expressions.

-

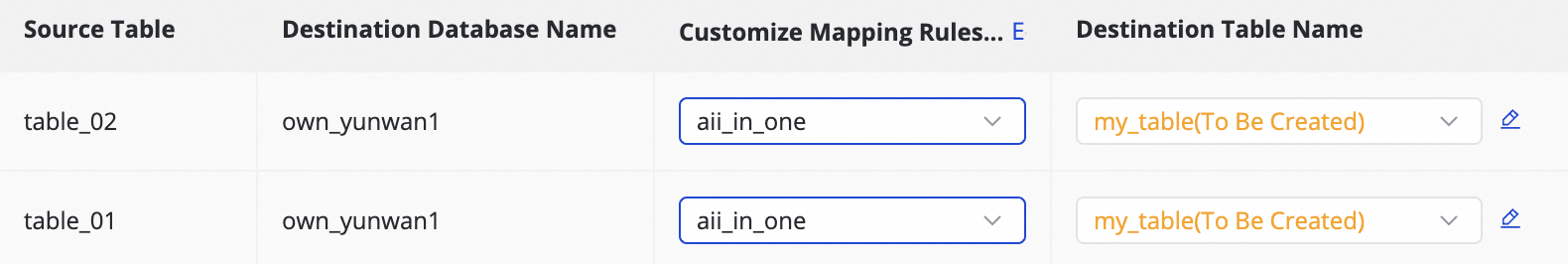

To write data from multiple sharded databases and tables with the same structure into a single destination table, you can use regex for table selection.

Enter a regular expression in the source table configuration. DataWorks automatically identifies all matching source tables and writes their data to the destination table that corresponds to the expression.NoteThis method is suitable for merging and synchronizing sharded tables, which improves configuration efficiency by eliminating the need to add multiple many-to-one synchronization rules.

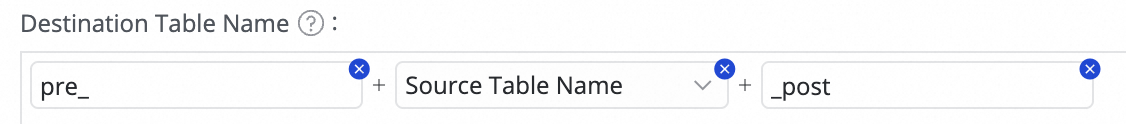

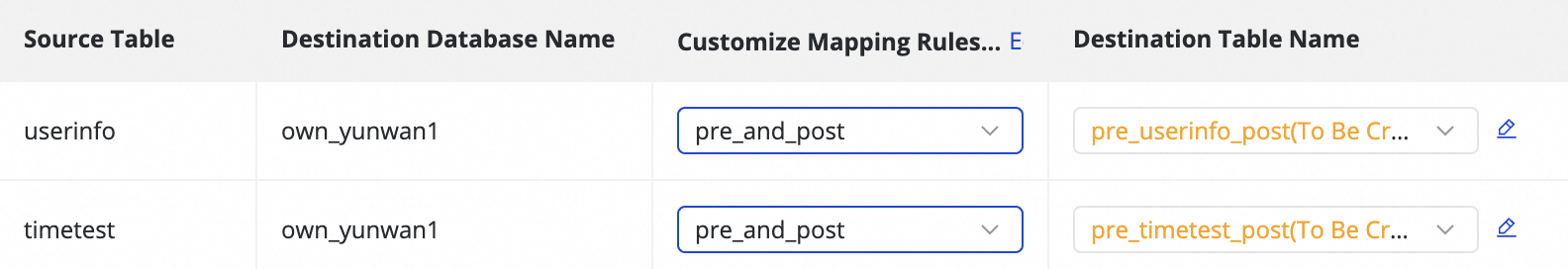

2. Configure destination index mapping

|

Actions |

Description |

||||||||||||

|

Refresh |

The system lists the source tables you selected. You must refresh and confirm the destination index properties for them to take effect.

|

||||||||||||

|

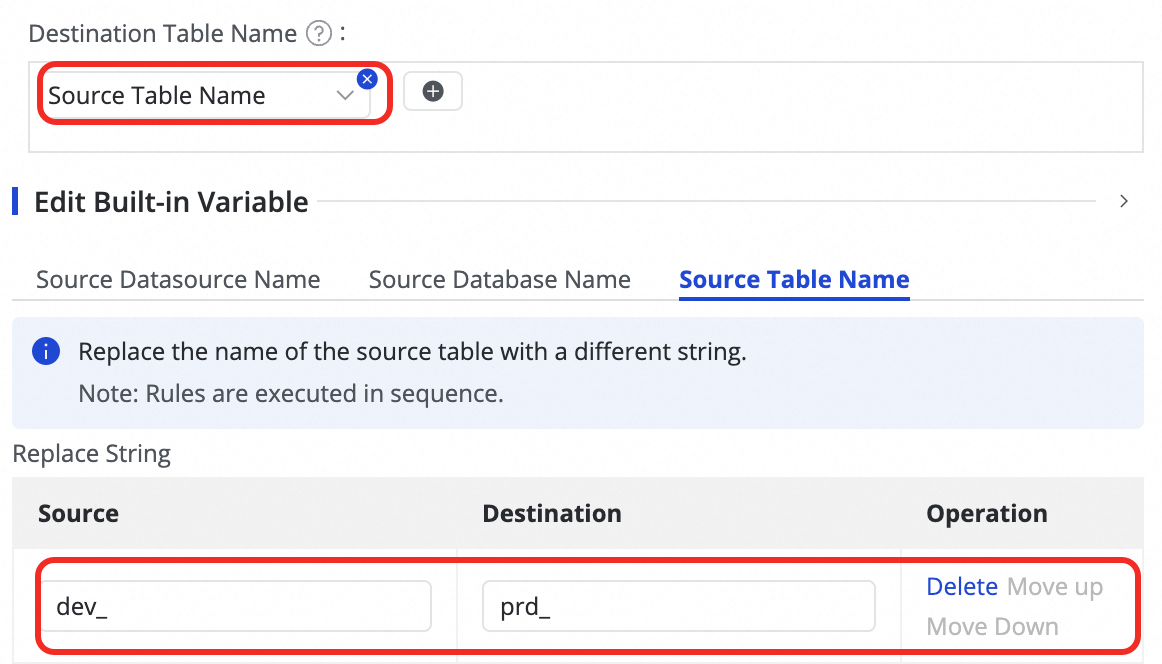

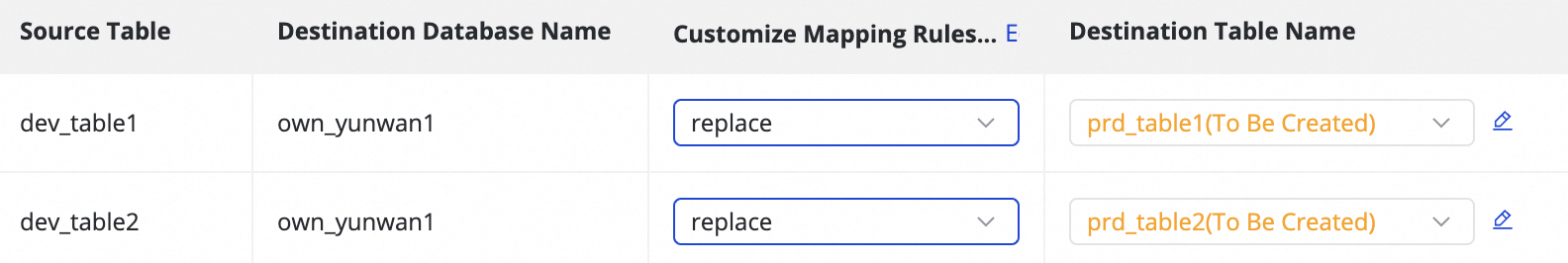

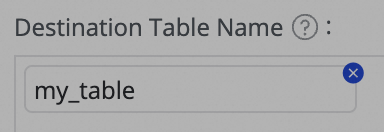

Custom Mapping Rule for Destination Index Name (Optional) |

The system uses a default rule to generate index names:

This feature supports the following scenarios:

|

||||||||||||

|

Edit field type mapping (Optional) |

The system provides a default mapping between source type and destination type. You can click Edit Mapping of Field Data Types in the upper-right corner of the table to customize the field type mapping between source tables and destination indexes. After configuration, click Apply and Refresh Mapping. When editing field type mappings, ensure that type conversion rules are correct. Incorrect rules can cause conversion failures, generate dirty data, and disrupt task execution. |

||||||||||||

|

Edit destination index (Optional) |

Based on the custom index name mapping rules, the system automatically creates new destination indexes or reuses existing ones with matching names. DataWorks automatically generates the destination index structure based on the source table structure. In most cases, manual intervention is not required. When the destination index status is to be created, you can add fields to the destination index on top of its original structure. Follow these steps:

|

||||||||||||

|

Value assignment |

Native fields are automatically mapped based on matching field names between the source and destination. You must manually assign values to the new fields and destination index properties added in the previous step. Follow these steps:

You can assign constants and variables by switching the Value Type. The following options are supported:

|

||||||||||||

|

Source split column |

You can select a field from the source table from the source split column dropdown or select Not Split. When the synchronization task runs, it splits into multiple sub-tasks based on this column to read data concurrently in batches. We recommend using the table's primary key as the source split column. String, float, and date types are not supported. Currently, the source split column is supported only when the source is MySQL. |

||||||||||||

|

Skip full synchronization |

You can choose to skip full synchronization for individual tables. This is useful if you have already synchronized the full data to the destination using other methods. |

||||||||||||

|

Full condition |

Applies a filter to the source data during the full synchronization phase. Enter the filter conditions that would normally follow a |

||||||||||||

|

Configure DML Rule |

DML message processing applies fine-grained filtering and control to the change data ( |

Step 4: Advanced settings

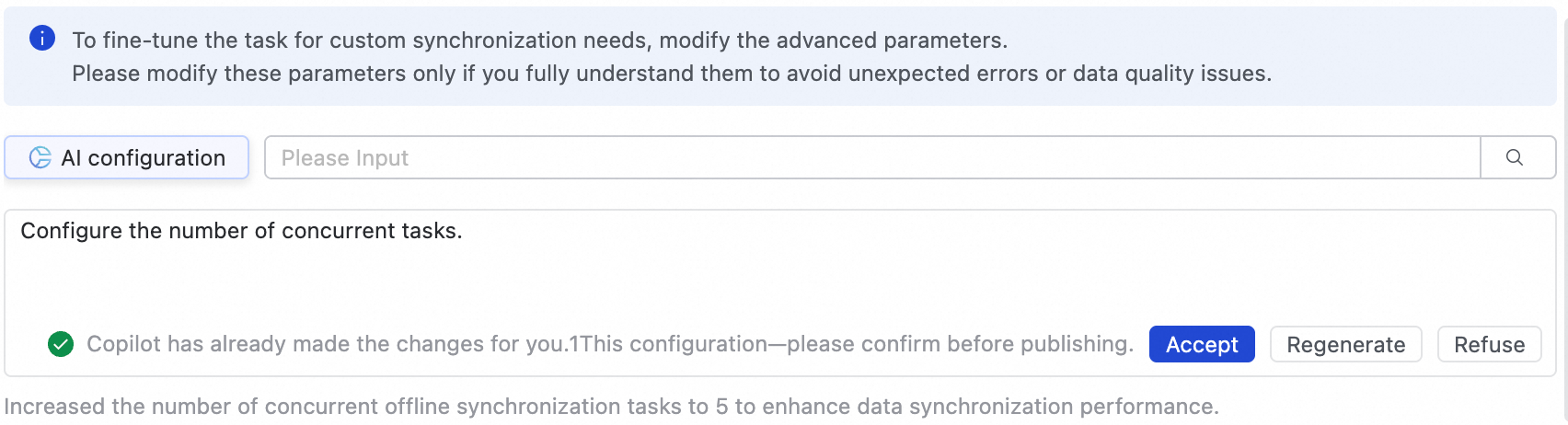

Advanced parameter configuration

For fine-grained task configuration to meet custom synchronization needs, go to the Advanced Parameters tab to modify advanced parameters.

-

Click Advanced Settings in the upper-right corner to go to the advanced parameter configuration page.

-

Modify the parameter values based on the descriptions provided.

-

You can also use AI-powered configuration. Input a command in natural language, such as adjusting the task concurrency, and the large language model generates recommended parameter values. You can choose whether to accept the AI-generated parameters.

Modify these parameters only if you fully understand their meaning to avoid unexpected issues such as task latency, excessive resource consumption that blocks other tasks, or data loss.

DDL configuration

Some real-time synchronization tasks can detect metadata changes in the source table structure and notify the destination. The destination can then either synchronize the update or take other actions, such as sending an alert, ignoring the change, or terminating the task.

You can click Configure DDL Capability in the upper-right corner of the UI to set a processing policy for each type of change. The supported policies vary depending on the channel.

-

Normal processing: The destination processes the DDL change from the source.

-

Ignore: The change message is ignored, and no modification is made at the destination.

-

Error: The whole database real-time synchronization task is terminated, and its status is set to Error.

-

Alert: An alert is sent to the user when this type of change occurs at the source. You must configure a DDL notification rule in the Configure Alert Rule settings.

When DDL synchronization adds a new column to the destination, the system does not backfill data for that column in existing rows.

Step 5: Deploy and run the task

-

After configuring the task, click Save.

-

Whole database synchronization tasks cannot be debugged directly. They must be deployed to the Operation Center to run. Therefore, whether you are creating a new task or editing an existing one, you must Deploy the task for the changes to take effect.

-

When you deploy the task, if you select Start immediately after deployment, the task is also started. Otherwise, after the deployment is complete, go to the page and manually start the task in the Actions column of the target task.

-

Click the Name/ID of the corresponding task in the Tasks to view the detailed execution process.

Step 6: Configure alarms

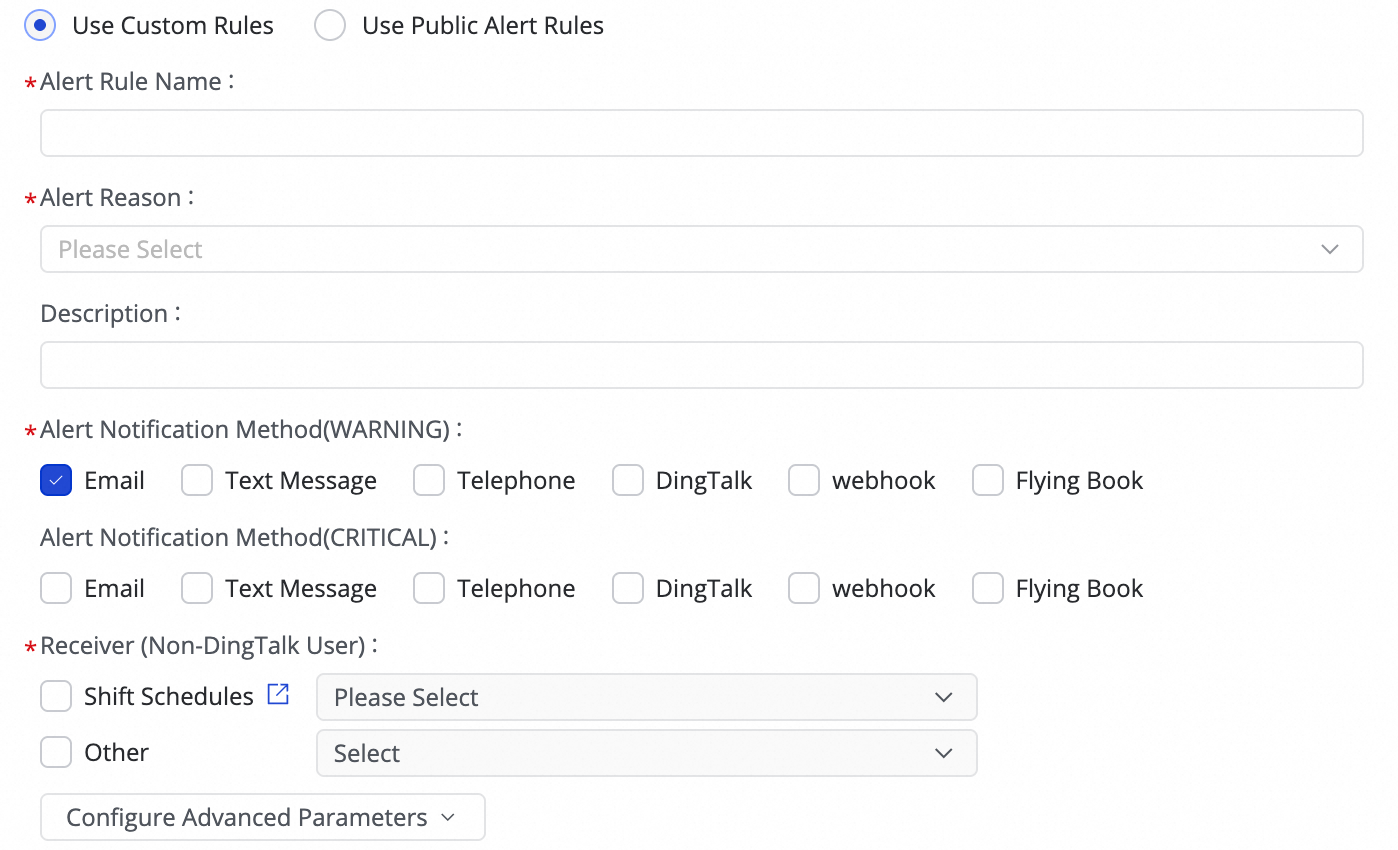

1. Add an alarm rule

In the list, find the corresponding real-time full-database synchronization task, and click in the Actions column to configure an alert policy for the task.

(1) Click Create Rule to configure an alarm rule.

By setting the Alert Reason, you can monitor task metrics such as Business delay, Failover, Task status, DDL Notification, and Task Resource Utilization. You can set CRITICAL or WARNING alarm levels based on specified thresholds.

-

By using Configure Advanced Parameters, you can control the interval for sending alarm messages to prevent alert fatigue and message backlogs.

-

If you select Business delay, Task status, or Task Resource Utilization as the alarm trigger, you can also enable recovery notifications to inform recipients when the task returns to a normal state.

(2) Manage alarm rules.

For created alarm rules, you can use the alarm switch to enable or disable them. You can also send alarms to different personnel based on the alarm level.

2. View alarms

Click for a task to go to the Alarm Events page, where you can view information about triggered alarms.

Manage the task

Edit the task

-

On the page, find the created synchronization task. In the Operation column, click More and then Edit to modify the task information. The steps are the same as for configuring a new task.

-

For tasks that are not running, you can directly modify and save the configuration, then publish the task to the production environment to apply the changes.

-

For tasks that are Running, when you edit and publish the task without selecting Start immediately after deployment, the original start button changes to Apply Updates. You must click this button for the changes to take effect in the live environment.

-

After you click Apply Update, the system applies the changes by stopping, publishing, and restarting the task.

-

If the change is to add new tables or switch existing tables:

You cannot select a position when applying the update. After you confirm, the system performs schema migration and full data initialization for the new tables. Once initialization is complete, incremental synchronization begins for these tables along with the original ones.

-

If you modify other information:

You can select a position when applying the update. After confirmation, the task resumes from the specified position. If you do not specify a position, it resumes from the position where it last stopped.

Unmodified tables are not affected. After the update and restart, they continue running from the last stopping point.

-

View the task

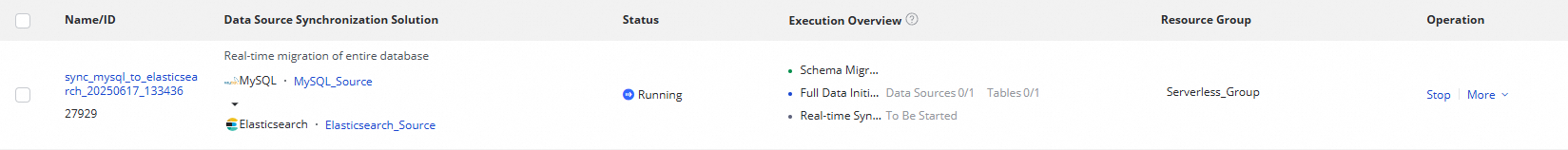

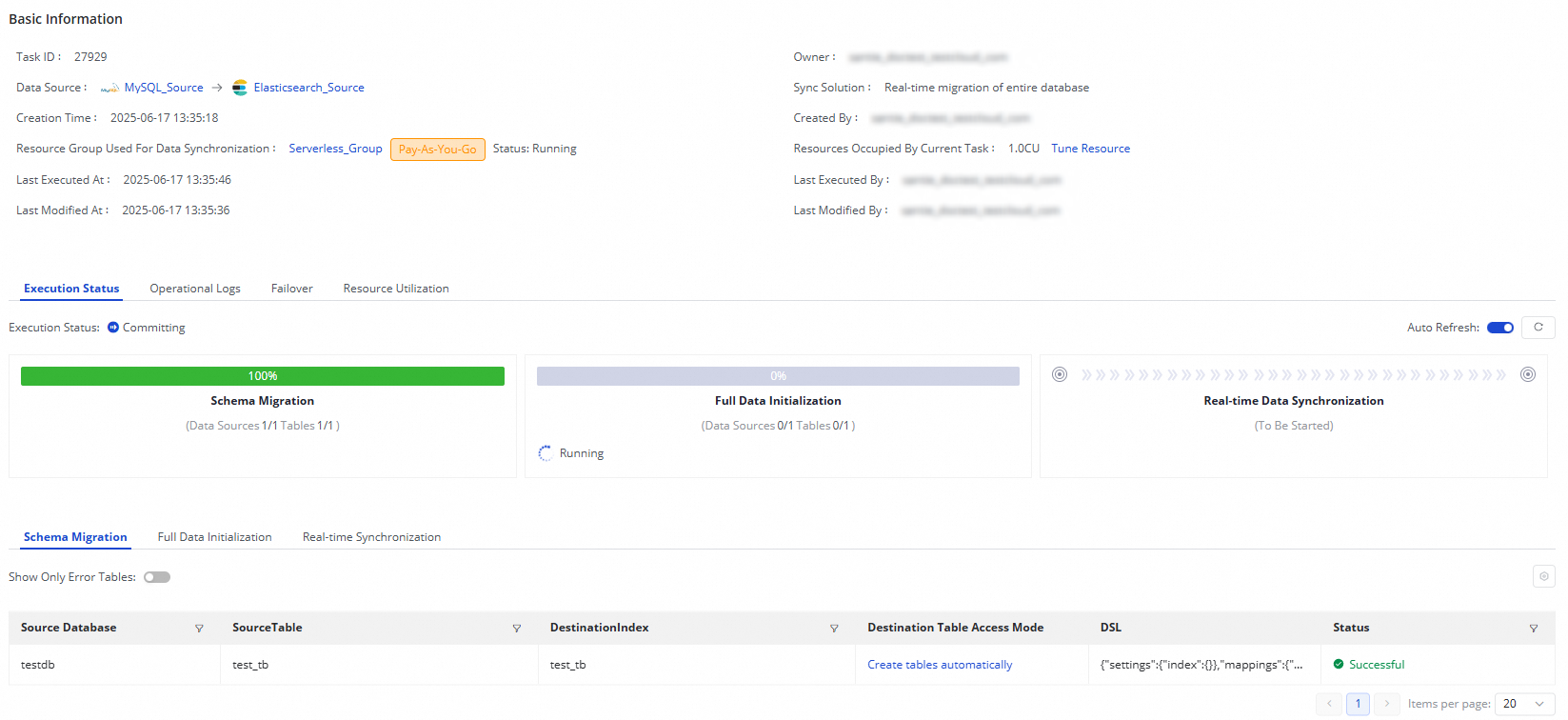

After creating a synchronization task, you can view the list of created tasks and their basic information on the Synchronization Task page.

-

In the Operation column, you can Start or Stop a synchronization task. Click More for additional options, such as View and Edit.

-

For running tasks, you can view their basic status in the Execution Overview. You can also click a specific area of the overview to see execution details.

A real-time database synchronization task from MySQL to Elasticsearch consists of three steps:

-

Schema Migration: Shows how the destination index was created (existing index or auto-created). If auto-created, the DDL statement is displayed.

-

Full Data Initialization: Displays information about the synchronized tables, their progress, and the number of records written.

-

Real-time Data Synchronization: Displays real-time statistics, including progress, DDL and DML records, and alert information.

-

Rerun the synchronization task

In some special cases, if you add tables to or remove tables from the source, or change the schema or name of a destination table, you can click More in the Operation column of the synchronization task and then click Rerun to rerun the task after the change. During the rerun process, the synchronization task synchronizes data only from the newly added tables to the destination or only from the mapped source table to the destination table whose schema or name is changed.

If you want to rerun the synchronization task without modifying the configuration of the task, click More in the Actions column and then click Rerun to rerun the task to perform full synchronization and incremental synchronization again.

If you want to rerun the synchronization task after you add tables to or remove tables from the task, click Complete after the change. In this case, Apply Updates is displayed in the Actions column of the synchronization task. Click Apply Updates to trigger the system to rerun the synchronization task. During the rerun process, the synchronization task synchronizes data from the newly added tables to the destination. Data in the original tables is not synchronized again.

Resume from checkpoint

Use cases

Manually resetting the position when starting or restarting a task is mainly applicable in the following scenarios:

-

Task recovery: If a task is interrupted, you can manually reset the starting position to the time of the interruption to ensure data synchronization resumes from the correct point.

-

Data troubleshooting and rollback: If you find missing or abnormal data after synchronization, you can roll back the position to a time before the issue occurred to replay and correct the problematic data.

-

Major task configuration changes: After making significant adjustments to the task configuration (such as destination index structure or field mappings), we recommend resetting the position to start synchronization from a specific point in time to ensure data accuracy with the new configuration.

Instructions

When you click Start, you can choose whether to reset the position in the dialog box:

-

Do not reset the position: The task resumes from the last position (checkpoint).

-

Reset the position to a specific time: The task starts from the specified time. Ensure the selected time is not earlier than the earliest available position in the source's Binlog.

If you encounter an error indicating an invalid or non-existent position during task execution, try the following solutions:

-

Reset the position: When starting the real-time synchronization task, reset the position and select the earliest available position in the source database's binlog.

-

Adjust log retention time: If the database position has expired, consider increasing the log retention period in the database, for example, to 7 days.

-

Resynchronize data: If data has been lost, consider performing a full synchronization again, or configure an offline synchronization task to manually synchronize the missing data.

FAQ

For FAQs about real-time database synchronization, see Data Integration FAQs and Data Integration errors.

button in the Destination Index Name column and add fields by editing the Statement Used to Create Index.

button in the Destination Index Name column and add fields by editing the Statement Used to Create Index.