This topic answers frequently asked questions about real-time synchronization in DataWorks Data Integration.

Configuration

What data sources does real-time synchronization support?

For a complete list of supported data sources, see Supported data sources for real-time synchronization.

Why is my real-time synchronization task latency high?

Start by identifying where the latency is coming from. On the Real-time Computing Nodes page in Operation Center, check the Latency value for your task.

| Symptom | Cause | Solution |

|---|---|---|

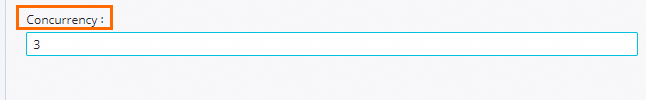

| High source latency only | Data is changing faster than the task can consume it at the source. | Increase the task's concurrency based on the number of databases or tables being synchronized, up to the limit supported by your resource group. If the source data volume has grown or you've added more tables, upgrade your resource group. |

| High destination latency only | The destination database is under heavy load. | Adjusting task concurrency will not help here — contact your database administrator. |

| Both source and destination latency are high | Network bottleneck, typically from running synchronization over the public internet. A significant performance difference between the source and destination databases or high database load can also cause high latency. | Switch to a private connection and sync over the internal network. If the database load is high, contact your database administrator. |

| Offset set too early | The starting offset is too far in the past and the task needs to catch up. | Wait for the task to catch up. |

For LogHub sources, set concurrency based on the number of shards. Make sure concurrency does not exceed the maximum connections allowed by the source database (for example, an RDS instance). For details, see DataWorks resource group overview.
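The sizing rule above amounts to taking the smallest of the applicable limits. The following is a minimal sketch of that arithmetic only. `suggest_concurrency` and its parameter names are hypothetical and are not part of any DataWorks API; the actual limits come from your source and resource group.

```python
def suggest_concurrency(shard_count: int,
                        source_max_connections: int,
                        resource_group_limit: int) -> int:
    """Return a concurrency value that respects every limit named in the text.

    All three inputs are assumptions supplied by the caller:
    - shard_count: number of shards (or databases/tables) being read
    - source_max_connections: connections the source allows
    - resource_group_limit: concurrency the resource group supports
    """
    limits = (shard_count, source_max_connections, resource_group_limit)
    if min(limits) < 1:
        raise ValueError("every limit must be at least 1")
    # Concurrency beyond the smallest limit brings no benefit and may be rejected.
    return min(limits)
```

For example, with 8 shards, 100 allowed source connections, and a resource group limit of 4, the sketch returns 4, which signals that the resource group is the constraint to address first.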

For detailed troubleshooting steps, see How to resolve high latency in real-time synchronization tasks.

Why is using the public internet not recommended for real-time synchronization?

Public internet connections introduce the following risks:

- Network instability and frequent packet loss degrade synchronization performance.
- The connection offers lower security compared to a private network.

Use a private connection to sync data over the internal network.

What extra columns does real-time synchronization add to the destination?

When Data Integration synchronizes data from MySQL, Oracle, LogHub, or PolarDB to DataHub or Apache Kafka in real time, it adds five extra columns to the destination table. These columns support metadata management, record sorting, and deduplication. For field details, see Column format in real-time synchronization.

How does real-time synchronization handle TRUNCATE operations?

TRUNCATE is supported and takes effect during incremental and full-incremental merges. If you configure the task to ignore TRUNCATE, redundant data may accumulate in the destination during synchronization.

How do I improve synchronization write speed?

Increase write-side concurrency and tune the JVM parameters. JVM memory allocation depends on the frequency of data changes, not the number of synchronized databases. If the resource group machine has available memory, allocating more reduces Full GC frequency and improves throughput.

Can I run a real-time synchronization task directly from the DataWorks console?

No. After configuring the task, submit and deploy the real-time node, then run it in the production environment. For details, see Operate and maintain a real-time synchronization task.

Why does synchronization slow down when using a MySQL source?

Binlogs are generated at the instance level, not the table level. Even if your task only synchronizes Table A, any changes to other tables on the same instance (Table B, Table C, and so on) generate binlogs that the task still has to process. A higher volume of binlogs across the instance slows down synchronization.
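The effect can be illustrated with a small simulation. This is a simplified model, not the actual reader implementation: `BinlogEvent` and `consume` are hypothetical names, and real binlog events carry far more structure. The point is only that every event on the instance is scanned, even though only events for the selected tables are emitted.

```python
from dataclasses import dataclass
from typing import Iterable, List, Set, Tuple


@dataclass(frozen=True)
class BinlogEvent:
    """Simplified stand-in for one change event in the instance-level binlog."""
    table: str
    payload: str


def consume(events: Iterable[BinlogEvent],
            wanted_tables: Set[str]) -> Tuple[int, List[BinlogEvent]]:
    """Scan every event, but keep only those for the tables being synchronized."""
    scanned = 0
    emitted: List[BinlogEvent] = []
    for event in events:
        scanned += 1  # work is done for every event on the instance
        if event.table in wanted_tables:
            emitted.append(event)
    return scanned, emitted


events = [
    BinlogEvent("table_a", "insert 1"),
    BinlogEvent("table_b", "update 7"),
    BinlogEvent("table_c", "delete 3"),
    BinlogEvent("table_a", "insert 2"),
    BinlogEvent("table_b", "update 8"),
]
scanned, emitted = consume(events, {"table_a"})
```

Here the task scans five events to emit two, so heavy write traffic on `table_b` and `table_c` still costs the task time.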

Why does selecting multiple databases use more memory than two separate tasks?

When you select two or more databases, the task switches to full-instance real-time synchronization mode. This single task in full-instance mode consumes more resources than two separate tasks each synchronizing a single database.

What DDL change policies does real-time synchronization support?

Each DDL event at the source is handled according to the policy you configure:

| Policy | Behavior |
|---|---|
| Processing | The DDL message is forwarded to the destination for processing. The exact behavior depends on the destination data source. |
| Ignore | The DDL message is discarded. The destination takes no action. |
| Alert | The DDL message is discarded and an alert is sent. The alert is only delivered if an alert rule is configured for the task. |
| Error | The task stops and enters an error state. An alert is sent if an alert rule is configured. |
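The four policies can be read as a small decision table. The sketch below models that table for illustration only. `DdlPolicy`, `Outcome`, and `handle_ddl` are hypothetical names and do not correspond to any DataWorks API.

```python
from dataclasses import dataclass
from enum import Enum


class DdlPolicy(Enum):
    PROCESSING = "processing"
    IGNORE = "ignore"
    ALERT = "alert"
    ERROR = "error"


@dataclass(frozen=True)
class Outcome:
    forwarded: bool     # DDL message is passed on to the destination
    alert_sent: bool    # an alert is actually delivered
    task_stopped: bool  # the task enters an error state


def handle_ddl(policy: DdlPolicy, alert_rule_configured: bool) -> Outcome:
    """Map a policy to its effect, following the policy table."""
    if policy is DdlPolicy.PROCESSING:
        return Outcome(forwarded=True, alert_sent=False, task_stopped=False)
    if policy is DdlPolicy.IGNORE:
        return Outcome(forwarded=False, alert_sent=False, task_stopped=False)
    if policy is DdlPolicy.ALERT:
        # The message is discarded; the alert needs a configured rule.
        return Outcome(forwarded=False, alert_sent=alert_rule_configured,
                       task_stopped=False)
    # DdlPolicy.ERROR: the task stops; the alert still needs a configured rule.
    return Outcome(forwarded=False, alert_sent=alert_rule_configured,
                   task_stopped=True)
```

Note that under both Alert and Error, no alert is delivered unless an alert rule exists for the task.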

Support by DDL type:

| DDL type | Supported destinations | Notes |
|---|---|---|
| Create Table | Apache Hudi tasks; sharded database tasks | For Hudi: a new table is created when the name matches the task's filter rules. For sharded databases: data is routed to the existing destination table; no new destination table is created. All other task types can only ignore, alert, or error. |
| Drop Table | Sharded database tasks | Data stops syncing from the dropped sub-table, but the destination table is not dropped. All other task types can only ignore, alert, or error. |
| Add Column | MaxCompute, Hologres, MySQL, Oracle, AnalyticDB for MySQL | Append new columns to the end of the table. Inserting a column in the middle may cause data anomalies. All other task types can only ignore, alert, or error. |
| Drop Column | None | Not supported. Configure the task to ignore, alert, or error. |
| Rename Table | None | Not supported. Configure the task to ignore, alert, or error. |
| Rename Column | None | Not supported. Configure the task to ignore, alert, or error. |
| Alter Column Type | Apache Hudi only | See Schema Evolution for details. All other task types can only ignore, alert, or error. |
| Clear Table (TRUNCATE) | MaxCompute, Hologres, MySQL, Oracle, AnalyticDB for MySQL | For sharded databases: only the sub-table's data is deleted from the destination. All other task types can only ignore, alert, or error. |

What should I know about DDL and DML behavior during synchronization?

After a new column is added at the source and the change is applied to the destination:

- Column with a default value: The new column in the destination remains NULL until new data is written to that column at the source.
- Virtual column: The new column in the destination remains NULL until new data is written at the source.

For MySQL and PolarDB for MySQL sources, append new columns to the end of the table rather than inserting them in the middle. If you must insert a column in the middle:

-

Do not add the column during the full synchronization phase. This will cause data anomalies in the subsequent real-time synchronization phase.

-

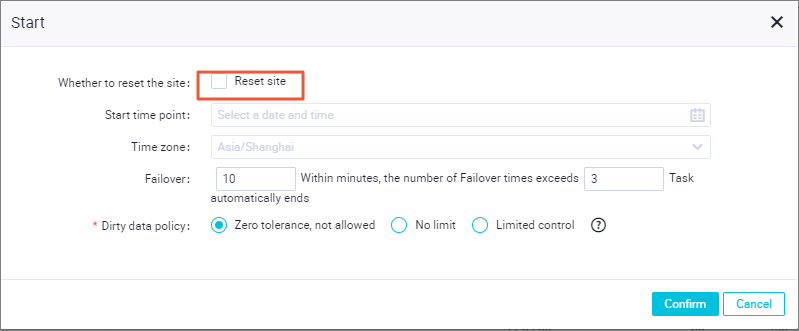

During real-time synchronization, reset the offset to a point after the DDL event that added the column.

If data anomalies occur, re-initialize the table by running a full data synchronization.

Do not use the Rename command to swap two column names (for example, swapping Column A and Column B). This can cause data loss or corruption.

Will default values and NOT NULL constraints be retained in destination tables?

No. When DataWorks creates a destination table, it retains only column names, data types, and comments from the source table. Default values, NOT NULL constraints, and indexes are not carried over.

Why is there high latency after a failover in a PostgreSQL source task?

This is inherent to how PostgreSQL handles replication after a failover. If the latency is unacceptable, stop and restart the task to trigger a full and incremental data synchronization.

How do I trigger a full synchronization for an existing task?

In Data Integration, open the Synchronization Task list, find your task, then in the Operation column click More > Rerun.

Common issues when synchronizing MySQL data

MySQL task stops reading data

- Run the following command in the source database to check the binlog file the instance is currently writing to: `SHOW MASTER STATUS;`
- Search the task logs for an entry like `journalName=MySQL-bin.000001,position=50` to confirm the binlog file the task is reading.
- If data is being written to the database but the binlog position is not advancing, contact your database administrator.
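The comparison in these steps can be scripted once you have a log line and the output of `SHOW MASTER STATUS`. The sketch below is a minimal example that assumes the log entry has exactly the `journalName=...,position=...` shape shown above and that binlog file names end in a numeric sequence such as `.000001`. The function names are hypothetical.

```python
import re
from typing import NamedTuple, Optional


class BinlogPosition(NamedTuple):
    file: str
    position: int


# Assumes the log format shown in the steps above.
_LOG_PATTERN = re.compile(r"journalName=(?P<file>[^,\s]+),position=(?P<pos>\d+)")


def parse_task_log(line: str) -> Optional[BinlogPosition]:
    """Extract the binlog file and position from one task log line, if present."""
    match = _LOG_PATTERN.search(line)
    if match is None:
        return None
    return BinlogPosition(match.group("file"), int(match.group("pos")))


def _sequence(binlog_file: str) -> int:
    """Numeric suffix of a binlog file name, e.g. 1 for 'MySQL-bin.000001'."""
    return int(binlog_file.rsplit(".", 1)[1])


def is_behind(task: BinlogPosition, master: BinlogPosition) -> bool:
    """True if the task is reading an earlier point than the instance is writing."""
    return (_sequence(task.file), task.position) < (_sequence(master.file), master.position)


task_pos = parse_task_log("reader state: journalName=MySQL-bin.000001,position=50")
# Values below stand in for the File and Position columns of SHOW MASTER STATUS.
master_pos = BinlogPosition("MySQL-bin.000003", 120)
```

If `is_behind(task_pos, master_pos)` stays true and the parsed position does not change between two log samples, the task has likely stopped advancing.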

Common issues for Oracle, PolarDB, and MySQL

Real-time synchronization task fails repeatedly

DDL messages from Oracle, PolarDB, and MySQL sources are not processed by default. If a DDL change other than CREATE TABLE occurs, the task fails. Because the resume feature re-reads from a slightly earlier offset on restart, it may re-read the DDL event and fail again even after the change is no longer present at the source.

To recover:

- Manually apply the same DDL change to the destination database.
- Start the task and temporarily change the DDL handling policy from Error to Ignore.
- Stop the task, change the DDL handling policy back to Error, and restart the task.

The temporary switch to Ignore prevents the task from failing when the resume feature re-reads the DDL event.

Do not use the Rename command to swap two column names (for example, swapping Column A and Column B). This can cause data loss or corruption.

Error messages and solutions

Kafka error: Startup mode for the consumer set to timestampOffset, but no begin timestamp was specified

Reset the starting offset using the Reset offset function in Data Integration. This sets a new starting point for synchronization and is useful when recovering from an error or resynchronizing data from a specific time or position.

MySQL error: Cannot replicate because the master purged required binary logs

MySQL no longer has the binlog at the offset the task requested. This typically happens when the task's starting offset is older than the binlog retention period configured in MySQL.

To resolve this:

- Check the binlog retention period in your MySQL configuration.
- Make sure the task's starting offset falls within the current retention window.
- If you cannot subscribe to the binlog at the required position, reset the offset to the current time.
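The check in these steps is a simple time-window comparison. The sketch below assumes retention is purely time-based and that you already know the retention period. MySQL can also purge binlogs for other reasons (for example, manual purges), so treat the result as a first check only. The function names are hypothetical.

```python
from datetime import datetime, timedelta, timezone


def offset_is_retained(start_offset: datetime,
                       now: datetime,
                       retention: timedelta) -> bool:
    """True if the starting offset falls inside the current retention window."""
    oldest_retained = now - retention
    return oldest_retained <= start_offset <= now


def choose_offset(start_offset: datetime,
                  now: datetime,
                  retention: timedelta) -> datetime:
    """Keep the requested offset if it is still retained, else fall back to now."""
    if offset_is_retained(start_offset, now, retention):
        return start_offset
    return now


now = datetime(2024, 1, 10, tzinfo=timezone.utc)
retention = timedelta(days=7)  # assumed retention period
recent = datetime(2024, 1, 5, tzinfo=timezone.utc)
stale = datetime(2024, 1, 1, tzinfo=timezone.utc)
```

With these values, `recent` is kept as the starting offset, while `stale` falls outside the seven-day window and the sketch falls back to the current time, which matches the last step above. Resetting to the current time skips any changes made before that point.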

MySQL error: MySQLBinlogReaderException

The full error message is: The database you are currently syncing is the standby database, but the current value of log_slave_updates is OFF, you need to enable the binlog log update of the standby database first.

Binary logging is not enabled on the standby database. To synchronize from a standby database, enable cascaded binary logging. For setup instructions, see Step 3: Enable binary logging for MySQL. Contact your database administrator if you need help enabling this setting.

MySQL error: show master status' has an error!

The underlying cause is a permissions issue: Access denied; you need (at least one of) the SUPER, REPLICATION CLIENT privilege(s) for this operation.

The account configured for the data source is missing the required database privileges. Grant the account the following privileges: SELECT, REPLICATION SLAVE, and REPLICATION CLIENT. For setup instructions, see Step 2: Create an account and configure permissions.
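As an illustration, the grant for the three privileges can be generated as follows. This is a sketch only: `build_grant` is a hypothetical helper, and the account name and host pattern are placeholders for your own values. `REPLICATION SLAVE` and `REPLICATION CLIENT` are global privileges in MySQL, so the statement uses `ON *.*`.

```python
import re

REQUIRED_PRIVILEGES = ("SELECT", "REPLICATION SLAVE", "REPLICATION CLIENT")

# Conservative whitelist so the generated statement cannot contain quotes.
_SAFE_NAME = re.compile(r"[A-Za-z0-9_.%-]+")


def build_grant(user: str, host: str = "%") -> str:
    """Build a MySQL GRANT statement for the privileges listed above."""
    for value in (user, host):
        if not _SAFE_NAME.fullmatch(value):
            raise ValueError(f"unsupported characters in {value!r}")
    privileges = ", ".join(REQUIRED_PRIVILEGES)
    return f"GRANT {privileges} ON *.* TO '{user}'@'{host}';"


statement = build_grant("sync_user")  # 'sync_user' is a placeholder account
```

Run the resulting statement with an account that is allowed to grant these privileges, then restart the synchronization task.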

MySQL error: parse.exception.PositionNotFoundException: can't find start position for xxx

The task could not find the specified offset. Reset the offset.

PolarDB error: The mysql server does not enable the binlog write function. Please enable the mysql binlog write function first

The loose_polar_log_bin parameter is not enabled on the PolarDB source. Enable this parameter following the instructions in Configure a PolarDB data source.

Hologres error: permission denied for database xxx

The current user lacks admin permissions on the Hologres instance. Grant the user admin permissions, which include the ability to create schemas. For details, see Hologres permission model.

MaxCompute error: ODPS-0410051:invalid credentials-accessKeyid not found

The temporary AccessKey used for the MaxCompute data source has expired. Temporary AccessKeys expire after seven days. The platform automatically restarts the task when it detects this failure. If you have configured monitoring alerts for task failures, you will receive an alert when this happens.

Oracle error: logminer doesn't init, send HeartbeatRecord

This is not an error. It indicates that the task is still initializing. When a task starts, it loads the previous archive log to find a suitable synchronization offset. If the archive log is large, this initialization can take 3 to 5 minutes to complete.