DataWorks Data Integration supports bidirectional synchronization with MySQL — both offline batch and real-time change data capture (CDC).

To synchronize data from a DRDS for MySQL database, configure it as a DRDS data source instead of a MySQL data source.

Compatibility

Supported versions

Both offline and real-time synchronization support MySQL 5.5.x, 5.6.x, 5.7.x, and 8.0.x. For MySQL 8.0.x, real-time synchronization supports all features except new 8.0-specific features such as functional indexes.

Supported instance types

| Instance type | Offline read | Offline write | Real-time read |

|---|---|---|---|

| Generic MySQL | Supported | Supported | Supported |

| ApsaraDB RDS for MySQL | Supported | Supported | Supported |

| Amazon RDS for MySQL | Supported | Supported | Supported |

| Amazon Aurora MySQL | Supported | Supported | Supported (primary instance only — see note below) |

| Azure MySQL | Supported | Supported | Supported |

For Amazon Aurora MySQL, connect real-time synchronization tasks to the primary (writer) instance. AWS does not allow enabling binary logging on Aurora MySQL read replicas, which real-time synchronization requires for incremental updates.

Offline synchronization also supports reading data from views.

Supported data types

The following table lists the major data types supported in MySQL 8.0.x. For a complete list, see the official MySQL documentation.

| Data type | Offline read | Offline write | Real-time read | Real-time write |

|---|---|---|---|---|

| TINYINT | Supported | Supported | Supported | Supported |

| SMALLINT | Supported | Supported | Supported | Supported |

| INTEGER | Supported | Supported | Supported | Supported |

| BIGINT | Supported | Supported | Supported | Supported |

| FLOAT | Supported | Supported | Supported | Supported |

| DOUBLE | Supported | Supported | Supported | Supported |

| DECIMAL/NUMERIC | Supported | Supported | Supported | Supported |

| REAL | Not supported | Not supported | Not supported | Not supported |

| VARCHAR | Supported | Supported | Supported | Supported |

| JSON | Supported | Supported | Supported | Supported |

| TEXT | Supported | Supported | Supported | Supported |

| MEDIUMTEXT | Supported | Supported | Supported | Supported |

| LONGTEXT | Supported | Supported | Supported | Supported |

| VARBINARY | Supported | Supported | Supported | Supported |

| BINARY | Supported | Supported | Supported | Supported |

| TINYBLOB | Supported | Supported | Supported | Supported |

| MEDIUMBLOB | Supported | Supported | Supported | Supported |

| LONGBLOB | Supported | Supported | Supported | Supported |

| ENUM | Supported | Supported | Supported | Supported |

| SET | Supported | Supported | Supported | Supported |

| BOOLEAN | Supported | Supported | Supported | Supported |

| BIT | Supported | Supported | Supported | Supported |

| DATE | Supported | Supported | Supported | Supported |

| DATETIME | Supported | Supported | Supported | Supported |

| TIMESTAMP | Supported | Supported | Supported | Supported |

| TIME | Supported | Supported | Supported | Supported |

| YEAR | Supported | Supported | Supported | Supported |

| LINESTRING | Not supported | Not supported | Not supported | Not supported |

| POLYGON | Not supported | Not supported | Not supported | Not supported |

| MULTIPOINT | Not supported | Not supported | Not supported | Not supported |

| MULTILINESTRING | Not supported | Not supported | Not supported | Not supported |

| MULTIPOLYGON | Not supported | Not supported | Not supported | Not supported |

| GEOMETRYCOLLECTION | Not supported | Not supported | Not supported | Not supported |

Limitations

Real-time synchronization

Read-only instances: Synchronizing data from MySQL read-only instances earlier than version 5.6.x is not supported.

Functional indexes: Synchronizing tables that contain functional indexes is not supported.

XA transactions: XA ROLLBACK is not supported. Data in the XA PREPARE state is written to the destination, but if an XA ROLLBACK occurs, real-time synchronization does not roll back that data. To handle this, remove the affected table from the real-time synchronization task and add it back to restart synchronization.

Binary log format: Only the ROW binary log format is supported. STATEMENT and MIXED formats are not supported.

Cascade deletes: Records deleted by a cascade delete operation in associated tables are not synchronized.

Online DDL: Only adding columns (Add Column) via Data Management (DMS) is supported for online DDL operations.

Stored procedures: Reading stored procedures from MySQL is not supported.

Offline synchronization

Sharded databases: When synchronizing from multiple tables in a sharded database, the number of sharded tasks equals the number of tables by default. To enable sharding for a single table, set the number of concurrent tasks to a value greater than the number of tables.

Stored procedures: Reading stored procedures from MySQL is not supported.

Prerequisites

Before adding a MySQL data source in DataWorks, complete the following setup on your MySQL database.

Step 1: Confirm the MySQL version

Run the following statement to check your MySQL version:

SELECT version();See the Compatibility section to confirm your version is supported.

Step 2: Configure account permissions

Create a dedicated MySQL account for DataWorks. Grant the account the minimum permissions required for your synchronization type.

Offline synchronization:

Read:

SELECTpermission on the target tablesWrite:

INSERT,DELETE, andUPDATEpermissions on the target tables

Real-time synchronization:

The account needs SELECT, REPLICATION SLAVE, and REPLICATION CLIENT permissions on the database. Run the following command to grant permissions (replace sync_account with your account name):

-- Create the sync account (optional if the account already exists)

-- CREATE USER 'sync_account'@'%' IDENTIFIED BY 'password';

-- Grant permissions

GRANT SELECT, REPLICATION SLAVE, REPLICATION CLIENT ON *.* TO 'sync_account'@'%';*.* grants the permissions on all tables in all databases. To grant permissions on specific tables, use a targeted statement instead. For example:

GRANT SELECT, REPLICATION CLIENT ON test.user TO 'sync_account'@'%';REPLICATION SLAVE is a global permission and cannot be granted on a specific table.Step 3: Enable binary logging (real-time synchronization only)

Data Integration performs real-time synchronization by subscribing to MySQL binary logs. Complete the following checks before configuring a synchronization task.

If a binary log is being consumed, the database cannot delete it. High-latency real-time synchronization tasks may cause binary logs to accumulate on the source database and consume disk space. Set up latency alerts and monitor disk space.

Retain binary logs for at least 72 hours. If a task fails and the binary log at the last-consumed offset has been deleted, you must perform a full offline synchronization to backfill the data.

Check 1: Confirm binary logging is enabled

SHOW variables LIKE "log_bin";If the result is ON, binary logging is enabled. For a secondary database, also run:

SHOW variables LIKE "log_slave_updates";If binary logging is not enabled, enable it based on your MySQL type:

Open source MySQL: see the official MySQL documentation

ApsaraDB RDS for MySQL: see RDS for MySQL log backup

PolarDB for MySQL: see Enable binary logging

Check 2: Confirm the binary log format is ROW

SHOW variables LIKE "binlog_format";Real-time synchronization requires the ROW format. If the result is STATEMENT or MIXED, update the binlog_format configuration.

Check 3: Confirm full row image logging is enabled

SHOW variables LIKE "binlog_row_image";Real-time synchronization requires the FULL row image. If the result is MINIMAL, update the binlog_row_image configuration.

Step 4 (optional): Authorize binary log access from OSS

This step applies only when all of the following are true:

You are adding the data source in Alibaba Cloud instance mode

Your RDS for MySQL instance is in the same region as the DataWorks workspace

You plan to enable Enable binary log reading from OSS

When enabled, if DataWorks cannot access the RDS binary logs directly, it falls back to obtaining them from OSS, preventing real-time synchronization task interruptions.

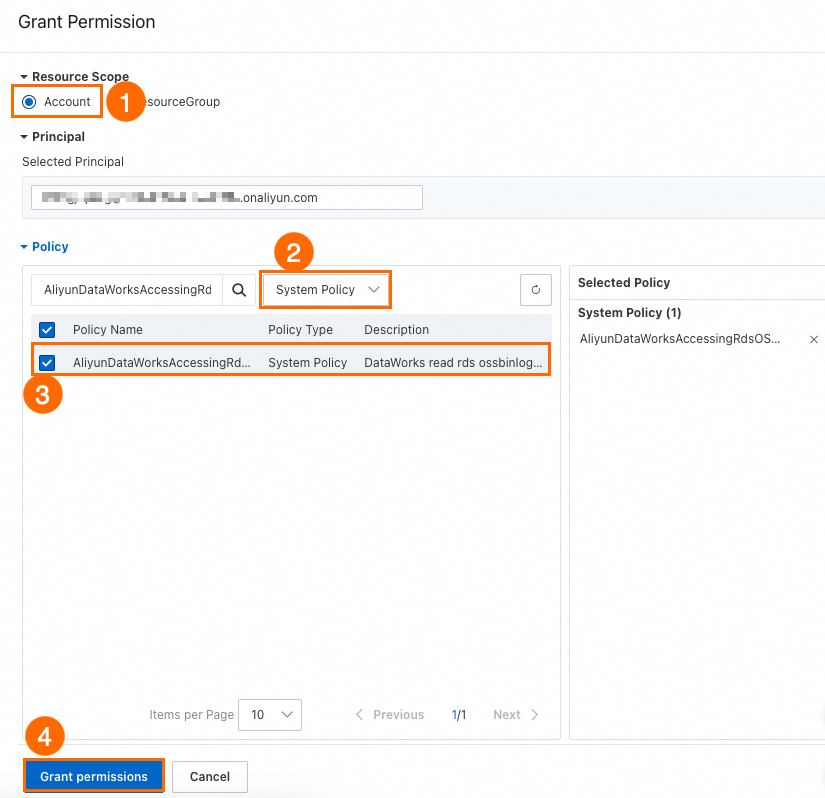

If you set Identity to access binary logs from OSS to Alibaba Cloud RAM user, configure the RAM user as follows:

Log on to the RAM console and find the RAM user.

In the Actions column, click Add permissions.

Set Resource scope to Account level, set Policy to System policy, and set Policy name to

AliyunDataWorksAccessingRdsOSSBinlogPolicy. Click Grant permissions.

If you set Identity to access binary logs from OSS to Alibaba Cloud RAM role, configure the RAM role as follows:

Log on to the RAM console and create a RAM role for a trusted Alibaba Cloud account with these parameters:

Principal type: Alibaba Cloud Account

Principal name: Other Alibaba Cloud Account — enter the ID of the Alibaba Cloud account that owns the DataWorks workspace

Role name: A custom name of your choice

Grant permissions to the RAM role with these parameters:

Policy: System policy

Policy name:

AliyunDataWorksAccessingRdsOSSBinlogPolicy

Modify the trust policy of the RAM role to the following:

{

"Statement": [

{

"Action": "sts:AssumeRole",

"Effect": "Allow",

"Principal": {

"Service": [

"<Alibaba_Cloud_account_ID_of_the_DataWorks_user>@di.dataworks.aliyuncs.com",

"<Alibaba_Cloud_account_ID_of_the_DataWorks_user>@dataworks.aliyuncs.com"

]

}

}

],

"Version": "1"

}Add a data source

Alibaba Cloud instance mode

Use this mode if your MySQL database is an ApsaraDB RDS for MySQL instance.

| Parameter | Description |

|---|---|

| Data source name | A unique name within the workspace. Use a name that identifies the business and environment, for example, rds_mysql_order_dev. |

| Configuration mode | Select Alibaba Cloud instance mode. For details, see Scenario 1: Instance mode (current Alibaba Cloud account) and Scenario 2: Instance mode (other Alibaba Cloud accounts). |

| Alibaba Cloud account | Select Current Alibaba Cloud account or Other Alibaba Cloud account. If you select Other Alibaba Cloud account, configure cross-account authorization and specify the Other Alibaba Cloud account ID and the RAM role for authorization provided by that account. |

| Region | The region where the RDS instance is located. |

| Instance | The RDS instance to connect to. |

| Configure secondary instance | If the instance has a read-only instance, you can select it for read operations. This offloads reads to the read-only instance and avoids affecting the primary instance. |

| Instance address | After selecting the instance, click Get latest endpoint to view the public or private endpoint, VPC, and vSwitch. |

| Database | The database name. The specified account must have permissions to access this database. |

| Username/Password | The credentials of the MySQL account. For RDS instances, manage accounts in the Account management section. |

| Enable binary log reading from OSS | When enabled, DataWorks falls back to OSS to obtain binary logs if it cannot access the RDS binary logs directly. This prevents real-time synchronization task interruptions. Configure authorization first — see Step 4 (optional): Authorize binary log access from OSS. Then set OSS binlog access identity based on your authorization configuration. |

| Authentication method | Select No authentication or SSL authentication. If you select SSL authentication, SSL must be enabled on the instance. Upload the certificate file to Certificate file management. |

| Version | Run SELECT VERSION() on the MySQL server to get the version number. |

Connection string mode

Use this mode to connect using a JDBC URL, which works for any MySQL-compatible database.

| Parameter | Description |

|---|---|

| Data source name | A unique name within the workspace. Use a name that identifies the business and environment, for example, rds_mysql_order_dev. |

| Configuration mode | Select Connection string mode. |

| JDBC connection string preview | DataWorks automatically builds the JDBC URL from the endpoint and database name you enter. |

| Connection address | The IP address or domain name of the database host, and the port (default: 3306). For RDS instances, find the endpoint on the instance page. |

| Database name | The database name. The specified account must have permissions to access this database. |

| Username/Password | The credentials of the MySQL account. For RDS instances, manage accounts in the Account management section. |

| Authentication method | Select No authentication or SSL authentication. If you select SSL authentication, upload the certificate file to Authentication file management. |

| Version | Run SELECT VERSION() on the MySQL server to get the version number. |

| Advanced parameters | Select a parameter name (for example, connectTimeout) and enter a value (for example, 3000). DataWorks appends the parameter to the JDBC URL: jdbc:mysql://192.168.90.28:3306/test?connectTimeout=3000. |

Verify that the resource group can connect to the data source before proceeding. Network configuration requirements depend on your network environment and connection mode. For details, see Test connectivity.

Configure a synchronization task

Offline synchronization (single table)

Codeless UI: Configure an offline synchronization task in the codeless UI

Code editor: Configure an offline synchronization task in the code editor

For a complete parameter reference and script examples, see Appendix: Script examples and parameter reference.

Real-time synchronization (single table)

Database-level synchronization (offline, full + incremental, and sharded)

FAQ

An error is reported during real-time synchronization of MySQL data

Why does the speed of real-time synchronization for a MySQL data source slow down?

For other common Data Integration issues, see FAQ about Data Integration.

Appendix: Script examples and parameter reference

When configuring an offline synchronization task in the code editor, use the unified script format. For the general code editor setup, see Configure a task in the code editor.

Reader script examples

The comments in the following JSON examples are for demonstration purposes only. Remove comments before using the script.

Single table in a single database

{

"type": "job",

"version": "2.0",

"steps": [

{

"stepType": "mysql",

"parameter": {

"column": [

"id"

],

"connection": [

{

"querySql": [

"select a,b from join1 c join join2 d on c.id = d.id;"

],

"datasource": ""

}

],

"where": "",

"splitPk": "",

"encoding": "UTF-8"

},

"name": "Reader",

"category": "reader"

},

{

"stepType": "stream",

"parameter": {},

"name": "Writer",

"category": "writer"

}

],

"setting": {

"errorLimit": {

"record": "0"

},

"speed": {

"throttle": true,

"concurrent": 1,

"mbps": "12"

}

},

"order": {

"hops": [

{

"from": "Reader",

"to": "Writer"

}

]

}

}Sharded tables

The MySQL Reader supports reading from multiple tables with the same schema across different data sources and writing to a single destination table. To configure database-level sharding, create a task in Data Integration and select the database-level sharding feature.

{

"type": "job",

"version": "2.0",

"steps": [

{

"stepType": "mysql",

"parameter": {

"indexes": [

{

"type": "unique",

"column": [

"id"

]

}

],

"envType": 0,

"useSpecialSecret": false,

"column": [

"id",

"buyer_name",

"seller_name",

"item_id",

"city",

"zone"

],

"tableComment": "Test order table",

"connection": [

{

"datasource": "rds_dataservice",

"table": [

"rds_table"

]

},

{

"datasource": "rds_workshop_log",

"table": [

"rds_table"

]

}

],

"where": "",

"splitPk": "id",

"encoding": "UTF-8"

},

"name": "Reader",

"category": "reader"

},

{

"stepType": "odps",

"parameter": {},

"name": "Writer",

"category": "writer"

},

{

"name": "Processor",

"stepType": null,

"category": "processor",

"copies": 1,

"parameter": {

"nodes": [],

"edges": [],

"groups": [],

"version": "2.0"

}

}

],

"setting": {

"executeMode": null,

"errorLimit": {

"record": ""

},

"speed": {

"concurrent": 2,

"throttle": false

}

},

"order": {

"hops": [

{

"from": "Reader",

"to": "Writer"

}

]

}

}Reader parameters

| Parameter | Description | Required | Default |

|---|---|---|---|

datasource | The data source name. Must match the name of the data source added in DataWorks. | Yes | None |

table | The table to read from. A single task can read from one logical table. To read from sharded tables, specify a range: "table_[0-99]" reads from table_0 through table_99. For zero-padded suffixes such as table_000 through table_999, use ["table_00[0-9]","table_0[10-99]","table_[100-999]"]. All matched tables must contain the columns specified by column. | Yes | None |

column | The columns to synchronize, as a JSON array. Use ["*"] for all columns. Supports column cropping, reordering, and constant values. Constants follow MySQL SQL syntax — for example: ["id", "1", "'literal_string'", "2.3", "true", "null"]. | Yes | None |

splitPk | The shard key for parallel data extraction. Use the primary key for even distribution. Supports integer types only — strings, floats, and dates are not supported. If omitted or left blank, the task uses a single channel. | No | None |

splitFactor | The sharding factor. The total number of shards is concurrent threads × splitFactor. For example, 5 threads × 5 factor = 25 shards processed by 5 threads. Keep the value between 1 and 100 — a value that is too large may cause an out-of-memory (OOM) error. | No | 5 |

where | A filter condition appended to the SQL query, for example, gmt_create>$bizdate for daily incremental synchronization. Omit this parameter or leave it blank to synchronize all data. Do not use LIMIT — it does not comply with MySQL WHERE clause constraints. | No | None |

querySql | A custom SQL query that overrides table, column, where, and splitPk. Use this for complex scenarios such as multi-table joins: select a,b from table_a join table_b on table_a.id = table_b.id. The datasource parameter is still used to resolve credentials. This parameter is case-sensitive — querysql (lowercase) does not take effect. Not available in the codeless UI. | No | None |

useSpecialSecret | When multiple source data sources are configured with different credentials, set to true to use each data source's own password. | No | false |

Writer script example

{

"type": "job",

"version": "2.0",

"steps": [

{

"stepType": "stream",

"parameter": {},

"name": "Reader",

"category": "reader"

},

{

"stepType": "mysql",

"parameter": {

"postSql": [],

"datasource": "",

"column": [

"id",

"value"

],

"writeMode": "insert",

"batchSize": 1024,

"table": "",

"nullMode": "skipNull",

"skipNullColumn": [

"id",

"value"

],

"preSql": [

"delete from XXX;"

]

},

"name": "Writer",

"category": "writer"

}

],

"setting": {

"errorLimit": {

"record": "0"

},

"speed": {

"throttle": true,

"concurrent": 1,

"mbps": "12"

}

},

"order": {

"hops": [

{

"from": "Reader",

"to": "Writer"

}

]

}

}Writer parameters

| Parameter | Description | Required | Default |

|---|---|---|---|

datasource | The data source name. Must match the name of the data source added in DataWorks. | Yes | None |

table | The destination table name. | Yes | None |

writeMode | The write mode. Options: insert, update, replace. See the table below for behavior details. | No | insert |

column | The destination columns to write to, as a comma-separated list — for example, ["id","name","age"]. Use ["*"] to write to all columns in order. | Yes | None |

nullMode | How to handle null values from the source. writeNull: write NULL to the destination field. skipNull: skip writing to the destination field; the column's default value is used if one is defined. When using skipNull, also configure skipNullColumn. | No | writeNull |

skipNullColumn | When nullMode is skipNull, these columns use their default values instead of being forced to NULL. Format: ["c1","c2",...]. The specified columns must be a subset of column. | No | All columns in the task |

preSql | SQL statements to run before the synchronization task starts — for example, TRUNCATE TABLE tablename to clear existing data. The codeless UI supports one statement; the code editor supports multiple. Transactions are not supported across multiple statements. | No | None |

postSql | SQL statements to run after the synchronization task completes — for example, adding a timestamp column. The codeless UI supports one statement; the code editor supports multiple. Transactions are not supported across multiple statements. | No | None |

batchSize | The number of records submitted per batch. Larger values reduce round-trips to MySQL and improve throughput, but may cause OOM errors if set too high. | No | 256 |

updateColumn | When writeMode is update, the columns to update on a primary key or unique index conflict — for example, ["name","age"]. | No | None |

Write mode behavior

| Code editor value | UI option | Behavior on conflict |

|---|---|---|

insert | INSERT INTO | Conflicting rows are skipped and counted as dirty data |

update | ON DUPLICATE KEY UPDATE | Specified fields in the existing row are updated with values from the new row |

replace | REPLACE INTO | The existing row is deleted and the new row is inserted |

When nullMode is set to skipNull, the task dynamically generates the write SQL to support column default values. This increases the number of FLUSH operations and reduces synchronization speed. In the worst case, a FLUSH is performed for each record.