The StarRocks data source lets you read data from and write data to StarRocks in DataWorks Data Integration tasks.

Supported versions

| Deployment type | Supported versions |
|---|---|
| EMR Serverless StarRocks | All versions |
| E-MapReduce on ECS | StarRocks 2.1 |
| StarRocks Community Edition | Supported (must be deployed on E-MapReduce on ECS) |

DataWorks connects to StarRocks only over an internal network. If you use StarRocks Community Edition, deploy it on E-MapReduce on ECS. If you encounter compatibility issues, submit a ticket.

Supported data types

Only numeric, string, and date data types are supported.

Limitations

- For real-time synchronization of an entire database from MySQL to StarRocks, the destination StarRocks table must use a primary key model.
- During real-time synchronization of an entire database from MySQL to StarRocks, DDL operations other than TRUNCATE are not supported. Configure the task to either ignore these DDL operations or report an error.

Network connectivity

Before adding StarRocks as a data source, make sure the DataWorks resource group can reach your StarRocks instance.

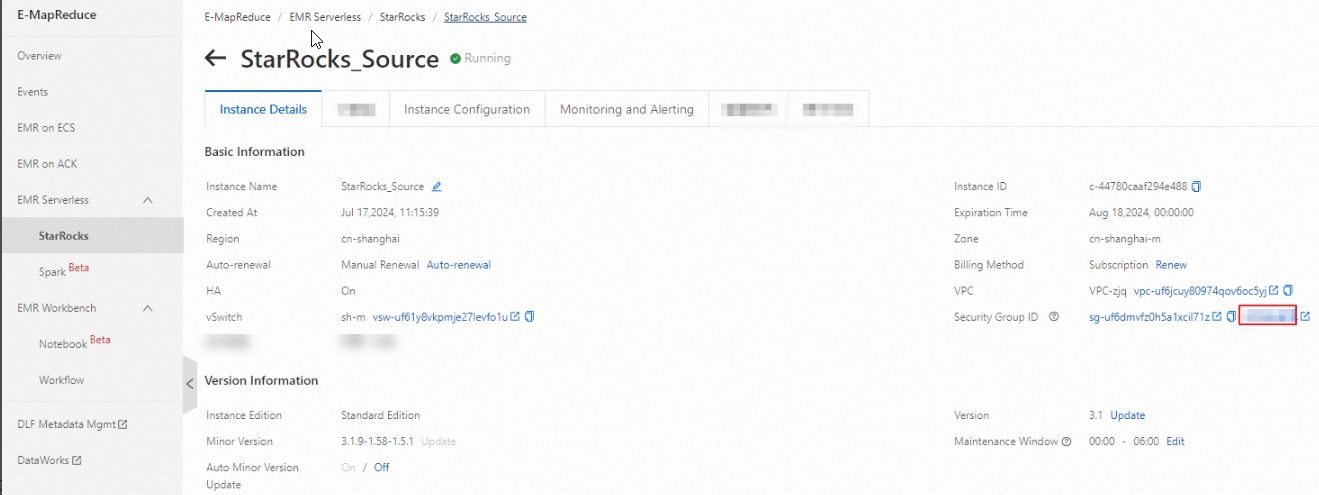

EMR Serverless StarRocks

Add the IP addresses of the DataWorks resource group to the IP allowlist of your EMR Serverless StarRocks instance.

- For the IP addresses of DataWorks resource groups, see General configurations: Add IP addresses to an allowlist.
- Add IP addresses to the allowlist in the EMR console.

Self-managed StarRocks

Make sure the DataWorks resource group can reach the query port, FE port, and BE port of your StarRocks instance. The default ports are 9030, 8030, and 8040.
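As a rough pre-check, the three ports can be probed with a plain TCP connect. The sketch below is an illustration only, not a DataWorks tool: the helper names `port_is_open` and `check_starrocks_ports` are hypothetical, and the port numbers are the defaults listed above. A successful connect shows only that the port is reachable from the machine running the script, so run it from a host in the same network as the resource group.

```python
import socket

# Default StarRocks ports named in this section; adjust if your cluster differs.
DEFAULT_PORTS = {"query": 9030, "fe": 8030, "be": 8040}


def port_is_open(host, port, timeout=3.0):
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name resolution failures.
        return False


def check_starrocks_ports(host, ports=None, timeout=3.0):
    """Probe each named port and return {name: reachable} (hypothetical helper)."""
    ports = DEFAULT_PORTS if ports is None else ports
    return {name: port_is_open(host, port, timeout) for name, port in ports.items()}
```

Calling `check_starrocks_ports("<fe-host>")` returns a dictionary keyed by `query`, `fe`, and `be`, where each value indicates whether the corresponding port accepted a connection.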

Add a data source

Before developing a synchronization task, add StarRocks as a data source in DataWorks. For instructions, see Data source management. Parameter descriptions are available in the DataWorks console when you add the data source.

Select a connection mode based on your network environment:

Internal network connection (recommended)

Use this mode when your StarRocks instance and the Serverless resource group are in the same Virtual Private Cloud (VPC). An internal network connection provides low latency without exposing data to the public internet.

Both Alibaba Cloud instance mode and Connection string mode are supported:

- Alibaba Cloud instance mode: Select a StarRocks instance in the same VPC directly. The system retrieves the connection information automatically.
- Connection string mode: Enter the internal address or IP address, port, and Load URL of the instance.

Public network connection

Use this mode when you need to access StarRocks over the public network, for example, for cross-region access or from an on-premises environment. Only Connection string mode is supported.

- Enable public network access on your StarRocks instance before connecting.
- Connection string mode: Enter the public address or IP address, port, and Load URL of the instance.

Serverless resource groups cannot access the public network by default. To connect to StarRocks over a public endpoint, configure a NAT Gateway and an Elastic IP address (EIP) for the bound VPC. Make sure the Serverless resource group can reach the query port, FE port, and BE port (defaults: 9030, 8030, and 8040).

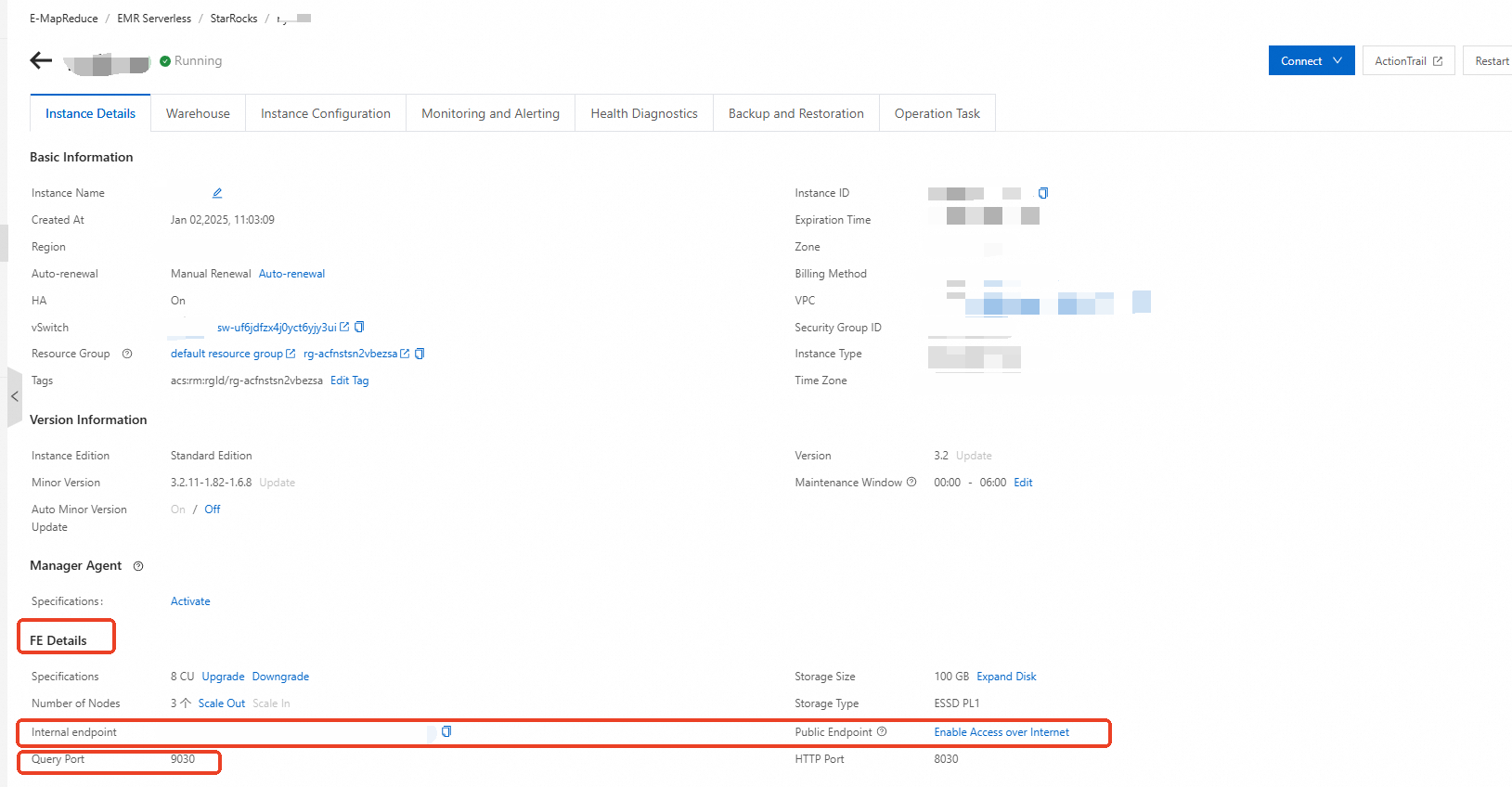

For Alibaba Cloud EMR StarRocks Serverless, set Host Address/IP Address to Internal Endpoint or Public network address, and set the port to a query port.

-

FE: Get the FE information from the instance details page.

-

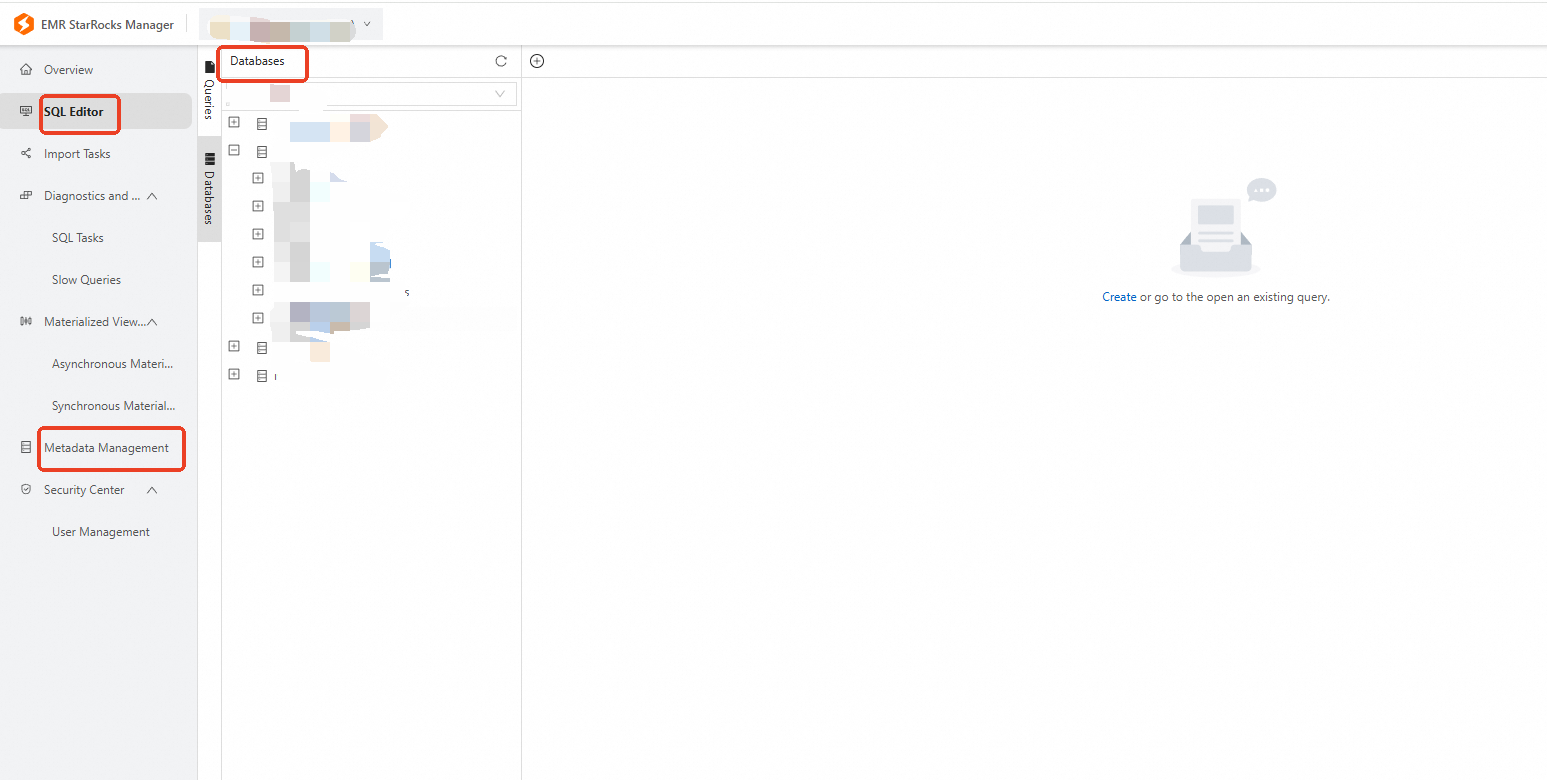

Database: After connecting through EMR StarRocks Manager, view databases in SQL Editor or Metadata Management.

To create a database, run SQL commands directly in the SQL editor.

Configure a synchronization task

Select a configuration guide based on your synchronization type.

| Synchronization type | Supported sources | Configuration guide |
|---|---|---|
| Batch synchronization for a single table | All data source types supported by Data Integration | Codeless UI / Code Editor |
| Real-time synchronization for a single table | Kafka | Configure a full-database real-time synchronization task |
| Batch synchronization for a full database | MySQL | Configure a full-database real-time synchronization task |
| Real-time synchronization for a full database | MySQL, Oracle, and PolarDB | Configure a full-database real-time synchronization task |

For batch synchronization using the Code Editor, see the parameter reference in Appendix: Code and parameters.

Appendix: Code and parameters

Configure a batch synchronization task using the Code Editor

When configuring a batch synchronization task in the Code Editor, set the parameters in the script according to the unified script format. For format requirements, see Use the Code Editor.

Reader

Code example

    {
        "stepType": "starrocks",
        "parameter": {
            "selectedDatabase": "didb1",
            "datasource": "starrocks_datasource",
            "column": [
                "id",
                "name"
            ],
            "where": "id>100",
            "table": "table1",
            "splitPk": "id"
        },
        "name": "Reader",
        "category": "reader"
    }

Parameters

| Parameter | Required | Default | Description |
|---|---|---|---|
| datasource | Yes | None | The name of the StarRocks data source. |
| table | Yes | None | The source table. |
| column | Yes | None | The column names to synchronize. To add a SET_VAR hint, prepend it to the first column name. For example, to add SET_VAR(enable_spill = true) when reading the id column, configure column as ["/*+ SET_VAR(enable_spill = true)*/ id"]. |
| selectedDatabase | No | Database from the data source configuration | The name of the StarRocks database. |
| where | No | None | A filter condition for incremental synchronization. For example, gmt_create>${bizdate} syncs records created on the current business date. Omit this parameter for a full sync. |
| splitPk | No | None | The field used to shard data for concurrent synchronization. Use the table's primary key for even data distribution and to avoid hot spots. |
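The reader parameters above can also be assembled programmatically before the script is pasted into the Code Editor. The following is a minimal sketch, not part of DataWorks: `build_reader_step` is a hypothetical helper, and the data source, table, and column names are the placeholder values from the code example. It shows how a SET_VAR hint ends up in front of the first column name and how optional parameters are left out when unset.

```python
import json


def build_reader_step(datasource, table, columns, where=None,
                      split_pk=None, set_var_hint=None):
    """Assemble a reader step dict from the documented parameters (hypothetical helper)."""
    columns = list(columns)
    if not columns:
        raise ValueError("column is required and must not be empty")
    if set_var_hint:
        # The hint is prepended to the first column name, as described for `column`.
        columns[0] = f"/*+ SET_VAR({set_var_hint})*/ {columns[0]}"
    parameter = {"datasource": datasource, "table": table, "column": columns}
    # Optional parameters are emitted only when a value is given.
    if where:
        parameter["where"] = where
    if split_pk:
        parameter["splitPk"] = split_pk
    return {"stepType": "starrocks", "parameter": parameter,
            "name": "Reader", "category": "reader"}


step = build_reader_step(
    datasource="starrocks_datasource",  # placeholder name from the example
    table="table1",
    columns=["id", "name"],
    where="gmt_create>${bizdate}",
    split_pk="id",
    set_var_hint="enable_spill = true",
)
print(json.dumps(step, indent=2))
```

Because `selectedDatabase` is not set here, the resulting step relies on the database from the data source configuration.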

Writer

Code example

    {
        "stepType": "starrocks",
        "parameter": {
            "selectedDatabase": "didb1",
            "loadProps": {
                "row_delimiter": "",
                "column_separator": ""
            },
            "datasource": "starrocks_public",
            "column": [
                "id",
                "name"
            ],
            "loadUrl": [
                "1.1.X.X:8030"
            ],
            "table": "table1",
            "preSql": [
                "truncate table table1"
            ],
            "postSql": [],
            "maxBatchRows": 500000,
            "maxBatchSize": 5242880,
            "strategyOnError": "exit"
        },
        "name": "Writer",
        "category": "writer"
    }

Parameters

| Parameter | Required | Default | Description |
|---|---|---|---|
| datasource | Yes | None | The name of the StarRocks data source. |
| table | Yes | None | The destination table. |
| column | Yes | None | The destination columns to write data to. |
| loadUrl | Yes | None | The FE IP address and HTTP port (default: 8030). For multiple FE nodes, separate entries with a comma. For example: ["192.168.1.1:8030","192.168.1.2:8030"]. |
| loadProps | Yes | None | Request parameters for the StarRocks StreamLoad job. Set to {} if no special configuration is needed. See loadProps parameters for details. |
| selectedDatabase | No | Database from the data source configuration | The name of the StarRocks database. |
| preSql | No | None | An SQL statement to run before the synchronization task starts. For example: TRUNCATE TABLE table1. |
| postSql | No | None | An SQL statement to run after the synchronization task completes. |
| maxBatchRows | No | 500000 | The maximum number of rows per write batch. |
| maxBatchSize | No | 5242880 | The maximum data size per write batch, in bytes. |
| strategyOnError | No | exit | The error handling policy for batch writes. exit: fail and exit the task if an error occurs. batchDirtyData: record the failed batch as dirty data and continue. |
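Before submitting a script, it can help to check the writer parameters against the table above. The sketch below is one possible pre-flight check under stated assumptions: `validate_writer_parameter` is a hypothetical helper, the host:port check on `loadUrl` is an assumed format rule inferred from the documented example, and DataWorks may apply different or additional validation.

```python
REQUIRED = ("datasource", "table", "column", "loadUrl", "loadProps")
ERROR_STRATEGIES = ("exit", "batchDirtyData")


def validate_writer_parameter(parameter):
    """Return a list of problems found in a writer `parameter` dict (hypothetical helper)."""
    problems = [f"missing required parameter: {key}"
                for key in REQUIRED if key not in parameter]
    # strategyOnError defaults to "exit" when omitted.
    strategy = parameter.get("strategyOnError", "exit")
    if strategy not in ERROR_STRATEGIES:
        problems.append(f"unknown strategyOnError: {strategy}")
    # Assumed rule: each loadUrl entry looks like "<fe-host>:<http-port>".
    for entry in parameter.get("loadUrl", []):
        host, sep, port = entry.rpartition(":")
        if not (sep and host and port.isdigit()):
            problems.append(f"loadUrl entry is not host:port: {entry}")
    return problems


writer_parameter = {
    "datasource": "starrocks_public",  # placeholder name from the example
    "table": "table1",
    "column": ["id", "name"],
    "loadUrl": ["192.168.1.1:8030", "192.168.1.2:8030"],
    "loadProps": {},
    "strategyOnError": "batchDirtyData",
}
print(validate_writer_parameter(writer_parameter))  # prints []
```

The example lists two FE nodes in `loadUrl` and passes an empty `loadProps`, which the table allows when no special StreamLoad configuration is needed.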

loadProps parameters

loadProps configures the underlying StreamLoad import job. The available parameters depend on the import format.

CSV format (default)

| Parameter | Default | Description |
|---|---|---|
| column_separator | \t | The column separator. |
| row_delimiter | \n | The row delimiter. |
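To see why the defaults can be a problem, consider a field value that itself contains a tab. The sketch below is a simplified model, not the actual StreamLoad encoder: it assumes rows are joined without any escaping, and the replacement separators `\x01` and `\x02` are illustrative choices only.

```python
def encode_csv(rows, column_separator="\t", row_delimiter="\n"):
    """Join rows of string fields into one CSV-style payload without escaping."""
    return row_delimiter.join(column_separator.join(fields) for fields in rows)


def columns_per_row(payload, column_separator="\t", row_delimiter="\n"):
    """Split the payload back and count the fields seen in each row."""
    return [len(line.split(column_separator))
            for line in payload.split(row_delimiter)]


rows = [["1", "tab\tinside"], ["2", "plain"]]

# Default separators: the embedded tab is read as an extra column.
default_counts = columns_per_row(encode_csv(rows))

# Separators that do not occur in the data keep two columns per row.
safe_counts = columns_per_row(encode_csv(rows, "\x01", "\x02"), "\x01", "\x02")

print(default_counts, safe_counts)  # prints [3, 2] [2, 2]
```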

If your data contains the default delimiter characters, specify alternative delimiters:

    {"column_separator": "<your-separator>", "row_delimiter": "<your-delimiter>"}

JSON format

Set "format": "json" to import data in JSON format:

    {
        "format": "json"
    }

Additional parameters for JSON imports:

| Parameter | Default | Description |
|---|---|---|
| strip_outer_array | false | Whether to strip the outermost array [] and import each element as a separate row. Set to true when the JSON data is wrapped in an outer array. |
| ignore_json_size | None | Whether to bypass the 100 MB JSON body size check. If the JSON body exceeds 100 MB, set this to true to skip the check. |
| compression | None | The compression algorithm for data transmission. Supported values: GZIP, BZIP2, LZ4_FRAME, ZSTD. |
| strict_mode | false | Whether to enable strict mode. true: filter out rows with conversion errors and import only valid rows. false: convert fields that fail type conversion to NULL and import them along with valid rows. |
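The two strict_mode behaviors can be illustrated with a toy conversion step. This is a sketch of the policy as described in the table, not StarRocks code: `apply_strict_mode` is a hypothetical function, and it assumes a two-column row whose first field must convert to an integer.

```python
def apply_strict_mode(rows, strict_mode=False):
    """Convert the first field of each row to int, following the described policy."""
    loaded = []
    for raw_id, name in rows:
        try:
            value = int(raw_id)
        except ValueError:
            if strict_mode:
                continue  # strict: the whole row is filtered out
            value = None  # non-strict: the failed field becomes NULL
        loaded.append((value, name))
    return loaded


rows = [("1", "alice"), ("not-a-number", "bob")]
print(apply_strict_mode(rows, strict_mode=True))   # prints [(1, 'alice')]
print(apply_strict_mode(rows, strict_mode=False))  # prints [(1, 'alice'), (None, 'bob')]
```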