When a real-time synchronization node falls behind, work through these checks in order to identify the root cause and apply the right fix.

Identify whether the bottleneck is at the source or destination

Open the Details tab for the node in Operation Center (Real-Time Node O&M > Real-time Synchronization Nodes > click the node name on the Real Time DI page).

In the Reader Statistics and Writer Statistics sections, compare the waitTimeWindow (5 min) values. This metric shows how long the node waited — either to read from the source or to write to the destination — during the past 5 minutes. The side with the higher value is where the bottleneck is.

For more information on navigating to this view, see Manage real-time synchronization tasks.

A real-time synchronization node reads from the source and writes to the destination in sequence. If writing is slower than reading, the destination imposes backpressure on the reader, which slows down ingestion. Focus your investigation on the side with the higher waitTimeWindow (5 min) value.
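The comparison above can be written as a small helper. This is only a sketch: the function name and return labels are hypothetical, and it assumes both waitTimeWindow (5 min) values have been copied by hand from the Details tab in the same unit.

```python
def bottleneck_side(reader_wait: float, writer_wait: float) -> str:
    """Pick the side to investigate first.

    Both arguments are the waitTimeWindow (5 min) values read manually
    from Reader Statistics and Writer Statistics (same unit assumed).
    """
    if reader_wait > writer_wait:
        return "source"
    if writer_wait > reader_wait:
        return "destination"
    # Equal values give no signal; continue with the remaining checks.
    return "inconclusive"
```

For example, `bottleneck_side(12, 240)` points at the destination, so the writer-side checks later in this topic are the place to start.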

Check for exceptions during the latency period

On the Log tab of the same panel, search for any of the following keywords within the time window when latency spiked:

- Error
- error
- Exception
- exception
- OutOfMemory

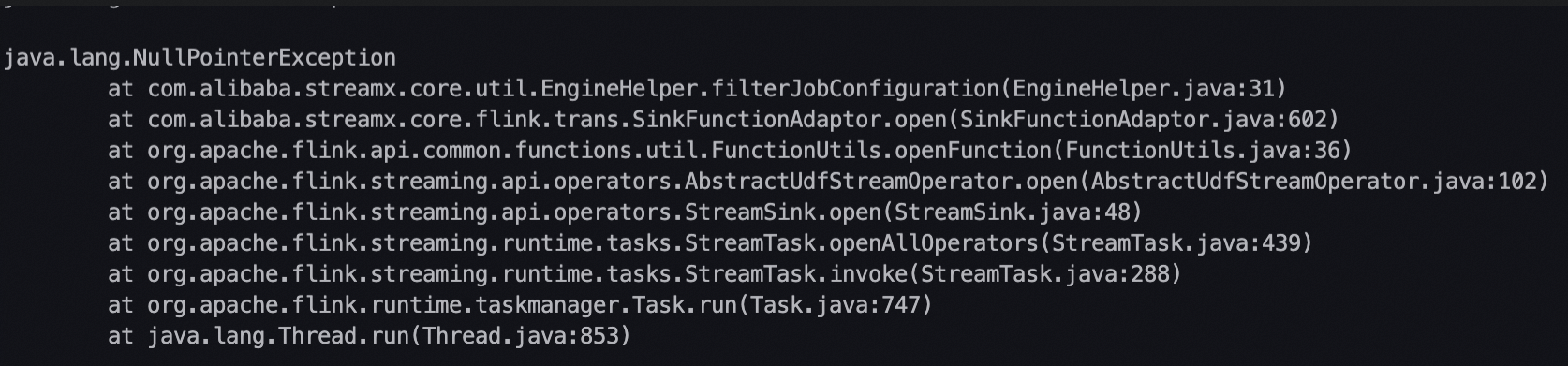

If exception log entries appear during the latency period, see FAQ about real-time synchronization to determine whether optimizing the node configuration resolves the issue.
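If you have downloaded or copied the log text, the keyword search can also be done offline. The sketch below assumes each log entry starts with a timestamp in the form `YYYY-MM-DD HH:MM:SS`; that layout, the function name, and the sample lines are illustrative assumptions, not the documented log format.

```python
import re
from datetime import datetime

# Keywords listed in this topic.
KEYWORDS = re.compile(r"Error|error|Exception|exception|OutOfMemory")
# Assumed (hypothetical) line prefix: "2024-05-01 10:05:00 ..."
TIMESTAMP = re.compile(r"^(\d{4}-\d{2}-\d{2} \d{2}:\d{2}:\d{2})")


def find_exceptions(lines, start, end):
    """Return log lines inside [start, end] that contain any keyword.

    Lines without a leading timestamp (for example stack-trace
    continuation lines) are skipped in this simplified version.
    """
    hits = []
    for line in lines:
        match = TIMESTAMP.match(line)
        if not match:
            continue
        stamp = datetime.strptime(match.group(1), "%Y-%m-%d %H:%M:%S")
        if start <= stamp <= end and KEYWORDS.search(line):
            hits.append(line)
    return hits
```

Pass the start and end of the latency spike as `datetime` values; an empty result means no keyword matched in that window.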

Check for out of memory (OOM) errors

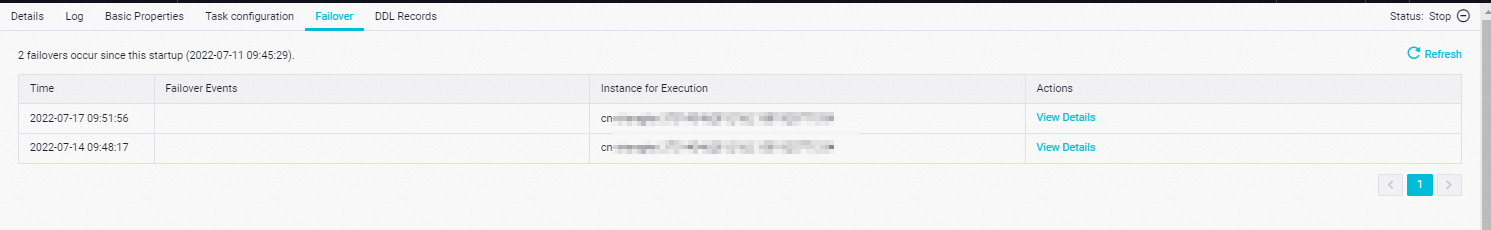

On the Failover tab, review whether failovers occur more than once within 10 minutes. Failovers that frequent suggest the node is running out of memory.
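The "more than once within 10 minutes" rule can be checked mechanically once you have the failover times. This is a minimal sketch; the function name is hypothetical and the timestamps are assumed to be transcribed by hand from the Failover tab.

```python
from datetime import datetime, timedelta


def has_frequent_failovers(failover_times, window=timedelta(minutes=10)):
    """Return True if any two failovers fall within `window` of each other."""
    ordered = sorted(failover_times)
    # Two consecutive events inside the window means more than one
    # failover occurred within that period.
    return any(later - earlier <= window
               for earlier, later in zip(ordered, ordered[1:]))
```

A `True` result is only a hint that memory is the problem; the pre-failover logs still need to be checked for OutOfMemory.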

To confirm the cause, hover over a failover event in the Failover Events column, or click View Details in the Actions column to see the complete pre-failover logs. Search for OutOfMemory in either location. If the error appears, the node needs more memory.

Increase the memory size using the method that matches how the node was created:

| How the node was created | Where to configure memory |
|---|---|
| DataStudio: single table to single table | Configuration tab > Basic Configuration |
| DataStudio: database to data source (e.g., DataHub) | Configuration tab > Basic Configuration (right pane) |
| Generated by a data synchronization solution | Configure Resources step on the solution's configuration page |

Check for data skew or throughput limits (Kafka, DataHub, LogHub sources)

For nodes reading from Kafka, DataHub, or LogHub: each partition or shard is consumed by a single thread. If most incoming data is concentrated in a few partitions or shards, those channels become a bottleneck and the node falls behind regardless of how many parallel threads are configured.

Diagnose data skew:

On the Details tab under Reader Statistics, check the Total Bytes metric. Compare it against the same metric for other nodes running normally on the same source. A significantly higher value indicates that this node's source partitions are receiving disproportionately more data.

The Total Bytes value is cumulative from the node's start offset. For nodes that have been running for a long time, this figure may not reflect the current skew. In that case, check the metrics directly in the source system.

If data skew is confirmed, the fix must be applied upstream — rebalancing how data is distributed across partitions or shards in the Kafka topic, DataHub topic, or LogHub Logstore. Adjusting the node's own configuration will not resolve a skew at the source.
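When you check the source system directly, a quick way to spot skew is to compare each partition's or shard's recent byte count against the average. The sketch below assumes you have already collected those counts yourself; the function name and the threshold of 2x the mean are arbitrary illustrative choices, not values from the product.

```python
def skewed_partitions(bytes_by_partition, threshold=2.0):
    """Return partition IDs whose byte count exceeds `threshold` x the mean.

    `bytes_by_partition` maps a partition or shard ID to the bytes it
    received over the same recent period (collected from the source system).
    """
    if not bytes_by_partition:
        return []
    mean = sum(bytes_by_partition.values()) / len(bytes_by_partition)
    if mean == 0:
        return []
    return sorted(pid for pid, size in bytes_by_partition.items()
                  if size > threshold * mean)
```

With `{"0": 900, "1": 40, "2": 30, "3": 30}` the mean is 250, so only partition `"0"` is reported.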

Diagnose throughput limit exhaustion:

If the transmission rate from the upstream system to the source, or from a single partition or shard to the node, is hitting the source system's ceiling, add more partitions or shards.

| Source | Per-partition/shard read limit |
|---|---|
| Kafka | Configurable on the Kafka cluster |
| DataHub | 4 MB/s per partition |
| LogHub | 10 MB/s per shard |

If multiple real-time synchronization nodes read from the same Kafka topic, DataHub topic, or LogHub Logstore, check whether the combined read rate of all nodes exceeds the source's limit.
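The combined-rate check can be sketched as follows, using the DataHub and LogHub limits from the table above. The function name, the input layout, and the 90% headroom factor are assumptions made for illustration; Kafka is left out because its limit depends on the cluster configuration.

```python
# Per-partition/shard read limits from the table above, in MB/s.
READ_LIMIT_MB_S = {"DataHub": 4.0, "LogHub": 10.0}


def saturated_channels(source, rates_by_channel, headroom=0.9):
    """Return channels whose combined read rate is at or near the limit.

    `rates_by_channel` maps a partition or shard ID to a list of read
    rates in MB/s, one entry per node reading from that channel.
    `headroom` (an arbitrary choice) flags channels before they hit 100%.
    """
    limit = READ_LIMIT_MB_S[source]
    combined = {cid: sum(rates) for cid, rates in rates_by_channel.items()}
    return {cid: rate for cid, rate in combined.items()
            if rate >= headroom * limit}
```

For instance, two nodes reading 2.5 MB/s and 1.5 MB/s from the same DataHub partition together reach the 4 MB/s limit, so that partition is reported.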

Check for large transactions or bulk DDL/DML operations (MySQL source)

For nodes reading from MySQL: if large transactions are committed, or a large number of data definition language (DDL) or data manipulation language (DML) operations run on the source, binary logs grow faster than the node can consume them, causing the node to fall behind.

Examples of operations that expand binary logs quickly:

- Updating a field value across a large table
- Deleting a large number of rows

Diagnose the cause:

On the Details tab, check the data synchronization speed:

- A high synchronization speed confirms that binary log volume is growing rapidly.
- If the displayed speed is not unusually high, check audit logs and binary log metrics directly on the MySQL server to get the actual binary log growth rate.

The synchronization speed shown on the Details tab may understate the actual binary log consumption rate. The node reads the full binary log and filters out changes to databases or tables that are not specified in the node configuration only after reading them, so the read throughput for those tables is excluded from the displayed speed and total byte count.
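One way to estimate the growth rate on the MySQL server is to run `SHOW BINARY LOGS` twice, some seconds apart, and compare the file sizes. The helper below is a sketch under that assumption: it takes the two results as plain dictionaries of log name to file size, and its name and input layout are hypothetical.

```python
def binlog_growth_rate(before, after, elapsed_seconds):
    """Estimate binary log growth in bytes per second.

    `before` and `after` map Log_name to File_size, as returned by two
    SHOW BINARY LOGS runs taken `elapsed_seconds` apart. Files purged
    between the two runs are ignored in this simplified version.
    """
    grown = 0
    for name, size in after.items():
        # New files count in full; existing files count by their growth.
        grown += size - before.get(name, 0)
    return grown / elapsed_seconds
```

Comparing this figure with the synchronization speed on the Details tab shows whether the node is keeping up with the log volume.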

If large transactions or temporary bulk changes are the cause, wait for the node to catch up. After the backlog of binary log data is processed, latency decreases on its own.

Check for excessive flush operations (MaxCompute destination with dynamic partitioning)

For nodes writing to MaxCompute with dynamic partitioning by field value: the node maintains a set of write queues, one per unique partition value seen within the flush interval (default: 1 minute). The maximum number of queues defaults to 5.

When the number of distinct partition values within a flush interval exceeds the queue limit, the node triggers a flush for all buffered data. Frequent flushes significantly reduce write throughput.
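To see why a low queue limit hurts, the behavior described above can be modeled in a few lines. This is a simplified illustration, not the writer's actual implementation: it assumes a flush empties all queues at once and is triggered when a new partition value arrives while the queue limit is already reached.

```python
def count_forced_flushes(partition_values, max_queues=5):
    """Count flushes forced by the queue limit within one flush interval.

    `partition_values` is the sequence of partition field values of the
    records arriving during the interval.
    """
    open_queues = set()
    flushes = 0
    for value in partition_values:
        if value in open_queues:
            continue  # record joins an existing queue
        if len(open_queues) >= max_queues:
            # No room for another queue: flush everything buffered so far.
            open_queues.clear()
            flushes += 1
        open_queues.add(value)
    return flushes
```

In this model, 11 distinct partition values in one interval force two flushes with the default limit of 5, and none once the limit is raised to 11 or more.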

Diagnose the issue:

On the Log tab, search for:

uploader map size has reached uploaderMapMaximumSize

If this message appears, open the destination configuration panel and increase the partition cache queue size.

Increase parallel threads or enable distributed execution mode

If none of the preceding causes apply and the latency is driven by sustained business traffic growth, increasing the number of parallel threads can reduce it.

Thread and memory configuration guidelines:

| Parallel threads | Recommendation |
|---|---|
| 1–20 | Safe to run on a single machine |
| 21–32 | May experience resource contention on a single machine; consider enabling distributed execution mode if supported |
| > 32 | Not recommended without distributed execution mode enabled |

Always increase memory proportionally when adding threads. Keep the ratio of parallel threads to memory at 4:1; for example, doubling the thread count requires doubling the memory allocation.
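The guidelines above can be captured in two small helpers. This is a sketch with hypothetical function names: the thread ranges come from the table, and the memory calculation only applies the proportional scaling rule to whatever thread and memory values the node currently has.

```python
def execution_advice(threads):
    """Map a parallel thread count to the recommendation in the table."""
    if threads < 1:
        raise ValueError("thread count must be at least 1")
    if threads <= 20:
        return "single machine"
    if threads <= 32:
        return "consider distributed execution mode"
    return "distributed execution mode required"


def scaled_memory(current_threads, current_memory, new_threads):
    """Scale memory in proportion to the thread count (same unit in and out)."""
    return current_memory * new_threads / current_threads
```

For example, moving a node from 8 threads with 2 units of memory to 16 threads gives `scaled_memory(8, 2, 16) == 4.0`, and `execution_advice(16)` still reports a single machine as sufficient.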

Configure parallel threads and memory using the method that matches how the node was created:

| How the node was created | Where to configure threads | Where to configure memory |
|---|---|---|
| DataStudio: single table to single table | Basic Configuration (right pane) | Basic Configuration (right pane) |
| DataStudio: database to data source (e.g., DataHub) | Configure Resources step | Basic Configuration (right pane) |
| Generated by a data synchronization solution | Configure Resources step | Configure Resources step |

Distributed execution mode removes the single-machine resource ceiling. It is currently supported for the following combinations:

| Node type | Source | Destination |
|---|---|---|
| DataStudio ETL node | Kafka | MaxCompute |
| DataStudio ETL node | Kafka | Hologres |