The DataWorks Shell node runs standard Shell scripts as part of a data pipeline. Use it to perform file operations, interact with Object Storage Service (OSS) or Apsara File Storage NAS, invoke external tools, or execute scripts in other languages — all within a scheduled workflow.

Use cases

File operations: Copy, move, compress, and archive files as pipeline steps.

OSS and NAS access: Read from and write to OSS buckets or NAS mounts using ossutil or datasets.

Cross-language script execution: Call Python, R, or other scripts from a Shell node and wait for them to complete before the pipeline continues.

Custom environment tasks: Run scripts in a tailored container image when your pipeline requires specific dependencies.

Scheduled automation: Run recurring maintenance scripts — log rotation, data export, cleanup — on a defined schedule.

Prerequisites

Before you begin, make sure you have:

A DataWorks workspace

A RAM user with the Developer or Workspace Administrator role in the workspace (see Add members to a workspace)

Usage notes

Syntax

Standard Shell syntax is supported. Interactive syntax is not supported.
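A scheduled node runs unattended, so a command that pauses for keyboard input (a `read` prompt or a confirmation question) has nothing to answer it. The sketch below assumes that is what "interactive syntax" covers and shows common non-interactive substitutes: take input from positional parameters instead of prompting, use flags that suppress confirmation, and feed fixed input from a here-document. The directory, file names, and settings are hypothetical.

```shell
#!/bin/bash
# Instead of prompting (for example: read -p "Target dir? " target_dir),
# take the value from the first positional parameter. A temporary directory
# is used as a fallback so the sketch also runs on its own.
target_dir="${1:-$(mktemp -d)}"
mkdir -p "$target_dir"

# Hypothetical sample files for the demonstration
printf 'id,value\n1,42\n' > "$target_dir/source.csv"
: > "$target_dir/stale.tmp"

# rm -f never asks for confirmation and does not fail on missing files
rm -f "$target_dir/stale.tmp" "$target_dir/does_not_exist.tmp"

# Supply input from a here-document rather than from the keyboard
while read -r line; do
  echo "setting: $line"
done <<'EOF'
mode=batch
retries=3
EOF
```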

Serverless resource group limits

If the target service has an IP address allowlist, add the IP addresses of the Serverless resource group to that allowlist before running the node.

A single task supports up to 64 CU. Keep tasks within 16 CU to avoid resource shortages that delay task startup.

Custom development environment

To run scripts that require specific dependencies, use the custom image feature to build a tailored execution environment. For details, see Custom images.

Child processes and script calls

Avoid spawning a large number of child processes within a single Shell node. Shell nodes have no resource limits, so excessive child processes can affect other tasks on the same resource group.
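One way to keep the number of child processes bounded is to cap concurrency instead of backgrounding every job with `&`. The sketch below uses `xargs -P` so that at most four `gzip` processes run at a time; the limit of four and the sample log files are hypothetical, so choose a value that suits your resource group.

```shell
#!/bin/bash
# Cap on concurrent child processes (hypothetical value; tune for your resource group)
MAX_JOBS=4

# Hypothetical sample data: six small log files in a scratch directory
work_dir="$(mktemp -d)"
for i in 1 2 3 4 5 6; do
  printf 'log line %s\n' "$i" > "$work_dir/app_$i.log"
done

# Unbounded pattern to avoid: for f in "$work_dir"/*.log; do gzip "$f" & done
# Bounded alternative: xargs runs at most MAX_JOBS gzip processes at once
# and exits only after all of them have finished.
find "$work_dir" -name '*.log' -print0 | xargs -0 -n 1 -P "$MAX_JOBS" gzip
echo "compressed files: $(find "$work_dir" -name '*.log.gz' | wc -l)"
```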

When a Shell node calls another script (for example, a Python script), the Shell node waits for the called script to finish before completing.
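The sketch below illustrates this behavior: the Shell script blocks on `python3` until the child script returns, then reads the child's exit status from `$?`. The inline Python script and its date argument are hypothetical placeholders (in a real node the Python file would more likely be a referenced resource), and the sketch assumes `python3` is available on the PATH of the execution environment.

```shell
#!/bin/bash
# Hypothetical child script, written to a temp file so the sketch is self-contained
py_script="$(mktemp)"
cat > "$py_script" <<'EOF'
import sys
import time

time.sleep(1)  # simulate work; the Shell script waits for this to finish
print("processed partition", sys.argv[1])
EOF

# Blocks until python3 exits (assumes python3 is on PATH)
python3 "$py_script" 20240101
py_status=$?
rm -f "$py_script"
echo "python3 finished with exit code ${py_status}"
# In a real node, end with: exit "$py_status"
# so that the Python script's result becomes the node's exit code.
```

Because the node's exit code comes from the last command, finishing with an explicit `exit "$py_status"` keeps a failed Python step from being masked by the trailing `echo`.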

Quick start

This section walks through creating, debugging, and deploying a Shell node with a simple echo "Hello DataWorks!" example.

Develop the node

Log in to the DataWorks console. Switch to the target region, click Data Development and O&M > Data Development, select the target workspace, and click Go to DataStudio.

On the Data Studio page, create a Shell node.

In the script editor, enter your Shell code. Interactive syntax is not supported.

echo "Hello DataWorks!"

Click Run a task in the right panel. Select a resource group for debugging, then click Run to start debugging.

The resource group you select here determines network access. If your script connects to a service with an IP address allowlist, make sure the resource group's egress IPs are already added to that allowlist before running.

After the script passes debugging, click Properties in the right panel. Configure the scheduling cycle, dependencies, and parameters.

Save the node before proceeding to deployment.

Deploy and manage the node

Submit and deploy the Shell node to the production environment. For the full deployment process, see Node and workflow deployment.

After deployment, the task runs on the configured schedule. To monitor it, click the icon in the upper-left corner, then navigate to All Products > Data Studio and Operations > Operation Center. In the left navigation pane, choose Task Operations > Cycle Task Operations > Cycle Task. For more information, see Getting started with Operation Center.

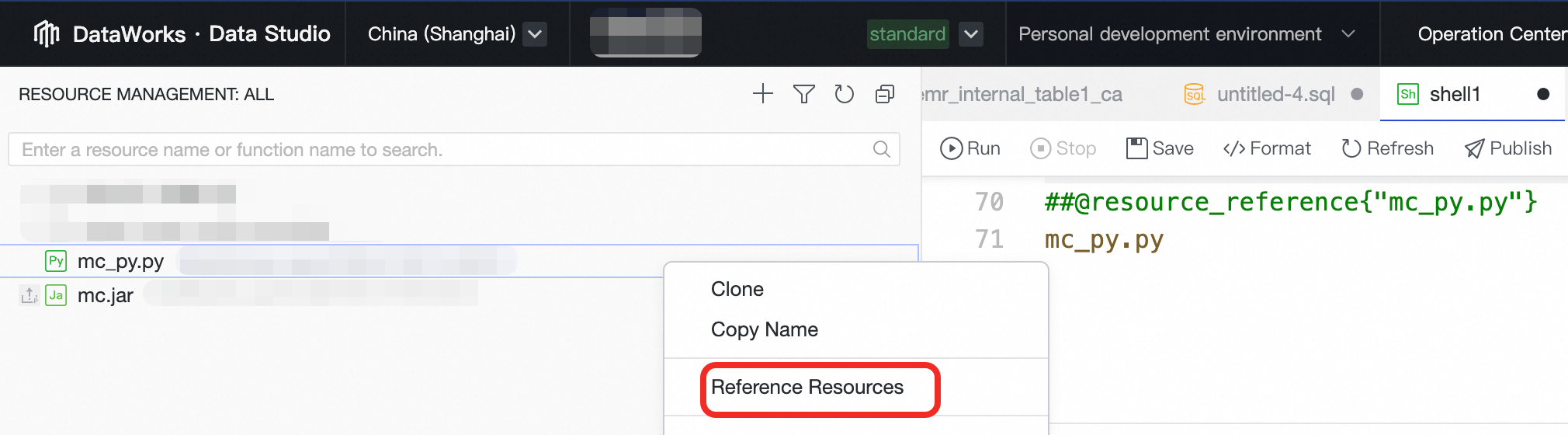

Reference resources in a Shell node

Upload the resource files using the Resource Management feature. For details, see Resource management.

Publish a resource before referencing it in a node. If the node runs in the production environment, also deploy the resource to production.

Open the Shell node in the script editor.

In the left navigation pane, click the Resource menu icon to open the Resource menu. Right-click the resource you want to use and select Reference Resource. The system inserts a declaration comment at the top of the script.

The inserted comment follows the format ##@resource_reference{resource_name}. This identifier lets DataWorks recognize the resource dependency and automatically mount the resource at runtime. Do not modify or delete this comment.
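The sketch below shows how a script body might use a referenced Python resource. The resource name `hello.py` is hypothetical, the declaration line is illustrative only (keep the exact comment the editor inserts), and the sketch assumes the referenced file is available in the node's working directory at runtime. The hypothetical `run_resource` helper reports a missing file instead of failing silently.

```shell
##@resource_reference{"hello.py"}
# The line above is illustrative: keep the comment DataWorks inserts, unchanged.
# "hello.py" is a hypothetical resource name. Assumption: the referenced file
# is placed in the node's working directory at runtime.

run_resource() {
  local file="$1"
  if [ ! -f "$file" ]; then
    echo "resource not found: $(pwd)/$file" >&2
    return 1
  fi
  python3 "$file"
}

# As the last command, its status becomes the node's exit code
run_resource hello.py
```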

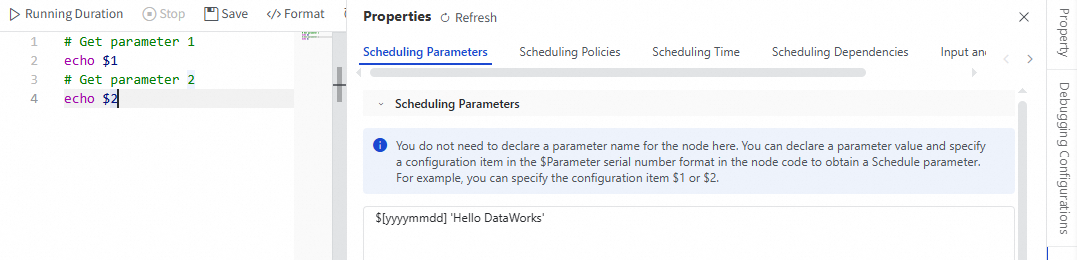

Pass scheduling parameters to a Shell node

DataWorks injects scheduling parameters as positional parameters — custom variable names are not supported. Parameters are passed in the order you define them in Properties > Parameters, mapped to $1, $2, $3, and so on.

Key rules for scheduling parameters:

| Rule | Details |
|---|---|
| Order | The order of values in the Parameters tab must exactly match the positions referenced in the script. |
| Separator | Separate parameter values with spaces. |
| More than nine parameters | Use braces for positions beyond nine, for example ${10} and ${11}, to ensure correct parsing. |
| Values with spaces | Enclose the value in quotation marks. The entire quoted string is treated as a single parameter. |
| Upstream output parameters | To receive output from an upstream node, add a parameter in Properties > Node Context > Input and set its value to the upstream node's output parameter. |

Example

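In this example, $1 is set to the current date using the date macro $[yyyymmdd], and $2 is set to the fixed string Hello DataWorks. Because the second value contains a space, enter it in quotation marks in Properties > Parameters: $[yyyymmdd] "Hello DataWorks"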
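The sketch below reads the two parameters from this example. DataWorks only injects values at run time, so the script falls back to `set --` with sample values (the date 20240101 is hypothetical) to make it runnable locally. The `show_tenth` helper is also hypothetical; it only illustrates why positions beyond nine need braces.

```shell
#!/bin/bash
# Outside DataWorks nothing is injected, so mimic Parameters = $[yyyymmdd] "Hello DataWorks"
# with a hypothetical sample date. In the node, DataWorks supplies $1 and $2.
if [ "$#" -eq 0 ]; then
  set -- 20240101 "Hello DataWorks"
fi

run_date="$1"   # first value in Properties > Parameters
message="$2"    # second value; quoted in Parameters, so it arrives as one argument
echo "run_date=${run_date} message=${message}"

# Positions beyond nine need braces: $10 expands as ${1} followed by a literal "0".
show_tenth() {
  echo "tenth=${10} wrong=$10"
}
show_tenth a b c d e f g h i j
```

Here `show_tenth` prints `tenth=j wrong=a0`, which is the parsing problem the braces rule in the table guards against.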

Access OSS using ossutil

ossutil is pre-installed in the DataWorks execution environment — no manual installation is needed. The default path is /home/admin/usertools/tools/ossutil64. Use it to manage OSS buckets, upload and download files, and run batch operations.

Two ways to configure OSS credentials for ossutil:

Command-line parameters: see Access OSS using command-line parameters.

Configuration file: see Access OSS using a configuration file.

For a more secure approach, associate a RAM role with the node. The node then uses Alibaba Cloud Security Token Service (STS) to obtain temporary security credentials at runtime, eliminating the need to hardcode a long-term AccessKey in the script. For details, see Configure node-associated roles.
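If you use the configuration-file method, a minimal upload sketch might look like the following. It calls the pre-installed binary with `cp`, `-f` (overwrite without a confirmation prompt), and `-c` (read endpoint and credentials from a configuration file), as in ossutil 1.x; check the ossutil documentation for the version in your environment. The bucket, object prefix, and configuration file path are hypothetical, and the `OSSUTIL` and `OSS_CONFIG` overrides exist only to make the sketch testable.

```shell
#!/bin/bash
# Default location of the pre-installed binary; OSSUTIL can override it
OSSUTIL="${OSSUTIL:-/home/admin/usertools/tools/ossutil64}"
# Hypothetical values: replace with your own configuration file and bucket
OSS_CONFIG="${OSS_CONFIG:-$HOME/.ossutilconfig}"
OSS_TARGET="oss://examplebucket/exports/"

upload_to_oss() {
  local local_file="$1" oss_path="$2"
  if ! command -v "$OSSUTIL" >/dev/null 2>&1; then
    echo "ossutil not found at $OSSUTIL" >&2
    return 1
  fi
  # -f: overwrite without prompting (a scheduled node cannot answer prompts)
  # -c: take endpoint and credentials from the configuration file, not the script
  "$OSSUTIL" cp -f -c "$OSS_CONFIG" "$local_file" "$oss_path"
}

# Hypothetical export file
export_file="$(mktemp)"
echo "id,value" > "$export_file"
upload_to_oss "$export_file" "$OSS_TARGET"
```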

Access OSS or NAS using datasets

Create a dataset for OSS or Apsara File Storage NAS. Once the dataset is created, configure the Shell node to use the dataset so it can read from and write to the storage during task execution.

Run a node with an associated RAM role

Associate a RAM role with the node to enable fine-grained permission control. The node runs under that role's permissions, improving security without storing long-term credentials in the script.

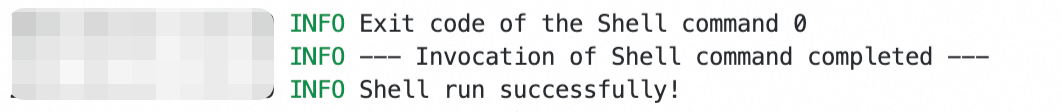

Appendix: Exit codes

A Shell node's exit code is determined by the last command the script executes.

| Exit code | Task status |
|---|---|
| 0 | Success |
| -1 | Terminated |
| 2 | Platform automatically reruns the task once |
| Any other code | Failure |

The following image shows a standard run log for a Shell node that completed successfully (exit code 0).

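Because only the last command counts, a failure in the middle of a script can be masked by a later command that succeeds. The sketch below demonstrates this and two ways to control the result, based on the exit code table above. Each `bash -c` call stands in for a complete node script, `false` stands in for a failing step, and the missing input path is hypothetical.

```shell
#!/bin/bash
# Pitfall: the failing step is not the last command, so the exit code is 0
bash -c 'false; echo "cleanup done"'
rc_masked=$?
echo "failure masked -> exit code ${rc_masked}"

# Fix: save the status of the step that matters and exit with it explicitly
bash -c 'false; status=$?; echo "cleanup done"; exit "$status"'
rc_propagated=$?
echo "failure propagated -> exit code ${rc_propagated}"

# Exit code 2 makes the platform rerun the task once, so reserve it for problems
# a single rerun can fix, such as an input file that has not arrived yet.
# The input path is hypothetical and deliberately does not exist.
bash -c 'if [ ! -s "$1" ]; then echo "input not ready: $1" >&2; exit 2; fi' _ "$(mktemp -u)"
rc_rerun=$?
echo "input missing -> exit code ${rc_rerun}"
```

An alternative to explicit exits is `set -e` at the top of the script, which stops at the first failing command and exits with its status; note that it does not trigger for commands used as `if` conditions or in `&&`/`||` lists.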