The EMR PySpark node lets you run Python-based Spark jobs directly from DataWorks. It combines a Python code editor and a spark-submit command editor in a single dual-pane interface, and integrates with Alibaba Cloud E-MapReduce (EMR) semi-managed clusters and EMR Serverless Spark (fully managed).

Prerequisites

Before you begin, ensure that you have:

A supported compute resource. The node supports two cluster types:

EMR compute resources (semi-managed): Only DataLake clusters and Custom clusters are supported. To enable EMR PySpark nodes on a semi-managed cluster, submit a ticket and specify the cluster type, EMR version, and your use case. We will assess the feasibility and provide support.

EMR Serverless Spark compute resources (fully managed)

A Serverless resource group. Only Serverless resource groups are supported.

The Dev or Workspace Administrator role. Log in as a root account or a RAM user with the Dev or Workspace Administrator role. To add members, see Add a member to a workspace.

How it works

The EMR PySpark node uses a dual-pane editor:

Upper pane: A Python code editor for your core business logic. Reference uploaded resource files (such as .py modules) using the ##@resource_reference annotation.

Lower pane: A command editor where you write the spark-submit command to submit your job to an EMR cluster.

When you click Run, DataWorks merges the Python script and its referenced resources, submits the job to the EMR cluster using spark-submit, and displays the execution logs and results.

Only the entire Python file can be submitted as a Spark job. Running a selected portion of the code is not supported.

Create a node

Log on to the DataWorks console and switch to the target region. In the left-side navigation pane, click Data Development and O&M > Data Development. Select a workspace from the drop-down list and click Go to Data Development.

On the Data Studio page, create an EMR PySpark node.

Set the Path and Name for the node. This example uses emr_pyspark_test as the node name.

Develop a node

The following example estimates the value of pi (π) using the Monte Carlo method on a distributed cluster.
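For reference, the estimate rests on a standard geometric argument. The block below assumes the sampler draws points uniformly from the unit square and counts those that land inside the quarter circle of radius 1. The sampling scheme in the example utils.py is not shown in this topic, so treat this as the usual formulation rather than a description of that file.

```latex
\[
P\left(x^2 + y^2 \le 1\right) = \frac{\pi}{4}
\quad\Longrightarrow\quad
\hat{\pi} = 4 \cdot \frac{N_{\text{inside}}}{N},
\qquad
\operatorname{SE}(\hat{\pi})
  = 4\sqrt{\frac{\tfrac{\pi}{4}\left(1 - \tfrac{\pi}{4}\right)}{N}}
  \approx \frac{1.64}{\sqrt{N}}
\]
```

Under this assumption, a run with N = 10000 samples has a standard error of roughly 0.016, so only the first one or two decimal places of the printed estimate are meaningful.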

Step 1: Upload dependent resources

Upload your custom Python file to the Resource Management module so the node can reference it at runtime.

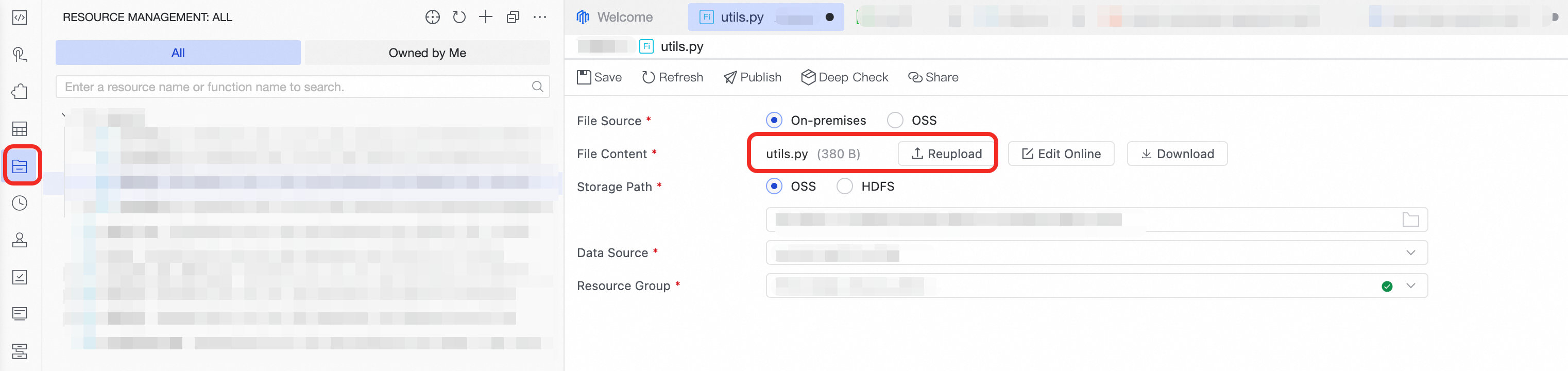

In Data Development, go to the resource management page and click Create Resource. Select EMR File as the resource type and set the resource Name. For detailed steps, see EMR resources and functions.

Click Re-Upload to upload the example utils.py file. This file defines the Monte Carlo sampling logic executed within each Spark task.

Select the Storage path, Connection, and Resource Groups, then click Save.
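The contents of the example utils.py are not reproduced in this topic. As a rough guide, a file along the following lines would be compatible with the call estimate_pi_in_task(samples_per_partition) used by the node. This is a minimal sketch, not the original file: the function body and the optional seed parameter are assumptions made for illustration.

```python
# utils.py (hypothetical sketch; the original file's contents are not shown)
import random


def estimate_pi_in_task(num_samples, seed=None):
    """Count how many of num_samples random points fall inside the unit quarter circle."""
    # A private Random instance avoids sharing global random state between tasks.
    # With seed=None, Python seeds it from OS entropy, so each task differs.
    rng = random.Random(seed)
    inside = 0
    for _ in range(num_samples):
        x, y = rng.random(), rng.random()
        if x * x + y * y <= 1.0:
            inside += 1
    return inside


if __name__ == "__main__":
    # Quick local check without Spark: a single task covering all samples.
    n = 100_000
    print(4.0 * estimate_pi_in_task(n, seed=42) / n)
```

Because the function only returns a count, the driver can sum the counts from all partitions and apply the factor of 4 once.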

Step 2: Write the Python code

In the Python code editor (upper pane), write the following code. The program divides the workload across partitions, runs Monte Carlo sampling in parallel on worker nodes, and then aggregates the results to estimate pi.

    ##@resource_reference{"utils.py"}
    import sys

    from pyspark.sql import SparkSession

    from utils import estimate_pi_in_task


    def main():
        # Create a SparkSession
        spark = SparkSession.builder \
            .appName("EstimatePi") \
            .getOrCreate()
        sc = spark.sparkContext

        # Total number of samples, read from the first command-line argument
        total_samples = int(sys.argv[1])
        # Number of partitions; DataWorks replaces ${test1} with the script parameter value
        num_partitions = ${test1}

        # Number of samples per partition. This is integer division, so choose
        # total_samples as a multiple of num_partitions to avoid dropping samples.
        samples_per_partition = total_samples // num_partitions

        # Create an RDD where each partition executes estimate_pi_in_task once
        rdd = sc.parallelize(range(num_partitions), num_partitions)

        # Map each partition to execute the sampling task
        inside_counts = rdd.map(lambda _: estimate_pi_in_task(samples_per_partition))

        # Aggregate the results from all partitions
        total_inside = inside_counts.sum()
        pi_estimate = 4.0 * total_inside / total_samples

        print(f"Total samples: {total_samples}")
        print(f"Samples inside the circle: {total_inside}")
        print(f"Estimated value of Pi: {pi_estimate:.6f}")

        spark.stop()


    if __name__ == "__main__":
        main()

The code uses two types of parameters:

| Parameter | Type | Description | Example value |
|---|---|---|---|
| sys.argv[1] | Command-line argument | Passed after the script name in the spark-submit command | 10000 (total samples) |
| ${test1} | Script parameter | Replaced by DataWorks with the actual value at runtime | 100 (number of partitions) |
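The script parameter is a textual placeholder, not Python syntax: a line such as num_partitions = ${test1} is not valid Python until the placeholder has been replaced. The sketch below imitates that replacement locally with Python's string.Template, which happens to use the same ${name} notation. It is only an illustration of the idea. How DataWorks performs the substitution internally is not described here, and the render helper and the parameter value are hypothetical.

```python
from string import Template

# A line from the node's Python script that contains a script parameter.
script_line = "num_partitions = ${test1}"

# Hypothetical value, as it might be entered under Script Parameter in Run Settings.
script_parameters = {"test1": "100"}


def render(source, params):
    """Replace ${name} placeholders in source with the values from params."""
    # substitute() raises KeyError if a placeholder has no value.
    return Template(source).substitute(params)


rendered = render(script_line, script_parameters)
print(rendered)  # num_partitions = 100
```

Because the replacement is plain text, the value supplied for the parameter has to form a valid Python expression in context, such as an integer literal here.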

Step 3: Write the spark-submit command

In the command editor (lower pane), write the spark-submit command. The required parameters differ depending on your cluster type.

For semi-managed clusters (EMR compute resources), use the standard open-source Apache Spark spark-submit tool:

    # Submit to a semi-managed EMR cluster (DataLake or Custom).
    #   --master yarn          Use YARN as the Spark cluster manager.
    #   --deploy-mode cluster  Use the cluster deployment mode.
    #   --py-files utils.py    Explicitly declare the dependent Python resource file.
    #   emr_pyspark_test.py    Main entry file of the program; 10000 is passed as sys.argv[1].
    spark-submit \
      --master yarn \
      --deploy-mode cluster \
      --py-files utils.py \
      emr_pyspark_test.py 10000

For fully managed clusters (EMR Serverless Spark), use the Alibaba Cloud EMR Serverless spark-submit toolkit, which handles cluster management automatically:

    # Submit to EMR Serverless Spark
    spark-submit \
      --py-files utils.py \
      emr_pyspark_test.py 10000

For a full list of supported parameters:

Semi-managed clusters: see the Apache Spark documentation on submitting applications

EMR Serverless Spark: see Submit a job by using spark-submit

Run a node

In the Run Settings section, configure the following parameters:

| Parameter | Description |
|---|---|
| Compute resource | Select an EMR compute resource (semi-managed) or EMR Serverless Spark compute resource (fully managed). If none are available, select create a compute resource from the drop-down list. |
| Resource group | Select a resource group bound to the workspace. |
| Script Parameter | If your code uses ${parameter_name} variables, specify the Value for this run here. DataWorks replaces the variable with this value at runtime. This value takes precedence over the default parameter value and applies only to the current execution. |

In the toolbar, click Run. DataWorks merges the Python script and its referenced resources, submits the job to the EMR cluster, and displays the execution logs and results.

What's next

Schedule a node: Set Scheduling Policies in the Scheduling section on the right side of the node page to run the node on a recurring schedule.

Publish a node: Click the publish icon to publish the node to the production environment. A node runs on a schedule only after it is published.

Node O&M: After publishing, monitor the auto-triggered task status in Operation Center. See Get started with Operation Center.