DataWorks uses two complementary scheduling mechanisms — dependency-driven and time-constrained — to determine when each node runs. Choosing the right mechanism, or combining them, directly affects data delivery timeliness, resource utilization, and the operational stability of your pipelines.

This topic covers time property configuration. For scheduling dependencies, see Configure scheduling dependencies.

How scheduling time works

Every node instance runs only when both of the following conditions are met:

All upstream scheduling dependencies have completed successfully.

The node's own scheduled time has arrived.

This dual-gate model gives you precise control: let dependency completion drive execution speed, enforce a hard time constraint, or combine both.
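As a minimal sketch of the dual-gate rule, the earliest start of an instance can be modeled as the later of its scheduled time and its latest upstream completion time. The function name `earliest_start` and the sample times are illustrative assumptions, not part of DataWorks.

```python
from datetime import datetime

def earliest_start(scheduled: datetime, upstream_done: list[datetime]) -> datetime:
    """Return the earliest moment at which both gates are open.

    Gate 1: every upstream instance has completed (latest completion time).
    Gate 2: the node's own scheduled time has arrived.
    """
    # A node with no upstream is gated by its scheduled time only.
    return max([scheduled, *upstream_done])

day = datetime(2025, 12, 2)
# Upstream finishes after the scheduled time: the dependency gate decides.
late_upstream = earliest_start(day.replace(hour=0), [day.replace(hour=2, minute=10)])
# Upstream finishes before the scheduled time: the clock gate decides.
early_upstream = earliest_start(day.replace(hour=5), [day.replace(hour=2)])
print(late_upstream.strftime("%H:%M"), early_upstream.strftime("%H:%M"))  # 02:10 05:00
```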

The two patterns below cover the most common configurations.

Pattern 1: Dependency-driven execution

Set a specific scheduled time only for the first node in a workflow, and set all downstream nodes to 00:00. Because 00:00 has already passed by the time an upstream node finishes, the time gate is already open, so each downstream node starts as soon as its upstream dependency completes.

Use this pattern when the goal is to complete the workflow as fast as possible after the first node runs.

How it works:

02:00          ~02:10          ~02:18
  |               |               |
A runs         B triggered     C triggered
(scheduled)    (dep met)       (dep met)

| Node | Scheduled time | Actual runtime | Trigger |
| --- | --- | --- | --- |
| A (start) | 02:00 | 02:00 | Runs at its scheduled time |
| B (downstream) | 00:00 | ~02:10 | Triggered immediately after A completes |
| C (downstream) | 00:00 | ~02:18 | Triggered immediately after B completes |

Pattern 2: Time-constrained execution

Set a specific scheduled time for any node that must not run before a certain clock time, regardless of when its upstream dependencies complete.

Use this pattern when external systems, business rules, or maintenance windows require a node to wait until a specific time.

How it works:

02:00            05:00            08:00
  |                |                |
A completes      B runs           C runs
(dep met,        (scheduled       (scheduled
B waits)         time reached)    time reached)

| Node | Scheduled time | Actual runtime | Trigger |
| --- | --- | --- | --- |
| A (start) | | | Runs at its scheduled time |
| B (time-constrained) | 05:00 | 05:00 | Waits for both: upstream A complete AND clock reaches 05:00 |
| C (time-constrained) | 08:00 | 08:00 | Waits for both: upstream B complete AND clock reaches 08:00 |
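The two patterns can be compared with a small simulation of a linear chain. This is only a sketch. The runtimes (10, 8, and 5 minutes) and node A's 01:50 scheduled time in Pattern 2 are assumptions chosen to reproduce the timelines above, and `simulate_chain` is a hypothetical helper, not a DataWorks API.

```python
from datetime import datetime, timedelta

def simulate_chain(nodes, day):
    """Walk a linear chain and return {name: (start, finish)}.

    nodes: list of (name, "HH:MM" scheduled time, runtime in minutes),
    ordered from upstream to downstream. Runtimes are made-up sample values.
    """
    timeline = {}
    upstream_finish = None
    for name, hhmm, minutes in nodes:
        hour, minute = map(int, hhmm.split(":"))
        scheduled = day.replace(hour=hour, minute=minute)
        # Dual gate: wait for the clock and for the upstream node, whichever is later.
        start = scheduled if upstream_finish is None else max(scheduled, upstream_finish)
        finish = start + timedelta(minutes=minutes)
        timeline[name] = (start, finish)
        upstream_finish = finish
    return timeline

day = datetime(2025, 12, 2)
pattern_1 = simulate_chain([("A", "02:00", 10), ("B", "00:00", 8), ("C", "00:00", 5)], day)
pattern_2 = simulate_chain([("A", "01:50", 10), ("B", "05:00", 8), ("C", "08:00", 5)], day)
for label, timeline in (("Pattern 1", pattern_1), ("Pattern 2", pattern_2)):
    starts = ", ".join(f"{n} {s:%H:%M}" for n, (s, _) in timeline.items())
    print(f"{label}: {starts}")
# Pattern 1: A 02:00, B 02:10, C 02:18
# Pattern 2: A 01:50, B 05:00, C 08:00
```

In Pattern 1 the downstream start times move automatically if A's runtime changes. In Pattern 2 they stay pinned to the clock as long as the upstream node finishes early enough.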

Plan scheduled times for delivery deadlines

When downstream teams or systems depend on data being ready by a specific time, plan scheduled times by working backward from the deadline.

Calculate scheduled times

Use this formula to find the latest start time for any node with a delivery deadline:

Latest start time = Delivery deadline − (Estimated runtime + Buffer time)

Example: Node E must be ready by 09:00. Its estimated runtime is 20 minutes, and a 10-minute buffer is needed.

Node E scheduled time = 09:00 − (20 min + 10 min) = 08:30

Choose a configuration approach

| | Manual time setting | Dependency-driven (recommended) |
| --- | --- | --- |
| What you configure | Precise scheduled times for every node in the workflow | Scheduled time for the start node only |
| Intermediate nodes | Each node has a manually calculated time | Remain at the default |
| Maintenance cost | High: any runtime change requires recalculating all nodes | Low: only the start point needs adjustment |
| Flexibility | Low | High |

Dependency-driven approach (recommended): Set the start node's scheduled time so the workflow launches on time. Let the system manage everything else through dependencies.

The default scheduled time for a daily node is randomly generated within the 00:00–00:30 window, not exactly 00:00.

Example — five-node workflow with a 09:00 delivery deadline:

| Node | Manual approach | Dependency-driven approach | Actual runtime |
| --- | --- | --- | --- |
| A | | | |
| B | | | |
| C | | | |
| D | | | |
| E | | | |
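To illustrate how the latest-start formula extends to a whole chain, the sketch below works backward from a 09:00 deadline. The runtimes for nodes A to D are hypothetical; only node E's 20-minute runtime and the 10-minute buffer come from the earlier example. `plan_backward` is an illustrative helper, not a DataWorks feature.

```python
from datetime import datetime, timedelta

def plan_backward(deadline, runtimes, buffer_min):
    """Return {node: latest start} for a linear chain, working back from the deadline.

    runtimes: list of (name, estimated runtime in minutes), upstream first.
    The buffer is applied once, just before the deadline.
    """
    latest_finish = deadline - timedelta(minutes=buffer_min)
    plan = {}
    for name, minutes in reversed(runtimes):
        # Latest start = latest allowed finish - estimated runtime.
        start = latest_finish - timedelta(minutes=minutes)
        plan[name] = start
        latest_finish = start  # the upstream node must finish by this time
    # Return the plan in upstream-to-downstream order.
    return dict(reversed(list(plan.items())))

deadline = datetime(2025, 12, 2, 9, 0)
# Hypothetical runtimes; only node E's 20 minutes comes from the example above.
runtimes = [("A", 30), ("B", 25), ("C", 40), ("D", 15), ("E", 20)]
plan = plan_backward(deadline, runtimes, buffer_min=10)
for name, start in plan.items():
    print(name, start.strftime("%H:%M"))
# A 06:40
# B 07:10
# C 07:35
# D 08:15
# E 08:30
```

Under the manual approach all five times would be configured and recalculated whenever a runtime changes. Under the dependency-driven approach only node A's time (06:40 in this sketch) would be set.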

Handle resource contention at peak times

When many nodes use dependency-driven execution with a 00:00 default time, they can all start simultaneously, causing resource contention and queuing. Use baselines to prioritize core nodes without manually staggering start times.

Assign core nodes (such as Operational Data Store (ODS) layer extraction) to a high-priority baseline.

The scheduling engine gives high-priority nodes first access to computing resources.

Non-core report nodes queue behind them without competing for resources.

| | Before optimization | After optimization |
| --- | --- | --- |
| Scenario | All jobs (core A/B, report C/D) scheduled at | Core nodes A/B assigned to a high-priority baseline |
| Result | High concurrency, resource competition, widespread queuing | A/B run at |
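The effect of priorities under limited capacity can be pictured with a small priority-queue simulation. This is not the DataWorks scheduling engine. The priority values, node names, and the `slots` capacity model are assumptions made for illustration only.

```python
import heapq

def run_order(nodes, slots):
    """Simulate which nodes start in each round when slots are limited.

    nodes: list of (name, priority); a larger priority number is more important.
    slots: how many nodes can run at once (a stand-in for compute capacity).
    Returns a list of rounds, each a list of node names.
    """
    # heapq is a min-heap, so negate priority; the index keeps ties in submit order.
    heap = [(-priority, index, name) for index, (name, priority) in enumerate(nodes)]
    heapq.heapify(heap)
    rounds = []
    while heap:
        batch = [heapq.heappop(heap)[2] for _ in range(min(slots, len(heap)))]
        rounds.append(batch)
    return rounds

# All four nodes become runnable at the same moment; A and B are on a high-priority baseline.
ready = [("C_report", 1), ("A_core", 8), ("D_report", 1), ("B_core", 8)]
print(run_order(ready, slots=2))
# [['A_core', 'B_core'], ['C_report', 'D_report']]
```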

Configure complex scheduling scenarios

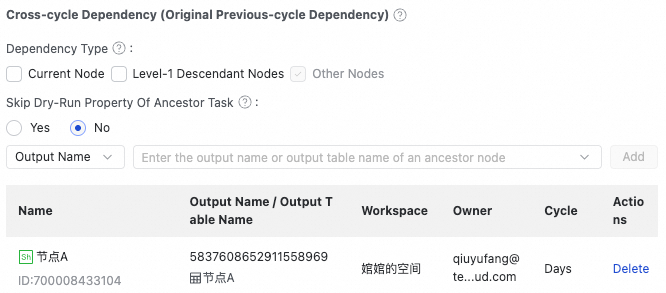

Cross-cycle dependencies

Configure a cross-cycle dependency when a node must wait for all instances of an upstream node from the previous scheduling cycle to complete before running.

Scenario: A daily summary node B runs at 02:00 each day. Its data source is an hourly node A. Node B runs only after all 24 hourly instances of node A from the previous day (00:00–23:00) have completed.

Configuration: In node B's scheduling dependency settings, set its dependency on node A as a cross-cycle dependency.

Result: The node B instance for data timestamp 2025-12-02 waits until all node A instances for data timestamp 2025-12-01 complete successfully.

If node B has no other upstream nodes, add the workspace root vertex as its upstream node.

For more scenarios involving nodes with different scheduling granularities, see Principles and examples for configuring scheduling in complex dependency scenarios.
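The cross-cycle gate for node B can be sketched as a completeness check over node A's hourly instances. The data structure (a set of date and hour pairs) and the function name `b_can_run` are assumptions for illustration; DataWorks tracks instance state internally.

```python
from datetime import date, timedelta

HOURS_PER_DAY = 24

def b_can_run(b_data_date, completed_a):
    """Check the cross-cycle gate for daily node B.

    completed_a: set of (data date, hour) pairs for node A instances that
    finished successfully. B needs all 24 hourly instances of the previous day.
    Returns (ready, list of missing hours).
    """
    previous_day = b_data_date - timedelta(days=1)
    required = {(previous_day, hour) for hour in range(HOURS_PER_DAY)}
    missing = sorted(hour for (_, hour) in required - completed_a)
    return not missing, missing

# Hours 00 to 22 are done; the 23:00 instance is still running.
done = {(date(2025, 12, 1), hour) for hour in range(23)}
print(b_can_run(date(2025, 12, 2), done))  # (False, [23])
done.add((date(2025, 12, 1), 23))
print(b_can_run(date(2025, 12, 2), done))  # (True, [])
```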

Quarterly and other irregular recurring schedules

For nodes that run on irregular but predictable dates, such as a quarterly financial closing node, combine a yearly scheduling cycle with scheduling parameters.

Scenario: A financial closing node must run once per quarter, on the last day of the month after the quarter ends (the end of January, April, July, and October), and process data from the entire preceding quarter.

When setting a quarter-end closing date, allow extra time for special month-end items such as cross-month supplementary orders, refund reversals, and manual audits.

Configuration:

In the node's time properties, set the scheduling cycle to yearly, specify the months as 1, 4, 7, 10, and select Last day of the month for the date. DataWorks automatically handles months with 30 or 31 days and leap years.

In your code, use scheduling parameters to dynamically calculate the start and end dates of the quarter based on the current data timestamp.

Running logic: DataWorks identifies the correct last day for each month automatically. On non-execution days within the schedule, instances perform a dry-run without consuming computing resources, maintaining dependency continuity.
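The date arithmetic behind this configuration can be sketched in plain Python. How the date reaches your node code through scheduling parameters is not shown here, and the function names are hypothetical.

```python
import calendar
from datetime import date, timedelta

RUN_MONTHS = {1, 4, 7, 10}

def is_run_day(d):
    """True when d is the last day of one of the configured months."""
    last_day = calendar.monthrange(d.year, d.month)[1]
    return d.month in RUN_MONTHS and d.day == last_day

def preceding_quarter(d):
    """Return (first day, last day) of the full quarter before the one containing d."""
    first_month_of_current = 3 * ((d.month - 1) // 3) + 1
    current_start = date(d.year, first_month_of_current, 1)
    end = current_start - timedelta(days=1)   # last day of the previous quarter
    start = date(end.year, end.month - 2, 1)  # that quarter spans three months
    return start, end

run_date = date(2026, 1, 31)
start, end = preceding_quarter(run_date)
print(is_run_day(run_date), start.isoformat(), end.isoformat())
# True 2025-10-01 2025-12-31
```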

Scheduling calendars for business-day-specific schedules

A scheduling calendar acts as a date filter. Combined with a scheduled time, it lets you run a node only on specific business days — such as trading days — without generating instances on other days.

Scenario: A securities clearing node must run at 22:00 on every trading day. On weekends and public holidays, the node must not run.

Configuration:

In the DataWorks resource center, create a custom "Trading Calendar" with all trading dates for the year. For details, see Configure a scheduling calendar.

In the node's scheduling properties, set the trigger time to 22:00 and associate the node with the Trading Calendar.

Execution logic:

Trading day: The node runs at 22:00.

Non-trading day (such as a public holiday): The system skips instance generation, or the generated instance performs a dry-run without consuming computing resources.

Combine a scheduling calendar with hourly or minute-level schedules for dual date-and-time filtering. For example, an hourly node configured to run at 08:00 and 18:00 daily, when associated with a calendar that includes only Mondays and Fridays, runs only at those times on Mondays and Fridays.
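The dual date-and-time filter can be modeled as two membership checks. This is a sketch under assumptions: the calendar is represented as a plain set of dates, and `should_run` is a hypothetical helper, not how DataWorks stores calendars.

```python
from datetime import date, datetime

def should_run(moment, calendar_dates, run_hours):
    """Dual filter: the date must be in the calendar and the hour must be scheduled."""
    return moment.date() in calendar_dates and moment.hour in run_hours

# Hypothetical calendar: the Monday and Friday of the first week of December 2025.
calendar_dates = {date(2025, 12, 1), date(2025, 12, 5)}
run_hours = {8, 18}

checks = {
    "Monday 08:00": should_run(datetime(2025, 12, 1, 8), calendar_dates, run_hours),
    "Monday 12:00": should_run(datetime(2025, 12, 1, 12), calendar_dates, run_hours),
    "Tuesday 08:00": should_run(datetime(2025, 12, 2, 8), calendar_dates, run_hours),
    "Friday 18:00": should_run(datetime(2025, 12, 5, 18), calendar_dates, run_hours),
}
for label, result in checks.items():
    print(label, result)
```

Only the Monday 08:00 and Friday 18:00 checks pass both filters.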

Best practices

Decouple scheduling logic from business logic

Use a layered strategy so that changes to business requirements don't force you to reconfigure the entire workflow.

Linear workflows: Set the scheduled time for the first node only (for example, 07:00). Downstream nodes run through dependency-driven execution.

Time-constrained nodes: Set precise, independent scheduled times for nodes with hard external deadlines. Avoid setting an upstream node's scheduled time later than its downstream node's, because the downstream node cannot start until the upstream node has run and will miss its own scheduled time.

Dynamic date handling: Use scheduling parameters such as ${yyyymmdd} to dynamically replace date values in your code, avoiding hardcoded dates.

Active period control: Use scheduling calendars and effective date ranges to limit when a node runs. For example, restrict a node to weekdays between January 1, 2026, and December 31, 2026.
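To see what dynamic date handling buys you, the sketch below mimics placeholder substitution locally. It is not how DataWorks resolves parameters. The date value is supplied by the caller, and the table and column names are made up.

```python
from datetime import date

def render(sql_template, data_date):
    """Replace the ${yyyymmdd} placeholder with a concrete date string."""
    return sql_template.replace("${yyyymmdd}", data_date.strftime("%Y%m%d"))

# Hypothetical table and column names.
template = "SELECT * FROM orders WHERE ds = '${yyyymmdd}'"
rendered = render(template, date(2025, 12, 1))
print(rendered)  # SELECT * FROM orders WHERE ds = '20251201'
```

The same template serves every run, so no code change is needed when the date changes.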

Use baselines for intelligent scheduling

Baselines guarantee delivery times for core nodes and eliminate the need to manually manage resource contention.

Prerequisite: Create a baseline and configure node priorities.

Define committed completion times: Attach core nodes to a high-priority baseline with a committed completion time (for example, 09:00). The scheduling engine identifies the critical path and ensures high-priority nodes, such as ODS layer extraction, get computing resources first.

Automatic resource peak-load shifting: Non-core report nodes are queued behind high-priority nodes. No manual staggering of start times is needed.

Dynamic prediction and alerts: Based on historical runtimes, the system predicts early in the day whether a workflow will miss its delivery time. If a delay is detected at 07:00 (for example, a predicted finish of 09:15 instead of 09:00), it triggers an alert and highlights the bottleneck nodes on the critical path, shifting the response from post-incident recovery to proactive intervention.
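The prediction idea can be illustrated with a simple average over historical runtimes. The real baseline algorithm is not described here, so treat this as a sketch under assumptions: the remaining critical-path nodes run one after another, the history values are invented, and `predict_finish` is a hypothetical helper.

```python
from datetime import datetime, timedelta
from statistics import mean

def predict_finish(now, remaining_history):
    """Predict the finish time from average historical runtimes.

    remaining_history: {node: [past runtimes in minutes]} for critical-path
    nodes that have not finished yet, assumed to run sequentially.
    Returns (predicted finish, node with the longest expected runtime).
    """
    expected = {node: mean(runs) for node, runs in remaining_history.items()}
    finish = now + timedelta(minutes=sum(expected.values()))
    bottleneck = max(expected, key=expected.get)
    return finish, bottleneck

now = datetime(2025, 12, 2, 7, 0)
committed = datetime(2025, 12, 2, 9, 0)
history = {"C": [50, 60, 55], "D": [30, 30, 30], "E": [45, 55, 50]}
finish, bottleneck = predict_finish(now, history)
if finish > committed:
    print(f"ALERT: predicted finish {finish:%H:%M}, committed {committed:%H:%M}, check {bottleneck}")
# ALERT: predicted finish 09:15, committed 09:00, check C
```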

Combining static planning with intelligent baselines: Set the start node's scheduled time through static planning. Use baselines to handle dynamic priority-based scheduling across the rest of the workflow. This reduces manual maintenance costs and ensures a high degree of certainty for core data output.