Dataphin supports a wide range of Python-based data processing scenarios by enabling you to create Python compute nodes that use native Python syntax. This topic describes how to create a Python compute node in Dataphin.

Background information

Python 3.7 supports diverse big data processing requirements better than Python 2.7. For example, Python 3.7 provides the list.clear() method (added in Python 3.3), which is not available in Python 2.7. For more information, see Python.
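As a minimal illustration of the difference mentioned above, the following sketch empties a list in place with `list.clear()`. The variable names are hypothetical. In Python 2.7, where this method does not exist, a common equivalent is `del records[:]`.

```python
# Runs on Python 3 (including 3.7 and 3.11); list.clear() does not exist in Python 2.7.
records = ["a", "b", "c"]
alias = records          # second reference to the same list object

records.clear()          # empties the list in place, without creating a new list

# Both names still refer to the same, now empty, list object.
print(records, alias)
```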

If your project’s compute source uses the Databricks engine and the Python version is 3.11, you can enable Databricks Connect. For more information, see What is Databricks Connect. To use Databricks Connect for Python outside Dataphin, refer to the following code.

    from databricks.connect import DatabricksSession
    from databricks.sdk.core import Config
    from databricks.sdk import WorkspaceClient

    # Add the following to the .databrickscfg file in the $HOME directory
    # [<profile-name>]
    # host = <host-name>
    # client_id = <client_id>
    # client_secret = <client_secret>
    # cluster_id = <cluster_id>

    # Method 1
    config = Config(
        profile="<profile-name>"
    )
    spark = DatabricksSession.builder.sdkConfig(config).getOrCreate()
    w = WorkspaceClient(profile="DEFAULT")

    # Method 2
    # spark = DatabricksSession.builder.profile("<profile-name>").getOrCreate()

    # Method 3
    # Create environment variables
    # DATABRICKS_HOST =
    # DATABRICKS_CLIENT_ID =
    # DATABRICKS_CLIENT_SECRET =
    # DATABRICKS_CLUSTER_ID =
    # spark = DatabricksSession.builder.getOrCreate()

    employees = [{"name": "John D.", "age": 30},
                 {"name": "Alice G.", "age": 25},
                 {"name": "Bob T.", "age": 35},
                 {"name": "Eve A.", "age": 28}]

    df = spark.createDataFrame(employees)
    df.write.saveAsTable("bdec.dataphin_test.demo_connect")

    df_demo = spark.read.table("bdec.dataphin_test.demo_connect")
    df_demo.show()
    spark.sql("select * from bdec.dataphin_test.demo_connect").show()

    fs = w.dbutils.fs
    fs.put(file="/demo/first_file.txt", contents="hello world")
    fs.head("/demo/first_file.txt")

Limits

Python 3.7 is not backward compatible with Python 2.7. You cannot directly upgrade existing Python 2.7 nodes.

Starting with Dataphin version 2.9.3, Python 3.7 compute nodes are supported by default. You can change the Python version only for nodes in the Draft state. Supported versions include Python 2.7, Python 3.7, and Python 3.11.

You can enable Databricks Connect only when your project’s compute source uses the Databricks engine and the Python version is 3.11.
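The two conditions in the last limit can be expressed as a small guard. This is only a sketch: the function name and the engine label `"databricks"` are hypothetical, and Dataphin applies its own check when you configure the node.

```python
import sys

# Python version required for Databricks Connect, as stated in the limits above.
DATABRICKS_CONNECT_VERSION = (3, 11)


def can_enable_databricks_connect(engine, version=None):
    """Return True only when both conditions from the limits hold.

    engine: name of the compute engine ("databricks" is a hypothetical label).
    version: (major, minor) tuple; defaults to the running interpreter.
    """
    if version is None:
        version = tuple(sys.version_info[:2])
    return engine.lower() == "databricks" and version == DATABRICKS_CONNECT_VERSION


print(can_enable_databricks_connect("Databricks", (3, 11)))
print(can_enable_databricks_connect("Databricks", (3, 7)))
```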

Task execution

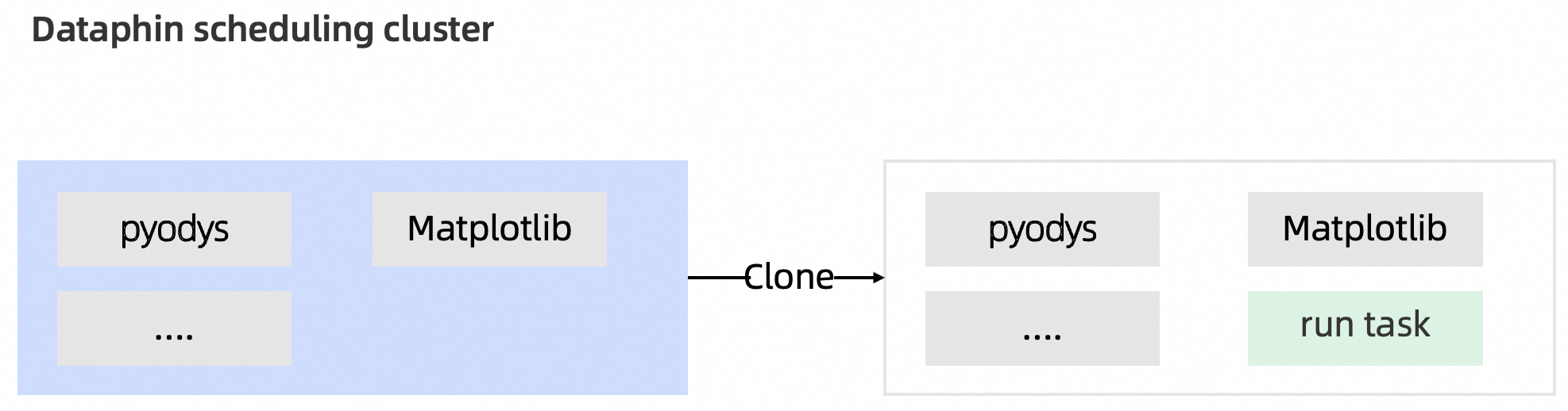

After you edit a Python node in Dataphin, the Dataphin scheduling cluster executes it. During execution, the cluster clones a built-in template image that contains common Python resource packages. You can use these pre-installed packages to develop your Python nodes. For more information, see Appendix: Pre-installed Python resource packages.

If the built-in resource packages do not meet your needs, you can install the required packages as third-party Python packages in the Management Center and then reference them in the node. At runtime, the system automatically places the referenced packages in the runtime environment for node execution. Because Dataphin clones a built-in template image for each run, if you install a resource package with the `pip install` command, the command runs every time the node runs. We recommend that you use third-party Python packages instead.
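Rather than calling `pip install` inside the node, the code can verify that a referenced package is present and fail with a clear message if it is not. The following Python 3 sketch uses the standard `importlib.util.find_spec` function; the helper names and the error text are hypothetical.

```python
import importlib.util


def is_installed(module_name):
    """Return True if a top-level module can be found in the current environment."""
    return importlib.util.find_spec(module_name) is not None


def require(module_name):
    """Fail fast with a clear message instead of installing the package at run time."""
    if not is_installed(module_name):
        raise ImportError(
            "Module %r is not available. Add it as a third-party Python package "
            "and reference it in the node properties." % module_name
        )


require("json")  # a standard library module, so this call passes
print(is_installed("a_module_that_is_not_installed_xyz"))
```

The check only looks up the module; it does not import or install anything.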

Procedure

On the Dataphin home page, choose Develop > Data Development from the top menu bar.

On the Develop page, select a project from the top menu bar. If you are using Dev-Prod mode, also select an environment.

In the left navigation pane, select Data Processing > Jobs. In the Jobs list, click the icon and select Python.

In the Create Python Node dialog box, configure the following parameters.

| Parameter | Description |
| --- | --- |
| Task Name | Enter a name for the code node. The name can be up to 256 characters long and cannot contain vertical bars (\|), forward slashes (/), backslashes (\\), colons (:), question marks (?), angle brackets (<>), asterisks (\*), or double quotation marks ("). |
| Schedule Type | Select a schedule type for the node. Recurring Task: the node is periodically scheduled by the system. One-Time Task: you must manually trigger the node to run. |
| Select Directory | Select the folder that contains the task. If no folder exists, you can **create a new folder** as follows: 1. Above the compute node list, click the icon to open the Create Folder dialog box. 2. In the Create Folder dialog box, enter a Name for the folder and Select Directory as needed. 3. Click OK. |
| Use Template | Reference a code template for efficient development. The code in a template node is read-only and cannot be edited. You only need to configure the template parameters to complete code development. |
| Python Version | You can select Python 2.7, Python 3.7, or Python 3.11. |
| Third-party Python packages | To use third-party Python packages, select a Python Version, and then select the Python third-party packages to import. The Python version defaults to the version selected for Python Default Version in Platform Settings > Development. You can select Python 2.7, Python 3.7, or Python 3.11. If you select multiple third-party Python packages, you can adjust their upload order in the list below. For more information about third-party Python packages, see Install and manage third-party Python packages. Note: After you add a third-party module to Python third-party packages, you must declare a reference in the task before you can import the module in your code. You can configure the referenced module in Compute Task Properties > Python Third-Party Packages Configuration Item. |
| Databricks Connect | After you enable this option, you must also select Development httpPath (Cluster) and Production httpPath (Cluster). For Basic projects, you only need to configure the Production httpPath (Cluster). You can select any general compute that is configured in the Databricks cluster to which the current development or production project's compute source belongs. These are HTTP paths that start with `sql/protocolv1/o`. |
| Description | Enter a brief description of the task, up to 1000 characters. |
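The Task Name rules listed above can be checked before you open the dialog box, for example when node names are generated by a script. This is a minimal sketch; the function name and messages are hypothetical, and Dataphin performs its own validation in the dialog box.

```python
# Characters that the Task Name parameter does not allow, as listed above.
FORBIDDEN_CHARS = set('|/\\:?<>*"')
MAX_NAME_LENGTH = 256


def validate_task_name(name):
    """Return a list of problems with the name; an empty list means it passes."""
    problems = []
    if len(name) > MAX_NAME_LENGTH:
        problems.append("name is longer than %d characters" % MAX_NAME_LENGTH)
    bad = sorted(set(name) & FORBIDDEN_CHARS)
    if bad:
        problems.append("name contains forbidden characters: " + " ".join(bad))
    return problems


print(validate_task_name("daily_sales_report"))
print(validate_task_name("sales/2024:*"))
```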

Click OK.

In the code editor on the current Python node tab, write the code for the Python compute node. After you finish editing the code, click Run above the code editor.

Note: When developing a Python compute node, you typically need to install resource packages based on your business scenario. Dataphin pre-installs common resource packages in the system. To use one during development, add an `import {package_name}` statement at the beginning of your code, such as `import configparser`. For more information, see Appendix: Pre-installed Python resource packages.

When developing a Python compute node, we recommend adding a comment in the first two lines of the file to specify the encoding. This prevents the system from using the default system encoding, which may cause errors during execution.

To import uploaded resource files into Python, see Upload and reference resources.
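The two recommendations in the note above, an encoding declaration near the top of the file and an `import` statement for a pre-installed package, might look as follows in a Python 3 node. The configuration text and its keys are hypothetical and serve only to exercise `configparser`.

```python
# -*- coding: utf-8 -*-
# The encoding declaration above is only honored on the first or second line of a file.
import configparser

# Hypothetical configuration text containing non-ASCII characters.
CONFIG_TEXT = """
[job]
owner = Zoë Müller
batch_size = 500
"""

parser = configparser.ConfigParser()
parser.read_string(CONFIG_TEXT)

owner = parser.get("job", "owner")
batch_size = parser.getint("job", "batch_size")
print(owner, batch_size)
```

Python 3 already treats source files as UTF-8 by default, so the declaration matters most for Python 2.7 nodes, where the default source encoding is ASCII.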

Click Property in the right sidebar. In the Property panel, configure the node’s Basic Information, Runtime Resources, Python Third-party Packages, Runtime Parameter, Schedule Property (for auto triggered tasks), Schedule Dependency (for auto triggered tasks), Runtime Configuration, and Resource Configuration.

Basic Information

Specifies basic information such as the node name, owner, and description. For configuration instructions, see Configure basic information for a node.

Runtime Resources

Specifies the CPU and memory resources allocated to run the node. The default is 0.1 cores and 256 MB. For configuration instructions, see Configure runtime resources for an offline node.

Python Third-party Packages

Select the third-party Python packages to import. For more information, see Install Python modules.

Runtime Parameter

If your node references parameter variables, assign values to those parameters in this section. The system replaces the variables with their corresponding values during scheduling. For configuration instructions, see Configure and use node parameters.

Schedule Property (for auto triggered tasks)

If the schedule type of the offline compute node is Recurring Task, you must configure its schedule properties in addition to its Basic Information. For configuration instructions, see Configure schedule properties.

Schedule Dependency (for auto triggered tasks)

If the schedule type of the offline compute node is Recurring Task, you must configure its schedule dependencies in addition to its Basic Information. For configuration instructions, see Configure schedule dependencies.

Runtime Configuration

You can configure a node-level runtime timeout and a retry policy for failed executions, based on your business scenario. If not configured, the node inherits the default tenant-level settings. For configuration instructions, see Configure runtime settings for a compute node.

Resource Configuration

You can assign a resource group to the current compute node. The node uses the resources of this group for scheduling. For configuration instructions, see Configure resources for a compute node.
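The Runtime Parameter behavior described above, where variables in the node are replaced with their assigned values during scheduling, can be pictured with a small stand-alone sketch. It uses Python's `string.Template` purely as an analogy: the `${bizdate}` placeholder, the query text, and the substitution mechanism are assumptions for illustration and do not describe how Dataphin implements parameter replacement.

```python
from string import Template

# Hypothetical node code that references a parameter variable.
node_code = Template("select * from demo_table where ds = '${bizdate}'")

# Hypothetical values, standing in for those assigned in the Runtime Parameter section.
runtime_parameters = {"bizdate": "20240101"}

# substitute() raises KeyError if a referenced variable has no value assigned.
rendered = node_code.substitute(runtime_parameters)
print(rendered)
```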

On the current Python node tab, save and submit the node.

Click the icon above the code editor to save the code.

Click the icon above the code editor to submit the code.

On the Submitting Log page, review the Submission Content and the results of the Pre-check, and enter any remarks. For more information, see Submit an offline compute node.

Note: When you use Databricks Connect in Dataphin, the system checks whether the current user has the required permissions for objects referenced in the code.

`spark.sql()`:

For table queries, the system checks whether the current user has permissions on the fields of the queried table.

For DML statements, the system checks whether the current user has permission to modify the table data.

For global variables, the system checks whether access control is enabled for the variable and whether the current user has permission to use it.

`spark.read.table("<table_name>")` and `spark.table("<table_name>")`: The system checks for read permissions on the entire `<table_name>`.

`df.write.saveAsTable("<table_name>")`: The system checks for write permissions on `<table_name>`. `df` is a DataFrame object.

After confirmation, click OK and Submit.

Note: To ensure data security, if your Python node code includes `from dataphin import hivec` or `import dataphin`, a code review is triggered upon submission. A code review ticket is automatically generated. The node can be submitted only after the review is approved.

The code reviewer is the project administrator of the current project. If multiple project administrators exist, approval from any one of them is sufficient.
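To find out before submission whether a node contains one of the two import forms named in the note above, you can scan the source with Python's `ast` module. This is a sketch under stated assumptions: the function name is hypothetical, it recognizes only the two forms the note mentions, and it does not reproduce Dataphin's own detection logic.

```python
import ast


def triggers_code_review(source):
    """Return True if the source contains `import dataphin` or
    `from dataphin import hivec`, the two forms named in the note."""
    tree = ast.parse(source)
    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            # Matches `import dataphin`, with or without an `as` alias.
            if any(alias.name == "dataphin" for alias in node.names):
                return True
        elif isinstance(node, ast.ImportFrom):
            # Matches `from dataphin import hivec` (absolute import only).
            if node.level == 0 and node.module == "dataphin":
                if any(alias.name == "hivec" for alias in node.names):
                    return True
    return False


print(triggers_code_review("from dataphin import hivec\n"))
print(triggers_code_review("import json\n"))
```

Parsing the syntax tree avoids false matches on comments or string literals that merely mention the import.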

What to do next

If your development mode is Dev-Prod mode, after submitting the node, go to the publish list and publish the node to the production environment. For more information, see Manage published nodes.

If your development mode is Basic mode, the submitted Python node runs in the production environment. You can view your published nodes in the Operation Center. For more information, see View and manage script nodes and View and manage one-time nodes.