Offline full-database migration synchronizes data from your on-premises data center or a self-managed database hosted on an ECS instance to big data compute services such as MaxCompute, Hive, and TDH Inceptor. This topic describes how to create and configure a full-database migration task.

Prerequisites

You have created the required data sources for migration. Full-database migration supports source databases such as MySQL, Microsoft SQL Server, Oracle, and OceanBase. For a complete list of supported data sources, see Supported data sources for full-database migration.

Function introduction

Offline full-database migration is a fast and efficient tool that reduces effort and cost. Instead of configuring individual offline pipelines one by one, you can use this feature to batch-configure multiple offline pipelines and synchronize all tables in a database at once.

Procedure

On the Dataphin homepage, in the top menu bar, choose Development > Data Integration.

In the top menu bar, select the target project.

In the left navigation pane, choose Database Migration > Offline Full-Database Migration.

On the Offline Full-Database Migration page, configure the parameters described in the following table.

Configure basic information.

Full-database migration folder name: Enter a name that is up to 256 characters in length. The following characters are not supported: vertical line (|), forward slash (/), backslash (\), colon (:), question mark (?), angle brackets (<>), asterisk (*), and quotation mark (").

Configure data source information.

Source

Parameter

Description

Data source type

Select the type of the source data source. For a list of supported data sources and instructions on creating them, see Supported data sources for full-database migration.

Oracle data source

Schema: Cross-schema table selection is supported. Select the schema where the tables reside. If not specified, the schema configured in the data source is used by default.

File encoding: If you select Oracle, choose the encoding. Supported options are UTF-8, GBK, and ISO-8859-1.

Microsoft SQL Server, PostgreSQL, Amazon Redshift, Amazon RDS for PostgreSQL, Amazon RDS for MySQL, Amazon RDS for SQL Server, Amazon RDS for Oracle, Amazon RDS for DB2, PolarDB-X 2.0, and GBase 8C data sources

Schema: Cross-schema table selection is supported. Select the schema where the tables reside. If not specified, the schema configured in the data source is used by default.

DolphinDB data source

Database: Select the database where the tables reside. If left blank, the database specified during data source registration is used.

Hive data source

If you select Hive, configure the following items.

File encoding: Supported options are UTF-8 and GBK.

ORC table compression format: Supported options are zlib, hadoop-snappy, lz4, and none.

Text table compression format: Supported options are gzip, bzip2, lzo, lzo_deflate, hadoop_snappy, framing-snappy, zip, and zlib.

Parquet table compression format: Supported options are hadoop_snappy, gzip, and lzo.

Field delimiter: The specified delimiter will be used when writing to the destination table. If left blank, the default is

\u0001.

Time Zone

Select the time zone that matches your database configuration. In China regions, Data Integration uses

GMT+8by default, which does not observe daylight saving time. If your database uses a time zone that observes daylight saving time—such asAsia/Shanghai—and the synchronized timestamp falls within a daylight saving period, select a time zone likeAsia/Shanghai. Otherwise, the synchronized data will differ by one hour from the source data.Supported time zones include the following: GMT+1, GMT+2, GMT+3, GMT+5:30, GMT+8, GMT+9, GMT+10, GMT-5, GMT-6, GMT-8, Africa/Cairo, America/Chicago, America/Denver, America/Los_Angeles, America/New York, America/Sao Paulo, Asia/Bangkok, Asia/Dubai, Asia/Kolkata, Asia/Shanghai, Asia/Tokyo, Atlantic/Azores, Australia/Sydney, Europe/Berlin, Europe/London, Europe/Moscow, Europe/Paris, Pacific/Auckland, and Pacific/Honolulu.

Datasource

Select the source data source. If the required data source is not available, click Create Data Source to create one.

Batch read size

When the source data source is Oracle, Microsoft SQL Server, OceanBase, IBM DB2, PostgreSQL, Amazon Redshift, Amazon RDS for PostgreSQL, Amazon RDS for MySQL, Amazon RDS for SQL Server, Amazon RDS for Oracle, Amazon RDS for DB2, DolphinDB, or GBase 8C, you can configure the number of records to read in a single batch. The default value is 1024.

Sync Target

Parameter

Description

Data source type

Select the destination data source type. For a list of supported data sources and instructions on creating them, see Supported data sources for full-database migration.

NoteWhen synchronizing to AnalyticDB for PostgreSQL, the system creates a date-based partition for the destination table each day.

To use other partitioning strategies, modify the preparation statement for individual pipelines after they are generated.

Datasource

Select the destination data source. If the required data source is not available, click Create Data Source to create one. For a list of supported data sources and instructions on creating them, see Supported data sources for full-database migration.

TDH Inceptor and ArgoDB destination types.

You must configure the storage format.

TDH Inceptor: Supports PARQUET, ORC, and TEXTFILE storage formats.

ArgoDB: Supports PARQUET, ORC, TEXTFILE, and HOLODESK storage formats.

Hive destination type.

The configuration options vary depending on whether you select a data lake table format (Hudi, Iceberg) or leave it unselected (standard Hive).

NoteYou can select Hudi or Iceberg only if the selected data source or the current project’s compute engine has data lake table format enabled and you explicitly choose Hudi or Iceberg.

Data lake table format set to none (i.e., standard Hive)

External table: Toggle the switch to specify whether the table is external. Disabled by default.

Storage format: Supports PARQUET, ORC, and TEXTFILE.

File encoding: When the Hive storage format is ORC, you can configure file encoding. Supported options are UTF-8 and GBK.

Compression format:

ORC storage format: Supports zlib, hadoop-snappy, and none.

PARQUET storage format: Supports gzip and hadoop-snappy.

TEXTFILE storage format: Supports gzip, bzip2, lzo, lzo_deflate, hadoop-snappy, and zlib.

Performance settings: When the Hive storage format is ORC, you can configure performance-related settings. In scenarios with many fields and sufficient memory, increasing this value may improve write performance. If memory is limited, decreasing it may reduce GC time and improve write performance. The default is

{"hive.exec.orc.default.buffer.size":16384}bytes. Do not exceed 262144 bytes (256 KB).Field delimiter: For TEXTFILE storage format, you can configure the field delimiter. The system uses the specified delimiter when writing to the destination table. If left blank, the default is

\u0001.Field delimiter handling: For TEXTFILE storage format, you can configure how to handle field delimiters. If your data contains the default or custom field delimiter, choose a strategy to prevent write errors: keep, remove, or replace with.

Row delimiter handling: For TEXTFILE storage format, you can configure how to handle row delimiters. If your data contains line feeds (

\r\nor\n), choose a strategy to prevent write errors: keep, remove, or replace with.Development Data Source Location: In a Dev-Prod mode project, you can specify the storage location of tables in table creation statements for the development data source. For example,

hdfs://path_to_your_external_table.Production Data Source Location: For projects in Dev-Prod mode, you can specify the table's storage location in the table creation statement for the production data source. For example,

hdfs://path_to_your_external_table.NoteBasic mode projects require only one data source Location.

Data lake table format set to Hudi

Execution engine: If Spark is enabled for the selected data source, you can choose Spark or Hive. If Spark is not enabled, only Hive is available.

Hudi table type: Choose MOR (merge on read) or COW (copy on write). Default is MOR.

Extended properties: Enter Hudi-supported configuration properties in the format

k=v.

Data lake table format set to Iceberg

Execution engine: If Spark is configured for the selected data source, Spark is displayed and selected by default. Otherwise, only Hive is available.

External table: Toggle the switch to specify whether the table is external. Disabled by default.

Storage format: Supports PARQUET, ORC, and TEXTFILE.

File encoding: When the Hive storage format is ORC, you can configure file encoding. Supported options are UTF-8 and GBK.

Compression format:

ORC storage format: Supports zlib, hadoop-snappy, and none.

PARQUET storage format: Supports gzip and hadoop-snappy.

TEXTFILE storage format: Supports gzip, bzip2, lzo, lzo_deflate, hadoop-snappy, and zlib.

Performance settings: When the Hive storage format is ORC, you can configure performance-related settings. In scenarios with many fields and sufficient memory, increasing this value may improve write performance. If memory is limited, decreasing it may reduce GC time and improve write performance. The default is

{"hive.exec.orc.default.buffer.size":16384}bytes. Do not exceed 262144 bytes (256 KB).Field delimiter: For TEXTFILE storage format, you can configure the field delimiter. The system uses the specified delimiter when writing to the destination table. If left blank, the default is

\u0001.Field delimiter handling: For TEXTFILE storage format, you can configure how to handle field delimiters. If your data contains the default or custom field delimiter, choose a strategy to prevent write errors: keep, remove, or replace with.

Row delimiter handling: For TEXTFILE storage format, you can configure how to handle row delimiters. If your data contains line feeds (

\r\nor\n), choose a strategy to prevent write errors: keep, remove, or replace with.Development Data Source Location: For projects that use the Dev-Prod pattern, you can specify the storage location of a table in the CREATE TABLE statement for the development data source, such as

hdfs://path_to_your_external_table.Prod data source Location: In Dev-Prod mode projects, specify the HDFS location for table storage in the production environment’s CREATE TABLE statement. Example:

hdfs://path_to_your_extemal_table.NoteBasic mode projects require only one data source Location.

Data lake table format set to Paimon

Execution engine: Only Spark is supported.

Paimon table type: Choose MOR (merge on read), COW (copy on write), or MOW (merge on write). Default is MOR.

Extended properties: Enter Hudi-supported configuration properties in the format

k=v.

AnalyticDB for PostgreSQL and GaussDB (DWS) destination types.

Configure the following items.

ImportantThe conflict resolution policy applies only to AnalyticDB for PostgreSQL with kernel version higher than 4.3 in Copy mode. Use caution when selecting this option for versions below 4.3 or unknown versions to avoid task failure.

Conflict resolution policy: In Copy mode, you can set the policy to either error on conflict or overwrite on conflict.

Schema: Cross-schema table selection is supported. Select the schema where the tables reside. If not specified, the schema configured in the data source is used by default.

Lindorm Target Data Source Type

Configure the following items.

Storage format: Supports PARQUET, ORC, TEXTFILE, and ICEBERG.

Compression format: Varies by storage format.

ORC storage format: Supports zlib, hadoop-snappy, lz4, and none.

PARQUET storage format: Supports gzip and hadoop-snappy.

TEXTFILE storage format: Supports gzip, gzip2, lzo, lzo_deflate, hadoop-snappy, and zlib.

Dev data source Location: In Dev-Prod mode projects, specify the root HDFS path for table storage in the development environment’s CREATE TABLE statement. Example:

/user/hive/warehouse/xxx.db.Prod data source Location: In Dev-Prod mode projects, specify the root HDFS path for table storage in the production environment’s CREATE TABLE statement. Example:

/user/hive/warehouse/xxx.db.

MaxCompute data source

MaxCompute table type: Choose standard table or Delta table.

Databricks data source

Schema: Cross-schema table selection is supported. Select the schema where the tables reside. If not specified, the schema configured in the data source is used by default.

Loading Policy

For Hive (when data lake table format is Hudi or Paimon), TDH Inceptor, ArgoDB, StarRocks, Oracle, MaxCompute, Lindorm (compute engine), and Data Lake Formation destinations, the loading policy supports overwrite, append, and update.

Overwrite: If data already exists, delete it before writing new data.

Append: Add new data without overwriting existing data.

Update: Update records based on primary key. Insert new records if the primary key does not exist.

NoteIf the MaxCompute table type is standard table, choose append or overwrite. If it is Delta table, choose update or overwrite.

For Data Lake Formation destinations, only append and update are supported.

For Hive destinations (when data lake table format is not selected), the loading policy supports overwrite only integrated data, append, and overwrite all data.

For AnalyticDB for PostgreSQL and GaussDB (DWS) destinations, the loading policy supports insert and copy.

insert: Synchronizes data record by record. Suitable for small data volumes to ensure accuracy and completeness.

copy: Synchronizes data via files. Suitable for large data volumes to improve speed.

Batch write size

For Hive (when data lake table format is Hudi), AnalyticDB for PostgreSQL, and StarRocks destinations, you can configure the maximum amount of data written in a single batch. You can also set the batch write count. The system writes data when either limit is reached first.

Batch write count

For Hive (when data lake table format is Hudi), AnalyticDB for PostgreSQL, and StarRocks destinations, you can configure the number of records written in a single batch.

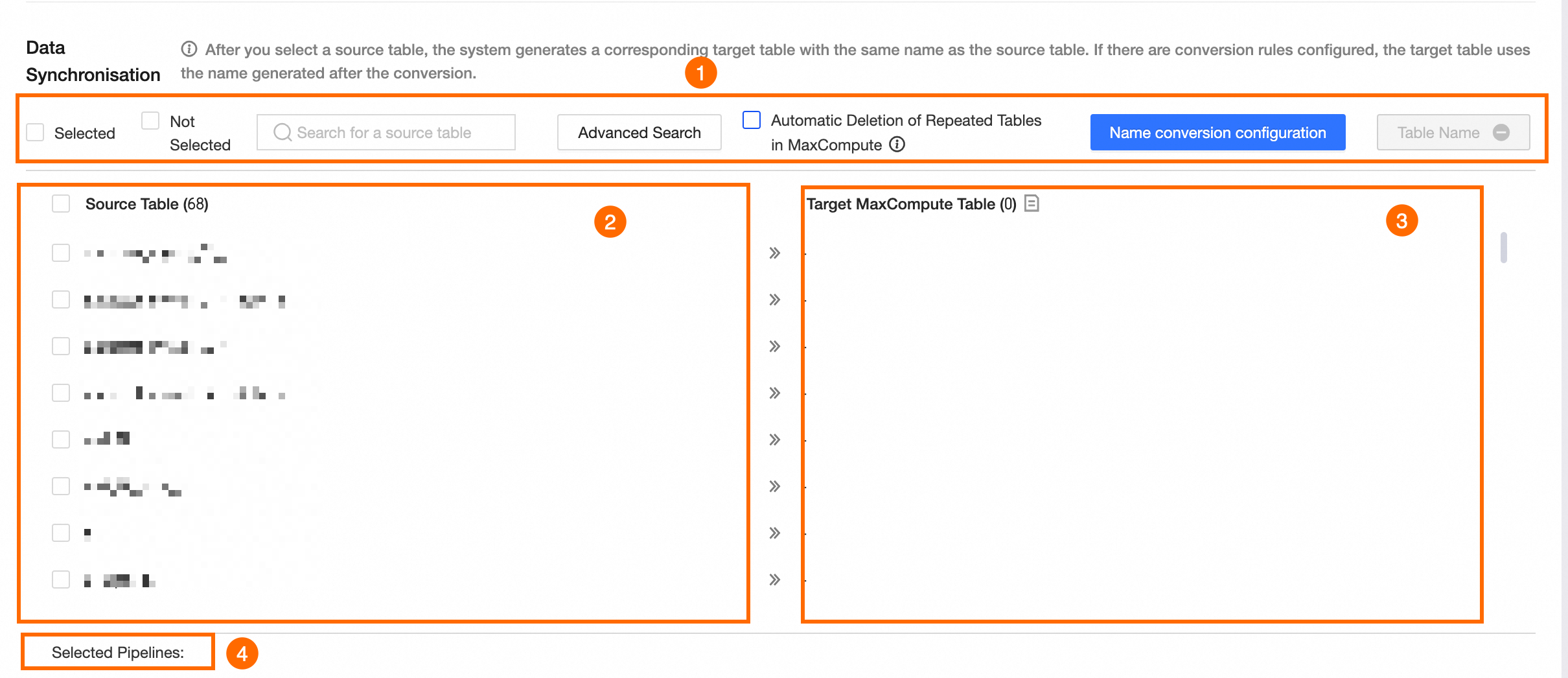

Configure data synchronization.

Source is not FTP.

By default, after you select the source tables, corresponding destination tables are generated with the same names. If you configure naming conversion rules, the names are transformed accordingly.

NoteIf the source data source is external and a metadata collection task is not configured, the metadata for the source is unavailable and the metadata fields are empty. You can go to the Metadata Center to configure a collection task.

Section

Description

① Operations area

Selected and unselected: Filter source tables by selected or unselected status.

Search source tables: Search by table name (case-sensitive).

Advanced search: The page displays up to 10,000 tables. Advanced search lets you find tables across the entire database. Click Advanced Search and configure parameters in the Advanced Search dialog box.

Set search method.

Choose between exact table name input and fuzzy search.

Exact table name input: Enter multiple table names in the search content field, separated by the configured delimiter (default is

\n). You can customize the delimiter.Fuzzy search: Enter keywords in the search content field. The system performs a fuzzy match on table names.

Enter search content.

The input format depends on the search method.

Exact table name input: Enter multiple table names separated by the configured delimiter.

Fuzzy search: Enter table name keywords.

Search results.

After setting the search method and search content, click Search. In the results, select tables and choose an action: select all or deselect all. Click OK to apply the selection.

Automatically delete existing tables with the same name: When enabled, Dataphin deletes any existing tables in the data source that have the same name as those generated by full-database migration, then recreates them.

ImportantFor project data sources, this deletes tables in both production and development environments. Use caution.

Naming conversion rules: Optional. These rules let you replace or filter table and field names during synchronization.

Click Naming Conversion Rules.

On the Naming Conversion Rules page, configure transformation rules.

Table name conversion rule: Click Create Rule. Enter the source string to replace and the replacement string. Example: To change

dataworktodataphin, set the source string toworkand the replacement tophin.Table name prefix: Enter a prefix in the Table name prefix field. During synchronization, the prefix is added automatically. Example: Prefix

pre_+ table namedataphin=pre_dataphin.Table Name Suffix: Enter the suffix for the destination database table in the Table Name Prefix field. During sync, the destination database table name is automatically generated. For example, if you enter

_prodas the suffix and the original table name isdataphin, the generated destination database table name will bepre_dataphin_prod.Field name rules: Click Add Rule. Enter the source field string to replace and the replacement string. Example: To change field name

dataworktodataphin, set the source string toworkand the replacement tophin.

After configuration, click OK. The corresponding destination tables will display the converted names.

NoteEnglish characters in replacement strings and table name prefixes/suffixes are automatically converted to lowercase.

Validate table names: Checks whether the destination database already contains tables with the current destination names.

②Source tables

Select the source tables to synchronize from the Source tables list.

③Corresponding destination tables

After selecting source tables, corresponding destination tables are generated with names matching the source tables by default. If you configure naming conversion rules, the names are transformed accordingly.

NoteDestination table names support only letters, digits, and underscores. If source table names contain other characters, configure table name conversion rules.

④Pipeline count

Number of pipelines currently selected.

Source is FTP.

You can click Download Excel Template, fill it out as instructed, and upload the file. Follow the template format exactly to avoid parsing failures.

NoteYou can upload a single .xlsx file or a single .zip archive that contains one or more .xlsx files. The file size cannot exceed 50 MB.

After you upload the file, click Parse File.

Parameter

Description

① Operation Area

Search source files: Search by file name.

Show only failed tasks: Displays only tasks that failed parsing.

Automatically delete existing tables with the same name: When enabled, deletes any existing tables in the data source that have the same name as those generated by full-database migration, then recreates them.

ImportantFor project data sources, this deletes tables in both production and development environments. Use caution.

Validate table names: Checks whether the destination database already contains tables with the current destination names.

②Source files and Corresponding destination tables

Source files: Select the files to synchronize from the Source files list.

Corresponding destination tables: After parsing, destination tables are generated based on the template file.

③Pipeline count

Number of pipelines currently selected.

Task naming configuration

Generated By specifies how the name for the offline full-database migration task is generated. You can choose System Default or Custom Rule.

Parameter

Description

Generated By

System Default

Generates task names using the system’s default naming convention.

Custom Rule

ImportantBefore configuring a custom task name rule, select both the source and destination data sources. Otherwise, you cannot configure the rule.

Default rule: After selecting source and destination data sources and choosing Custom Rule, the system generates a default rule in the task naming rule text box. The default rule is

${source_data_source_type}2${destination_data_source_type}_${source_table_name}.Example: If the source is MySQL, the destination is Oracle, and the first source table is named

source_table_name1, the default rule isMySQL2Oracle_${source_table_name}, and the previewed task name isMySQL2Oracle_source_table_name1.NoteThis default rule is not the same as the system default generation method.

Custom rule: Enter a naming rule in the Task naming rule text box on the left. You can delete the default rule or modify it.

Names cannot contain vertical line (|), forward slash (/), backslash (\), colon (:), question mark (?), angle brackets (<>), asterisk (*), or quotation mark ("). Maximum length is 256 characters. Click the Available metadata list on the right to copy valid metadata placeholders.

NoteWhen metadata placeholders are used, their values in the task name preview are taken from the first table in the source table list.

Set synchronization method and data filtering.

Parameter

Description

Synchronization Method

Select the synchronization method: Daily Sync, One-Time Sync, or Daily Sync + One-Time Sync.

Daily Sync: Creates a scheduled pipeline task that runs daily, typically for incremental or full daily data sync.

One-Time Sync: Creates a manual pipeline task, typically for historical full data sync.

Daily Sync + One-Time Sync: Creates both a scheduled daily task and a manual task. Typically used for initial full sync followed by daily incremental or full sync.

NoteFTP sources do not support Daily Sync + One-Time Sync.

The destination table is created as

Select whether to create partitioned or non-partitioned destination tables. Rules vary by sync method:

Daily Sync: If partitioned, the destination table is created as partitioned, with data written to partition

ds=${bizdate}by default. If non-partitioned, the table is created as non-partitioned.One-Time Sync: If partitioned, the destination table is created as partitioned. You must configure the one-time sync partition parameter, which supports constants or partition parameters. Examples: constant

20230330or partition parameterds=${bizdate}. If non-partitioned, the table is created as non-partitioned.Daily Sync + One-Time Sync: Partitioned tables are used by default and cannot be changed. You must configure the one-time sync partition parameter, which supports constants or partition parameters. Examples: constant

20230330or partition parameterds=${bizdate}.NoteCurrently, one-time sync data can only be written to a single specified partition. To write historical full data to multiple partitions, use an SQL task after one-time sync to populate the correct partitions, or use daily incremental sync followed by a data backfill operation.

Data filtering

Source is not Hive or MaxCompute

Daily sync filter condition: When using daily sync, configure a filter condition. Example:

ds=${bizdate}extracts all data whereds=${bizdate}from the source and writes it to the specified destination partition.One-time sync filter condition: When using one-time sync, configure a filter condition. Example:

ds=<${bizdate}extracts all data whereds=<${bizdate}from the source and writes it to the specified destination table (or partition).

Source is Hive or MaxCompute

Daily Sync Partition: When the source database is Hive, or MaxCompute, you must specify the partitions of the partitioned table to read daily. This feature supports reading a single partition, such as

ds=${bizdate}. It also supports reading multiple partitions, such as/*query*/ds>=20230101 and ds<=20230107.One-time Sync Partitions: If the source database is Hive or MaxCompute and the Sync Mode includes One-time Sync, you must specify the partitions to read from the partitioned table. You can read a single partition by entering

ds=${bizdate}, or multiple partitions by entering/*query*/ds>=20230101 and ds<=20230107.If partition does not exist: Choose a strategy:

Mark task as failed: Stops the task and marks it as failed.

Mark task as successful: Completes the task successfully with no data written.

Use latest non-empty partition: For MaxCompute sources, uses the table’s latest non-empty partition (max_pt). If no non-empty partitions exist, the task fails. Not supported for Hive sources.

NoteFTP sources do not support data filtering.

Parameter Settings

For FTP sources, you can use parameters in the source file path.

Scheduling configuration

Parameter

Description

Scheduling Configuration

Select a scheduling configuration. The options include Concurrent Scheduling and Batch Scheduling.

Concurrent Scheduling: Runs sync tasks for the selected tables from the source database at 00:00 (in the specified scheduling time zone) every day.

Batch Scheduling: Runs sync tasks for the selected tables from the source database in batches. Supports a scheduling interval from 0 to 23 hours and a maximum of 142 sync tasks. For example, to sync 100 tables and you set the schedule to sync 10 tables every 2 hours, it will take 20 hours to start all sync tasks in one cycle. The sync interval cannot exceed 24 hours.

Runtime timeout

If a sync task runs longer than the specified threshold, it is automatically stopped and marked as failed. You can select System configuration or Custom.

System configuration: Uses the system's default timeout period. For more information, see Runtime configurations.

Custom: Set a custom timeout period. Enter a number greater than 0 and up to 168. The value can have up to two decimal places.

Auto rerun upon failure

If a task instance or data backfill instance fails, the system automatically reruns it based on your configuration. You can set the number of retries to an integer from 0 to 10 and the retry interval to an integer from 1 to 60.

Upstream dependency

Click Add Dependency to add a physical node or a logical table node as an upstream dependency for this node. If you do not configure a dependency, the tenant's virtual root vertex is used as the default upstream dependency. You can also manually add a virtual node as a dependent object for this node. This is useful for scenarios such as unified data backfills.

Resource configuration

Resource Group: Offline full-database migration tasks consume scheduling resources from a resource group that you specify. Each generated task instance consumes quota from the specified group. If insufficient resources are available, the task enters a waiting for scheduling resources state. Resource groups are isolated to ensure scheduling stability.

You can select only resource groups that are configured for the task daily scheduling scenario and are associated with the current project. For more information, see Resource group configuration.

NoteBasic projects support a Resource Group, while Dev-Prod projects support a Development Task Scheduling Resource Group and a Production Task Scheduling Resource Group.

If you select Project Default Resource Group, the configuration is automatically updated when the project's default resource group is changed.

By default, both development and production tasks use the project's default scheduling resource group. You can change this to any other resource group that is associated with the current project, including registered scheduling cluster resource groups.

After configuring all parameters, you can click Generate Pipelines to create the offline full-database migration pipelines.

In the Run Results section, you can view the results of the pipeline tasks. The results include details such as source tables, destination tables, sync methods, task status, and remarks.

After the pipelines are generated, a folder for the offline full-database migration task is created under Offline Integration. The folder contains the generated offline pipeline tasks. You can configure and publish these tasks. For more information, see Configure offline pipeline task properties.

If some tables fail to be created or you need to add new tables later, you can manually create offline pipeline or script tasks for those tables and move them into the full-database migration folder. To do this, follow these steps:

Click the

button next to the target full-database migration folder, and then select Batch Pipeline or Batch Script.

button next to the target full-database migration folder, and then select Batch Pipeline or Batch Script.In the Create Offline Pipeline or Create Offline Script dialog box, configure the settings and click OK. For configuration details, see Create an integration task using a single pipeline, Create an integration task using script mode.

NoteThe newly created offline pipeline and script tasks appear in the current full-database migration folder.

You cannot move folders into or out of the full-database migration folder.

To move an existing offline pipeline or script task into the full-database migration folder, click the

icon next to the task name, select Move, and then select the destination folder in the dialog box that appears.

icon next to the task name, select Move, and then select the destination folder in the dialog box that appears.Deleting the full-database migration folder also deletes all tasks that it contains, including offline pipeline and script tasks.

What to do next

After you create and publish the offline full-database migration task, you can monitor and manage it in the Operation Center to ensure that it runs as expected. For more information, see Operation Center.