Container Compute Service (ACS) provides on-demand GPU computing without requiring you to manage the underlying hardware or node configuration. ACS is quick to deploy and billed on a pay-as-you-go basis, which makes it well suited to large language model (LLM) inference and helps reduce inference costs. This guide walks you through deploying a ComfyUI service on ACS and using the deepgpu-comfyui plugin to accelerate Wan2.1 text-to-video and image-to-video generation.

By the end of this guide, you will have:

Downloaded the Wan2.1 model files to a persistent NAS volume

Deployed a ComfyUI service on an ACS GPU cluster

Run an accelerated text-to-video workflow using the ApplyDeepyTorch node

Background

ComfyUI

ComfyUI is an open-source, node-based UI for running and customizing Stable Diffusion pipelines. Instead of writing code, you build generation workflows by connecting nodes on a visual canvas.

Wan model

Tongyi Wanxiang, also known as Wan, is a large AI-generated content (AIGC) model for AI art and text-to-image generation from Alibaba's Tongyi Lab. It is the visual generation branch of the Tongyi Qianwen large model series. Wan is the world's first AI art model to support Chinese prompts. It has multimodal capabilities and can generate high-quality artwork from text descriptions, hand-drawn sketches, or image style transfers.

Prerequisites

Before you begin, ensure that you have:

ACS account authorization. If this is your first time using ACS, assign the default role so ACS can access Elastic Compute Service (ECS), Object Storage Service (OSS), Apsara File Storage NAS, Cloud Parallel File Storage (CPFS), and Server Load Balancer (SLB). For details, see Quick start for first-time ACS users

An ACS GPU cluster with L20 (GN8IS) or G49E GPU cards

A NAS or OSS persistent volume to store model files. This guide uses a NAS volume. For setup instructions, see Create a NAS file system as a volume or Use a statically provisioned OSS volume

Git installed in your local environment. See Git downloads

Step 1: Prepare the model data

Run the following commands in the directory where the NAS volume is mounted.

Clone the ComfyUI repository.

    git clone https://github.com/comfyanonymous/ComfyUI.git

Download the three Wan2.1 model files to their corresponding ComfyUI directories. The files are hosted in the Wan_2.1_ComfyUI_repackaged project on ModelScope. The cd paths below are relative, so run each pair of commands from the directory where the NAS volume is mounted.

The diffusion model (wan2.1_t2v_14B_fp16.safetensors):

    cd ComfyUI/models/diffusion_models
    wget https://modelscope.cn/models/Comfy-Org/Wan_2.1_ComfyUI_repackaged/resolve/master/split_files/diffusion_models/wan2.1_t2v_14B_fp16.safetensors

The VAE (wan_2.1_vae.safetensors):

    cd ComfyUI/models/vae
    wget https://modelscope.cn/models/Comfy-Org/Wan_2.1_ComfyUI_repackaged/resolve/master/split_files/vae/wan_2.1_vae.safetensors

The text encoder (umt5_xxl_fp8_e4m3fn_scaled.safetensors):

    cd ComfyUI/models/text_encoders
    wget https://modelscope.cn/models/Comfy-Org/Wan_2.1_ComfyUI_repackaged/resolve/master/split_files/text_encoders/umt5_xxl_fp8_e4m3fn_scaled.safetensors

The download takes around 30 minutes. If your connection is slow, increase the peak public bandwidth before starting.
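Because the files are large, it can help to confirm that all three landed in the expected directories before you deploy. The following is a minimal sketch and not part of the original procedure: check_wan_models is a hypothetical helper name, and it only checks that each file exists and is non-empty, not that the download is complete or uncorrupted.

```shell
# check_wan_models (hypothetical helper): report which of the three Wan2.1
# files are present under a ComfyUI checkout.
# $1 is the path to the cloned ComfyUI directory (defaults to ./ComfyUI).
check_wan_models() {
  local root="${1:-ComfyUI}"
  local missing=0
  local rel
  for rel in \
    models/diffusion_models/wan2.1_t2v_14B_fp16.safetensors \
    models/vae/wan_2.1_vae.safetensors \
    models/text_encoders/umt5_xxl_fp8_e4m3fn_scaled.safetensors
  do
    # -s is true only when the file exists and has a size greater than zero.
    if [ -s "$root/$rel" ]; then
      echo "ok       $rel"
    else
      echo "missing  $rel"
      missing=$((missing + 1))
    fi
  done
  # Succeed only when nothing is missing.
  [ "$missing" -eq 0 ]
}
```

From the NAS mount directory, run check_wan_models ComfyUI. A non-zero exit status means at least one file is missing or empty.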

Download and extract the ComfyUI-deepgpu plugin.

    cd ComfyUI/custom_nodes
    wget https://aiacc-inference-public-v2.oss-cn-hangzhou.aliyuncs.com/deepgpu/comfyui/nodes/20250513/ComfyUI-deepgpu.tar.gz
    tar zxf ComfyUI-deepgpu.tar.gz
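This guide does not state which directory the archive creates under custom_nodes. If you want to check before extracting, one option is to list the archive's top-level entries. This is a small sketch; archive_top_level is a hypothetical helper name.

```shell
# archive_top_level (hypothetical helper): print the distinct top-level
# entries of a .tar.gz archive without extracting it.
archive_top_level() {
  tar tzf "$1" |        # list member paths
    sed 's|^\./||' |    # drop a leading "./" if the archive uses one
    cut -d/ -f1 |       # keep only the first path component
    grep -v '^$' |      # skip empty lines
    sort -u
}
```

For example, archive_top_level ComfyUI-deepgpu.tar.gz prints one line per top-level directory or file in the archive.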

Step 2: Deploy the ComfyUI service

Log in to the ACS console. In the left navigation pane, choose Clusters. Click the target cluster name. Then choose Workloads > Deployments and click Create from YAML.

Paste the following YAML manifest and click Create.

Replace persistentVolumeClaim.claimName with the name of your persistent volume claim (PVC). This example uses the inference-nv-pytorch 25.07 image from the cn-beijing region to minimize image pull times. To use this image from other regions, see Usage method and update the image path in the manifest. The container image in this example has the deepgpu-torch and deepgpu-comfyui plugins pre-installed. To use these plugins in a different container environment, contact a solution architect (SA) to get the installation packages.

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      labels:
        app: wanx-deployment
      name: wanx-deployment-test
      namespace: default
    spec:
      replicas: 1
      selector:
        matchLabels:
          app: wanx-deployment
      template:
        metadata:
          labels:
            alibabacloud.com/compute-class: gpu
            alibabacloud.com/compute-qos: default
            alibabacloud.com/gpu-model-series: L20 # Supported GPU card types: L20 (GN8IS), G49E
            app: wanx-deployment
        spec:
          containers:
          - command:
            - sh
            - -c
            - DEEPGPU_PUB_LS=true python3 /mnt/ComfyUI/main.py --listen 0.0.0.0 --port 7860
            image: acs-registry-vpc.cn-beijing.cr.aliyuncs.com/egslingjun/inference-nv-pytorch:25.07-vllm0.9.2-pytorch2.7-cu128-20250714-serverless
            imagePullPolicy: Always
            name: main
            resources:
              limits:
                nvidia.com/gpu: "1"
                cpu: "16"
                memory: 64Gi
              requests:
                nvidia.com/gpu: "1"
                cpu: "16"
                memory: 64Gi
            terminationMessagePath: /dev/termination-log
            terminationMessagePolicy: File
            volumeMounts:
            - mountPath: /dev/shm
              name: cache-volume
            - mountPath: /mnt # /mnt is the path in the pod where the NAS volume claim is mapped
              name: data
          dnsPolicy: ClusterFirst
          restartPolicy: Always
          schedulerName: default-scheduler
          securityContext: {}
          terminationGracePeriodSeconds: 30
          volumes:
          - emptyDir:
              medium: Memory
              sizeLimit: 500G
            name: cache-volume
          - name: data
            persistentVolumeClaim:
              claimName: wanx-nas # wanx-nas is the volume claim created from the NAS volume
    ---
    apiVersion: v1
    kind: Service
    metadata:
      name: wanx-test
    spec:
      type: LoadBalancer
      ports:
      - port: 7860
        protocol: TCP
        targetPort: 7860
      selector:
        app: wanx-deployment

Key parameters in this manifest:

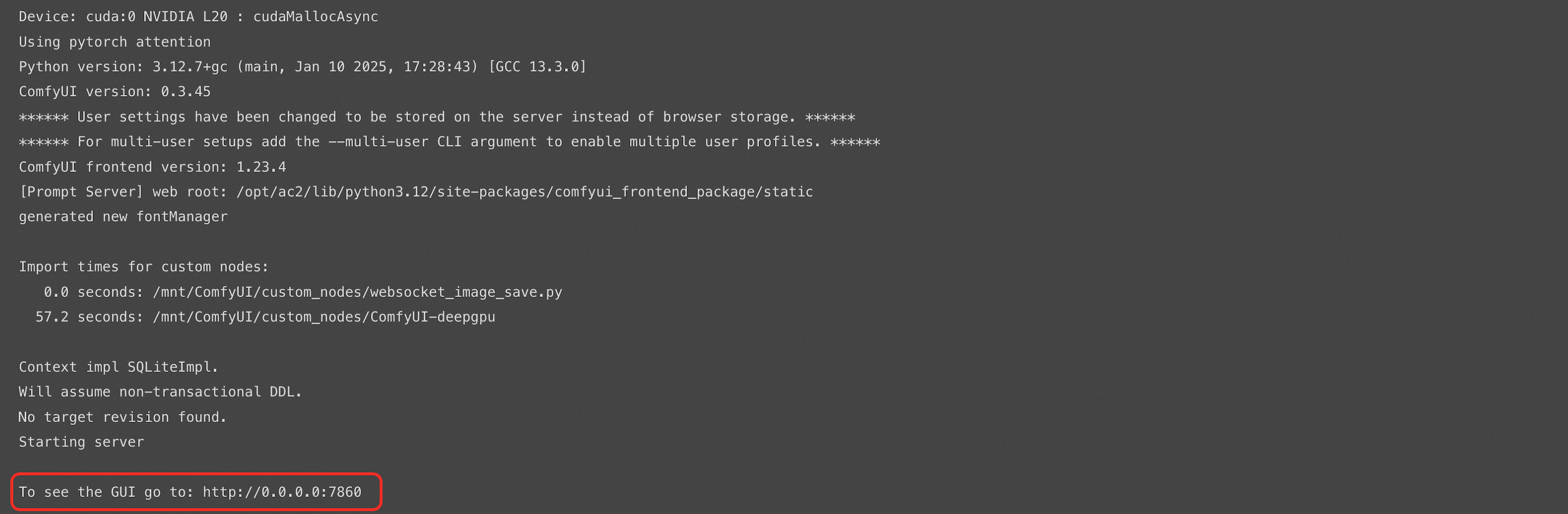

| Parameter | Description |
| --- | --- |
| alibabacloud.com/gpu-model-series | GPU card type. Supported values: L20 (GN8IS instance) and G49E. |
| nvidia.com/gpu: "1" | Requests one GPU for the container. |
| resources.limits/requests | Sets CPU to 16 cores and memory to 64 GiB. |
| /dev/shm emptyDir (sizeLimit: 500G) | Shared memory volume mounted at /dev/shm. Required for large model inference. |
| mountPath: /mnt | The path inside the pod where the NAS volume is mounted. ComfyUI and model files are accessed from this path. |
| persistentVolumeClaim.claimName | Name of your PVC. Replace wanx-nas with your actual PVC name. |

In the dialog that appears, click View to open the workload details page. Click the Logs tab and check the log output to confirm that the service started successfully.
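As a command-line alternative to the console steps, the same deployment can be sketched with kubectl. This is an illustration under assumptions, not the documented procedure: it assumes kubectl is installed and configured for your ACS cluster and that you saved the manifest to a local file. render_manifest and wait_for_comfyui are hypothetical helper names. The resource names and the default namespace are taken from the manifest.

```shell
# render_manifest (hypothetical helper): print the manifest with the example
# PVC name wanx-nas replaced by your own PVC name.
# $1 = path to the saved manifest, $2 = your PVC name.
render_manifest() {
  local manifest="$1" pvc="$2"
  sed "s/claimName: wanx-nas/claimName: ${pvc}/" "$manifest"
}

# wait_for_comfyui (hypothetical helper): block until the Deployment has
# rolled out, then print the last log lines of one of its pods.
# $1 = namespace (defaults to "default", as in the manifest).
wait_for_comfyui() {
  local ns="${1:-default}"
  kubectl rollout status deployment/wanx-deployment-test -n "$ns" --timeout=15m &&
    kubectl logs deployment/wanx-deployment-test -n "$ns" --tail=20
}
```

With the manifest saved as, say, wanx.yaml (an assumed file name), render_manifest wanx.yaml my-pvc | kubectl apply -f - creates the resources, and wait_for_comfyui then waits for the pod and shows its most recent log lines. The 15-minute timeout is an arbitrary choice; adjust it to your image pull and startup times.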

Step 3: Access the ComfyUI interface

On the workload details page, click the Access Method tab to get the external endpoint of the service, such as 8.xxx.xxx.114:7860.

Open http://8.xxx.xxx.114:7860/ in a browser. The first time you access the URL, it may take about 5 minutes to load.
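Since the first load can take several minutes, you may prefer to poll the endpoint from a terminal instead of refreshing the browser. The sketch below assumes kubectl and curl are available and that the Service was created in the default namespace. service_endpoint and wait_for_http are hypothetical helper names, and the default of 30 attempts at 10-second intervals is simply chosen to cover roughly 5 minutes.

```shell
# service_endpoint (hypothetical helper): print the external IP that the
# load balancer assigned to the wanx-test Service.
service_endpoint() {
  kubectl get service wanx-test -n "${1:-default}" \
    -o 'jsonpath={.status.loadBalancer.ingress[0].ip}'
}

# wait_for_http (hypothetical helper): retry an HTTP GET until it succeeds.
# $1 = URL, $2 = maximum attempts (default 30), $3 = delay in seconds (default 10).
wait_for_http() {
  local url="$1" attempts="${2:-30}" delay="${3:-10}" i=1
  while [ "$i" -le "$attempts" ]; do
    # --fail makes curl exit non-zero on HTTP error responses (4xx/5xx).
    if curl --silent --fail --output /dev/null --max-time 5 "$url"; then
      echo "ready after $i attempt(s)"
      return 0
    fi
    sleep "$delay"
    i=$((i + 1))
  done
  echo "not ready after $attempts attempt(s)" >&2
  return 1
}
```

For example, wait_for_http "http://$(service_endpoint):7860/" returns once the ComfyUI page responds, or fails after the last attempt.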

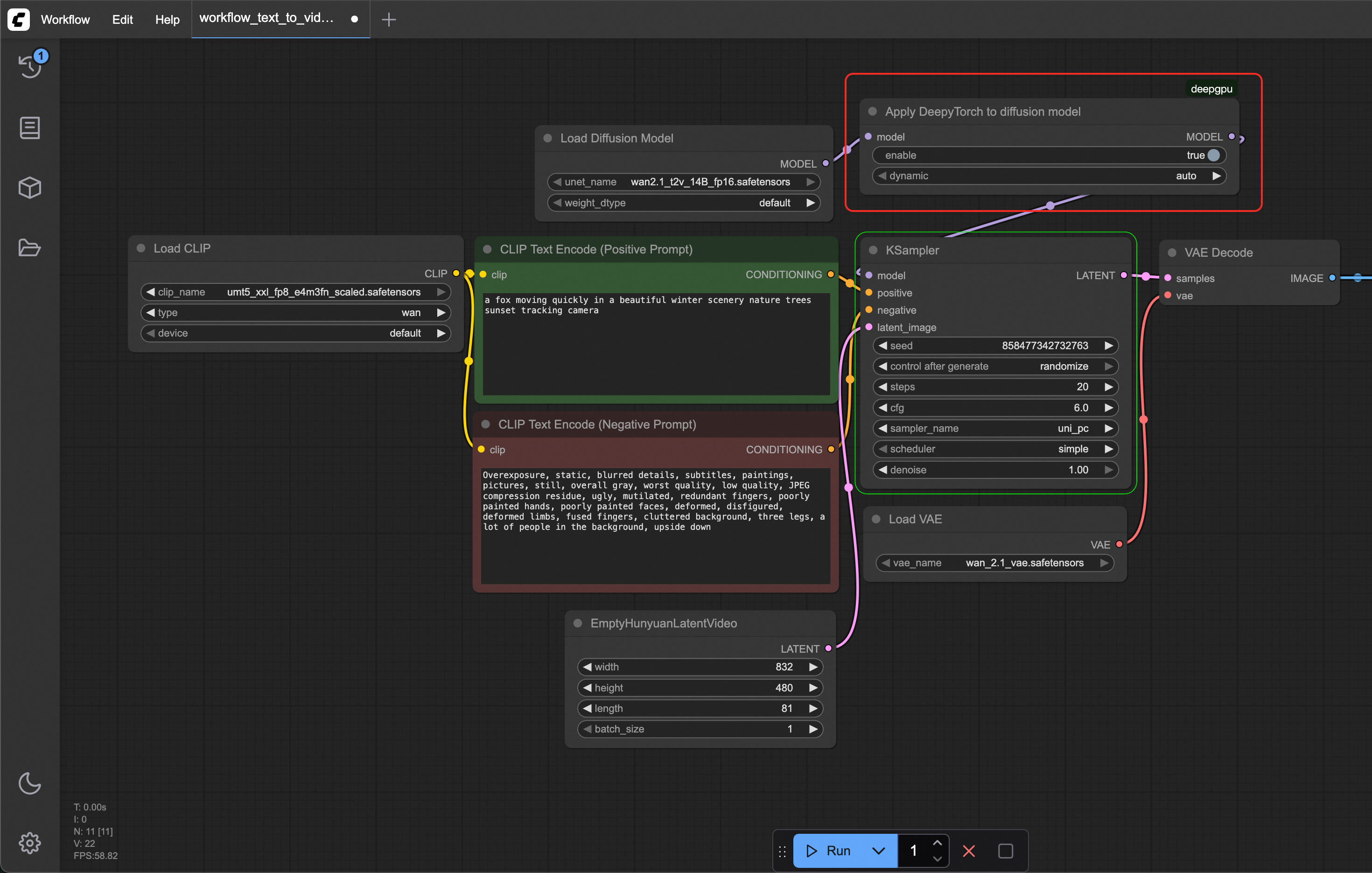

In the ComfyUI interface, right-click anywhere and click Add Node to browse the available DeepGPU nodes from the plugin. The ApplyDeepyTorch node optimizes diffusion model inference by applying GPU-level acceleration. Insert it after the last model-loading node in your workflow. The node looks like this:

Step 4: Run the accelerated workflow

Download one or both of the following pre-built Wan2.1 workflows to your local machine:

Image-to-video workflow:

https://aiacc-inference-public-v2.oss-cn-hangzhou.aliyuncs.com/deepgpu/comfyui/wan/workflows/workflow_image_to_video_wan_1.3b_deepytorch.json

Text-to-video workflow:

https://aiacc-inference-public-v2.oss-cn-hangzhou.aliyuncs.com/deepgpu/comfyui/wan/workflows/workflow_text_to_video_wan_deepytorch.json

The following steps use the accelerated text-to-video workflow as an example.

In ComfyUI, choose Workflow > Open and select the downloaded workflow_text_to_video_wan_deepytorch.json file.

Find the Apply DeepyTorch to diffusion model node. Set its enable parameter to true.

The DeepyTorch-accelerated workflow inserts an ApplyDeepyTorch node after the Load Diffusion Model node.

Click Run and wait for the video to generate.

Click the Queue button on the left to view the generation time and preview the output.

The first run takes longer than subsequent runs as the model warms up. Run the workflow two or three more times to see stable performance.

(Optional) To compare generation time without acceleration, restart the ComfyUI service and run the non-accelerated workflow:

https://aiacc-inference-public-v2.oss-cn-hangzhou.aliyuncs.com/deepgpu/comfyui/wan/workflows/workflow_text_to_video_wan.json
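To make the comparison concrete, you can average the warm runs of each workflow and divide one average by the other. The sketch below is only arithmetic on numbers you supply, namely the generation times in seconds that you read from the Queue panel. warm_average and speedup are hypothetical helper names.

```shell
# warm_average (hypothetical helper): mean of all arguments except the first,
# which is treated as the warm-up run and discarded.
warm_average() {
  printf '%s\n' "$@" |
    awk 'NR > 1 { sum += $1; n++ }
         END { if (n) printf "%.1f\n", sum / n; else print "n/a" }'
}

# speedup (hypothetical helper): baseline time divided by accelerated time.
# $1 = non-accelerated average, $2 = accelerated average (must be non-zero).
speedup() {
  awk -v base="$1" -v fast="$2" 'BEGIN { printf "%.2fx\n", base / fast }'
}
```

Pass the run times in the order you measured them, for example warm_average "$t1" "$t2" "$t3" "$t4", once for each workflow, then call speedup with the two averages. Discarding the first run follows the note above that the first run is slower while the model warms up.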