The ack-koordinator component includes the Koordinator Descheduler module, which detects nodes running above a safe load threshold and automatically evicts Pods to rebalance the cluster. Unlike the native Kubernetes LowNodeUtilization plugin, which acts on resource allocation rates, the LowNodeLoad plugin acts on actual resource utilization—giving you a more accurate picture of cluster health.

This topic describes how to enable load-aware hot spot descheduling and configure its advanced parameters.

Limits

- Only ACK managed Pro clusters are supported.

- The following component versions are required:

  | Component | Version |
  |---|---|
  | ACK Scheduler | v1.22.15-ack-4.0 or later, v1.24.6-ack-4.0 or later |
  | ack-koordinator | v1.1.1-ack.1 or later |
  | Helm | v3.0 or later |

The Koordinator Descheduler only evicts Pods; rescheduling is handled by the ACK Scheduler. Use descheduling together with load-aware scheduling so that the ACK Scheduler avoids placing evicted Pods back onto hot spot nodes.

During descheduling, old Pods are evicted before new Pods are created. Make sure your application has enough redundant replicas to prevent eviction from affecting availability.

Descheduling uses the standard Kubernetes eviction API. Make sure your application Pods are re-entrant so that restarts after eviction do not disrupt your service.

Billing

ack-koordinator is free to install and use. Additional charges may apply in these scenarios:

- Worker node resources: ack-koordinator is a self-managed component and consumes worker node resources after installation. Configure resource requests for each module when you install the component.

- Prometheus custom metrics: If you select Enable Prometheus Monitoring for ACK-Koordinator and use the Alibaba Cloud Prometheus service, the exposed metrics are counted as custom metrics and incur fees. Fees depend on cluster size and the number of applications. Review the billing documentation for Prometheus instances before you enable this feature, and query usage data to monitor and manage your resource consumption.

How it works

Execution cycle

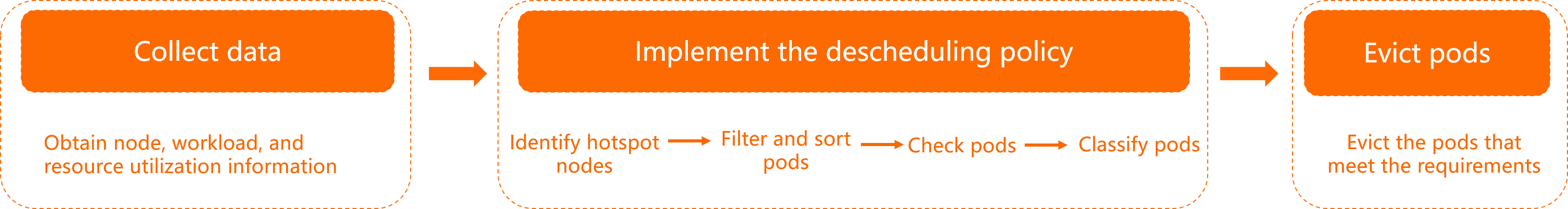

The Koordinator Descheduler runs periodically. Each execution cycle has three stages:

- Data collection: Fetches node and workload information along with their resource utilization data.

- Plugin execution (using LowNodeLoad as the example):

  - Identifies hot spot nodes based on highThresholds and lowThresholds.

  - Traverses all hot spot nodes, scores the eligible Pods, and sorts them for eviction. See Pod scoring policy.

  - Checks each candidate Pod against migration constraints: cluster capacity, resource utilization, and replica ratio limits. See Load-aware hot spot descheduling policies.

  - Marks Pods that pass all checks as migration candidates and skips those that do not.

- Pod eviction and migration: Evicts the migration candidates using the eviction API.
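The scoring-and-sorting step can be pictured as a multi-key sort. The following is a minimal sketch, not the Koordinator implementation: the `PodInfo` type, the `QOS_RANK` table, and the tie-break directions (higher CPU usage first, then more recently started first) are assumptions made for illustration. The source only states that lower Priority and lower QoS classes are evicted first and that usage and startup time break ties.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical ranking of Koordinator QoS classes; a lower rank is evicted earlier.
QOS_RANK = {"BE": 0, "LS": 1, "LSR": 2, "LSE": 3}


@dataclass
class PodInfo:
    name: str
    priority: int      # Pod priority value; 0 is the default and the lowest
    qos: str           # QoS class label, e.g. "LS" or "BE"
    cpu_usage: float   # measured CPU usage in cores
    start_time: float  # start timestamp in seconds


def eviction_order(pods: List[PodInfo]) -> List[PodInfo]:
    """Sort Pods so that the first element is the first eviction candidate."""
    return sorted(
        pods,
        key=lambda p: (
            p.priority,          # lower priority first
            QOS_RANK[p.qos],     # lower QoS class first
            -p.cpu_usage,        # assumption: heavier consumers first
            -p.start_time,       # assumption: more recently started first
        ),
    )


pods = [
    PodInfo("web", 1000, "LS", 1.5, 100),
    PodInfo("batch-a", 0, "BE", 0.5, 200),
    PodInfo("batch-b", 0, "BE", 2.0, 150),
    PodInfo("svc", 0, "LS", 3.0, 50),
]
ordered_names = [p.name for p in eviction_order(pods)]
print(ordered_names)
```

To force a specific eviction order in a real cluster, assign different Priority or QoS classes to the Pods rather than relying on the tie-breakers.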

Node classification

The LowNodeLoad plugin classifies nodes into three categories based on two thresholds: lowThresholds (idle threshold) and highThresholds (hot spot threshold).

Using lowThresholds = 45% and highThresholds = 70% as an example:

- Idle node: Resource utilization is below lowThresholds (< 45%).

- Normal node: Resource utilization is between lowThresholds and highThresholds (45%–70%). This is the target range.

- Hot spot node: Resource utilization exceeds highThresholds (> 70%). Pods are evicted until the node load drops to 70% or below.

Resource utilization data is updated every minute and reflects the 5-minute average.

If all nodes in the cluster are above lowThresholds, the overall cluster load is considered high. In this state, the Koordinator Descheduler suspends descheduling even if some nodes exceed highThresholds.
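The classification rules can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the plugin's code: `NodeUsage`, `classify`, and `eviction_sources` are hypothetical names, values exactly on a threshold are treated as normal, and "all nodes above lowThresholds" is interpreted as "no node qualifies as idle". The sample thresholds and utilization figures are taken from the configuration and `kubectl top node` output used later in this topic.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class NodeUsage:
    name: str
    usage: Dict[str, float]  # utilization in percent, keyed by resource name


def classify(node: NodeUsage, low: Dict[str, float], high: Dict[str, float]) -> str:
    """Return "hotspot", "idle", or "normal" for one node."""
    # Hot spot: usage of ANY resource exceeds its high threshold.
    if any(node.usage[res] > limit for res, limit in high.items()):
        return "hotspot"
    # Idle: usage of ALL resources is below its low threshold.
    if all(node.usage[res] < limit for res, limit in low.items()):
        return "idle"
    return "normal"


def eviction_sources(
    nodes: List[NodeUsage], low: Dict[str, float], high: Dict[str, float]
) -> Tuple[Dict[str, str], List[str]]:
    """Classify every node and list the hot spots to drain.

    If no node is idle, the cluster as a whole is considered busy and
    descheduling is suspended, so the list of sources is empty.
    """
    labels = {n.name: classify(n, low, high) for n in nodes}
    if "idle" not in labels.values():
        return labels, []
    return labels, [name for name, label in labels.items() if label == "hotspot"]


LOW = {"cpu": 20, "memory": 30}
HIGH = {"cpu": 50, "memory": 60}
nodes = [
    NodeUsage("cn-beijing.10.XX.XX.215", {"cpu": 17, "memory": 6}),
    NodeUsage("cn-beijing.10.XX.XX.53", {"cpu": 63, "memory": 3}),
]
labels, sources = eviction_sources(nodes, LOW, HIGH)
print(labels)
print(sources)
```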

Load-aware hot spot descheduling policies

| Policy | Description |
|---|---|
| Hot spot check retry | A node is identified as a hot spot only after it consecutively exceeds highThresholds for a configured number of execution cycles (default: 5). This prevents false positives from momentary monitoring spikes. |
| Node sorting | Among identified hot spot nodes, descheduling starts with the node with the highest resource usage. CPU and memory usage are compared in order. |
| Pod scoring | For each hot spot node, Pods are scored and sorted before eviction: (1) lower Priority class first (default is 0, the lowest); (2) lower QoS class; (3) for equal priority and QoS, Pods are ranked by resource utilization and startup time. If you need a specific eviction order, configure different Priority or QoS classes for your Pods. |
| Filter | Scopes descheduling to specific namespaces, Pods, or nodes using label selectors. See evictableNamespaces, podSelectors, and nodeSelector in LowNodeLoad plugin configuration. |
| Pre-check | Before evicting a Pod, the descheduler checks that: (1) matching nodes exist in the cluster after accounting for Node Affinity, Node Selector, Tolerations, and available resources; (2) the idle node's capacity is sufficient without pushing it above highThresholds. Available capacity is calculated as: (highThresholds − current load) × total capacity. For example, if an idle node has 20% load, highThresholds is 70%, and the node has 96 vCores, the available capacity is (70% − 20%) × 96 = 48 vCores. |
| Migration throttling | Limits concurrent Pod migrations per node, namespace, and workload. A time window prevents Pods in the same workload from being migrated too frequently. The Koordinator Descheduler is compatible with the Kubernetes Pod Disruption Budget (PDB) mechanism for fine-grained availability controls. |
| Observability | Migration events are emitted for each Pod, showing the reason and status. Run kubectl get event \| grep <pod-name> to view migration details. |
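The capacity formula from the Pre-check policy can be worked through in code. The sketch below reproduces the 96-vCore example; the function names and the `pod_demand` parameter are hypothetical, and whether the real check compares Pod requests or measured usage is not stated in this topic.

```python
def available_capacity(high_threshold_pct: float, current_load_pct: float,
                       total_capacity: float) -> float:
    """Capacity a node can absorb before it would cross highThresholds."""
    headroom_pct = max(0.0, high_threshold_pct - current_load_pct)
    return headroom_pct / 100.0 * total_capacity


def can_absorb(pod_demand: float, high_threshold_pct: float,
               current_load_pct: float, total_capacity: float) -> bool:
    """True if placing the Pod keeps the target node at or below highThresholds."""
    return pod_demand <= available_capacity(
        high_threshold_pct, current_load_pct, total_capacity
    )


# Idle node at 20% load, highThresholds of 70%, 96 vCores in total.
headroom = available_capacity(70, 20, 96)
print(headroom)  # 48.0
```

A node that is already at or above highThresholds has zero headroom in this sketch, so no Pod would be moved onto it.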

Prerequisites

Before you begin, make sure you have:

- ACK Scheduler v1.22.15-ack-4.0 or later, ack-koordinator v1.1.1-ack.1 or later, and Helm v3.0 or later

Step 1: Enable descheduling in ack-koordinator

- New installation: Install ack-koordinator and select Enable Descheduling For Ack-koordinator on the component configuration page. See Install the ack-koordinator component.

- Existing installation: On the component configuration page, select Enable Descheduling For Ack-koordinator. See Modify the ack-koordinator component.

Step 2: Enable the LowNodeLoad plugin

- Create a koord-descheduler-config.yaml file with the following content. This ConfigMap enables the LowNodeLoad descheduling plugin.

  ```yaml
  # koord-descheduler-config.yaml
  apiVersion: v1
  kind: ConfigMap
  metadata:
    name: koord-descheduler-config
    namespace: kube-system
  data:
    koord-descheduler-config: |
      # System configuration for koord-descheduler. Do not modify this section.
      apiVersion: descheduler/v1alpha2
      kind: DeschedulerConfiguration
      leaderElection:
        resourceLock: leases
        resourceName: koord-descheduler
        resourceNamespace: kube-system
      # Execution interval. The descheduler runs every 120s.
      # Must not exceed detectorCacheTimeout (default: 5m).
      deschedulingInterval: 120s
      dryRun: false # Set to true to run in read-only mode (no evictions).
      # End of system configuration.

      profiles:
      - name: koord-descheduler
        plugins:
          balance:
            enabled:
              - name: LowNodeLoad # Enable the LowNodeLoad plugin for hot spot descheduling.
          evict:
            enabled:
              - name: MigrationController # Enable the eviction and migration controller.

        pluginConfig:
        - name: MigrationController
          args:
            apiVersion: descheduler/v1alpha2
            kind: MigrationControllerArgs
            defaultJobMode: EvictDirectly

        - name: LowNodeLoad
          args:
            apiVersion: descheduler/v1alpha2
            kind: LowNodeLoadArgs
            # A node is idle if usage of ALL resources is below lowThresholds.
            lowThresholds:
              cpu: 20 # 20% CPU utilization
              memory: 30 # 30% memory utilization
            # A node is a hot spot if usage of ANY resource exceeds highThresholds.
            highThresholds:
              cpu: 50 # 50% CPU utilization
              memory: 60 # 60% memory utilization
            # Scopes descheduling to specific namespaces.
            # include and exclude are mutually exclusive; configure only one.
            evictableNamespaces:
              include:
                - default
              # exclude:
              #   - "kube-system"
              #   - "koordinator-system"
  ```

- Apply the ConfigMap to the cluster.

  ```bash
  kubectl apply -f koord-descheduler-config.yaml
  ```

- Restart the Koordinator Descheduler to load the new configuration.

  ```bash
  kubectl -n kube-system scale deploy ack-koord-descheduler --replicas 0
  kubectl -n kube-system scale deploy ack-koord-descheduler --replicas 1
  ```

Step 3 (optional): Enable load-aware scheduling

For optimal load balancing, enable load-aware scheduling so the ACK Scheduler avoids placing Pods on hot spot nodes after eviction. See Step 1: Enable load-aware scheduling.

Set loadAwareThreshold in the load-aware scheduling policy to the same value as highThresholds. If the values differ, evicted Pods may be rescheduled back onto hot spot nodes—especially when only a few nodes are available with similar utilization levels.

Step 4: Verify descheduling

This example uses a three-node cluster where each node has 104 cores and 396 GiB of memory.

- Create a stress-demo.yaml file with the following content.

  ```yaml
  apiVersion: apps/v1
  kind: Deployment
  metadata:
    name: stress-demo
    namespace: default
    labels:
      app: stress-demo
  spec:
    replicas: 6
    selector:
      matchLabels:
        app: stress-demo
    template:
      metadata:
        name: stress-demo
        labels:
          app: stress-demo
      spec:
        containers:
          - args:
              - '--vm'
              - '2'
              - '--vm-bytes'
              - '1600M'
              - '-c'
              - '2'
              - '--vm-hang'
              - '2'
            command:
              - stress
            image: polinux/stress
            imagePullPolicy: Always
            name: stress
            resources:
              limits:
                cpu: '2'
                memory: 4Gi
              requests:
                cpu: '2'
                memory: 4Gi
        restartPolicy: Always
  ```

- Deploy the stress-testing workload.

  ```bash
  kubectl create -f stress-demo.yaml
  ```

  Expected output:

  ```
  deployment.apps/stress-demo created
  ```

- Verify the Pods are running and note which node they are scheduled to.

  ```bash
  kubectl get pod -o wide
  ```

  Expected output:

  ```
  NAME                           READY   STATUS    RESTARTS   AGE   IP            NODE                     NOMINATED NODE   READINESS GATES
  stress-demo-588f9646cf-s****   1/1     Running   0          82s   10.XX.XX.53   cn-beijing.10.XX.XX.53   <none>           <none>
  ```

- Increase the load on cn-beijing.10.XX.XX.53 and check node utilization.

  ```bash
  kubectl top node
  ```

  Expected output:

  ```
  NAME                      CPU(cores)   CPU%   MEMORY(bytes)   MEMORY%
  cn-beijing.10.XX.XX.215   17611m       17%    24358Mi         6%
  cn-beijing.10.XX.XX.53    63472m       63%    11969Mi         3%
  ```

  Node cn-beijing.10.XX.XX.53 is at 63% CPU, which exceeds the configured hot spot threshold of 50%. Node cn-beijing.10.XX.XX.215 is at 17% CPU, which is below the idle threshold of 20%.

- Enable the LowNodeLoad plugin as described in Step 2: Enable the LowNodeLoad plugin.

- Watch for Pod changes.

  By default, a node must exceed highThresholds for five consecutive checks before it is classified as a hot spot. With the default 120-second interval, this takes approximately 10 minutes.

  ```bash
  kubectl get pod -o wide -w
  ```

  Expected output:

  ```
  NAME                           READY   STATUS              RESTARTS   AGE   IP             NODE                      NOMINATED NODE   READINESS GATES
  stress-demo-588f9646cf-s****   1/1     Terminating         0          59s   10.XX.XX.53    cn-beijing.10.XX.XX.53    <none>           <none>
  stress-demo-588f9646cf-7****   1/1     ContainerCreating   0          10s   10.XX.XX.215   cn-beijing.10.XX.XX.215   <none>           <none>
  ```

- Check the eviction event.

  ```bash
  kubectl get event | grep stress-demo-588f9646cf-s****
  ```

  Expected output:

  ```
  2m14s   Normal   Evicting        podmigrationjob/00fe88bd-****      Pod "default/stress-demo-588f9646cf-s****" evicted from node "cn-beijing.10.XX.XX.53" by the reason "node is overutilized, cpu usage(68.53%)>threshold(50.00%)"
  101s    Normal   EvictComplete   podmigrationjob/00fe88bd-****      Pod "default/stress-demo-588f9646cf-s****" has been evicted
  2m14s   Normal   Descheduled     pod/stress-demo-588f9646cf-s****   Pod evicted from node "cn-beijing.10.XX.XX.53" by the reason "node is overutilized, cpu usage(68.53%)>threshold(50.00%)"
  2m14s   Normal   Killing         pod/stress-demo-588f9646cf-s****   Stopping container stress
  ```

  The Pod on the hot spot node has been evicted and migrated to cn-beijing.10.XX.XX.215.

Advanced configuration

All Koordinator Descheduler parameters are set in the ConfigMap created in Step 2. The following shows the full advanced configuration for load-aware hot spot descheduling.

```yaml
# koord-descheduler-config.yaml
apiVersion: v1
kind: ConfigMap
metadata:
  name: koord-descheduler-config
  namespace: kube-system
data:
  koord-descheduler-config: |
    # System configuration. Do not modify this section.
    apiVersion: descheduler/v1alpha2
    kind: DeschedulerConfiguration
    leaderElection:
      resourceLock: leases
      resourceName: koord-descheduler
      resourceNamespace: kube-system
    deschedulingInterval: 120s # Execution interval. Must not exceed detectorCacheTimeout.
    dryRun: false # Set to true for read-only mode (no evictions).
    # End of system configuration.

    profiles:
    - name: koord-descheduler
      plugins:
        deschedule:
          disabled:
            - name: "*" # All plugins disabled by default (shown for reference only).
        balance:
          enabled:
            - name: LowNodeLoad
        evict:
          disabled:
            - name: "*" # All plugins disabled by default (shown for reference only).
          enabled:
            - name: MigrationController

      pluginConfig:
      - name: MigrationController
        args:
          apiVersion: descheduler/v1alpha2
          kind: MigrationControllerArgs
          defaultJobMode: EvictDirectly
          maxMigratingPerNode: 1 # Max Pods migrating simultaneously per node. 0 = unlimited.
          maxMigratingPerNamespace: 1 # Max Pods migrating simultaneously per namespace.
          maxMigratingPerWorkload: 1 # Max Pods migrating simultaneously per workload (e.g., Deployment).
          maxUnavailablePerWorkload: 2 # Max unavailable replicas per workload during migration.
          evictLocalStoragePods: false # Whether to evict Pods using HostPath or emptyDir volumes.
          objectLimiters:
            workload: # At most 1 replica migrated per workload within 5 minutes.
              duration: 5m
              maxMigrating: 1

      - name: LowNodeLoad
        args:
          apiVersion: descheduler/v1alpha2
          kind: LowNodeLoadArgs
          lowThresholds:
            cpu: 20
            memory: 30
          highThresholds:
            cpu: 50
            memory: 60
          anomalyCondition:
            # Number of consecutive checks above highThresholds
            # before a node is classified as a hot spot.
            # Counter resets after eviction.
            consecutiveAbnormalities: 5
          # Cache duration for hot spot checks.
          # Must be >= deschedulingInterval.
          detectorCacheTimeout: "5m"
          evictableNamespaces:
            include:
              - default
            # exclude:
            #   - "kube-system"
            #   - "koordinator-system"
          nodeSelector: # Process only the specified nodes.
            matchLabels:
              alibabacloud.com/nodepool-id: np77f520e1108f47559e63809713ce****
          podSelectors: # Process only the specified Pods.
          - name: lsPods
            selector:
              matchLabels:
                koordinator.sh/qosClass: "LS"
```

Koordinator Descheduler system configuration

| Parameter | Type | Value | Description | Example |
|---|---|---|---|---|
| dryRun | boolean | true / false (default) | Read-only mode switch. When enabled, no Pod migration is initiated. | false |
| deschedulingInterval | time.Duration | > 0s | Execution interval. Must not be greater than detectorCacheTimeout in the LowNodeLoad plugin. | 120s |

Eviction and migration control configuration

| Parameter | Type | Value | Description | Example |
|---|---|---|---|---|
| maxMigratingPerNode | int64 | ≥ 0 (default: 2) | Max Pods in a migrating state per node. 0 means no limit. | 2 |
| maxMigratingPerNamespace | int64 | ≥ 0 (default: unlimited) | Max Pods in a migrating state per namespace. 0 means no limit. | 1 |
| maxMigratingPerWorkload | intOrString | ≥ 0 (default: 10%) | Max Pods or percentage of Pods in a migrating state per workload (e.g., Deployment). 0 means no limit. Workloads with a single replica are not descheduled. | 1 or 10% |
| maxUnavailablePerWorkload | intOrString | ≥ 0 (default: 10%), less than total replicas | Max unavailable replicas or percentage per workload. 0 means no limit. | 1 or 10% |
| evictLocalStoragePods | boolean | true / false (default) | Whether to evict Pods configured with HostPath or emptyDir volumes. Disabled by default for data safety. | false |
| objectLimiters.workload | struct | Duration > 0s (default: 5m); MaxMigrating ≥ 0 (default: 10%) | Migration throttling at the workload level. Duration sets the time window; MaxMigrating sets the max replicas migrated within that window. MaxMigrating defaults to the value of maxMigratingPerWorkload. | duration: 5m / maxMigrating: 1 (at most 1 replica migrated per workload within 5 minutes) |
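The effect of objectLimiters.workload can be illustrated with a sliding-window counter. This is a stand-in technique chosen for clarity, not a description of how the MigrationController enforces the limit internally; the `WorkloadLimiter` class and its `allow` method are hypothetical.

```python
from collections import defaultdict, deque
from typing import Deque, Dict


class WorkloadLimiter:
    """Allow at most max_migrating migrations per workload within duration_s seconds."""

    def __init__(self, duration_s: float, max_migrating: int) -> None:
        self.duration_s = duration_s
        self.max_migrating = max_migrating
        self._events: Dict[str, Deque[float]] = defaultdict(deque)

    def allow(self, workload: str, now: float) -> bool:
        """Record and permit a migration at time `now` if the window has room."""
        window = self._events[workload]
        # Drop migrations that have aged out of the time window.
        while window and now - window[0] >= self.duration_s:
            window.popleft()
        if len(window) >= self.max_migrating:
            return False
        window.append(now)
        return True


# duration: 5m, maxMigrating: 1
limiter = WorkloadLimiter(duration_s=300, max_migrating=1)
first = limiter.allow("default/stress-demo", now=0)      # window is empty
second = limiter.allow("default/stress-demo", now=100)   # still inside the 5-minute window
third = limiter.allow("default/stress-demo", now=300)    # the first migration has aged out
print(first, second, third)
```

Each workload has its own window, so throttling one Deployment does not delay migrations for another.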

LowNodeLoad plugin configuration

| Parameter | Type | Value | Description | Example |
|---|---|---|---|---|
| highThresholds | map[string]float64 | [0, 100] (CPU and memory, as percentages) | Hot spot threshold. Pods on nodes exceeding this are eligible for eviction. If all nodes are above lowThresholds, the Koordinator Descheduler suspends descheduling even if some nodes exceed this threshold. | cpu: 55 / memory: 75 |
| lowThresholds | map[string]float64 | [0, 100] (CPU and memory, as percentages) | Idle threshold. If all nodes exceed this, the overall cluster load is considered high and descheduling is suspended. | cpu: 25 / memory: 25 |
| anomalyCondition.consecutiveAbnormalities | int64 | > 0 (default: 5) | Number of consecutive execution cycles a node must exceed highThresholds before it is classified as a hot spot. The counter resets after eviction. | 5 |
| detectorCacheTimeout | \*metav1.Duration | See Duration format (default: 5m) | Cache duration for hot spot checks. Must be ≥ deschedulingInterval. | 1h, 300s, 2m30s |
| evictableNamespaces | include: string / exclude: string | Namespaces in the cluster | Scopes descheduling to specific namespaces. Leave blank to process all Pods. include and exclude are mutually exclusive. | exclude: ["kube-system", "koordinator-system"] |
| nodeSelector | metav1.LabelSelector | See Labels and selectors | Scopes descheduling to specific nodes using a label selector. Supports single node pool (matchLabels) and multiple node pools (matchExpressions). | matchLabels: {alibabacloud.com/nodepool-id: np****} |
| podSelectors | list of PodSelector | See Labels and selectors | Scopes descheduling to specific Pods using label selectors. Multiple selector groups are supported. | matchLabels: {koordinator.sh/qosClass: "LS"} |
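Because deschedulingInterval must not exceed detectorCacheTimeout, it can help to check the two values before applying a ConfigMap. The sketch below is a hypothetical helper, not part of ack-koordinator: it parses only the h/m/s subset of the duration format shown in the Example column (such as 1h, 300s, and 2m30s) and compares the results.

```python
import re

_UNIT_SECONDS = {"h": 3600, "m": 60, "s": 1}
_TOKEN = re.compile(r"(\d+)([hms])")


def parse_duration(text: str) -> int:
    """Convert strings such as "120s", "5m", or "2m30s" to seconds.

    Only whole-number h/m/s components are supported in this sketch.
    """
    total = 0
    pos = 0
    for match in _TOKEN.finditer(text):
        if match.start() != pos:  # unexpected characters between components
            raise ValueError(f"invalid duration: {text!r}")
        total += int(match.group(1)) * _UNIT_SECONDS[match.group(2)]
        pos = match.end()
    if pos == 0 or pos != len(text):  # empty input or trailing garbage
        raise ValueError(f"invalid duration: {text!r}")
    return total


def interval_is_valid(descheduling_interval: str, detector_cache_timeout: str) -> bool:
    """True if 0 < deschedulingInterval <= detectorCacheTimeout."""
    interval = parse_duration(descheduling_interval)
    return 0 < interval <= parse_duration(detector_cache_timeout)


print(interval_is_valid("120s", "5m"))  # the default values
print(interval_is_valid("10m", "5m"))   # interval longer than the cache timeout
```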

FAQ

Node utilization exceeds the threshold but Pods are not evicted

The most common cause is the descheduler configuration not being in effect. Check these items in order:

- Scope not configured: The descheduler only processes namespaces and nodes that are explicitly included (or not excluded). Check evictableNamespaces and nodeSelector to confirm the target namespace and node are in scope.

- Descheduler not restarted after config change: Configuration changes take effect only after a restart. See Step 2: Enable the LowNodeLoad plugin for restart instructions.

- Interval longer than cache timeout: deschedulingInterval (default: 2 minutes) must not exceed detectorCacheTimeout (default: 5 minutes). If it does, hot spot detection becomes invalid. Adjust the values and restart the descheduler.

- Node not consistently above threshold: The descheduler calculates a smoothed average over multiple cycles. A node is classified as a hot spot only after exceeding highThresholds for consecutiveAbnormalities consecutive cycles (default: 5 cycles, about 10 minutes). The value returned by kubectl top node reflects only the last minute. Monitor the node over a longer period to confirm sustained high utilization.

- Insufficient cluster capacity: Before evicting a Pod, the descheduler checks that at least one other node has enough free capacity to absorb the Pod. If no node does, the eviction is skipped. Add nodes to increase overall cluster capacity.

- Single-replica workload: Single-replica Pods are not evicted by default to protect availability. To override this behavior, add the annotation descheduler.alpha.kubernetes.io/evict: "true" to the Pod or the workload's spec.template.metadata. This annotation is not supported in ack-koordinator v1.3.0-ack1.6, v1.3.0-ack1.7, or v1.3.0-ack1.8. Upgrade to the latest version to use this feature.

- Pod uses HostPath or emptyDir: These Pods are excluded from descheduling by default. Set evictLocalStoragePods: true in the MigrationController configuration to allow their eviction. See Eviction and migration control configuration.

- Too many unavailable or migrating replicas: If the number of unavailable or migrating replicas in a workload has already reached maxUnavailablePerWorkload or maxMigratingPerWorkload, no further evictions are initiated for that workload. Wait for in-progress evictions to complete, or increase these limits.

- Replica count ≤ migration limit: If the total number of replicas is less than or equal to maxMigratingPerWorkload or maxUnavailablePerWorkload, the descheduler skips the workload. Decrease these values or switch them to percentages.

The descheduler frequently restarts

An invalid or missing ConfigMap causes the descheduler to restart in a loop. Check the ConfigMap content and format. See Advanced configuration for the correct structure. After fixing the ConfigMap, restart the descheduler as described in Step 2: Enable the LowNodeLoad plugin.

How load-aware scheduling and hot spot descheduling work together

Enable both features to achieve optimal load balancing. Hot spot descheduling evicts Pods from overloaded nodes; load-aware scheduling directs the ACK Scheduler to place the rescheduled Pods on lower-utilization nodes instead of back onto hot spot nodes.

Set the loadAwareThreshold parameter in the load-aware scheduling policy to the same value as highThresholds. See Use load-aware scheduling and Scheduling policies for details.

What utilization data does the descheduler use?

The descheduler monitors resource usage over multiple cycles and calculates a smoothed average. A node triggers eviction only when its average usage stays above highThresholds for the configured number of consecutive cycles (default: 5 cycles, which is about 10 minutes at the default 120-second interval).

For memory, the descheduler excludes page cache from its calculations because the OS can reclaim it on demand. The value shown by kubectl top node includes page cache. Use Managed Service for Prometheus to view the actual memory utilization metric used by the descheduler.
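The consecutive-cycle rule can be sketched as a per-node streak counter. This is an illustration only: `HotspotDetector` is a hypothetical class, and resetting the streak when a node drops back below the threshold is inferred from the word "consecutive" rather than stated explicitly in this topic.

```python
from typing import Dict


class HotspotDetector:
    """Flag a node only after N consecutive cycles above highThresholds."""

    def __init__(self, consecutive_abnormalities: int = 5) -> None:
        self.required = consecutive_abnormalities
        self._streak: Dict[str, int] = {}

    def observe(self, node: str, over_threshold: bool) -> bool:
        """Record one execution cycle; return True once the node counts as a hot spot."""
        if not over_threshold:
            self._streak[node] = 0  # assumption: a dip breaks the streak
            return False
        self._streak[node] = self._streak.get(node, 0) + 1
        return self._streak[node] >= self.required

    def reset(self, node: str) -> None:
        """Clear the counter, as happens after an eviction."""
        self._streak[node] = 0


detector = HotspotDetector(consecutive_abnormalities=5)
results = [detector.observe("node-a", True) for _ in range(5)]
print(results)

# With the default 120-second interval, five cycles take 5 * 120 = 600 seconds.
detection_delay_s = 5 * 120
```

A short spike that lasts fewer than five cycles never reaches the required streak, which is why a single high reading from kubectl top node does not trigger eviction.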