This topic explains how to troubleshoot common issues with ossfs 1.0 volumes in Container Service for Kubernetes (ACK).

Mount

Slow OSS volume mounts

Symptom

Mounting an OSS volume takes longer than expected.

Cause

If both of the following conditions are met, kubelet performs a chmod or chown operation during volume mounting, which increases the mount time.

- AccessModes is set to ReadWriteOnce in the PV and PVC.

- securityContext.fsGroup is configured in the application template.

Solution

- The ossfs mount tool provides parameters that set the UID, GID, and mode of files within its mount point.

  | Parameter | Description |
  | --- | --- |
  | uid | Specifies the UID of the user who owns the subdirectories and files in the mount directory. |
  | gid | Specifies the GID of the user group that owns the subdirectories and files in the mount directory. |
  | umask | Sets the permission mask for files and folders in the mount target. The allow_other option, by contrast, allows all users on the system to access the mount point directory. It affects only the mount point itself, not the files within it, so file permissions must be managed separately (for example, with the umask option or chmod). allow_other takes no value and is specified simply as -o allow_other. By default, only the root user can use it. |

  Note

  - Version 1.91.*: The default permission for files is 0640, and the default permission for folders is 0750.

  - Version 1.80.*: The default permission for both files and folders is 0777.

  After you configure these parameters, remove the fsGroup parameter from securityContext.

- For Kubernetes clusters of version 1.20 and later, you can also set fsGroupChangePolicy to OnRootMismatch. This ensures that the chmod and chown operations are performed only on the first startup. Subsequent mount times will be normal. For more information about fsGroupChangePolicy, see Configure a Security Context for a Pod or Container.
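As a minimal sketch of the fsGroupChangePolicy option, the following pod manifest sets it in the pod-level securityContext. The pod name, image, fsGroup value, and PVC name are placeholders chosen for illustration and do not come from this topic.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: oss-fsgroup-demo            # hypothetical pod name
spec:
  securityContext:
    fsGroup: 1000                   # example GID; replace with your own
    # Change ownership and permissions only when the volume root
    # does not already match fsGroup.
    fsGroupChangePolicy: "OnRootMismatch"
  containers:
    - name: app
      image: busybox                # placeholder image
      command: ["sleep", "3600"]
      volumeMounts:
        - name: oss-volume
          mountPath: /data
  volumes:
    - name: oss-volume
      persistentVolumeClaim:
        claimName: pvc-oss          # hypothetical PVC bound to the OSS PV
```

If you use the uid, gid, and umask mount options instead, remove fsGroup from this block rather than combining the two approaches.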

OSS volume mount permission issues

In the following scenarios, you may encounter a Permission Denied or Operation not permitted error.

Scenario 1: Insufficient client OSS access permissions

Cause

The RAM user or RAM role for the OSS volume has insufficient permissions. For example, access is blocked by an OSS bucket policy, or the Resource field in the RAM policy does not include the full mount path.

Solution

- Check and correct the OSS bucket policy:

  Ensure that the bucket policy does not block access to the following API operations: ListObjects, GetObject, PutObject, DeleteObject, AbortMultipartUpload, and ListMultipartUploads. For more information, see Bucket Policy.

- Check and correct the RAM policy:

  Ensure that the Action field in the policy of the RAM user or RAM role used by the OSS volume includes the required permissions listed in the preceding step.

  To restrict permissions to a specific subdirectory of a bucket (for example, path/), you must grant permissions to both the directory itself (path/) and all objects within the directory (path/*).

  The following code provides an example:

      {
        "Statement": [
          {
            "Action": [
              "oss:Get*",
              "oss:List*"
            ],
            "Effect": "Allow",
            "Resource": [
              "acs:oss:*:*:mybucket/path",
              "acs:oss:*:*:mybucket/path/*"
            ]
          }
        ],
        "Version": "1"
      }

Scenario 2: Permission denied for the mount directory

Cause

By default, ossfs mounts the volume as root with 700 permissions. A container process running as a non-root user will lack sufficient permissions.

Solution

Use the allow_other option to change the permissions of the mount directory.

| Parameter | Description |
| --- | --- |
| allow_other | Sets the permissions of the mount directory to 777. |

Scenario 3: Permission denied for externally uploaded files

Cause

Files uploaded with other tools have default permissions of 640 in ossfs. If a container process runs as a non-root user, it does not have sufficient permissions to access these files.

Solution

Run the chmod command as the root user to modify the permissions of the target files. Alternatively, use the following configuration item to modify the permissions of subdirectories and files in the mount directory.

| Parameter | Description |
| --- | --- |
| umask | Sets the permission mask for files and folders in the mount target. The allow_other option affects only the mount point directory itself, not the files within it, so file permissions must be managed separately (for example, with the umask option or chmod). |

Note

The umask option modifies file permissions only for the current ossfs instance. The changes do not persist after the volume is remounted and are not visible to other ossfs processes. For example:

- If you configure -o umask=022 and use the stat command to view a file uploaded from the OSS console, the file permission is 755. If you remove the -o umask=022 option and remount the volume, the permission reverts to 640.

- If a container process running as the root user configures -o umask=133 and then you use chmod to set a file's permission to 777, the stat command still shows the file permission as 644. If you remove the -o umask=133 option and remount the volume, the permission changes to 777.

Scenario 4: Inter-container file permission conflicts

Cause

Regular files created in ossfs have default permissions of 644. Configuring the fsGroup field in securityContext or running chmod or chown on a file can change its permissions or owner. When a process in another container, running as a different user, tries to access the file, permission errors can occur.

Solution

Run the stat command to check the permissions of the target file. If the permissions are insufficient, run the chmod command as the root user to modify the permissions.

The solutions for the preceding three scenarios resolve the issue by increasing directory or file permissions for the current container process. You can also resolve the issue by changing the owner of the subdirectories and files in the ossfs mount directory.

If you specify a user when you build a container image, or if the securityContext.runAsUser and securityContext.runAsGroup fields in the application template are set during deployment, the application's container process runs as a non-root user.

Use the following options to change the owner UID and GID of files and subdirectories in the ossfs mount directory to match the container process's user.

| Parameter | Description |
| --- | --- |
| uid | Specifies the UID of the user who owns the subdirectories and files in the mount directory. |
| gid | Specifies the GID of the user group that owns the subdirectories and files in the mount directory. |

For example, if the process that the container uses to access OSS has the IDs uid=1000(biodocker), gid=1001(biodocker), and groups=1001(biodocker), you must configure -o uid=1000 and -o gid=1001.
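The pairing between the container user and the mount options can be sketched as follows. This assumes the options are passed through the otherOpts attribute, as in the PV examples later in this topic. The object names, image, bucket, and endpoint are placeholders, and authentication settings are omitted for brevity.

```yaml
# Pod side: the container process runs as UID 1000 and GID 1001.
apiVersion: v1
kind: Pod
metadata:
  name: oss-nonroot-demo            # hypothetical pod name
spec:
  securityContext:
    runAsUser: 1000
    runAsGroup: 1001
  containers:
    - name: app
      image: busybox                # placeholder image
      command: ["sleep", "3600"]
      volumeMounts:
        - name: oss-volume
          mountPath: /data
  volumes:
    - name: oss-volume
      persistentVolumeClaim:
        claimName: pvc-oss          # hypothetical PVC bound to the PV below
---
# PV side: ossfs reports files and subdirectories as owned by 1000:1001.
apiVersion: v1
kind: PersistentVolume
metadata:
  name: pv-oss
spec:
  capacity:
    storage: 5Gi
  accessModes:
    - ReadOnlyMany
  persistentVolumeReclaimPolicy: Retain
  csi:
    driver: ossplugin.csi.alibabacloud.com
    volumeHandle: pv-oss
    volumeAttributes:
      bucket: "bucket-name"         # placeholder bucket
      url: "oss-cn-hangzhou.aliyuncs.com"
      otherOpts: "-o uid=1000 -o gid=1001"
```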

Scenario 5: After an AccessKey is revoked, its updated value in a Secret does not take effect for an OSS mount that uses a PV with the nodePublishSecretRef field

Cause

An OSS volume is a FUSE file system mounted with the ossfs tool. After the volume is mounted, the AccessKey information cannot be updated. Applications with a mounted OSS volume continue sending requests to the OSS server with the original AccessKey.

Solution

After you update the Secret with the new AccessKey information, remount the volume. For non-containerized versions or containerized versions with exclusive mount mode enabled, restart the application pod to trigger an ossfs restart. For shared mount mode, see How do I restart the ossfs process in shared mount mode?

Scenario 6: "Operation not permitted" for hard links

Cause

OSS volumes do not support hard link operations. In earlier CSI versions, a hard link operation returned an Operation not permitted error.

Solution

Modify your application to avoid hard link operations on OSS volumes. If your application requires hard links, consider using a different storage type.

Scenario 7: Insufficient permissions for subpath or subpathExpr mounts

Cause

Non-root application containers lack permissions for files in the /path/subpath/in/oss/ directory, which defaults to 640. When you mount an OSS volume by using the subpath method, ossfs actually mounts the path directory defined in the PV (in this example, /path), not /path/subpath/in/oss/. The allow_other mount option takes effect only on the /path directory. The subdirectory /path/subpath/in/oss/ still has the default permission of 640.

Solution

Use the umask option to change the default permissions of subdirectories. For example, -o umask=000 changes the default permission to 777.
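A sketch of the PV side of this fix, assuming the /path example used in this scenario and that mount options are passed through the otherOpts attribute as in the other PV examples in this topic. The PV name, bucket, and endpoint are placeholders, and authentication settings are omitted.

```yaml
apiVersion: v1
kind: PersistentVolume
metadata:
  name: pv-oss                      # placeholder name
spec:
  capacity:
    storage: 5Gi
  accessModes:
    - ReadOnlyMany
  persistentVolumeReclaimPolicy: Retain
  csi:
    driver: ossplugin.csi.alibabacloud.com
    volumeHandle: pv-oss
    volumeAttributes:
      bucket: "bucket-name"         # placeholder bucket
      path: /path                   # ossfs mounts this directory, not the subPath
      url: "oss-cn-hangzhou.aliyuncs.com"
      # allow_other opens /path itself; umask=000 opens the
      # subdirectories and files below it, including the subPath target.
      otherOpts: "-o allow_other -o umask=000"
```

A umask of 000 is the most permissive setting. A narrower mask such as 022 may be enough if the container only needs read access.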

OSS volume mount failure and application Pod event: FailedMount

Symptom

An OSS volume fails to mount, the pod cannot start, and the event log shows a FailedMount error.

Cause

- Cause 1: Earlier versions of ossfs do not support mounting a directory that does not exist in the bucket.

  Important

  A subdirectory path that is visible in the OSS console might not actually exist on the server. The response from the ossutil tool or OSS API calls is the source of truth. For example, if you directly create the /a/b/c/ directory, /a/b/c/ is a standalone directory object, but the /a/ and /a/b/ directory objects do not actually exist. Similarly, if you upload files such as /a/*, /a/b and /a/c are individual file objects, but the /a/ directory object does not exist.

- Cause 2: The AccessKey or RRSA role information is incorrect, or the associated permissions are insufficient.

- Cause 3: The application's runtime environment is blocked by the OSS bucket policy.

- Cause 4: The event contains the following message:

      failed to get secret secrets "xxx" is forbidden: User "serverless-xxx" cannot get resource "secrets" in API group "" in the namespace "xxx"

  For applications created on virtual nodes (ACS pods), if the PV specifies authentication information by using the nodePublishSecretRef field, the Secret must be in the same namespace as the PVC.

- Cause 5: In CSI version 1.30.4 and later, the pod in which ossfs resides runs in the ack-csi-fuse namespace. During a mount, the CSI driver first starts the pod where ossfs resides and then sends an RPC request to start the ossfs process in the pod. If the event contains the message FailedMount /run/fuse.ossfs/xxxxxx/mounter.sock: connect: no such file or directory, the pod where ossfs resides failed to start or was unexpectedly deleted.

- Cause 6: The event contains the message Failed to find executable /usr/local/bin/ossfs: No such file or directory. This indicates that ossfs failed to be installed on the node.

- Cause 7: The event contains the message error while loading shared libraries: xxxxx: cannot open shared object file: No such file or directory. The mount fails because in the current CSI version, ossfs runs directly on the node, and the node's operating system lacks the dynamic libraries that ossfs requires. This error can occur in the following cases:

  - You installed an ossfs version on the node that is incompatible with the operating system.

  - An operating system upgrade on the node changed the default OpenSSL version. For example, upgrading from Alibaba Cloud Linux 2 to Alibaba Cloud Linux 3.

  - When ossfs runs on a node, it supports only CentOS, Alibaba Cloud Linux, ContainerOS, and Anolis OS.

  - On a node with a compatible operating system, you deleted the default dynamic libraries required by ossfs, such as FUSE, cURL, or xml2, or you changed the default OpenSSL version.

- Cause 8: When mounting an OSS subdirectory, the mount fails if the AccessKey or RRSA role only has permissions for that subdirectory. The ossfs pod logs contain both 403 AccessDenied and 404 NoSuchKey errors.

  When ossfs starts, it automatically checks the permissions and connectivity of the OSS bucket. When the mount target is an OSS subdirectory, ossfs versions earlier than 1.91.5 first attempt to access the bucket's root directory. If access fails, they then retry by accessing the subdirectory. With full read-only permissions on the bucket, newer versions of ossfs can mount a subdirectory that does not exist in the OSS bucket.

  Therefore, if the AccessKey or the role used by RRSA has permissions only on the subdirectory, a 403 AccessDenied error is reported during the initial validation. If the subdirectory also does not exist, a 404 NoSuchKey error is subsequently reported and the process exits, causing the mount to fail.

- Cause 9: The bucket is configured for mirroring-based back-to-origin, but the mount directory has not been synchronized from the origin site.

- Cause 10: The bucket is configured for static website hosting. When ossfs checks the mount directory on the OSS side, the request is forwarded to files such as index.html.

Solution

- Solution for Cause 1:

  Check whether the subpath exists on the OSS server.

  Suppose the mount path of the PV is sub/path/. You can use the ossutil stat command (View bucket and object information) to query an object whose objectname is sub/path/, or use the HeadObject OpenAPI to query an object whose key is sub/path/. If a 404 error is returned, the subdirectory does not exist on the server side.

  - You can use tools like ossutil, an SDK, or the OSS console to manually create the missing bucket or subdirectory, and then remount.

  - ossfs 1.91 and later do not require the mount directory to exist. Upgrading the ossfs version also resolves this issue. For more information, see New features and performance tests of ossfs 1.0. If the mount still fails after the upgrade, see Cause 8 of this issue.

- Solution for Cause 2:

  - Verify that the policy for the RAM user or RAM role used for mounting includes the permissions listed in "2. Create and authorize a RAM role".

  - Verify the file system permissions of the root mount path and the subpath. For more information, see Scenarios 2 and 7 in OSS volume mount permission issues.

  - For volumes mounted by using RAM user AccessKey authentication, verify whether the AccessKey used for mounting has been disabled or rotated. For more information, see Scenario 5 in OSS volume mount permission issues.

  - For volumes mounted by using RRSA authentication, verify that a correct trust policy is configured for the RAM role. For information about how to configure a trust policy, see "1. Enable RRSA for a cluster". By default, the trusted ServiceAccount is csi-fuse-ossfs in the ack-csi-fuse namespace, not the ServiceAccount used by the application.

    Important

    RRSA authentication is supported only for clusters of version 1.26 or later that use CSI components of version 1.30.4 or later. If you used the RRSA feature in versions prior to 1.30.4, see [Product Change] CSI ossfs version upgrade and mount process optimization to add the required RAM role authorization configuration.

- Solution for Cause 3:

  Check and correct the OSS bucket policy. For more information, see Scenario 1 in OSS volume mount permission issues.

- Solution for Cause 4:

  Create the Secret in the same namespace as the PVC. Then, when you create a new PV, point its nodePublishSecretRef field to this Secret. For more information, see OSS read-only permission policy.

- Solution for Cause 5:

  - Run the following command to confirm that the ossfs pod exists. In the command, PV_NAME is the name of the mounted OSS PV, and NODE_NAME is the name of the node where the application pod that requires the volume is located.

        kubectl -n ack-csi-fuse get pod -l csi.alibabacloud.com/volume-id=<PV_NAME> -owide | grep <NODE_NAME>

    If the pod exists but is in an abnormal state, investigate the cause of the abnormality. Ensure that the pod is in the Running state, and then restart the application pod to trigger a remount. If the pod does not exist, proceed to the next steps.

  - (Optional) Check audit logs or other sources to confirm whether the pod was unexpectedly deleted. Common causes for unexpected deletion include cleanup scripts, node draining, and node self-healing. We recommend that you make adjustments to prevent this issue from recurring.

  - After you confirm that both the CSI provisioner and CSI plugin are upgraded to version 1.30.4 or later, perform the following steps:

    - Run the following command to check for any lingering VolumeAttachment resources.

          kubectl get volumeattachment | grep <PV_NAME> | grep <NODE_NAME>

      If any exist, delete the VolumeAttachment resource.

    - Restart the application pod to trigger a remount and confirm that the ossfs pod is created as expected.

- Solution for Cause 6:

  - We recommend that you upgrade the csi-plugin to v1.26.2 or later. This version fixes the issue where ossfs installation fails during the initialization of a newly scaled-out node.

  - Run the following command to restart the csi-plugin on the corresponding node and then check if the pod can start normally.

    In the following code, csi-plugin-**** is the pod name of the csi-plugin on the node.

        kubectl -n kube-system delete pod csi-plugin-****

  - If the issue persists after you upgrade or restart the component, log in to the node and run the following command:

        ls /etc/csi-tool

    Partial expected output:

        ... ossfs_<ossfsVer>_<ossfsArch>_x86_64.rpm ...

    - If the output contains the ossfs RPM package, run the following command and check if the pod can start normally:

          rpm -i /etc/csi-tool/ossfs_<ossfsVer>_<ossfsArch>_x86_64.rpm

    - If the output does not contain the ossfs RPM package, see Install ossfs 1.0 to download the latest version.

- Solution for Cause 7:

  - If you manually installed the ossfs tool, check whether its OS compatibility matches the node's OS.

  - If you upgraded the node's operating system, you can run the following command to restart the csi-plugin, update the ossfs version, and then try mounting again:

        kubectl -n kube-system delete pod -l app=csi-plugin

  - We recommend that you upgrade the CSI driver to version 1.28 or later. In these versions, ossfs runs as a container in the cluster when you mount an OSS volume and has no requirements for the node's operating system.

  - If you cannot upgrade the CSI driver version, you can switch to a compatible OS or manually install the missing dynamic libraries. The following example uses an Ubuntu node:

    - Use the which command to find the current installation location of ossfs (the default path is /usr/local/bin/ossfs).

          which ossfs

    - Use the ldd command to identify the missing dynamic library files for ossfs.

          ldd /usr/local/bin/ossfs

    - Use the apt-file command to find the package that a missing dynamic library file (such as libcrypto.so.10) belongs to.

          apt-get install apt-file
          apt-file update
          apt-file search libcrypto.so.10

    - Use the apt-get command to install the corresponding package (such as libssl1.0.0).

          apt-get install libssl1.0.0

- Solution for Cause 8:

  - Recommended solution: Upgrade the CSI version to v1.32.1-35c87ee-aliyun or later.

  - Alternative solution 1: See the solution for Cause 1 to confirm whether the subdirectory exists.

  - Alternative solution 2: If your application requires long-term mounting of a subdirectory, we recommend expanding the permission scope to the entire bucket.

- Solution for Cause 9:

  You must synchronize the data from the origin site before you mount the volume. For more information, see Back-to-origin configuration overview.

- Solution for Cause 10:

  You must disable or adjust the static website hosting configuration before you mount the volume. For more information, see Static website hosting.
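For Cause 4, the namespace relationship can be sketched as follows. The namespace app-ns and the object names are hypothetical. The Secret key names akId and akSecret are an assumption not stated in this topic, so confirm them against the static provisioning topic before use.

```yaml
# The Secret lives in the same namespace as the PVC.
apiVersion: v1
kind: Secret
metadata:
  name: oss-secret
  namespace: app-ns                 # hypothetical application namespace
stringData:
  akId: "<your-AccessKey-ID>"       # key name assumed, verify before use
  akSecret: "<your-AccessKey-secret>"
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: pv-oss
spec:
  capacity:
    storage: 5Gi
  accessModes:
    - ReadOnlyMany
  persistentVolumeReclaimPolicy: Retain
  csi:
    driver: ossplugin.csi.alibabacloud.com
    volumeHandle: pv-oss
    nodePublishSecretRef:
      name: oss-secret
      namespace: app-ns             # must match the PVC namespace below
    volumeAttributes:
      bucket: "bucket-name"         # placeholder bucket
      url: "oss-cn-hangzhou.aliyuncs.com"
---
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: pvc-oss
  namespace: app-ns
spec:
  accessModes:
    - ReadOnlyMany
  resources:
    requests:
      storage: 5Gi
  storageClassName: ""              # bind statically, without a StorageClass
  volumeName: pv-oss
```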

OSS volume mount failure and application Pod event: FailedAttachVolume

Symptom

An OSS volume fails to mount, the pod cannot start, and the event log shows a FailedAttachVolume error.

Cause

The event contains the message AttachVolume.Attach failed for volume "xxxxx" : attaching volumes from the kubelet is not supported. This error indicates that the enable-controller-attach-detach configuration is not enabled for the node's kubelet.

This typically occurs when you migrate from FlexVolume to CSI. CSI versions 1.30.4 and later rely on this parameter to perform the AttachVolume operation, but the node's kubelet configuration was not correctly updated during the migration.

Solution

- Check the node's kubelet configuration

  Log on to the node and check the kubelet's enable-controller-attach-detach parameter.

  In the command, /etc/systemd/system/kubelet.service.d/10-kubeadm.conf is the default configuration file path for kubelet. Replace it with the actual path in your cluster.

      cat /etc/systemd/system/kubelet.service.d/10-kubeadm.conf | grep enable-controller-attach-detach

  The expected output is true.

      ... --enable-controller-attach-detach=true ...

- Update the node configuration

  If the enable-controller-attach-detach configuration is not enabled for the kubelet, first ensure that all FlexVolume volumes in the cluster have been migrated. Then, see Migrate FlexVolumes to CSI for a cluster without persistent storage and follow the instructions in Step 3: Modify the configurations of all node pools in the cluster and Step 4: Modify the configurations of existing nodes to update the configurations for the node pool and existing nodes.

Mount a single file from an OSS volume

An OSS volume uses ossfs to mount an OSS path as a file system in a pod. ossfs does not support mounting a single file. If you want a pod to see only one specific file from OSS, use the subPath feature.

Assume you want to mount the a.txt and b.txt files from bucket:/subpath in OSS into two different pods, with the target path in each pod being /path/to/file/. You can create the corresponding PV by using the following YAML template:

apiVersion: v1
kind: PersistentVolume
metadata:
  name: pv-oss
spec:
  capacity:
    storage: 5Gi
  accessModes:
    - ReadOnlyMany
  persistentVolumeReclaimPolicy: Retain
  csi:
    driver: ossplugin.csi.alibabacloud.com
    volumeHandle: pv-oss
    volumeAttributes:
      bucket: bucket
      path: subpath # The parent path of a.txt and b.txt.
      url: "oss-cn-hangzhou.aliyuncs.com"

After you create the corresponding PVC, configure the volumeMounts for the PVC in the pod as follows:

volumeMounts:
  - mountPath: /path/to/file/a.txt # The path in the pod. It maps to bucket:/subpath/a.txt.
    name: oss-pvc # Must match the volume name under volumes.
    subPath: a.txt # Or b.txt. The path of the file relative to bucket:/subpath.

For basic instructions on how to use OSS volumes, see Use a statically provisioned ossfs 1.0 volume.

- In the preceding example, the actual OSS path that corresponds to the mount point on the node is bucket:/subpath. For processes such as file scanning on the node, or for pods that are mounted without using subPath, the visible content is still bucket:/subpath.

- For containers that run as non-root users, pay attention to the subPath permission configuration. For more information, see Errors occur when you mount an OSS volume by using subpath or subpathExpr.
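To round out the example, the following sketch shows a PVC bound to the pv-oss PV above and the second pod, which sees only b.txt. The pod name and image are placeholders, and the PVC name oss-pvc is illustrative.

```yaml
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: oss-pvc
spec:
  accessModes:
    - ReadOnlyMany
  resources:
    requests:
      storage: 5Gi
  storageClassName: ""              # bind statically, without a StorageClass
  volumeName: pv-oss                # the PV defined above
---
apiVersion: v1
kind: Pod
metadata:
  name: reader-b                    # hypothetical pod name
spec:
  containers:
    - name: app
      image: busybox                # placeholder image
      command: ["sleep", "3600"]
      volumeMounts:
        - name: oss-pvc
          mountPath: /path/to/file/b.txt   # maps to bucket:/subpath/b.txt
          subPath: b.txt
          readOnly: true
  volumes:
    - name: oss-pvc
      persistentVolumeClaim:
        claimName: oss-pvc
```

The pod for a.txt is identical except for the two occurrences of the file name.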

Use a specific ARN or ServiceAccount for RRSA

The default RRSA authentication for OSS volumes might not meet certain requirements, such as using a third-party OIDC identity provider or a non-default ServiceAccount.

By default, you specify the RAM role name in the PV with the roleName configuration item, and the CSI plugin obtains the default Role ARN and OIDC Provider ARN from it. To implement customized RRSA authentication, modify the PV configuration as follows:

The roleArn and oidcProviderArn parameters must be configured together. After they are configured, you do not need to configure roleName.

apiVersion: v1
kind: PersistentVolume
metadata:
  name: pv-oss
spec:
  capacity:
    storage: 5Gi
  accessModes:
    - ReadOnlyMany
  persistentVolumeReclaimPolicy: Retain
  csi:
    driver: ossplugin.csi.alibabacloud.com
    volumeHandle: pv-oss # Must be the same as the PV name.
    volumeAttributes:
      bucket: "oss"
      url: "oss-cn-hangzhou.aliyuncs.com"
      otherOpts: "-o umask=022 -o max_stat_cache_size=0 -o allow_other"
      authType: "rrsa"
      oidcProviderArn: "<oidc-provider-arn>"
      roleArn: "<role-arn>"
      #roleName: "<role-name>" # After roleArn and oidcProviderArn are configured, roleName becomes invalid.
      serviceAccountName: "csi-fuse-<service-account-name>"

| Parameter | Description |
| --- | --- |
| oidcProviderArn | You can obtain the ... |
| roleArn | You can obtain the ... |
| serviceAccountName | Optional. The name of the ServiceAccount used by the ossfs container pod. You must create this ServiceAccount in advance. If this parameter is left empty, the default ServiceAccount maintained by the CSI driver is used. Important: The ServiceAccount name must start with ... |

Mount an OSS bucket from another account

We recommend that you use RRSA authentication to mount an OSS bucket from another account.

Make sure that the cluster and CSI component versions meet the requirements for RRSA authentication.

Before creating a volume mounted with RRSA authentication, you must complete the required RAM authorization. The following example shows how to mount a bucket from Account B (where the OSS bucket is located) in Account A (where the cluster is located).

- Perform the following operations in Account B:

  - In Account B, create a RAM role named roleB that trusts Account A. For more information, see Create a RAM role for a trusted Alibaba Cloud account.

  - Grant roleB the required permissions on the OSS bucket that you want to mount.

  - In the RAM console, go to the details page for roleB and copy its ARN, such as acs:ram::130xxxxxxxx:role/roleB.

- Perform the following operations in Account A:

  - Create a RAM role named roleA for RRSA authentication for the application, and set the trusted entity type to OIDC identity provider.

  - Grant roleA the permission to assume roleB. For more information, see "1. Enable RRSA for a cluster" (for static volumes) or Use a dynamically provisioned ossfs 1.0 volume (for dynamic volumes).

    roleA does not require OSS-related permission policies. However, it requires a permission policy that includes the sts:AssumeRole API, such as the system policy AliyunSTSAssumeRoleAccess.

- Configure the volume in the cluster:

  When creating a volume, set the assumeRoleArn parameter to the ARN of roleB:

  - Static volume (PV): In the spec.csi.volumeAttributes field, add assumeRoleArn.

        assumeRoleArn: <ARN of roleB>

  - Dynamic volumes (StorageClass): Add assumeRoleArn to the parameters field.

        assumeRoleArn: <ARN of roleB>
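Put together, a static PV for the cross-account case might look like the following sketch. It reuses the authType and roleName attributes from the RRSA example earlier in this topic. The bucket name and endpoint are placeholders, and the ARN must be replaced with the real ARN of roleB.

```yaml
apiVersion: v1
kind: PersistentVolume
metadata:
  name: pv-oss-cross-account        # hypothetical name
spec:
  capacity:
    storage: 5Gi
  accessModes:
    - ReadOnlyMany
  persistentVolumeReclaimPolicy: Retain
  csi:
    driver: ossplugin.csi.alibabacloud.com
    volumeHandle: pv-oss-cross-account   # same as the PV name
    volumeAttributes:
      bucket: "bucket-in-account-b"      # placeholder: the bucket owned by Account B
      url: "oss-cn-hangzhou.aliyuncs.com"
      authType: "rrsa"
      roleName: "roleA"                  # RRSA role in Account A
      # roleA assumes roleB, and roleB holds the OSS permissions.
      assumeRoleArn: "<ARN of roleB>"
```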

Use CoreDNS to resolve OSS access endpoints

To point an OSS endpoint to an internal cluster domain, you can configure a specific DNS policy for the pod where ossfs runs. This forces it to prioritize the cluster's CoreDNS during mounting.

This feature is supported only by CSI component version v1.34.2 and later. To upgrade, see Upgrade CSI components.

- Static volume (PV): In the spec.csi.volumeAttributes field, add dnsPolicy.

      dnsPolicy: ClusterFirstWithHostNet

- Dynamic volumes (StorageClass): In the parameters field of the StorageClass, add dnsPolicy.

      dnsPolicy: ClusterFirstWithHostNet
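For the static case, the attribute sits alongside the other volumeAttributes, as in this sketch. The PV name, bucket, and the internal endpoint domain are hypothetical, and authentication settings are omitted.

```yaml
apiVersion: v1
kind: PersistentVolume
metadata:
  name: pv-oss-coredns              # hypothetical name
spec:
  capacity:
    storage: 5Gi
  accessModes:
    - ReadOnlyMany
  persistentVolumeReclaimPolicy: Retain
  csi:
    driver: ossplugin.csi.alibabacloud.com
    volumeHandle: pv-oss-coredns
    volumeAttributes:
      bucket: "bucket-name"                   # placeholder bucket
      url: "oss.internal.example.local"       # hypothetical domain resolved by CoreDNS
      # Make the ossfs pod prefer the cluster's CoreDNS when resolving the endpoint.
      dnsPolicy: ClusterFirstWithHostNet
```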

Enable exclusive mount mode for containerized ossfs

Symptom

Multiple pods on the same node mounting the same OSS volume share a single mount point.

Cause

Before containerization, ossfs used exclusive mount mode by default. In this mode, an ossfs process is started on the node for each pod that mounts an OSS volume. The mount points of different ossfs processes are completely independent, so read and write operations by different pods on the same OSS volume do not affect each other.

After containerization, the ossfs process runs as a container in a pod, specifically a pod named csi-fuse-ossfs-* in the kube-system or ack-csi-fuse namespace. In multi-mount scenarios, exclusive mount mode starts a large number of pods in the cluster, which can lead to issues such as insufficient elastic network interfaces. Therefore, after containerization, shared mount mode is used by default. In this mode, multiple pods on the same node that mount the same OSS volume share a single mount point. They all correspond to the same csi-fuse-ossfs-* pod and are mounted by the same ossfs process.

Solution

CSI versions 1.30.4 and later no longer support exclusive mount mode. If you need to restart or change the ossfs configuration, see How do I restart the ossfs process in shared mount mode? If you have other requirements for exclusive ossfs mounting, join DingTalk group 33936810 to contact us.

For CSI versions prior to 1.30.4, you can revert to the exclusive mount behavior from before containerization by adding the useSharedPath configuration item when you create the OSS volume and setting it to "false". The following code provides an example:

apiVersion: v1
kind: PersistentVolume
metadata:
  name: oss-pv
spec:
  accessModes:
    - ReadOnlyMany
  capacity:
    storage: 5Gi
  csi:
    driver: ossplugin.csi.alibabacloud.com
    nodePublishSecretRef:
      name: oss-secret
      namespace: default
    volumeAttributes:
      bucket: bucket-name
      otherOpts: "-o max_stat_cache_size=0 -o allow_other"
      url: oss-cn-zhangjiakou.aliyuncs.com
      useSharedPath: "false"
    volumeHandle: oss-pv
  persistentVolumeReclaimPolicy: Delete
  volumeMode: Filesystem

Errors with subpath or subpathExpr mounts

Symptom

When you mount an OSS volume by using the subpath or subpathExpr method, the following errors may occur:

- Mount failure: After the pod that mounts the OSS volume is created, it remains in the CreateContainerConfigError state, and an event similar to the following appears.

      Warning  Failed  10s (x8 over 97s)  kubelet  Error: failed to create subPath directory for volumeMount "pvc-oss" of container "nginx"

- Read/write error: When you perform read or write operations on the mounted OSS volume, a permission error such as Operation not permitted or Permission denied is reported.

- Unmount failure: When you delete the pod with the mounted OSS volume, the pod remains in the Terminating state.

Cause

To explain the causes and solutions, assume the PV is configured as follows:

...
volumeAttributes:
  bucket: bucket-name
  path: /path
...

And the pod is configured as follows:

...
volumeMounts:
  - mountPath: /path/in/container
    name: oss-pvc
    subPath: subpath/in/oss
...

In this case, the subpath mount directory on the OSS server is /path/subpath/in/oss/ in the bucket.

- Causes of mount failure:

  - Cause 1: The mount directory /path/subpath/in/oss/ does not exist in OSS, and the volume's user or role has not been granted PutObject permission. For example, in a read-only scenario, only OSS ReadOnly permission is configured.

    kubelet fails to create the /path/subpath/in/oss/ directory on the OSS server due to insufficient permissions.

  - Cause 2: A directory object at some level of the mount directory /path/subpath/in/oss/ on the OSS server (a key ending with /, such as path/ or path/subpath/) is parsed as a file in the file system, which prevents kubelet from correctly determining the subpath status.

- Cause of read/write errors: Non-root application containers lack permissions for files in the /path/subpath/in/oss/ directory, which defaults to 640. When you mount an OSS volume by using the subpath method, ossfs actually mounts the path directory defined in the PV (in this example, /path), not /path/subpath/in/oss/. The allow_other mount option takes effect only on the /path directory. The subdirectory /path/subpath/in/oss/ still has the default permission of 640.

- Cause of unmount failure: The /path/subpath/in/oss/ mount directory on the OSS server was deleted, which blocks kubelet from reclaiming the subpath and causes the unmount to fail.

Solution

-

Solution for mount failure:

-

For Cause 1:

-

Pre-create the /path/subpath/in/oss/ directory on the OSS server to provide a mount path for kubelet.

-

If you need to create a large number of directories (for example, when mounting an OSS volume by using the subpathExpr method) and cannot pre-create them all, grant PutObject permission to the user or role used by the OSS volume.

-

For Cause 2:

-

See the solution for Cause 1 in A directory is displayed as a file object after being mounted to check whether each directory object on the OSS server exists (with keys like path/ and path/subpath/; do not start the key with / when querying) and inspect their content-type and content-length fields. The directory object might be incorrectly identified as a file if all of the following conditions are met: the directory object exists (the API must return a 2XX code for the content-type and content-length fields to be meaningful), its content-type is not plain, octet-stream, or x-directory (such as json or tar), and its content-length is not 0.

-

If these conditions are met, see the solution for Cause 1 in A directory is displayed as a file object after being mounted to remove the abnormal directory objects.

-

Solution for read/write errors: Use the umask option to change the default permissions of subdirectories. For example, -o umask=000 changes the default permission to 777.

-

Solution for unmount failure: See the solution for Cause 2 in Unmounting a statically provisioned OSS volume fails and the pod remains in the Terminating state.
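To choose a umask value other than 000, the arithmetic can be sketched as follows. This assumes that the resulting permission is 0777 with the umask bits cleared. That assumption matches the -o umask=000 example above, but it is not confirmed here for every ossfs version. The function name umask_to_mode is hypothetical.

```shell
# Hypothetical helper: show the permission bits left after a umask is applied.
# Assumption: the mask is cleared from 0777, as in the umask=000 -> 777 example.
umask_to_mode() {
  case $1 in
    ''|*[!0-7]*)
      echo "umask must consist of octal digits only" >&2
      return 1 ;;
  esac
  # The leading 0 makes the shell read the argument as an octal constant.
  printf '%03o\n' "$(( 0777 & ~0$1 ))"
}
```

Under this assumption, `umask_to_mode 022` prints 755 and `umask_to_mode 027` prints 750, so a non-root container that only needs read access does not require the fully open 777 setting.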

Usage

Slow bucket access with OSS volumes

Symptom

Accessing a bucket by using an OSS volume is slow.

We recommend that you first see Best practices for optimizing the performance of OSS volumes for information about common performance issues and tuning solutions. This topic provides only supplementary information.

Cause

-

Cause 1: After versioning is enabled for OSS, the performance of listObjectsV1 deteriorates when a large number of delete markers exist in the bucket.

-

Cause 2: The storage class of the bucket is set to a storage class other than Standard on the OSS server. Other storage classes can degrade data access performance.

Solution

-

Solution for Cause 1:

-

Upgrade the CSI plugin to v1.26.6 or later. This version allows ossfs to access buckets by using listObjectsV2.

-

In the otherOpts field of the static OSS PV, add -o listobjectsv2 to resolve the issue.

-

Solution for Cause 2: Change the storage class or restore objects.
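When you edit otherOpts for Cause 1, it helps to avoid adding the same option twice. The following is a minimal sketch of that string handling. The helper name add_other_opt is hypothetical, and it only recognizes options written in the "-o name" or "-o name=value" form with single spaces.

```shell
# Hypothetical helper: append "-o <option>" to an otherOpts string unless an
# option with the same name is already present.
#   $1: current otherOpts value (may be empty)
#   $2: option to add, for example listobjectsv2 or umask=022
add_other_opt() {
  name=${2%%=*}                     # option name without any "=value" part
  case " $1 " in
    *" -o $name "*|*" -o $name="*)
      printf '%s\n' "$1" ;;         # already present: leave unchanged
    "  ")
      printf '%s\n' "-o $2" ;;      # otherOpts was empty
    *)
      printf '%s\n' "$1 -o $2" ;;
  esac
}
```

For example, `add_other_opt "-o allow_other" listobjectsv2` prints `-o allow_other -o listobjectsv2`, which is the value to place in the otherOpts field of the PV.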

Files show a size of 0 in the OSS console

Symptom

After you mount an OSS data volume in a container and write data to a file, the OSS console shows that the file size is 0.

Cause

When a container uses ossfs to mount an OSS bucket, the mount is a FUSE-based operation. Therefore, the file content is uploaded to the OSS server only when the file is closed or flushed.

Solution

Use the lsof <file_name> command to check whether the file is in use by another process. If it is, close the process to release the file descriptor (fd). For more information, see lsof.

Transport endpoint is not connected error

Symptom

After an OSS data volume is mounted in a container, access to the mount point suddenly fails with a "Transport endpoint is not connected" error.

Cause

A container uses ossfs to mount an OSS bucket. If the ossfs process exits unexpectedly while an application is accessing data in the OSS bucket, the mount point becomes disconnected.

The main reasons that the ossfs process exits unexpectedly are as follows:

-

Insufficient resources, for example, the process is killed due to an Out of Memory (OOM) error.

-

The ossfs process exits due to a segmentation fault while accessing data.

Solution

-

Identify why the ossfs process exited unexpectedly.

Important

If your production application is affected by the disconnected mount point and this is an intermittent issue, you can redeploy the application container to remount the OSS volume as a quick fix.

Note that remounting the OSS volume causes some information required for the following troubleshooting steps to be lost. This is indicated where applicable.

-

Check whether the process exited due to insufficient resources.

-

Pod-level resource insufficiency: If you are using CSI v1.28 or later, check whether the ossfs Pod has been restarted and whether the last exit was caused by an OOM error or a similar issue. For more information about how to obtain the ossfs Pod, see Troubleshoot ossfs 1.0 exceptions.

-

Node-level resource insufficiency: If the problematic Pod was deleted after a remount or if you are using a CSI version earlier than 1.28, check the ACK or ECS monitoring dashboard to determine whether the node was experiencing high resource usage when the volume was mounted.

-

-

Check whether the process exited due to a segmentation fault.

-

If you are using a CSI version earlier than 1.28, ossfs runs as a process on the node. You must log on to the node and query the system logs for information about segmentation faults.

journalctl -u ossfs | grep segfault

-

If the CSI version is 1.28 or later, check the logs of the ossfs Pod for information related to segmentation fault exits, such as "signal: segmentation fault".

Note

In the following cases, the relevant logs cannot be obtained. If you have confirmed that the ossfs process did not exit due to insufficient resources, we recommend that you proceed with troubleshooting based on the assumption of a segmentation fault.

-

If the segmentation fault occurred a long time ago, the node or Pod logs may have been lost due to log rotation.

-

If the application container was remounted, the logs were lost when the Pod was deleted.

-

If the ossfs process exited due to insufficient resources, adjust the resource limits of the ossfs Pod or schedule the application Pod that uses the OSS volume to a node with more available resources.

If you confirm that ossfs itself is consuming a large amount of memory, the cause might be that an application or third-party scanning software is performing readdir operations on the mount point. This triggers ossfs to send a large number of HeadObject requests to the OSS server. In this case, you can enable readdir optimization. For more information, see New feature: readdir optimization.

-

Most segmentation fault issues that occur in earlier versions of ossfs are fixed in version 1.91 and later. If the ossfs process exits due to a segmentation fault, we recommend that you upgrade ossfs to 1.91.8.ack.2 or later, which means upgrading the CSI version to 1.34.4 or later. For more information about ossfs versions, see ossfs 1.0 versions.

If your CSI version is already up to date, follow these steps to collect the segmentation fault coredump file and submit a ticket.

-

If a node runs Alibaba Cloud Linux 3, its coredump parameters are configured by default. After an ossfs segmentation fault occurs, you can log in to the node and find the packaged coredump file core.ossfs.xxx.lz4 in the /var/lib/systemd/coredump/ path.

-

For nodes that do not run Alibaba Cloud Linux 3, ensure that the node allows processes to generate coredump files. For example, on a node that runs Alibaba Cloud Linux 2, log on to the node and run the following command:

echo "|/usr/lib/systemd/systemd-coredump %P %u %g %s %t %c %h %e" > /proc/sys/kernel/core_pattern

After this is configured, the behavior is similar to Alibaba Cloud Linux 3: when an ossfs segmentation fault occurs, you can log in to the node and find the packaged coredump file core.ossfs.xxx.xz in the /var/lib/systemd/coredump/ path.
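Before you wait for the next segmentation fault, you may want to confirm that the node already hands core dumps to systemd-coredump. The following sketch classifies a core_pattern value. The function name core_pattern_status and its three result labels are hypothetical.

```shell
# Hypothetical helper: classify a kernel core_pattern value.
#   $1: the value, for example "$(cat /proc/sys/kernel/core_pattern)"
core_pattern_status() {
  case $1 in
    "|"*systemd-coredump*) echo "systemd-coredump" ;;  # piped to systemd-coredump
    "|"*)                  echo "other-handler" ;;     # piped to another program
    *)                     echo "plain-file" ;;        # written as a plain file
  esac
}
```

On a node, `core_pattern_status "$(cat /proc/sys/kernel/core_pattern)"` printing systemd-coredump suggests that coredump files will appear under /var/lib/systemd/coredump/ as described above. Any other result suggests that the echo command above has not been applied.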

Input/output error when accessing a mount point

Symptom

An application reports an "Input/output error" when accessing the mount point.

Cause

-

Cause 1: The name of an object in the OSS mount path contains special characters, which prevents the server response from being parsed.

-

Cause 2: The FUSE file system does not support operations such as chmod and chown on the root mount point.

-

Cause 3: When a RAM policy grants permissions to a single bucket or a directory within a bucket, the authorization is incomplete.

Solution

-

Solution for Cause 1:

Obtain the ossfs client logs as described in Troubleshoot ossfs 1.0 exceptions. The logs contain an error message similar to the following:

parser error : xmlParseCharRef: invalid xmlChar value 16
<Prefix>xxxxxxx/</Prefix>

In this case, the character with value 16 reported in the error is an unparsable Unicode character, and xxxxxxx/ represents the full name of the object (a directory object in this example). You can use tools such as the API and the console to confirm that the object exists on the OSS server. In the OSS console, this character may be displayed as a space.

Rename the object on the OSS server as described in Rename an object. If the object is a directory, we recommend that you use ossbrowser 2.0 to rename the entire directory as described in Common operations.

-

Solution for Cause 2:

Use the -o allow_other and -o umask mount parameters to achieve an effect similar to chmod on the mount path:

| Parameter | Description |
| --- | --- |
| allow_other | Sets the permissions of the mount directory to 777. |

Use the -o gid and -o uid mount parameters to achieve an effect similar to chown on the mount path:

| Parameter | Description |
| --- | --- |
| uid | Specifies the user ID (UID) of the user who owns the subdirectories and files in the mount directory. |
| gid | Specifies the group ID (GID) of the user group that owns the subdirectories and files in the mount directory. |

-

Solution for Cause 3:

If you need to grant permissions to only a specific bucket or a path within a bucket, you must grant permissions to both mybucket and mybucket/* for the bucket, or to both mybucket/subpath and mybucket/subpath/* for the path. For more information, see 2. Create a RAM role and grant permissions.
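The pairing required for Cause 3 can be sketched as a helper that prints both resource entries for a bucket or a path. The function name oss_policy_resources is hypothetical, and the acs:oss:*:*: prefix is an assumption about how OSS resources are written in a RAM policy. Check it against the linked RAM role topic before use.

```shell
# Hypothetical helper: print the two Resource entries that a RAM policy needs
# for a bucket, or for a path inside a bucket.
# Assumption: OSS resources are written with the "acs:oss:*:*:" prefix.
#   $1: bucket name
#   $2: optional path inside the bucket
oss_policy_resources() {
  target=$1
  sub=${2#/}       # drop one leading slash, if any
  sub=${sub%/}     # drop one trailing slash, if any
  if [ -n "$sub" ]; then
    target="$target/$sub"
  fi
  printf 'acs:oss:*:*:%s\n' "$target"      # the bucket or directory itself
  printf 'acs:oss:*:*:%s/*\n' "$target"    # everything underneath it
}
```

`oss_policy_resources mybucket subpath` prints the entries for mybucket/subpath and mybucket/subpath/*. If only the second entry is granted, the authorization is incomplete in the way described in Cause 3.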

Directory is displayed as a file

Symptom

When an OSS data volume is mounted in a container, a directory is displayed as a file.

Cause

-

Cause 1: If the content-type of a directory object on the OSS server is not the default application/octet-stream type (such as text/html or image/jpeg), or if the size of the directory object is not 0, ossfs considers it a file object based on its metadata.

-

Cause 2: If Cause 1 does not apply, the directory object is missing the x-oss-meta-mode metadata.

Solution

-

Solution for Cause 1:

You can retrieve the metadata of a directory object by using HeadObject or stat (View bucket and object information). The name of the directory object must end with a forward slash ("/"), for example, a/b/. The following is an example of an API response.

{
  "server": "AliyunOSS",
  "date": "Wed, 06 Mar 2024 02:48:16 GMT",
  "content-type": "application/octet-stream",
  "content-length": "0",
  "connection": "keep-alive",
  "x-oss-request-id": "65E7D970946A0030334xxxxx",
  "accept-ranges": "bytes",
  "etag": "\"D41D8CD98F00B204E9800998ECFxxxxx\"",
  "last-modified": "Wed, 06 Mar 2024 02:39:19 GMT",
  "x-oss-object-type": "Normal",
  "x-oss-hash-crc6xxxxx": "0",
  "x-oss-storage-class": "Standard",
  "content-md5": "1B2M2Y8AsgTpgAmY7Phxxxxx",
  "x-oss-server-time": "17"
}

In the preceding response example:

-

content-type: The value is application/octet-stream. This type is used for directory objects.

-

content-length: The value is 0. The size of a directory object is 0.

If these conditions are not met, you can fix the issue as follows:

-

Retrieve the object by using GetObject or ossutil (see the ossutil command-line tool quick start) to confirm whether the data is useful. If the data is useful or you are not sure, we recommend that you back it up. For example, you can change its name (for a xx/ directory object, do not use xx as the new name) and upload it to OSS.

-

Use DeleteObject or rm (delete) to delete the problematic directory object, and then check whether ossfs displays the directory correctly.

-

Solution for Cause 2:

If the solution for Cause 1 does not fix the problem, you can resolve the issue by adding -o complement_stat to the otherOpts field of the OSS static PV when you mount an OSS data volume in a container.

Note

This option is enabled by default in CSI plugin v1.26.6 and later. You can upgrade the storage component to v1.26.6 or a later version, and then restart the application Pod to remount the static OSS volume to resolve the issue.
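The metadata check for Cause 1 can be sketched as a function that takes the two header values from a HeadObject or stat response. The function name check_dir_object is hypothetical. It applies the condition stated in Cause 1 of this section (a content-type other than application/octet-stream, or a non-zero size), which is stricter than the condition described in the subpath mount section.

```shell
# Hypothetical helper: report whether a directory object's metadata could make
# ossfs treat it as a file, using the Cause 1 condition from this section.
#   $1: content-type header value
#   $2: content-length header value
# Returns 0 when both values look like a normal directory object.
check_dir_object() {
  rc=0
  if [ "$1" != "application/octet-stream" ]; then
    echo "unexpected content-type: $1"
    rc=1
  fi
  if [ "$2" != "0" ]; then
    echo "unexpected content-length: $2"
    rc=1
  fi
  return $rc
}
```

With the values from the response example, `check_dir_object application/octet-stream 0` prints nothing and succeeds. A call such as `check_dir_object text/html 12` prints both deviations and returns a non-zero status, which marks the object as a candidate for the backup and delete steps above.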

Abnormal request traffic on the OSS server

Symptom

When an OSS data volume is mounted in a container, the number of requests monitored on the OSS server is much higher than expected.

Cause

When ossfs mounts OSS, a mount path is created on the node. Scans of the mount point by other processes on the ECS instance are also converted into requests to OSS. An excessive number of requests may incur fees.

Solution

Audit and trace the requesting processes to resolve the issue. You can perform the following operations on the node.

-

Install and start auditd.

sudo yum install auditd
sudo service auditd start

-

Set the ossfs mount path as the directory to monitor.

-

To add all mount paths, run the following command:

for i in $(mount | grep -i ossfs | awk '{print $3}');do auditctl -w ${i};done

-

To add the mount path of a specific PV, run the following command:

<pv-name> is the name of the specified PV.

for i in $(mount | grep -i ossfs | grep -i <pv-name> | awk '{print $3}');do auditctl -w ${i};done

-

Check the audit logs to see which processes accessed the paths in the OSS bucket.

ausearch -i

An audit log analysis example is as follows. In the following example, the audit logs between the --- separators form a single group, which records a single operation on the monitored mount point. This example indicates that the updatedb process performed an open operation on a subdirectory in the mount point, and the process ID (PID) is 1636611.

---
type=PROCTITLE msg=audit(09/22/2023 15:09:26.244:291) : proctitle=updatedb
type=PATH msg=audit(09/22/2023 15:09:26.244:291) : item=0 name=. inode=14 dev=00:153 mode=dir,755 ouid=root ogid=root rdev=00:00 nametype=NORMAL cap_fp=none cap_fi=none cap_fe=0 cap_fver=0
type=CWD msg=audit(09/22/2023 15:09:26.244:291) : cwd=/subdir1/subdir2
type=SYSCALL msg=audit(09/22/2023 15:09:26.244:291) : arch=x86_64 syscall=open success=yes exit=9 a0=0x55f9f59da74e a1=O_RDONLY|O_DIRECTORY|O_NOATIME a2=0x7fff78c34f40 a3=0x0 items=1 ppid=1581119 pid=1636611 auid=root uid=root gid=root euid=root suid=root fsuid=root egid=root sgid=root fsgid=root tty=pts1 ses=1355 comm=updatedb exe=/usr/bin/updatedb key=(null)
---

-

Use the logs to check for calls from non-application processes and resolve any issues.

For example, if the audit log shows that updatedb has scanned the mounted directories, you can modify /etc/updatedb.conf to make it skip them. The procedure is as follows.

-

Add fuse.ossfs after PRUNEFS =.

-

Add the mounted directory after PRUNEPATHS =.
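When the audit log is long, it can help to count accesses per process instead of reading each group. The following is a minimal sketch that reads ausearch -i output on standard input and summarizes the SYSCALL records. The function name audit_callers is hypothetical, and the sketch assumes that the comm and exe values contain no spaces, as in the example above.

```shell
# Hypothetical helper: summarize which programs touched the audited paths.
# Reads `ausearch -i` output on stdin and prints "<count> <comm> <exe>",
# most frequent first. Assumes comm= and exe= values contain no spaces.
audit_callers() {
  awk '
    /^type=SYSCALL/ {
      comm = "?"; exe = "?"
      for (i = 1; i <= NF; i++) {
        if ($i ~ /^comm=/) comm = substr($i, 6)   # text after "comm="
        if ($i ~ /^exe=/)  exe  = substr($i, 5)   # text after "exe="
      }
      count[comm " " exe]++
    }
    END { for (k in count) print count[k], k }
  ' | sort -rn
}
```

Run it as `ausearch -i | audit_callers`. A line such as `1 updatedb /usr/bin/updatedb` points to a non-application process that scans the mount point.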

File Content-Type is always application/octet-stream

Symptom

The Content-Type metadata of all file objects written through an OSS volume is application/octet-stream. This prevents browsers or other clients from correctly identifying and processing these files.

Cause

-

If no Content-Type is specified, ossfs treats file objects as binary stream files by default.

-

A Content-Type was specified in the /etc/mime.types configuration file, but the configuration did not take effect.

Solution

-

Check the CSI component version. CSI component versions 1.26.6 and 1.28.1 have compatibility issues with Content-Type configurations. If you are using one of these versions, upgrade CSI to the latest version. For more information, see [Component Announcement] Compatibility issues with csi-plugin and csi-provisioner versions 1.26.6 and 1.28.1.

-

If you have specified the Content-Type by using mailcap or mime-support to generate the /etc/mime.types file on a node, remount the corresponding OSS volume after you upgrade the CSI version.

-

If you have not specified a Content-Type, you can do so in one of the following ways:

-

Node-level configuration: A /etc/mime.types configuration file is generated on the node, which applies to all OSS volumes that are newly mounted to the node. For more information, see FAQ.

-

Cluster-level configuration: This configuration applies to all newly mounted OSS volumes in the cluster. The content of /etc/mime.types matches the default content generated by mailcap.

-

Check whether the csi-plugin configuration file exists.

kubectl -n kube-system get cm csi-plugin

If the csi-plugin ConfigMap does not exist, create it by using the following content. If the mime-support="true" setting is not present in the data.fuse-ossfs section of the ConfigMap, add it.

apiVersion: v1
kind: ConfigMap
metadata:
  name: csi-plugin
  namespace: kube-system
data:
  fuse-ossfs: |
    mime-support=true

-

Restart csi-plugin to apply the configuration.

Restarting csi-plugin does not affect the use of currently mounted volumes.

kubectl -n kube-system delete pod -l app=csi-plugin

-

Remount the corresponding OSS volume.

Error creating hard links

Symptom

The error Operation not supported or Operation not permitted is returned when creating a hard link.

Cause

OSS volumes do not support hard link operations and will return an Operation not supported error. In earlier CSI versions, the error returned for hard link operations was Operation not permitted.

Solution

Modify your application to avoid hard link operations when using OSS volumes. If your application must use hard link operations, we recommend that you use a different type of storage.

View access logs for OSS volumes

You can view OSS operation records in the OSS console. Make sure that you have enabled real-time log query.

Log on to the OSS console.

In the left-side navigation pane, click Buckets. On the Buckets page, find and click the desired bucket.

-

In the left-side navigation pane, choose .

-

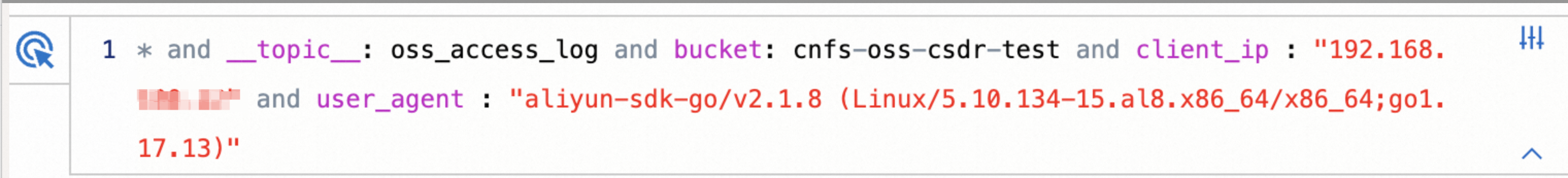

On the Real-time Log Query tab, enter query and analysis statements based on the query syntax and analysis syntax to analyze OSS logs. You can use the user_agent and client_ip fields to determine whether the logs originate from ACK.

-

To locate OSS operation requests sent from ACK, select the user_agent field. The expanded information shows that the user_agent contains ossfs.

Important

-

The value of the user_agent field varies depending on the ossfs version, but it always starts with aliyun-sdk-http/1.0()/ossfs.

-

If you also use ossfs to mount volumes on an ECS instance, the related logs are also included here.

-

To locate a specific ECS instance or cluster, select the client_ip field and then select the corresponding IP address.

The following figure shows an example of the logs queried by using these two fields.

Description of log query fields

| Field | Description |
| --- | --- |
| operation | The type of operation performed on OSS. Examples: GetObject and GetBucketStat. For more information, see Operations. |
| object | The name of the object, which can be a directory or a file in OSS. |
| request_id | The unique identifier of the request. You can use the request ID to query a specific request. |
| http_status, error_code | Used to query the result of a request. For more information, see Error responses. |
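If you export log entries for offline review, the user_agent rule described above can be applied with a simple prefix match. The following is a minimal sketch. The function name is_ossfs_user_agent is hypothetical, and only the prefix comes from this topic.

```shell
# Hypothetical helper: succeed when a user_agent value starts with the ossfs
# prefix mentioned in this topic. The rest of the value varies by version.
is_ossfs_user_agent() {
  case $1 in
    "aliyun-sdk-http/1.0()/ossfs"*) return 0 ;;
    *)                              return 1 ;;
  esac
}
```

For example, `is_ossfs_user_agent "$ua" && echo "request came from ossfs"` keeps only entries sent by ossfs. Such entries can originate from ACK or from ossfs mounts on ECS instances, so combine the check with the client_ip field.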

Restart the ossfs process for shared mounts

Symptom

After you modify authentication information or the ossfs version, the running ossfs process does not change automatically.

Cause

-

Configurations such as authentication information cannot be changed after ossfs is running. Changing the configuration requires you to restart the ossfs process (in containerized versions, the process is the csi-fuse-ossfs-* Pod in the kube-system or ack-csi-fuse namespace) and the corresponding application Pods, which causes a service interruption. Therefore, by default, CSI does not apply changes to a running ossfs instance.

-

Under normal conditions, CSI handles the deployment and deletion of ossfs. Manually deleting the Pod that contains the ossfs process does not trigger CSI to redeploy ossfs or delete related resources, such as VolumeAttachment.

Solution

Restarting the ossfs process requires restarting the application Pods that mount the corresponding OSS volume. Proceed with caution.

If you are using a non-containerized CSI version or have enabled dedicated mounts, you can directly restart the corresponding application Pod. Containerized versions use shared mounts by default, meaning all application Pods on a node that mount the same OSS volume share a single ossfs process.

-

Identify which application Pods are using the current FUSE Pod.

-

Run the following command to identify the csi-fuse-ossfs-* Pod that you need to modify.

Where <pv-name> is the PV name, and <node-name> is the node name.

For CSI versions earlier than 1.30.4, run the following command:

kubectl -n kube-system get pod -lcsi.alibabacloud.com/volume-id=<pv-name> -owide | grep <node-name>

For CSI versions 1.30.4 and later, run the following command:

kubectl -n ack-csi-fuse get pod -lcsi.alibabacloud.com/volume-id=<pv-name> -owide | grep <node-name>

Expected output:

csi-fuse-ossfs-xxxx   1/1   Running   0   10d   192.168.128.244   cn-beijing.192.168.XX.XX   <none>   <none>

-

Run the following command to identify all Pods that are mounting the OSS volume.

Where <ns> is the namespace name, and <pvc-name> is the PVC name.

kubectl -n <ns> describe pvc <pvc-name>

Expected output (containing a "Used By" section):

Used By:  oss-static-94849f647-4****
          oss-static-94849f647-6****
          oss-static-94849f647-h****
          oss-static-94849f647-v****
          oss-static-94849f647-x****

-

Run the following command to retrieve the Pods mounted by csi-fuse-ossfs-xxxx, which are the Pods that run on the same node as csi-fuse-ossfs-xxxx.

kubectl -n <ns> get pod -owide | grep cn-beijing.192.168.XX.XX

Expected output:

NAME                         READY   STATUS    RESTARTS   AGE     IP               NODE                       NOMINATED NODE   READINESS GATES
oss-static-94849f647-4****   1/1     Running   0          10d     192.168.100.11   cn-beijing.192.168.100.3   <none>           <none>
oss-static-94849f647-6****   1/1     Running   0          7m36s   192.168.100.18   cn-beijing.192.168.100.3   <none>           <none>

-

Restart the application and the ossfs process.

Simultaneously delete the application Pods (in the preceding example, oss-static-94849f647-4**** and oss-static-94849f647-6****) by using a command such as kubectl scale. When no application Pods are mounted, the csi-fuse-ossfs-xxxx Pod is automatically reclaimed. After the replica count is restored, the PV is remounted with the new configuration, and CSI creates a new csi-fuse-ossfs-yyyy Pod.

If you cannot ensure that these Pods are deleted simultaneously (for example, deleting Pods managed by a Deployment, StatefulSet, or DaemonSet triggers an immediate restart), or if the Pods can tolerate OSS read/write failures:

-

If the CSI version is earlier than 1.30.4, you can directly delete the csi-fuse-ossfs-xxxx Pod, which causes read and write operations to OSS from within the application Pod to return a disconnected error.

-

For CSI versions 1.30.4 and later, you can run the following command:

kubectl get volumeattachment | grep <pv-name> | grep cn-beijing.192.168.XX.XX

Expected output:

csi-bd463c719189f858c2394608da7feb5af8f181704b77a46bbc219b**********   ossplugin.csi.alibabacloud.com   <pv-name>   cn-beijing.192.168.XX.XX   true   12m

If you delete the VolumeAttachment directly, read/write operations to OSS within the application Pod will return a disconnected error.

Then, restart the application Pods one by one. The restarted Pods will restore read and write access to OSS through the new csi-fuse-ossfs-yyyy Pod created by CSI.
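Filtering by node name with grep also matches nodes whose names merely start with the same text (for example, a name ending in .100.3 also matches .100.30). A minimal sketch of an exact match on the NODE column follows. The function name pods_on_node is hypothetical, and it assumes the default -owide column layout, in which NODE is the third column from the right.

```shell
# Hypothetical helper: print the names of Pods scheduled on exactly one node.
#   $1: node name
#   stdin: output of `kubectl -n <ns> get pod -owide`
# Assumption: NODE is the third column from the right in the -owide layout.
pods_on_node() {
  awk -v node="$1" 'NF >= 3 && $1 != "NAME" && $(NF-2) == node { print $1 }'
}
```

For example, `kubectl -n <ns> get pod -owide | pods_on_node <node-name>` lists the application Pods that share the node with the csi-fuse-ossfs Pod. Compare the result with the "Used By" list of the PVC to find the Pods that must be restarted.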

Check the ossfs version

The ossfs version is updated with the CSI version. The ossfs versions used in ACK are based on the public versions of ossfs, with version numbers in a format like 1.91.1.ack.1. For more information, see Version history. For more information about public versions, see Install ossfs 1.0 and Install ossfs 2.0.

-

The default ossfs version used to mount an OSS volume varies based on the CSI component version. For more information, see csi-provisioner.

Note

For CSI versions earlier than 1.28 (excluding v1.26.6), ossfs 1.0 runs on the node, and the version used is the one actually installed on the node. You must query the ossfs version by using the following method.

-

For an already mounted OSS volume, see View the ossfs version.

The version of the CSI component determines the default version of ossfs. The ossfs version used by ACK adds a .ack.x suffix, such as 1.91.1.ack.1, to its community version number. For more information, see Version notes.

For information about public community versions, see Install ossfs 1.0 and Install ossfs 2.0.

You can find the version information in the following ways:

-

Version mapping: To learn about the ossfs version that corresponds to a specific CSI version, see csi-provisioner.

For older CSI versions (earlier than v1.28 and not v1.26.6), ossfs 1.0 runs directly on the node. The version is determined by the version installed on the node, not by CSI. You must query the actual installed version directly.

-

Verify the actual version: To confirm the exact ossfs version running for a currently mounted volume, see View the ossfs version.

Scaling

Exceeding configured volume capacity

OSS does not limit the capacity of buckets or subdirectories and does not provide a capacity quota feature. Therefore, the settings for the .spec.capacity field of a PV and the .spec.resources.requests.storage field of a PVC are ignored and do not take effect. You only need to ensure that the capacity values of the bound PV and PVC are the same.

If your actual storage usage exceeds the configured capacity, the volume continues to operate normally. No scaling is required.

Unmount

OSS static volume fails to unmount

Symptom

A statically provisioned OSS volume fails to unmount, and the pod remains in the Terminating state.

Cause

A pod can become stuck in the Terminating state for several reasons. Start by checking the kubelet logs to identify the issue. The most common causes are:

-

Cause 1: The volume's mount point on the node is in use, preventing the CSI plugin from unmounting it.

-

Cause 2: The OSS bucket or directory specified in the PV has been deleted, so the CSI plugin cannot determine the status of the mount point.

Solution

-

Solution for Cause 1

-

Run the following command to get the pod's UID.

Replace <ns-name> and <pod-name> with your pod's namespace and name.

kubectl -n <ns-name> get pod <pod-name> -ogo-template --template='{{.metadata.uid}}'

Expected output:

5fe0408b-e34a-497f-a302-f77049****

-

Log in to the node where the pod in the Terminating state is running.

-

Run the following command on the node to check if any processes are using the mount point.

lsof /var/lib/kubelet/pods/<pod-uid>/volumes/kubernetes.io~csi/<pv-name>/mount/

If the command returns any output, identify and terminate these processes.

-

Solution for Cause 2

Log on to the OSS console.

-

Check if the bucket or directory has been deleted. If you used subPath to mount the volume, also check if the subdirectory specified by subPath has been deleted.

-

If you confirm that the unmount failure is due to a deleted directory, perform the following steps:

-

Run the following command to get the pod's UID.

Replace <ns-name> and <pod-name> with your pod's namespace and name.

kubectl -n <ns-name> get pod <pod-name> -ogo-template --template='{{.metadata.uid}}'

Expected output:

5fe0408b-e34a-497f-a302-f77049****

-

Log in to the node where the pod in the Terminating state is running. Then, run the following command on the node to find the mount points used by the pod.

mount | grep <pod-uid> | grep fuse.ossfs

Expected output:

ossfs on /var/lib/kubelet/pods/<pod-uid>/volumes/kubernetes.io~csi/<pv-name>/mount type fuse.ossfs (ro,nosuid,nodev,relatime,user_id=0,group_id=0,allow_other)
ossfs on /var/lib/kubelet/pods/<pod-uid>/volume-subpaths/<pv-name>/<container-name>/0 type fuse.ossfs (ro,relatime,user_id=0,group_id=0,allow_other)

The path between ossfs on and type is the actual mount point on the node.

-

Manually unmount the mount points.

umount /var/lib/kubelet/pods/<pod-uid>/volumes/kubernetes.io~csi/<pv-name>/mount
umount /var/lib/kubelet/pods/<pod-uid>/volume-subpaths/<pv-name>/<container-name>/0

-

Wait for kubelet to reclaim the resources on its next retry, or force-delete the pod using the --force flag.
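Extracting the mount points from the mount output can be sketched as follows. The function name ossfs_mount_points is hypothetical. The sketch assumes the "ossfs on <path> type fuse.ossfs (...)" line format shown in the expected output and mount paths that contain no spaces.

```shell
# Hypothetical helper: print the ossfs mount points that belong to one pod.
#   $1: pod UID
#   stdin: output of `mount`
# Assumes lines of the form "ossfs on <path> type fuse.ossfs (...)".
ossfs_mount_points() {
  awk -v uid="$1" '
    $2 == "on" && $4 == "type" && $5 == "fuse.ossfs" && index($3, uid) {
      print $3    # the path between "on" and "type"
    }
  '
}
```

For example, `mount | ossfs_mount_points <pod-uid>` prints one path per line. Review the list before passing each path to umount as in the manual step above.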