ACK integrates with Simple Log Service (SLS) to collect, query, and analyze container logs from your Kubernetes clusters. This topic covers installing a log collection component in DaemonSet mode, creating a collection configuration for text logs or stdout, and querying the collected logs.

DaemonSet mode runs one log collection agent per node. It works best for clusters with consistent log types or single-purpose workloads. For large-scale or mixed-workload clusters, use Sidecar mode instead. For a comparison of the two modes, see Collection method.

For SLS pricing, see Billing overview.

Prerequisites

Before you begin, make sure that you have:

Overview

| Step | What you do |
| --- | --- |
| Step 1: Install a log collection component | Install LoongCollector (recommended) or Logtail on your cluster |
| Step 2: Create a collection configuration | Configure text log or stdout collection using a Custom Resource Definition (CRD), the SLS console, or environment variables |
| Step 3: Query and analyze logs | Search and analyze collected logs in the SLS console |

Step 1: Install a log collection component

ACK supports two log collection components:

- LoongCollector (recommended): The next-generation log collection agent from Simple Log Service. LoongCollector supersedes Logtail and is designed to incorporate capabilities from Application Real-Time Monitoring Service (ARMS) agents, including Managed Service for Prometheus-based collection and Extended Berkeley Packet Filter (eBPF)-based non-intrusive collection.
- Logtail: The previous-generation agent. Logtail collects logs from multiple data sources (including Elastic Compute Service (ECS) instances, on-premises servers, and third-party cloud servers) without requiring application code changes.

Important

LoongCollector and logtail-ds cannot coexist on the same cluster. If logtail-ds is already installed, upgrade to LoongCollector first, then uninstall logtail-ds. Upgrading before uninstalling ensures log collection continues without gaps during migration. See Upgrade from Logtail to LoongCollector.

Install LoongCollector (recommended)

- Log on to the ACK console. In the left-side navigation pane, click Clusters.
- On the Clusters page, click the cluster you want to manage. In the left-side navigation pane, choose Operations > Add-ons.
- On the Logs and Monitoring tab, find the loongcollector component and click Install.

After installation completes, SLS automatically creates a project named k8s-log-${your_k8s_cluster_id} and the following resources:

| Resource type | Resource name | Purpose |
| --- | --- | --- |
| Machine group | k8s-group-${your_k8s_cluster_id} | Used by loongcollector-ds for log collection. Example: k8s-group-my-cluster-123 |
| Machine group | k8s-group-${your_k8s_cluster_id}-cluster | Used by loongcollector-cluster for metric collection. Example: k8s-group-my-cluster-123-cluster |
| Machine group | k8s-group-${your_k8s_cluster_id}-singleton | Used to create per-instance LoongCollector configurations. Example: k8s-group-my-cluster-123-singleton |
| Logstore | config-operation-log | Stores loongcollector-operator logs. Do not delete this Logstore. |
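
The naming scheme in the table above can be sketched as a small helper, which may be handy when scripting checks against the SLS console or API. This is only an illustration: the function name and dictionary keys are hypothetical, and the only facts taken from this topic are the name patterns themselves.

```python
from __future__ import annotations


def loongcollector_resources(cluster_id: str) -> dict[str, str]:
    """Return the resource names SLS creates for one cluster ID.

    The dictionary keys are illustrative labels, not SLS identifiers.
    """
    group = f"k8s-group-{cluster_id}"
    return {
        "project": f"k8s-log-{cluster_id}",
        "machine_group_ds": group,                       # used by loongcollector-ds
        "machine_group_cluster": f"{group}-cluster",     # used by loongcollector-cluster
        "machine_group_singleton": f"{group}-singleton", # per-instance configurations
        "operator_logstore": "config-operation-log",     # fixed name, not derived
    }


if __name__ == "__main__":
    for role, name in loongcollector_resources("my-cluster-123").items():
        print(f"{role}: {name}")
```

With the cluster ID my-cluster-123, the helper yields the same example names shown in the table.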

Verify the installation

- Log on to the Simple Log Service console.
- In the Projects section, confirm that the project k8s-log-${your_k8s_cluster_id} appears.
- In the project, go to Machine Groups and check that the machine group k8s-group-${your_k8s_cluster_id} shows a Running heartbeat status. A Running heartbeat confirms that loongcollector-ds is active on the nodes and ready to collect logs. If the status is not yet Running, refresh the page; the agent may take a short time to register after installation.

Install Logtail

Install Logtail in an existing cluster

- Log on to the ACK console. In the left-side navigation pane, click Clusters.
- On the Clusters page, click the cluster you want to manage. In the left-side navigation pane, click Add-ons.
- On the Logs and Monitoring tab, find the logtail-ds component and click Install.

Install Logtail when creating a cluster

-

Log on to the ACK console. In the left-side navigation pane, click Clusters.

-

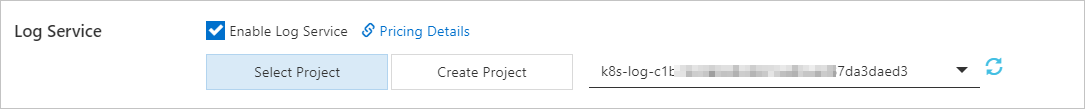

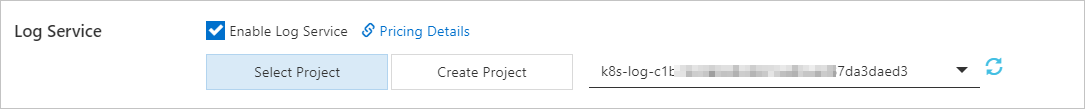

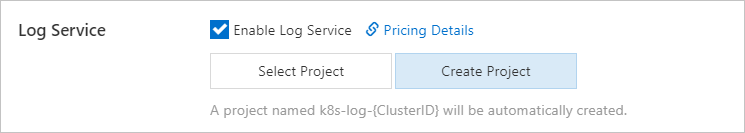

On the Clusters page, click Create Kubernetes Cluster. In the Component Configurations step, select Enable Log Service. The wizard prompts you to associate an SLS project. Choose one of the following:

Important

In the Component Configurations step, Control Plane Component Logs is enabled by default. When enabled, the system automatically collects logs from control plane components and charges you under the pay-as-you-go billing method. Disable this option if you do not need control plane logs to avoid unexpected charges. For details, see Collect logs of control plane components in ACK managed clusters.

After installation, SLS automatically creates a project named k8s-log-<YOUR_CLUSTER_ID> and the following resources:

| Resource type | Resource name | Purpose |
| --- | --- | --- |
| Machine group | k8s-group-<YOUR_CLUSTER_ID> | Used by logtail-daemonset for log collection. Example: k8s-group-my-cluster-123 |
| Machine group | k8s-group-<YOUR_CLUSTER_ID>-statefulset | Used by logtail-statefulset for metric collection. Example: k8s-group-my-cluster-123-statefulset |
| Machine group | k8s-group-<YOUR_CLUSTER_ID>-singleton | Used to create per-instance Logtail configurations. Example: k8s-group-my-cluster-123-singleton |
| Logstore | config-operation-log | Stores logs of the alibaba-log-controller component. Do not create a Logtail configuration for this Logstore. You can delete it; after deletion, the system stops collecting alibaba-log-controller operational logs. Charged at the standard Logstore rate. See Billable items for the pay-by-ingested-data mode. |

Step 2: Create a collection configuration

Choose one of four methods to create a collection configuration. Use only one method per configuration to avoid conflicts.

Important

For configurations created through a Custom Resource (CR), always update the CR to modify the configuration. Changes made directly in the SLS console are not synced back to the CR.

Choose a configuration method

| Method | Best for | Limitations |
| --- | --- | --- |
| CRD - AliyunPipelineConfig (recommended) | Complex collection and processing; version consistency between the configuration and the LoongCollector container | Requires logtail-ds version > 1.8.10 when used with Logtail. No version requirement for LoongCollector. |
| CRD - AliyunLogConfig | Clusters already using this CRD. Migrate to AliyunPipelineConfig for better extensibility. See CRDs for differences. | Old CRD version; limited extensibility |
| Simple Log Service console | Quick setup without accessing the Kubernetes cluster | Cannot batch-create configurations; advanced features unavailable |
| Environment variables | Simple single-line log collection configured directly in pod specs | Single-line logs only; not suitable for edge computing scenarios |

When using CRD - AliyunPipelineConfig with logtail-ds, the installed version must be later than 1.8.10. See Upgrade the latest version of Logtail for upgrade steps. LoongCollector has no version requirement.

Collect text logs

Text logs are written by applications to files in specified directories inside containers. They are well-suited for long-term analysis and troubleshooting.

CRD - AliyunPipelineConfig (recommended)

Creating a CR from the AliyunPipelineConfig CRD automatically activates the LoongCollector configuration.

- Log on to the ACK console.
- On the Clusters page, click the target cluster. In the left-side navigation pane, choose Workloads > Custom Resources.
- On the Custom Resources page, click the CRDs tab, then click Create from YAML.
- Modify the following YAML and click Create. The example collects multi-line text logs from test.LOG under /data/logs/app_1/ for pods in the default namespace whose app label matches ^(.*app.*)$, and sends them to the k8s-file Logstore in k8s-log-<YOUR_CLUSTER_ID>. For the full list of config parameters (inputs, outputs, processing plug-ins, and container filters), see PipelineConfig and CR parameters. Replace the following placeholders before applying:
  - project.name: Your project name, for example, k8s-log-<YOUR_CLUSTER_ID>. Find the project name in the SLS console after installation.
  - IncludeK8sLabel: Pod label filter. app: ^(.*app.*)$ collects pods whose app label contains app. To collect all containers whose names contain test, replace IncludeK8sLabel with K8sContainerRegex: ^(.*test.*)$.
  - FilePaths: Log file path inside the container. See File path mapping for containers.
  - Endpoint and Region: Your region endpoint and ID, for example, cn-hangzhou.log.aliyuncs.com and cn-hangzhou.

    apiVersion: telemetry.alibabacloud.com/v1alpha1
    kind: ClusterAliyunPipelineConfig
    metadata:
      # The resource name must be unique in the cluster. It becomes the LoongCollector configuration name.
      name: example-k8s-file
    spec:
      project:
        name: k8s-log-<YOUR_CLUSTER_ID>
      logstores:
        - name: k8s-file
      config:
        # Sample log (optional)
        sample: |
          2024-06-19 16:35:00 INFO test log
          line-1
          line-2
          end
        inputs:
          # input_file collects multi-line text logs from containers.
          - Type: input_file
            FilePaths:
              - /data/logs/app_1/**/test.LOG
            EnableContainerDiscovery: true
            CollectingContainersMeta: true
            ContainerFilters:
              # Filter by namespace (supports regular expressions)
              K8sNamespaceRegex: default
              # Filter by pod label (supports regular expressions)
              IncludeK8sLabel:
                app: ^(.*app.*)$
            # Remove the Multiline block to collect single-line logs.
            Multiline:
              Mode: custom
              # Regular expression matching the first line of each log entry
              StartPattern: '\d+-\d+-\d+\s\d+:\d+:\d+'
        processors:
          # Parse logs using a regular expression.
          - Type: processor_parse_regex_native
            SourceKey: content
            Regex: (\d+-\d+-\d+\s\S+)(.*)
            Keys: ["time", "detail"]
        flushers:
          # Send logs to the specified Logstore.
          - Type: flusher_sls
            Logstore: k8s-file
            Endpoint: cn-beijing.log.aliyuncs.com
            Region: cn-beijing
            TelemetryType: logs
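
To see what the Multiline and processor settings in the example are meant to do, the following Python sketch replays them on the sample log. It is an approximation only: it uses Python's re module rather than the agent's own regex engine, it assumes the parse regex must match the whole entry with the dot also matching newlines, and the helper names are hypothetical.

```python
import re

# Patterns copied from the StartPattern and Regex fields of the example.
START_PATTERN = re.compile(r"\d+-\d+-\d+\s\d+:\d+:\d+")
PARSE_REGEX = re.compile(r"(\d+-\d+-\d+\s\S+)(.*)", re.DOTALL)


def group_entries(lines):
    """Start a new entry at each line that begins with START_PATTERN;
    append every other line to the entry before it."""
    entries = []
    for line in lines:
        if START_PATTERN.match(line) or not entries:
            entries.append(line)
        else:
            entries[-1] += "\n" + line
    return entries


def parse_entry(entry):
    """Split one entry into the "time" and "detail" keys, or return None."""
    match = PARSE_REGEX.fullmatch(entry)
    if match is None:
        return None
    return {"time": match.group(1), "detail": match.group(2)}


if __name__ == "__main__":
    raw = [
        "2024-06-19 16:35:00 INFO test log",
        "line-1",
        "line-2",
        "end",
        "2024-06-19 16:35:10 ERROR second entry",  # hypothetical extra line
    ]
    for entry in group_entries(raw):
        print(parse_entry(entry))
```

The four sample lines collapse into one entry whose time field is 2024-06-19 16:35:00, and the continuation lines stay inside detail.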

CRD - AliyunLogConfig

Creating a CR from the AliyunLogConfig CRD automatically activates the LoongCollector configuration.

- Log on to the ACK console.
- On the Clusters page, click the target cluster. In the left-side navigation pane, choose Workloads > Custom Resources.
- On the Custom Resources page, click the CRDs tab, then click Create from YAML.
- Modify the following YAML and click Create. The example creates a configuration named example-k8s-file that collects test.LOG from /data/logs/app_1/ for all containers in pods whose names start with app, and sends the data to the k8s-file Logstore in k8s-log-<YOUR_CLUSTER_ID>. For the full list of logtailConfig parameters, see AliyunLogConfigDetail and CR parameters. Replace the following placeholders:
  - logPath: The log file directory, for example, /data/logs/app_1.
  - filePattern: The log file name. Wildcards (*, ?) are supported, for example, log_*.log.

    apiVersion: log.alibabacloud.com/v1alpha1
    kind: AliyunLogConfig
    metadata:
      # The resource name must be unique in the cluster.
      name: example-k8s-file
      namespace: kube-system
    spec:
      # If left blank, the project k8s-log-<your_cluster_id> is used.
      # project: k8s-log-test
      # Logstore name. Created automatically if it doesn't exist.
      logstore: k8s-file
      logtailConfig:
        # Set to "file" to collect text logs.
        inputType: file
        configName: example-k8s-file
        inputDetail:
          logType: common_reg_log
          logPath: /data/logs/app_1
          filePattern: test.LOG
          # Set to true to collect from containers.
          dockerFile: true
          # Remove logBeginRegex to collect single-line logs.
          logBeginRegex: \d+-\d+-\d+.*
          advanced:
            k8s:
              K8sPodRegex: '^(app.*)$'
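
The * and ? wildcards accepted by filePattern behave like ordinary shell-style globs. As a rough illustration, Python's fnmatch module can preview which file names a pattern would select. This assumes the agent's matching is case-sensitive and agrees with fnmatch for these two wildcards; the file names and the helper are made up for the example.

```python
from fnmatch import fnmatchcase


def matching_files(names, pattern):
    """Return the names selected by a case-sensitive glob pattern."""
    return [name for name in names if fnmatchcase(name, pattern)]


if __name__ == "__main__":
    # Hypothetical file names in the logPath directory.
    names = ["test.LOG", "test.log", "log_1.log", "log_app.log", "log.log"]
    print(matching_files(names, "test.LOG"))
    print(matching_files(names, "log_*.log"))
    print(matching_files(names, "log_?.log"))
```

Note that log.log is not selected by log_*.log, because the underscore in the pattern is a literal character.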

Simple Log Service console

This method does not require access to the Kubernetes cluster, but cannot batch-create configurations.

-

Log on to the Simple Log Service console.

-

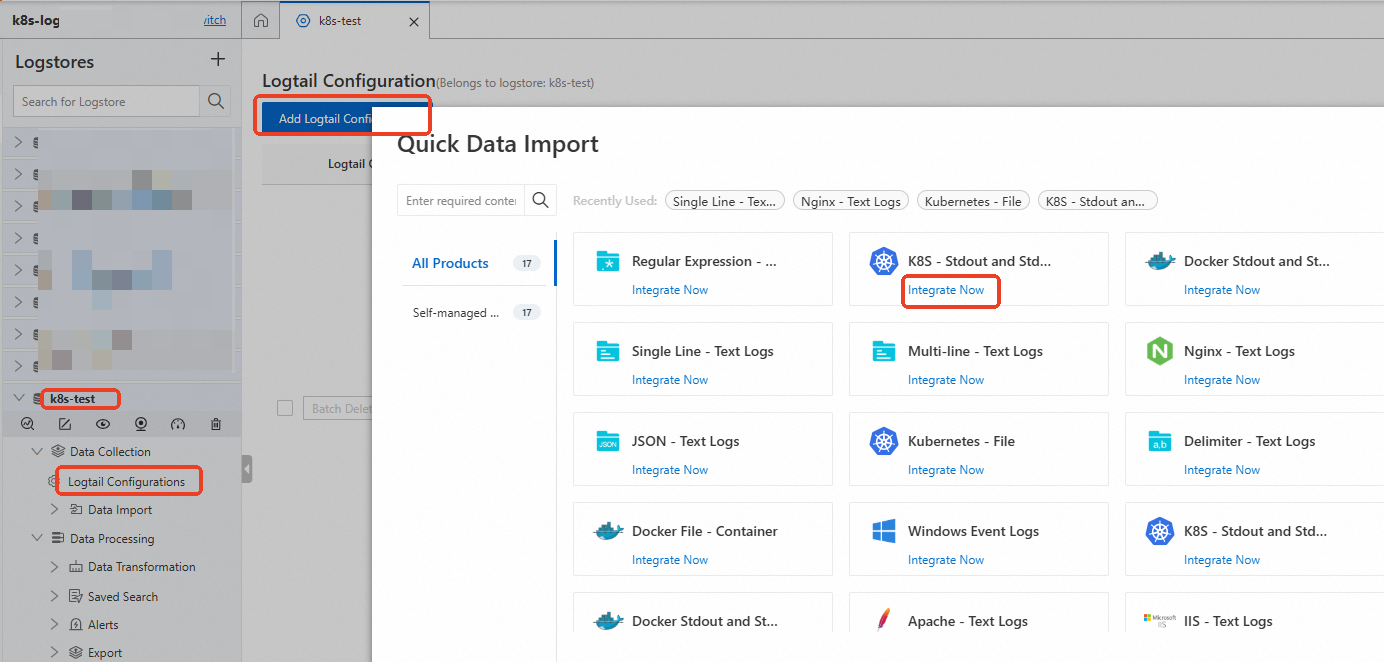

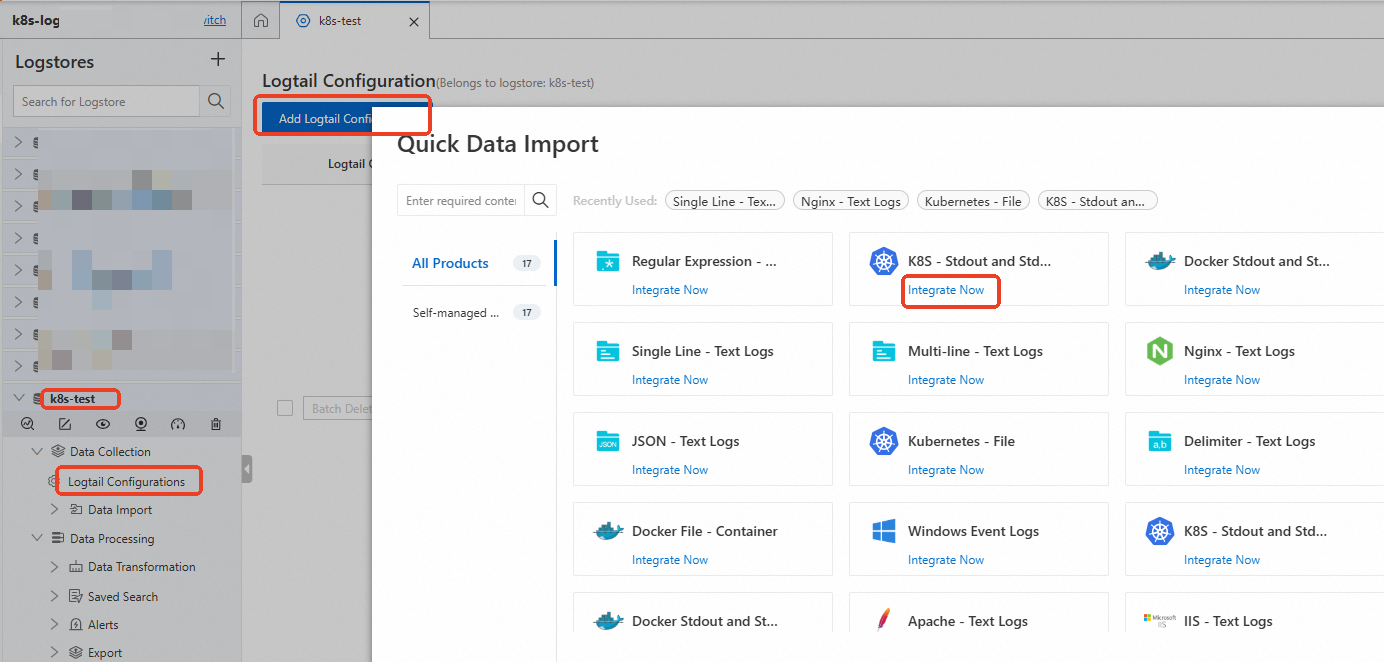

In the Projects section, click your project (for example, k8s-log-<YOUR_CLUSTER_ID>). Click the target Logstore, then click Logtail Configurations > Add Logtail Configuration. In the Quick Data Import dialog box, find the Kubernetes - File card and click Integrate Now.

-

In the Machine Group Configurations step, set Scenario to Kubernetes Clusters and Deployment Method to ACK Daemonset. Select k8s-group-${your_k8s_cluster_id} under Source Machine Group and click > to move it to Applied Server Groups. Click Next.

-

In the Logtail Configuration step, configure the required parameters and click Next. The configuration takes about one minute to take effect. For the full parameter list, see Create a Logtail configuration.

-

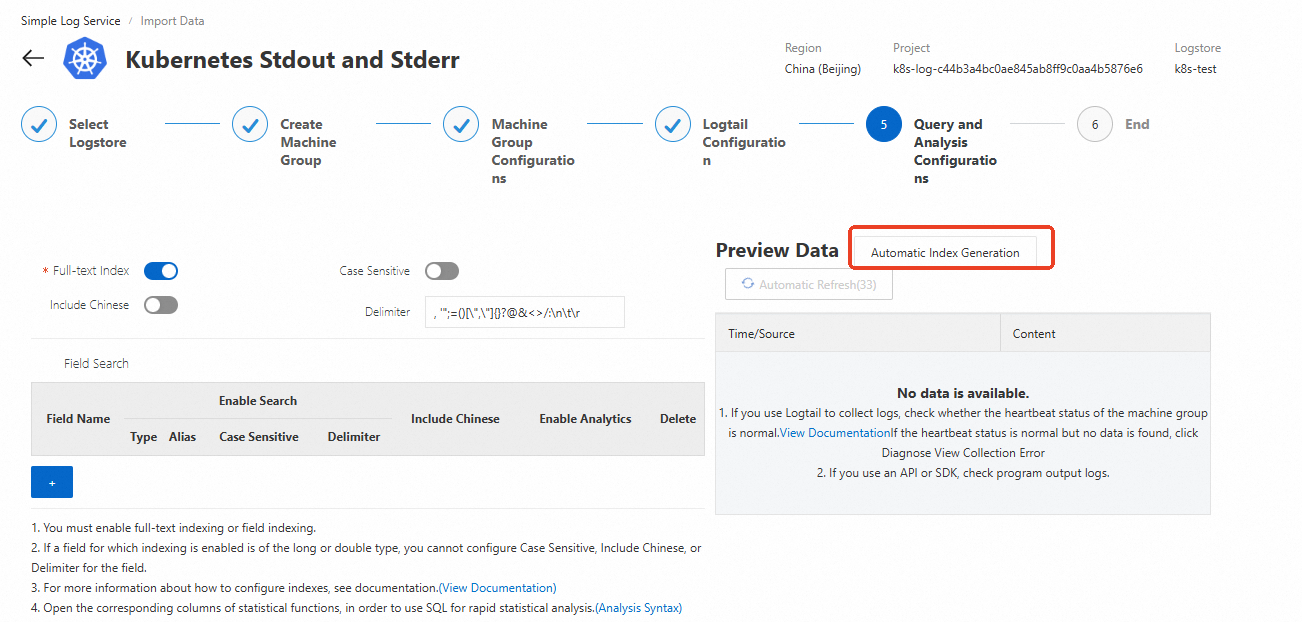

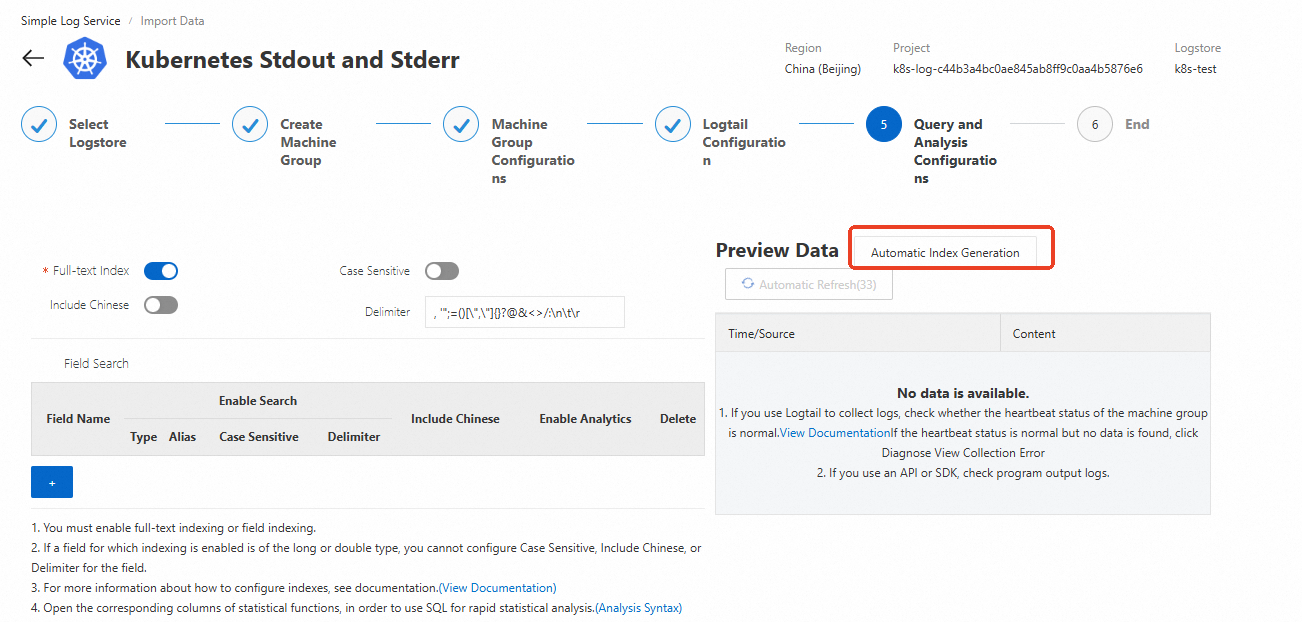

Create indexes and preview data. By default, SLS enables full-text indexing so all log fields are queryable. Alternatively, click Automatic Index Generation to create field indexes for more targeted queries at lower cost. For details, see Create indexes.

Environment variables

This method supports single-line text logs only. For multi-line logs or other formats, use one of the CRD or console methods above. Environment variables cannot be used in edge computing scenarios.

Configure via the ACK console

-

Log on to the ACK console. In the left-side navigation pane, click Clusters.

-

On the Clusters page, click the target cluster. In the left-side navigation pane, choose Workloads > Deployments.

-

On the Deployments page, click Create From Image.

-

On the Application Basic Information tab, set the application name and click Next. On the Container Configuration page, set the image name. For settings unrelated to SLS, see Create a stateless workload (Deployment).

-

In the Log section:

-

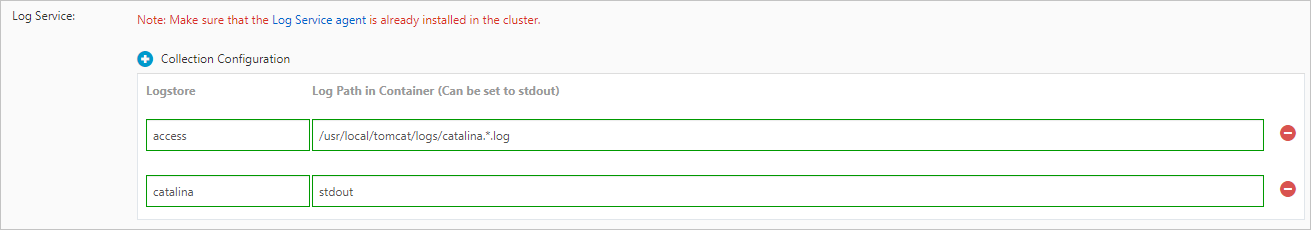

Click Collection Configuration to create a Logtail configuration. Each configuration requires:

-

Logstore: The Logstore where collected logs are stored. If the Logstore does not exist, ACK creates it automatically in the project associated with your cluster. > Note: The default log retention period is 90 days.

-

Log Path in Container: The path from which to collect logs, for example, /usr/local/tomcat/logs/catalina.*.log.

-

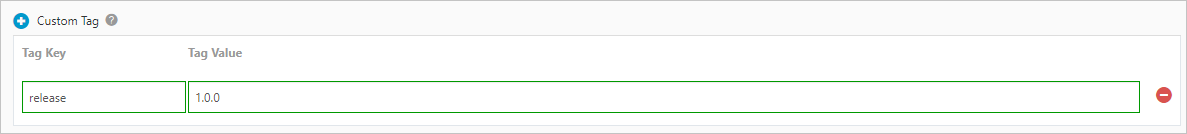

(Optional) Click Custom Tag to add key-value tags to collected logs, for example, a version number.

Configure via YAML

- Log on to the ACK console. In the left-side navigation pane, click Clusters.
- On the Clusters page, click the target cluster. In the left-side navigation pane, choose Workloads > Deployments.
- On the Deployments page, select a namespace and click Create From YAML in the upper-right corner.
- Configure the YAML. Use the env field to add collection configurations and custom tags, then add corresponding volumeMounts and volumes. All collection-related environment variables use the aliyun_logs_ prefix.
  - Collection configuration format: aliyun_logs_{key}, where {key} uses only lowercase letters, digits, and hyphens. The value is the log file path inside the container. If aliyun_logs_{key}_logstore is not set, a Logstore named {key} is created automatically.
  - Custom tag format: aliyun_logs_{key}_tags with value {tag-key}={tag-value}. The tag name {key} must not contain underscores.
  - If the collection path is not stdout, add a corresponding volumeMounts entry.

    apiVersion: v1
    kind: Pod
    metadata:
      name: my-demo
    spec:
      containers:
        - name: my-demo-app
          image: 'registry.cn-hangzhou.aliyuncs.com/log-service/docker-log-test:latest'
          env:
            # Collection configuration: collect /var/log/*.log to the log-varlog Logstore
            - name: aliyun_logs_log-varlog
              value: /var/log/*.log
            # Custom tag: append tag1=v1 to all collected logs
            - name: aliyun_logs_mytag1_tags
              value: tag1=v1
          volumeMounts:
            - name: volumn-sls-mydemo
              mountPath: /var/log
          command: ["sh", "-c"]
          args: ["sleep 3600"]
      volumes:
        - name: volumn-sls-mydemo
          emptyDir: {}

- Click Create to apply the configuration.

Advanced environment variable parameters

| Variable | Required | Default | Description |
| --- | --- | --- | --- |
| aliyun_logs_{key} | Yes | None | Log path inside the container, or stdout to collect standard output. {key} uses lowercase letters, digits, and hyphens only. |
| aliyun_logs_{key}_tags | No | None | Custom tags in {tag-key}={tag-value} format. |
| aliyun_logs_{key}_project | No | Project selected at install | A different SLS project. Must be in the same region as LoongCollector. |
| aliyun_logs_{key}_logstore | No | {key} | Logstore name. |
| aliyun_logs_{key}_shard | No | 2 | Number of shards (1–10). No effect if the Logstore already exists. |
| aliyun_logs_{key}_ttl | No | 90 days | Log retention period in days (1–3650). Set to 3650 for permanent retention. No effect if the Logstore already exists. |
| aliyun_logs_{key}_machinegroup | No | Default LoongCollector machine group | Machine group name. See Collect container logs from ACK clusters. |
| aliyun_logs_{key}_logstoremode | No | standard | Logstore type: standard or query. Requires logtail-ds image version >= 1.3.1. No effect if the Logstore already exists. |
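
When pod specs are generated by a script, it can help to validate these variables before they reach the cluster. The sketch below is a hypothetical helper, not part of any SLS tooling: it builds env entries for one {key} and enforces only the constraints stated in the table (key character set, shard range, TTL range).

```python
import re

# {key} may contain lowercase letters, digits, and hyphens only.
KEY_RE = re.compile(r"[a-z0-9-]+")


def build_env(key, path, logstore=None, shard=None, ttl=None):
    """Return a list of env entries (name/value dicts) for one collection key."""
    if not KEY_RE.fullmatch(key):
        raise ValueError(f"invalid key {key!r}: use lowercase letters, digits, hyphens")
    if shard is not None and not 1 <= shard <= 10:
        raise ValueError("shard must be between 1 and 10")
    if ttl is not None and not 1 <= ttl <= 3650:
        raise ValueError("ttl must be between 1 and 3650 days")

    prefix = f"aliyun_logs_{key}"
    env = [{"name": prefix, "value": path}]  # path is a file path or "stdout"
    if logstore is not None:
        env.append({"name": f"{prefix}_logstore", "value": logstore})
    if shard is not None:
        env.append({"name": f"{prefix}_shard", "value": str(shard)})
    if ttl is not None:
        env.append({"name": f"{prefix}_ttl", "value": str(ttl)})
    return env


if __name__ == "__main__":
    for entry in build_env("log-varlog", "/var/log/*.log", shard=2, ttl=90):
        print(entry)
```

Values are emitted as strings because Kubernetes env values are strings; the remaining variables in the table could be added in the same way.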

Logstore types

| Type | SQL analysis | Best for |
| --- | --- | --- |
| standard | Yes | Real-time monitoring, interactive analysis, and comprehensive observability |
| query | No | Large data volumes, long retention periods (weeks or months), no log analysis needed. Index traffic fee is about half that of standard. |

Custom scenarios

Collect from multiple applications to the same Logstore

Set aliyun_logs_{key}_logstore to the same Logstore name for all applications. Each application uses a unique {key}.

Application 1:

    - name: aliyun_logs_app1-stdout
      value: stdout
    - name: aliyun_logs_app1-stdout_logstore
      value: stdout-logstore

Application 2:

    - name: aliyun_logs_app2-stdout
      value: stdout
    - name: aliyun_logs_app2-stdout_logstore
      value: stdout-logstore

Collect from different applications to different projects

- In each target project, create a machine group with the custom identifier k8s-group-{cluster-id}, where {cluster-id} is your cluster ID.
- For each application, set the project, Logstore, and machine group environment variables.

Application 1:

    - name: aliyun_logs_app1-stdout
      value: stdout
    - name: aliyun_logs_app1-stdout_project
      value: app1-project
    - name: aliyun_logs_app1-stdout_logstore
      value: app1-logstore
    - name: aliyun_logs_app1-stdout_machinegroup
      value: app1-machine-group

Application 2:

    - name: aliyun_logs_app2-stdout
      value: stdout
    - name: aliyun_logs_app2-stdout_project
      value: app2-project
    - name: aliyun_logs_app2-stdout_logstore
      value: app2-logstore
    - name: aliyun_logs_app2-stdout_machinegroup
      value: app1-machine-group

Collect stdout

stdout is the real-time output written by applications running inside containers, including both stdout and stderr. It is best suited for program debugging and quick troubleshooting.

The same four configuration methods apply. See the method-specific instructions below.

CRD - AliyunPipelineConfig (recommended)

- Log on to the ACK console.
- On the Clusters page, click the target cluster. In the left-side navigation pane, choose Workloads > Custom Resources.
- On the Custom Resources page, click the CRDs tab, then click Create from YAML.
- Modify the following YAML and click Create. The example captures stdout and stderr in multi-line mode from pods in the default namespace whose app label matches ^(.*test.*)$, and sends them to the k8s-stdout Logstore in k8s-log-<YOUR_CLUSTER_ID>. For a complete list of parameters, see PipelineConfig and CR parameters. To generate a YAML template for your scenario, use the Logtail configuration generator. Replace the following placeholders:
  - project.name: Your project name, for example, k8s-log-<YOUR_CLUSTER_ID>.
  - IncludeK8sLabel: Pod label filter. app: ^(.*test.*)$ collects pods whose app label contains test.
  - Endpoint and Region: For example, ap-southeast-1.log.aliyuncs.com and ap-southeast-1.

    apiVersion: telemetry.alibabacloud.com/v1alpha1
    kind: ClusterAliyunPipelineConfig
    metadata:
      # The resource name must be unique in the cluster. It becomes the LoongCollector configuration name.
      name: example-k8s-stdout
    spec:
      project:
        name: k8s-log-<YOUR_CLUSTER_ID>
      logstores:
        - name: k8s-stdout
      config:
        # Sample log (optional)
        sample: |
          2024-06-19 16:35:00 INFO test log
          line-1
          line-2
          end
        inputs:
          # service_docker_stdout collects stdout and stderr from containers.
          - Type: service_docker_stdout
            Stdout: true
            Stderr: true
            # Filter by namespace (supports regular expressions)
            K8sNamespaceRegex: "^(default)$"
            CollectContainersFlag: true
            # Filter by pod label (supports regular expressions)
            IncludeK8sLabel:
              app: ^(.*test.*)$
            # Regular expression for the first line of a multi-line log entry.
            # Remove this line to collect single-line logs.
            BeginLineRegex: \d+-\d+-\d+.*
        flushers:
          - Type: flusher_sls
            Logstore: k8s-stdout
            Endpoint: ap-southeast-1.log.aliyuncs.com
            Region: ap-southeast-1
            TelemetryType: logs
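
To reason about which pods the K8sNamespaceRegex and IncludeK8sLabel filters in this example would select, the following Python sketch models them over a hypothetical pod list. It rests on two assumptions that should be checked against the CR parameter reference: a label value that starts with ^ and ends with $ is treated as a regular expression (otherwise it is compared literally), and a pod qualifies if any listed label matches.

```python
import re


def label_matches(expected, actual):
    """Compare one label value, treating ^...$ values as regular expressions."""
    if actual is None:
        return False
    if expected.startswith("^") and expected.endswith("$"):
        return re.search(expected, actual) is not None
    return expected == actual


def is_selected(pod, namespace_regex, include_labels):
    """Return True if the pod passes the namespace and label filters."""
    if re.search(namespace_regex, pod["namespace"]) is None:
        return False
    labels = pod.get("labels", {})
    return any(label_matches(value, labels.get(key))
               for key, value in include_labels.items())


if __name__ == "__main__":
    # Hypothetical pods; only the filter values come from the example.
    pods = [
        {"name": "a", "namespace": "default", "labels": {"app": "my-test-svc"}},
        {"name": "b", "namespace": "default", "labels": {"app": "web"}},
        {"name": "c", "namespace": "kube-system", "labels": {"app": "test"}},
    ]
    for pod in pods:
        print(pod["name"], is_selected(pod, "^(default)$", {"app": "^(.*test.*)$"}))
```

Only the first pod passes both filters: the second fails the label check and the third fails the namespace check.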

CRD - AliyunLogConfig

- Log on to the ACK console.
- On the Clusters page, click the target cluster. In the left-side navigation pane, choose Workloads > Custom Resources.
- On the Custom Resources page, click the CRDs tab, then click Create from YAML.
- Modify the following YAML and click Create. The example creates a configuration named simple-stdout-example that collects stdout and stderr in multi-line mode from all containers whose names start with app, and sends the data to the k8s-stdout Logstore in k8s-log-<YOUR_CLUSTER_ID>. For the full list of logtailConfig parameters, see AliyunLogConfigDetail and CR parameters.

    apiVersion: log.alibabacloud.com/v1alpha1
    kind: AliyunLogConfig
    metadata:
      # The resource name must be unique in the cluster.
      name: simple-stdout-example
    spec:
      # Optional. Defaults to k8s-log-<your_cluster_id>.
      # project: k8s-log-test
      logstore: k8s-stdout
      logtailConfig:
        # Set to "plugin" to collect stdout.
        inputType: plugin
        configName: simple-stdout-example
        inputDetail:
          plugin:
            inputs:
              - type: service_docker_stdout
                detail:
                  Stdout: true
                  Stderr: true
                  # Filter by namespace (supports regular expressions)
                  K8sNamespaceRegex: "^(default)$"
                  # Filter by container name (supports regular expressions)
                  K8sContainerRegex: "^(app.*)$"
                  # Regular expression for the first line of a multi-line log entry.
                  BeginLineRegex: \d+-\d+-\d+.*

Simple Log Service console

- Log on to the Simple Log Service console.
- In the Projects section, click your project (for example, k8s-log-<YOUR_CLUSTER_ID>). Click the target Logstore, then click Logtail Configurations > Add Logtail Configuration. Click Integrate Now under K8s - Stdout and Stderr - Old Version.
- Select Use Existing Machine Groups.
- On the Machine Group Configurations page, select k8s-group-${your_k8s_cluster_id} under ACK Daemonset in the Kubernetes Clusters scenario, add it to the applied machine group, then click Next.
- In the Logtail Configuration step, enter the configuration name in Global Configuration, configure the other required parameters, and click Next. The configuration takes about one minute to take effect. For the full parameter list, see Global Configurations.
- Create indexes and preview data. Full-text indexing is enabled by default. Click Automatic Index Generation to create field indexes for targeted queries at lower cost. For details, see Create indexes.

Environment variables

This method supports single-line logs only. For multi-line logs, use one of the CRD methods above.

Configure via the ACK console

- Log on to the ACK console and click Clusters in the left-side navigation pane.
- On the Clusters page, click the target cluster. In the left-side navigation pane, choose Workloads > Deployments.
- On the Deployments page, select a namespace and click Create from Image.
- On the Basic Information page, set the application name and click Next. For settings unrelated to SLS, see Create a stateless workload (Deployment).
- In the Log section:
  - Click Collection Configuration. For Log Path in Container, set the value to stdout to collect standard output and error output. Note: The default log retention period for new Logstores is 90 days.
  - (Optional) Click Custom Tag to add key-value tags to collected logs.
- After configuring all settings, click Next to continue. See Create a stateless workload (Deployment) for subsequent steps.

Configure via YAML

- Log on to the ACK console. In the left-side navigation pane, click Clusters.
- On the Clusters page, click the target cluster. In the left-side navigation pane, choose Workloads > Deployments.
- On the Deployments page, select a namespace and click Create from YAML.
- Configure the YAML. Set aliyun_logs_{key} to stdout to collect standard output.

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      labels:
        app: deployment-stdout
      name: deployment-stdout
      namespace: default
    spec:
      replicas: 1
      selector:
        matchLabels:
          app: deployment-stdout
      template:
        metadata:
          labels:
            app: deployment-stdout
        spec:
          containers:
            - args:
                - >-
                  while true; do date '+%Y-%m-%d %H:%M:%S'; echo 1; echo 2; echo 3;
                  sleep 10; done
              command:
                - /bin/sh
                - '-c'
                - '--'
              env:
                # Collect stdout to the log-stdout Logstore
                - name: aliyun_logs_log-stdout
                  value: stdout
              image: 'mirrors-ssl.aliyuncs.com/busybox:latest'
              name: timestamp-test

- Click Create to apply the configuration.

For advanced environment variable parameters, see the Advanced environment variable parameters table in the text log section above. The parameters are the same for stdout collection.

Step 3: Query and analyze logs

- Log on to the Simple Log Service console.
- In the Projects section, click the project to open its details page.
- In the left-side navigation pane, click the icon of the target Logstore and select Search & Analysis to view logs collected from your Kubernetes cluster.


Default log fields

Each collected log record includes default fields. Use these fields in the SLS query editor to filter logs by pod, container, namespace, or file path.

Text log fields

| Field | Description | Common query uses |
| --- | --- | --- |
| __tag__:__hostname__ | Name of the container host | Filter logs from a specific node |
| __tag__:__path__ | Log file path inside the container | Distinguish logs from different file paths on the same pod |
| __tag__:_container_ip_ | IP address of the container | Correlate logs with network traffic |
| __tag__:_image_name_ | Name of the image used by the container. If multiple images share the same hash, one name is selected based on the hash and may differ from the name in your YAML. | Filter by image version |
| __tag__:_pod_name_ | Name of the pod | Isolate logs from a specific pod instance |
| __tag__:_namespace_ | Namespace of the pod | Scope queries to a namespace |
| __tag__:_pod_uid_ | Unique identifier (UID) of the pod | Trace logs across pod restarts |

stdout fields

| Field | Description | Common query uses |
| --- | --- | --- |
| _time_ | Time when the log was collected | Time-range filtering |
| _source_ | Log source type: stdout or stderr | Filter errors by querying _source_: stderr |
| _image_name_ | Name of the image | Filter by image version |
| _container_name_ | Name of the container | Isolate a specific container in a multi-container pod |
| _pod_name_ | Name of the pod | Isolate logs from a specific pod instance |
| _namespace_ | Namespace of the pod | Scope queries to a namespace |
| _pod_uid_ | Unique identifier of the pod | Trace logs across pod restarts |
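
The default fields can be combined into a single search statement. The helper below is a hypothetical convenience for composing such statements in a script. It assumes conditions are written as field: value and joined with the and operator, as in the _source_: stderr example above, and it does not quote or escape values, so values with spaces or special characters would need extra handling.

```python
def build_search(filters, text_log=False):
    """Join field filters into one search statement.

    filters:  mapping of default field name to the value to match.
    text_log: if True, prefix each field with __tag__: as in the text log table.
    """
    parts = []
    for field, value in filters.items():
        name = f"__tag__:{field}" if text_log else field
        parts.append(f"{name}: {value}")
    return " and ".join(parts)


if __name__ == "__main__":
    # stdout logs written to stderr in the default namespace
    print(build_search({"_source_": "stderr", "_namespace_": "default"}))
    # text logs from one pod (the pod name is a made-up example)
    print(build_search({"_pod_name_": "my-demo"}, text_log=True))
```

The first call produces _source_: stderr and _namespace_: default, which can be pasted into the query editor of the target Logstore.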

What's next

- Query and analyze logs: Use SLS query and analysis features to explore collected logs. See Query and analyze logs.
- Visualize logs: Build dashboards to visualize log trends. See Create a dashboard.
- Set up alerts: Get notified when log anomalies occur. See Configure alert rules.
- Collect historical logs: By default, only incremental logs are collected. To import historical logs, see Import historical logs from log files.
- Troubleshoot collection errors:
