A Deployment manages a set of identical pods and keeps the specified number running at all times. It is the standard workload type for stateless applications in Kubernetes—services that don't depend on persistent local state, such as web servers, API backends, and batch processors.

This topic shows how to create a Deployment in an ACK cluster using the console or kubectl.

Prerequisites

Before you begin, ensure that you have:

- An ACK cluster. See Workloads to understand workload concepts and considerations.
- Internet access for the cluster or its nodes, because the quick-start examples pull a public image:
  - (Recommended) Enable Internet access for the cluster. This creates an Internet NAT gateway for the VPC, giving all resources in the cluster outbound access.
  - Assign a static public IP address to each node where the workload runs.

Create a Deployment

Create a Deployment using the console

The steps below walk through the fastest path to a running Deployment. After you're comfortable with the basics, see Console configuration reference to customize the workload further.

- Configure basic information. Log in to the Container Service for Kubernetes console. In the left navigation pane, click Clusters. On the Clusters page, click the name of your cluster, then choose Workloads > Deployments in the left navigation pane. On the Deployments page, click Create from Image. On the Basic Information page, set the application name and other basic settings, then click Next to go to the Container page.

-

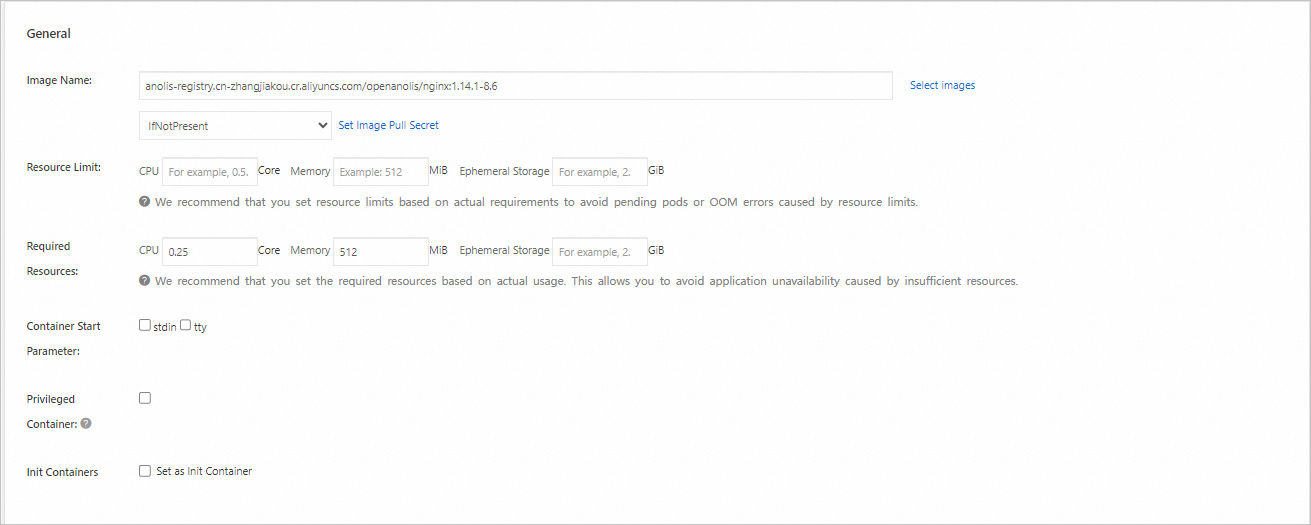

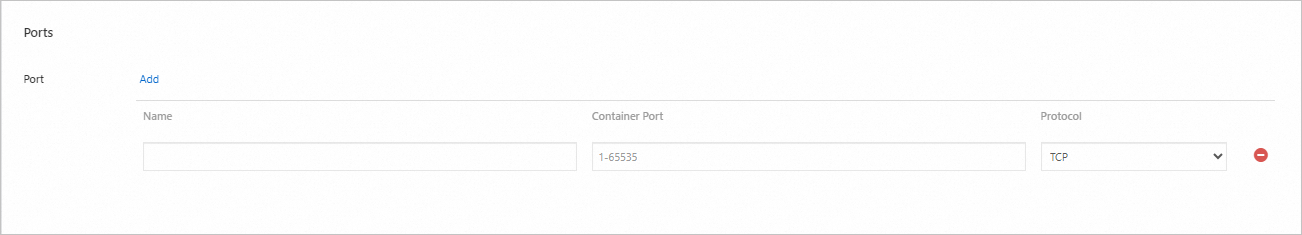

Configure the container. In the Container section, set Image Name to the following address and set Port to

80. Leave all other settings at their defaults, then click Next to go to the Advanced page.ImportantTo pull this image, the cluster must have Internet access. If you selected Configure SNAT for VPC when creating the cluster, Internet access is already enabled. Otherwise, enable it now.

anolis-registry.cn-zhangjiakou.cr.aliyuncs.com/openanolis/nginx:1.14.1-8.6

- Complete the advanced configuration. On the Advanced page, click Create on the right side of Services to create an SLB-type Service that exposes the workload over the Internet. Configure Scaling, Scheduling, and Labels and Annotations as needed, then click Create at the bottom of the page.

  Important: Creating an SLB-type Service provisions a Server Load Balancer (SLB) instance, which incurs pay-as-you-go charges. See Pay-as-you-go for pricing details. Release the SLB instance when you no longer need it.

-

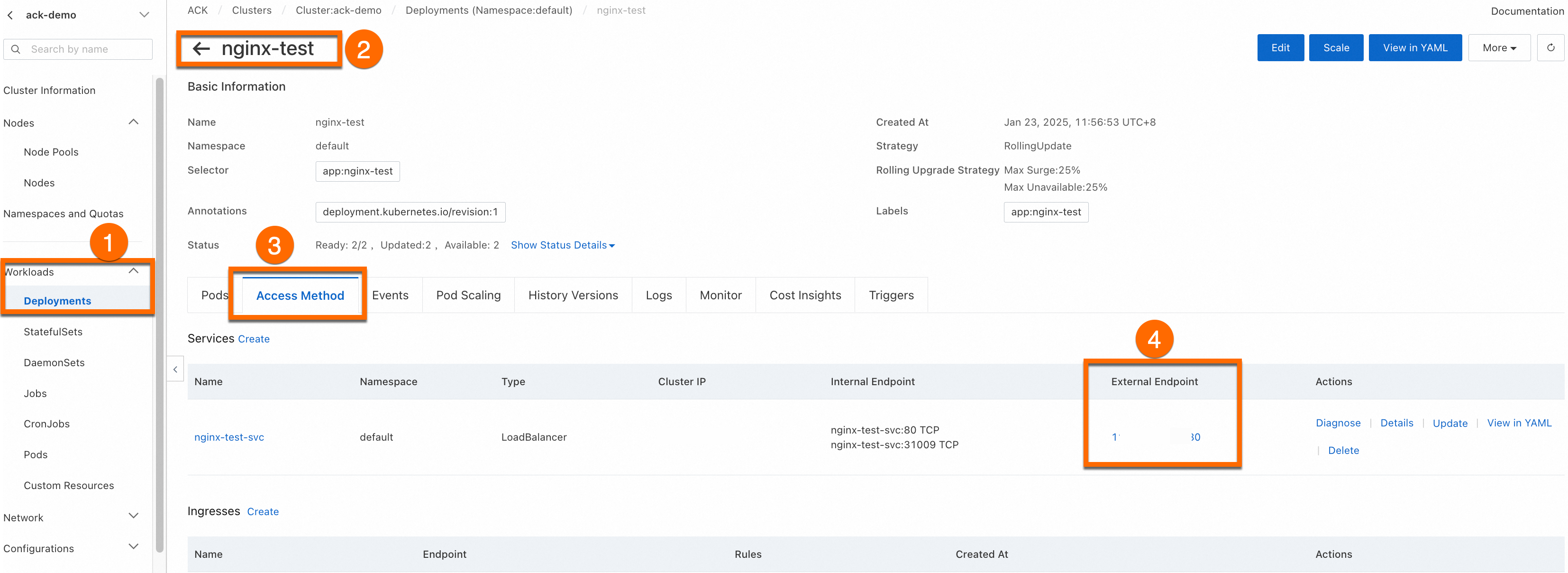

Access the application. On the Complete page, click View Details in the Creation Task Submitted panel. Click the Access Method tab, find the service named

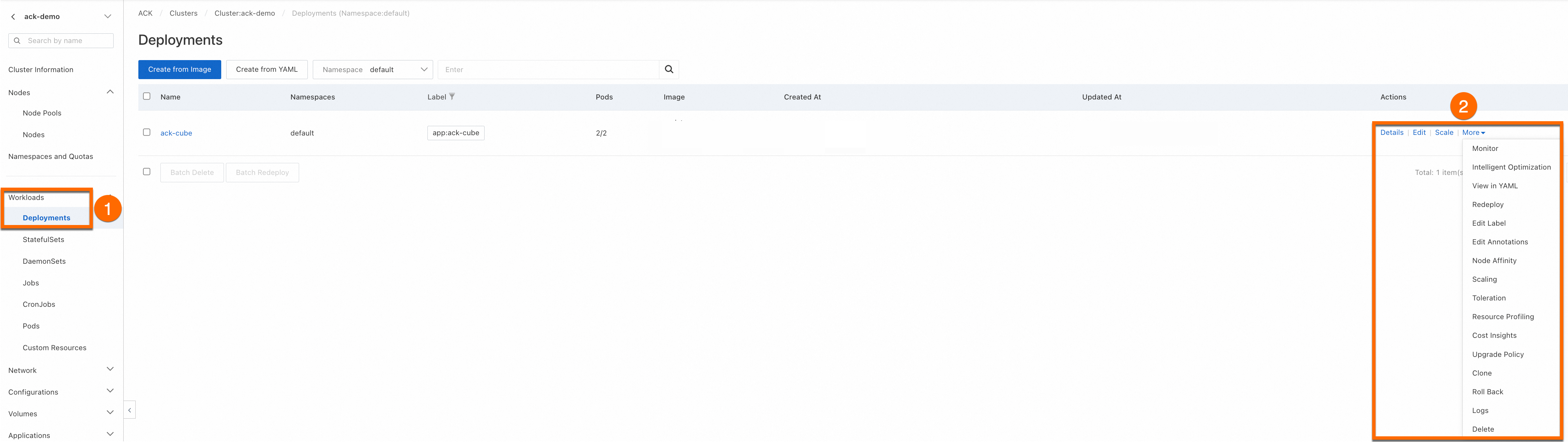

nginx-test-svc, and click the link in the External Endpoint column. From the Deployments page, you can View, Edit, and Redeploy the workload at any time.

Create a Deployment using kubectl

Connect to the cluster with kubectl before proceeding. See Obtain the kubeconfig file of a cluster and use kubectl to connect to the cluster.

-

Copy the following YAML content and save it as

deployment.yaml. It defines a Deployment with two replicas and a LoadBalancer service that exposes the Deployment on port 80.apiVersion: apps/v1 kind: Deployment # Workload type metadata: name: nginx-test namespace: default # Change the namespace as needed labels: app: nginx spec: replicas: 2 # Specify the number of pods selector: matchLabels: app: nginx template: # Pod configuration metadata: labels: # Pod labels app: nginx spec: containers: - name: nginx # Container name image: anolis-registry.cn-zhangjiakou.cr.aliyuncs.com/openanolis/nginx:1.14.1-8.6 # Use a specific version of the Nginx image ports: - containerPort: 80 # Port exposed by the container protocol: TCP # Specify the protocol as TCP or UDP. The default is TCP. --- # service apiVersion: v1 kind: Service metadata: name: nginx-test-svc namespace: default # Change the namespace as needed labels: app: nginx spec: selector: app: nginx # Match labels to ensure the service points to the correct pods ports: - port: 80 # Port provided by the service within the cluster targetPort: 80 # Points to the port listened to by the application inside the container (containerPort) protocol: TCP # Protocol. The default is TCP. type: LoadBalancer # Service type. The default is ClusterIP for internal access. -

Apply the manifest:

kubectl apply -f deployment.yamlExpected output:

deployment.apps/nginx-test created service/nginx-test-svc created -

Run the following command to view the public IP address of the service:

kubectl get svcExpected output:

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE kubernetes ClusterIP 172.16.**.*** <none> 443/TCP 4h47m nginx-test-svc LoadBalancer 172.16.**.*** 106.14.**.*** 80:31130/TCP 1h10m -

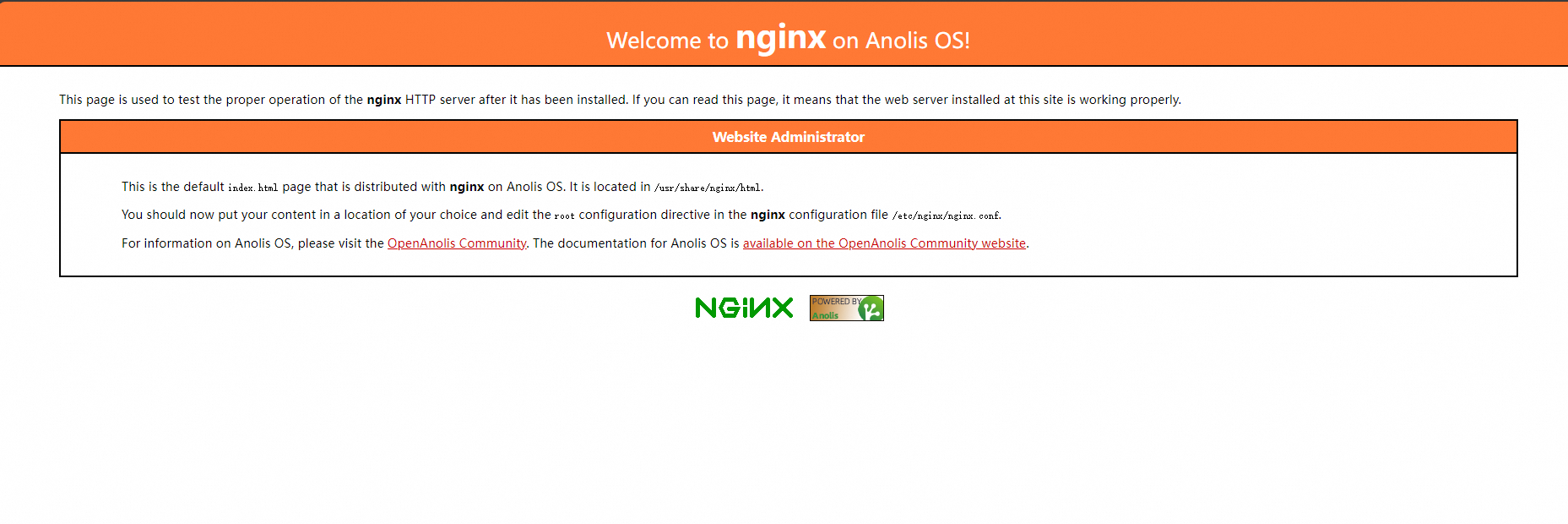

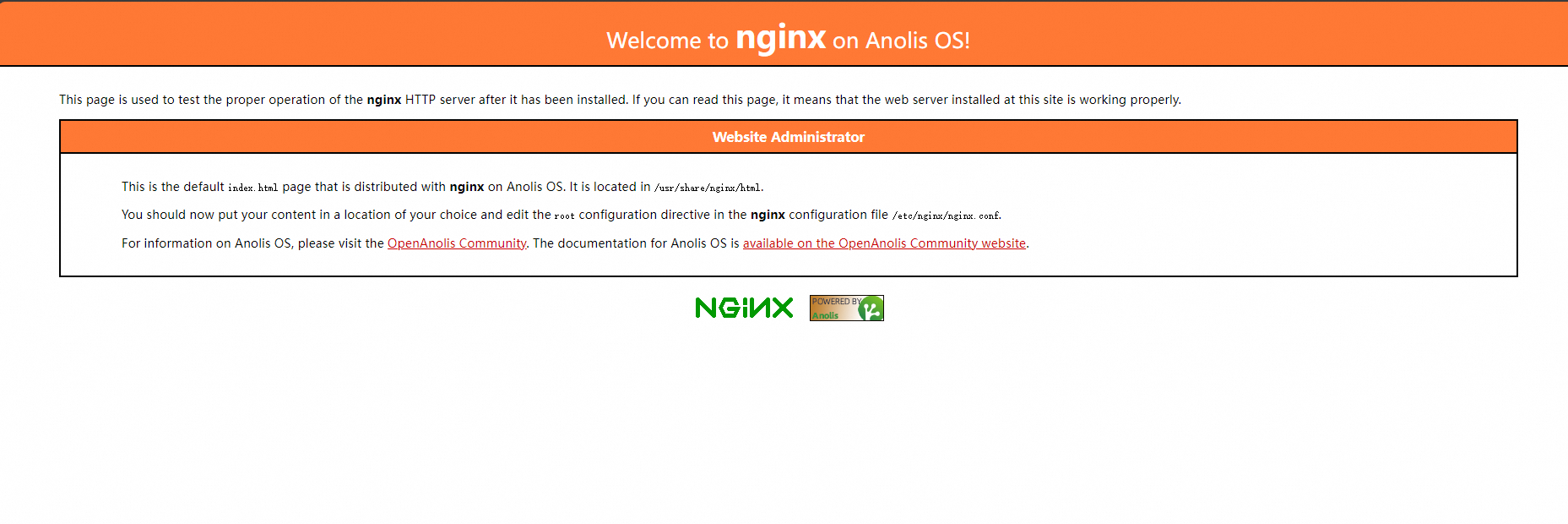

In a browser, enter the public IP address of Nginx (

106.14..*) to access the Nginx application.
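If public access is not needed, a ClusterIP Service avoids provisioning an SLB instance. The following is a minimal sketch of that variant, assuming it replaces the Service section of deployment.yaml. With this type the Service gets no external IP address, so it is reachable only from inside the cluster and the browser step above does not apply.

```yaml
# Sketch only: internal-only variant of the Service in deployment.yaml.
apiVersion: v1
kind: Service
metadata:
  name: nginx-test-svc
  namespace: default        # Change the namespace as needed
  labels:
    app: nginx
spec:
  selector:
    app: nginx              # Must match the pod labels of the Deployment
  ports:
    - port: 80              # Port exposed inside the cluster
      targetPort: 80        # containerPort of the nginx container
      protocol: TCP
  type: ClusterIP           # No load balancer is created for this type
```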

Console configuration reference

Basic information

| Configuration Item | Description |
| --- | --- |
| Name | The name of the workload. The names of the pods belonging to the workload are generated based on this name. |
| Namespace | The namespace to which the workload belongs. |
| Replicas | The number of pods in the workload. The default is 2. |
| Type | The type of the workload. To choose a workload type, see Create a workload. |
| Label | The labels of the workload. |
| Annotations | The annotations of the workload. |
| Synchronize Timezone | Specifies whether the container uses the same time zone as the node where it resides. |

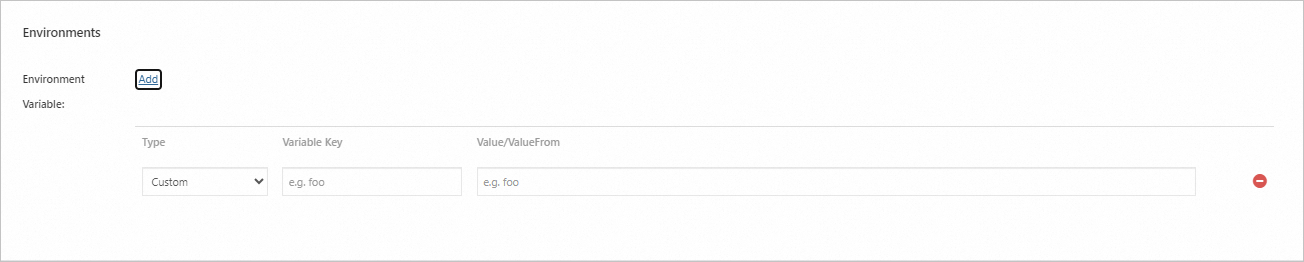

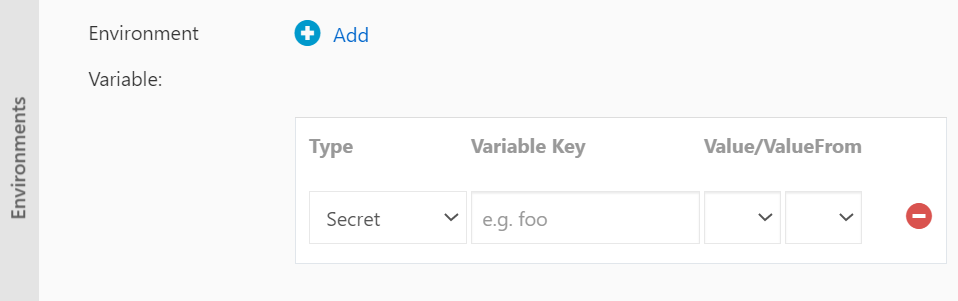

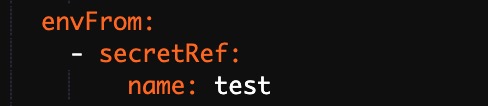

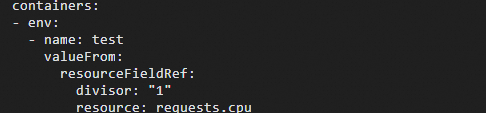

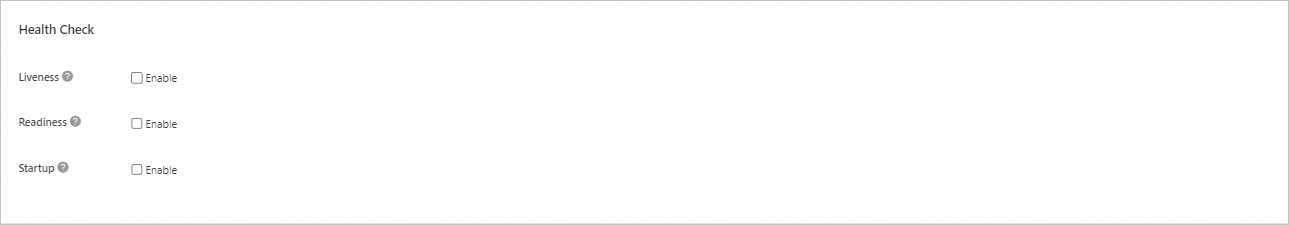

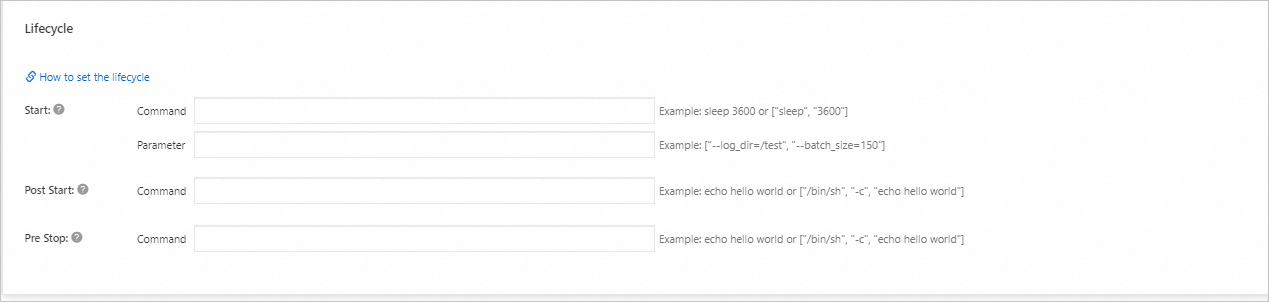

Container

Advanced configuration

| Configuration Card | Configuration Item | Description |
| --- | --- | --- |
| Access Control | Services | A service provides a fixed, unified Layer 4 (transport layer) entry point for a group of pods. It is a required resource for exposing a workload. Services support multiple types, including Cluster IP, Node Port, and SLB. Before configuring a service, see Service management to understand the basics of services. |
| Access Control | Ingresses | An Ingress provides a Layer 7 (application layer) entry point for multiple services in a cluster and forwards requests to different services based on domain name matching. Before using an Ingress, you need to install an Ingress controller. ACK provides several options for different scenarios. See Comparison of Nginx Ingress, ALB Ingress, and MSE Ingress to make a selection. |
| Scaling | HPA | Triggers autoscaling by monitoring the performance metrics of containers. Metrics-based scaling automatically adjusts the total resources used by a workload when the business load fluctuates, scaling out to handle high loads and scaling in to save resources during low loads. For more information, see Use Horizontal Pod Autoscaling (HPA). |
| Scaling | CronHPA | Triggers workload scaling at scheduled times. This is suitable for scenarios with periodic changes in business load, such as the cyclical traffic peaks on social media after lunch and dinner. For more information, see Use CronHPA for scheduled horizontal pod autoscaling. |
| Scheduling | Upgrade Method | The mechanism by which a workload replaces old pods with new ones when the pod configuration changes. |
| Scheduling | Affinity, anti-affinity, and toleration | These settings control scheduling so that pods run on specific nodes. Scheduling operations are complex and require advance planning based on your needs. For detailed operations, see Scheduling. |
| Labels and Annotations | Pod Labels | Adds a label to each pod belonging to this workload. Various resources in the cluster, including workloads and services, match with pods through labels. ACK also adds a default label to each pod. |
| Labels and Annotations | Pod Annotations | Adds an annotation to each pod belonging to this workload. Some features in ACK use annotations. You can edit them when using these features. |
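As a rough illustration of how the Scaling and Scheduling settings above map to manifest fields, the following sketch adds a rolling update strategy, a pod anti-affinity preference, and a toleration to the quick-start Deployment, and pairs it with an HPA. It is not the manifest the console generates. The HPA name, the taint key and value, and the replica and utilization numbers are hypothetical, and the sketch assumes a cluster that serves the autoscaling/v2 API (Kubernetes 1.23 or later) and has a metrics source for CPU.

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx-test
  namespace: default
  labels:
    app: nginx
spec:
  replicas: 2
  selector:
    matchLabels:
      app: nginx
  strategy:                        # Upgrade method
    type: RollingUpdate            # The alternative is Recreate
    rollingUpdate:
      maxSurge: 25%                # Extra pods allowed during an update (Kubernetes default)
      maxUnavailable: 25%          # Pods that may be unavailable during an update (Kubernetes default)
  template:
    metadata:
      labels:
        app: nginx
    spec:
      affinity:
        podAntiAffinity:           # Prefer placing replicas on different nodes
          preferredDuringSchedulingIgnoredDuringExecution:
            - weight: 100
              podAffinityTerm:
                labelSelector:
                  matchLabels:
                    app: nginx
                topologyKey: kubernetes.io/hostname
      tolerations:                 # Hypothetical taint; replace with one that exists on your nodes
        - key: "example.com/dedicated"
          operator: "Equal"
          value: "web"
          effect: "NoSchedule"
      containers:
        - name: nginx
          image: anolis-registry.cn-zhangjiakou.cr.aliyuncs.com/openanolis/nginx:1.14.1-8.6
          ports:
            - containerPort: 80
          resources:
            requests:
              cpu: "200m"          # CPU utilization for the HPA is measured against this request
---
apiVersion: autoscaling/v2
kind: HorizontalPodAutoscaler
metadata:
  name: nginx-test-hpa             # Hypothetical name
  namespace: default
spec:
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: nginx-test
  minReplicas: 2
  maxReplicas: 5                   # Illustrative upper bound
  metrics:
    - type: Resource
      resource:
        name: cpu
        target:
          type: Utilization
          averageUtilization: 70   # Illustrative target, in percent of the CPU request
```

The anti-affinity rule is expressed as a preference rather than a requirement so that pods can still be scheduled when the cluster has fewer nodes than replicas.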

Sample workload YAML

The following comprehensive YAML demonstrates common configuration options, including resource limits, health checks, environment variables from a ConfigMap, and an Ingress.

    apiVersion: apps/v1
    kind: Deployment # Workload type
    metadata:
      name: nginx-test
      namespace: default # Change the namespace as needed
      labels:
        app: nginx
    spec:
      replicas: 2 # Specify the number of pods
      selector:
        matchLabels:
          app: nginx
      template: # Pod configuration
        metadata:
          labels: # Pod labels
            app: nginx
          annotations: # Pod annotations
            description: "This is an application deployment"
        spec:
          containers:
          - name: nginx # Container name
            image: nginx:1.7.9 # Use a specific version of the Nginx image
            ports:
            - name: nginx # Port name
              containerPort: 80 # Port exposed by the container
              protocol: TCP # Specify the protocol as TCP or UDP. The default is TCP.
            command: ["/bin/sh"] # Container start command
            args: [ "-c", "echo $(SPECIAL_LEVEL_KEY) $(SPECIAL_TYPE_KEY) && exec nginx -g 'daemon off;'"] # Print the variables, then start nginx. Add an env entry for SPECIAL_TYPE_KEY if you keep that reference.
            stdin: true # Enable standard input
            tty: true # Allocate a virtual terminal
            env:
            - name: SPECIAL_LEVEL_KEY
              valueFrom:
                configMapKeyRef:
                  name: special-config # Name of the ConfigMap. It must already exist in this namespace.
                  key: SPECIAL_LEVEL # Key in the ConfigMap
            securityContext:
              privileged: true # true enables privileged mode, false disables it. The default is false.
            resources:
              limits:
                cpu: "500m" # Maximum CPU usage, 500 millicores
                memory: "256Mi" # Maximum memory usage, 256 MiB
                ephemeral-storage: "1Gi" # Maximum ephemeral storage usage, 1 GiB
              requests:
                cpu: "200m" # Minimum requested CPU usage, 200 millicores
                memory: "128Mi" # Minimum requested memory usage, 128 MiB
                ephemeral-storage: "500Mi" # Minimum requested ephemeral storage usage, 500 MiB
            livenessProbe: # Liveness probe configuration
              httpGet:
                path: /
                port: 80
              initialDelaySeconds: 30
              periodSeconds: 10
            readinessProbe: # Readiness probe configuration
              httpGet:
                path: /
                port: 80
              initialDelaySeconds: 5
              periodSeconds: 10
            volumeMounts:
            - name: tz-config
              mountPath: /etc/localtime
              readOnly: true
          volumes:
          - name: tz-config
            hostPath:
              path: /etc/localtime # Mount the host's /etc/localtime file to the same path in the container using the volumeMounts and volumes fields.
    ---
    # service
    apiVersion: v1
    kind: Service
    metadata:
      name: nginx-test-svc
      namespace: default # Change the namespace as needed
      labels:
        app: nginx
    spec:
      selector:
        app: nginx # Match labels to ensure the service points to the correct pods
      ports:
        - port: 80 # Port provided by the service within the cluster
          targetPort: 80 # Points to the port listened to by the application inside the container (containerPort)
          protocol: TCP # Protocol. The default is TCP.
      type: ClusterIP # Service type. The default is ClusterIP for internal access.
    ---
    # ingress
    apiVersion: networking.k8s.io/v1
    kind: Ingress
    metadata:
      name: nginx-ingress
      namespace: default # Change the namespace as needed
      annotations:
        kubernetes.io/ingress.class: "nginx" # Specify the Ingress controller type
        # If using Alibaba Cloud SLB Ingress controller, you can specify the following:
        # service.beta.kubernetes.io/alibaba-cloud-loadbalancer-id: "lb-xxxxxxxxxx"
        # service.beta.kubernetes.io/alibaba-cloud-loadbalancer-spec: "slb.spec.s1.small"
    spec:
      rules:
      - host: foo.bar.com # Replace with your domain name
        http:
          paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: nginx-test-svc # Backend service name. It must match the Service defined above.
                port:
                  number: 80 # Backend service port
      tls: # Optional, for enabling HTTPS
      - hosts:
        - foo.bar.com # Replace with your domain name
        secretName: tls-secret # TLS certificate Secret name

What's next

- For applications that require persistent storage, such as databases, use a StatefulSet instead. See Create a stateful workload (StatefulSet).
- If you encounter issues creating a workload, see Workload FAQ.
- If a pod is in an abnormal state, see Troubleshoot pod exceptions.