MaxCompute associates Alibaba Cloud Object Storage Service (OSS) with external tables to export data to OSS. However, due to the unique directory structure of MaxCompute external tables, you are unable to perform further analytics with tools other than MaxCompute. This limits the applicable scenarios for external tables. Therefore, Alibaba Cloud Data Lake Analytics (DLA) has recently provided the function for analyzing the data in MaxCompute external tables. With DLA's powerful ecosystem, you can easily analyze MaxCompute's external tables and join other data sources for data query.

This article uses a simple example to describe how to use this function.

Go to the MaxCompute workspace, create an ODPS SQL node, and run the following statement to create an OSS external table.

CREATE EXTERNAL TABLE parquet

(

a int,

b int

)

STORED AS PARQUET

LOCATION 'oss://${accessKeyId}:${accessKeySecret}@${endpoint}/${bucket}/${userPath}/';For more information about the syntax for creating external tables, see the MaxCompute documentation.

After you create the table, you can run the following statements to write a few pieces of test data to it.

insert into parquet values(1,1);

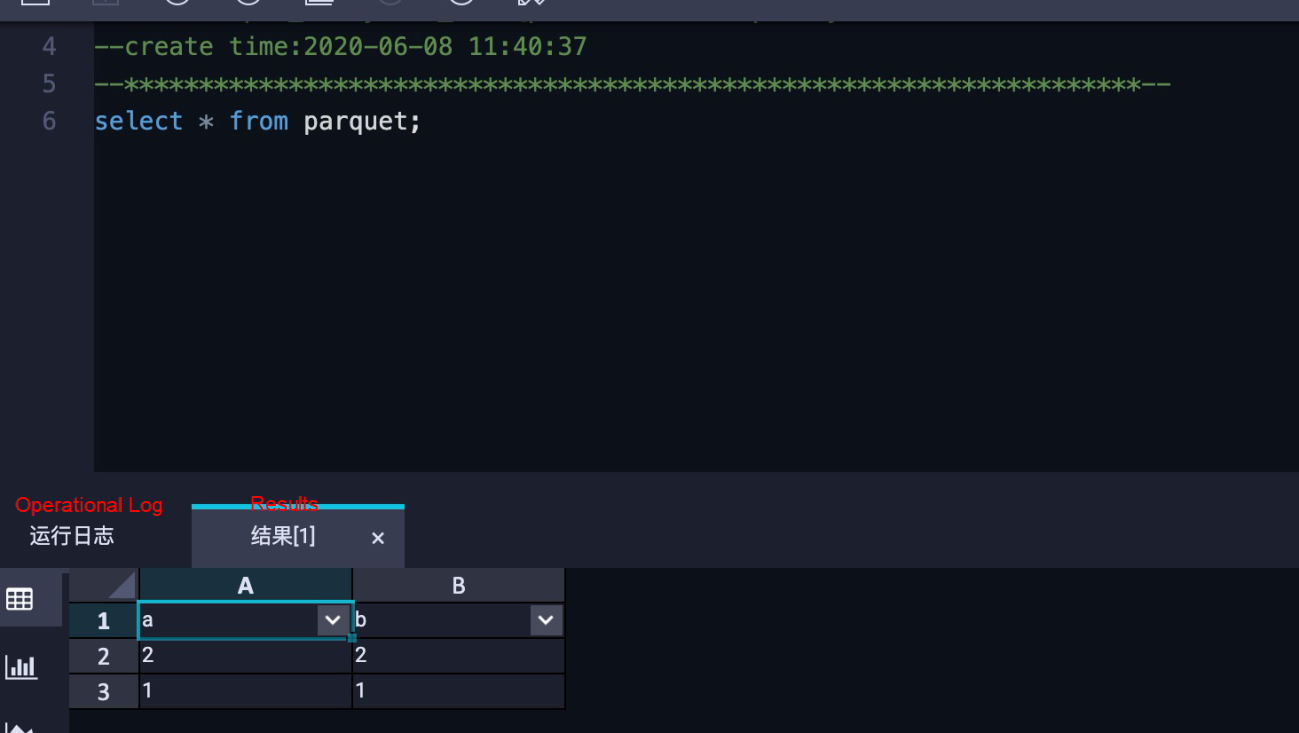

insert into parquet values(2,2);Perform a query. You can see that data has been written to the external table.

This section describes how you can use Data Lake Analytics (DLA) to analyze MaxCompute external tables. To begin, first create an OSS schema:

CREATE SCHEMA dla_oss_db with DBPROPERTIES(

catalog='oss',

location 'oss://${bucket}/${userPath}/'

)Run the following statement to create a table:

CREATE EXTERNAL TABLE odps_parquet (

`a` int,

`b` int

)

STORED AS PARQUET

LOCATION 'oss://${bucket}/${userPath}/'

TBLPROPERTIES (

'directory.odps' = 'true'

);Unlike other OSS files, a MaxCompute's external table has an additional table property: 'directory.odps' = 'true'. This property indicates that the directory is organized according to the format of the MaxCompute's external table.

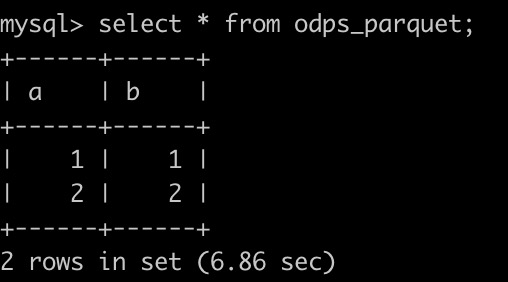

Perform a query:

We recommend that you use Data Lake Analytics (DLA), for inexpensive, fast, and convenient big data solutions.

DLA supports multiple data sources, such as user-created and hosted relational databases (RDS), NoSQL, and OSS. It also supports data files in various formats, including JSON, CSV, and Parquet.

DLA supports pay-as-you-go pricing that starts from zero. You will pay for the amount of data scanned in the provided elastic services, without the need to purchase fixed resources. Therefore, you can always use appropriate resources to address business fluctuations and elastic requirements for analytics. In addition, you can also significantly reduce the O&M and usage costs.

The platform hosts large clusters in the underlying layer and supports auto scaling. When it analyzes a certain amount of data, its analytics performance is 400% higher than that of small user-created clusters.

You can quickly pull MySQL, PostgreSQL, SQL Server, and ApsaraDB PolarDB databases to DLA to analyze the issues that you formerly dared not use MySQL to analyze. On the TPC-H 10 GB benchmark, the analytics performance of DLA is 10 times higher than that of MySQL 8c16g. The performance of MySQL decreases as the data volume increases. If the data volume increases to 1 TB, it will be hard for your queries to succeed in MySQL.

To learn more about DLA, visit the following links:

Tech Insights - Two-phase Commit Protocol for Distributed Transactions

The Evolution of Online Analytics from Alibaba Economy Ecosystem to the Cloud

Alibaba Cloud Storage - December 3, 2018

Alibaba Clouder - November 15, 2018

Alibaba Clouder - August 7, 2020

ApsaraDB - November 17, 2020

Alibaba Clouder - November 9, 2018

ApsaraDB - May 19, 2023

Data Lake Formation

Data Lake Formation

An end-to-end solution to efficiently build a secure data lake

Learn More Big Data Consulting for Data Technology Solution

Big Data Consulting for Data Technology Solution

Alibaba Cloud provides big data consulting services to help enterprises leverage advanced data technology.

Learn More Data Lake Storage Solution

Data Lake Storage Solution

Build a Data Lake with Alibaba Cloud Object Storage Service (OSS) with 99.9999999999% (12 9s) availability, 99.995% SLA, and high scalability

Learn More MaxCompute

MaxCompute

Conduct large-scale data warehousing with MaxCompute

Learn MoreMore Posts by ApsaraDB