AMAP aims to connect the real world and make travel better. To realize this vision, we strive to build smart connections among location-based services (LBS), big data, and users. Information retrieval is a key technology to this end, and AMAP's suggestion service is an indispensable part of it.

This article describes how machine learning is applied in the AMAP suggestion service, with a focus on model optimization. These explorations have been verified in practice and have achieved good results, laying the foundation for personalization, deep learning, and vector indexing in the coming years.

As a user types, the suggestion service automatically completes the query or the point of interest (POI). A POI can be a shop, residential area, or bus station annotated in a geographic information system. After query completion, all candidates are intelligently ranked and listed. By giving smart prompts, the suggestion service is expected to reduce users' input costs.

Though the suggestion service features a fast response, it does not support information retrieval for complex queries. It can be understood as a simplified LBS-specific information retrieval service.

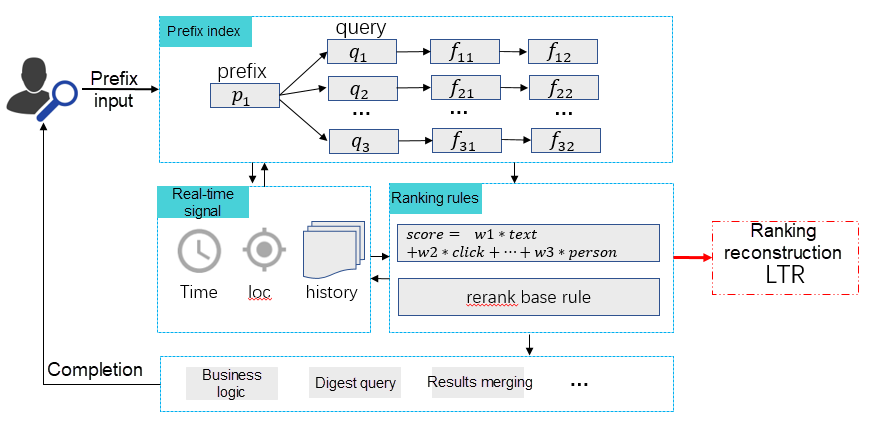

Similar to general information retrieval systems, the suggestion service is divided into two phases: document recall and ranking (in LBS, documents are also referred to as POIs). In the ranking phase, candidates are sorted by a weighted score that combines the textual relevance between the query and the document with document features such as weights and clicks.
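As a rough illustration of this kind of rule-based weighted scoring, consider the sketch below. The feature names, value ranges, and weights are hypothetical and chosen only for the example; they are not AMAP's actual configuration.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    text_relevance: float  # query-POI textual relevance, assumed in [0, 1]
    poi_weight: float      # static POI weight, assumed in [0, 1]
    clicks: float          # normalized click feature, assumed in [0, 1]


# Hypothetical hand-tuned weights; every new feature adds one more knob to tune.
WEIGHTS = {"text_relevance": 0.6, "poi_weight": 0.1, "clicks": 0.3}


def weighted_score(c):
    """Linear combination of textual relevance and document features."""
    return (WEIGHTS["text_relevance"] * c.text_relevance
            + WEIGHTS["poi_weight"] * c.poi_weight
            + WEIGHTS["clicks"] * c.clicks)


def rank(candidates):
    """Sort recalled candidates by descending weighted score."""
    return sorted(candidates, key=weighted_score, reverse=True)
```

Each weight here has to be tuned by hand, which is manageable for three features but scales poorly as features are added.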

As the business grows and features multiply, manual parameter tuning becomes increasingly complicated, and rule-based ranking can no longer meet the requirements. Business problems end up being fixed with one-off patches, which makes the code difficult to maintain.

Therefore, we decide to rebuild the ranking module with learning to rank (LTR), a natural fit for this problem.

LTR applies machine learning to ranking problems in retrieval systems. GBRank is a commonly used model, and the pairwise approach is the most frequently used form of loss. We follow both practices here. When applying LTR to a real problem, the foremost issue is how to acquire samples.
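To make the pairwise idea concrete, here is a minimal sketch, assuming each candidate for a query already carries a relevance label (for example, 1 for clicked and 0 for not clicked). The function names are illustrative, and the logistic form of the loss is one common pairwise choice rather than a statement of the exact loss used in production.

```python
import math
from itertools import combinations


def build_pairs(candidates):
    """Build (preferred, other) pairs from one query's labeled candidates.

    candidates: list of (poi_id, label) tuples; a higher label means more relevant.
    Candidates with equal labels carry no preference and produce no pair.
    """
    pairs = []
    for (a, label_a), (b, label_b) in combinations(candidates, 2):
        if label_a > label_b:
            pairs.append((a, b))
        elif label_b > label_a:
            pairs.append((b, a))
    return pairs


def pairwise_logistic_loss(score_pos, score_neg):
    """log(1 + exp(-(s_pos - s_neg))), computed in a numerically stable way."""
    d = score_pos - score_neg
    return max(-d, 0.0) + math.log1p(math.exp(-abs(d)))
```

The loss is small when the preferred candidate is scored well above the other one and grows as the order is inverted, so the model is trained on relative order rather than absolute scores.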

AMAP has a huge daily access volume and a massive set of candidate POIs, which makes it infeasible to acquire samples through manual annotation.

Alternatively, automatic sample construction methods, such as building sample pairs from users' POI clicks, require addressing the following issues:

In the preceding cases, no click data is available for modeling. Moreover, even when a user clicks a POI, the user is not necessarily satisfied with it.

We also have to address the challenge of feature sparsity during model learning. Statistical learning aims to minimize global error, so sparse features are often ignored by models because they affect few samples and have limited global impact. In practice, however, these features play an important role in solving long-tail cases. This makes optimization of the model-learning process highly important.

The preceding section describes two modeling challenges: first, how to construct samples, and second, how to optimize model learning. We propose the following solution for sample construction:

The solution is implemented through the following steps:

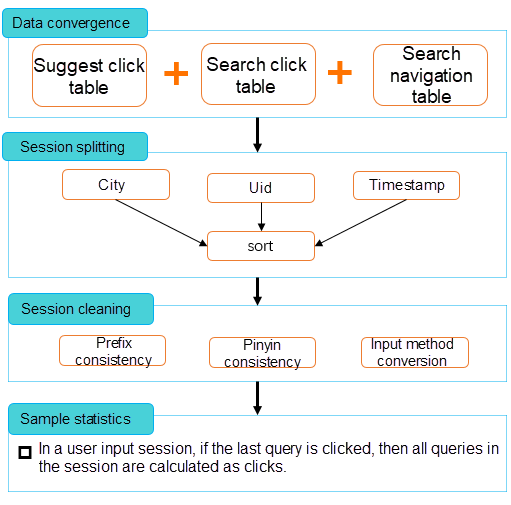

1. Data Convergence: Integrate multiple log tables on the server, including search suggestions, searches, and navigation.

2. Session Splitting: Split and clean sessions.

3. Session Cleaning: Take the clicks on the last query of an input session and attribute them to all queries in that session, with the goal of minimizing the input session. Refer to the following illustration.

4. Sample Statistics: Randomly extract more than one million queries from the online click logs and recall the top N candidate POIs for each query.

With the preceding sample construction solution, we acquire tens of millions of valid samples to use as training samples for GBRank.
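The session cleaning step can be sketched roughly as follows. This is a simplified illustration, not the production pipeline: the record layout (`query`, `clicked_poi`) is a hypothetical schema, and dropping sessions that end without a click is an assumption made for the example.

```python
def label_session(session):
    """Attribute the click on a session's last query to every query in it.

    session: list of dicts ordered by time, each with the hypothetical keys
             "query" (str) and "clicked_poi" (str or None).
    Returns a list of (query, clicked_poi) training labels.
    """
    if not session:
        return []
    final_click = session[-1]["clicked_poi"]
    if final_click is None:
        # Assumption: a session that ends without a click yields no labels.
        return []
    return [(step["query"], final_click) for step in session]


session = [
    {"query": "b", "clicked_poi": None},
    {"query": "be", "clicked_poi": None},
    {"query": "bei", "clicked_poi": "poi_123"},
]
labels = label_session(session)
```

In this example, the POI clicked after typing "bei" becomes the positive label for the shorter prefixes "b" and "be" as well, so the model can learn to surface it earlier in the input.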

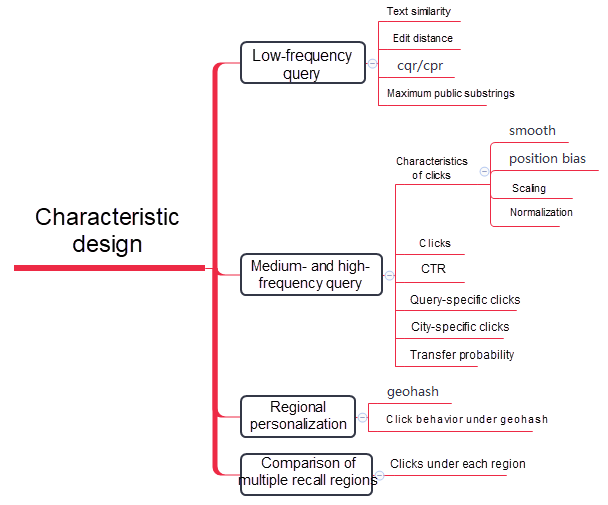

As for features, we consider the following four modeling requirements and propose a feature design solution for each requirement.

The following figure shows the feature design in detail.

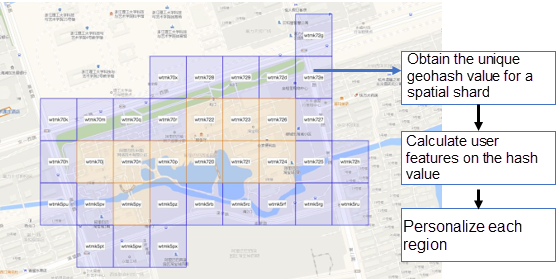

After feature design, we conduct necessary feature engineering, including scaling, feature smoothing, position bias removal, and normalization to maximize the effect of features.
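A few of these transformations can be sketched as below. The function names, the prior values, and the choice of additive smoothing, log scaling, and min-max normalization are assumptions made for illustration; the article does not specify which variants are used, and position bias removal is omitted here.

```python
import math


def smoothed_ctr(clicks, impressions, prior_ctr=0.05, prior_strength=20.0):
    """Additive smoothing: pull low-traffic click rates toward a prior rate.

    prior_ctr and prior_strength are hypothetical values for this example.
    """
    return (clicks + prior_ctr * prior_strength) / (impressions + prior_strength)


def log_scale(x):
    """Compress heavy-tailed counts such as raw click totals."""
    return math.log1p(x)


def min_max_normalize(values):
    """Map a list of feature values into [0, 1]; constant lists map to 0.0."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]
```

Smoothing matters most for long-tail POIs: a POI with one click out of one impression would otherwise get a click rate of 100%, while the smoothed estimate stays close to the prior until more evidence accumulates.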

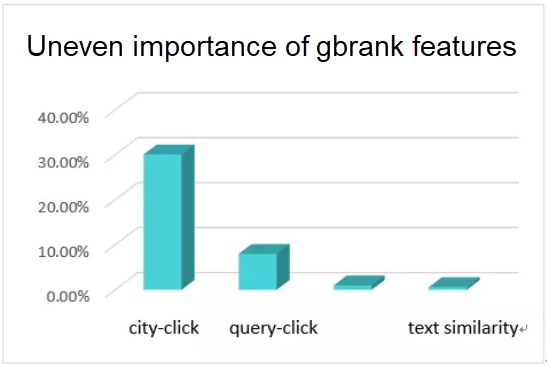

With all rules removed, the initial version of the model improves the MRR on the test set by about five points. However, model learning issues remain. For example, GBRank's feature learning is highly uneven: when tree nodes are split, only a few features are selected, and the other features have almost no effect. This is the second modeling challenge, model learning optimization. Specifically, we need to address the uneven feature importance in GBRank. This issue is described in detail below.
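For reference, MRR (mean reciprocal rank) averages, over all test queries, the reciprocal of the position at which the target POI appears. A minimal sketch follows, assuming one target POI per query and a contribution of zero when the target is not in the list; the function name is illustrative.

```python
def mean_reciprocal_rank(ranked_lists, targets):
    """Average of 1/position of each query's target in its ranked list.

    ranked_lists: one ranked list of POI ids per query.
    targets: the target (for example, clicked) POI id for each query.
    A query whose target is missing from its list contributes 0.
    """
    if not ranked_lists:
        return 0.0
    total = 0.0
    for ranking, target in zip(ranked_lists, targets):
        for position, poi in enumerate(ranking, start=1):
            if poi == target:
                total += 1.0 / position
                break
    return total / len(ranked_lists)
```

For instance, if the targets of three queries are found at position 1, at position 2, and not at all, the MRR is (1 + 0.5 + 0) / 3 = 0.5.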

Let's take a look at the feature importance of the model with the help of the following chart:

After analysis, we identify the following key causes of the feature-learning imbalance:

In summary, for various reasons, the same feature, city-click, is always selected as the split node during tree learning, rendering other features ineffective. This problem can be solved with the following two methods:

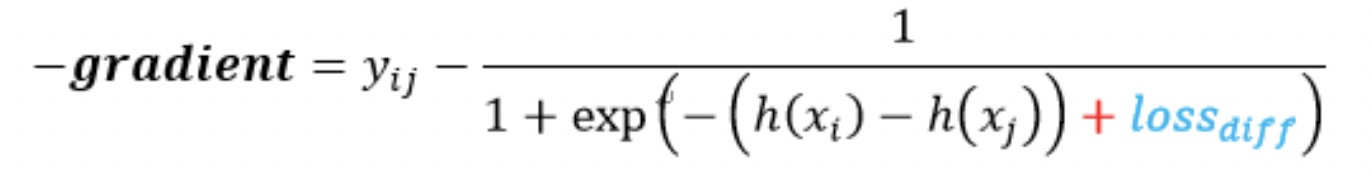

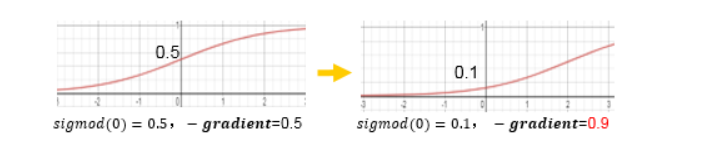

The preceding formula is the negative gradient of the cross-entropy loss function. loss_diff is equivalent to a translation of the Sigmoid function.

The larger the difference, the larger the value of loss_diff, which increases the penalty and, in turn, the split gain of the feature during the next iteration.
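The formula itself appears only in the figure, so the sketch below is one plausible reading rather than the authors' exact definition. It assumes a pairwise cross-entropy loss on the score difference and treats loss_diff as an additive offset inside the sigmoid, which shifts the curve. How loss_diff is computed from the sample pair is not reproduced here, so it is passed in as a parameter.

```python
import math


def sigmoid(x):
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def negative_gradient(score_pos, score_neg, loss_diff=0.0):
    """Negative gradient, with respect to score_pos, of the assumed loss
    log(1 + exp(-(score_pos - score_neg - loss_diff))).

    With loss_diff = 0 this is the usual pairwise cross-entropy residual;
    a positive loss_diff translates the sigmoid and enlarges the residual.
    """
    return sigmoid(-(score_pos - score_neg - loss_diff))
```

Under this reading, a pair with a larger loss_diff leaves a larger residual for the next tree to fit, so a split that separates such pairs gains more.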

After adjusting the loss, we retrain the model and obtain a further two-point MRR improvement on the test set over the initial model. The resolution rate for historical ranking cases increases from 40% to 70%, a significant improvement.

Learning to rank (LTR) has replaced the rule-based ranking method of the AMAP suggestion service, eliminating policy coupling and patching and bringing significant benefits. The GBRank model, now launched, covers the ranking requirements of queries of all frequencies.

We have successfully launched the models for general population personalization and individual personalization, and are actively promoting the application of deep learning, vector indexing, and user behavior sequence prediction in the AMAP suggestion service.
