For a brief moment, OpenClaw was the name on every developer's lips. It wasn't just another AI project; it was an open-source framework that showed us workflows could be modular, intelligent, and self-directed. OpenClaw made developers imagine a future where AI agents weren't just assistants, but orchestrators of complex systems.

But movements evolve. Commercial platforms like Alibaba's QoderWork moved fast, closing the gap with production-ready offerings. What once felt experimental is now becoming standardized. The agent paradigm is no longer a fringe idea; it's a mainstream development strategy. In that sense, OpenClaw's moment has passed — not because the idea failed, but because it succeeded so well that commercial platforms industrialized it.

OpenClaw's true contribution wasn't its codebase or its features. It was the architectural shift it triggered. It normalized the idea that AI agents could be trusted to handle complex workflows. It made enterprises ask not if they should adopt agents, but how fast they could build them. The baton has passed from hype-driven pioneers to commercially viable platforms, and the race is only just beginning.

The next chapter isn't about gluing together disparate tools. It's about building on a unified foundation that just works together. Developers want to focus on agent logic, not infrastructure plumbing. They want services that integrate seamlessly, scale automatically, and don't require a DevOps team to maintain.

This is where Alibaba Cloud's triad comes in. ACS, ACK, and OSS aren't three separate products you need to wire together — they're a cohesive platform designed to handle the full lifecycle of an AI agent.

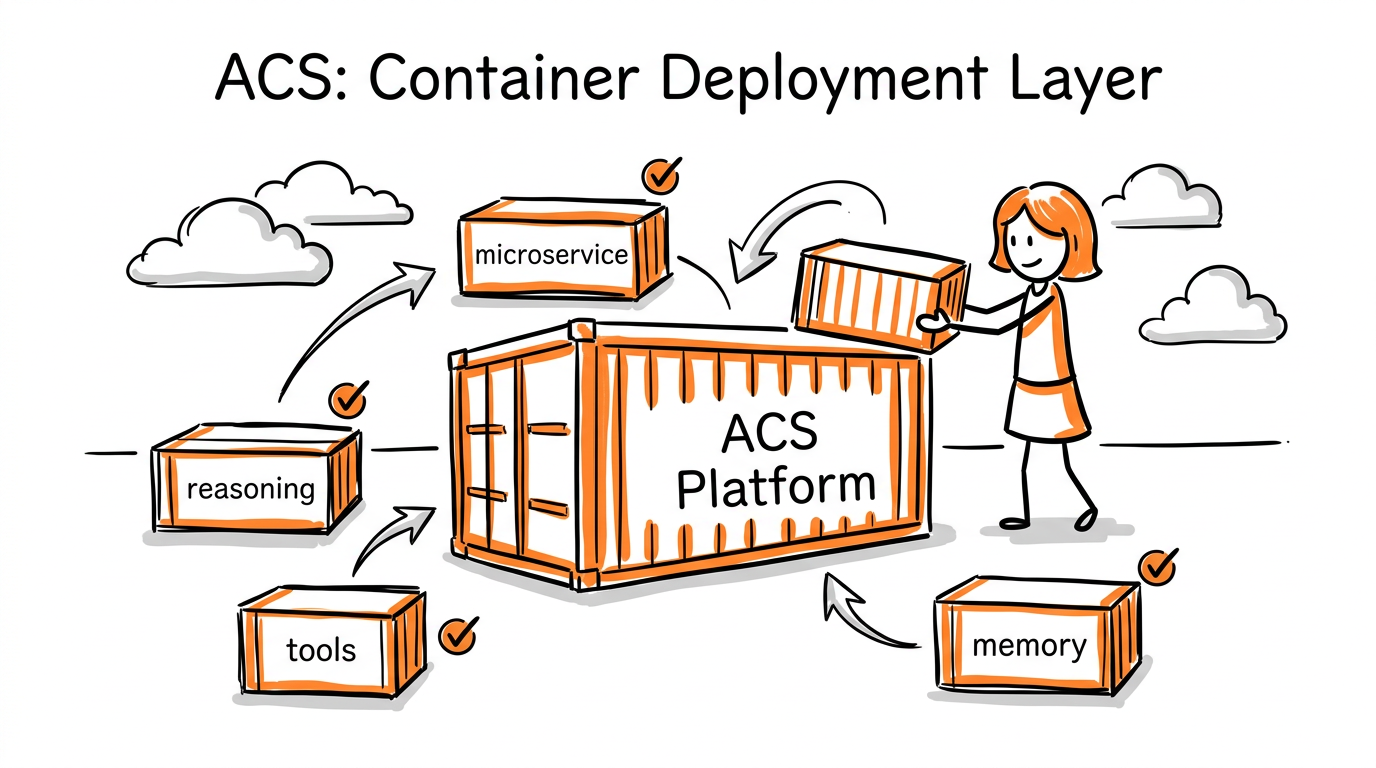

ACS (Container Compute Service) is your deployment layer. When you build an agent, you're really building a set of microservices: one for reasoning, one for tool execution, one for memory retrieval. ACS lets you deploy these as containerized services without worrying about the underlying VMs. You define your services; ACS handles the scheduling, health checks, and rolling updates.

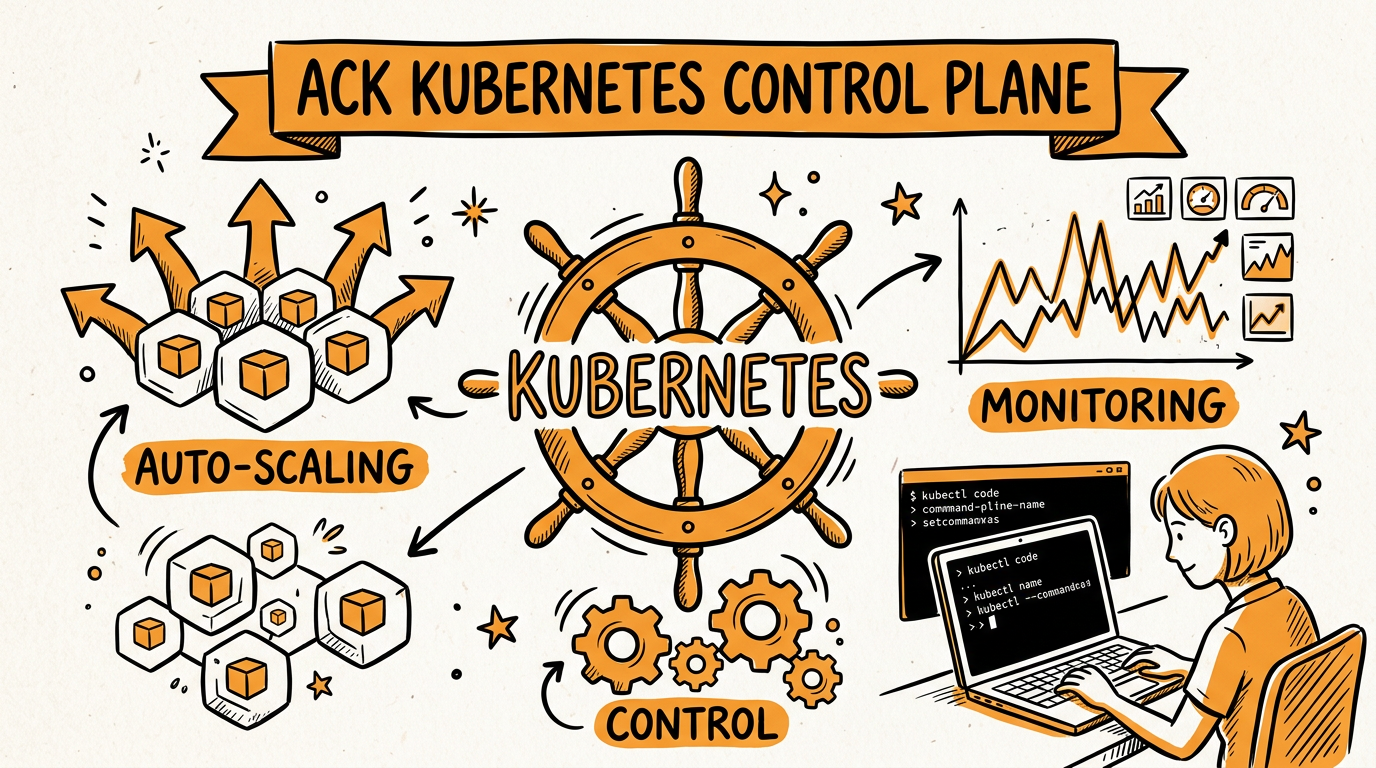

ACK (Container Service for Kubernetes) is your control plane. ACS workloads are driven through the Kubernetes API that ACK exposes, which means you get Kubernetes-native workflows: declarative configurations, auto-scaling based on custom metrics, and the entire ecosystem of K8s tools. If you know kubectl, you already know how to debug your agents. If you need to scale from ten requests per minute to ten thousand, the Horizontal Pod Autoscaler handles it without code changes.

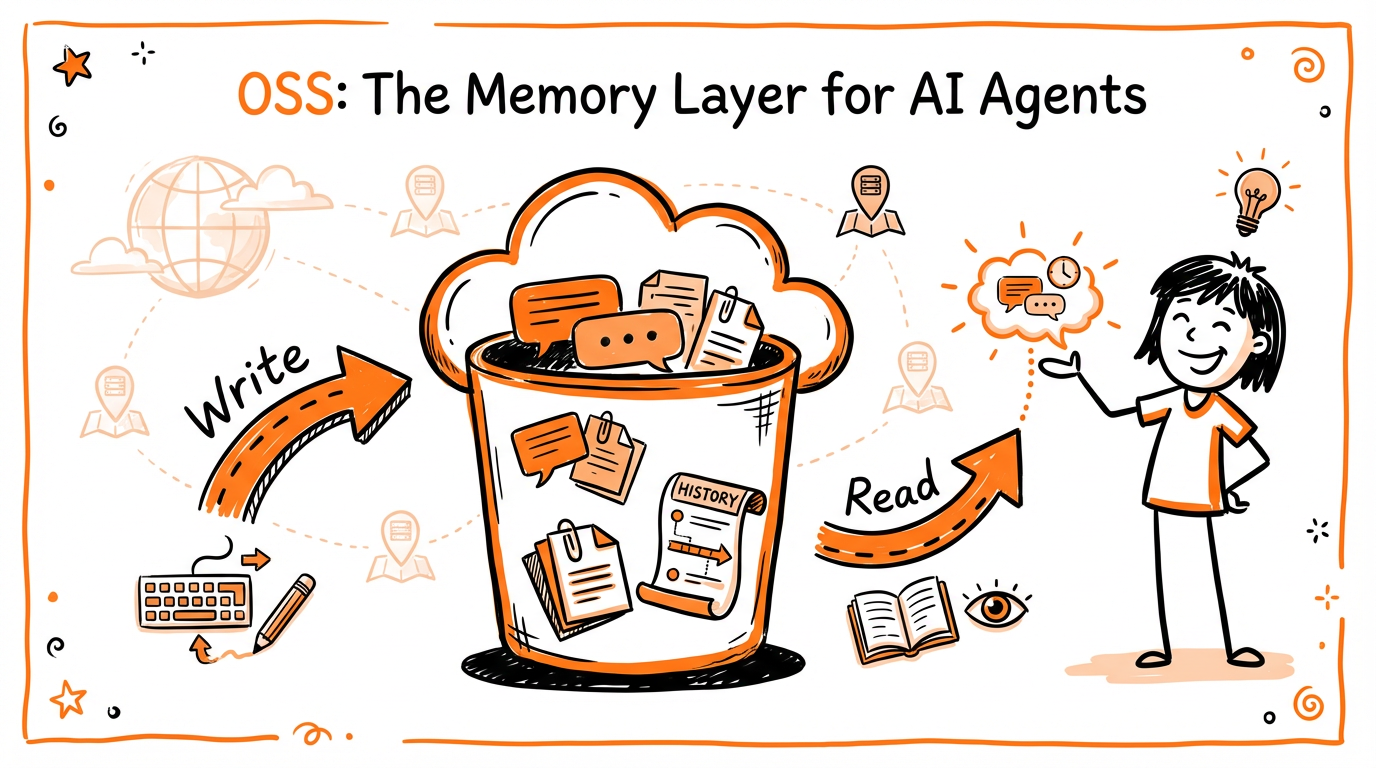

OSS (Object Storage Service) is your persistence layer. Agents need memory — not just the in-context window of an LLM, but long-term storage for conversation history, generated artifacts, and knowledge bases. OSS gives you that with S3-compatible APIs, lifecycle policies, and geo-redundancy. Store a user's session history in OSS, retrieve it when they return, archive old data automatically.

Running agents in production isn't just about getting them to work — it's about keeping them secure, available, and cost-effective at scale. Here's how the triad addresses enterprise requirements:

AI agents often handle sensitive data and execute privileged operations. You need isolation boundaries that prevent one compromised agent from affecting others or accessing unauthorized resources.

Network policies in ACK. Define which services can talk to each other. Your reasoning service can reach the LLM API, but your memory retrieval service can't reach the internet. If one microservice is compromised, the blast radius is contained.
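As a rough sketch of the second rule above, the snippet below builds a Kubernetes NetworkPolicy that limits egress from the memory retrieval pods to destinations inside their own namespace. The label `memory-retrieval` and the namespace `agents` are hypothetical names chosen for illustration. The manifest is built as a Python dict and printed as JSON, which `kubectl apply -f` accepts alongside YAML.

```python
import json


def deny_internet_egress(app_label: str, namespace: str) -> dict:
    """Build a NetworkPolicy that limits egress to pods in the same namespace."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": f"{app_label}-no-internet", "namespace": namespace},
        "spec": {
            # The policy applies to pods carrying this label.
            "podSelector": {"matchLabels": {"app": app_label}},
            "policyTypes": ["Egress"],
            # An empty podSelector matches every pod in the policy's namespace,
            # so anything outside the namespace (including the internet) is denied.
            "egress": [{"to": [{"podSelector": {}}]}],
        },
    }


policy = deny_internet_egress("memory-retrieval", "agents")
print(json.dumps(policy, indent=2))
```

Two caveats apply to this sketch: the cluster's network plugin must enforce NetworkPolicy for the rule to have any effect, and a policy this strict also blocks DNS lookups served from another namespace, so a real deployment would usually add an explicit DNS allowance.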

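Pod security standards. Run agent containers with minimal privileges. Drop unnecessary capabilities, enforce read-only root filesystems, and prevent privilege escalation. Note that the PodSecurityPolicy API itself was removed in Kubernetes 1.25; on current clusters, enforce these settings with Pod Security Admission or a policy engine. ACK integrates with Alibaba Cloud's security scanning to detect vulnerable container images before deployment.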
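The container-level settings named above map onto fields of a Kubernetes `securityContext`. Here is a minimal sketch, assuming a hypothetical container name and image path, that builds such a container entry as a Python dict for inclusion in a pod spec.

```python
import json


def hardened_container(name: str, image: str) -> dict:
    """Return a container entry with a restrictive securityContext."""
    return {
        "name": name,
        "image": image,
        "securityContext": {
            "runAsNonRoot": True,               # refuse to start as UID 0
            "allowPrivilegeEscalation": False,  # block setuid-style escalation
            "readOnlyRootFilesystem": True,     # writes must go to mounted volumes
            "capabilities": {"drop": ["ALL"]},  # drop every Linux capability
        },
    }


# The image reference below is a placeholder, not a real registry path.
container = hardened_container("reasoning", "registry.example.com/agents/reasoning:1.0")
print(json.dumps(container, indent=2))
```

With a read-only root filesystem, an agent that needs scratch space has to be given an explicit writable volume, which also makes its write paths easy to audit.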

Secret management. Agents need API keys, database credentials, and LLM access tokens. ACK integrates with Alibaba Cloud KMS to inject secrets as environment variables or mounted volumes — never hardcoded in your container images. Rotate credentials automatically without redeploying.
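On the agent side, consuming an injected secret can look like the sketch below. The mount path `/etc/agent-secrets` and the helper name `load_secret` are assumptions made for illustration; the actual path depends on how the volume is mounted in your pod spec.

```python
import os
from pathlib import Path

# Hypothetical mount point for a secret volume; override with SECRETS_DIR.
SECRETS_DIR = Path(os.environ.get("SECRETS_DIR", "/etc/agent-secrets"))


def load_secret(name: str, secrets_dir: Path = SECRETS_DIR) -> str:
    """Return a secret from a mounted file if present, else from the environment."""
    secret_file = Path(secrets_dir) / name
    if secret_file.is_file():
        # Reading on every call picks up a rotated value without a restart.
        return secret_file.read_text().strip()
    value = os.environ.get(name)
    if value is None:
        raise KeyError(f"secret {name!r} not found in {secrets_dir} or the environment")
    return value
```

The file-first order is a deliberate choice in this sketch: environment variables are fixed when the container starts, while a mounted secret file can change underneath a running process, so only the file path supports rotation without a restart.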

OSS encryption. Enable server-side encryption on a bucket and data at rest is encrypted with OSS-managed keys. For regulated industries, bring your own keys (BYOK) via Alibaba Cloud KMS. Data in transit is protected by TLS when clients use HTTPS endpoints. Your agent's conversation history and knowledge bases remain protected even if the underlying storage media is compromised.

Agent workloads are unpredictable. A customer support agent might handle ten conversations in an hour, then suddenly face a hundred during a product launch. Your infrastructure needs to absorb these spikes without manual intervention or runaway costs.

Horizontal Pod Autoscaler (HPA). ACK monitors custom metrics (queue depth, request latency, or your own business metrics) and scales agent pods up and down automatically. Scale from three replicas to fifty as a spike builds, then back down when it passes. You pay for what you use.
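The scaling decision itself follows the ratio rule from the Kubernetes HPA documentation: desired replicas equal the ceiling of current replicas times the ratio of the observed metric to its target, skipped when the ratio is within a tolerance (0.1 by default). The sketch below reproduces that arithmetic; the replica bounds and the queue-depth figures are illustrative assumptions, not values from a real cluster.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float, target_metric: float,
                     min_replicas: int = 1, max_replicas: int = 50,
                     tolerance: float = 0.1) -> int:
    """Apply the HPA ratio rule, then clamp to the configured replica bounds."""
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas  # close enough to target: no scaling action
    desired = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, desired))


# Three pods each see a queue depth of 100 against a target of 20 per pod.
print(desired_replicas(3, current_metric=100, target_metric=20, min_replicas=3))
```

Because the result is clamped, a spike that would call for sixty pods stops at the configured maximum of fifty, which is what keeps a runaway metric from turning into a runaway bill.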

Cluster Autoscaler. When HPA adds pods and your nodes are full, ACK automatically provisions new ECS instances, adds them to the cluster, and schedules the pending pods. When load drops, it drains and terminates underutilized nodes. No 3 AM pages to add capacity.

Resource quotas and limits. Prevent one misbehaving agent from consuming all cluster resources. Define CPU and memory limits per pod, namespace quotas per team, and priority classes to ensure critical agents get scheduled before experimental ones.

GPU support for inference. Some agents run local LLM inference rather than calling APIs. ACK supports GPU-accelerated nodes with NVIDIA A10, V100, or A100 instances. Schedule GPU workloads with device plugins, share GPUs across multiple agent pods with time-slicing, or dedicate full GPUs to high-priority inference tasks.

When an agent makes a wrong decision or gets stuck in a loop, you need visibility into what happened. Distributed tracing and structured logging aren't luxuries — they're requirements for production systems.

Log Service integration. ACK automatically ships container logs to Alibaba Cloud Log Service. Query across all agent pods with SQL-like syntax. Filter by trace ID to follow a single request through your reasoning, tool execution, and memory retrieval services. Set alerts when error rates spike.
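Filtering by trace ID only works if every log line carries one. A minimal sketch using Python's standard `logging` module, assuming the cluster's log collection picks up container stdout, emits one JSON object per line with `service` and `trace_id` fields; both field names and the sample trace ID are hypothetical.

```python
import json
import logging
import sys


class JsonFormatter(logging.Formatter):
    """Render each record as a single JSON object."""

    def format(self, record: logging.LogRecord) -> str:
        return json.dumps({
            "level": record.levelname,
            "service": getattr(record, "service", "unknown"),
            "trace_id": getattr(record, "trace_id", None),
            "message": record.getMessage(),
        })


def make_logger(name: str) -> logging.Logger:
    """Create a logger that writes JSON lines to stdout."""
    logger = logging.getLogger(name)
    handler = logging.StreamHandler(sys.stdout)
    handler.setFormatter(JsonFormatter())
    logger.handlers = [handler]
    logger.setLevel(logging.INFO)
    logger.propagate = False
    return logger


logger = make_logger("agent")
# Values passed via `extra` become attributes on the log record.
logger.info("tool call finished",
            extra={"service": "tool-execution", "trace_id": "hypothetical-trace-123"})
```

Passing the same `trace_id` from the reasoning service through to tool execution and memory retrieval is what lets a single query reconstruct one request end to end.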

Application Real-Time Monitoring Service (ARMS). Monitor agent performance metrics without code changes. Track request latency, error rates, and throughput. Identify which tool calls are slowest, which LLM prompts generate the most tokens, and where your agents spend most of their time.

Distributed tracing. Instrument your agent services with OpenTelemetry and send traces to ARMS. See the full execution path of an agent task: which microservices were called, how long each took, and where bottlenecks occur. Essential for debugging complex multi-step agent workflows.

OSS access logs. Track which agents read or wrote which objects, when, and from which IP addresses. Audit data access for compliance, detect anomalous patterns that might indicate a compromised agent, and investigate data incidents with complete visibility.

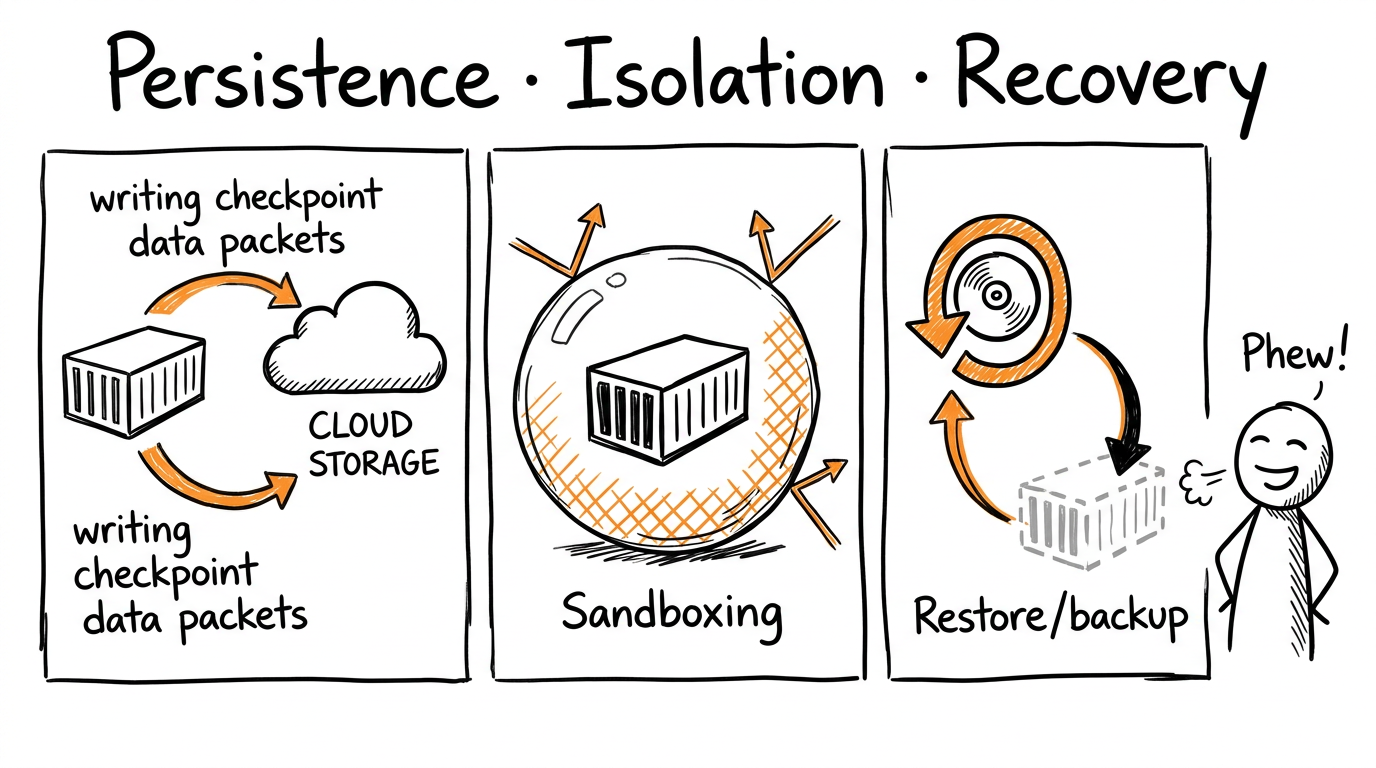

Production agents run for hours, days, or weeks. They maintain internal state: partially completed tasks, accumulated context, in-progress workflows. When a pod crashes, a node fails, or you need to deploy a new version, you can't afford to lose that state and start over.

Persistent volumes for agent state. ACK supports multiple storage classes via Container Storage Interface (CSI). Use cloud disks for low-latency state storage, NAS for shared state across multiple agent replicas, or OSS via CSI drivers for durable, cost-effective persistence. Mount volumes into your agent containers, write checkpoint files, and resume from the last checkpoint after restart.
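The checkpoint-and-resume pattern can be sketched in a few lines. This version assumes the checkpoint lives on a mounted volume with POSIX rename semantics (a local or cloud disk); on NAS or object-storage-backed mounts, atomicity guarantees may differ. The file layout and state fields are hypothetical.

```python
import json
import os
import tempfile
from pathlib import Path


def save_checkpoint(path: Path, state: dict) -> None:
    """Write state to a temp file, then rename it over the target in one step."""
    path.parent.mkdir(parents=True, exist_ok=True)
    fd, tmp_name = tempfile.mkstemp(dir=path.parent, suffix=".tmp")
    with os.fdopen(fd, "w") as tmp:
        json.dump(state, tmp)
        tmp.flush()
        os.fsync(tmp.fileno())  # make sure the bytes reach the disk before renaming
    os.replace(tmp_name, path)  # readers see the old file or the new one, never half


def load_checkpoint(path: Path, default: dict) -> dict:
    """Return the last saved state, or the default when no checkpoint exists."""
    if path.exists():
        return json.loads(path.read_text())
    return default
```

On startup an agent would call `load_checkpoint` with an initial state such as `{"step": 0}` and continue from whatever step it finds, calling `save_checkpoint` after each completed step.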

OSS for session persistence. Store conversation state, user preferences, and workflow progress in OSS as JSON or binary blobs. When an agent pod restarts, it reads its state from OSS and continues where it left off. Users never know there was an interruption. Design your agents to be stateless at the container level but stateful at the application level via external storage.

Workflow state machines. Complex agent workflows — research, analysis, multi-step reasoning — benefit from explicit state machines. Store workflow state in OSS, transition between states atomically using conditional writes, and resume interrupted workflows from any step. ACK Jobs and CronJobs can trigger state machine transitions, ensuring workflows complete even when individual agent instances fail.
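To illustrate the conditional-write idea, here is a small sketch with an in-memory store standing in for the object store. The class `InMemoryStore`, its `put_if_version` method, and the three-step workflow are all hypothetical; they model compare-and-set semantics and are not the OSS API.

```python
class VersionConflict(Exception):
    """Raised when another writer updated the record first."""


class InMemoryStore:
    """Toy stand-in for a store that supports conditional writes."""

    def __init__(self):
        self._records = {}  # key -> (version, value)

    def get(self, key):
        return self._records.get(key, (0, None))

    def put_if_version(self, key, expected_version, value):
        current_version, _ = self.get(key)
        if current_version != expected_version:
            raise VersionConflict(
                f"{key}: expected v{expected_version}, found v{current_version}")
        self._records[key] = (current_version + 1, value)
        return current_version + 1


TRANSITIONS = {"research": "analysis", "analysis": "report", "report": "done"}


def start(store, workflow_id):
    """Create the workflow; fails if a record already exists."""
    store.put_if_version(workflow_id, 0, "research")


def advance(store, workflow_id):
    """Move the workflow one step forward, guarded by the version it was read at."""
    version, state = store.get(workflow_id)
    if state not in TRANSITIONS:
        return state  # terminal state or unknown workflow: nothing to do
    next_state = TRANSITIONS[state]
    store.put_if_version(workflow_id, version, next_state)
    return next_state
```

If two agent instances try to advance the same workflow at once, one write succeeds and the other raises `VersionConflict`, so a step is never applied twice; the loser can re-read the state and decide whether any work remains.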

Not all agents are equally trusted. An internal data analysis agent might have broad database access, while a customer-facing support agent should only read from a limited knowledge base. You need isolation mechanisms that enforce these boundaries.

Namespace isolation. Run different agent classes in separate ACK namespaces. Apply network policies that prevent a customer-facing agent in namespace A from reaching internal databases in namespace B. Set resource quotas so experimental agents can't starve production agents of cluster capacity.

Container sandboxing with gVisor. For untrusted or third-party agent code, run containers in gVisor for additional isolation. This provides a user-space kernel boundary between the agent and the host. Even if the agent escapes its container, it remains trapped in the sandbox.

Policy enforcement with OPA. Use Open Policy Agent to enforce organizational rules: agents must not mount host paths, must not run as root, must not access the internet without explicit allowlisting. Gatekeeper rejects non-compliant deployments before they reach the cluster. Shift security left — catch policy violations at deploy time, not runtime.
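In practice these rules are written in Rego and evaluated by Gatekeeper at admission time. Purely to show what such a check evaluates, the sketch below expresses two of the rules (no host path mounts, no running as root) as plain Python over a pod spec dict; the function name and messages are hypothetical.

```python
def policy_violations(pod_spec: dict) -> list:
    """Return human-readable violations for a pod spec; empty means compliant."""
    violations = []
    for volume in pod_spec.get("volumes", []):
        if "hostPath" in volume:
            violations.append(f"volume {volume.get('name')!r} mounts a host path")
    pod_ctx = pod_spec.get("securityContext", {})
    for container in pod_spec.get("containers", []):
        # Container-level settings take precedence over pod-level ones.
        ctx = {**pod_ctx, **container.get("securityContext", {})}
        if ctx.get("runAsNonRoot") is not True:
            violations.append(f"container {container.get('name')!r} may run as root")
    return violations


spec = {
    "volumes": [{"name": "host-logs", "hostPath": {"path": "/var/log"}}],
    "containers": [{"name": "agent", "image": "example/agent:1.0"}],
}
for problem in policy_violations(spec):
    print(problem)
```

A check like this could also run in CI against rendered manifests, which catches a violation even earlier than the admission controller does.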

OSS bucket policies and RAM. Enforce least-privilege access to storage with Resource Access Management (RAM), Alibaba Cloud's identity and access service. Customer-facing agents get read-only access to specific buckets. Internal agents get write access to staging buckets but not production. Use OSS bucket policies to restrict access by IP range or VPC.

When things go wrong — and they will — you need a plan to recover. A region-wide outage, catastrophic data corruption, or security incident can take your agents offline. The difference between a brief blip and a days-long outage is preparation.

Multi-region deployment. Run ACK clusters in multiple Alibaba Cloud regions. Use Global Accelerator to route traffic to the nearest healthy region. Deploy agents to all active regions, or maintain a warm standby that scales up when the primary region fails. OSS cross-region replication copies data to the other region asynchronously, so measure the replication lag for your workload and plan around it.

Point-in-time restore. Implement regular snapshots of persistent volumes and OSS buckets. When corruption occurs, restore from a known-good state. OSS supports object versioning — every overwrite preserves the previous version. Recover accidentally deleted or corrupted data with a single API call.

Backup testing and runbooks. A backup you can't restore is worthless. Test your restore procedures quarterly. Document runbooks for common failure scenarios: single pod failure, node failure, cluster failure, regional outage. Practice restoring agent state from OSS, recovering from persistent volume snapshots, and failing over to a secondary region.

RTO and RPO planning. Define your Recovery Time Objective (how long you can be down) and Recovery Point Objective (how much data you can lose). For critical agents, a target such as RTO under 5 minutes and RPO under 1 minute generally calls for multi-region active-active deployment and replication with tightly bounded lag. ACK and OSS provide the building blocks; you choose the right reliability tier for each agent workload.

Here's what building an agent looks like on this stack:
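A minimal sketch of such a service, using only the Python standard library so it runs in any container image with Python installed. The functions `plan` and `call_llm` are hypothetical stubs: a real agent would replace them with calls to its planner and LLM provider.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer


def call_llm(prompt: str) -> str:
    """Placeholder for a real LLM call; returns a canned response."""
    return f"[stub response to: {prompt}]"


def plan(task: str) -> list:
    """Hypothetical planner; a real agent would ask the LLM for the steps."""
    return [f"understand: {task}", f"execute: {task}", f"summarize: {task}"]


def run_task(task: str) -> dict:
    """Break the task into steps and run each one through the LLM."""
    results = [{"step": step, "output": call_llm(step)} for step in plan(task)]
    return {"task": task, "results": results}


class AgentHandler(BaseHTTPRequestHandler):
    def do_POST(self):
        if self.path != "/run":
            self.send_error(404)
            return
        length = int(self.headers.get("Content-Length", 0))
        body = json.loads(self.rfile.read(length) or b"{}")
        payload = json.dumps(run_task(body.get("task", ""))).encode()
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(payload)))
        self.end_headers()
        self.wfile.write(payload)


def serve(port: int = 8080) -> None:
    """Start the HTTP server; call this from the container entrypoint."""
    HTTPServer(("0.0.0.0", port), AgentHandler).serve_forever()
```

Calling `serve()` starts the listener, and a POST to `/run` with a JSON body such as `{"task": "summarize last week's tickets"}` returns the step-by-step results. The container holds no state between requests, which is what lets the platform restart or reschedule it freely.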

The service exposes a /run endpoint: it takes a task, breaks it into steps, and executes them using an LLM.

That's it. No custom orchestration code. No hand-rolled scaling logic. No worrying about what happens when a node fails, because ACK reschedules your pods automatically.

There are other ways to build agents. You could use serverless functions, manage your own VMs, or stitch together services from multiple clouds. Here's why developers are choosing the ACS/ACK/OSS triad:

Predictable performance. Kubernetes gives you fine-grained control over resource allocation. Your agents get consistent compute, not the cold-start latency of serverless. Set CPU and memory requests and limits per container, and ACK guarantees those resources or refuses to schedule the pod. No noisy neighbors, no resource contention surprises.

Elastic scale without waste. The combination of HPA and Cluster Autoscaler means your agents scale from a small baseline to thousands of requests and back down automatically. During peak hours, you run dozens of replicas. At night, you run the minimum. Your cloud bill tracks actual usage far more closely than a statically provisioned cluster would.

Portable patterns. The skills you build here transfer. Kubernetes is Kubernetes. If you learn to debug agents on ACK, you can debug them anywhere. Your manifests, Helm charts, and operational playbooks work across clouds. You're not locked into Alibaba Cloud — you're investing in portable expertise.

Cost efficiency at scale. OSS pricing is storage-class based. Infrequently accessed session data goes to Archive storage at a fraction of the cost. ACK's cluster autoscaler shuts down unused nodes, so you pay less for idle capacity. For sustained, high-throughput agent systems, this cost structure can come in well below per-invocation serverless pricing; run the numbers for your own traffic profile.

Single-vendor integration. No egress charges between ACS, ACK, and OSS. No IAM configuration across cloud boundaries. One support channel when things go wrong. Your networking, storage, compute, and security policies integrate seamlessly because they're built by the same vendor to work together.

Compliance and governance. Alibaba Cloud maintains attestations and certifications such as SOC 2 and ISO 27001, along with regional standards, and provides tooling to support GDPR obligations. ACK and OSS support resource tagging for cost allocation, access logging for audit trails, and encryption for data protection. Build agents that satisfy enterprise security requirements without custom compliance engineering.

Here's a reality check: AI agents can and do delete data accidentally. An agent with write access to your database, file system, or cloud storage can misinterpret a command, hallucinate a destructive operation, or simply make a mistake. When that happens, having a backup isn't optional — it's the difference between a minor incident and a catastrophic data loss.

This is why enterprise backup is non-negotiable when deploying agents at scale. OSS provides the foundation for this with:

Versioning. Enable object versioning on your OSS buckets, and every overwrite creates a preserved copy. If an agent corrupts or deletes a file, you can restore the previous version instantly.
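To make the recovery behavior concrete, here is a toy in-memory model of a versioned bucket. It is not the OSS API: the class `VersionedBucket` and its methods are hypothetical and only illustrate that overwrites and deletes add to an object's history instead of destroying it.

```python
class VersionedBucket:
    """Toy model: every write or delete appends to the object's history."""

    def __init__(self):
        self._history = {}  # key -> list of versions; None marks a delete

    def put(self, key, data):
        self._history.setdefault(key, []).append(data)

    def delete(self, key):
        # A delete adds a marker; earlier versions remain recoverable.
        self._history.setdefault(key, []).append(None)

    def get(self, key):
        history = self._history.get(key, [])
        if not history or history[-1] is None:
            raise KeyError(key)
        return history[-1]

    def restore_previous(self, key):
        """Re-publish the newest surviving version older than the current one."""
        for version in reversed(self._history.get(key, [])[:-1]):
            if version is not None:
                self._history[key].append(version)
                return version
        raise KeyError(f"no earlier version of {key}")


bucket = VersionedBucket()
bucket.put("kb/index.json", b"good index")
bucket.put("kb/index.json", b"corrupted by agent")
bucket.restore_previous("kb/index.json")
print(bucket.get("kb/index.json"))
```

Note that the restore is itself a new version, so the corrupted copy stays in the history for later investigation.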

Cross-region replication. Replicate critical data to a secondary OSS bucket in a different region. Configure the replication rule so that deletions are not propagated; then, even if an agent goes rogue and deletes data from the primary bucket, your replica remains untouched.

Lifecycle policies with archive tiers. Not every backup needs to be instantly accessible. OSS Archive and Cold Archive tiers let you retain historical data at a fraction of the cost, with retrieval times measured in minutes or hours — acceptable for disaster recovery scenarios.

Point-in-time recovery. Combine OSS with Alibaba Cloud Database Backup Service for your structured data. When an agent executes a bad query that wipes a table, you can restore to the moment before the mistake.

The pattern is simple: treat agents as you would any other automated system with write access — assume they will eventually make a mistake, and design your architecture to survive it. The convenience of autonomous agents isn't worth the risk of unrecoverable data loss.

OpenClaw's moment has passed, but the architecture it pioneered is now available as a managed platform. Commercial offerings have taken the experimental ideas and made them production-ready. Developers aren't spending weekends configuring infrastructure anymore — they're shipping agents on ACS, ACK, and OSS.

The agent era isn't coming. It's here. And the developers building it are choosing foundations that let them focus on what matters: the agent logic, not the infrastructure beneath it.

The question now isn't whether to adopt agents — it's whether your stack lets you build them without the yak shaving.

