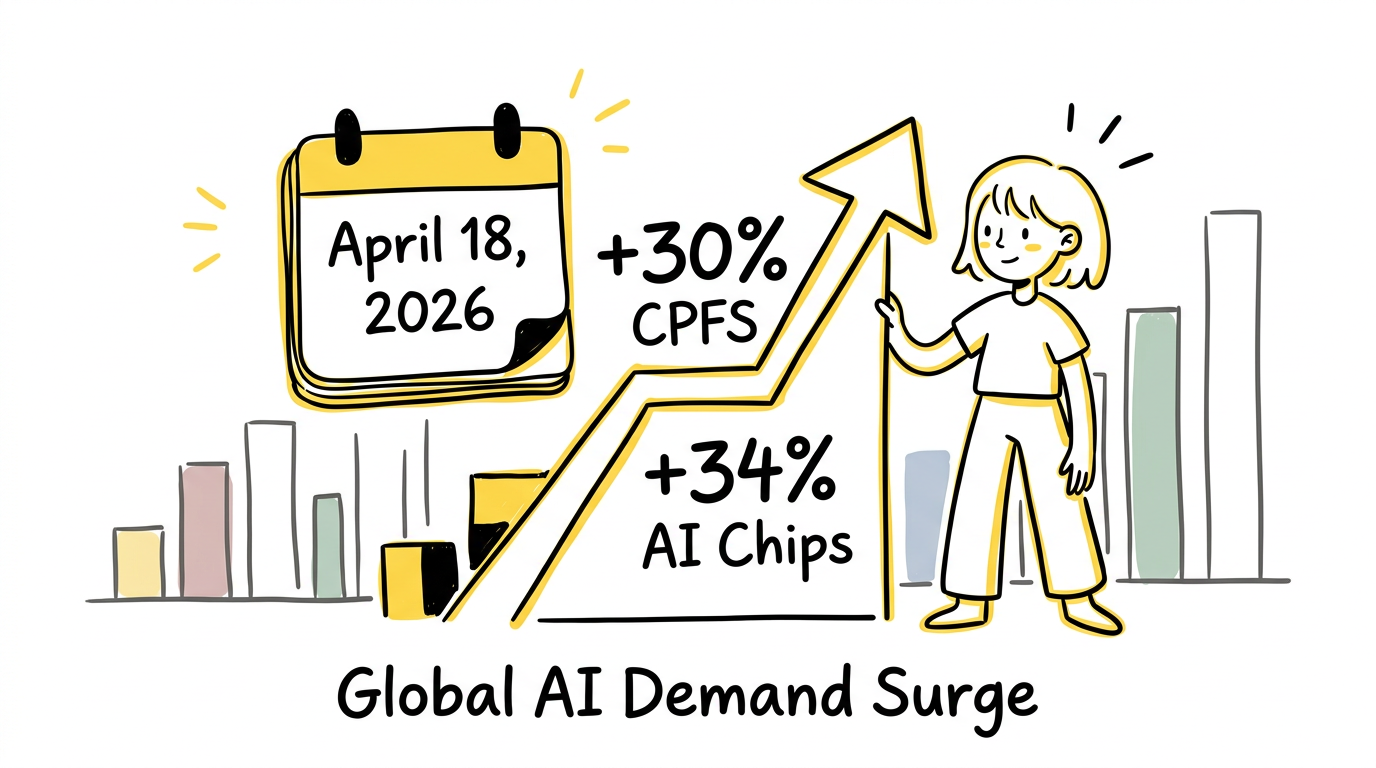

On March 18, 2026, Alibaba Cloud made an announcement that sent ripples through the AI infrastructure world: price increases of up to 34% on AI computing and storage products, effective April 18, 2026. The headline number grabbed attention, but the real story lies in one specific product line that saw a 30% increase: CPFS (Cloud Parallel File Storage).

This isn't just another routine price adjustment. It's a signal. A signal that the infrastructure powering the AI revolution is hitting capacity constraints. A signal that the "data feeding" problem for AI training has become so critical that enterprises are willing to pay premium prices for storage that can keep their GPUs fed.

Sources:

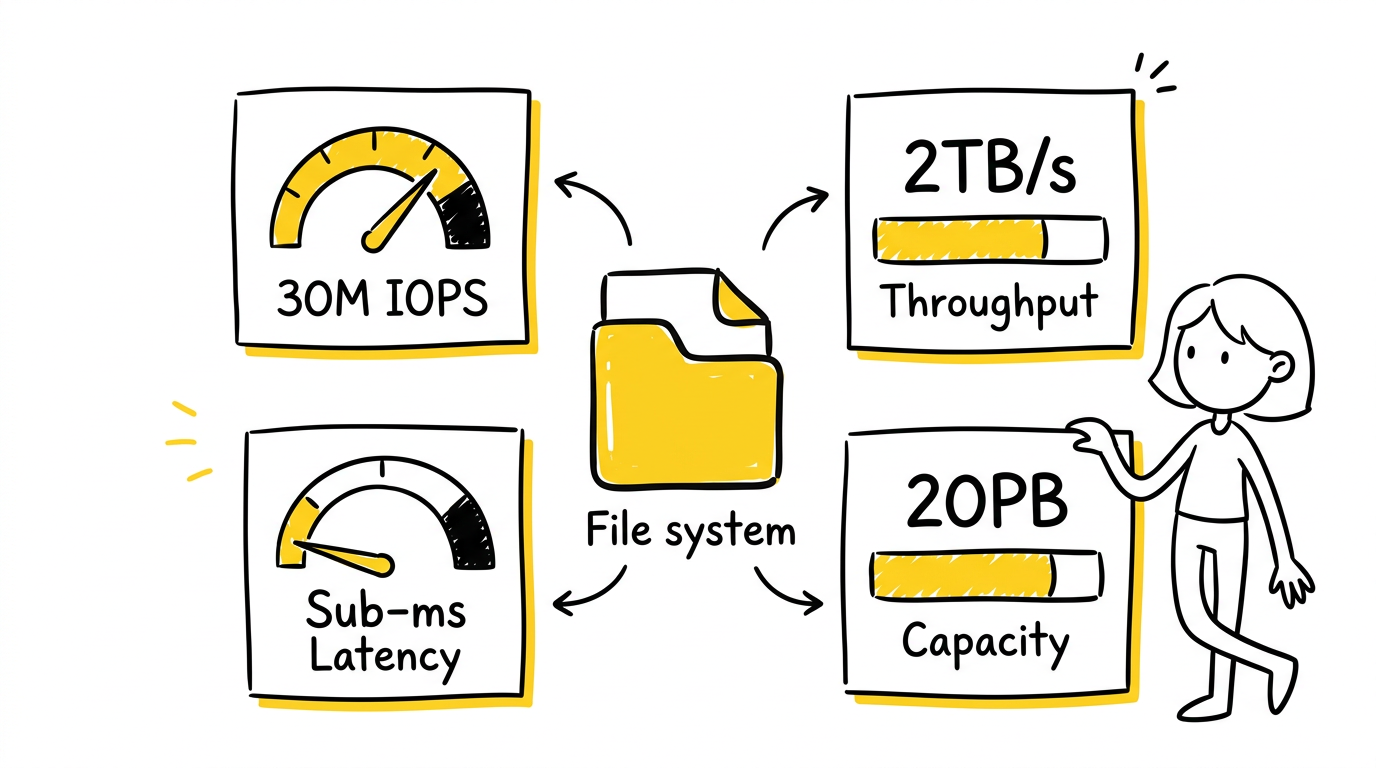

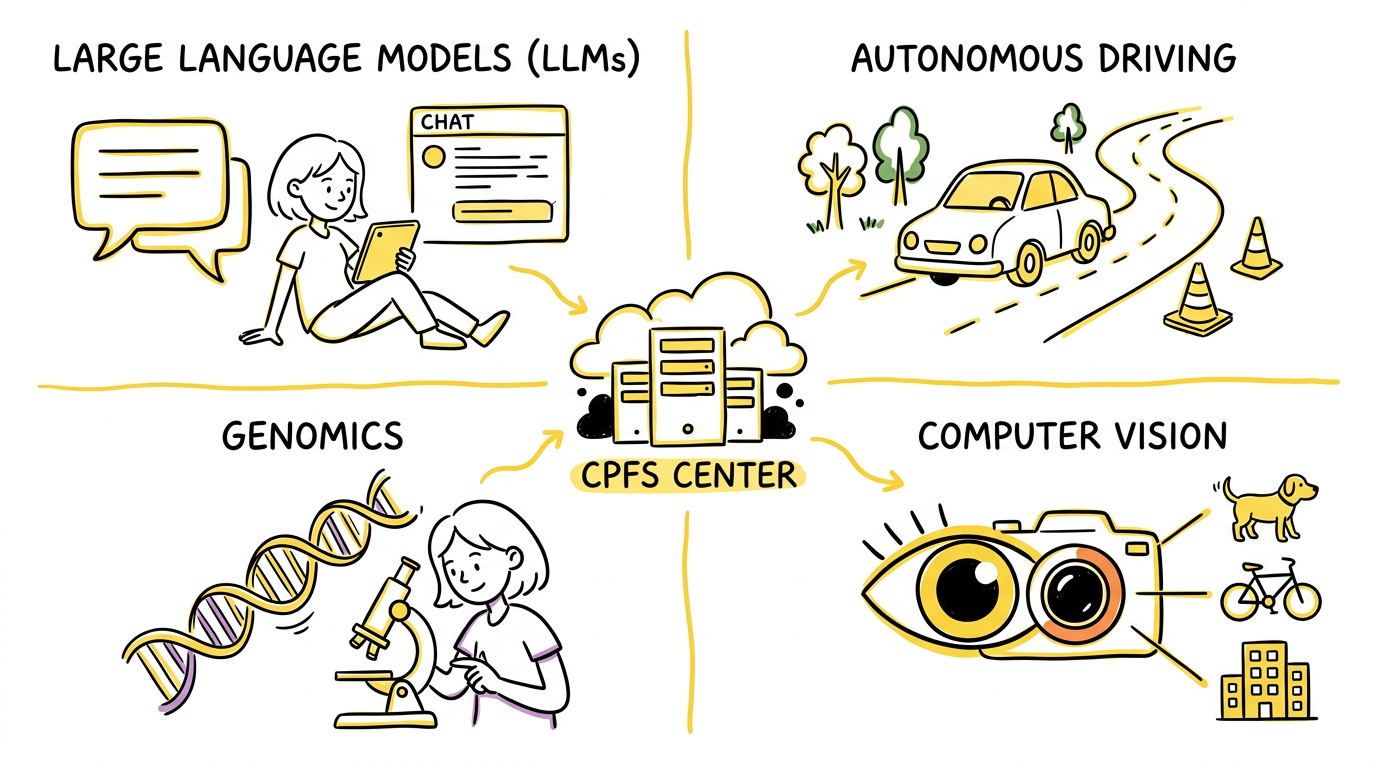

CPFS (Cloud Parallel File Storage) is Alibaba Cloud's fully managed, serverless parallel file system designed specifically for high-performance computing and AI workloads. But to understand why it's commanding premium pricing, we need to look at what it actually delivers.

CPFS isn't your ordinary cloud storage. It's built for scenarios where milliseconds matter, delivering throughput of up to 2 TB/s and millions of IOPS.

Source: Alibaba Cloud CPFS Product Page

These numbers aren't just impressive on a spec sheet. They're the difference between a GPU cluster running at 95% utilization and one that is starved for data and running at 50%.

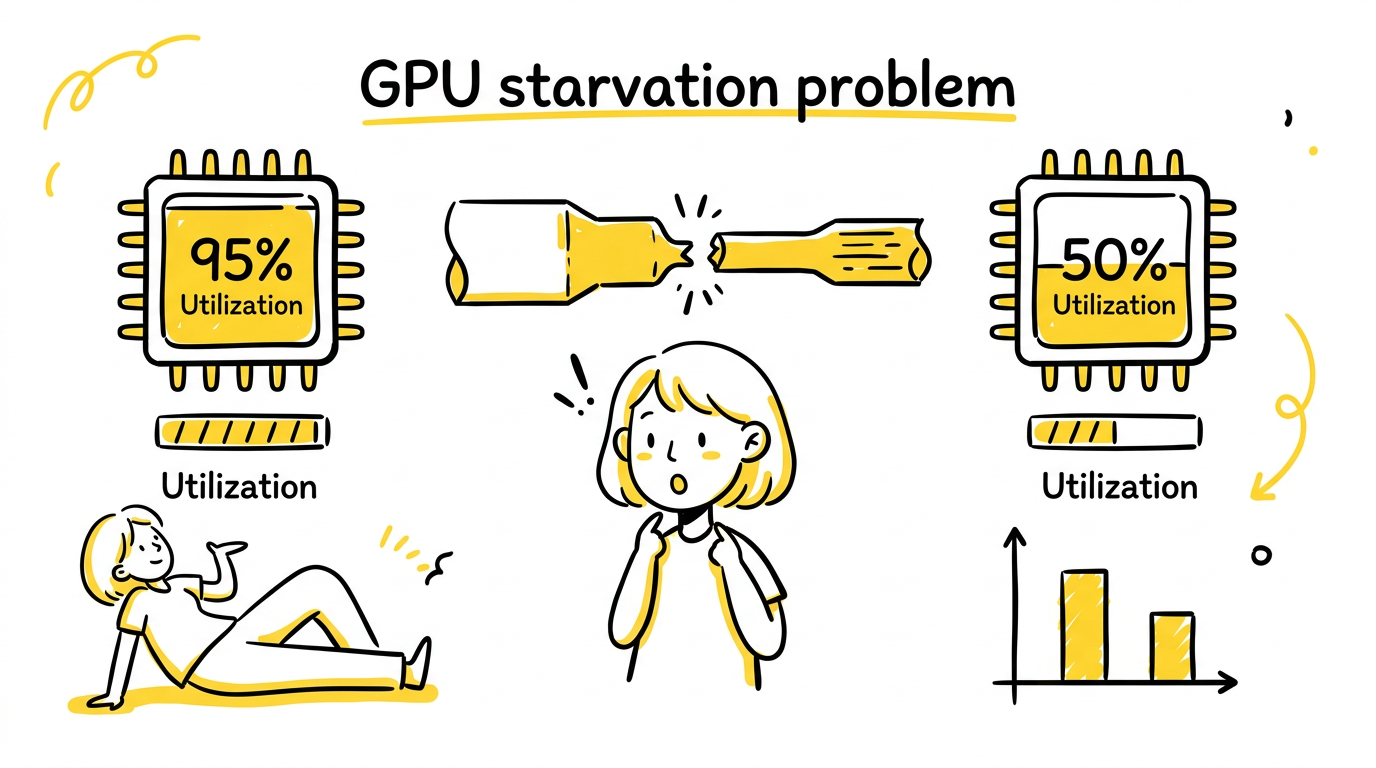

Here's the uncomfortable truth about AI training: your GPUs are only as fast as your storage can feed them.

Modern AI training — especially for large language models — involves massive datasets that don't fit in GPU memory. During training, the system must continuously stream data from storage to GPUs. If the storage can't keep up, GPUs sit idle, burning electricity and rental costs while waiting for data.
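A back-of-the-envelope model makes the "GPUs are only as fast as your storage" point concrete. This sketch assumes the data loader prefetches the next batch while the GPU works on the current one, so each step takes as long as the slower of the two. `step_time` and `gpu_utilization` are illustrative helpers, and the timings are hypothetical.

```python
def step_time(compute_s: float, io_s: float, overlapped: bool = True) -> float:
    """Wall-clock time for one training step.

    With prefetching (overlapped=True), loading the next batch runs
    concurrently with compute, so the slower phase sets the pace.
    Without it, the two phases run back to back.
    """
    return max(compute_s, io_s) if overlapped else compute_s + io_s


def gpu_utilization(compute_s: float, io_s: float, overlapped: bool = True) -> float:
    """Fraction of each step the GPU spends computing rather than waiting."""
    return compute_s / step_time(compute_s, io_s, overlapped)


# Hypothetical step: 200 ms of GPU compute per batch.
print(gpu_utilization(0.200, 0.150))  # storage keeps up -> 1.0
print(gpu_utilization(0.200, 0.400))  # storage twice as slow as compute -> 0.5
```

Without prefetching (`overlapped=False`), I/O time adds directly to every step. For that reason, even fast storage benefits from overlapping reads with compute.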

An NVIDIA H100 GPU costs approximately $2-3 per hour to rent in the cloud. When storage bottlenecks cause GPU utilization to drop from 95% to 50%, you pay nearly twice as much (95/50 = 1.9x) for every unit of useful computation. At scale, with hundreds or thousands of GPUs, this inefficiency translates to millions of dollars in wasted compute budget.
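The arithmetic behind that claim fits in a few lines. This minimal sketch assumes $2.50 per hour, the midpoint of the quoted range. The 1,000-GPU cluster running for a 30-day month is a hypothetical example, not a figure from the announcement.

```python
def cost_per_useful_gpu_hour(price_per_hour: float, utilization: float) -> float:
    """Rental price divided by the fraction of time the GPU does real work."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    return price_per_hour / utilization


def idle_spend(num_gpus: int, hours: float, price_per_hour: float, utilization: float) -> float:
    """Money spent on GPU-hours during which the GPUs were waiting, not computing."""
    return num_gpus * hours * price_per_hour * (1 - utilization)


PRICE = 2.50  # assumed midpoint of the $2-3/hour range
print(cost_per_useful_gpu_hour(PRICE, 0.95))  # ~2.63
print(cost_per_useful_gpu_hour(PRICE, 0.50))  # 5.0
# Hypothetical 1,000-GPU cluster over a 30-day month (720 hours):
print(idle_spend(1000, 720, PRICE, 0.50) - idle_spend(1000, 720, PRICE, 0.95))  # ~810,000
```

At 50% utilization, each useful GPU-hour costs 1.9 times as much as at 95%. In this hypothetical cluster, the extra idle time alone costs roughly $810,000 per month.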

Traditional cloud storage systems (object storage, standard file systems) weren't designed for the access patterns of AI training.

Standard storage systems crumble under these patterns. Metadata servers become bottlenecks. Throughput collapses. GPUs starve.

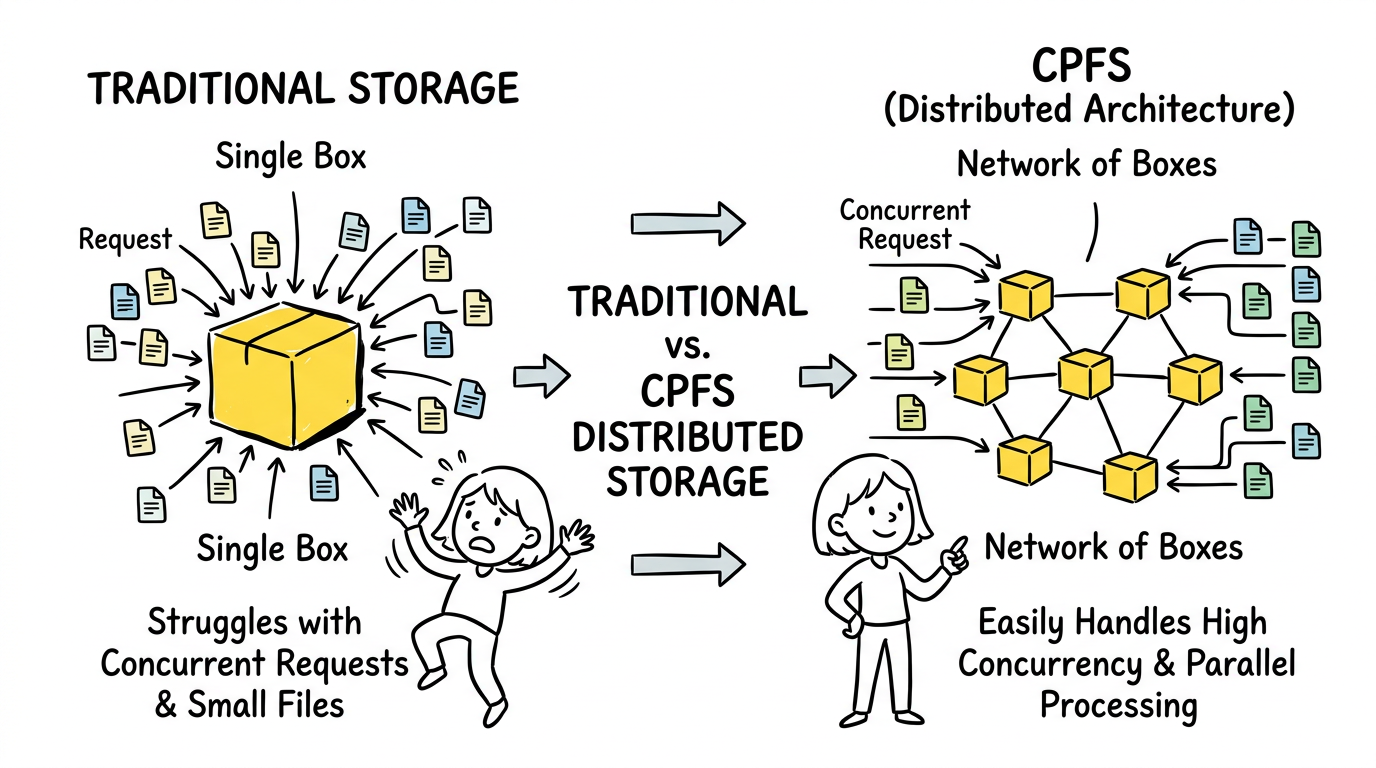

CPFS is built on a distributed parallel architecture that fundamentally rethinks how storage serves AI workloads.

Unlike traditional file systems with centralized metadata servers, CPFS distributes both data and metadata across the cluster. As a result, no single metadata server has to handle every file open and lookup.
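The article doesn't describe how CPFS assigns metadata to servers, so the following is only a generic illustration of the idea. Each path is hashed to one of several metadata shards, so lookups for different files land on different servers instead of one central node. The shard count and file paths are hypothetical.

```python
import hashlib
from collections import Counter


def metadata_shard(path: str, num_shards: int) -> int:
    """Map a file path to a metadata shard using a stable hash.

    A stable hash (not Python's per-process randomized hash()) keeps the
    mapping identical across clients and restarts.
    """
    digest = hashlib.sha256(path.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_shards


# Spread 100,000 hypothetical sample files over 16 metadata shards.
paths = [f"/datasets/images/{i:08d}.jpg" for i in range(100_000)]
load = Counter(metadata_shard(p, 16) for p in paths)
print(min(load.values()), max(load.values()))  # both close to 100_000 / 16 = 6,250
```

Plain modulo hashing is used here only for brevity. Production systems typically use consistent hashing or directory-based partitioning, so adding servers doesn't remap every file.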

CPFS supports multiple access protocols — POSIX, MPI-IO, and NFS — enabling it to integrate with existing AI frameworks without modification. Whether you're running PyTorch distributed training, TensorFlow, or custom MPI-based workloads, CPFS presents a familiar interface.
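Because CPFS presents a POSIX interface, training code can treat it like a local directory. The minimal sketch below reads samples using nothing but the Python standard library and no CPFS-specific API. The mount point `/mnt/cpfs/dataset` in the comment is a hypothetical example. The demo uses a temporary directory so it runs anywhere.

```python
import tempfile
from pathlib import Path
from typing import Iterator


def iter_samples(root: str, suffix: str = ".bin") -> Iterator[bytes]:
    """Yield the raw bytes of every matching file under root, in sorted order.

    Ordinary pathlib calls are all that's needed on a POSIX mount; on CPFS,
    root would be something like the hypothetical "/mnt/cpfs/dataset".
    """
    for path in sorted(Path(root).rglob(f"*{suffix}")):
        yield path.read_bytes()


# Demo against a local temporary directory standing in for the mount.
with tempfile.TemporaryDirectory() as root:
    for i in range(3):
        Path(root, f"sample_{i}.bin").write_bytes(bytes([i]) * 4)
    samples = list(iter_samples(root))
    print(len(samples), samples[0])  # 3 b'\x00\x00\x00\x00'
```

A framework data loader, such as a PyTorch `Dataset`, can wrap the same file reads unchanged.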

AI datasets have hot and cold data. The data being actively trained on needs maximum performance. Older checkpoints and historical data need cost-effective storage. CPFS integrates with Alibaba Cloud Object Storage Service (OSS) to provide automatic tiering — hot data stays on high-performance CPFS, cold data moves to cost-effective object storage.
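The article doesn't spell out the rules CPFS uses to decide what moves to OSS. As an illustration of the general idea, here is a hypothetical policy based on last-access age: files untouched for longer than a threshold become candidates for the cold tier. The 7-day threshold and the file names are made up.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class FileInfo:
    path: str
    last_access: datetime


def split_tiers(files: list[FileInfo], now: datetime, cold_after: timedelta):
    """Partition file paths into (hot, cold) lists by time since last access."""
    hot, cold = [], []
    for f in files:
        (cold if now - f.last_access > cold_after else hot).append(f.path)
    return hot, cold


now = datetime(2026, 4, 18)
files = [
    FileInfo("train/shard-0001.bin", now - timedelta(hours=2)),
    FileInfo("ckpt/step-1000.pt", now - timedelta(days=30)),
    FileInfo("ckpt/step-9000.pt", now - timedelta(days=1)),
]
hot, cold = split_tiers(files, now, cold_after=timedelta(days=7))
print(hot)   # ['train/shard-0001.bin', 'ckpt/step-9000.pt']
print(cold)  # ['ckpt/step-1000.pt']
```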

Perhaps most importantly for modern AI operations, CPFS is serverless. Performance scales automatically with demand. When you spin up a massive training cluster, CPFS scales to match. When training completes and you scale down, storage costs scale down with you. No over-provisioning. No capacity planning headaches.

Let's examine specific AI workloads where CPFS isn't just beneficial — it's essential.

Training a 70B+ parameter model means streaming massive datasets to the GPUs while periodically writing very large checkpoints.

Without parallel file storage, checkpoint writes alone can stall training for minutes. With CPFS's 2 TB/s throughput, a checkpoint write can shrink to a brief pause rather than a prolonged stall.
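A rough checkpoint-size calculation puts "stall training for minutes" in numbers. It assumes mixed-precision training with Adam, which stores bf16 weights, an fp32 master copy, and two fp32 optimizer moments (about 14 bytes per parameter). Real checkpoint sizes vary by framework and sharding strategy. The calculation also assumes one job gets the full 2 TB/s quoted above, which an aggregate figure may not guarantee. For comparison, it uses a hypothetical 5 GB/s storage system.

```python
def checkpoint_bytes(num_params: float, bytes_per_param: float) -> float:
    """Approximate on-disk size of a full training checkpoint."""
    return num_params * bytes_per_param


def write_seconds(num_bytes: float, throughput_bytes_per_s: float) -> float:
    """Time to write a checkpoint at a sustained throughput."""
    return num_bytes / throughput_bytes_per_s


# Assumption: bf16 weights (2 B) + fp32 master weights (4 B)
# + two fp32 Adam moments (4 B + 4 B) per parameter.
BYTES_PER_PARAM = 2 + 4 + 4 + 4  # 14
size = checkpoint_bytes(70e9, BYTES_PER_PARAM)
print(size / 1e12)                    # ~0.98 TB
print(write_seconds(size, 2e12))      # ~0.49 s at 2 TB/s
print(write_seconds(size, 5e9) / 60)  # ~3.3 min at a hypothetical 5 GB/s
```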

Autonomous vehicle training involves enormous volumes of camera, lidar, and other sensor data.

The small file problem is acute here — a single hour of driving might generate millions of individual sensor frames. CPFS's ability to handle millions of IOPS makes it ideal for this workload.
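Multiplying out the reads a training job issues shows how quickly small-file workloads reach IOPS territory. Every number below is a hypothetical placeholder, not a measurement.

```python
def required_file_reads_per_second(num_gpus: int, samples_per_gpu_per_s: float,
                                   files_per_sample: int) -> float:
    """Small-file reads per second the storage must sustain to keep every GPU busy."""
    return num_gpus * samples_per_gpu_per_s * files_per_sample


# Hypothetical cluster: 512 GPUs, 200 samples/s each, and each sample built
# from 6 camera frames stored as separate files.
print(required_file_reads_per_second(512, 200, 6))  # 614400
```

At these rates, the job needs over 600,000 file reads per second before counting metadata operations such as opens. This is why small-file IOPS, rather than raw bandwidth, can become the limiting factor.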

Genome sequencing and molecular dynamics simulations require both high throughput and compatibility with existing HPC tooling.

CPFS's combination of high performance and POSIX compatibility makes it a natural fit for bioinformatics pipelines originally built for on-premises HPC clusters.

Training vision models on billions of images presents unique challenges, because each image is often stored as its own small file.

CPFS's distributed metadata architecture prevents any single metadata server from becoming a bottleneck when training on large image datasets.

Modern AI training doesn't happen on single machines. It happens across clusters of hundreds or thousands of GPUs distributed across multiple nodes. This creates a storage challenge that traditional systems simply can't handle.

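In multi-node training, poorly configured storage and networking can cap GPU utilization at 40-50%. This happens because every node reads training data and writes checkpoints to the same shared storage at the same time, so any bandwidth or metadata limit is hit by the whole cluster at once.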

CPFS is designed for exactly this scenario.
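The 40-50% figure can be read as a bandwidth problem. When every GPU needs a steady input stream from shared storage, aggregate demand grows with cluster size. This sketch computes the utilization ceiling when data loading is purely bandwidth-bound. The node counts, per-GPU rates, and storage figures are hypothetical. The 2,000 GB/s case corresponds to the 2 TB/s quoted earlier, assuming a single job could use all of it.

```python
def bandwidth_bound_utilization(num_nodes: int, gpus_per_node: int,
                                gb_per_s_per_gpu: float, storage_gb_per_s: float) -> float:
    """Upper bound on GPU utilization when data loading is bandwidth-bound.

    Assumes every GPU needs gb_per_s_per_gpu of input to stay busy and the
    shared storage delivers at most storage_gb_per_s in aggregate.
    """
    demand = num_nodes * gpus_per_node * gb_per_s_per_gpu
    return min(1.0, storage_gb_per_s / demand)


# Hypothetical job: 128 nodes x 8 GPUs, each GPU consuming 0.5 GB/s (512 GB/s total).
print(bandwidth_bound_utilization(128, 8, 0.5, 256))   # 0.5
print(bandwidth_bound_utilization(128, 8, 0.5, 2000))  # 1.0
```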

The 30% CPFS price increase isn't arbitrary. It reflects fundamental supply and demand dynamics in AI infrastructure.

The explosion of generative AI has created unprecedented demand for AI training infrastructure. Every major tech company, every startup, every enterprise is building AI capabilities. The demand for high-performance storage that can feed GPU clusters has outstripped supply.

High-performance parallel file systems run on premium hardware, including NAND flash storage and high-speed networking.

As AI demand has surged, the cost of this infrastructure has risen. Supply chain constraints affect everything from NAND flash to networking chips.

Enterprises running AI training at scale have done the math. Paying 30% more for storage that keeps GPUs at 95% utilization is cheaper than paying for GPUs that sit idle because of storage bottlenecks. The price increase reflects the value CPFS delivers — it's not just storage, it's GPU efficiency insurance.
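The same cost-per-useful-hour idea can sketch the "done the math" argument. The inputs are assumptions, not Alibaba Cloud pricing: $2.50 per GPU-hour, a hypothetical $0.20 of storage cost attributed to each GPU-hour, and utilization of 50% versus 95%.

```python
def blended_cost_per_useful_hour(gpu_price: float, storage_price: float,
                                 utilization: float) -> float:
    """Total hourly spend (GPU + storage) divided by the fraction of GPU time doing work."""
    return (gpu_price + storage_price) / utilization


GPU = 2.50      # $/GPU-hour, assumed midpoint of the $2-3 range
STORAGE = 0.20  # $/GPU-hour of attributed storage cost, hypothetical

slow = blended_cost_per_useful_hour(GPU, STORAGE, 0.50)         # cheaper storage, starved GPUs
fast = blended_cost_per_useful_hour(GPU, STORAGE * 1.30, 0.95)  # 30% pricier storage, fed GPUs
print(round(slow, 2), round(fast, 2))  # 5.4 2.91
```

Under these assumptions, the 30% storage increase adds $0.06 per GPU-hour. Meanwhile, the utilization gain cuts the cost of each useful GPU-hour by nearly half.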

The CPFS price hike is a harbinger of broader trends in AI infrastructure.

The future of AI infrastructure involves tighter integration between storage and compute.

CPFS represents a category of storage purpose-built for AI, and that category is likely to grow.

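For teams building AI systems, the message is clear: storage performance now directly determines GPU efficiency and belongs in the same budget conversation as compute.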

CPFS's 30% price increase is more than a vendor pricing decision. It's a market signal that the infrastructure layer of the AI revolution is maturing, that the "easy" gains have been captured, and that the next phase of AI development requires serious investment in high-performance infrastructure.

For enterprises training large AI models, CPFS has become essential infrastructure, not a nice-to-have. The ability to feed GPUs at scale, to checkpoint massive models without stalling training, and to handle the unique I/O patterns of AI workloads — these capabilities justify the premium.

As AI models continue to grow — trillion-parameter models are on the horizon — the storage systems that can feed them will become even more critical. The CPFS price hike is just the beginning. The age of AI-optimized infrastructure has arrived, and it comes with a premium price tag.

More Posts by Justin See