By Siddharth Pandey, Solution Architect at Alibaba Cloud

Organizations have stored information about their applications in databases for decades, and this has worked well. Storing information in databases leads us to think about information gathering in terms of objects or things. A common example is a 'user': we gather information from the 'user' perspective and store the 'state' relevant to this user (age, address, etc.) in the database. However, the enormous amount of data exchanged between applications today has produced a new wave of information gathering that suggests thinking about 'events' first, rather than objects or things first.

Events also have a state, which is an indication of what has happened at a point in time, and this state is stored in a structure called a log. Various applications access this log to post and consume information about events in real time. This is where the concept of event streaming comes in, and Apache Kafka with it.

Event streaming captures data in real-time from event sources like databases, sensors, mobile devices, cloud services, and software applications in the form of streams of events. It stores and processes these event streams in real-time and routes the event streams to different destination technologies as needed.

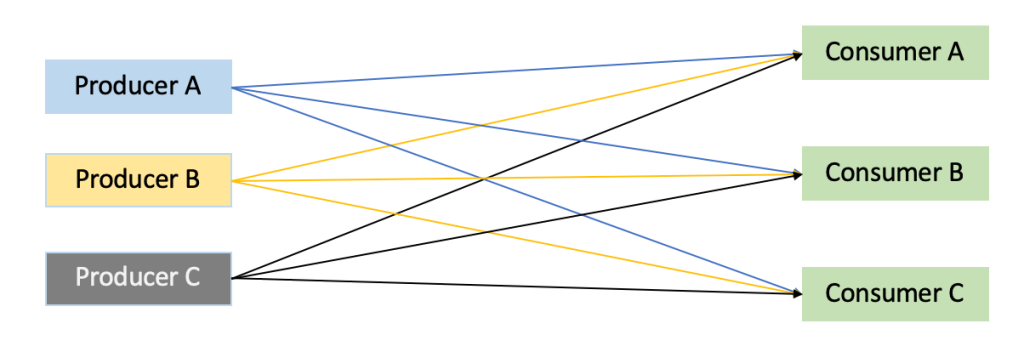

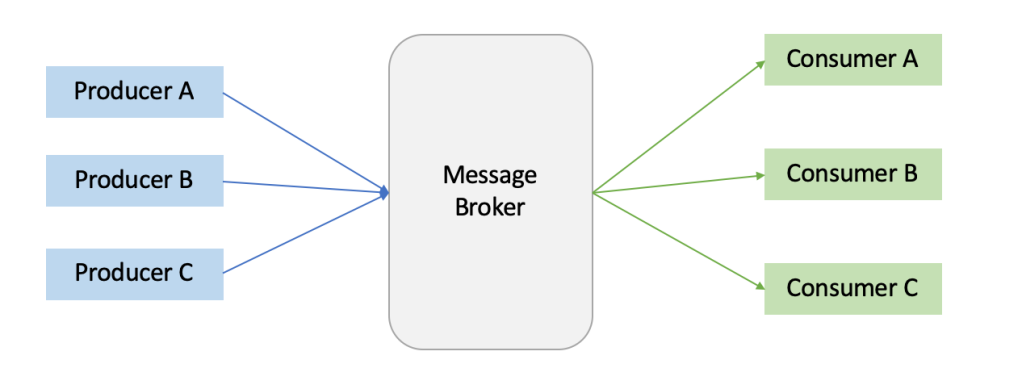

Apache Kafka is an open-source distributed event streaming platform used by organizations worldwide to manage event streaming and to work as a communication layer between applications. The events produced by applications are stored in log format, and other applications can consume data from the log as required. Without a solution like Kafka, organizations would have to connect applications to each other individually and maintain each of those connections. A solution like Kafka becomes a common connection point between all the applications that need to communicate with each other.

In the absence of a common messaging solution, all applications would need to be connected to each other with an individual connection setup.

Let's look at the key terminology used in the solution to understand the core concepts of Apache Kafka:

Event: An event records when "something happens" in the world or your business. It is also known as message or data. Every event has a state, which is expressed in the form of key, value, timestamp, and other optional metadata.

Example:
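As a minimal sketch, the payment event discussed in this section (Bob paying $100 to Kevin) could be represented as a key, a value, a timestamp, and optional metadata. The class name `Event`, the field names, the timestamp, and the header are assumptions made for illustration; this is not Kafka's wire format.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class Event:
    """Illustrative event record: key, value, timestamp, optional metadata."""
    key: str
    value: str
    timestamp: datetime
    headers: dict = field(default_factory=dict)


# Hypothetical values: the timestamp and header are made up for this sketch.
payment = Event(
    key="Bob",
    value="Made a payment of $100 to Kevin",
    timestamp=datetime(2022, 1, 1, 12, 0, tzinfo=timezone.utc),
    headers={"source": "payment-app"},
)

print(payment.key, "->", payment.value)
```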

Producer: A producer is any application that sends data to Kafka. In the example above where Bob is paying $100 to Kevin, the event was probably produced by the payment application that published the payment information and sent it to Kafka for storage and processing.

Consumer: A consumer is any application that reads data from Kafka. In the example above, the payment application sent payment data to Kafka. Any application interested in this data can request Kafka to read it, given it has permission to do so. The consumer application here could be a payment history database or another application that uses this event information.

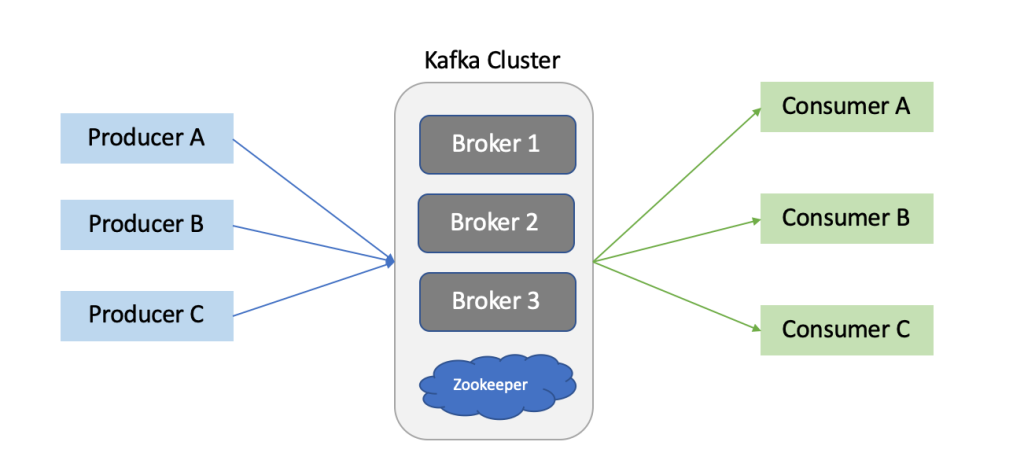

Broker: Kafka servers are known as brokers, and rightly so, as consumer and producer applications do not interact with each other directly but through Kafka servers that act as brokers in between.

Cluster: A Kafka cluster is a group of Kafka brokers deployed together to gain the benefits of distributed computing. Multiple brokers allow the cluster to handle a higher message load and also help ensure fault tolerance in the broker architecture.

ZooKeeper: In the context of Kafka, ZooKeeper is an application that works as a centralized service for managing the brokers. It maintains configuration information, handles naming, and provides distributed synchronization and group services for Kafka brokers.

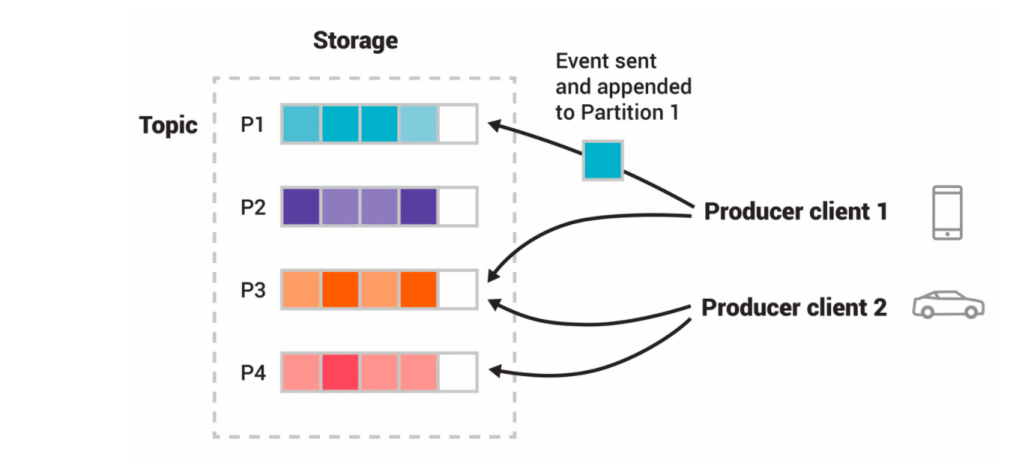

Topic: A topic is a unique name for a data stream that producer and consumer applications use. Data published by producers is segmented into various topics (Payment Data, Inventory Data, Customer Data, etc.). Consumer applications can subscribe to whichever topic they want to access data from. More than one producer can publish data to a single topic, and more than one consumer can pull data from a single topic.

Partition: We have established that brokers store data streams in topics. A data stream can range in size from a few bits to more than the storage capacity of an individual broker. For this reason, a data stream can be divided into smaller parts called partitions. Kafka can divide a topic into partitions and spread them among different brokers in the cluster. Creating multiple partitions and storing them on different brokers also helps Kafka establish fault tolerance in the system.

Offset: Offset is a sequence ID given to the message as it arrives in the partition. This ID is unique inside the partition and helps set up an address for the message. If we want to access a message in Kafka, all we need to know is the Topic name, Partition number, and the Offset.
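The addressing scheme described here (topic name, partition number, offset) can be illustrated with a toy in-memory log. This is a sketch only: the class `InMemoryLog`, the topic name `payments`, and the messages are hypothetical, and nothing here talks to a real Kafka broker.

```python
from collections import defaultdict


class InMemoryLog:
    """Toy append-only log keyed by (topic, partition). Not a Kafka client."""

    def __init__(self):
        self._partitions = defaultdict(list)

    def append(self, topic, partition, message):
        """Append a message and return the offset it was assigned."""
        records = self._partitions[(topic, partition)]
        records.append(message)
        return len(records) - 1  # offsets start at 0 and grow by 1 per message

    def read(self, topic, partition, offset):
        """Fetch one message by its full address."""
        return self._partitions[(topic, partition)][offset]


log = InMemoryLog()
first = log.append("payments", 0, "Bob pays Kevin $100")
second = log.append("payments", 0, "Kevin pays Bob $20")
other = log.append("payments", 1, "Alice pays Bob $5")

# The same offset value can exist in two partitions; it is only unique within one.
print(first, second, other)
print(log.read("payments", 0, 1))
```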

This example topic has four partitions P1–P4. Two different producer clients are publishing new events to the topic independently from each other by writing events over the network to the topic's partitions. Events with the same key (denoted by their color in the figure) are written to the same partition. Note: Both producers can write to the same partition if appropriate. - Source: kafka.apache.org
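The rule that events with the same key land in the same partition can be sketched with a stable hash taken modulo the partition count. The function name `choose_partition` is hypothetical, and CRC32 is used only as a convenient stand-in; Kafka's own clients use a different hash function.

```python
import zlib


def choose_partition(key: str, num_partitions: int) -> int:
    """Map a key to a partition with a stable hash (CRC32 as a stand-in)."""
    return zlib.crc32(key.encode("utf-8")) % num_partitions


NUM_PARTITIONS = 4  # matches the four partitions in the example topic

for key in ["Bob", "Kevin", "Bob"]:
    # Both "Bob" events print the same partition number.
    print(key, "-> partition", choose_partition(key, NUM_PARTITIONS))
```

Because the hash depends only on the key, every producer that uses the same function sends a given key to the same partition, which preserves per-key ordering.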

Consumer Group: This is a group of consumers that divide partitions among them to manage increasing workloads coming from Kafka brokers. When there are hundreds of producer applications sending data to multiple brokers in Kafka, a single consumer can get overwhelmed with the velocity of incoming messages. Therefore, a group of consumers can be created where partitions are assigned to these consumers to manage the consumption of data effectively.
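A simplified sketch of how partitions might be divided among the consumers in a group is shown below. The function `assign_partitions` and the consumer names are hypothetical, and round-robin is only one possible strategy; in a real deployment Kafka coordinates the assignment for the group.

```python
def assign_partitions(partitions, consumers):
    """Spread partitions over consumers round-robin; each partition gets one owner."""
    assignment = {consumer: [] for consumer in consumers}
    for index, partition in enumerate(partitions):
        owner = consumers[index % len(consumers)]
        assignment[owner].append(partition)
    return assignment


plan = assign_partitions(["P1", "P2", "P3", "P4"], ["consumer-a", "consumer-b"])
print(plan)
```

Each partition has exactly one owner within the group, so adding consumers (up to the number of partitions) reduces the load on each of them.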

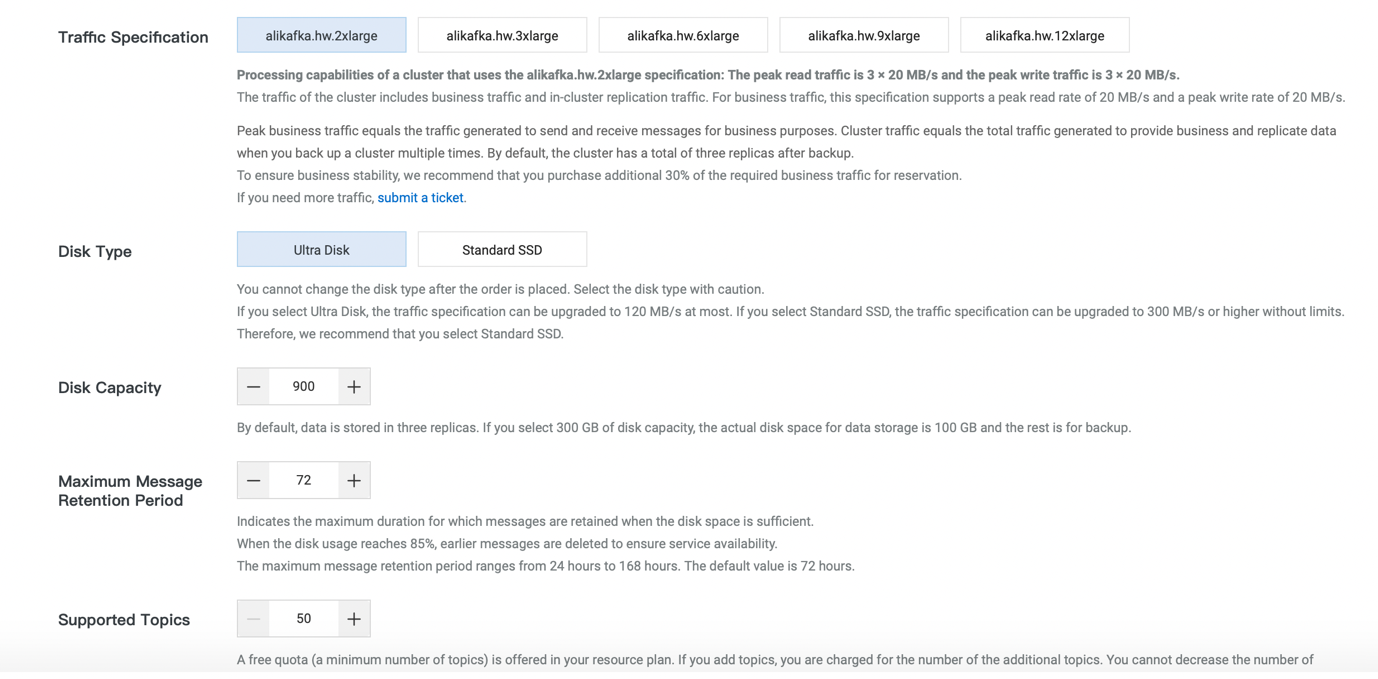

Select the instance specification: the traffic specification, disk type, disk capacity, maximum retention period for messages, and number of topics.

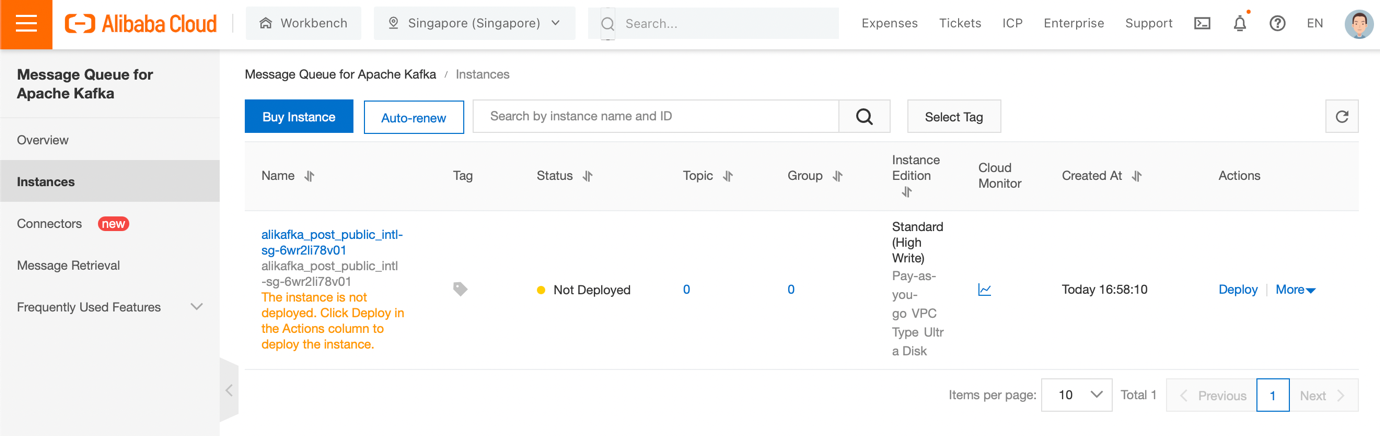

Once the instance is purchased, come back to the Instances page to see the newly created instance:

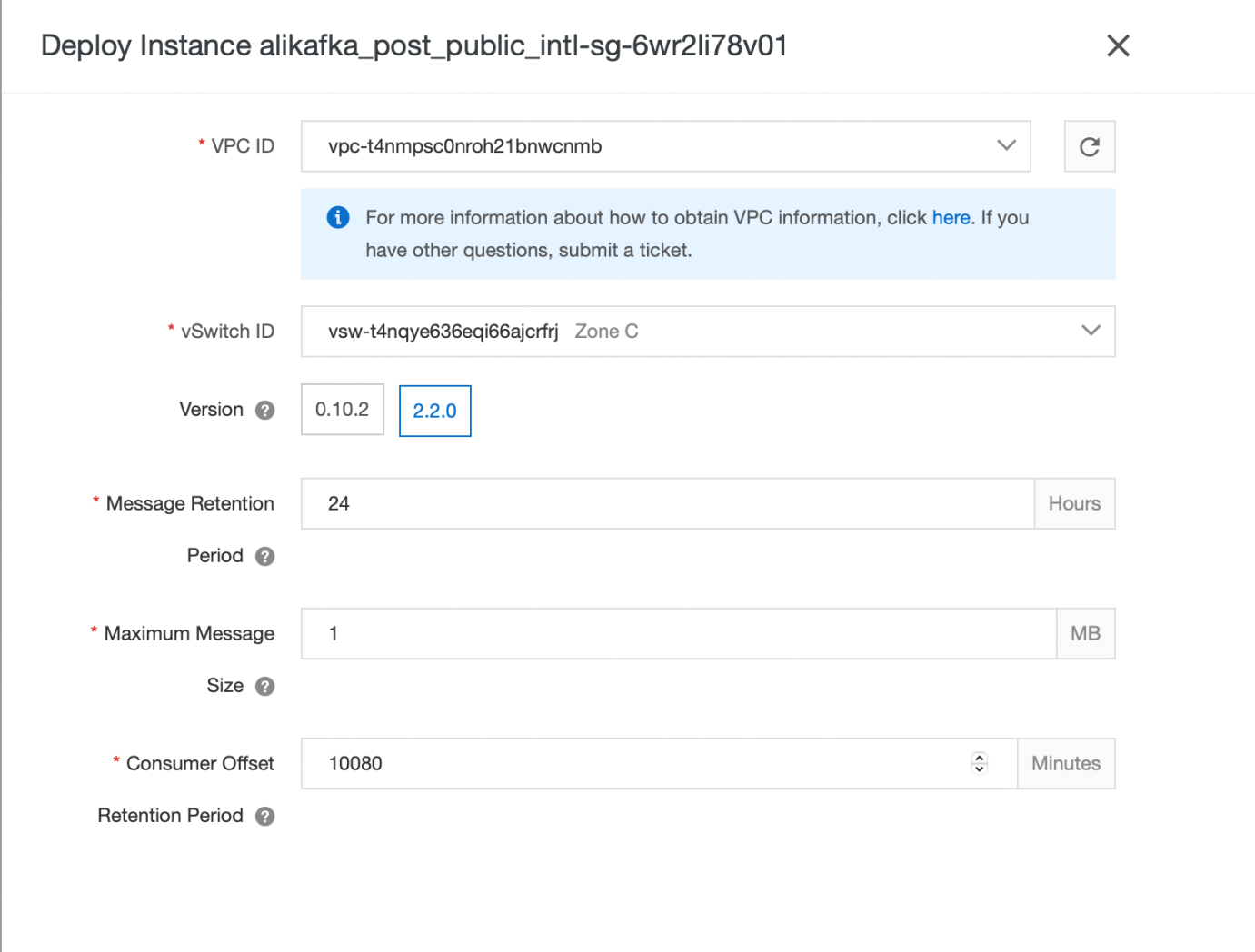

Now, we will deploy this instance to allow access from a VPC. Click Deploy. It will ask you for the configuration of the instance and the details of the VPC and vSwitch you want to deploy the instance in:

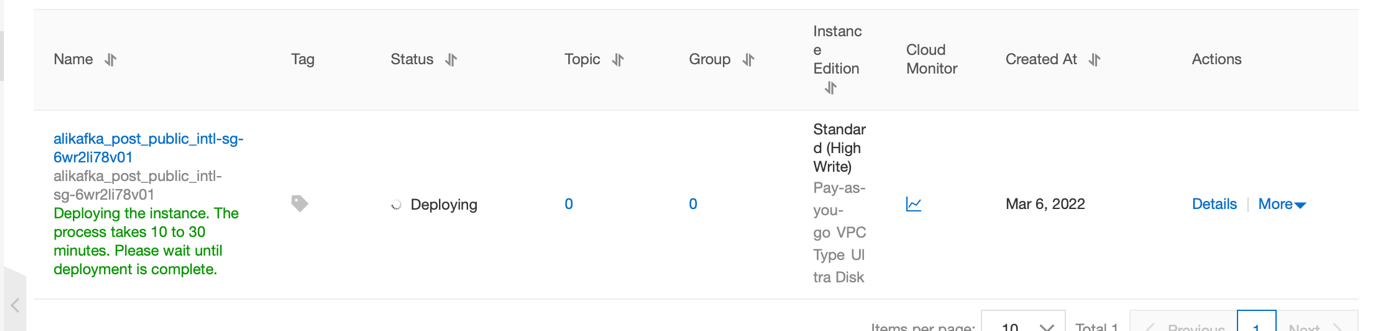

The deployment will take roughly ten minutes:

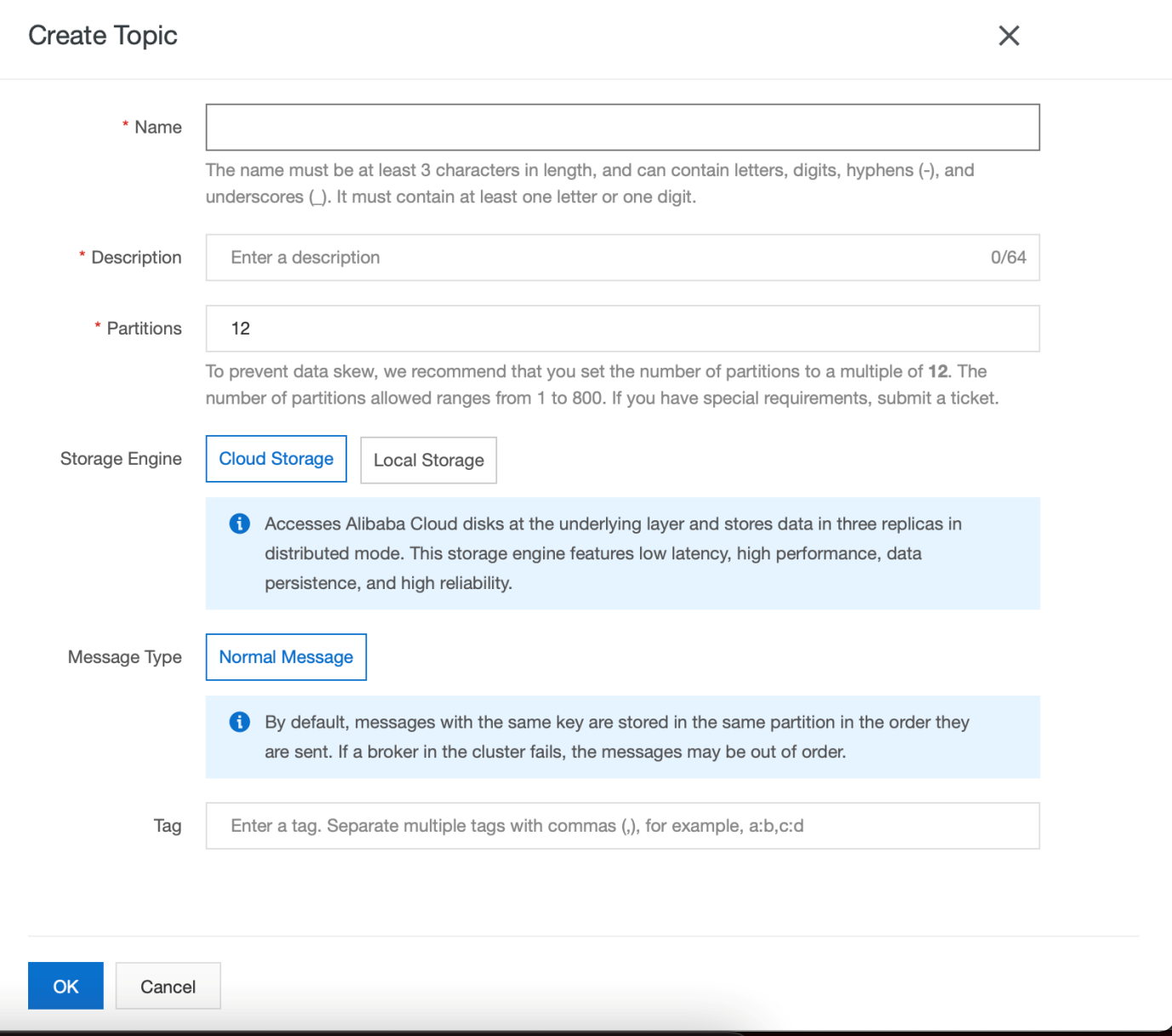

Once the instance is deployed, click Details and go to Topics. We will create a topic and a consumer group. Enter the topic name, number of partitions, and storage engine:

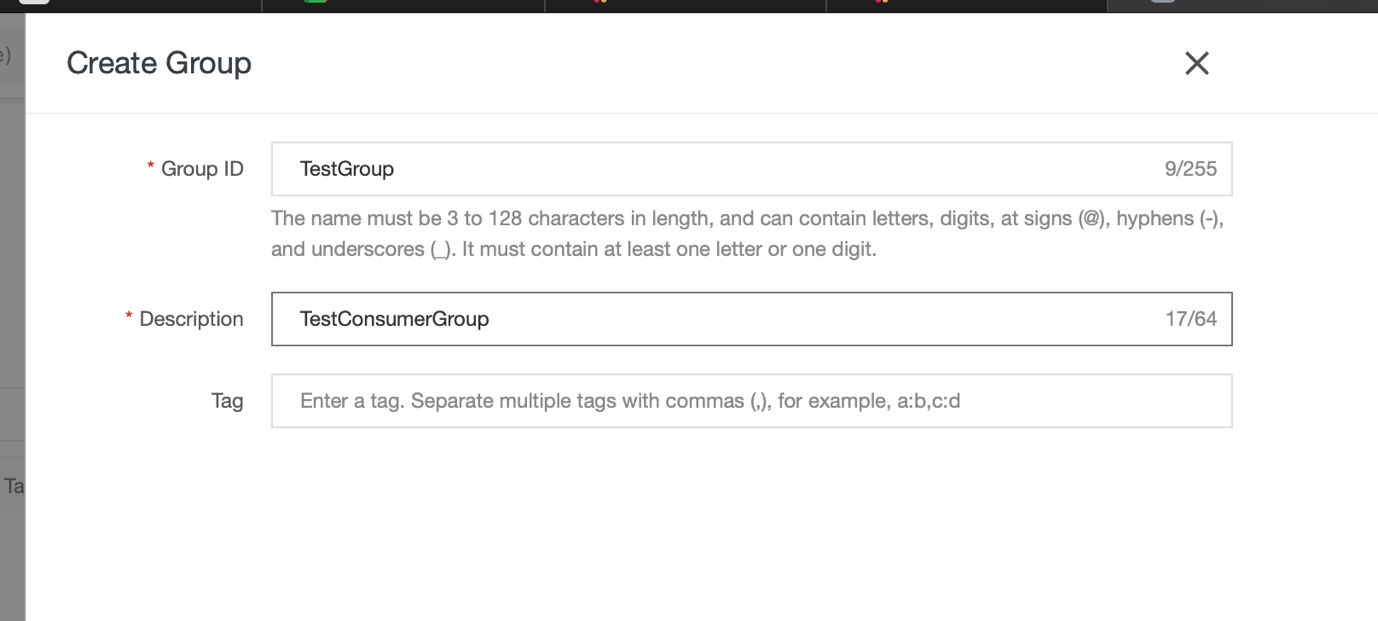

Now, go to groups on the left panel and click create group. Enter the Group Name and description and click OK. A consumer group will be created:

Now, we have a Kafka setup in place and ready to go. Next, we will create two applications (Producer and Consumer) and transmit events from Producer to Consumer via Kafka.

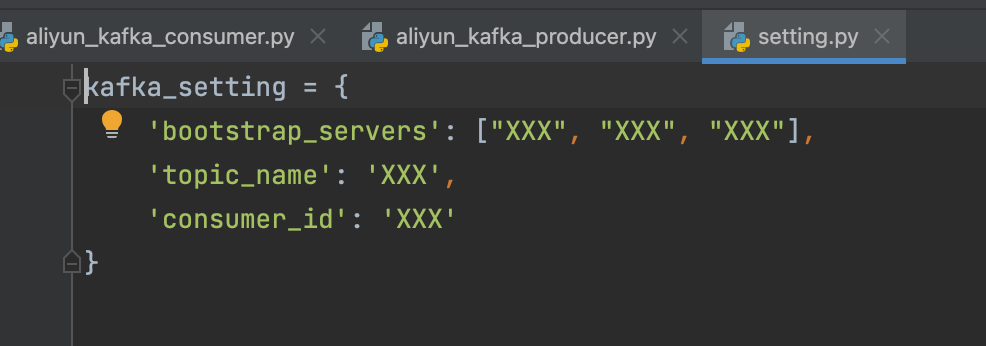

You can find SDKs to create producer and consumer apps for various languages here. For this demo, I have selected the Python SDK. There are three files in the zip file for Python: producer.py, consumer.py, and settings.py. We need to configure settings.py with our bootstrap servers, topic name, and consumer group ID.

The bootstrap server details can be found in the Alibaba Cloud Kafka console under Instance Details → Endpoint Information → Domain Name. The topic name and consumer group name (ID) can be seen in the 'Topics' and 'Groups' panels.
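As a rough sketch, the three values could be held in a settings module like the one below. The dictionary name, the key names, and every value are placeholders assumed for illustration; the settings file shipped with the SDK may use different names, so follow its layout when editing it.

```python
# Placeholder values: replace each with the details shown in your own console.
kafka_setting = {
    "bootstrap_servers": "broker-1.example.com:9092,broker-2.example.com:9092",
    "topic_name": "demo-topic",
    "consumer_id": "demo-consumer-group",
}


def bootstrap_server_list(setting):
    """Split the comma-separated endpoint string into a list of host:port entries."""
    return [s.strip() for s in setting["bootstrap_servers"].split(",") if s.strip()]


print(bootstrap_server_list(kafka_setting))
```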

I am running the Python scripts on my Mac. I had to ensure I had the relevant access to the consumer and producer files. If you get a Permission Denied error while running the .py scripts, you need to change the permissions on these files:

    sudo chmod 755 <path of consumer.py>

Similarly, you can change the access levels for producer.py.

We also need to ensure we have Kafka and kafka-python installed on our local machine. If you get the following error while running the script, these components have not been installed:

    ImportError: No module named kafka

If you are using a Mac, you can install Kafka using Homebrew and kafka-python using pip:

    brew install kafka

    pip install kafka-python

Now, we can run aliyun_kafka_producer.py to produce the message stream and, in a different terminal, run aliyun_kafka_consumer.py to consume the messages. If you want to use an SDK for another language, you can download it from this link.
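For readers who want to see roughly what such scripts do, here is a minimal sketch using the kafka-python library. It is not the code from the SDK: the function names, the JSON encoding, and the timeouts are assumptions, and it assumes a plaintext endpoint reachable from your machine (any SSL or SASL settings your instance requires are omitted).

```python
import json


def encode_event(payload: dict) -> bytes:
    """Serialize an event payload to UTF-8 JSON bytes for sending."""
    return json.dumps(payload).encode("utf-8")


def decode_event(raw: bytes) -> dict:
    """Turn received bytes back into a Python dictionary."""
    return json.loads(raw.decode("utf-8"))


def produce_one(servers, topic, key: bytes, payload: dict):
    """Send one event and return the (partition, offset) reported by the broker."""
    from kafka import KafkaProducer  # imported lazily; requires kafka-python

    producer = KafkaProducer(bootstrap_servers=servers, value_serializer=encode_event)
    metadata = producer.send(topic, key=key, value=payload).get(timeout=10)
    producer.close()
    return metadata.partition, metadata.offset


def consume_all(servers, topic, group_id):
    """Yield decoded events until no new message arrives for five seconds."""
    from kafka import KafkaConsumer  # imported lazily; requires kafka-python

    consumer = KafkaConsumer(
        topic,
        bootstrap_servers=servers,
        group_id=group_id,
        auto_offset_reset="earliest",
        value_deserializer=decode_event,
        consumer_timeout_ms=5000,
    )
    try:
        for record in consumer:
            yield record.partition, record.offset, record.value
    finally:
        consumer.close()
```

With a reachable broker, calling `produce_one(servers, "demo-topic", b"Bob", {"to": "Kevin", "amount": 100})` in one terminal and iterating over `consume_all(servers, "demo-topic", "demo-consumer-group")` in another would mirror the producer and consumer flow described above; the topic and group names here are placeholders.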
