By Xining Wang

The content of this article is based on the author's speech at the 2022 Cloud-native Industry Conference.

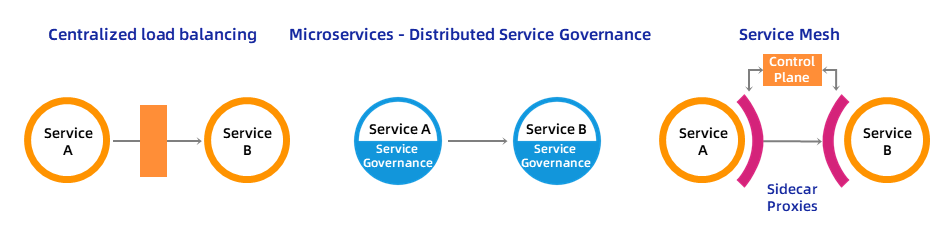

Let's review the evolution of the application service architecture. Judging from the processing methods of service callers and providers, we can divide it into three stages.

The first phase is centralized load balancing: the service caller is routed to the corresponding service provider through an external load balancer. The advantages are obvious. It is non-intrusive to the application, it supports applications built with multiple languages and frameworks, and load balancing is managed centrally and is simple to deploy. However, because it is centralized, its scalability is limited, and its service governance capabilities are relatively weak.

The second phase is the distributed governance of microservices: governance capabilities are built into the service caller and integrated into applications as SDK libraries. The advantages are good overall scalability and strong service governance capabilities. The disadvantages are intrusion into the application itself, difficulty in supporting multiple languages because of the SDK dependency, and the complexity of distributed management and deployment.

The third phase is the current service mesh technology. With the Sidecar pattern, service governance capabilities are decoupled from the application itself, multiple programming languages are supported, and the capabilities no longer depend on a specific technical framework. The Sidecar proxies form a mesh data plane through which traffic between all services is processed and observed. The control plane manages these Sidecar proxies in a unified manner, although this brings a certain amount of complexity.

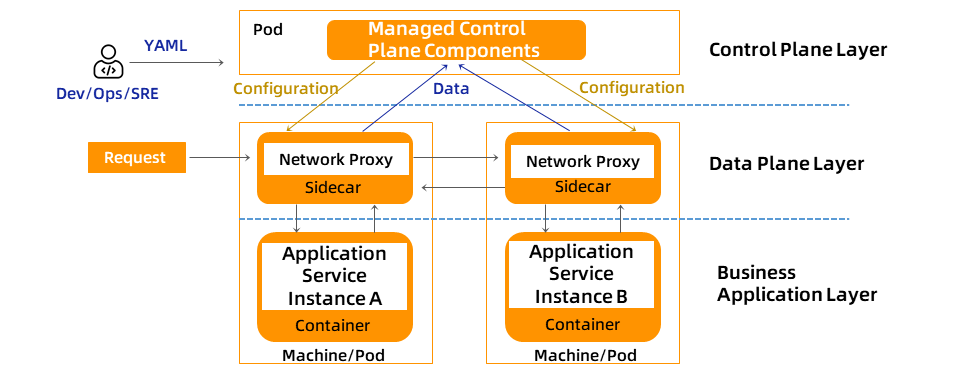

The following figure shows the architecture of the service mesh. As mentioned earlier, with service mesh technology, each application service instance is accompanied by a Sidecar proxy. The service code is not aware of the Sidecar. The Sidecar proxy intercepts the application's traffic and provides three major functions: traffic governance, security, and observability.

In the cloud-native application model, an application may contain several services, each of which is composed of several instances. Therefore, the Sidecar proxies of hundreds of applications form a data plane, which is the data plane layer in the diagram.

Managing these Sidecar proxies in a unified way is the key problem that the control plane of the service mesh solves. The control plane can be thought of as the "brain" of the service mesh. It is responsible for issuing configurations to the Sidecar proxies of the data plane and managing how the components of the data plane execute. It also provides a unified API so that mesh users can easily operate the mesh management capabilities.
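The relationship between the control plane and the Sidecar proxies can be illustrated with a minimal Python sketch. It is a toy model under stated assumptions, not how Istio or ASM is implemented: the `ControlPlane` and `SidecarProxy` classes and their methods are hypothetical names, and a real mesh distributes configuration over the xDS protocol rather than through in-process calls.

```python
from dataclasses import dataclass, field


@dataclass
class SidecarProxy:
    """Hypothetical stand-in for one data plane proxy."""
    workload: str
    config_version: int = 0
    routes: dict = field(default_factory=dict)

    def apply(self, version: int, routes: dict) -> None:
        # Ignore stale pushes so an older configuration never overwrites a newer one.
        if version > self.config_version:
            self.config_version = version
            self.routes = dict(routes)


class ControlPlane:
    """Toy control plane: holds the desired state and pushes it to every proxy."""

    def __init__(self) -> None:
        self.version = 0
        self.routes = {}
        self.proxies = []

    def register(self, proxy: SidecarProxy) -> None:
        # A newly started proxy immediately receives the current configuration.
        self.proxies.append(proxy)
        proxy.apply(self.version, self.routes)

    def set_route(self, service: str, destination: str) -> None:
        # One declarative change fans out to the whole data plane.
        self.version += 1
        self.routes[service] = destination
        for proxy in self.proxies:
            proxy.apply(self.version, self.routes)


mesh = ControlPlane()
orders, payments = SidecarProxy("orders"), SidecarProxy("payments")
mesh.register(orders)
mesh.register(payments)
mesh.set_route("reviews", "reviews-v2")
print(orders.routes == payments.routes, orders.config_version)
```

The point of the sketch is the fan-out: the user states the desired routing once, and every proxy converges to the same versioned configuration without any change to application code.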

Generally speaking, after enabling service mesh, developers, operations personnel and the SRE team will solve application service management problems in a unified and declarative way.

As a core technology for managing communication between application services, service mesh brings safe, reliable, fast, and application-aware traffic routing, security, and observability to calls between application services.

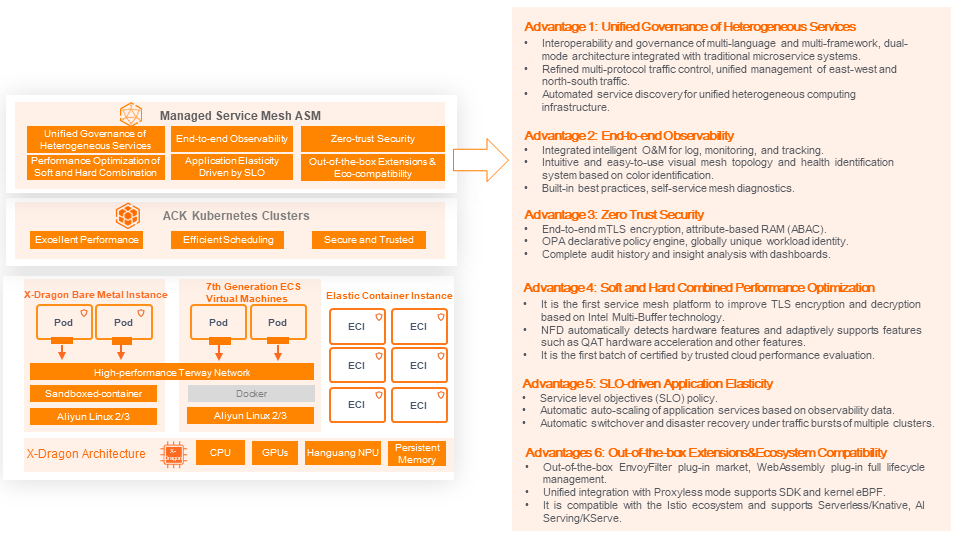

As shown in the image below, the cloud-native application infrastructure with service mesh brings important advantages, which are divided into six aspects.

• Interoperability and governance of multi-language and multi-framework, dual-mode architecture integrated with traditional microservice systems.

• Refined multi-protocol traffic control, unified management of east-west and north-south traffic.

• Automated service discovery for unified heterogeneous computing infrastructure.

• Integrated intelligent O&M that combines logging, monitoring, and tracing.

• Intuitive and easy-to-use visual mesh topology and health identification system based on color identification.

• Built-in best practices, self-service mesh diagnostics.

• End-to-end mTLS encryption, attribute-based access control (ABAC).

• OPA declarative policy engine, globally unique workload identity.

• Complete audit history and insight analysis with dashboards.

• It is the first service mesh platform to accelerate TLS encryption and decryption with Intel Multi-Buffer technology.

• NFD automatically detects hardware features and adaptively supports features such as AVX instruction set and QAT acceleration.

• It is among the first service mesh platforms to pass the advanced-level Trusted Cloud evaluation of service mesh platforms and performance.

• Service level objectives (SLO) policy.

• Auto scaling of application services based on observability data.

• Automatic switchover and disaster recovery under traffic bursts of multiple clusters.

• Out-of-the-box EnvoyFilter plug-in market, WebAssembly plug-in full lifecycle management.

• Unified integration with Proxyless mode, supporting both SDK and kernel eBPF modes.

• It is compatible with the Istio ecosystem and supports Serverless/Knative, AI Serving/KServe.

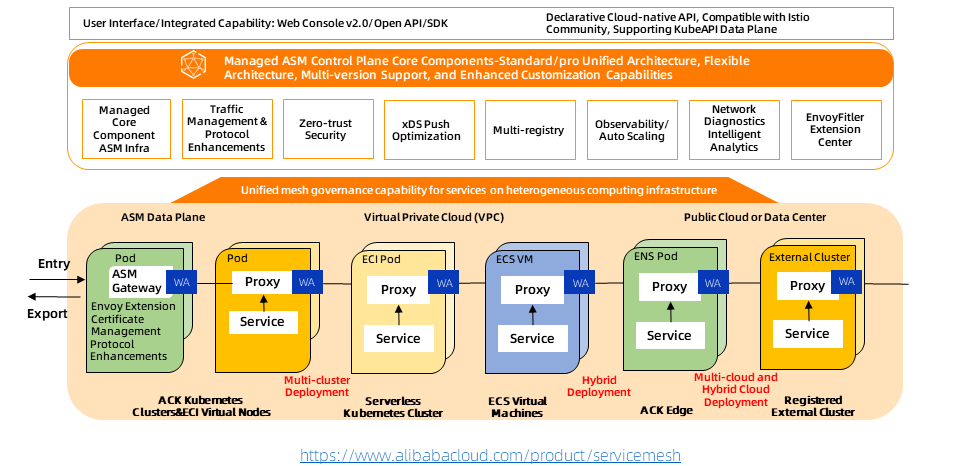

The following figure shows the current architecture of Alibaba Cloud Service Mesh (ASM) and related products. As the first fully managed Istio-compatible service mesh product in the industry, ASM has stayed consistent with the community and industry trends since its architecture was first designed. The control plane components are hosted on the Alibaba Cloud side and are independent of the user clusters on the data plane side. ASM is customized and implemented based on the community's open source Istio, and the managed control plane provides the components that support refined traffic management and security management. The managed mode decouples the lifecycle management of Istio components from the managed K8s clusters, making the architecture more flexible and improving system scalability.

ASM has become the infrastructure for the unified management of various heterogeneous computing services. It provides unified traffic management, unified service security, unified service observability, and unified proxy extensibility based on WebAssembly to build enterprise-level capabilities.

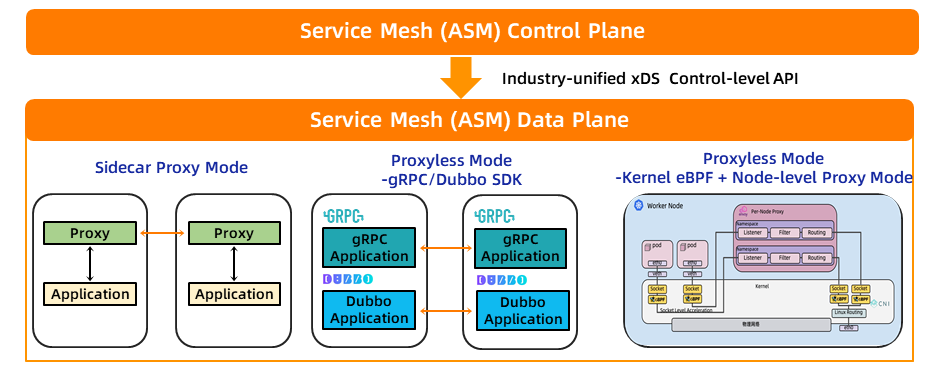

Fusing the Sidecar Proxy and Proxyless modes means that one control plane supports different forms of data plane. That control plane is the set of ASM hosted components, which serve as a unified, standard control entry point. It runs on the Alibaba Cloud side in hosted mode.

The data plane supports both the Sidecar Proxy and Proxyless modes. Although the data plane components are not hosted, they are still managed: ASM manages their lifecycle, including distribution to the data plane, upgrade, and uninstallation.

Specifically, in Sidecar Proxy mode, in addition to the current standard Envoy proxy, our architecture can easily support other Sidecars, such as the Dapr Sidecar. Currently, Microsoft OSM + Dapr adopts this dual-Sidecar mode.

In Proxyless mode, you can use the SDK approach to improve QPS and reduce latency. For example, gRPC clients already support the xDS protocol, and our Dubbo team is moving in the same direction. This year, the north latitude team and I hope to make some breakthroughs here together.

The other Proxyless mode combines kernel eBPF with a node-level proxy. It is a fundamental change from the Sidecar mode: each node runs only one proxy, and capabilities can be offloaded to the node. We will also develop more products in this area this year.

There is a series of application-centric ecosystems in the industry built around service mesh technologies. Among them, Alibaba Cloud ASM supports the following ecosystems, including:

Service mesh core principles (security, reliability, and observability) support modern software development lifecycle management and DevOps innovation. They provide flexibility, scalability, and testability for how architecture design, development, automated deployment, and O&M are performed in a cloud computing environment. Thus, service mesh provides a solid foundation for handling modern software development. Any team building and deploying applications for Kubernetes should seriously consider implementing service mesh.

One important part of DevOps is building continuous integration and deployment (CI/CD) to deliver containerized application code to production systems faster and more reliably. Enabling canary or blue-green deployments in a CI/CD pipeline allows more robust testing of new application versions in production and provides a safe rollback strategy. Service mesh facilitates canary deployment in production systems. Currently, Alibaba Cloud ASM supports integration with ArgoCD, Argo Rollouts, KubeVela, Apsara DevOps, and Flagger to implement blue-green or canary releases of applications, as follows:

ArgoCD [1] monitors changes to application orchestration in the Git repository, compares them with the actual running status of applications in the cluster, and automatically or manually synchronizes the changes to the deployment cluster. Integrating ArgoCD with Alibaba Cloud ASM to release and update applications reduces O&M costs.

Argo Rollouts [2] provides more powerful blue-green and canary deployment capabilities. In practice, the two can be combined to provide progressive delivery capabilities based on GitOps.

KubeVela [3] is a modern and out-of-the-box platform used to deliver and manage applications. ASM is integrated with KubeVela to implement progressive releases for applications. This allows you to release updated applications gradually.

Alibaba Cloud's Cloud Flow [4] provides a blue-green release of Kubernetes applications based on Alibaba Cloud service mesh ASM.

Flagger [5] is another progressive delivery tool that automates the publishing process of applications running on Kubernetes. It reduces the risk of introducing new software versions in production by gradually shifting traffic to new versions while measuring metrics and running conformance tests. Alibaba Cloud service mesh ASM supports this progressive release capability through Flagger.
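What these progressive delivery tools automate can be sketched in a few lines of Python. The first function builds an Istio `VirtualService` manifest that splits traffic between two subsets by weight, and the second decides the next canary weight from an observed error rate. This is an illustration under assumptions, not the logic of Flagger or Argo Rollouts: the subset names `stable` and `canary`, the step size, and the error threshold are hypothetical, and the subsets would need a matching `DestinationRule`.

```python
def canary_virtual_service(name: str, host: str, canary_weight: int) -> dict:
    """Build an Istio VirtualService manifest that splits traffic by weight."""
    if not 0 <= canary_weight <= 100:
        raise ValueError("canary_weight must be between 0 and 100")
    return {
        "apiVersion": "networking.istio.io/v1beta1",
        "kind": "VirtualService",
        "metadata": {"name": name},
        "spec": {
            "hosts": [host],
            "http": [{
                "route": [
                    # Subset names are assumptions; they must exist in a DestinationRule.
                    {"destination": {"host": host, "subset": "stable"},
                     "weight": 100 - canary_weight},
                    {"destination": {"host": host, "subset": "canary"},
                     "weight": canary_weight},
                ]
            }],
        },
    }


def next_canary_weight(current: int, error_rate: float,
                       step: int = 10, max_error_rate: float = 0.01) -> int:
    """Advance the canary by one step, or roll back to 0 if errors are too high."""
    if error_rate > max_error_rate:
        return 0
    return min(100, current + step)


# Simulate three analysis intervals: two healthy, then one with 5% errors.
weight = 0
for observed_error_rate in (0.0, 0.002, 0.05):
    weight = next_canary_weight(weight, observed_error_rate)
    manifest = canary_virtual_service("reviews", "reviews", weight)
```

In the simulated run, the canary weight rises to 10 and then 20 while the error rate stays under the threshold, and drops back to 0 once it is exceeded, which is the rollback behavior described above.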

Compatible with Microservices Framework [6]

It supports seamless migration of Spring Boot/Cloud applications to service mesh for unified management and governance. It provides the ability to solve typical problems that occur during the convergence process, including common scenarios such as how services inside and outside the container cluster can communicate with each other and how services in different languages can be interconnected.

Serverless Containers and Traffic Mode-based Automatic Scaling [7]

Serverless and service mesh are two popular cloud-native technologies, and customers are exploring how to create value from them. As we delve into these solutions with our customers, questions often arise about where the two technologies intersect and how they complement each other. Can we leverage service mesh to protect, observe, and expose our Knative serverless applications? ASM supports Knative-based serverless containers and automatic scaling based on traffic patterns. Using a hosted service mesh removes the complexity of maintaining the underlying infrastructure, allowing users to easily build their own serverless platforms.

AI Serving [8]

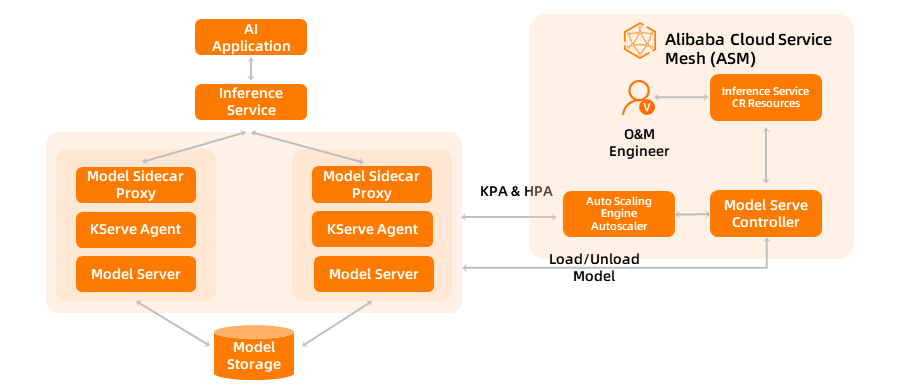

Kubeflow Serving (KFServing) is a Google-led community project for serving machine learning models on Kubernetes. Its next generation has been renamed KServe. The project aims to support different machine learning frameworks in a cloud-native way and to implement traffic control and model version updates and rollbacks based on service mesh.

Zero Trust Security and Policy As Code [9]

In addition to using Kubernetes Network Policy to implement Layer 3 network security control, ASM provides peer authentication and request authentication, Istio authorization policies, and finer-grained policy management based on Open Policy Agent (OPA).

Specifically, building a service mesh-based zero-trust security capability system includes the following aspects:

• The foundation of zero trust: workload identity. This addresses how to provide a unified identity for cloud-native workloads. ASM provides an easy-to-use identity definition for each workload in the service mesh and a customizable mechanism for extending the identity system for specific scenarios, while remaining compatible with the community SPIFFE standard.

• The carrier of zero trust: security certificates. ASM provides mechanisms for issuing certificates and managing their lifecycle and rotation. X.509 TLS certificates are used to establish identity. Each proxy uses its certificate, and certificates and private keys are rotated.

• The engine of zero trust: policy execution. The policy-based trust engine is the core of building zero trust. In addition to supporting Istio RBAC authorization policies, ASM also provides more fine-grained authorization policies based on OPA.

• The insight of zero trust: visualization and analysis. ASM provides observability mechanisms for monitoring the logs and metrics of policy execution, so that you can judge how each policy is being enforced.
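The identity and policy-execution aspects above can be illustrated with a small Python sketch of a default-deny authorization check keyed on workload identity. It is a simplified model, not the Istio or OPA evaluation engine: the `Rule` type and `is_allowed` function are hypothetical, and the identity string only follows the SPIFFE ID layout that Istio uses (`spiffe://<trust-domain>/ns/<namespace>/sa/<service-account>`).

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class Rule:
    """One allow rule (hypothetical shape for illustration)."""
    source_identity: str   # SPIFFE ID of the calling workload
    method: str            # HTTP method that is permitted
    path_prefix: str       # request paths must start with this prefix


def is_allowed(rules: Iterable[Rule], source_identity: str,
               method: str, path: str) -> bool:
    """Default deny: a request passes only if some rule matches it explicitly."""
    return any(
        rule.source_identity == source_identity
        and rule.method == method
        and path.startswith(rule.path_prefix)
        for rule in rules
    )


FRONTEND = "spiffe://cluster.local/ns/shop/sa/frontend"
BATCH = "spiffe://cluster.local/ns/shop/sa/batch"

rules = [Rule(FRONTEND, "GET", "/api/orders")]

print(is_allowed(rules, FRONTEND, "GET", "/api/orders/42"))   # matches the rule
print(is_allowed(rules, BATCH, "GET", "/api/orders/42"))      # unknown identity
print(is_allowed(rules, FRONTEND, "DELETE", "/api/orders/42"))  # method not allowed
```

Because the decision depends on the authenticated workload identity rather than on network location, the same rule holds wherever the workload is scheduled, which is the property that zero trust relies on.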

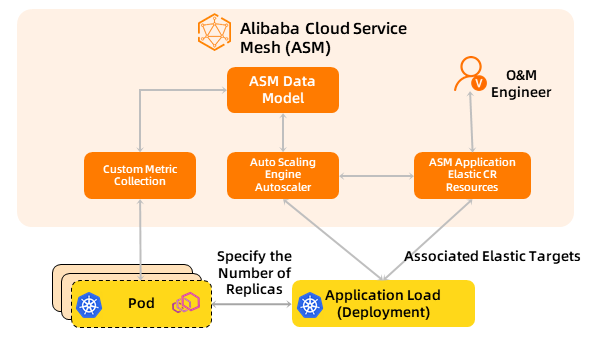

Transformation into cloud-native applications brings a lot of business value. One of them is elastic scaling, which can better cope with traffic peaks and troughs and achieve the purpose of reducing costs and improving efficiency. ASM provides a non-intrusive ability to generate telemetry data for communication between application services. You do not need to modify the application logic for metrics acquisition.
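As a rough illustration of how telemetry can drive elastic scaling, the sketch below applies the replica formula documented for the Kubernetes Horizontal Pod Autoscaler, desired = ceil(current replicas × current metric / target metric), to a per-pod request rate. The function name, the choice of requests per second as the metric, and the replica bounds are assumptions made for this example, and the tolerance band that the real autoscaler applies is omitted.

```python
import math


def desired_replicas(current_replicas: int,
                     current_rps_per_pod: float,
                     target_rps_per_pod: float,
                     min_replicas: int = 1,
                     max_replicas: int = 10) -> int:
    """Scale the replica count in proportion to how far the metric is from its target."""
    if target_rps_per_pod <= 0:
        raise ValueError("target_rps_per_pod must be positive")
    raw = math.ceil(current_replicas * current_rps_per_pod / target_rps_per_pod)
    # Clamp to the configured bounds so a traffic spike cannot scale without limit.
    return max(min_replicas, min(max_replicas, raw))


print(desired_replicas(4, 150, 100))   # traffic peak: scale out
print(desired_replicas(4, 20, 100))    # traffic trough: scale in
print(desired_replicas(4, 1000, 100))  # capped at max_replicas
```

With four replicas each receiving 150 requests per second against a target of 100, the formula asks for six replicas. When traffic falls to 20 requests per second per pod, it scales in to the minimum of one.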

Based on the four golden signals of monitoring (latency, traffic, errors, and saturation), service mesh generates a series of metrics for managed services. It supports multiple protocols, including HTTP, HTTP/2, gRPC, and TCP. In addition, service mesh has more than 20 built-in monitoring tags, supports all Envoy proxy metric attribute definitions and the Common Expression Language (CEL), and supports custom metrics generated by Istio. At the same time, we are exploring new scenarios driven by service mesh. Here is an example of AI Serving [10].
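To make the four golden signals concrete before turning to that example, the following Python sketch derives them from a window of request records. In a mesh the proxies produce such metrics automatically, so this is only an illustration of what the numbers mean. The `Request` type, the nearest-rank 95th percentile, and the definition of saturation as in-flight requests divided by capacity are assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class Request:
    duration_ms: float
    status: int


def golden_signals(requests: Sequence[Request], window_seconds: float,
                   in_flight: int, capacity: int) -> dict:
    """Summarize latency, traffic, errors, and saturation for one time window."""
    durations = sorted(r.duration_ms for r in requests)
    count = len(durations)
    # Nearest-rank 95th percentile, computed with integer arithmetic.
    p95 = durations[(95 * count + 99) // 100 - 1] if count else 0.0
    errors = sum(1 for r in requests if r.status >= 500)
    return {
        "latency_p95_ms": p95,
        "traffic_rps": count / window_seconds,
        "error_rate": errors / count if count else 0.0,
        "saturation": in_flight / capacity,
    }


# Twenty requests in a 10-second window, one of which failed with HTTP 500.
sample = [Request(duration_ms=float(i), status=200) for i in range(1, 20)]
sample.append(Request(duration_ms=20.0, status=500))
signals = golden_signals(sample, window_seconds=10, in_flight=30, capacity=100)
print(signals)
```

For this sample the sketch reports a 95th percentile latency of 19 ms, a traffic rate of 2 requests per second, an error rate of 5%, and a saturation of 0.3.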

This demand also comes from our actual customers, whose scenario is to run KServe on top of service mesh to implement AI services. KServe runs smoothly on the service mesh to implement blue-green and canary deployments of model services and traffic distribution between revisions. It supports auto-scaling deployment of serverless inference workloads, high scalability, and concurrency-based intelligent load routing.

As the industry's first fully managed Istio-compatible service mesh product, Alibaba Cloud ASM has stayed consistent with the community and industry trends since its architecture was first designed. The control plane components are hosted on the Alibaba Cloud side and are independent of the user clusters on the data plane side. ASM is customized and implemented based on community Istio, and the managed control plane provides the components that support refined traffic management and security management. The managed mode decouples the lifecycle management of Istio components from the managed K8s clusters, making the architecture more flexible and improving system scalability.

Alibaba Cloud Service Mesh (ASM) is now officially available to the public. It provides richer capabilities, support for larger scale, and more comprehensive technical support to better meet different customer requirements.

[2] Argo Rollouts:

https://www.alibabacloud.com/blog/istio-ecosystem-on-asm-2-integrate-argo-rollouts-into-alibaba-cloud-service-mesh-for-canary-rollout_599395

[4] Alibaba Yunxiao Assembly Line Flow:

https://www.alibabacloud.com/help/en/alibaba-cloud-devops/latest/alibaba-cloud-devops-flow

[5] Flagger:

https://docs.flagger.app/install/flagger-install-on-alibaba-servicemesh

[6] The Microservices Framework Compatibility:

https://www.alibabacloud.com/blog/the-seamless-transition-from-traditional-microservice-frameworks-to-asm_599312

[7] Serverless Containers and Automatic Scaling Based on Traffic Patterns:

https://www.alibabacloud.com/blog/serverless-containers-and-automatic-scaling-based-on-traffic-patterns_599328

[8] AI Serving:

https://www.alibabacloud.com/blog/istio-ecosystem-on-asm-3-integrate-kserve-into-alibaba-cloud-service-mesh_599396

[9] Zero Trust Security and Policy As Code:

https://www.alibabacloud.com/blog/alibaba-cloud-service-mesh-asm-helps-achieve-zero-trust-and-enhance-application-service-security_599311

[10] Example of AI Serving:

https://www.alibabacloud.com/blog/istio-ecosystem-on-asm-3-integrate-kserve-into-alibaba-cloud-service-mesh_599396
