By Luo Jianlong (Nickname: Shengdong)

This article introduces Kubernetes through the eyes of an Alibaba technical expert, provides suggestions on the best way to learn Kubernetes, and shares experiences in troubleshooting Kubernetes cluster problems.

First of all, let's define Kubernetes. This section will explain Kubernetes from four different perspectives.

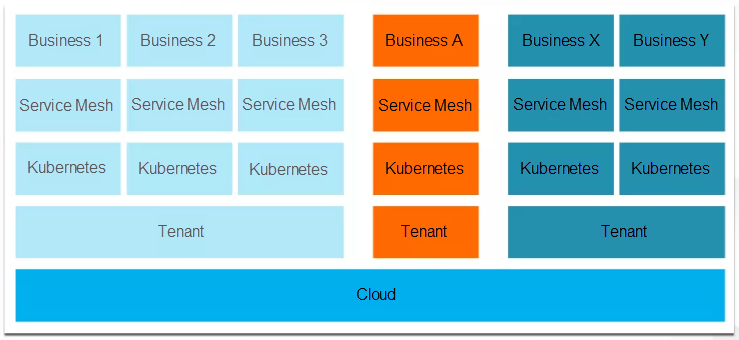

The preceding figure shows the backend IT infrastructure architecture that we expect most companies to adopt. We believe all companies will eventually deploy their IT infrastructure on the cloud. Kubernetes allows you to divide the underlying cloud resources into clusters dedicated to different businesses. As microservice architectures become the norm, the service governance logic of service mesh will follow the two underlying layers and become part of the infrastructure.

Currently, almost all Alibaba businesses run on the cloud, and almost half of them have been migrated to custom Kubernetes clusters. As I understand it, Alibaba plans to deploy all of its businesses on Kubernetes clusters this year.

In some Alibaba divisions, such as Ant Financial, service mesh is already used by online businesses.

Although you may think I am exaggerating, the trend toward Kubernetes is very obvious to me. In the next few years, Kubernetes will be as popular as Linux and serve as the operating system for clusters everywhere.

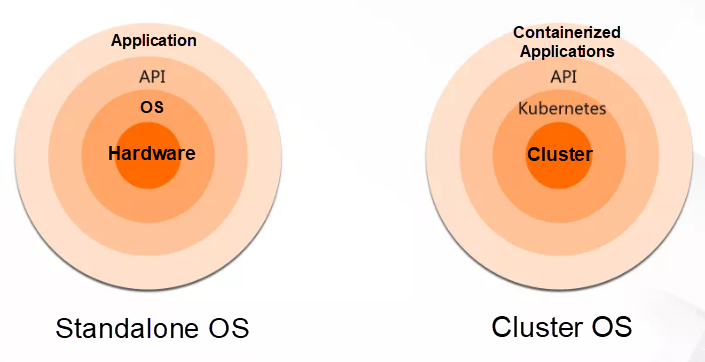

The preceding figure compares a traditional operating system with Kubernetes. Traditional operating systems, like Linux or Windows, serve to abstract underlying hardware. They are designed to manage computer hardware resources beneath them, such as the memory and CPU. Then, they abstract the underlying hardware resources into easy-to-use interfaces to provide support for the application layer above them.

Similarly, we can see Kubernetes as an operating system. In simple terms, an operating system is an abstraction layer. In Kubernetes' case, the managed lower-layer hardware is not memory or CPU resources, but a cluster composed of multiple computers. These computers are ordinary standalone systems with their own operating systems and hardware. Kubernetes manages these computers as a resource pool to provide support for upper-layer applications.

The applications that run on Kubernetes are containerized applications. If you are not familiar with containers, you can think of them as application installers. An installer packages all the dependencies the application requires, such as libc files. As a result, applications that run on Kubernetes do not depend on the library files of the underlying operating system.

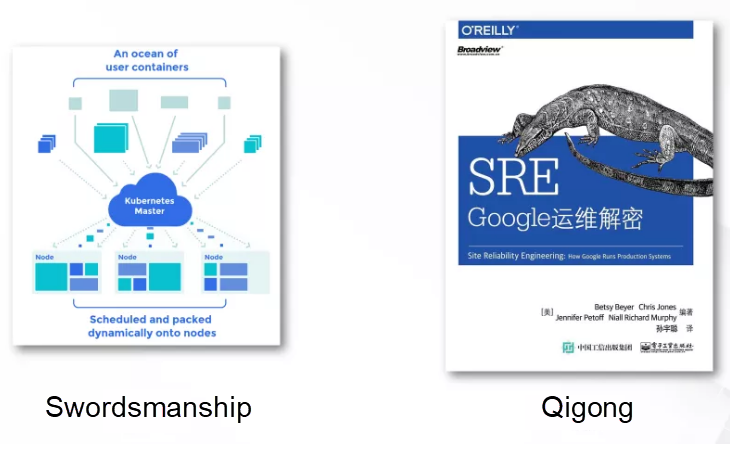

In the preceding figure, a Kubernetes cluster is shown on the left and a famous book, Site Reliability Engineering: How Google Runs Production Systems, is shown on the right. Many of you may have read this book, and many companies are currently practicing the methods it describes, such as fault management and O&M scheduling.

The relationship between Kubernetes and this book is something like the relationship between swordsmanship and Qigong in Chinese martial arts. I do not know how many of you have read The Smiling, Proud Wanderer, a novel about a legendary swordsman. In the novel, a school of warriors is divided into two factions: one practices Qigong and the other practices swordsmanship. Qigong is a philosophical approach that seeks to coordinate the body, breath, and mind, whereas swordsmanship emphasizes skill with the sword. The school split into two factions because two disciples learned from the same secret book, but each disciple learned only one part of it.

Kubernetes is derived from Google's cluster automation management and scheduling system, Borg. This system is also the subject of the book, Site Reliability Engineering: How Google Runs Production Systems. The Borg system and the various O&M methods described in the book can be seen as two sides of the same thing. If a company only learns the O&M methods, such as establishing an SRE post, but does not understand the system managed by these methods, it's like only learning one part of the whole system.

Borg is an internal Google system that is not open to the public. Kubernetes, by contrast, is publicly available and inherits some of Borg's key methods for automatic cluster management. Therefore, if you have read this book and want to practice the methods it describes, you should first gain a deep understanding of Kubernetes.

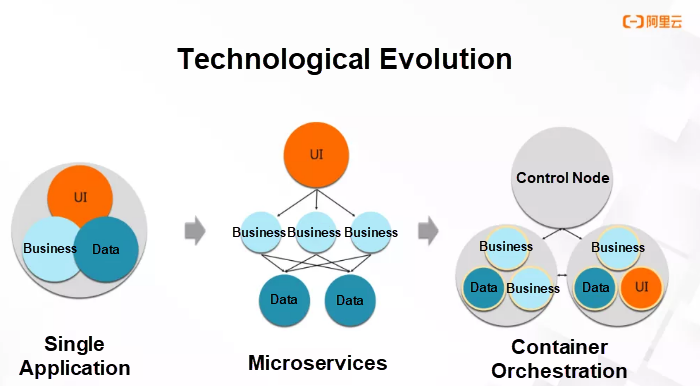

In the early days, we would build a website backend and could combine all the modules in one executable file. Just as in the preceding figure, we would have three modules: UI, data, and business. These modules would be compiled into an executable file and run on a server.

However, if our business volume grew significantly, we could not keep expanding the capacity of the application simply by upgrading the server configuration. For this reason, we would have to break up the application into microservices.

Microservices split a single application into smaller, loosely coupled applications. Each of these small applications is responsible for one business function. Each application has a dedicated server, and the applications call each other over network connections.

The major advantage of this architecture is that we can scale out the small applications by increasing the number of instances. This solves the problem of our inability to expand an individual server.

Microservices introduce a new problem: a single instance occupies a single server. This deployment pattern wastes a lot of resources. The solution is to deploy multiple instances together on the same underlying servers.

However, such hybrid deployment introduces two more problems. First, we will encounter problems with the compatibility of dependency libraries. The versions of the library files on which these applications depend may be completely different, resulting in errors when they are installed in the same operating system. The other problem involves application scheduling and cluster resource management.

For example, when a new application is created, we need to consider the target server to host this application, and whether the resources of the server will be sufficient after the application is scheduled to it.

The dependency library compatibility problem can be solved by containerizing applications. Each application then comes with its own dependency libraries and only shares the kernel with the other applications on the same server. Kubernetes is designed in part to address the scheduling and resource management problems.
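The scheduling question raised above (which server should host a new application, and will that server still have enough resources afterwards?) can be illustrated with a minimal sketch. This is not how the Kubernetes scheduler is implemented. The `Node` class, the `schedule` function, and the "pick the fitting node with the most free CPU" rule are all assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """A hypothetical server in the cluster with its remaining capacity."""
    name: str
    cpu_free: float                      # free CPU cores
    mem_free: int                        # free memory in MiB
    apps: list = field(default_factory=list)


def schedule(app_name, cpu, mem, nodes):
    """Place the app on a node that fits it, or return None if none does."""
    # Filter: keep only the nodes that have enough free CPU and memory.
    candidates = [n for n in nodes if n.cpu_free >= cpu and n.mem_free >= mem]
    if not candidates:
        return None
    # Score: an assumed rule that prefers the node with the most free CPU.
    target = max(candidates, key=lambda n: n.cpu_free)
    # Bind: reserve the resources so later decisions see the updated state.
    target.cpu_free -= cpu
    target.mem_free -= mem
    target.apps.append(app_name)
    return target.name


nodes = [Node("node-a", cpu_free=2.0, mem_free=4096),
         Node("node-b", cpu_free=4.0, mem_free=2048)]
print(schedule("web", cpu=1.0, mem=1024, nodes=nodes))    # fits on both nodes
print(schedule("db", cpu=2.0, mem=3072, nodes=nodes))     # only node-a has the memory
print(schedule("cache", cpu=8.0, mem=512, nodes=nodes))   # no node has 8 free cores
```

The filter-then-score structure keeps the two questions separate: whether a server can host the application at all, and which of the eligible servers is the better choice.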

Incidentally, when many applications are deployed in a cluster and their relationships are complex, it becomes very hard to troubleshoot problems such as slow responses to requests. Therefore, service governance technologies, such as service mesh, will become a trend in the future.

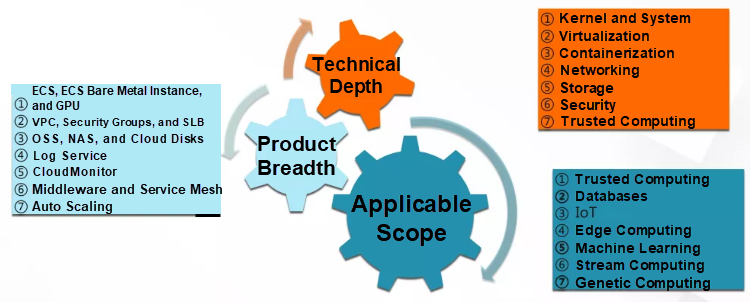

In general, Kubernetes is difficult to learn because it has a deep technology stack that includes the kernel, virtualization, containers, software-defined networking (SDN), storage, security, and trusted computing. You could also say it is a full-stack technology.

At the same time, the implementation of Kubernetes in the cloud environment will involve a lot of cloud products. For example, on Alibaba Cloud, our Kubernetes clusters use a variety of cloud products, including Elastic Compute Service (ECS), Virtual Private Cloud (VPC), Server Load Balancer (SLB), security groups, Log Service, CloudMonitor, and the middleware products, Application High Availability Service (AHAS), Application Real-Time Monitoring Service (ARMS), Alibaba Cloud Service Mesh (ASM), and Auto Scaling.

Finally, Kubernetes is a general-purpose computing platform, so it is used in a variety of business scenarios, such as databases. As far as I know, we plan to build our PolarDB Box database appliance based on Kubernetes. In addition, Kubernetes is also used for edge computing, machine learning, and stream computing.

In my personal experience, to learn Kubernetes, you need to understand it through three approaches: background, practice, and conceptualization.

Background knowledge is very important. In particular, it is important to understand the evolution and the current landscape of the technology.

More specifically, you need to know how the various technologies that make up Kubernetes evolved, such as how container technology developed from the chroot command. You also need to be aware of the problems that drove the evolution of the technology. Only by understanding this evolution and the driving forces behind it can you form a judgment on where the technology is heading.

In addition, we need to understand the technology landscape. For Kubernetes, we need to understand the entire cloud-native technology stack, including containers, continuous integration and continuous delivery (CI/CD), microservices, and service mesh. This will show us Kubernetes' place in the overall technology stack.

In addition to this basic background knowledge, it is very important to learn about Kubernetes technology and practice it.

From my experience in solving problems with a large number of engineers, few of them carefully study the technical details. We often joke that there are two kinds of engineers: search engineers and research engineers. When many engineers encounter a problem, they do a Google search. If this does not work, they immediately submit a ticket. This approach is not conducive to understanding technology in depth.

Finally, you need to think about the technology and conceptualize it in your head. In my personal experience, I've found that after understanding the technical details, we need to constantly ask ourselves whether there is something more essential behind the details. In other words, we need to dive into the complex details to discover the pattern that links them together.

I will use two examples to better explain this approach.

The first example deals with cluster controllers. When you are learning Kubernetes, you will hear a lot of terms like declarative APIs, operators, and desired-state-oriented design. In essence, these concepts all refer to Kubernetes controllers.

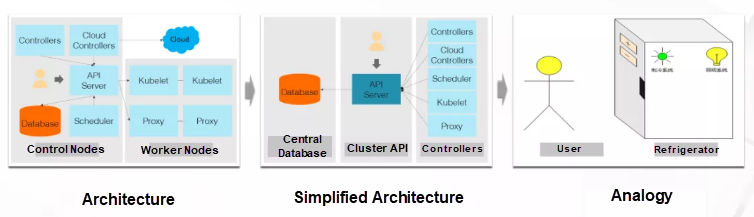

So, what is a Kubernetes controller? The preceding figure shows a classic Kubernetes architecture, which contains cluster control nodes and worker nodes. The control nodes run a central database, an API Server, a scheduler, and some controllers.

The central database is the core storage system of the cluster, the API Server is the control portal of the cluster, and the scheduler is responsible for scheduling applications to nodes with sufficient resources. Here, let's focus on the controllers. To describe the function of a controller in a sentence, we could say it is used "to make dreams come true." In this sense, I often play the role of the controller. If my daughter says, "Dad, I want to eat ice cream," then my daughter is a cluster user, and I am the person responsible for realizing her wish, that is, the controller.

In addition to the control nodes, the Kubernetes cluster contains many worker nodes, each of which runs two agents: Kubelet and Proxy. Kubelet manages the worker node, which includes starting and stopping the applications on it. Proxy is responsible for implementing service definitions as specific iptables or IPVS rules. Here, a service is essentially load balancing implemented with iptables or IPVS.

If we look at the first picture from the controller's point of view, we get the second picture. A cluster includes a database, a cluster entry point, and multiple controllers. These controllers, which include the scheduler, Kubelet, and Proxy, continuously observe the definitions of the various resources in the cluster and implement the definitions as specific configurations, such as container startup or iptables configurations.

When considering Kubernetes from the perspective of controllers, we can see its most fundamental principle, the use of controllers.

Such controllers are ubiquitous in our daily lives. A refrigerator provides a good example. When we use a refrigerator, we do not directly control its refrigeration system or lighting system. Instead, when we open the door, the light inside turns on. After we set the desired temperature, the refrigeration system keeps this temperature even when we are not home. In both cases, a controller is at work.
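The controller pattern described above can be sketched as a loop that compares a desired state with the observed state and acts on the difference. This is a toy model, not Kubernetes code. The names `reconcile` and `apply_actions`, and the representation of state as a dictionary of instance counts, are assumptions made for illustration.

```python
def reconcile(desired, observed):
    """Return the actions needed to move the observed state to the desired state."""
    actions = []
    for name, want in desired.items():
        have = observed.get(name, 0)
        if have < want:
            actions.append(("start", name, want - have))
        elif have > want:
            actions.append(("stop", name, have - want))
    # Anything that is running but no longer declared should be stopped.
    for name, have in observed.items():
        if name not in desired and have > 0:
            actions.append(("stop", name, have))
    return actions


def apply_actions(actions, observed):
    """Pretend to carry out the actions by updating the observed state in place."""
    for verb, name, count in actions:
        delta = count if verb == "start" else -count
        observed[name] = observed.get(name, 0) + delta
    return observed


desired = {"web": 3}                  # what the user declared
observed = {"web": 1, "old": 2}       # what is actually running
for _ in range(3):                    # a real controller would loop forever
    actions = reconcile(desired, observed)
    if not actions:
        break                         # observed state matches the desired state
    apply_actions(actions, observed)
print(observed)
```

The user only states the result they want. The loop works out the steps, which is why the same pattern can drive container startup, iptables rules, or a refrigerator thermostat.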

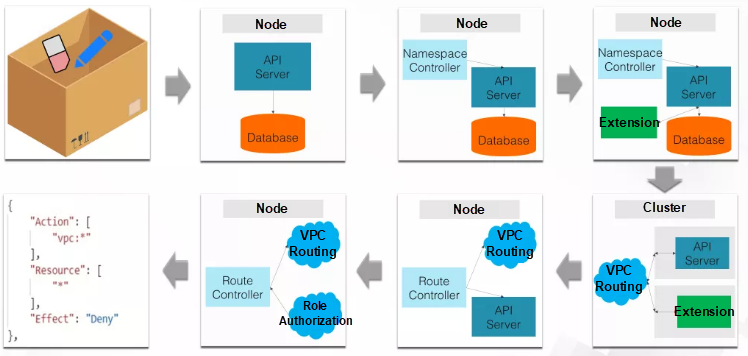

In our second example, let's look at troubleshooting a real problem. The problem is a namespace that cannot be deleted. The troubleshooting process is a little complicated, so let's go through it step-by-step.

A namespace is like a storage box in the Kubernetes cluster, just as in the first picture in the preceding figure. This box is a namespace, which contains erasers and pencils.

Namespaces can be created and deleted. However, we often encounter a problem where a namespace cannot be deleted. What can we do if we have no idea how to solve this problem? First, we may consider studying how the API Server handles the deletion operation, as the API Server is the management portal for the cluster.

The API Server is an application. We can increase the log level of this application to understand how it works. For this problem, we find that the API Server receives a deletion command but logs no other information.

Now, we need to understand the namespace deletion process. When you delete a namespace, the namespace is not immediately deleted. Instead, it first enters the "deleting" state, which the cluster reports as Terminating. The namespace controller then detects this status.

To understand the behavior of the namespace controller, we can raise the log level of the controller to see the detailed logs. Then, we find that the controller is trying to obtain all API groups.

Here, we need to understand two things. First, why does the controller try to obtain API groups when deleting a namespace? Second, what is an API group?

Let's start with the second question. API groups are a classification mechanism for cluster APIs. For example, network-related APIs are placed in the networking group. Resources created by the networking API group also belong to this group.

Next, we need to understand why the namespace controller fetches API groups. The controller does this because, when it deletes a namespace, it must also delete all the resources in the namespace. This operation is not like deleting a folder, where all the files the folder contains are deleted along with it.

A namespace does not physically contain its resources. Instead, the resources point to the namespace through an index-like mechanism. The cluster must traverse all API groups to find all the resources that point to the namespace to be deleted and then delete these resources one by one.

Traversing API groups causes the cluster's API Server to communicate with its extension, because the API Server extension can also implement some API groups. The API Server must therefore communicate with the extension to see whether the namespace to be deleted contains resources defined by this extension.

Now, we see that the problem is a communication problem between the API Server and its extension. In other words, the namespace deletion problem turns out to be a network problem.
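The traversal described above, and the way one unreachable API group can block the whole deletion, can be modeled in a few lines. This is a simplified sketch, not the namespace controller's actual code. The `cluster` dictionary, the `reachable` flag, and the function names are hypothetical, and the group names are only examples of a built-in group and an extension-served group.

```python
class GroupUnavailable(Exception):
    """Raised when the server behind an API group cannot be reached."""


def list_resources(group, namespace, cluster):
    """List the resources in one API group that point to the given namespace."""
    entry = cluster[group]
    if not entry["reachable"]:
        raise GroupUnavailable(group)
    return [r for r in entry["resources"] if r["namespace"] == namespace]


def finalize_namespace(namespace, cluster):
    """Try to empty a namespace. Return (done, unreachable_groups)."""
    unreachable = []
    for group in sorted(cluster):
        try:
            doomed = list_resources(group, namespace, cluster)
        except GroupUnavailable:
            # We cannot prove this group holds nothing in the namespace,
            # so the namespace has to stay in the "deleting" state.
            unreachable.append(group)
            continue
        cluster[group]["resources"] = [
            r for r in cluster[group]["resources"] if r not in doomed
        ]
    return (not unreachable, unreachable)


cluster = {
    "apps": {"reachable": True, "resources": [
        {"name": "web", "namespace": "demo"},
        {"name": "api", "namespace": "prod"},
    ]},
    # An API group served by an extension that cannot be reached right now.
    "metrics.k8s.io": {"reachable": False, "resources": []},
}
print(finalize_namespace("demo", cluster))    # deletion is blocked by the extension
cluster["metrics.k8s.io"]["reachable"] = True
print(finalize_namespace("demo", cluster))    # deletion can now complete
```

Note that the extension group in this example holds no resources at all. The deletion still stalls, because the controller cannot confirm that fact until it can talk to the extension.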

On Alibaba Cloud, Kubernetes clusters are created in VPC instances, which are virtual local area networks (VLANs). By default, a VPC instance only knows its own CIDR block, while the containers in the cluster generally use a different CIDR block. For example, if the VPC instance uses a CIDR block that starts with 172, the containers may use a CIDR block that starts with 192.

By adding a route entry for the container CIDR block to the route table of the VPC instance, we can permit the containers to communicate over the VPC network.

In the lower-right corner of the figure, the two cluster nodes are located in CIDR block 172, so we must add a route entry for CIDR block 192 to the route table. Then, the VPC instance can forward the data sent to the container to the correct node, and the node can send the data to the specific container.
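The route table lookup described above can be sketched with Python's standard `ipaddress` module. This only illustrates the idea and is not the VPC implementation. The concrete addresses (nodes in 172.16.0.0/16, one /24 container block per node under 192.168.0.0/16) and the function names `add_route` and `next_hop` are assumptions.

```python
import ipaddress


def add_route(route_table, pod_cidr, node_ip):
    """Record that traffic for pod_cidr should be forwarded to node_ip."""
    route_table[ipaddress.ip_network(pod_cidr)] = ipaddress.ip_address(node_ip)


def next_hop(route_table, dest_ip):
    """Return the node that should receive a packet for dest_ip, or None."""
    dest = ipaddress.ip_address(dest_ip)
    matches = [net for net in route_table if dest in net]
    if not matches:
        return None                     # no route entry: the packet is undeliverable
    best = max(matches, key=lambda net: net.prefixlen)   # longest prefix wins
    return str(route_table[best])


routes = {}
# One route entry per cluster node, mapping its container block to the node.
add_route(routes, "192.168.0.0/24", "172.16.0.10")
add_route(routes, "192.168.1.0/24", "172.16.0.11")

print(next_hop(routes, "192.168.1.7"))   # forwarded to the second node
print(next_hop(routes, "192.168.5.7"))   # no entry for this container block
```

If the entry for a node's container block is missing, packets addressed to containers on that node have nowhere to go, which matches the kind of communication failure seen in this troubleshooting case.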

Here, the route entry is added by the routing controller, which writes it to the route table as soon as it detects that a new node has joined the cluster.

Adding a route entry is a VPC operation. Authorization is required to perform this operation because it is similar to accessing cloud resources from a local machine, which always requires authorization.

The routing controller obtains its authorization through the RAM role that is bound to the cluster node on which it runs. A RAM role generally has a series of authorization rules.

Finally, by checking the RAM role, we find that a user modified the authorization rules, which caused the problem. Without the required permission, the routing controller could not add the route entry, so the API Server could not communicate with its extension over the container network, and the namespace could not be deleted.

To learn more about Kubernetes on Alibaba Cloud, visit https://www.alibabacloud.com/product/kubernetes

The views expressed herein are for reference only and don't necessarily represent the official views of Alibaba Cloud.
