By Zhang Tianyi (Chengtan)

First, let's clarify some key definitions to avoid confusion:

This article compares the two open-source implementations in three areas: performance and cost, reliability, and security. It is intended as a reference for enterprises selecting a Kubernetes gateway.

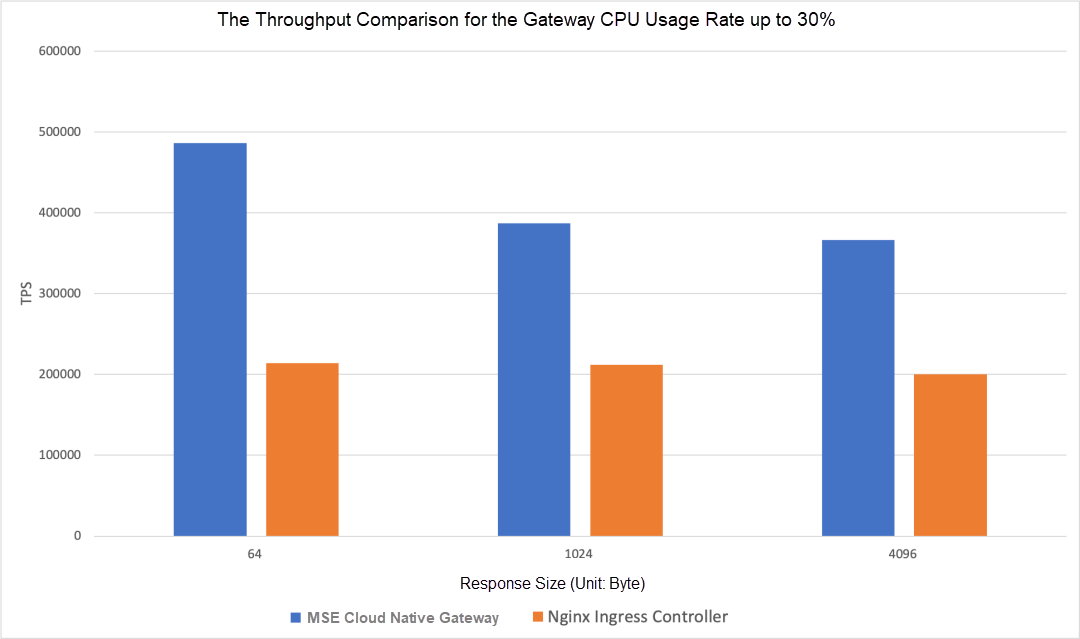

The throughput of the MSE cloud-native gateway is almost double that of the Nginx Ingress Controller, especially when transmitting small text payloads. The following figure compares throughput at a gateway CPU utilization of up to 30%:

Gateway specification: 16 cores, 32 GB × 4 nodes

ECS Model: ecs.c7.8xlarge

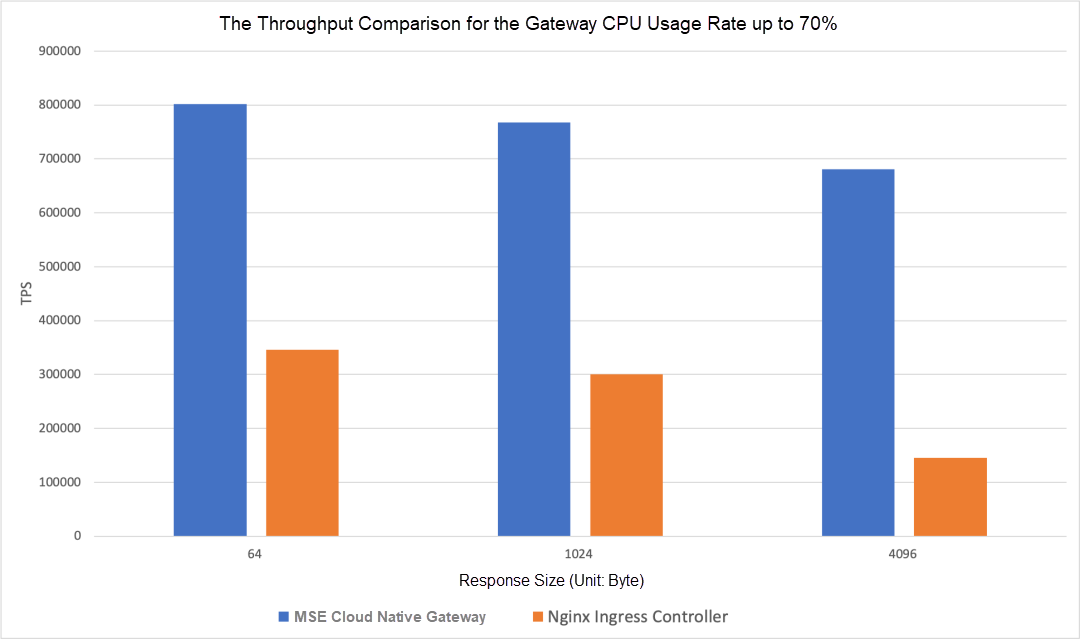

As CPU load increases, the throughput gap widens. The following figure compares throughput when CPU utilization reaches 70%:

The drop in Nginx Ingress Controller throughput under high load is caused by pod restarts. See the reliability analysis later in this article for details.

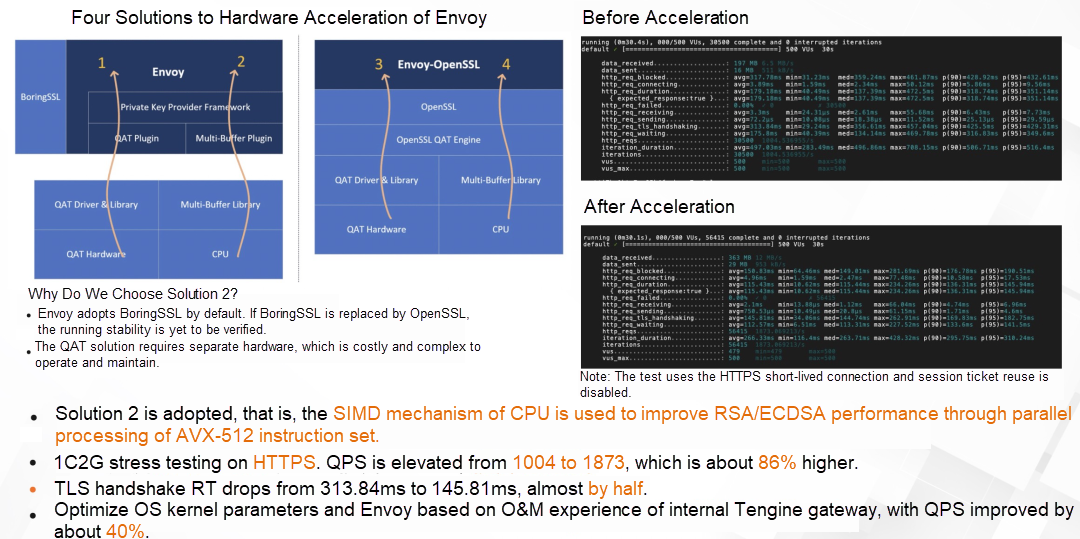

As network security receives more attention, HTTPS is now widely used across the Internet industry for encrypted data transmission. The asymmetric cryptography used in the TLS handshake that underpins HTTPS consumes the most CPU resources on the gateway side. For this reason, the MSE cloud-native gateway uses CPU SIMD technology to hardware-accelerate TLS encryption and decryption:

The stress test data in the preceding figure shows that, for HTTPS requests, TLS hardware acceleration halves the TLS handshake latency and raises the maximum QPS by more than 80%.

Based on the data above, the MSE cloud-native gateway can match the throughput of the Nginx Ingress Controller with roughly half the resources, and hardware acceleration improves HTTPS throughput further.
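To make the "half the resources" reasoning concrete, here is a minimal sizing sketch. The target QPS and per-node throughput figures are hypothetical placeholders, not measurements from this article, and the sketch assumes the "almost double" ratio is exactly 2x.

```python
import math


def nodes_needed(target_qps: float, per_node_qps: float) -> int:
    """Smallest number of gateway nodes whose combined throughput covers the target."""
    if per_node_qps <= 0:
        raise ValueError("per_node_qps must be positive")
    return math.ceil(target_qps / per_node_qps)


# Hypothetical figures for illustration only; they are not taken from the benchmark.
TARGET_QPS = 100_000
NGINX_PER_NODE_QPS = 10_000
# Assumption: treat "almost double" as exactly 2x per-node throughput.
MSE_PER_NODE_QPS = 2 * NGINX_PER_NODE_QPS

nginx_nodes = nodes_needed(TARGET_QPS, NGINX_PER_NODE_QPS)
mse_nodes = nodes_needed(TARGET_QPS, MSE_PER_NODE_QPS)

print(f"Nginx Ingress Controller nodes: {nginx_nodes}")
print(f"MSE cloud-native gateway nodes: {mse_nodes}")
```

Because node counts are rounded up, the saving is not always exactly half; with real benchmark numbers the ratio would follow whatever per-node throughput you measure.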

As mentioned earlier, under high loads, the Nginx Ingress Controller will experience a drop in throughput due to pod restarts. There are two main reasons for pod restarts:

Both problems stem from the deployment architecture of the Nginx Ingress Controller, in which the control plane (a controller implemented in Go) and the data plane (Nginx) run in the same container. Under high load, the data plane and the control plane compete for CPU. The control plane process is responsible for answering the livenessProbe and collecting monitoring metrics, so when CPU is insufficient, requests back up, which leads to OOM and livenessProbe timeouts.
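The link between request backlog and probe timeouts can be illustrated with a toy model. This is a deliberately simplified sketch, not how the Nginx Ingress Controller schedules work internally: it assumes a single FIFO queue with a fixed processing capacity, and all names and numbers are hypothetical. The 1-second default mirrors the Kubernetes default for a probe's `timeoutSeconds`.

```python
def probe_latency_seconds(arrival_qps: float, capacity_qps: float,
                          seconds_under_load: float) -> float:
    """Time a health check would wait behind the accumulated backlog.

    Toy model: work arrives at arrival_qps, is drained at capacity_qps,
    and the probe is queued behind whatever has piled up so far.
    """
    if capacity_qps <= 0:
        raise ValueError("capacity_qps must be positive")
    backlog = max(0.0, (arrival_qps - capacity_qps) * seconds_under_load)
    return backlog / capacity_qps


def probe_times_out(arrival_qps: float, capacity_qps: float,
                    seconds_under_load: float,
                    timeout_seconds: float = 1.0) -> bool:
    """True if the probe would not be answered within the timeout."""
    latency = probe_latency_seconds(arrival_qps, capacity_qps, seconds_under_load)
    return latency > timeout_seconds


# Hypothetical load levels: below capacity the probe is answered immediately,
# above capacity the backlog grows until the probe misses its deadline.
for qps in (8_000, 12_000):
    latency = probe_latency_seconds(qps, capacity_qps=10_000, seconds_under_load=10)
    print(qps, latency, probe_times_out(qps, 10_000, 10))
```

In this model a sustained overload of any size eventually fails the probe, which is why isolating health checks from the overloaded data path matters.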

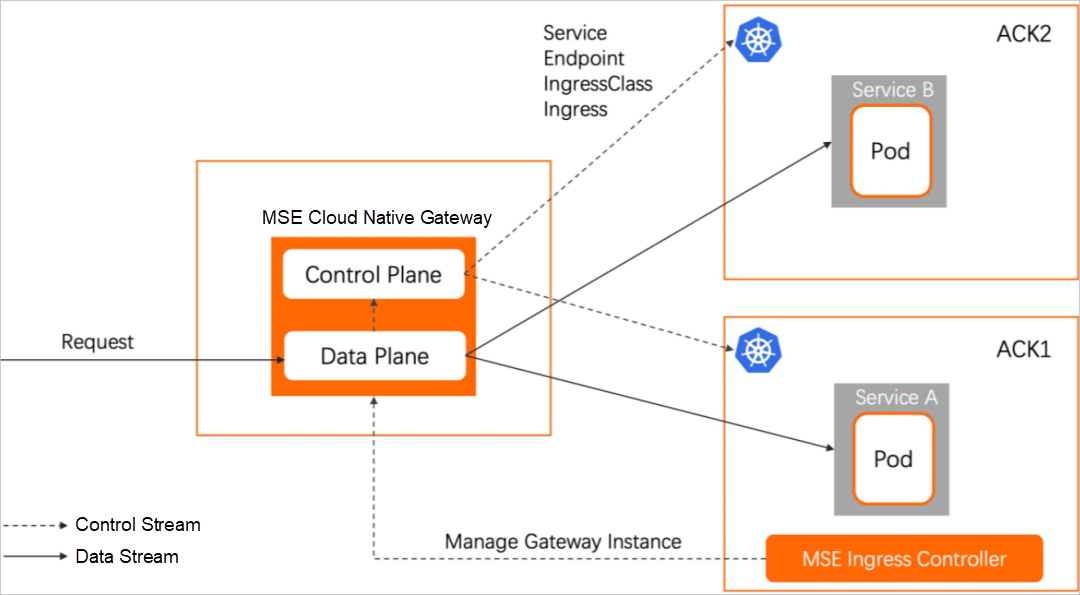

This is extremely dangerous and can cause an avalanche effect on the gateway under high loads, which has a serious impact on the business. The MSE cloud-native gateway uses an architecture in which the data plane and the control plane are isolated. This architecture has an advantage in reliability:

As shown in the figure above, the MSE cloud-native gateway is not deployed in the user's Kubernetes cluster but runs in a fully managed mode. This mode brings further reliability advantages:

To deploy the Nginx Ingress Controller with high reliability, it must occupy ECS nodes exclusively, and multiple ECS nodes must be deployed to avoid a single point of failure, so the resource cost increases sharply. In addition, because the Nginx Ingress Controller is deployed in the user's cluster, there is no SLA for gateway availability.

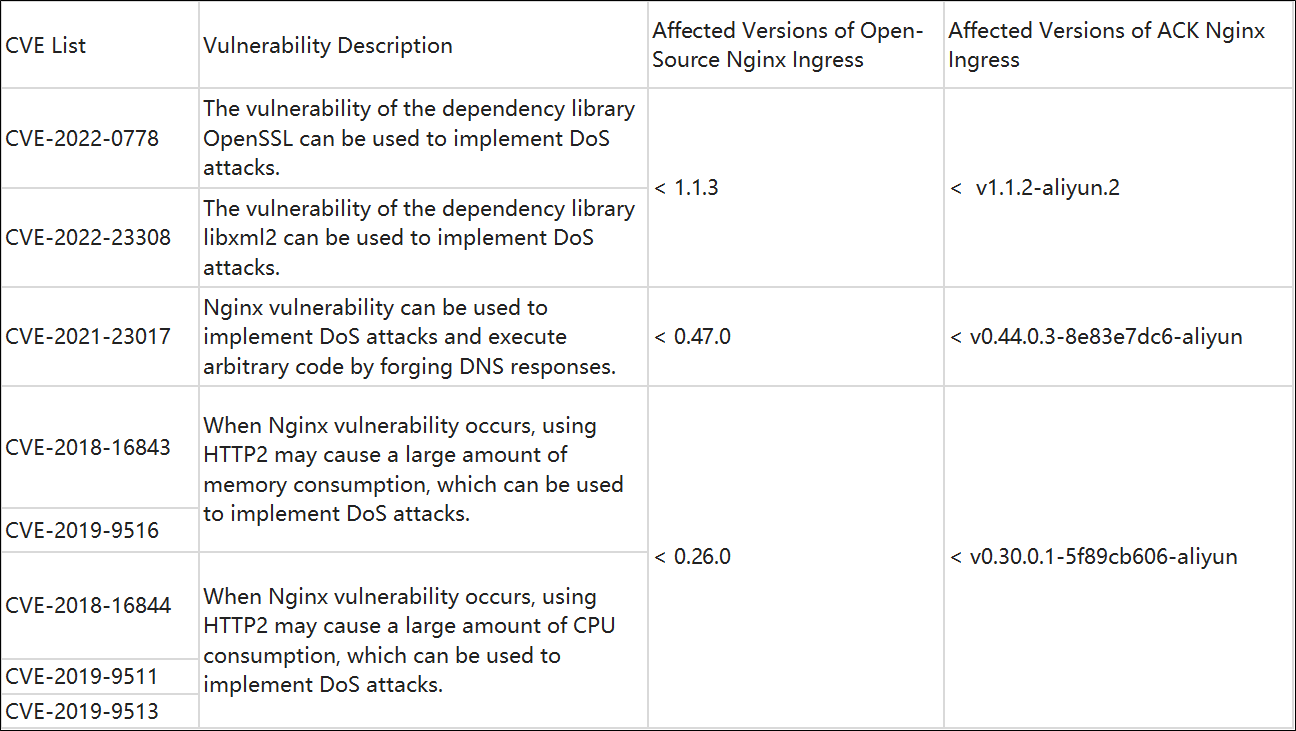

Different versions of the Nginx Ingress Controller still have some potential CVEs. Please see the following table for specific affected versions:

After switching from the Nginx Ingress Controller to the MSE cloud-native gateway, all of these CVEs are resolved at once. In addition, the MSE cloud-native gateway provides a smooth upgrade solution: when a new security vulnerability is disclosed, the gateway version can be upgraded quickly while keeping the impact on the business to a minimum.

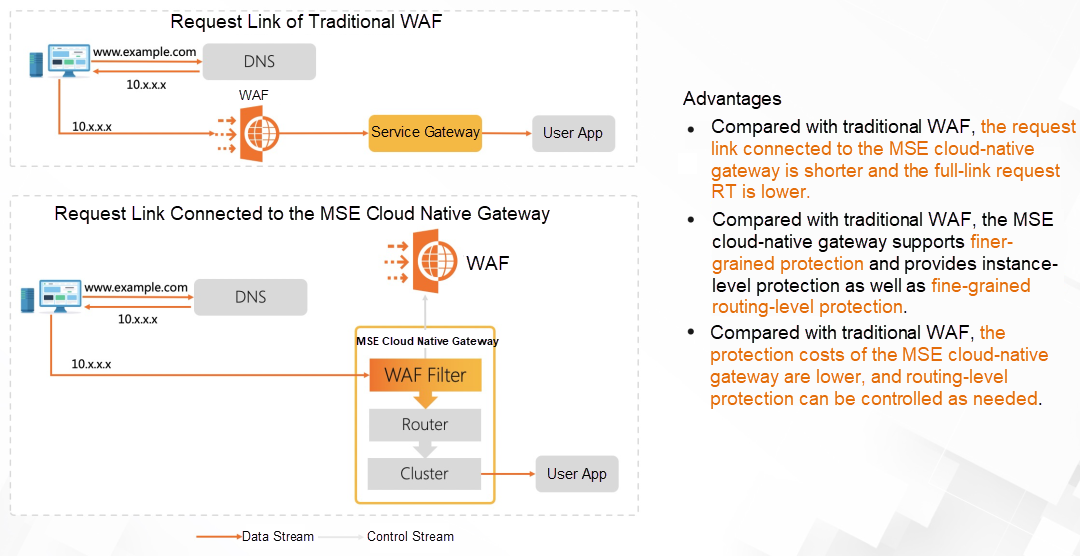

In addition, the MSE cloud-native gateway has a built-in Alibaba Cloud Web Application Firewall (WAF). Compared with a traditional WAF, the request path is shorter and the response time (RT) is lower. Compared with the Nginx Ingress Controller, it can provide fine-grained, route-level protection. The usage cost is two-thirds that of the current Alibaba Cloud WAF architecture.

The MSE cloud-native gateway is available in the Alibaba Cloud Container Application Market and can replace the Nginx Ingress Controller, the gateway component installed by default.

The MSE cloud-native gateway has been used on a large scale as a gateway middleware within Alibaba Group. Its strong performance and reliable stability have been verified throughout the many years of service during the Double 11 Global Shopping Festival.

In the scenario of the Kubernetes container service, compared with the default Nginx Ingress Controller, the MSE cloud-native gateway has the following advantages:

The MSE cloud-native gateway provides more features for routing policies, canary (grayscale) release governance, and observability. It also supports developing custom extension plugins in multiple languages. See the product documentation for more information.

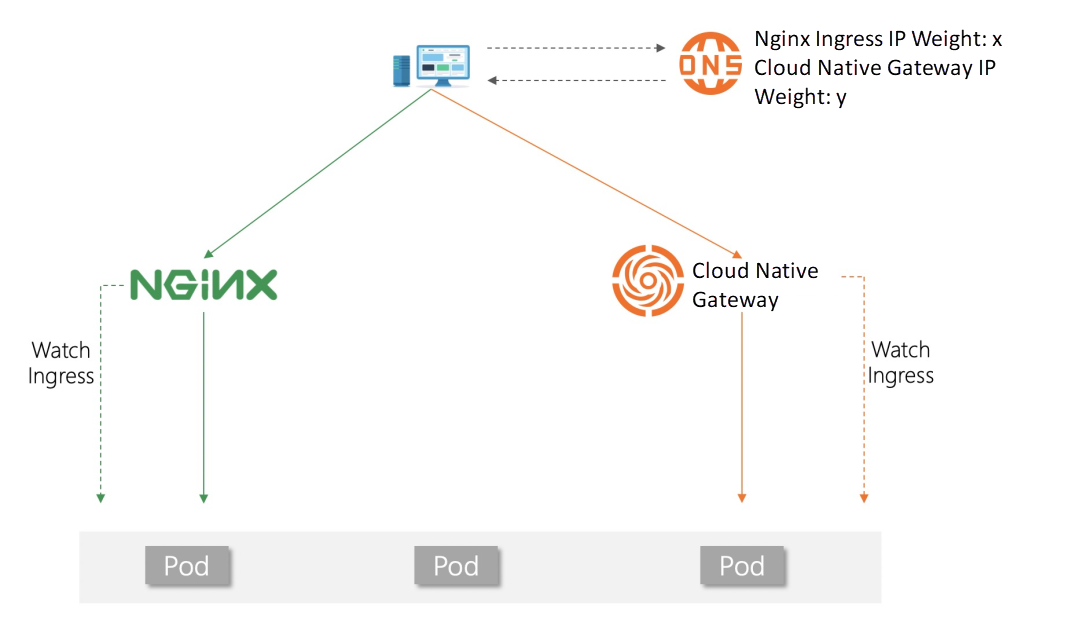

Deploying an MSE cloud-native gateway does not directly affect traffic on the original gateway. You can use DNS weight configuration to migrate business traffic smoothly, transparently to the backend services. The following figure shows the core traffic migration process:

The complete steps are listed below:
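As a rough illustration of the DNS-weight idea only (not a substitute for the product's documented steps), the sketch below simulates shifting traffic from the old gateway to the new one in equal increments. The gateway labels, the step count, and the helper names are all hypothetical; a real migration would set the weights in your DNS provider and watch error rates before each step.

```python
import random
from collections import Counter

# Hypothetical labels standing in for the two DNS records.
OLD_GATEWAY = "nginx-ingress"
NEW_GATEWAY = "mse-gateway"


def migration_schedule(steps: int):
    """Yield (old_weight, new_weight) pairs that move traffic in equal increments."""
    if steps < 1:
        raise ValueError("steps must be at least 1")
    for i in range(steps + 1):
        new_weight = round(100 * i / steps)
        yield 100 - new_weight, new_weight


def resolve(old_weight: int, new_weight: int, rng: random.Random) -> str:
    """Pick a gateway the way a weighted DNS answer would, on average."""
    return rng.choices([OLD_GATEWAY, NEW_GATEWAY],
                       weights=[old_weight, new_weight])[0]


# Simulate 10,000 lookups at each stage of a four-step migration.
rng = random.Random(42)
for old_w, new_w in migration_schedule(4):
    hits = Counter(resolve(old_w, new_w, rng) for _ in range(10_000))
    print(f"weights {old_w}:{new_w} -> {dict(hits)}")
```

Small increments keep the blast radius low: if the new gateway misbehaves at a 25% weight, setting the weight back to 0 restores the original traffic path, subject to DNS caching and TTLs.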
