By Queyue

As one of the four major technical directions of Alibaba's Frontend Committee, the frontend intelligent project created tremendous value during the 2019 Double 11 Shopping Festival: it automatically generated 79.34% of the code for new modules on Taobao and Tmall. Along the way, the R&D team ran into many difficulties and gave a lot of thought to how to solve them. In the series "Intelligently Generate Frontend Code from Design Files," we discuss the technologies and ideas behind the frontend intelligent project.

A typical development cycle moves from product requirements to interaction files, design files, and finally frontend development. In the Design2Code (D2C) project, designers create the product's visuals and produce design files, and frontend developers then write code based on those files. To replace manual analysis, image editing, and other tedious work, intelligent frontend development needs the ability to parse design file information automatically. In recent years, the plugin APIs of mainstream design tools such as Sketch, Photoshop, and Adobe XD have become steadily more capable. We can therefore use these official APIs to extract raw information from a design file and restore its basic structural and style information. In this article, we take the Sketch plugin of imgcook (a frontend intelligent product we developed) as an example. It shows how a plugin processes design files and exports element information for downstream layout algorithms based on absolute positioning.

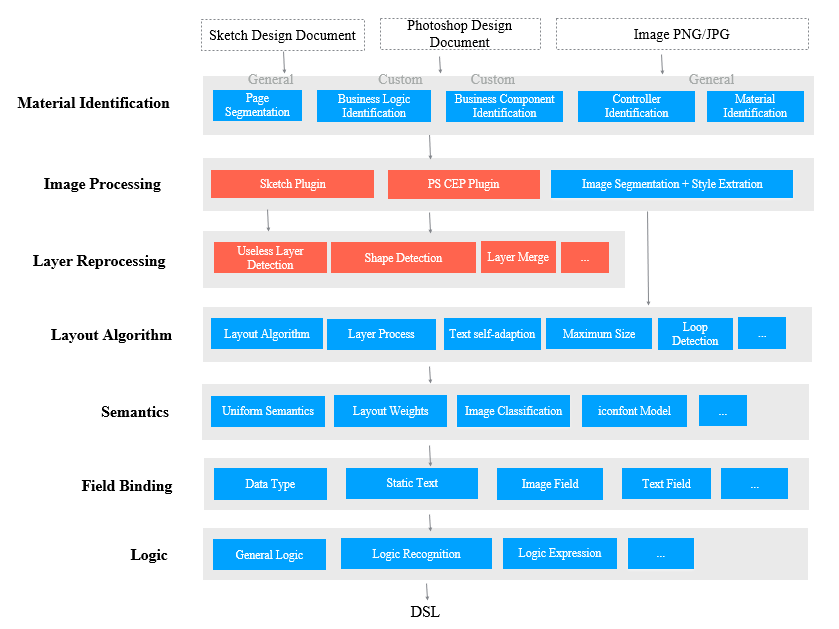

As the following figure shows, the first layer in the process is the material identification layer. It takes the design file as input and identifies materials in pages and modules, such as basic components, business components, and basic controls. This aids page segmentation and passes relevant element information downstream. The design file's raw information (document) is then delivered to the layer processing layer, which is implemented by the plugin described in this article.

Using the capabilities of the design software, plugins installed in tools such as Sketch or Photoshop extract raw information from the design file. This layer outputs JSON data that conforms to the imgcook specification. The data contains the relevant information about every element, such as its absolute position and CSS attributes. The result describes modules and pages in terms of absolute positioning.

Designers often organize layers and structure according to conventions that differ from those of frontend development. For this reason, the layer reprocessing layer also applies computer vision and other intelligent capabilities to reprocess the layers in the original design. For example, it can filter out redundant layers or merge layers that should be treated as a whole.

Hierarchical architecture of D2C technology

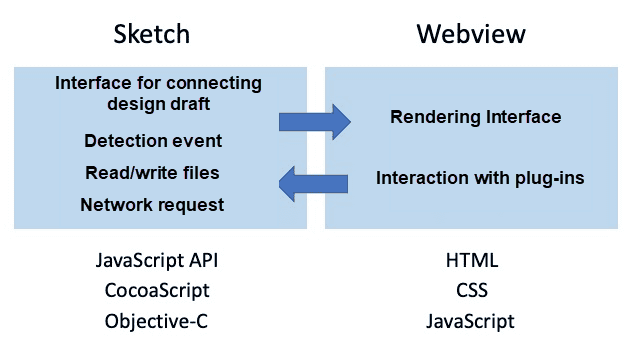

As the following figure shows, we use Sketch's JS API to access the original design file and read information from it. For features the JS API does not offer, we use CocoaScript to call the native Objective-C interfaces. The plugin interface is rendered in a WebView, so it can be built with the frontend technology stack. We use Sketch Plugin Manager (SKPM) to create and publish the plugin.

Plugin selection

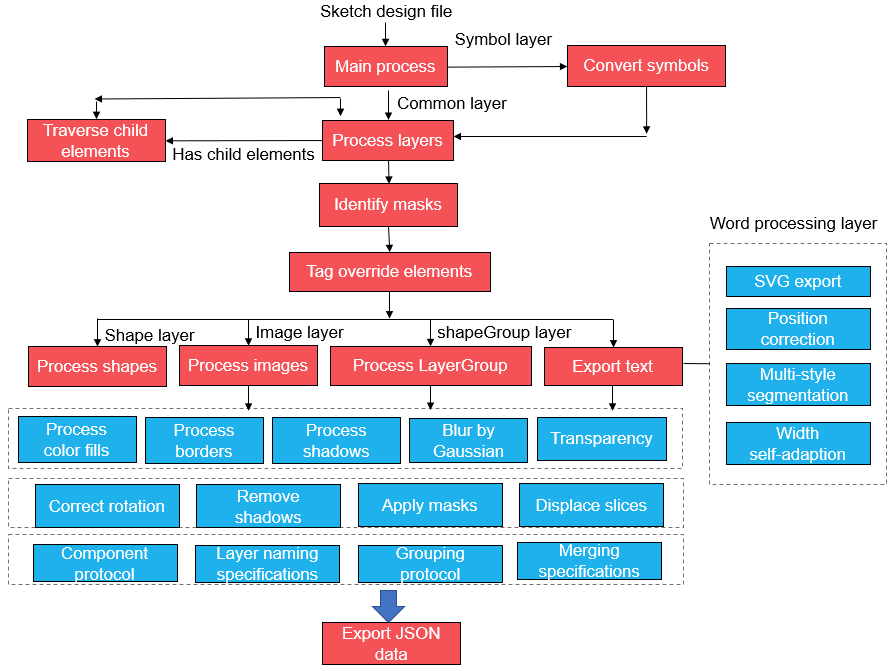

Plugin layer processing flowchart

As the flowchart shows, imgcook's Sketch plugin reads the design file and traverses layers of all types with depth-first search (DFS). For each layer it extracts basic information, such as position and size. Note that a Symbol instance in Sketch behaves like a subclass of its Symbol master: it inherits the master's content but can override some of its properties. For Symbol layers, we must therefore locate the Symbol master to extract the corresponding information.
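The Python sketch below makes the traversal concrete. It does not use Sketch's JS API. `Layer`, `extract`, and the override format are hypothetical stand-ins that model only two ideas:

- depth-first traversal that accumulates absolute positions;
- Symbol instances that read their content from a master while applying overrides.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional

Overrides = Dict[str, Dict[str, str]]  # target layer id -> overridden properties


@dataclass
class Layer:
    """Hypothetical, simplified layer model; not the real Sketch JS API."""
    id: str
    type: str  # e.g. "Group", "Text", "SymbolMaster", "SymbolInstance"
    x: float   # position relative to the parent layer
    y: float
    width: float
    height: float
    props: Dict[str, str] = field(default_factory=dict)
    children: List["Layer"] = field(default_factory=list)
    master_id: Optional[str] = None  # only set on SymbolInstance layers
    overrides: Overrides = field(default_factory=dict)


def extract(layer: Layer, masters: Dict[str, Layer], ox: float = 0.0,
            oy: float = 0.0, overrides: Optional[Overrides] = None) -> List[dict]:
    """Depth-first traversal recording each layer's absolute frame and props."""
    overrides = overrides or {}
    ax, ay = ox + layer.x, oy + layer.y
    # The layer's own props, patched by any override that targets it.
    props = {**layer.props, **overrides.get(layer.id, {})}
    rows = [{"id": layer.id, "type": layer.type, "x": ax, "y": ay,
             "width": layer.width, "height": layer.height, "props": props}]
    children = layer.children
    if layer.type == "SymbolInstance":
        # An instance borrows its content from the master; overrides set by an
        # enclosing instance take precedence over this instance's own overrides.
        children = masters[layer.master_id].children
        overrides = {**layer.overrides, **overrides}
    for child in children:  # finish each subtree before the next sibling (DFS)
        rows.extend(extract(child, masters, ax, ay, overrides))
    return rows


# Usage: a button symbol whose label is overridden on the page.
master = Layer("m1", "SymbolMaster", 0, 0, 80, 30,
               children=[Layer("t1", "Text", 5, 5, 70, 20, {"text": "Buy"})])
button = Layer("i1", "SymbolInstance", 100, 200, 80, 30,
               master_id="m1", overrides={"t1": {"text": "Sold out"}})
page = Layer("root", "Group", 0, 0, 375, 667, children=[button])
rows = extract(page, {"m1": master})
print(rows[-1])  # the label: absolute x=105.0, y=205.0, text "Sold out"
```

Passing the accumulated origin down the recursion keeps each layer's coordinates relative to its parent, the way design tools store them, while still producing the absolute positions the layout algorithm needs.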

After extracting layer information, we mark every element that is affected by a mask or covered by other layers. This keeps masks and overlapping layers from distorting the visual output of the current layer. Because each layer type has its own styling, we process shape, image, text, and other layers separately and convert the relevant Sketch properties into CSS attributes. imgcook also defines design protocols for designers to follow. By giving layers specific names, designers can mark them as groups or components and pass component information to the plugin. Let's look at two challenges in this process: masks and text.
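The sketch below shows the property-to-CSS conversion for shape layers. The input dictionary and its keys (`fill`, `border`, `corner_radius`, `opacity`) are hypothetical. The only assumption taken from Sketch is that color channels are stored as floats between 0 and 1. This is an illustration, not imgcook's converter.

```python
from typing import Dict


def css_color(color: Dict[str, float]) -> str:
    """Convert a color with 0-1 float channels to a CSS rgba() string."""
    r, g, b = (round(color[ch] * 255) for ch in ("red", "green", "blue"))
    return f"rgba({r}, {g}, {b}, {color.get('alpha', 1.0):g})"


def shape_to_css(shape: dict) -> Dict[str, str]:
    """Map a few (hypothetical) shape properties to CSS declarations."""
    css = {
        "position": "absolute",
        "left": f"{shape['x']:g}px",
        "top": f"{shape['y']:g}px",
        "width": f"{shape['width']:g}px",
        "height": f"{shape['height']:g}px",
    }
    if "fill" in shape:
        css["background-color"] = css_color(shape["fill"])
    if "border" in shape:
        border = shape["border"]
        # Assumes an inside border; Sketch also supports center/outside borders,
        # which would need extra size/offset adjustments not handled here.
        css["box-sizing"] = "border-box"
        css["border"] = f"{border['thickness']:g}px solid {css_color(border['color'])}"
    if shape.get("corner_radius", 0) > 0:
        css["border-radius"] = f"{shape['corner_radius']:g}px"
    if shape.get("opacity", 1.0) < 1.0:
        css["opacity"] = f"{shape['opacity']:g}"
    return css


card = {"x": 12, "y": 40, "width": 351, "height": 120, "corner_radius": 8,
        "fill": {"red": 1, "green": 1, "blue": 1},
        "border": {"thickness": 1,
                   "color": {"red": 1, "green": 0.2, "blue": 0, "alpha": 0.5}}}
print(shape_to_css(card))
```

Each property is handled independently, so supporting another one (shadows or gradients, for example) means adding one more branch.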

Masks in Sketch act as a base layer: any part of the layers above a mask that falls outside it is clipped. Masks differ from other layers in the following aspects:

To tackle these challenges and restore the visuals accurately, we developed an algorithm that calculates the mask's area and shape and finds all the layers it affects. If both the area and the shape are regular, we crop with standard CSS attributes. When CSS attributes cannot describe the area to be cropped, we take a screenshot of the visible area in the Sketch design file. This process also detects redundant layers: a layer that falls entirely outside its mask area is redundant and is deleted. A layer that falls entirely within its mask area is not affected by the mask and is processed with the normal logic.
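The decision logic in this paragraph can be sketched with bounding boxes. This is a simplified illustration with these assumptions:

- `Box`, `handle_masked_layer`, and the `mask_is_rect` flag are hypothetical.
- Every shape is approximated by its bounding box.
- Regular cropping is expressed with CSS `clip-path: inset(...)`. That is one possible choice of CSS attribute, not necessarily the one imgcook uses.

```python
from dataclasses import dataclass
from typing import Dict, Tuple


@dataclass(frozen=True)
class Box:
    x: float
    y: float
    w: float
    h: float

    @property
    def right(self) -> float:
        return self.x + self.w

    @property
    def bottom(self) -> float:
        return self.y + self.h


def overlaps(a: Box, b: Box) -> bool:
    return a.x < b.right and b.x < a.right and a.y < b.bottom and b.y < a.bottom


def contains(outer: Box, inner: Box) -> bool:
    return (outer.x <= inner.x and outer.y <= inner.y
            and inner.right <= outer.right and inner.bottom <= outer.bottom)


def handle_masked_layer(layer: Box, mask: Box,
                        mask_is_rect: bool) -> Tuple[str, Dict[str, str]]:
    """Decide how to export a layer that sits above a mask (hypothetical helper)."""
    if not overlaps(layer, mask):
        return "delete", {}      # entirely outside the mask: redundant layer
    if contains(mask, layer):
        return "keep", {}        # entirely inside: the mask has no effect
    if mask_is_rect:
        # Regular case: clip with CSS. inset() offsets are measured inward
        # from each edge of the layer itself.
        top = max(0.0, mask.y - layer.y)
        right = max(0.0, layer.right - mask.right)
        bottom = max(0.0, layer.bottom - mask.bottom)
        left = max(0.0, mask.x - layer.x)
        return "clip", {"clip-path": f"inset({top:g}px {right:g}px {bottom:g}px {left:g}px)"}
    return "screenshot", {}      # irregular mask: export the visible pixels


mask = Box(0, 0, 100, 100)
print(handle_masked_layer(Box(80, -10, 40, 40), mask, mask_is_rect=True))
```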

Text layers are a bit more complex to process than shape and image layers for several reasons:

To solve the first problem, we iterate over the SVG information and check whether the CSS-related attributes change from one SVG child node to the next. Whenever they change, we create a new child node to store the information. To solve the second problem, we export the exact width and the number of lines of each fixed-width text box. The layout algorithm then checks this information for accuracy. The third problem needs more explanation. Because the SVG information of rich text may be inaccurate, we designed a computer-vision-based algorithm to correct the text box's baseline. The process is as follows:
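Returning to the first problem, the cyclic check over SVG child nodes can be sketched as a single pass. The pass starts a new node whenever the CSS-relevant attributes change. Several parts are assumptions made for illustration:

- the `(text, attributes)` input format;
- the `split_text_runs` name;
- the set of compared attributes in `STYLE_KEYS`.

The sketch does not cover the baseline-correction algorithm.

```python
from typing import Dict, List, Tuple

# CSS-relevant attributes compared between neighbouring SVG child nodes (assumed set).
STYLE_KEYS = ("font-family", "font-size", "font-weight", "fill", "letter-spacing")


def split_text_runs(spans: List[Tuple[str, Dict[str, str]]]) -> List[dict]:
    """Walk SVG text child nodes in order; open a new node whenever the style changes."""
    nodes: List[dict] = []
    for text, attrs in spans:
        # Keep only style attributes; positional ones such as "x" are ignored.
        style = {k: attrs[k] for k in STYLE_KEYS if k in attrs}
        if nodes and nodes[-1]["style"] == style:
            nodes[-1]["text"] += text  # same style: extend the current run
        else:
            nodes.append({"text": text, "style": style})
    return nodes


price = [
    ("Only ", {"font-size": "12", "fill": "#333333"}),
    ("$", {"font-size": "12", "fill": "#FF4400"}),
    ("99", {"font-size": "24", "fill": "#FF4400", "x": "40"}),
    (".00", {"font-size": "24", "fill": "#FF4400", "x": "66"}),
]
for node in split_text_runs(price):
    print(node)
```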

Layer merging is another point of friction between design files and frontend development. Unlike frontend developers, designers focus on achieving the desired visual effect rather than on a rational element structure and nesting. As a result, a designer may piece several small layers together to create an icon or an atmosphere image. Structurally, however, these pieces should be treated as a single graphic, so we need to merge them into one layer and export it as a screenshot. To do this, we use an algorithm that automatically detects whether layers should be merged into a group and, if so, merges them during export for subsequent processing.
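The article does not describe how the merge detection works. One plausible heuristic, shown here only as an assumption, groups small graphic layers whose bounding boxes overlap or nearly touch, using union-find. `merge_candidates`, the `gap` tolerance, and the `max_side` size limit are all hypothetical.

```python
from typing import Dict, List, Tuple

Box = Tuple[float, float, float, float]  # x, y, width, height


def touching(a: Box, b: Box, gap: float) -> bool:
    """True if two boxes overlap or lie within `gap` pixels of each other."""
    return (a[0] <= b[0] + b[2] + gap and b[0] <= a[0] + a[2] + gap
            and a[1] <= b[1] + b[3] + gap and b[1] <= a[1] + a[3] + gap)


def merge_candidates(boxes: List[Box], gap: float = 1.0,
                     max_side: float = 64.0) -> List[List[int]]:
    """Group small graphic layers that touch each other, using union-find."""
    parent = list(range(len(boxes)))

    def find(i: int) -> int:
        while parent[i] != i:
            parent[i] = parent[parent[i]]  # path halving
            i = parent[i]
        return i

    # Only icon-sized layers are candidates; large images stay separate.
    small = [i for i, b in enumerate(boxes) if max(b[2], b[3]) <= max_side]
    for k, i in enumerate(small):
        for j in small[k + 1:]:
            if touching(boxes[i], boxes[j], gap):
                parent[find(i)] = find(j)

    groups: Dict[int, List[int]] = {}
    for i in small:
        groups.setdefault(find(i), []).append(i)
    return sorted(g for g in groups.values() if len(g) > 1)


icon_parts = [(0, 0, 10, 10), (10, 0, 10, 10), (5, 5, 4, 4),
              (100, 100, 20, 20), (300, 0, 200, 200), (119, 100, 5, 5)]
print(merge_candidates(icon_parts))  # [[0, 1, 2], [3, 5]]
```

Union-find makes the grouping transitive: if A touches B and B touches C, all three end up in one group even when A and C are apart.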

A designer may add layers that do not impact the final layout or visuals in a design file. Consequently, we need to filter out these layers to rationalize and streamline the structure. Layers are checked and processed in the following ways:
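The sketch below illustrates this kind of filtering by pruning a layer tree bottom-up. The rules in `is_redundant` are assumptions chosen for the example, not imgcook's actual checklist:

- hidden;
- fully transparent;
- zero-sized;
- outside the artboard;
- an empty group.

The layer dictionaries use hypothetical keys with artboard-relative coordinates.

```python
from typing import List


def is_redundant(layer: dict, art_w: float, art_h: float) -> bool:
    """Assumed redundancy rules, for illustration only."""
    if not layer.get("visible", True) or layer.get("opacity", 1.0) <= 0:
        return True  # never visible
    if layer["width"] <= 0 or layer["height"] <= 0:
        return True  # degenerate size
    x, y = layer["x"], layer["y"]
    if x >= art_w or y >= art_h or x + layer["width"] <= 0 or y + layer["height"] <= 0:
        return True  # entirely outside the artboard
    if layer.get("type") == "Group" and not layer["children"]:
        return True  # group with nothing left inside
    return False


def prune(layers: List[dict], art_w: float, art_h: float) -> List[dict]:
    """Clean children first, so groups emptied by pruning are dropped too."""
    kept = []
    for layer in layers:
        layer = {**layer, "children": prune(layer.get("children", []), art_w, art_h)}
        if not is_redundant(layer, art_w, art_h):
            kept.append(layer)
    return kept


page = [
    {"id": "title", "type": "Text", "x": 20, "y": 20, "width": 200, "height": 30},
    {"id": "draft", "type": "Shape", "x": 0, "y": 0, "width": 50, "height": 50, "visible": False},
    {"id": "ghost", "type": "Shape", "x": 0, "y": 0, "width": 50, "height": 50, "opacity": 0},
    {"id": "offscreen", "type": "Image", "x": 400, "y": 0, "width": 80, "height": 80},
    {"id": "wrapper", "type": "Group", "x": 0, "y": 100, "width": 100, "height": 100,
     "children": [{"id": "hidden", "type": "Shape", "x": 0, "y": 100,
                   "width": 10, "height": 10, "visible": False}]},
]
print([layer["id"] for layer in prune(page, 375, 667)])  # ['title']
```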

Because the Sketch plugin sits at the upstream end of imgcook's intelligent code generation system, the plugin must be tested rigorously before every code change and release to ensure its functionality. We use SKPM-Test, a unit test system with a Jest-like test framework built under SKPM. It covers 95% of the plugin's functionality.

As mentioned above, the information exported by the Sketch plugin contains each child node's absolute position and related CSS attributes, and each node's attributes and type map to corresponding HTML code. We can therefore convert the exported JSON data into HTML and CSS code to view the result. You can paste an exported JSON string into https://imgcook.taobao.org/design-restore to see the restored effect.
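A minimal sketch of that JSON-to-HTML conversion follows. The node schema is hypothetical, not the imgcook specification. It uses `type`, page-absolute `x`/`y`, `width`, `height`, `style`, `text`, `src`, and `children`. Each child is absolutely positioned inside its parent element, so the sketch subtracts the parent's origin from the child's page coordinates.

```python
import html


def render(node: dict, parent_x: float = 0, parent_y: float = 0) -> str:
    """Render an exported node and its children as absolutely positioned HTML.

    Assumes (hypothetically) that every node carries page-absolute x/y plus
    width/height, a type, optional CSS in "style", and optional "children".
    """
    x, y = node["x"], node["y"]
    style = {
        "position": "absolute",
        # Children are positioned inside their parent, so subtract its origin.
        "left": f"{x - parent_x:g}px",
        "top": f"{y - parent_y:g}px",
        "width": f"{node['width']:g}px",
        "height": f"{node['height']:g}px",
        **node.get("style", {}),
    }
    css = html.escape("; ".join(f"{k}: {v}" for k, v in style.items()))
    if node["type"] == "image":
        return f'<img src="{html.escape(node["src"])}" style="{css}">'
    tag = "span" if node["type"] == "text" else "div"
    inner = html.escape(node.get("text", ""))
    inner += "".join(render(child, x, y) for child in node.get("children", []))
    return f'<{tag} style="{css}">{inner}</{tag}>'


module = {"type": "view", "x": 0, "y": 0, "width": 375, "height": 100, "children": [
    {"type": "text", "x": 20, "y": 30, "width": 100, "height": 20,
     "text": "Hi & welcome", "style": {"color": "#333", "font-size": "14px"}},
    {"type": "image", "x": 300, "y": 20, "width": 60, "height": 60, "src": "logo.png"},
]}
print(render(module))
```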

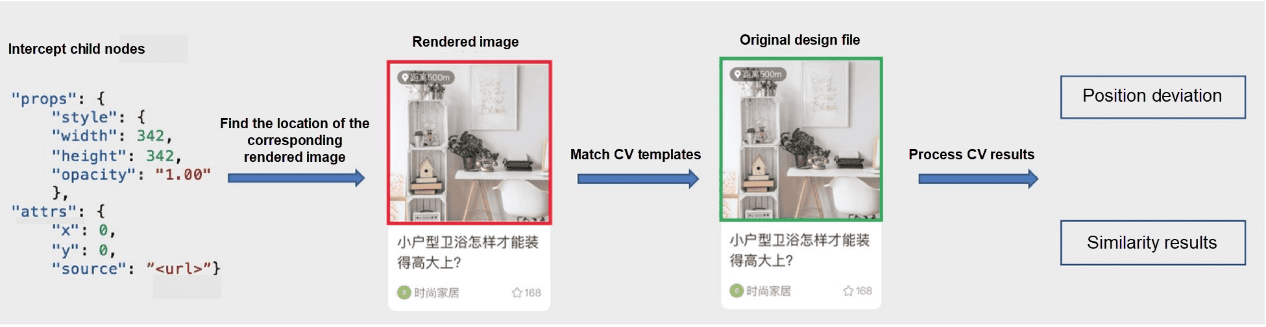

Visual measurement process of the plugin

We developed an algorithm with OpenCV (a computer vision library) to measure how faithfully the exported JSON data restores the visual effects of the original design file.
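The article does not say how per-layer similarity and position are computed. One common approach, assumed here, is template matching: crop a layer from the original rendering and search for its best-matching position in the restored rendering. OpenCV's `cv2.matchTemplate` with `cv2.TM_CCOEFF_NORMED` computes this zero-mean normalized cross-correlation efficiently. The dependency-free sketch below does the same by brute force on tiny grayscale images. `compare_layer` and its return format are hypothetical.

```python
import math
from typing import List, Tuple

Image = List[List[float]]  # grayscale pixels, indexed image[row][col]


def ncc(image: Image, tmpl: Image, ox: int, oy: int) -> float:
    """Zero-mean normalized cross-correlation of `tmpl` placed at (ox, oy)."""
    a = [image[oy + r][ox + c] for r in range(len(tmpl)) for c in range(len(tmpl[0]))]
    b = [v for row in tmpl for v in row]
    ma, mb = sum(a) / len(a), sum(b) / len(b)
    num = sum((p - ma) * (q - mb) for p, q in zip(a, b))
    den = math.sqrt(sum((p - ma) ** 2 for p in a) * sum((q - mb) ** 2 for q in b))
    return num / den if den else 0.0  # flat patches carry no information


def locate(image: Image, tmpl: Image) -> Tuple[float, int, int]:
    """Exhaustive search for the best match; returns (score, x, y)."""
    best = (-1.0, 0, 0)
    for oy in range(len(image) - len(tmpl) + 1):
        for ox in range(len(image[0]) - len(tmpl[0]) + 1):
            score = ncc(image, tmpl, ox, oy)
            if score > best[0]:
                best = (score, ox, oy)
    return best


def compare_layer(original: Image, restored: Image,
                  x: int, y: int, w: int, h: int) -> Tuple[float, int, int]:
    """Return (similarity C, dx, dy) for the layer at (x, y, w, h) in the original."""
    tmpl = [row[x:x + w] for row in original[y:y + h]]
    score, rx, ry = locate(restored, tmpl)
    return max(0.0, score), abs(rx - x), abs(ry - y)


original = [[0.0] * 8 for _ in range(8)]
restored = [[0.0] * 8 for _ in range(8)]
for (r, c), v in {(0, 0): 1, (0, 1): 5, (1, 0): 9, (1, 1): 2}.items():
    original[1 + r][1 + c] = v  # layer drawn at (1, 1) in the design
    restored[3 + r][4 + c] = v  # same layer rendered at (4, 3) after restoration
print(compare_layer(original, restored, 1, 1, 2, 2))  # (1.0, 3, 2)
```

Normalizing by the patch means and variances makes the score insensitive to uniform brightness and contrast changes. It therefore reflects shape more than exact pixel values.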

The algorithm calculates the visual restoration score in the following steps:

Preprocess layers.

Analyze the exported JSON data and perform the following operations on each child element in the JSON data:

Each JSON array contains the following properties of an element:

After computer vision locates each layer in both the original and the restored rendering and compares their similarity, we take the following metrics into account to measure the restoration score:

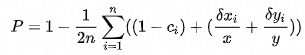

Based on these three metrics, we design the following formula to calculate the restoration score:

Here, P is the restoration score, n is the total number of layers, and Cᵢ is the similarity between the i-th restored layer and the i-th original layer. dxᵢ is the horizontal offset between the i-th restored layer and the i-th original layer, and x is the total width. dyᵢ is the vertical offset between the i-th restored layer and the i-th original layer, and y is the total height. According to this formula, if no layer is restored (all similarities are 0), then P = 0. If all layers are restored perfectly (all similarities are 1 and all horizontal and vertical offsets are 0), then P = 1. The score therefore measures the accuracy of visual restoration.
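The formula itself is not reproduced in this text. Purely as an illustration, P = (1/n) Σᵢ Cᵢ (1 − dxᵢ/x)(1 − dyᵢ/y) has every property described above: it is 0 when all similarities are 0 and 1 when every layer matches with zero offset. It is our assumption, not necessarily the authors' formula. `restoration_score` and its input format are hypothetical.

```python
from typing import Sequence, Tuple


def restoration_score(layers: Sequence[Tuple[float, float, float]],
                      total_width: float, total_height: float) -> float:
    """ASSUMED formula: P = (1/n) * sum(C_i * (1 - |dx_i|/x) * (1 - |dy_i|/y)).

    `layers` holds one (C_i, dx_i, dy_i) tuple per layer. Similarities are
    clamped to [0, 1] and each shift factor to [0, 1], so P stays in [0, 1].
    """
    if not layers:
        return 0.0
    total = 0.0
    for c, dx, dy in layers:
        keep_x = max(0.0, 1.0 - abs(dx) / total_width)   # penalty for horizontal shift
        keep_y = max(0.0, 1.0 - abs(dy) / total_height)  # penalty for vertical shift
        total += min(1.0, max(0.0, c)) * keep_x * keep_y
    return total / len(layers)


print(restoration_score([(1.0, 0, 0), (1.0, 0, 0)], 750, 1334))  # 1.0
print(restoration_score([(0.0, 5, 5)], 750, 1334))               # 0.0
print(restoration_score([(1.0, 75, 0), (0.5, 0, 0)], 750, 1334))  # about 0.7
```

Multiplying the factors means a layer only scores well when it both looks right and sits in the right place. A perfect match shifted by 10% of the width loses 10% of its credit.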

To achieve better restoration results, we defined design specifications for designers to follow when producing design files. The list once grew to around twenty specifications, but intelligent layer processing has since reduced it to three. Next, we will use intelligent technologies to gradually remove these restrictions for designers and frontend developers.

According to our measurement system, we currently achieve an average restoration rate of around 95% for Sketch design files. We will continue to improve the plugin's visual restoration performance, including for future Sketch versions.

Because the plugin performs a large amount of processing, export can sometimes be slow. Our investigation found that uploads are slow when the plugin processes many layers, especially layers that contain many images. In the future, we aim to improve export speed significantly.

With the rapid development of technology, frontend intelligence has made many advances. Machines will gradually take over simple, repetitive, rule-based development work, but to do so they need a better understanding of design files. We hope the plugin will become more intelligent, understand designers' intent accurately, and better serve frontend development.
