By Xiaodi

As one of the four major technical directions of the Frontend Committee of Alibaba, the frontend intelligent project created tremendous value during the 2019 Double 11 Shopping Festival: it automatically generated 79.34% of the code for Taobao's and Tmall's new modules. Along the way, the R&D team ran into many difficulties and put a lot of thought into how to solve them. In the series "Intelligently Generate Frontend Code from Design Files," we talk about the technologies and ideas behind the frontend intelligent project.

imgcook works like an ingenious chef whose ingredients are visual design files: Sketch files, Photoshop documents (PSD), and static images. With a single click, imgcook intelligently generates maintainable frontend code, including view code, field binding code, component code, and part of the business logic code, from different types of visual design files. As one of the many imgcook services, intelligent field binding accurately identifies bindable data fields in visual design files in vertical domains such as marketing. This service dramatically improves module development efficiency and makes the transformation from visual design files to code more accurate.

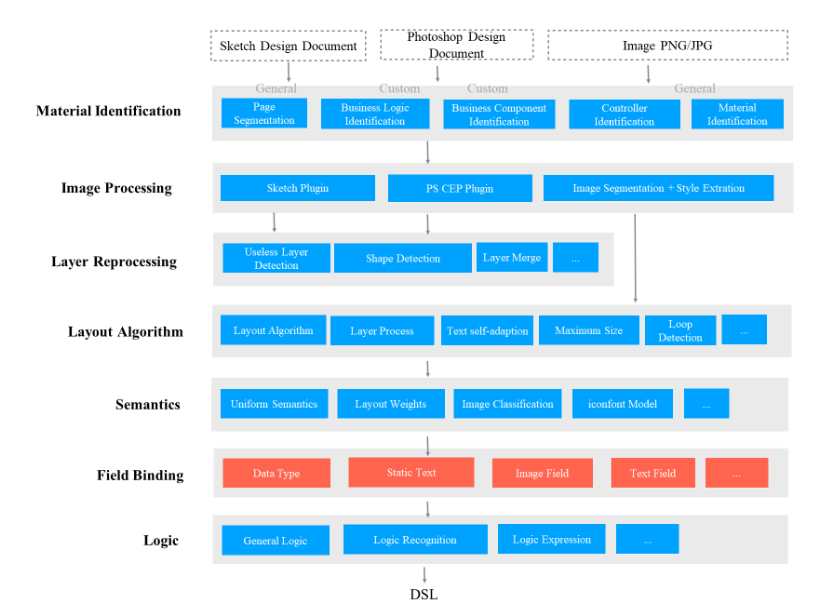

As shown in the figure, the intelligent field binding layer is divided into the following parts: data type rules, static text recognition, image field binding, and text field binding.

Hierarchical architecture of Design to Code (D2C) technology

The intelligent field binding layer relies on the semantic layer, which marks a node's type as "what" based on empirical data. Then, the intelligent field binding layer transforms this "what" node into a business domain field. To improve accuracy, high-confidence rules are used as binding conditions. The analysis and optimization suggestions for existing problems are as follows:

- The semantic layer and the field binding layer are associated too tightly.
- The semantic layer uses hard rules.
- The semantic layer does not make full use of machine learning algorithms.
- Business domain fields frequently change.
- The number of hard rules needs to be increased.

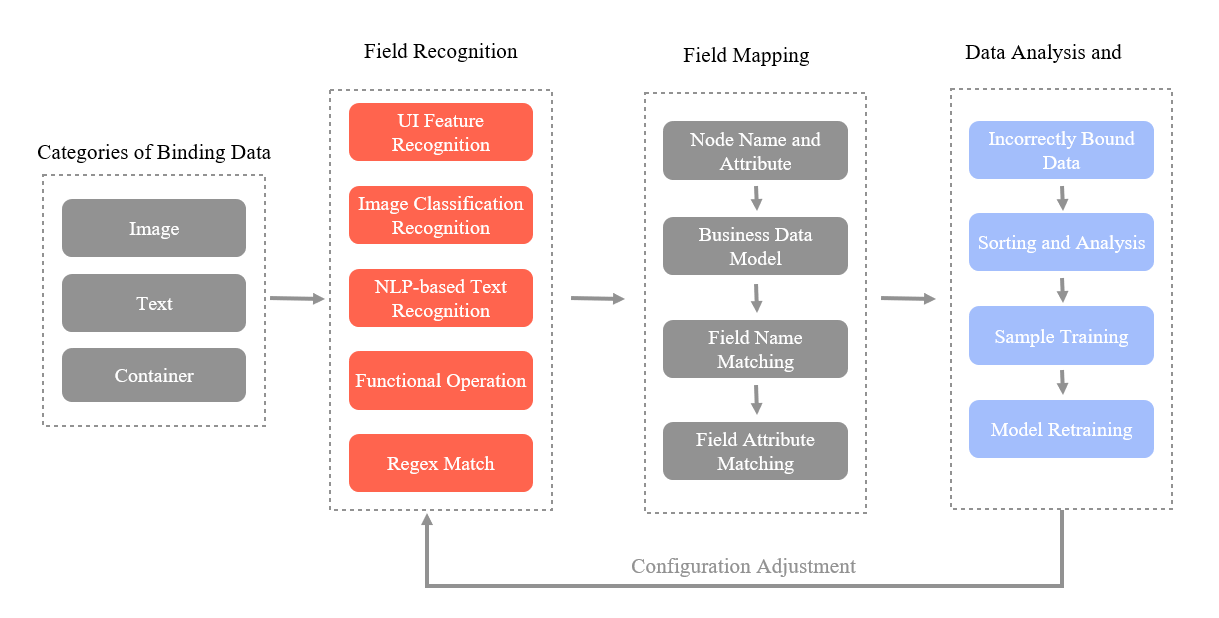

Field binding uses Natural Language Processing (NLP)-based text recognition and image classification to recognize the content in the virtual DOM (VDOM) to determine the fields mapped to the data model, implementing intelligent field binding. The following figure shows the core flowchart of field binding.

Core flowchart of field binding

The core of field binding is NLP-based text recognition and image classification models, which are described in detail below.

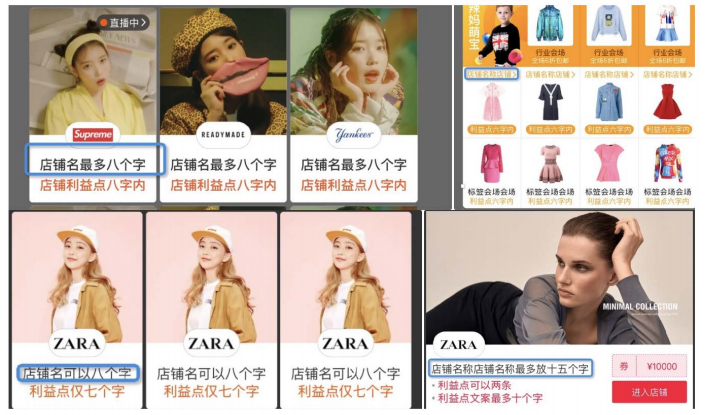

We will use the following examples to illustrate the relationship between common fields in the business domain and texts in design files.

Product title design file

Store name design file

Store description design file

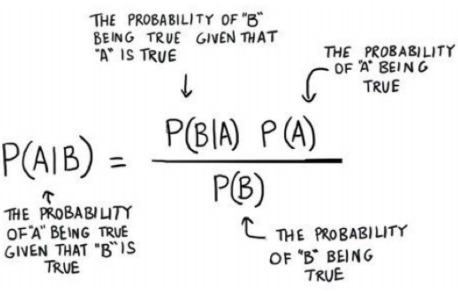

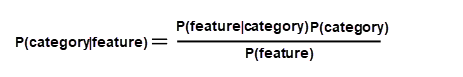

One of the problems we have with NLP-based recognition in field binding is that we do not have enough samples. In particular, we rely on tenants uploading their own samples to train models for their specific business, and tenants often do not have a large amount of data. In this case, we can use a Naive Bayes classifier, which rests on Bayes' theorem from classical probability theory. The posterior probability is derived from a prior probability and an adjustment factor, so the model has few parameters to estimate and stays robust on small data sets and noisy data. Bayes' theorem is expressed in the following formula:

$$P(A \mid B) = \frac{P(B \mid A)\,P(A)}{P(B)}$$

It is much easier to understand if we express the formula in the following form:

$$P(\text{category} \mid \text{feature}) = \frac{P(\text{feature} \mid \text{category})\,P(\text{category})}{P(\text{feature})}$$

We just need to calculate P(category|feature).
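A text sample usually yields several features rather than one. The "naive" part of the classifier is the assumption that these features are conditionally independent given the category. As a sketch, with $c$ for a category and $f_1, \dots, f_n$ for the features of one sample (symbols introduced here for illustration only):

$$P(c \mid f_1, \dots, f_n) = \frac{P(c)\prod_{i=1}^{n} P(f_i \mid c)}{P(f_1, \dots, f_n)} \propto P(c)\prod_{i=1}^{n} P(f_i \mid c)$$

The denominator is the same for every category, so the predicted category is simply the one that maximizes the numerator.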

Before classifying each sample, we need to extract its features, that is, segment the sample into words. On the AI machine learning platform, we use Alibaba Word Segmenter (AliWS) by default. AliWS is a lexical analysis system that is widely used in various product lines of Alibaba Group. AliWS provides the following features: ambiguity segmentation, multi-granularity segmentation, named entity recognition (NER), part-of-speech (POS) tagging, and semantic tagging. You can maintain your own dictionaries and handle or correct word segmentation errors. In our project, NER applies to simple entities, phone numbers, time, and dates.

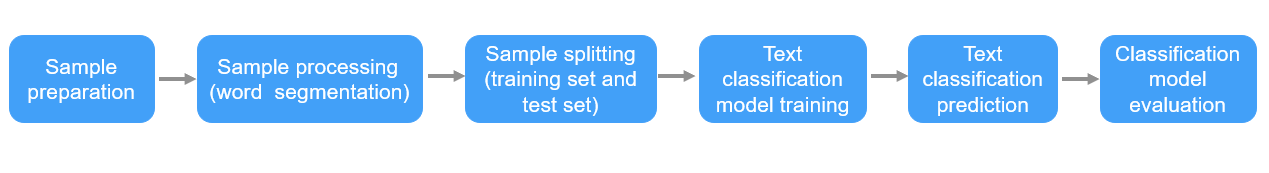

We use the machine learning platform for rapid model construction. The machine learning platform encapsulates the word segmentation algorithm of AliWS and the multi-class classification of Naive Bayes. The following figure shows the process of model construction.

Process of training a text NLP model

As the figure shows, the first step is to run an SQL script to pull training samples from the database and then perform word segmentation on the samples. After that, the system proportionally splits the samples into a training set and a test set. The system uses a Naive Bayes classifier to classify the samples in the training set and then uses the test set for prediction and evaluation based on the classification results. Finally, the results are uploaded to Object Storage Service (OSS) using the odpscmd command.
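The steps above (segment, split, train, evaluate) can be sketched in plain Python. This is only an illustration, not the platform's implementation: the segment function is a whitespace stand-in for AliWS, the samples and category names are hypothetical, and Laplace smoothing is an assumption made here to handle words that a category has never seen.

```python
import math
import random
from collections import Counter, defaultdict

# Hypothetical labeled samples; real samples would be pulled from the database.
SAMPLES = [
    ("wireless bluetooth headphones with noise cancelling", "product_title"),
    ("cotton summer dress for women", "product_title"),
    ("stainless steel water bottle 500ml", "product_title"),
    ("official flagship store", "store_name"),
    ("sports outlet flagship store", "store_name"),
    ("home living official store", "store_name"),
    ("fast shipping and friendly service", "store_description"),
    ("trusted seller with friendly service", "store_description"),
    ("quality products and fast shipping", "store_description"),
]


def segment(text):
    """Stand-in for AliWS: split on whitespace instead of real word segmentation."""
    return text.lower().split()


def split_samples(samples, test_ratio=0.3, seed=0):
    """Shuffle a copy of the samples and split it into training and test sets."""
    shuffled = samples[:]
    random.Random(seed).shuffle(shuffled)
    cut = int(len(shuffled) * (1 - test_ratio))
    return shuffled[:cut], shuffled[cut:]


def train_naive_bayes(samples, alpha=1.0):
    """Count categories and per-category words from (text, label) pairs."""
    label_counts = Counter()
    token_counts = defaultdict(Counter)
    vocabulary = set()
    for text, label in samples:
        tokens = segment(text)
        label_counts[label] += 1
        token_counts[label].update(tokens)
        vocabulary.update(tokens)
    return {
        "label_counts": label_counts,
        "token_counts": token_counts,
        "vocab_size": len(vocabulary),
        "total": sum(label_counts.values()),
        "alpha": alpha,
    }


def predict(model, text):
    """Return the category with the highest log posterior for the text."""
    best_label, best_score = None, -math.inf
    for label, count in model["label_counts"].items():
        # Log prior: log P(category).
        score = math.log(count / model["total"])
        counts = model["token_counts"][label]
        denominator = sum(counts.values()) + model["alpha"] * model["vocab_size"]
        for token in segment(text):
            # Log likelihood: log P(feature | category), Laplace-smoothed.
            score += math.log((counts[token] + model["alpha"]) / denominator)
        if score > best_score:
            best_label, best_score = label, score
    return best_label


def accuracy(model, samples):
    """Fraction of samples whose predicted category matches the label."""
    hits = sum(predict(model, text) == label for text, label in samples)
    return hits / len(samples)
```

A typical run would call split_samples on the labeled data, fit the model with train_naive_bayes on the training part, and report accuracy on the test part. Working in log space avoids numeric underflow when many small probabilities are multiplied.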

We find the following eight common types of images in various business scenarios: label, icon, avatar, store logo, scenario image, white background image, atmosphere image, and Gaussian blurred image.

In the previous model, we used rules to recognize images based on information such as image size and position. However, this approach can be inaccurate and inflexible. For example, icon sizes vary across business scenarios, and information such as image position is highly uncertain. Meanwhile, our analysis shows that the categories listed above are sufficient to distinguish the images. Therefore, we consider using a deep learning model for image classification.

For image classification, our first choice is a convolutional neural network (CNN), one of the most popular algorithms for image processing. The idea behind CNNs is based on the principles of human vision. Compared with a traditional fully connected neural network, analyzing images with shared convolution kernels greatly reduces the number of parameters to be trained. In addition, CNNs have a clear advantage in feature extraction over traditional machine learning models.

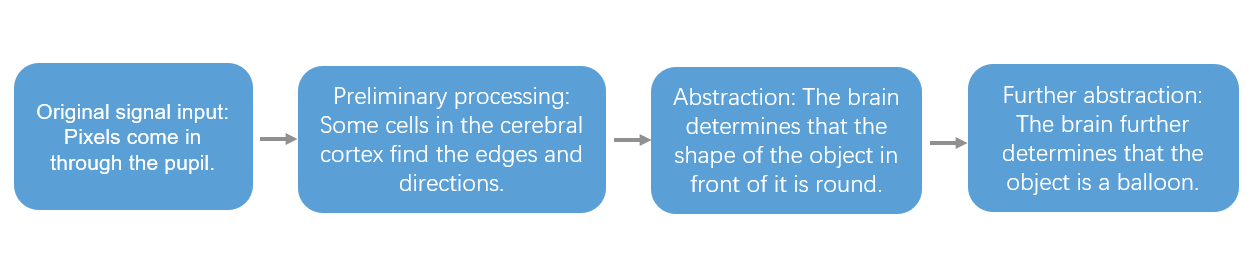

So, how is a CNN implemented? Before we look at the principles of CNNs, let's take a look at the principles of human vision.

The following figure shows human vision principles.

Human vision principles

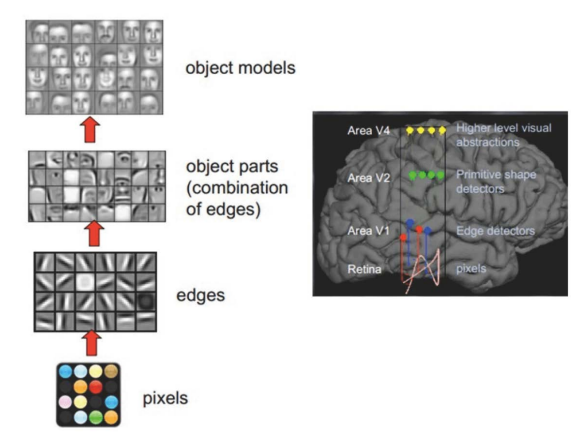

Example of facial recognition in the human brain (image sourced from the internet)

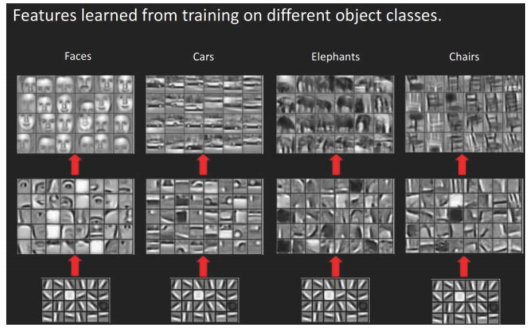

The human visual system also recognizes different objects hierarchically.

The human visual system recognizes different objects layer by layer (image sourced from the internet)

We can see that the features at the bottom layer are similar, mostly being edges. The higher it goes, the more features are extracted from objects, such as wheels, eyes, and torso. When it reaches the top layer, different advanced features eventually combine and form corresponding images, so human beings can accurately distinguish different objects.

This makes us wonder whether we can simulate this feature of the human brain by constructing a multi-layer neural network. Can we recognize primary image features at the lower layers, combine several primary features into a more advanced feature at a higher layer, and finally make a classification at the top layer through the combination of multiple layers? The answer is yes, and this idea inspired many deep learning algorithms, including CNNs.

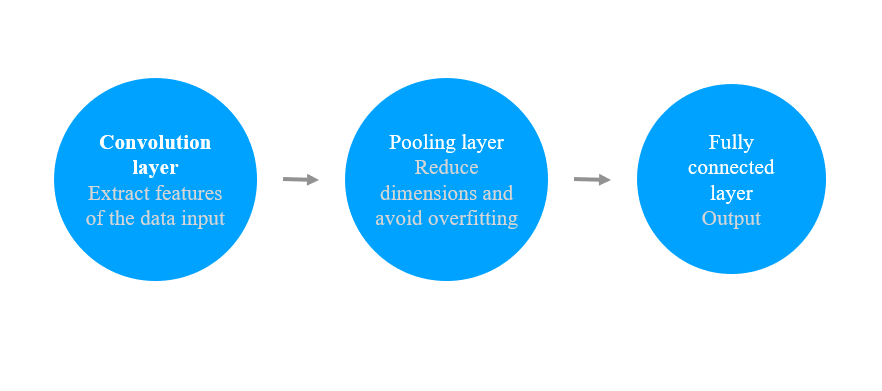

A CNN consists of an input layer, hidden layers, and an output layer. The hidden layers include convolution layers, pooling layers, and fully connected layers. The convolution layer extracts features from the input data. The pooling layer downsamples the feature maps, which reduces their dimensions and the number of downstream parameters and helps avoid overfitting. The fully connected layer is similar to a traditional neural network and produces the final results.
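To make the roles of the convolution and pooling layers concrete, here is a minimal pure-Python sketch. It is not taken from the project: the function names are made up for illustration, and, as in most deep learning frameworks, the "convolution" is computed as a cross-correlation with stride 1 and no padding.

```python
def conv2d(image, kernel):
    """Slide the kernel over the image (stride 1, no padding) and sum the products."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j]
                for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


def relu(feature_map):
    """Keep positive responses and zero out the rest."""
    return [[max(0, v) for v in row] for row in feature_map]


def max_pool2d(feature_map, size=2):
    """Non-overlapping max pooling; rows or columns that do not fill a window are dropped."""
    out_h = len(feature_map) // size
    out_w = len(feature_map[0]) // size
    return [
        [
            max(feature_map[r * size + i][c * size + j]
                for i in range(size) for j in range(size))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


# Hypothetical 5x5 image: dark on the left (0), bright on the right (1).
IMAGE = [[0, 0, 1, 1, 1] for _ in range(5)]
# A 2x2 kernel that responds to a dark-to-bright vertical edge.
EDGE_KERNEL = [[-1, 1], [-1, 1]]

features = relu(conv2d(IMAGE, EDGE_KERNEL))  # 4x4 map, non-zero only at the edge
pooled = max_pool2d(features)                # 2x2 map after downsampling
```

The same four kernel weights are reused at every position of the image, which is where the parameter savings over a fully connected layer come from. Pooling then keeps the strongest response in each window while shrinking the map.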

Basic principles of CNN

Since our images mostly come from the internal network and our computing resources are limited, we need a training method that works well with limited data and computing power. Therefore, we choose to train the model through transfer learning. Transfer learning is a technique that retrains a model that has already been trained on another task. Models such as VGG, ResNet, or MobileNet, which have been trained on datasets such as ImageNet at great computational cost, are already capable of extracting image features. Building on this earlier work, we only need to adjust the model and train it on our data, which greatly reduces the training cost.
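The core idea of transfer learning, keeping the pre-trained feature extractor fixed and training only a small new part on our own data, can be sketched without any framework. Everything below is hypothetical: the two-row BACKBONE_WEIGHTS matrix merely stands in for real pre-trained parameters, and the new part is a tiny logistic-regression head for two classes.

```python
import math

# Frozen "backbone": these weights stand in for pre-trained parameters
# and are never updated during training.
BACKBONE_WEIGHTS = [[1.0, -1.0], [-1.0, 1.0]]


def backbone(x):
    """Fixed feature extractor: one linear layer followed by ReLU."""
    return [max(0.0, sum(w * v for w, v in zip(row, x)))
            for row in BACKBONE_WEIGHTS]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def predict_proba(x, weights, bias):
    """Probability of class 1 from the trainable head on top of frozen features."""
    features = backbone(x)
    return sigmoid(sum(w * f for w, f in zip(weights, features)) + bias)


def train_head(inputs, labels, lr=0.5, steps=200):
    """Full-batch gradient descent that updates only the head's weights and bias."""
    features = [backbone(x) for x in inputs]  # computed once, backbone is frozen
    weights, bias = [0.0] * len(features[0]), 0.0
    for _ in range(steps):
        grad_w, grad_b = [0.0] * len(weights), 0.0
        for f, y in zip(features, labels):
            error = sigmoid(sum(w * v for w, v in zip(weights, f)) + bias) - y
            grad_w = [g + error * v for g, v in zip(grad_w, f)]
            grad_b += error
        weights = [w - lr * g / len(features) for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / len(features)
    return weights, bias
```

Because only the head is trained, the number of parameters to fit is small, which is what makes this approach practical with few samples and limited computing resources.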

In our project, we consider using ResNet for transfer learning. ResNet's fundamental motivation is to address the degradation problem: beyond a certain depth, adding more layers makes accuracy worse because deeper networks become harder to optimize. A residual network adds shortcut connections that skip one or more layers. During back-propagation, the shortcuts give the gradient a direct path to earlier layers, which mitigates vanishing gradients and eases the training of deep networks.
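The effect of a shortcut on back-propagation can be sketched for a single residual block in the scalar case. Here $x$ is the block input, $F$ the stacked layers inside the block, $y$ the block output, and $\mathcal{L}$ the loss (symbols introduced here for illustration only):

$$y = F(x) + x, \qquad \frac{\partial \mathcal{L}}{\partial x} = \frac{\partial \mathcal{L}}{\partial y}\left(\frac{\partial F}{\partial x} + 1\right)$$

The constant term $1$ comes from the shortcut. It passes the upstream gradient through unchanged even when $\partial F / \partial x$ is close to zero, so the signal that reaches earlier layers does not shrink only because more blocks were stacked.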

The machine learning platform provides comprehensive services for traditional machine learning and deep learning, from data processing and model training to service deployment and prediction. The machine learning platform supports the TensorFlow framework at the underlying layer as well as CPU/GPU hybrid scheduling and efficient resource reuse. We leverage the machine learning platform's computing power and GPU resources for training and deploy the inference model to EAS, the machine learning platform's online prediction service.

We create three features that will be used later in training. image/encoded is the byte stream of the image. label is the classification category. image/format is the image type, which the slim.tfexample_decoder.Image function uses when parsing TFRecords.

TF-Slim is a lightweight high-level API of TensorFlow for defining, training, and evaluating models. TF-Slim provides many well-known pre-trained CNN models. You can download the model parameters from GitHub and call the methods in tensorflow.contrib.slim.nets to load the model structure. The predict function defines the parts of the data flow graph that data passes through in the model during training and prediction. Note that the pre-trained model only provides the convolution layers. To solve our classification problem, we need to flatten the convolution output and add a fully connected layer and the softmax function for prediction.
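The classification head described here (flatten, fully connected layer, softmax) can be illustrated in plain Python. This is a conceptual sketch rather than TF-Slim code: the function names, feature maps, and weights are all hypothetical.

```python
import math


def flatten(feature_maps):
    """Flatten a list of 2D feature maps into one feature vector."""
    return [v for fmap in feature_maps for row in fmap for v in row]


def dense(vector, weights, biases):
    """Fully connected layer: one weight row and one bias per output class."""
    return [sum(w * x for w, x in zip(row, vector)) + b
            for row, b in zip(weights, biases)]


def softmax(logits):
    """Turn logits into probabilities; subtracting the max keeps exp() stable."""
    peak = max(logits)
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical output of the last convolution layer: two 2x2 feature maps.
FEATURE_MAPS = [
    [[1.0, 0.0], [0.0, 1.0]],
    [[0.5, 0.5], [0.5, 0.5]],
]
# Hypothetical head for three image categories (8 inputs per class).
WEIGHTS = [
    [1.0, 0.0, 0.0, 1.0, 0.0, 0.0, 0.0, 0.0],
    [0.0, 1.0, 1.0, 0.0, 0.0, 0.0, 0.0, 0.0],
    [0.0, 0.0, 0.0, 0.0, 0.5, 0.5, 0.5, 0.5],
]
BIASES = [0.0, 0.0, 0.0]

vector = flatten(FEATURE_MAPS)
probabilities = softmax(dense(vector, WEIGHTS, BIASES))
predicted_class = probabilities.index(max(probabilities))
```

In the transfer learning setting, the pre-trained convolution layers would produce the feature maps, and only a head like this one needs to be sized to the number of image categories.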

We use the assign_from_checkpoint_fn function provided by TF-Slim to load the MobileNet pre-trained model parameters that we downloaded. Then, we use the previously defined data flow graph to train the model, generating checkpoints and related logs during training.

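When training the model, we periodically save checkpoints by using tf.train.Saver of TensorFlow. TensorFlow generates the following four types of files:

- checkpoint: a text file that records the paths of the most recent checkpoints.
- .meta: the serialized graph structure (MetaGraphDef).
- .index: an index that maps each tensor name to its location in the data files.
- .data-*: the saved variable values, possibly split across several shards.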

In fact, we can see that a lot of information is generated when the model is saved. Much of the information is not needed for prediction. Therefore, we need to optimize the exported records to achieve high-performance predictions.

First, we freeze the saved model. Freezing a TensorFlow model saves the computational graph and the model weights into a single file. It retains only the parts of the computational graph that are required for prediction and removes the training-related parts. In this step, all the variables in the computational graph are converted to constants.

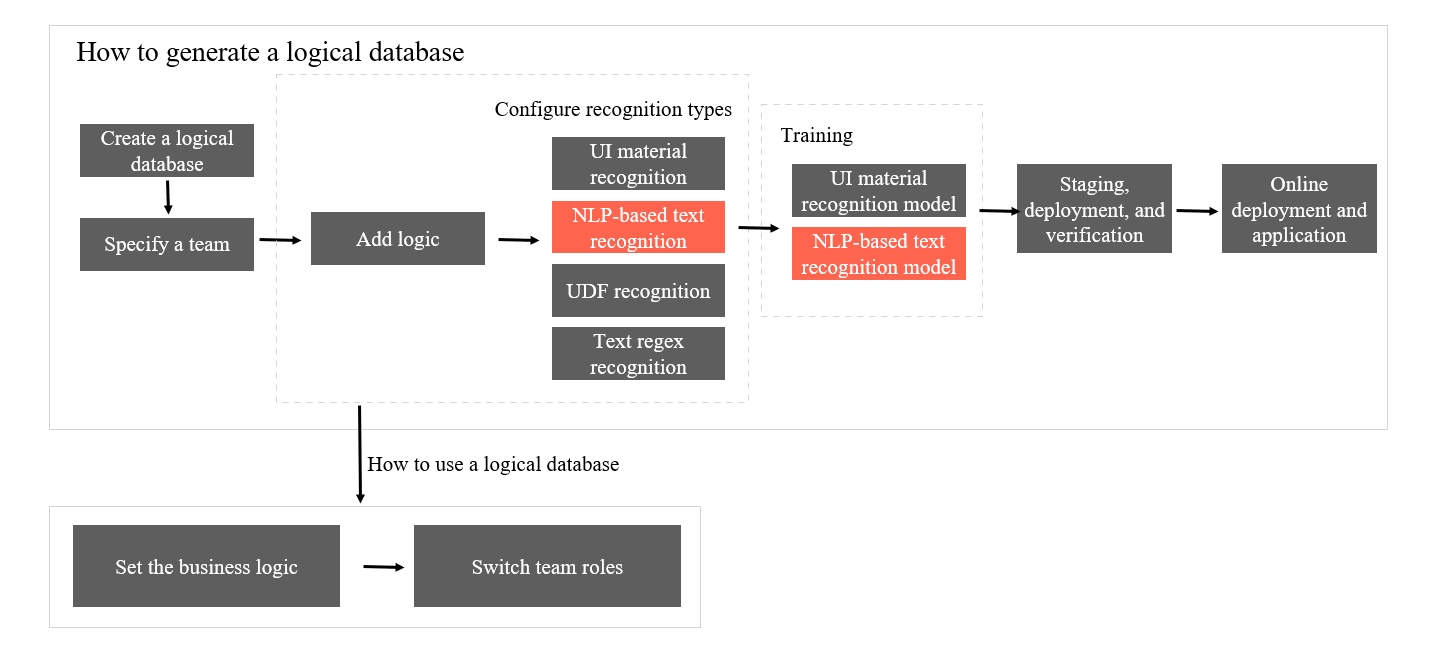

In different business scenarios, we may use different data models, and the intelligently identifiable and bindable fields are also different. Considering this, we have developed an online model training service that allows us to add categories and configure samples online. We use the service through configurations.

Product flowchart

Automatic field binding effect:

Field binding effect

