This post is part of the Building End-to-End Customer Segmentation Solution on Alibaba Cloud series. The series is written by Bima Putra Pratama, Data Scientist at DANA Indonesia.

To see the first post and the other posts in this series, click here.

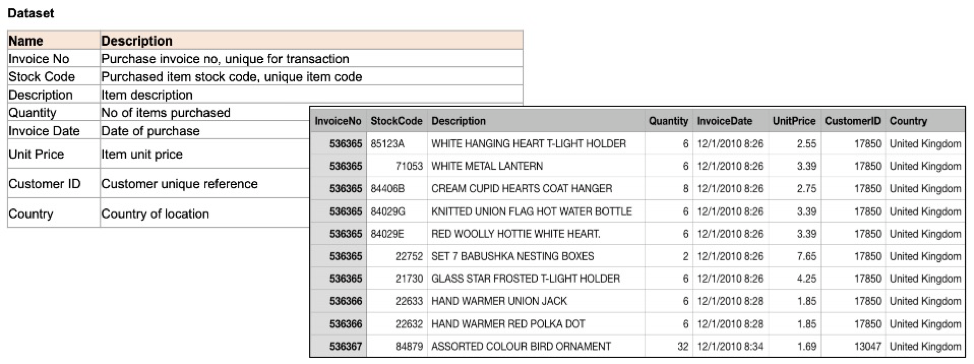

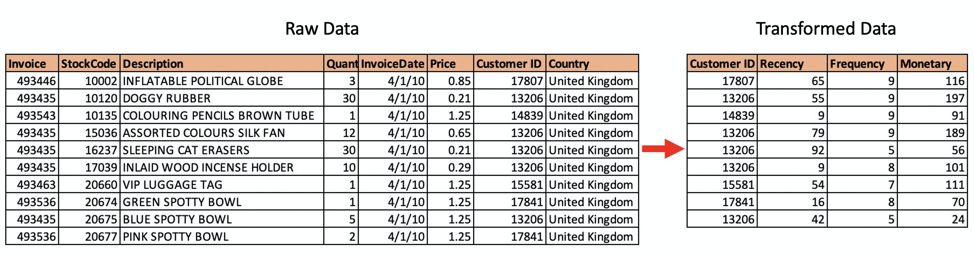

First, let’s get to know our data. We use online retail data from the UCI Machine Learning Repository (you can download this data from this link). This dataset consists of transaction data from 2010 and 2011.

Step 1: Data Collection

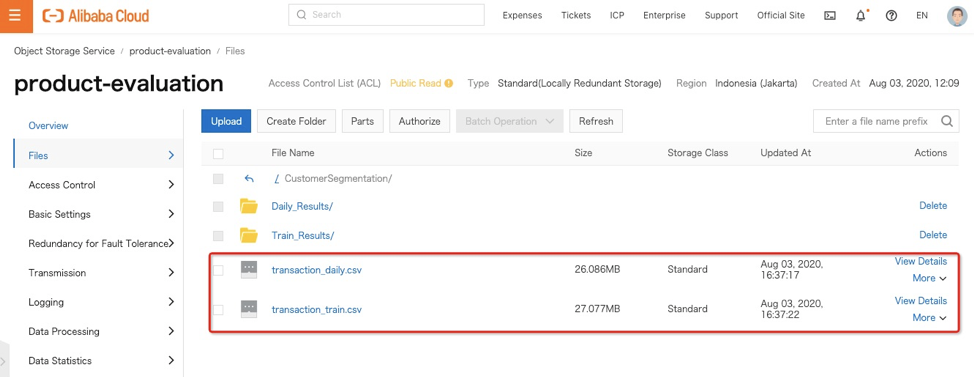

We use the 2010 data for model training and save it as transaction_train.csv. The 2011 data serves as an example of the daily data that needs to be processed, and we save it as transaction_daily.csv. Both files are then stored in Alibaba Cloud Object Storage Service (OSS).
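The year-based split described above can be sketched with Python's standard `csv` module. This is a minimal illustration, not the author's script: it assumes the raw data has been exported to a single CSV file with an `InvoiceDate` column formatted like `12/1/2010 8:26`. Both the column name and the `DATE_FORMAT` value are assumptions to adjust for your own export.

```python
import csv
from datetime import datetime
from typing import Dict, Iterable, List

# Assumptions: the raw export has an "InvoiceDate" column in this format.
DATE_COLUMN = "InvoiceDate"
DATE_FORMAT = "%m/%d/%Y %H:%M"

# Output file for each year, following the file names used in this post.
OUTPUT_FILES = {2010: "transaction_train.csv", 2011: "transaction_daily.csv"}


def split_by_year(rows: Iterable[Dict[str, str]]) -> Dict[int, List[Dict[str, str]]]:
    """Group raw transaction rows by the year of their invoice date."""
    groups: Dict[int, List[Dict[str, str]]] = {}
    for row in rows:
        year = datetime.strptime(row[DATE_COLUMN], DATE_FORMAT).year
        groups.setdefault(year, []).append(row)
    return groups


def write_split_files(source_path: str) -> Dict[str, int]:
    """Write the 2010 rows to transaction_train.csv and the 2011 rows to
    transaction_daily.csv in the current directory.

    Returns the number of data rows written to each file.
    """
    with open(source_path, newline="", encoding="utf-8") as src:
        reader = csv.DictReader(src)
        fieldnames = reader.fieldnames or []
        groups = split_by_year(reader)

    counts: Dict[str, int] = {}
    for year, filename in OUTPUT_FILES.items():
        rows = groups.get(year, [])
        with open(filename, "w", newline="", encoding="utf-8") as dst:
            writer = csv.DictWriter(dst, fieldnames=fieldnames)
            writer.writeheader()
            writer.writerows(rows)
        counts[filename] = len(rows)
    return counts
```

The two resulting files are what would then be uploaded to an OSS bucket, for example through the OSS console.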

Step 2: Data Ingestion

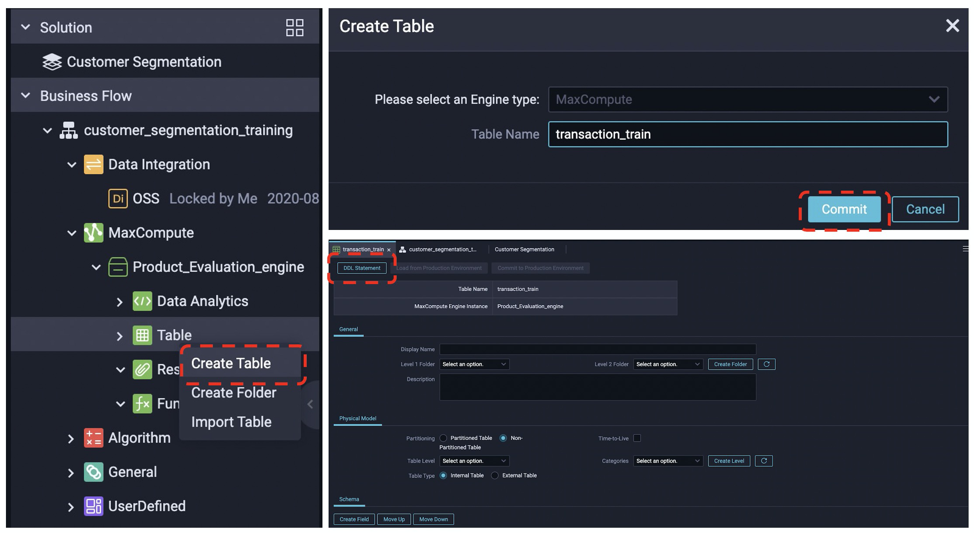

We use MaxCompute and DataWorks to orchestrate the process. The goal of this step is to ingest the data from OSS into MaxCompute using DataWorks.

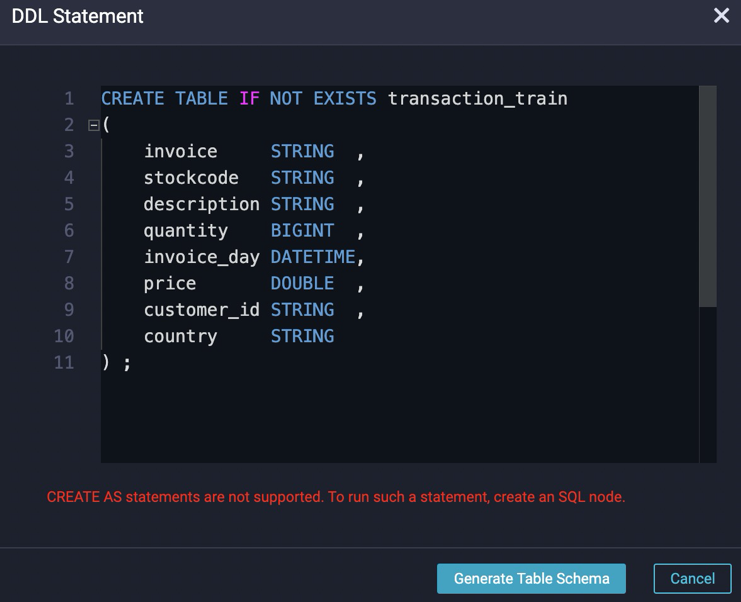

First, we need to create a table in MaxCompute to store the data. The table must match the schema of our dataset. We can create it by right-clicking the business flow and choosing Create Table, then defining the schema with a DDL statement and following the remaining steps.
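To show what such a DDL statement might look like, the sketch below renders a MaxCompute `CREATE TABLE` statement from a list of column names and types. The column list is hypothetical: it assumes the column names of the UCI Online Retail dataset and uses the MaxCompute types `STRING`, `BIGINT`, and `DOUBLE`. `invoicedate` and `customerid` are kept as `STRING` so that raw CSV values load without conversion errors.

```python
from typing import List, Tuple

# Hypothetical schema, assuming the UCI Online Retail column names.
# Dates and customer IDs stay STRING so raw CSV values load as-is.
TRANSACTION_COLUMNS: List[Tuple[str, str]] = [
    ("invoiceno", "STRING"),
    ("stockcode", "STRING"),
    ("description", "STRING"),
    ("quantity", "BIGINT"),
    ("invoicedate", "STRING"),
    ("unitprice", "DOUBLE"),
    ("customerid", "STRING"),
    ("country", "STRING"),
]


def build_create_table(table: str, columns: List[Tuple[str, str]]) -> str:
    """Render a CREATE TABLE statement from (column name, column type) pairs."""
    if not columns:
        raise ValueError("a table needs at least one column")
    body = ",\n".join(f"    {name} {col_type}" for name, col_type in columns)
    return f"CREATE TABLE IF NOT EXISTS {table} (\n{body}\n);"


print(build_create_table("transaction_train", TRANSACTION_COLUMNS))
```

The printed statement is the kind of DDL that would be entered when creating the table; keeping the schema in one list also makes it easy to reuse for the daily table.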

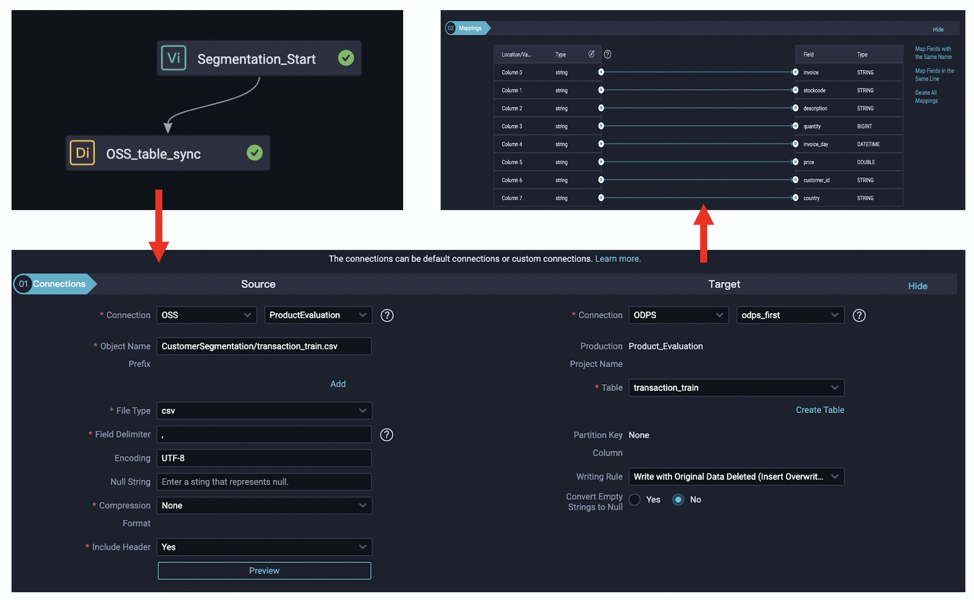

With the table ready, we create a Data Integration node to sync our data from OSS to MaxCompute. In this node, we set up the source and the target, then map each source column to a target column.
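The column mapping is configured in the DataWorks UI, but the idea behind it can be sketched in a few lines: pair each source CSV column with the target table column of the same name and report anything left unmatched. This is an illustrative helper, not a DataWorks API; the function name and the case-insensitive matching rule are assumptions.

```python
from typing import Dict, List, Tuple


def map_columns_by_name(
    source: List[str], target: List[str]
) -> Tuple[Dict[str, str], List[str], List[str]]:
    """Pair source columns with target columns by case-insensitive name.

    Returns (mapping, unmapped source columns, unmapped target columns).
    """
    target_by_key = {name.lower(): name for name in target}
    mapping: Dict[str, str] = {}
    unmapped_source: List[str] = []
    for name in source:
        match = target_by_key.get(name.strip().lower())
        if match is None:
            unmapped_source.append(name)
        else:
            mapping[name] = match
    mapped_targets = set(mapping.values())
    unmapped_target = [name for name in target if name not in mapped_targets]
    return mapping, unmapped_source, unmapped_target
```

An empty list of unmapped columns on both sides is a quick sign that the CSV header and the table schema agree before the sync is run.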

Finally, we run the node to ingest the data from OSS into MaxCompute, so the data is in place for the next step.

Step 3: Data Cleaning and Transformation

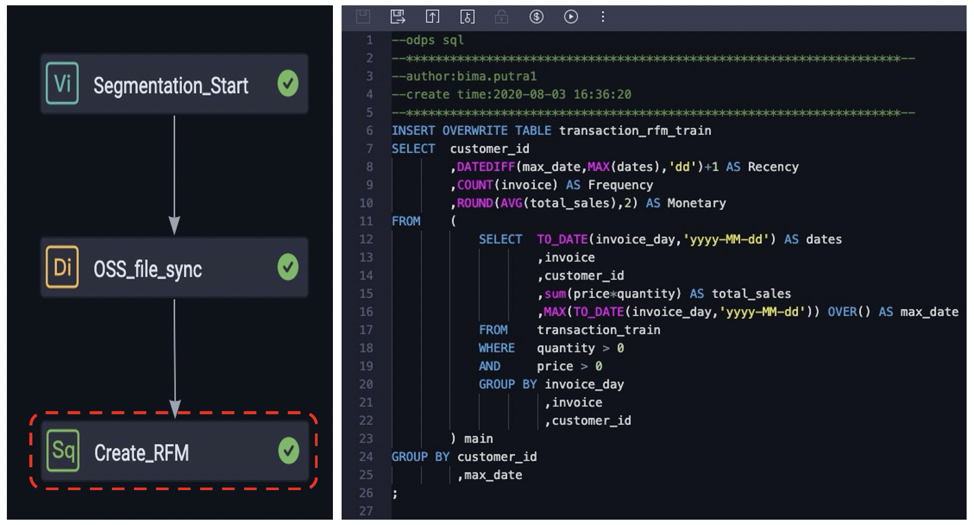

Our data needs to be cleaned and transformed before we use it for model training. First, we remove invalid values from the data. Then we transform it by calculating the Recency, Frequency, and Monetary (RFM) values of each customer.
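The post does not list the exact cleaning rules, so the sketch below uses three common ones for this dataset as assumptions: drop rows without a customer ID, drop cancelled invoices (in the UCI Online Retail data, invoice numbers starting with "C" mark cancellations), and drop rows whose quantity or unit price is not a positive number. Rows are plain dictionaries keyed by the assumed column names.

```python
from typing import Dict, Iterable, List

Row = Dict[str, str]


def is_valid(row: Row) -> bool:
    """Return True if a raw transaction row passes the assumed cleaning rules."""
    # Rule 1: the row must belong to an identified customer.
    if not row.get("CustomerID", "").strip():
        return False
    # Rule 2: invoice numbers starting with "C" mark cancellations.
    if row.get("InvoiceNo", "").strip().upper().startswith("C"):
        return False
    # Rule 3: quantity and unit price must parse as positive numbers.
    try:
        quantity = float(row["Quantity"])
        unit_price = float(row["UnitPrice"])
    except (KeyError, ValueError):
        return False
    return quantity > 0 and unit_price > 0


def clean(rows: Iterable[Row]) -> List[Row]:
    """Keep only the rows that pass every cleaning rule."""
    return [row for row in rows if is_valid(row)]
```

In the pipeline itself, the same rules would be expressed as `WHERE` conditions in the SQL node's query.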

In DataWorks, we use a SQL node for this task. We start by creating a new table to store the result, then create the node and write a DML query that performs the cleaning and transformation.

The result of this task is a table containing the RFM values for each customer.
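The RFM calculation itself can be sketched as a group-by over the cleaned rows. The definitions here are common conventions and are assumptions, since the post does not spell them out: Recency is the number of days between the customer's last purchase and a reference date (by default, the day after the latest invoice), Frequency is the number of distinct invoices, and Monetary is the total of `Quantity * UnitPrice`. The `InvoiceDate` format is also assumed.

```python
from collections import defaultdict
from datetime import date, datetime, timedelta
from typing import Dict, Iterable, Optional, Set, Tuple

Row = Dict[str, str]
DATE_FORMAT = "%m/%d/%Y %H:%M"  # assumed InvoiceDate format


def compute_rfm(
    rows: Iterable[Row], reference_date: Optional[date] = None
) -> Dict[str, Tuple[int, int, float]]:
    """Return {customer_id: (recency_days, frequency, monetary)} from cleaned rows.

    If reference_date is None, the day after the latest invoice date is used.
    """
    last_purchase: Dict[str, date] = {}
    invoices: Dict[str, Set[str]] = defaultdict(set)
    monetary: Dict[str, float] = defaultdict(float)

    for row in rows:
        customer = row["CustomerID"]
        day = datetime.strptime(row["InvoiceDate"], DATE_FORMAT).date()
        # Track the most recent purchase day per customer.
        if customer not in last_purchase or day > last_purchase[customer]:
            last_purchase[customer] = day
        # Several rows can share one invoice; count each invoice once.
        invoices[customer].add(row["InvoiceNo"])
        monetary[customer] += float(row["Quantity"]) * float(row["UnitPrice"])

    if not last_purchase:
        return {}
    if reference_date is None:
        reference_date = max(last_purchase.values()) + timedelta(days=1)

    return {
        customer: (
            (reference_date - last_purchase[customer]).days,
            len(invoices[customer]),
            round(monetary[customer], 2),
        )
        for customer in last_purchase
    }
```

For example, a customer with two invoices whose last purchase was five days before the reference date gets a Recency of 5 and a Frequency of 2.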

We use this data preparation step in both the Model Training and Model Serving processes. For model training, we run it only once to produce the training data. In the Model Serving pipeline, however, the same preparation runs as a daily batch job.
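For the daily batch, each run needs to know which day's transactions to process. The sketch below assumes that a run processes the previous day's data, similar to the business-date convention often used in DataWorks scheduling, and formats that day as a `yyyymmdd` string, for example for a hypothetical `ds` partition. The function names and the `InvoiceDate` format are assumptions for illustration.

```python
from datetime import date, datetime, timedelta
from typing import Dict, Iterable, List

Row = Dict[str, str]
DATE_FORMAT = "%m/%d/%Y %H:%M"  # assumed InvoiceDate format


def business_date(run_date: date) -> date:
    """Return the day a daily run processes: the day before the run date."""
    return run_date - timedelta(days=1)


def partition_value(day: date) -> str:
    """Format a day as yyyymmdd, e.g. for a hypothetical ds partition."""
    return day.strftime("%Y%m%d")


def rows_for_day(rows: Iterable[Row], day: date) -> List[Row]:
    """Select the transactions whose InvoiceDate falls on the given day."""
    return [
        row
        for row in rows
        if datetime.strptime(row["InvoiceDate"], DATE_FORMAT).date() == day
    ]
```

Deriving the processed day from the run date, rather than from the current clock, keeps reruns of a past day reproducible.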

To continue to the next phase, Model Training, please click here.

