Xie Xuansong (Xingtong), a Senior Algorithm Expert at DAMO Academy

Today, AI can be found in all walks of life. Drawing on Alibaba's hands-on experience in visual intelligence technology, the Alibaba vision platform provides easy-to-use, inclusive vision API services for enterprises and developers working with visual intelligence. The platform helps enterprises quickly build comprehensive visual artificial intelligence (AI) capabilities and implement various visual intelligence functions. In this article, Xie Xuansong (Xingtong), a Senior Algorithm Expert at DAMO Academy, discusses and demonstrates technologies and applications related to visual production.

Generally speaking, visual technologies are divided into two categories. The first category is visual understanding, such as detection and segmentation. The second category is visual production, which can also be understood as "how to produce vision" or "how to produce new visual expressions" through one or a series of visual processes. As shown in the following figure, we need to pay attention to two points. The first is that visual expressions here refer to images and videos that can be perceived by people or machines instead of labels or features. The second is that new visual expressions that are different from inputs are produced. In the past, the process shown in the figure was mostly done by designers and art engineers using Photoshop and other tools. Now, we hope that this process can be completed with other technologies.

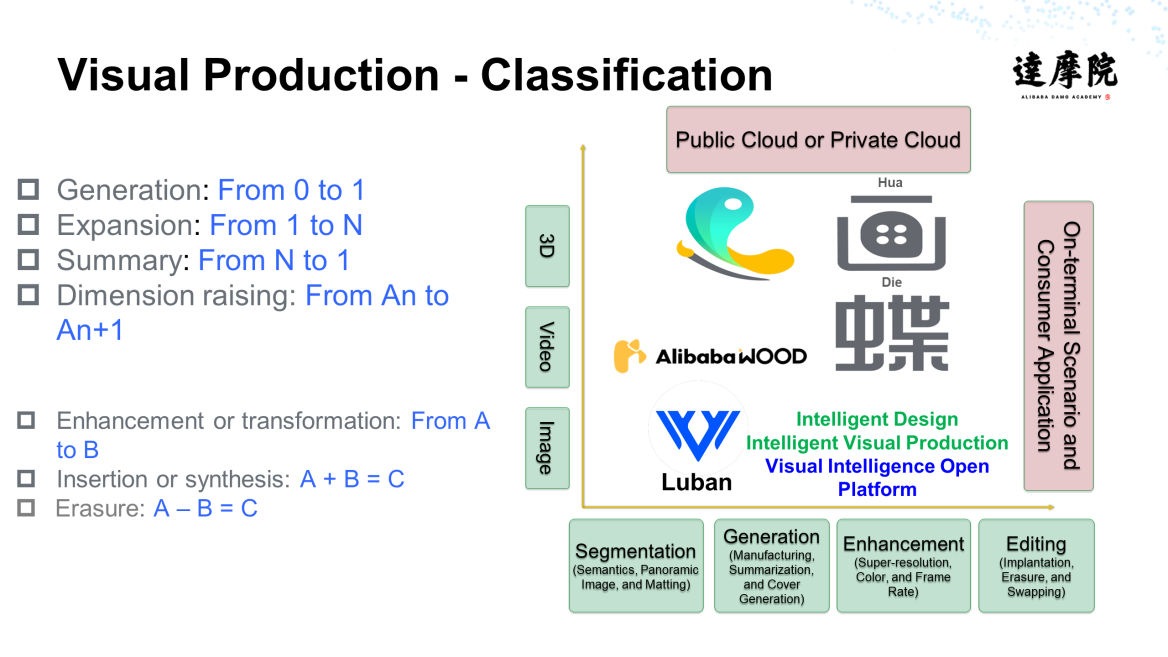

As shown in the following figure, visual production mainly includes generation, expansion, summarization, dimension raising, enhancement and transformation, insertion and synthesis, and erasure. DAMO Academy has invested lots of time and energy in this field and has developed some products, such as Luban, Huadie, and the visual intelligence open platform.

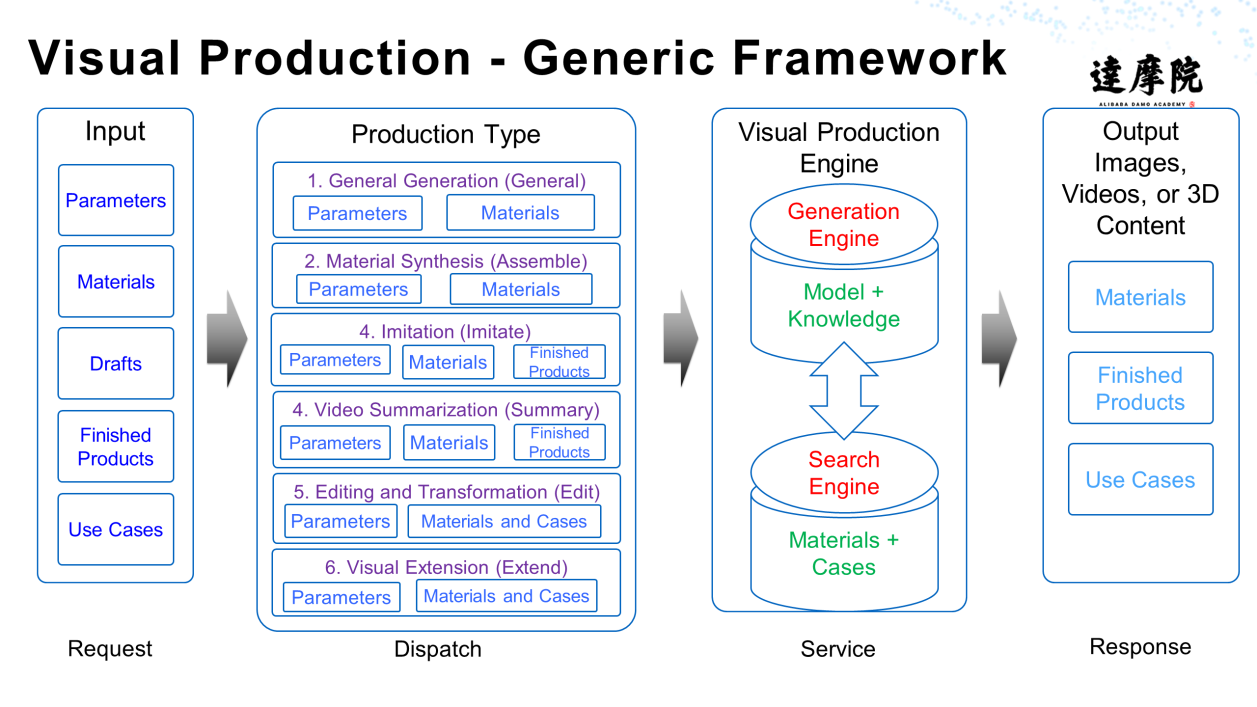

Visual production has its own generic framework, as shown in the following figure. Despite some minor differences in details, the logic of visual production implementations is similar and contains four parts: Request, Dispatch, Service, and Response.

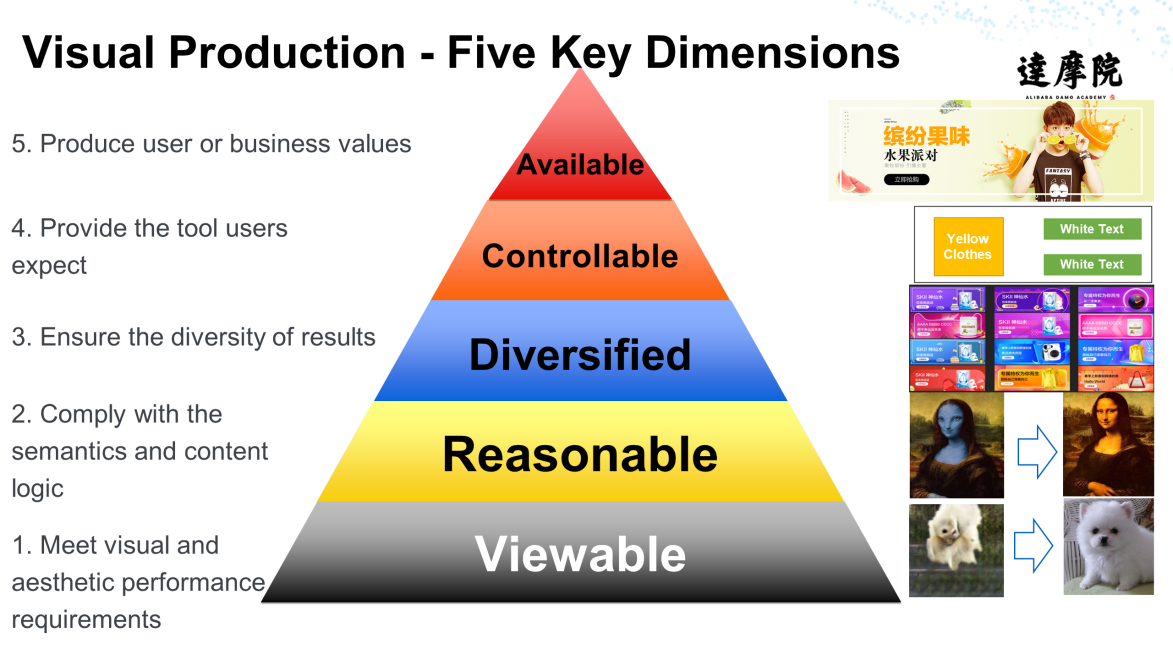
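The four parts of this framework can be pictured with a minimal Python sketch. Only the part names (Request, Dispatch, Service, Response) come from the article. The class fields, the `service` registry decorator, and the `resize` task are hypothetical names chosen for illustration and do not describe the platform's actual implementation.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict


@dataclass
class Request:
    task: str                                    # hypothetical task name, e.g. "resize"
    payload: dict = field(default_factory=dict)  # task-specific inputs


@dataclass
class Response:
    task: str
    ok: bool
    result: dict = field(default_factory=dict)


# Service registry: maps a task name to the function that does the work.
SERVICES: Dict[str, Callable[[dict], dict]] = {}


def service(name: str):
    """Register a function as the service for a task name."""
    def register(fn):
        SERVICES[name] = fn
        return fn
    return register


@service("resize")
def resize_service(payload: dict) -> dict:
    # Placeholder "production" step: only computes the output size.
    scale = payload["scale"]
    return {"width": int(payload["width"] * scale),
            "height": int(payload["height"] * scale)}


def dispatch(request: Request) -> Response:
    """Dispatch: route the request to a service and wrap its output."""
    handler = SERVICES.get(request.task)
    if handler is None:
        return Response(request.task, ok=False, result={"error": "unknown task"})
    return Response(request.task, ok=True, result=handler(request.payload))


if __name__ == "__main__":
    print(dispatch(Request("resize", {"width": 640, "height": 480, "scale": 0.5})))
```

Keeping dispatch separate from the services means a new capability can be added by registering one more function, without touching the request or response handling.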

As shown in the following figure, to obtain a good or usable result, visual production must meet requirements in at least five dimensions: viewability, rationality, diversity, controllability, and availability. Only then can visual production create real value in industry rather than remain theoretical.

To produce visual content, we must first gain an in-depth understanding of the input. This understanding includes the following processes:

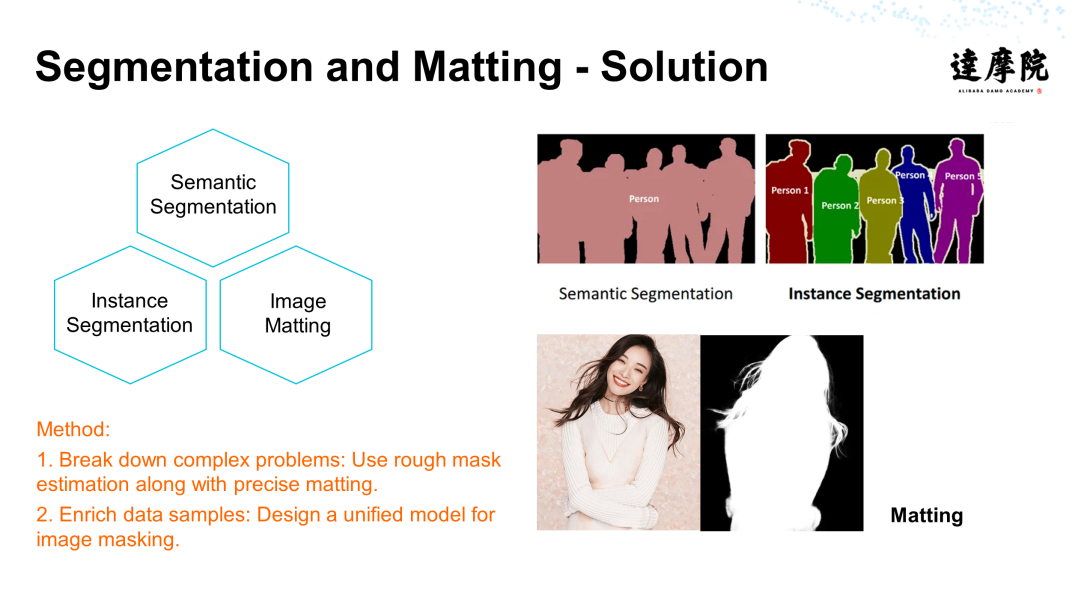

Among them, visual segmentation is an essential step before production. Visual segmentation is a hot topic in both industry and academia. It is also a difficult problem because images often involve complex backgrounds and various occlusion relationships, and segmentation requirements are often strict. For example, hair-level segmentation and the segmentation of hollowed-out regions are demanding. Other challenges include hair color at edges, transparent materials, and multiple targets or scales. These problems essentially result from high annotation costs and a serious shortage of data. Furthermore, even when annotations are available, the annotation cost multiplies if you want refined segmentation.

As shown in the following figure, the segmentation and matting process involves semantic segmentation, instance segmentation, and image matting.

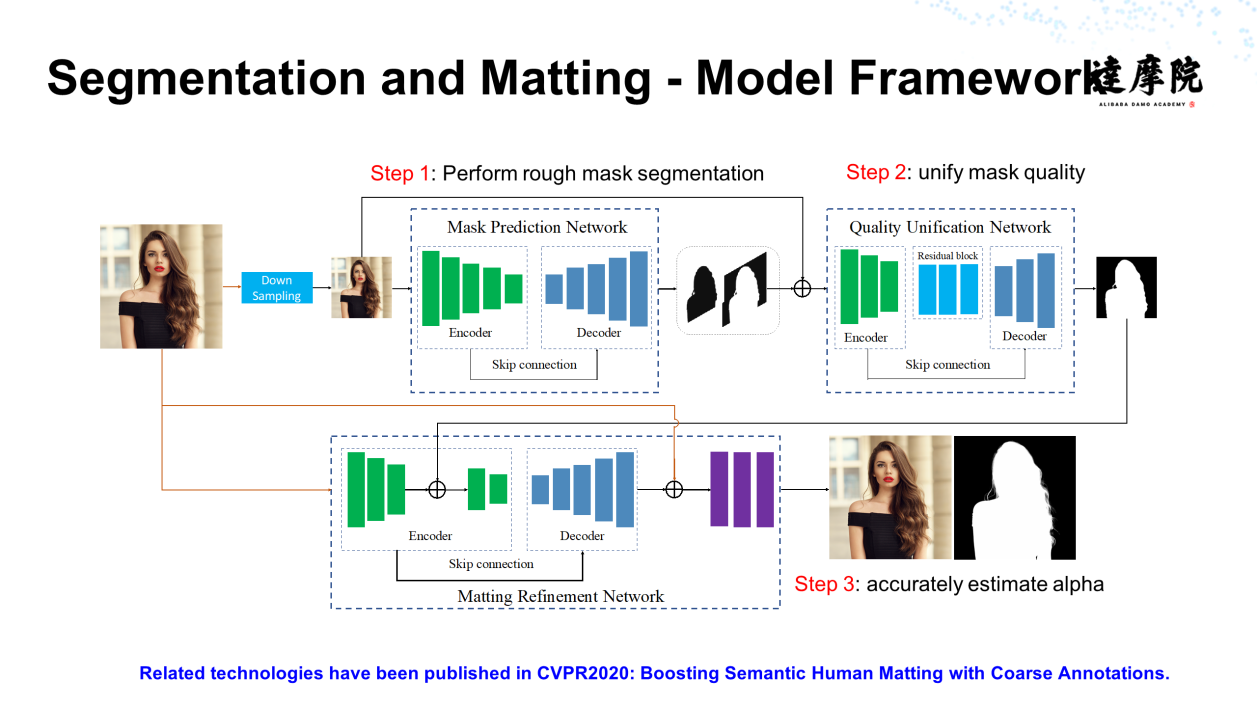
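The difference between segmentation and matting can be made concrete with the standard compositing equation, in which each pixel is modeled as `I = alpha * F + (1 - alpha) * B`. A segmentation mask corresponds to `alpha` values of exactly 0 or 1, while matting estimates fractional values, which is what makes hair-level and transparent regions possible. The sketch below only shows how an alpha matte is used once it has been estimated. It is a generic illustration in pure Python, not the method used in Alibaba's system, and the function names are hypothetical.

```python
from typing import List, Tuple

Pixel = Tuple[float, float, float]  # RGB channels in [0, 1]


def composite_pixel(alpha: float, fg: Pixel, bg: Pixel) -> Pixel:
    """Blend one pixel: I = alpha * F + (1 - alpha) * B."""
    return tuple(alpha * f + (1.0 - alpha) * b for f, b in zip(fg, bg))


def composite(alpha: List[List[float]],
              fg: List[List[Pixel]],
              bg: List[List[Pixel]]) -> List[List[Pixel]]:
    """Place a foreground over a new background using an alpha matte.

    All three inputs are row-major grids of the same size.
    """
    return [
        [composite_pixel(a, f, b) for a, f, b in zip(a_row, f_row, b_row)]
        for a_row, f_row, b_row in zip(alpha, fg, bg)
    ]
```

With a hard mask, a strand of hair that covers half a pixel must be assigned entirely to the foreground or the background. With a matte, it can receive `alpha = 0.5` and blend smoothly into any new background.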

In general, the segmentation and matting process is complex. Our idea is to first split the image and then enrich the data samples. The following figure shows the framework:

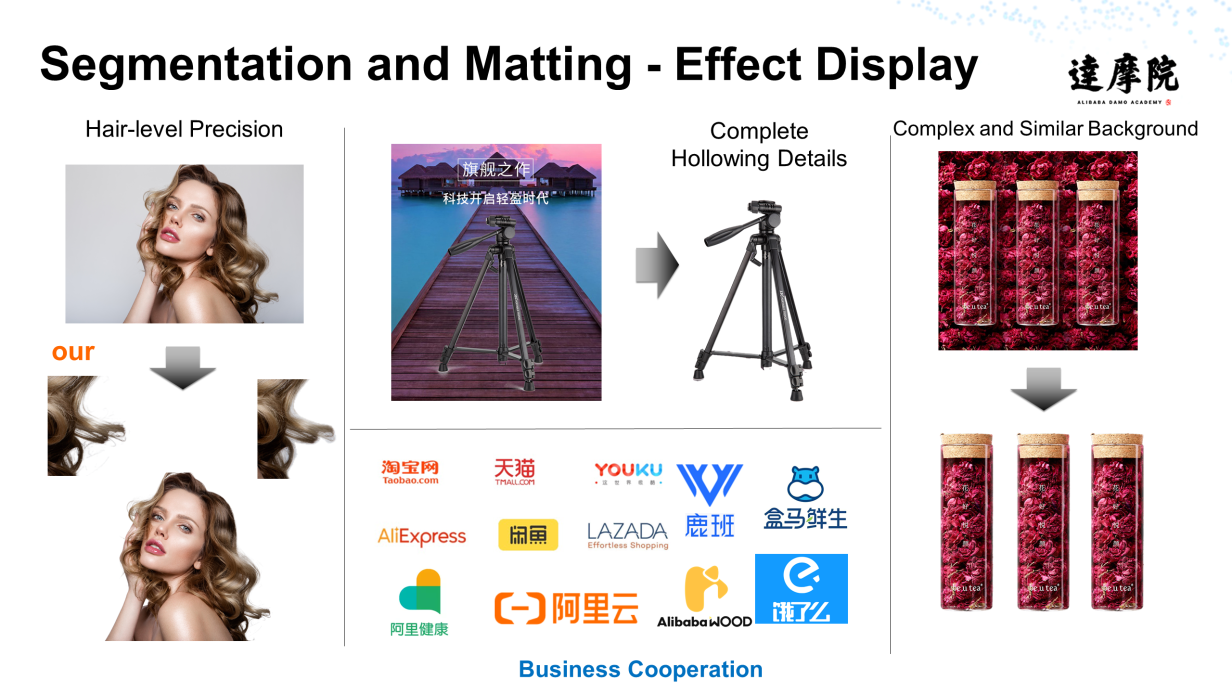

As shown in the following figure, by adopting the aforementioned idea, we have achieved good results in special scenarios, such as hair-level segmentation and detail hollowing. Currently, segmentation and matting technology is the most widely used visual AI technology within Alibaba.

Based on segmentation and matting technology, we can diversify what is segmented. For example, when we segment a human image, we can extract the person's head, hair, or face separately. In addition to still images, we can extract human figures from videos. Similarly, we can extend segmentation to animal, vehicle, commodity, and animation images at a finer granularity. Segmentation also extends to scenes: for example, when segmenting the sky, we can also segment the people and objects in the scene.

After segmentation is complete, we have a refined understanding of the visual input and can proceed to the next step.

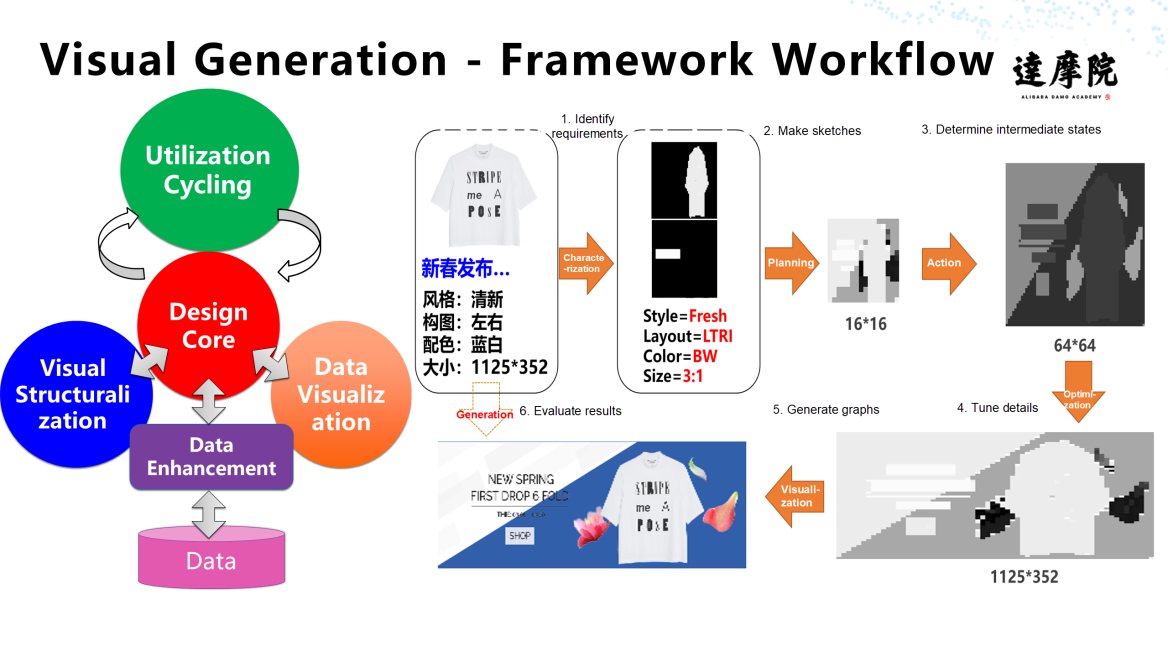

We first developed Luban for visual generation. Luban is a pioneering product in the visual generation field and provides large-scale online AI design services. Designed for graphic image recognition, Luban was initially used at large scale within Alibaba and is now available to external users as an Alibaba Cloud service. The following figure shows the visual generation framework of Luban, which consists of six steps: identifying requirements, making sketches, determining intermediate states, tuning details, generating graphs, and evaluating the result.

Luban was first used in e-commerce and is now widely used in various fields. It has two major capabilities:

As shown in the following figure, Luban can also be used as an intelligent scenario art engineer that can implement scenario design using AI. This greatly reduces labor costs.

In addition to the preceding industries, Luban is widely applied in all walks of life. It also provides different capabilities for various industries based on specific scenarios.

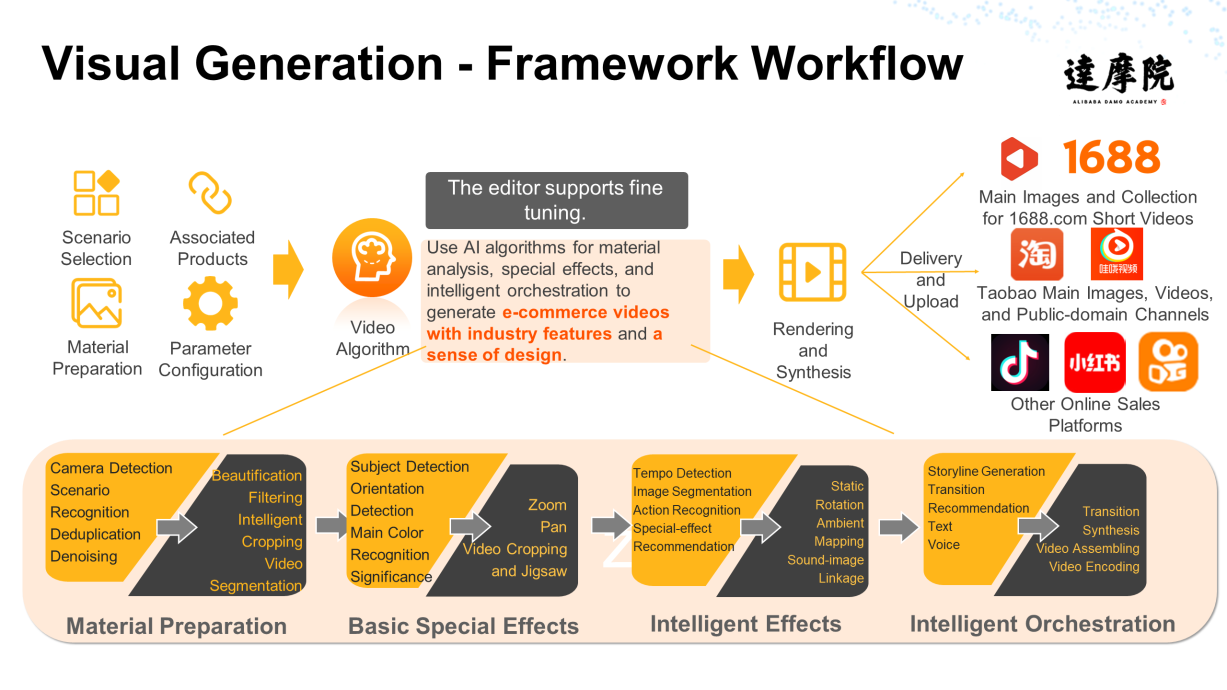

Luban is mainly used to generate graphics, but more scenarios require the ability to generate videos, such as popular short videos. To meet this requirement, Alibaba designed AlibabaWood, which has generated over 20 million short videos. AlibabaWood also provides other useful functions, such as script generation, intelligent copywriting, automatic editing, and intelligent music recommendation. The following figure shows the framework of AlibabaWood. It includes four steps, each involving many technologies: material preparation, basic effects, intelligent effects, and intelligent orchestration.

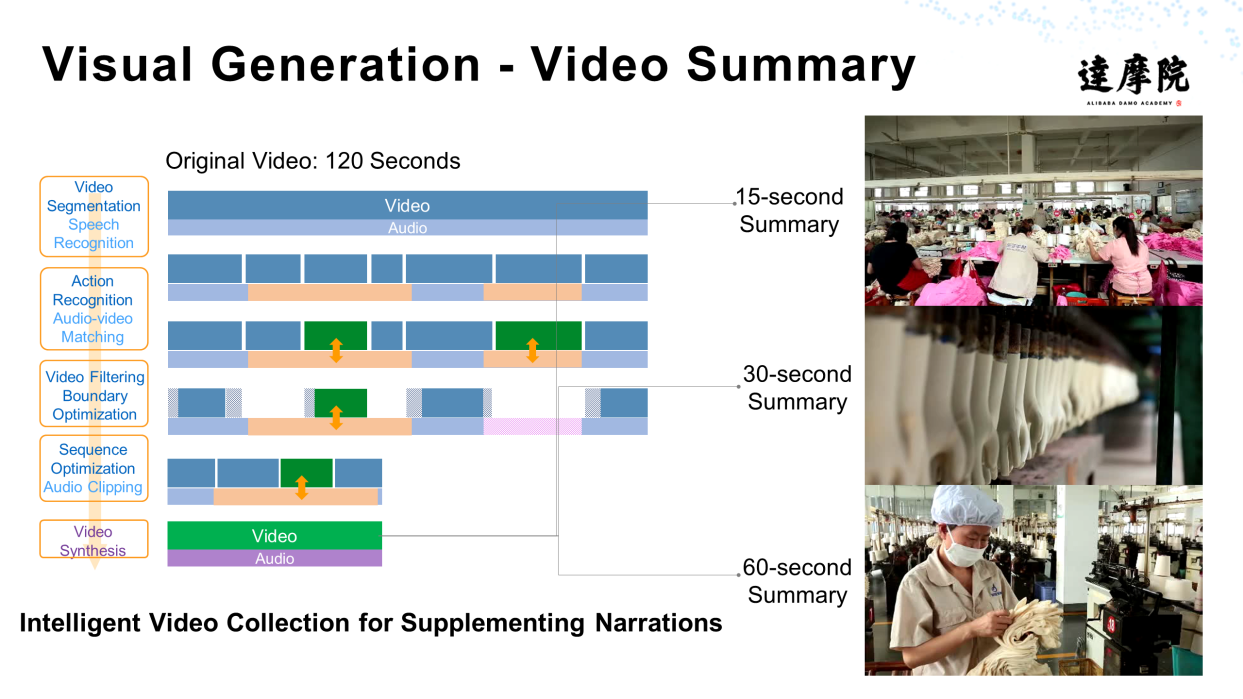

AlibabaWood is very versatile. For example, you can use it to create scenario-based intelligent videos or massive animated videos. As shown in the following figure, after multiple videos are generated, you can use AlibabaWood to create a video summary and an intelligent video collection that supplements narrations.

The generation of video covers is another important application of AlibabaWood. As shown in the following figure, AlibabaWood can automatically audit video quality, analyze content, and enhance images, and then output multi-frame still or dynamic images. Besides video generation, this is an important application of technologies such as image enhancement and content analysis.

When working with video, we sometimes wonder whether one video can be transformed into another. The short answer is yes. However, doing so requires video editing technology that can add, delete, query, and modify video content.

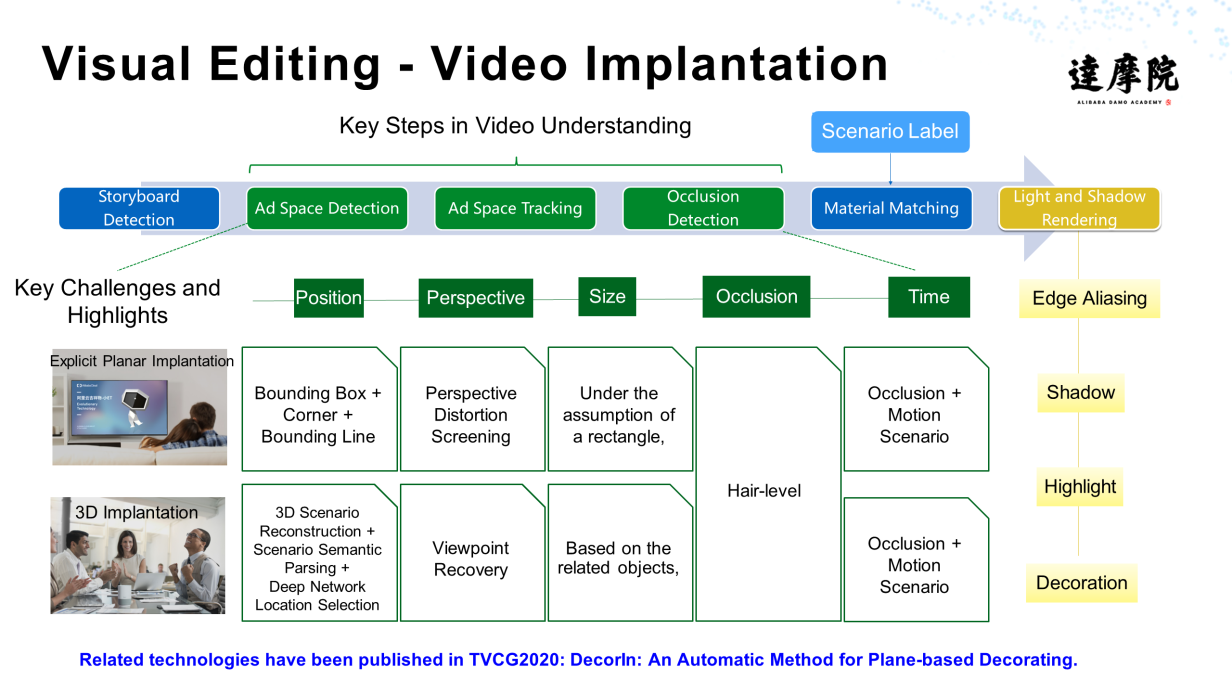

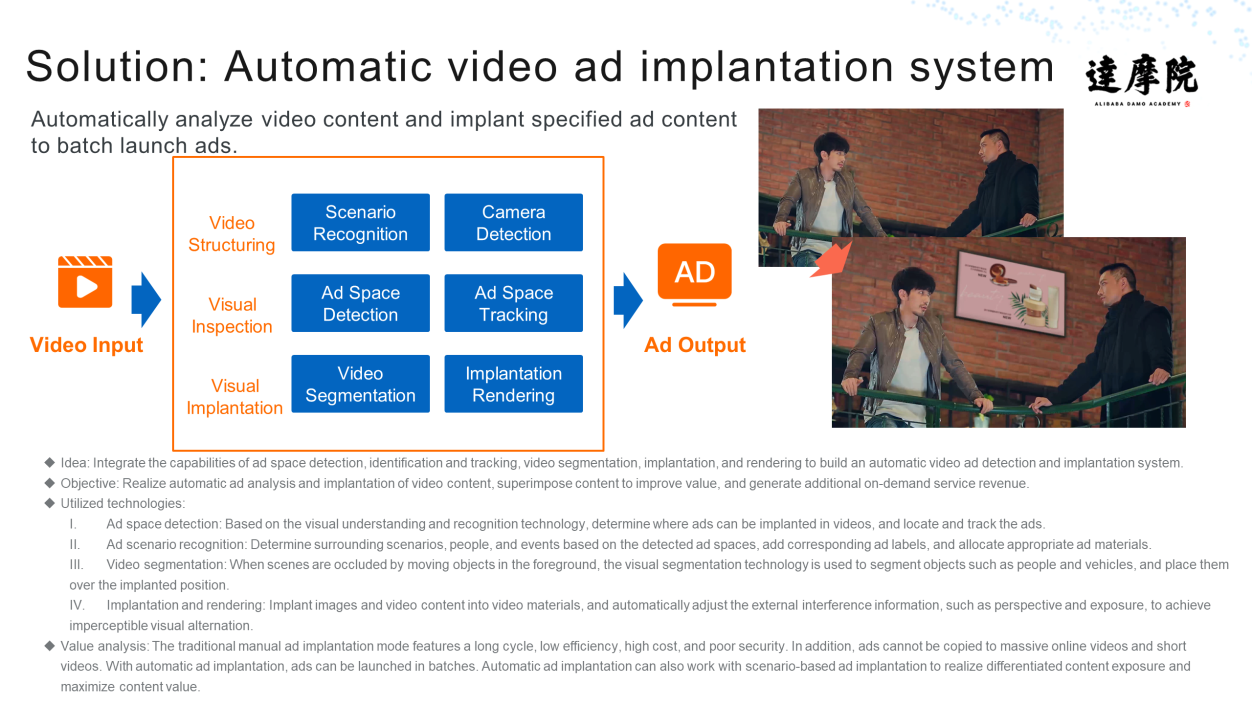

Video implantation adds content to videos, as shown in the figure below. It is now widely used in the advertising industry.

As shown in the following figure, video implantation is a complex technology that involves several steps, such as ad space detection and tracking. Tracking sometimes has to handle complex situations, such as occlusion and ad spaces that move off-screen. After the ad is implanted, you also need to consider how well the ad matches the video details, light and shadow rendering, and other issues.

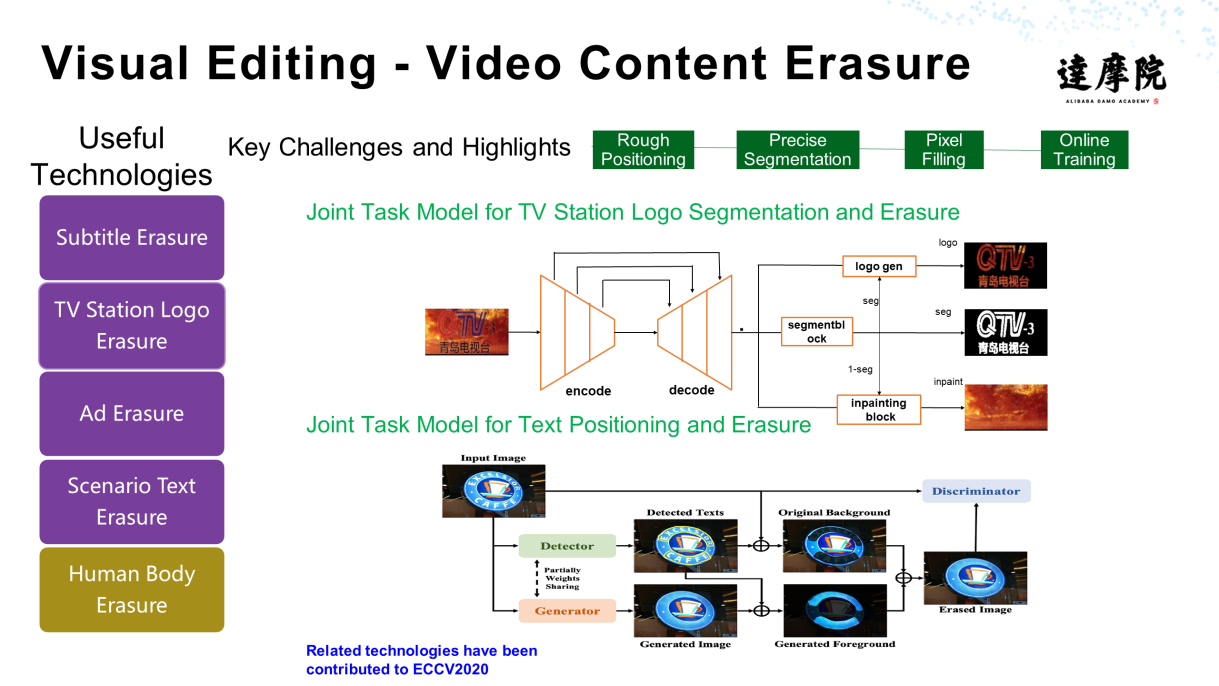

Video implantation adds something to a video. Sometimes, however, we need to erase something from a video instead, such as text, TV station logos, and ads. The main challenge is segmentation, because accurate content erasure depends on precise segmentation.

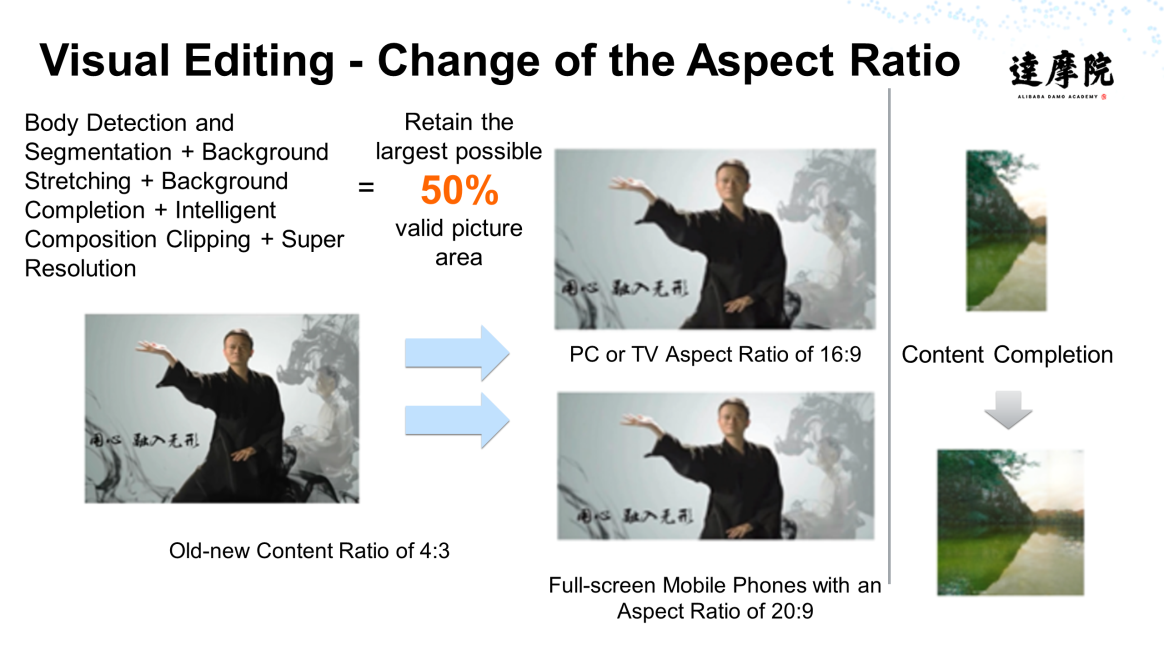

Sometimes, we need to modify a video. For example, a video was originally recorded in the 4:3 aspect ratio. However, when the video is played on an iPad, PC, or mobile phone, an aspect ratio mismatch occurs. In this case, the aspect ratio needs to be changed. After the change is made, to obtain a complete visual effect, we need to supplement the content, as shown in the following figure:

To save time and energy, we can also automatically change the image size, so posters designed for specific scenarios can be easily used in other scenarios.

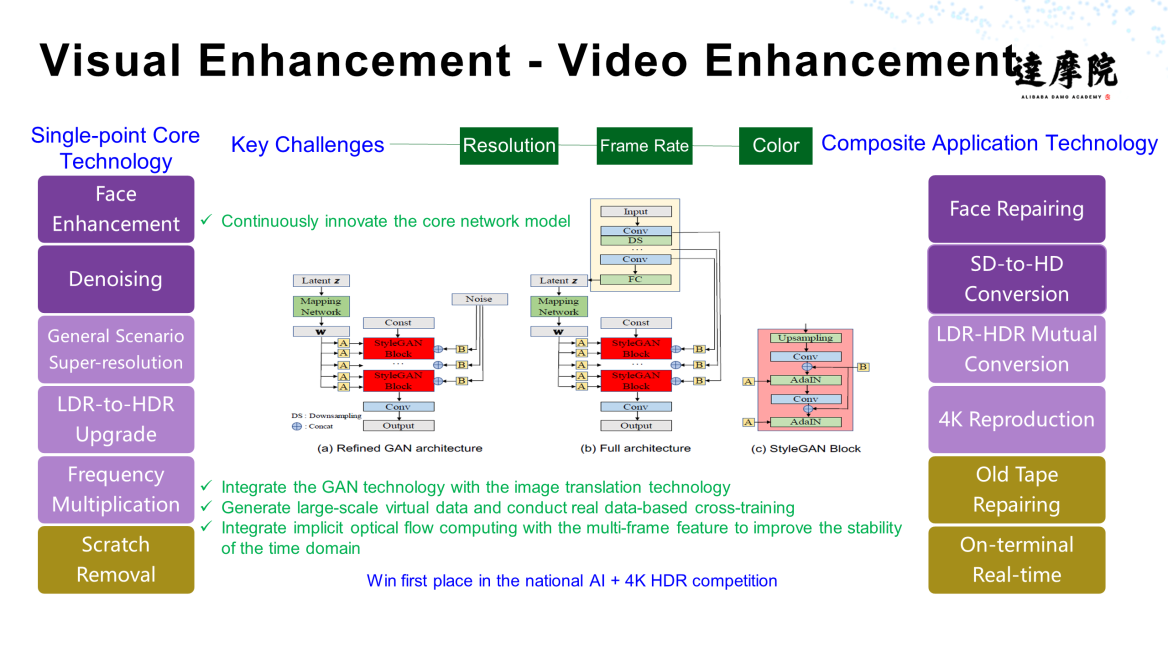
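Before any content can be supplemented, the size of the region to fill has to be known. The following sketch covers only that geometric step: given a frame and a target aspect ratio, it computes the smallest canvas with the target ratio that contains the original frame, plus the padding on each side. Generating the missing content itself is the hard part and is outside this sketch. The function name `canvas_for_aspect` and the choice to center the original frame are assumptions made for illustration.

```python
def canvas_for_aspect(width: int, height: int, target_w: int, target_h: int):
    """Return (new_width, new_height, pad_x, pad_y).

    The canvas is the smallest one with aspect ratio target_w:target_h that
    contains the original frame. pad_x and pad_y are the left and top offsets
    of the original frame when it is centered on the canvas.
    """
    # Compare width/height with target_w/target_h using integers only.
    if width * target_h < height * target_w:
        # Frame is narrower than the target: keep the height, extend the width.
        new_w = -(-height * target_w // target_h)  # ceiling division
        new_h = height
    else:
        # Frame is wider than (or equal to) the target: extend the height.
        new_w = width
        new_h = -(-width * target_h // target_w)   # ceiling division
    return new_w, new_h, (new_w - width) // 2, (new_h - height) // 2


if __name__ == "__main__":
    # A 4:3 frame shown on a 16:9 display needs a strip on each side.
    print(canvas_for_aspect(1440, 1080, 16, 9))
```

For a 1440x1080 frame and a 16:9 target, the canvas is 1920x1080, so a 240-pixel-wide strip on each side has to be filled with new content.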

Visual enhancement modifies video content to improve specific aspects of it, such as resolution, frame rate, and color.

Video enhancement involves many technologies, such as the single-point core technology and composite application technology, as shown in the following figure:

The face is one of the most important target objects. It is difficult yet important to enhance the details of a portrait. We can use the visual enhancement technology to repair and enhance the face and highlight the main information, as shown in the following figure.

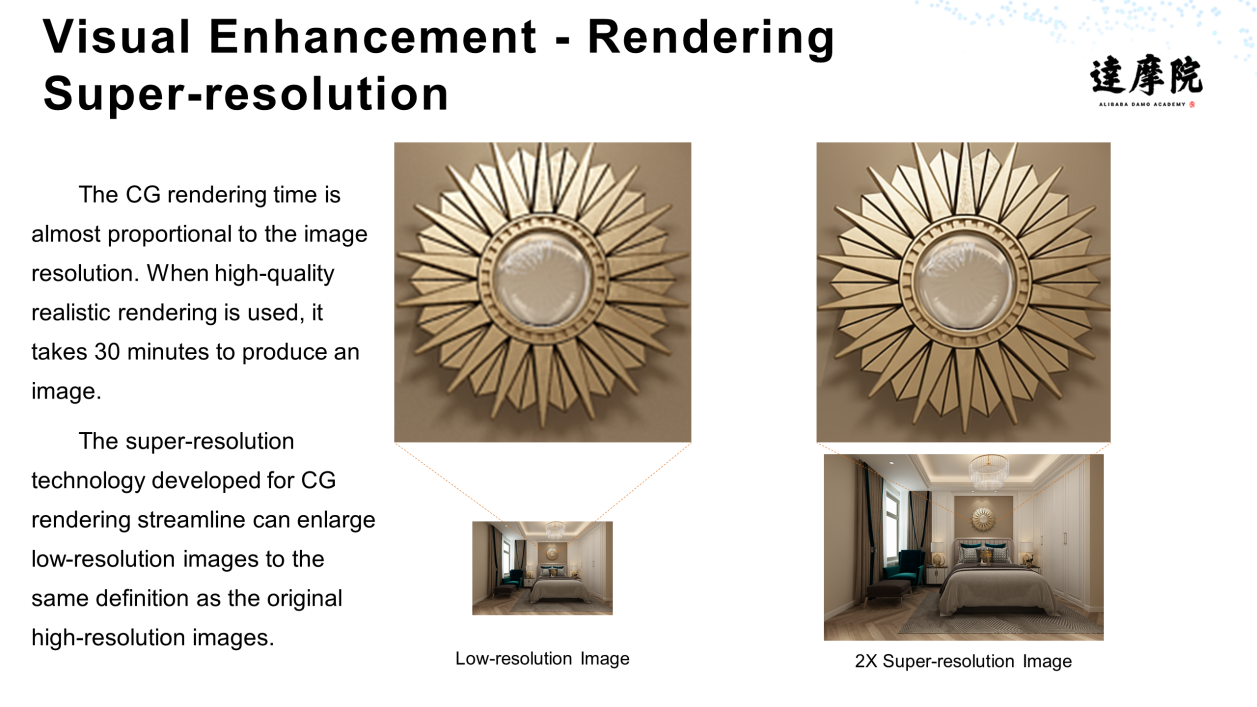

a. Rendering Super-Resolution

CG rendering time is almost proportional to the image resolution. With high-quality realistic rendering, it takes 30 minutes to produce an image. Using super-resolution technology developed for CG rendering, we can render at a lower resolution, which takes less time, and then enlarge the low-resolution image to the same definition as the original high-resolution image.
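A rough calculation shows why this is attractive. The sketch below assumes that "resolution" means pixel count, so halving both the width and the height divides the render time by four. The 30-minute figure comes from the text above. The function name and the `upscale_minutes` parameter, which stands for the cost of the super-resolution step, are hypothetical, and no measured upscaling time is implied.

```python
def estimated_render_minutes(full_res_minutes: float, scale: int,
                             upscale_minutes: float = 0.0) -> float:
    """Estimate the time to render at 1/scale of the width and height, then upscale.

    Assumes render time is proportional to pixel count, so reducing both
    dimensions by `scale` divides the render time by scale ** 2.
    """
    if scale < 1:
        raise ValueError("scale must be >= 1")
    return full_res_minutes / (scale ** 2) + upscale_minutes


if __name__ == "__main__":
    for scale in (1, 2, 4):
        minutes = estimated_render_minutes(30.0, scale)
        print(f"render at 1/{scale} size: about {minutes} minutes")
```

Under this assumption, rendering at half size takes about 7.5 minutes instead of 30, so the approach pays off whenever the super-resolution step costs less than the time saved.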

b. Video Super-Resolution

In addition to image super-resolution, we can also apply super-resolution to videos to improve their clarity.

c. Video Frame Insertion

For ordinary videos, frame insertion brings only a minor improvement. However, in scenarios such as dynamic videos and online videos under poor network conditions, frame insertion during playback can effectively reduce stuttering.
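The simplest possible form of frame insertion is a linear blend of two adjacent frames, sketched below in pure Python on grayscale frames. This is a naive baseline chosen for illustration, not the technique used on the platform. Blending produces ghosting on fast motion, which is why practical systems typically estimate motion between frames. The function names are hypothetical.

```python
from typing import List

Frame = List[List[float]]  # grayscale frame: rows of pixel intensities


def blend_frames(prev: Frame, nxt: Frame, t: float = 0.5) -> Frame:
    """Linearly blend two frames; t=0 returns prev, t=1 returns nxt."""
    if not 0.0 <= t <= 1.0:
        raise ValueError("t must be in [0, 1]")
    return [
        [(1.0 - t) * p + t * n for p, n in zip(p_row, n_row)]
        for p_row, n_row in zip(prev, nxt)
    ]


def double_frame_rate(frames: List[Frame]) -> List[Frame]:
    """Insert one blended frame between each pair of adjacent frames."""
    if not frames:
        return []
    out: List[Frame] = []
    for prev, nxt in zip(frames, frames[1:]):
        out.append(prev)
        out.append(blend_frames(prev, nxt))
    out.append(frames[-1])
    return out
```

A clip with `n` frames becomes a clip with `2 * n - 1` frames, which roughly doubles the frame rate when played back over the same duration.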

d. HDR Color Extension

In addition to the frame rate, color is another important factor and an indispensable prerequisite for high-definition videos. The visual enhancement technology can be used to implement HDR color extension and enhance video display.

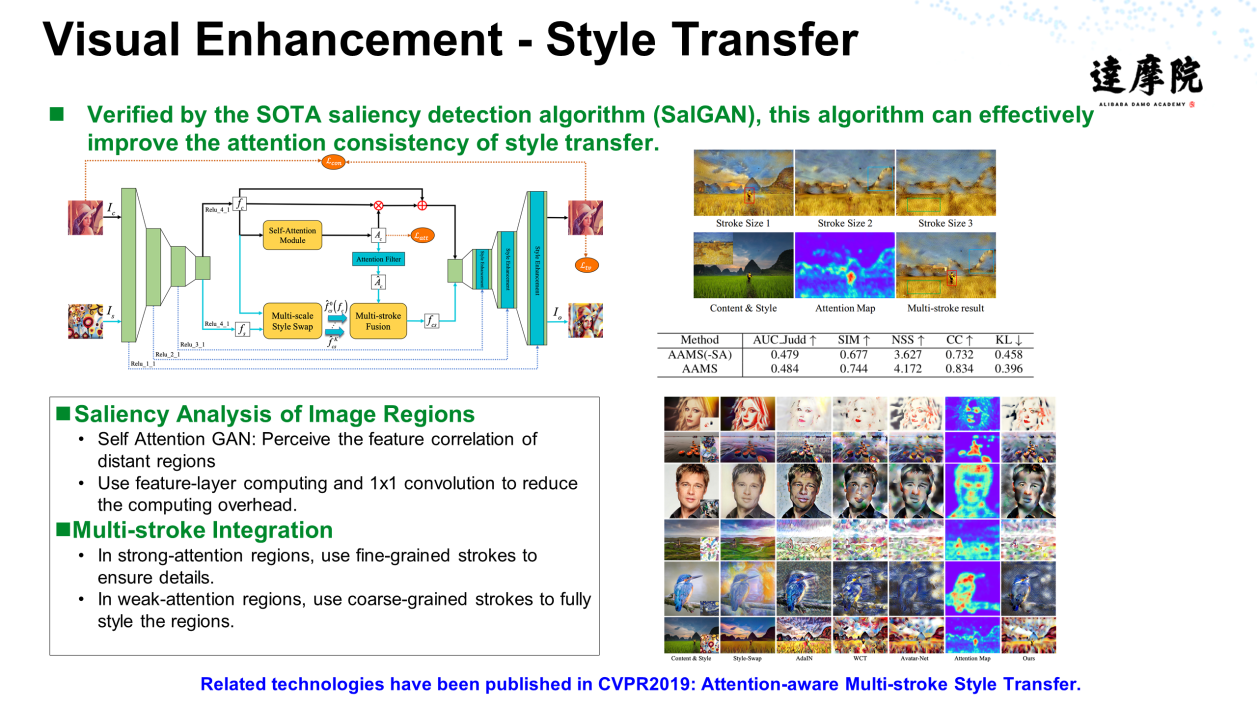

e. Style Transfer and Color Extension

You can also use visual enhancements to transfer styles. For example, some camera software can transfer styles of some famous paintings to photos taken by users to diversify the styles of photos.

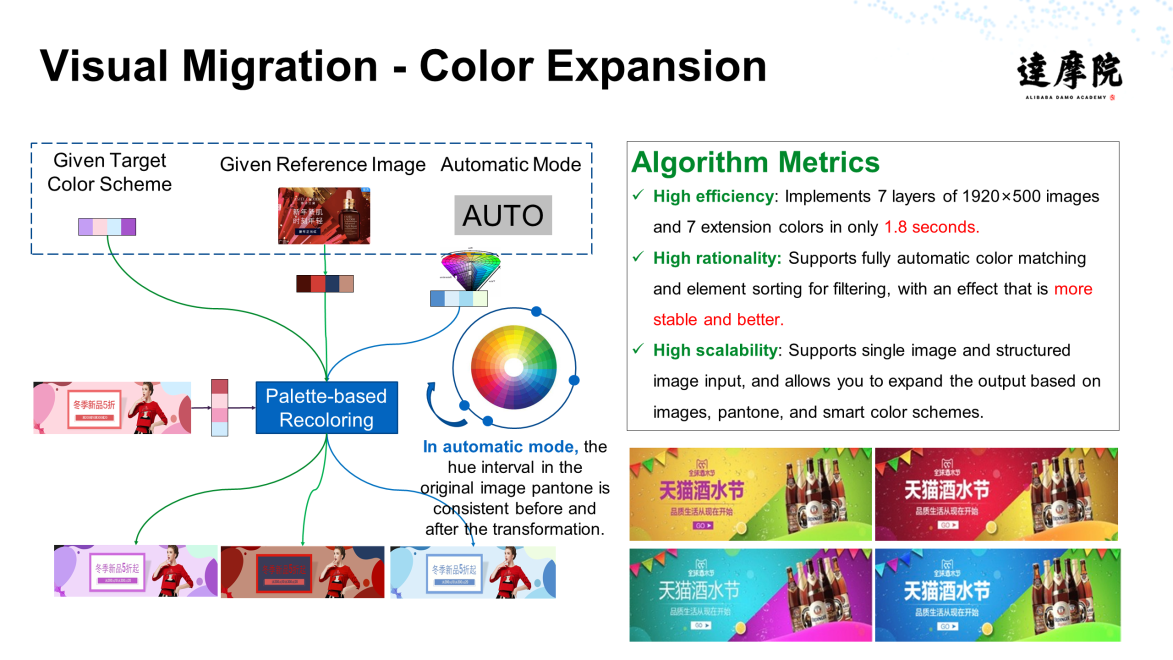

Visual enhancement can also extend colors. For example, based on the ad shown in the following figure, ads in different colors can be produced concurrently to meet different requirements and achieve color diversity.

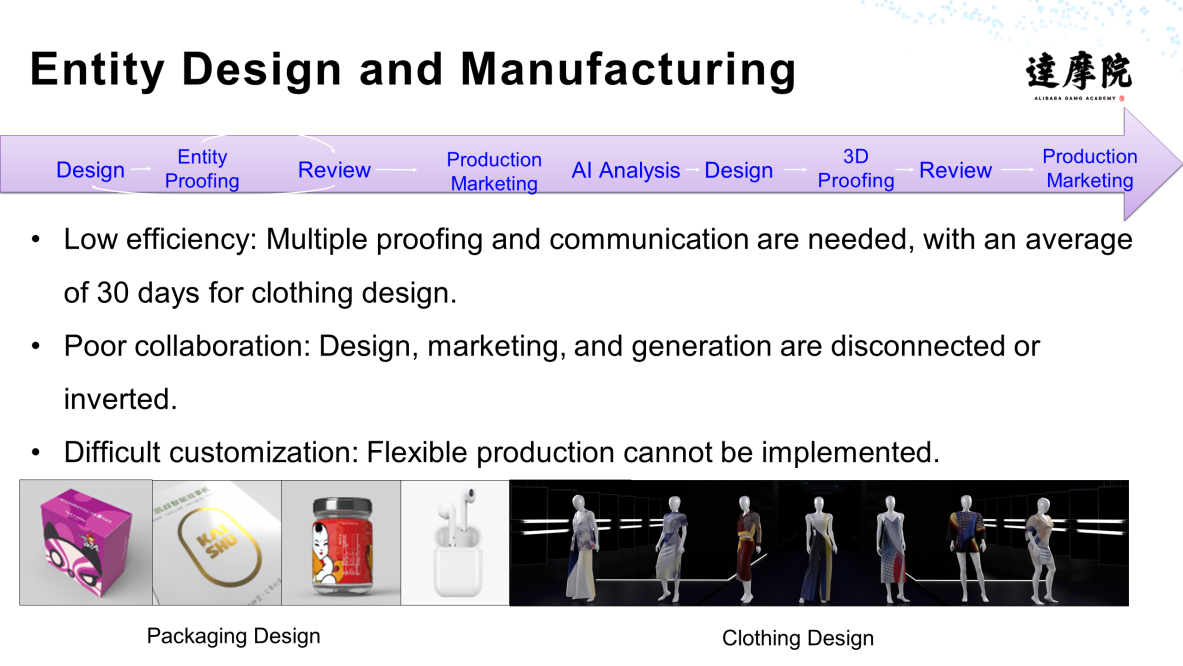
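One simple way to picture color extension is to rotate the hue of a design's palette while keeping saturation and brightness unchanged. The sketch below does this with Python's standard `colorsys` module. It is a simplified illustration under that assumption, not the platform's algorithm, which presumably also has to keep products, skin tones, and text legible. The function names `shift_hue` and `color_variants` are hypothetical.

```python
import colorsys
from typing import List, Tuple

RGB = Tuple[int, int, int]  # 8-bit channels


def shift_hue(color: RGB, degrees: float) -> RGB:
    """Rotate the hue of one RGB color, keeping saturation and value."""
    r, g, b = (c / 255.0 for c in color)
    h, s, v = colorsys.rgb_to_hsv(r, g, b)
    h = (h + degrees / 360.0) % 1.0
    r2, g2, b2 = colorsys.hsv_to_rgb(h, s, v)
    return (round(r2 * 255), round(g2 * 255), round(b2 * 255))


def color_variants(palette: List[RGB], steps: int) -> List[List[RGB]]:
    """Produce `steps` palettes, evenly spaced around the hue wheel."""
    return [[shift_hue(c, 360.0 * i / steps) for c in palette]
            for i in range(steps)]


if __name__ == "__main__":
    # Three variants of a two-color palette; gray is unaffected by hue shifts.
    for variant in color_variants([(255, 0, 0), (128, 128, 128)], 3):
        print(variant)
```

Because the variants are computed independently of each other, they can be generated concurrently, which matches the idea of producing several color versions of one ad at the same time.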

The previous sections talked about digital content. Can we associate virtual content with physical content? Yes, we can. The following figure shows examples of packaging design and clothing design. We can use visual manufacturing technology to address low efficiency, poor collaboration, and difficult customization in the production process.

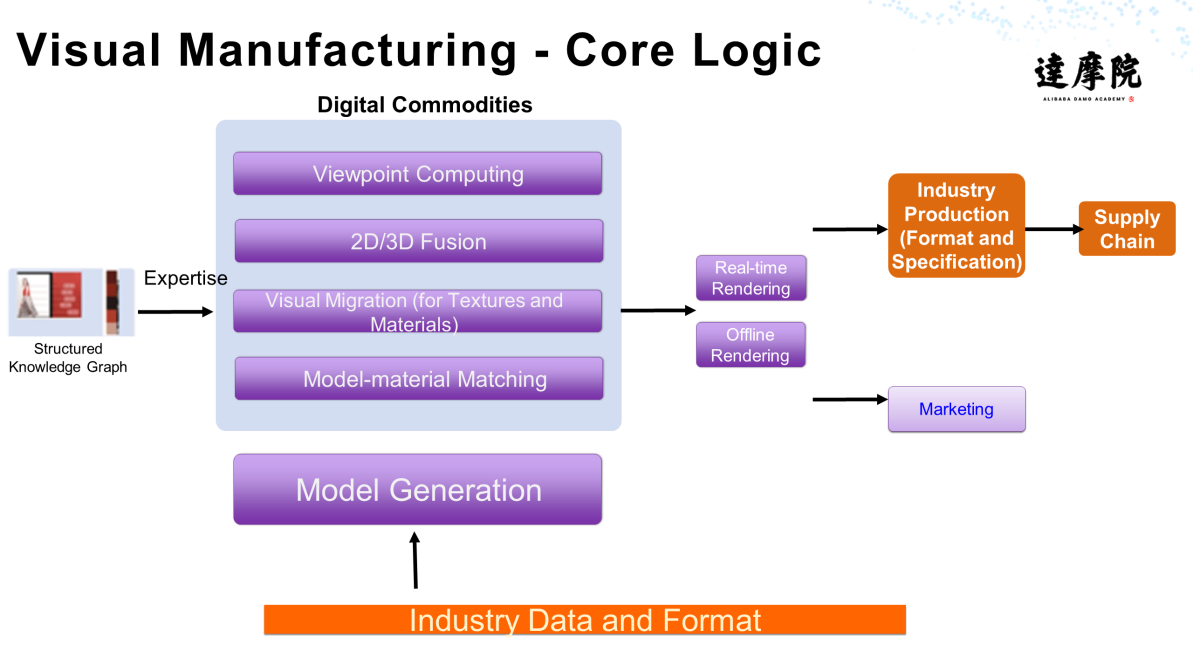

The following figure shows the core logic of visual manufacturing:

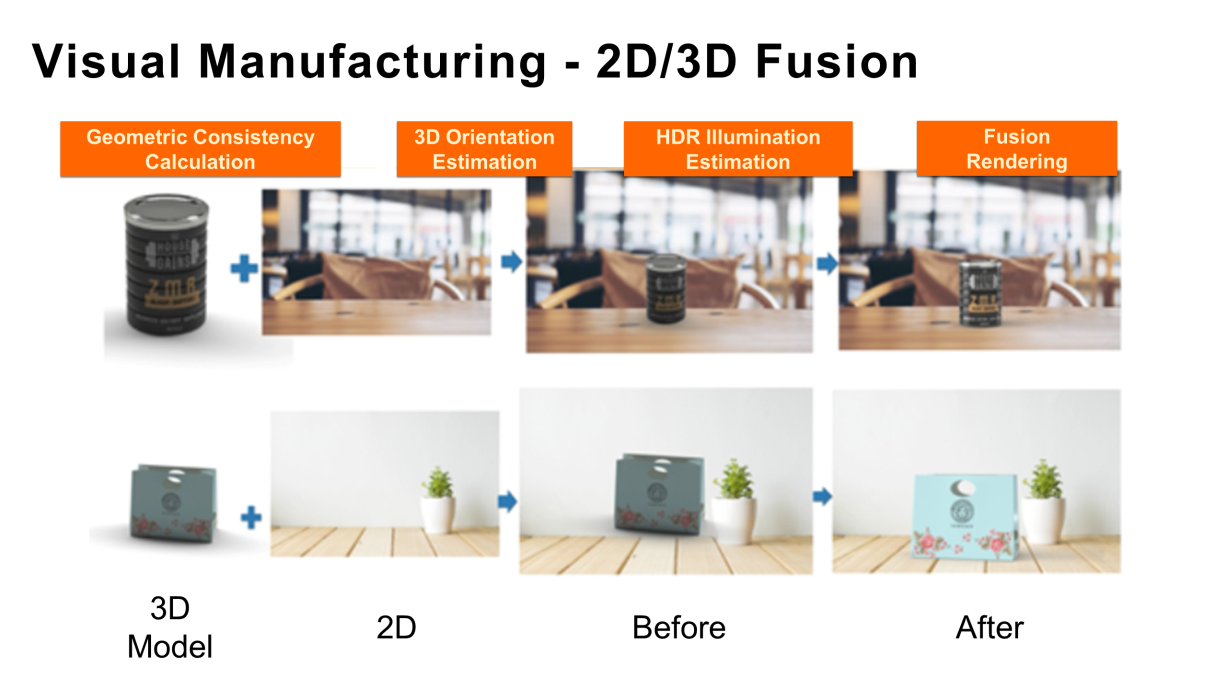

A variety of technologies are used during the visual manufacturing process, such as the geometric generation of packaging, geometric generation of clothing, diversified generation of materials and textures, visual migration and integration, and diversity expansion. After we obtain an object or a commodity model, we can use 2D and 3D integration to blend the object or model with a background or other commodities for direct rendering or proofing of commodities, as shown in the following figure. We can also complete the conversion from 3D rendering to 2D rendering to form a closed loop, which greatly improves the efficiency of the industry.

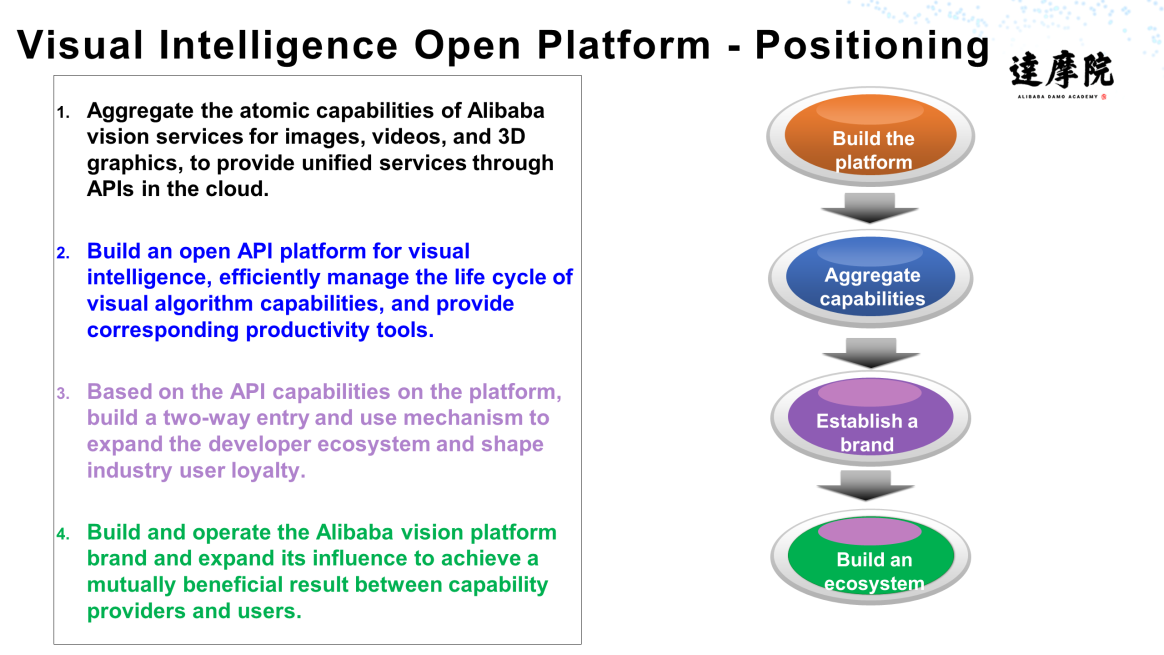

All of the aforementioned technologies are available on Alibaba's visual intelligence open platform at vision.aliyun.com (currently only available in Chinese). Please take a look if you want to learn more.

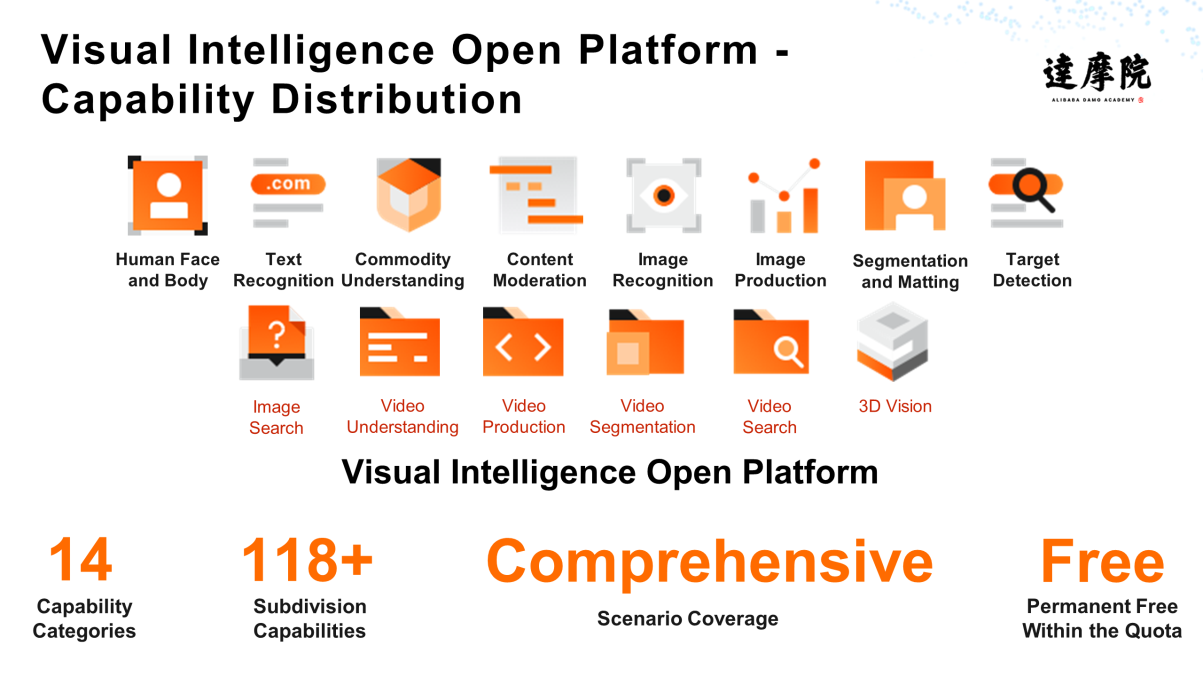

The platform has been open for more than two months and mainly provides the capabilities shown in the following figure, including image-related and video-related capabilities. These are further divided into more than 100 specific capabilities that cover various scenarios.

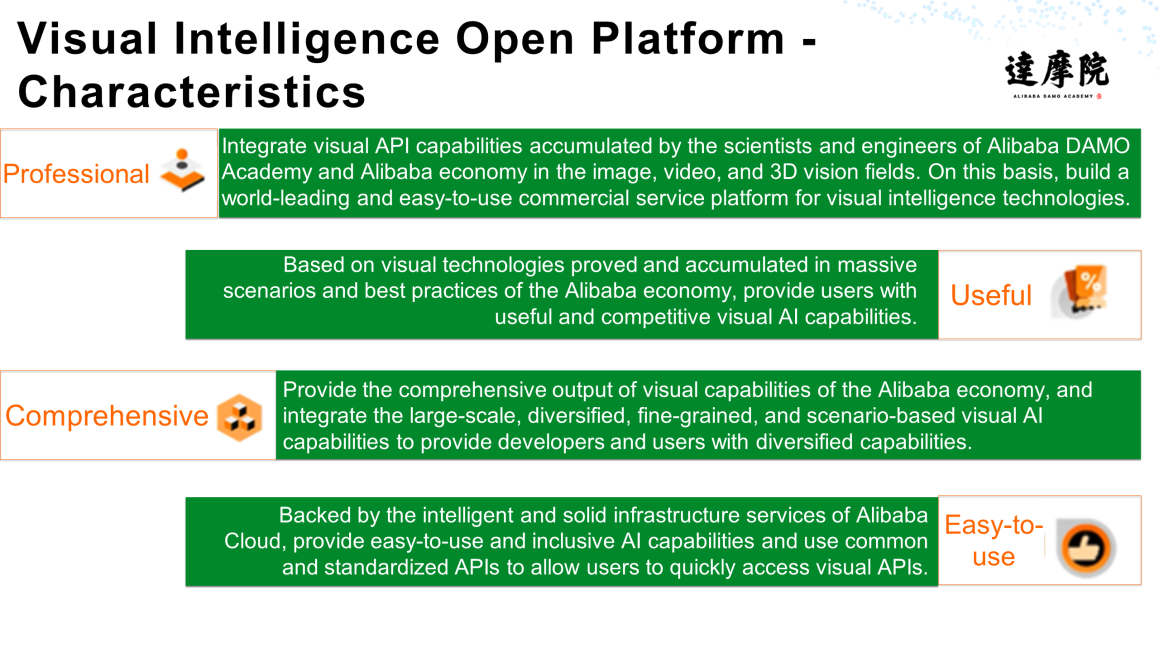

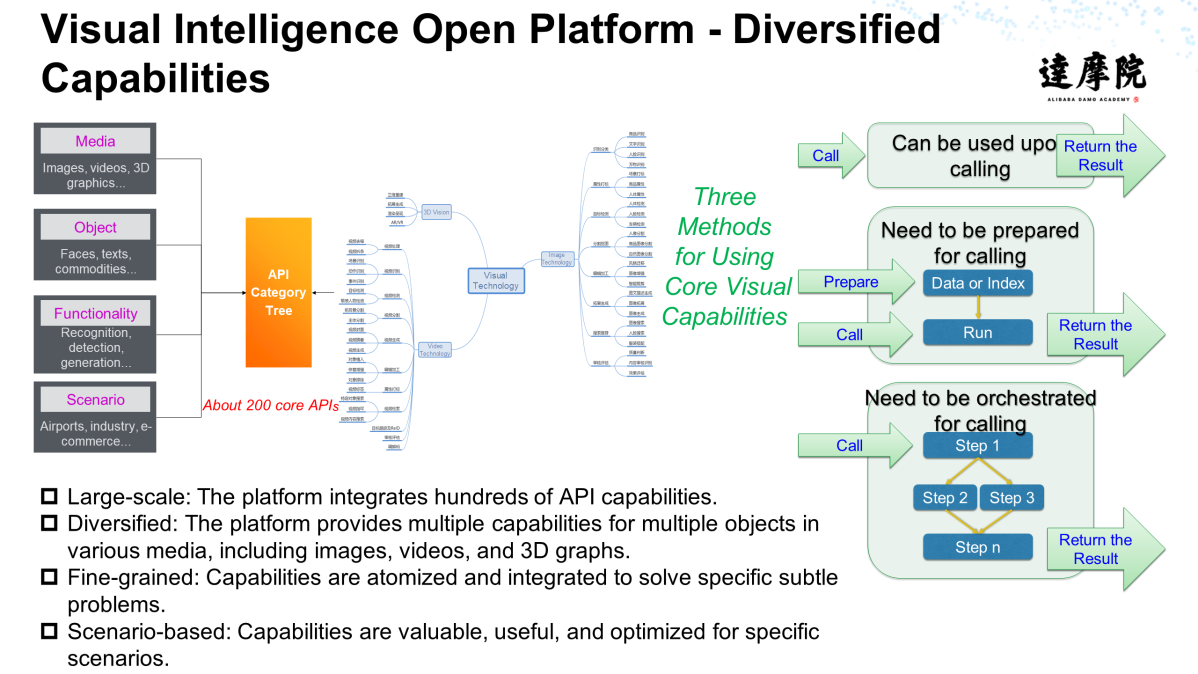

The platform has four characteristics: it is professional, practical, comprehensive, and easy to use. Users can select the capabilities they need on the platform.

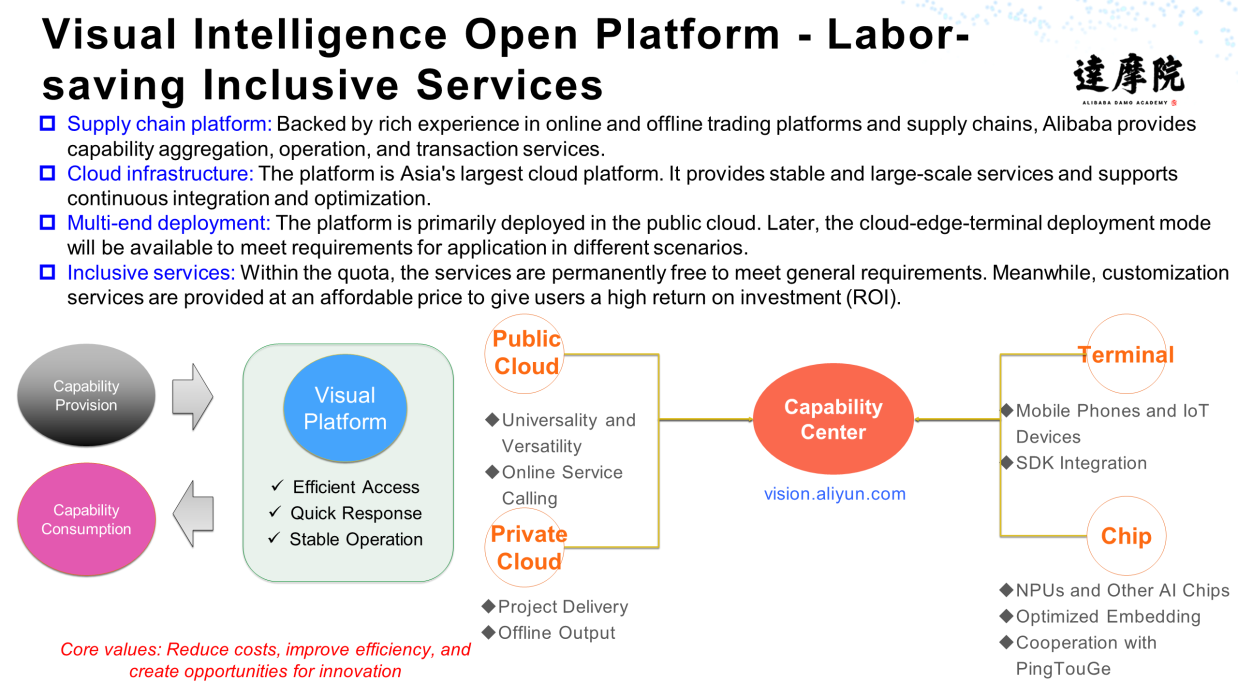

With a powerful supply chain platform and infrastructure, the visual intelligence open platform provides a wide range of services in the public and private clouds and enables users to enjoy affordable services.

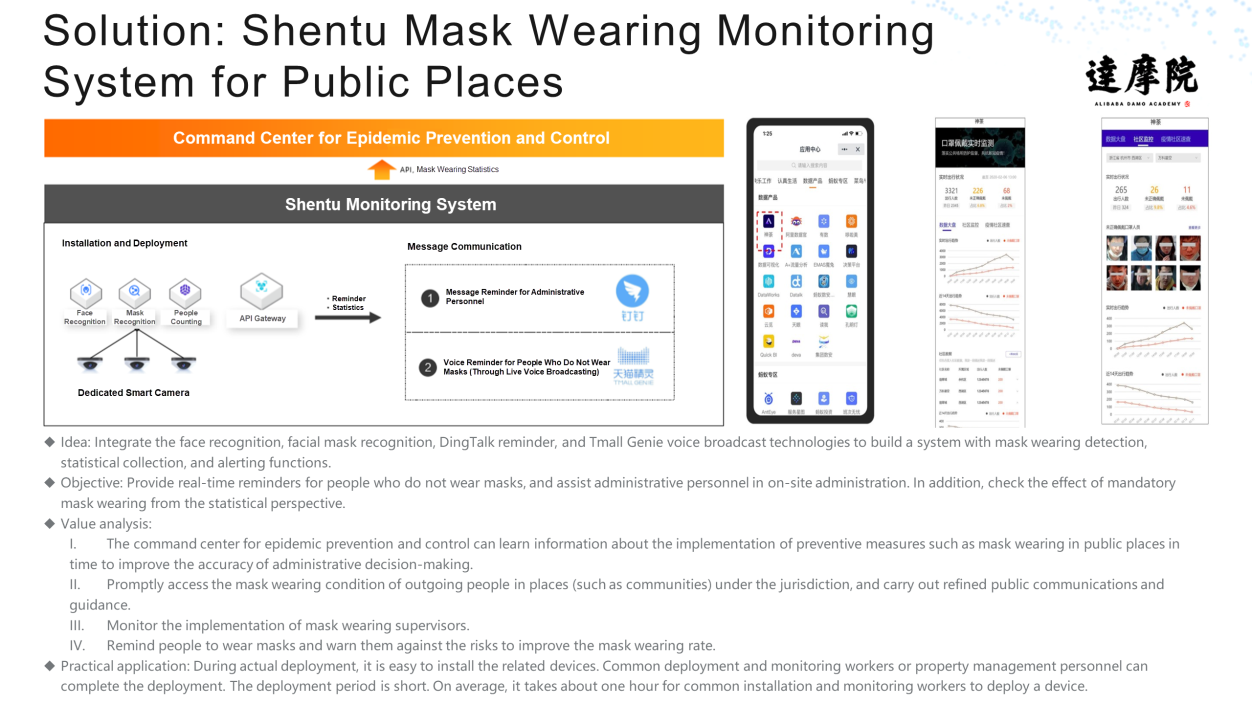

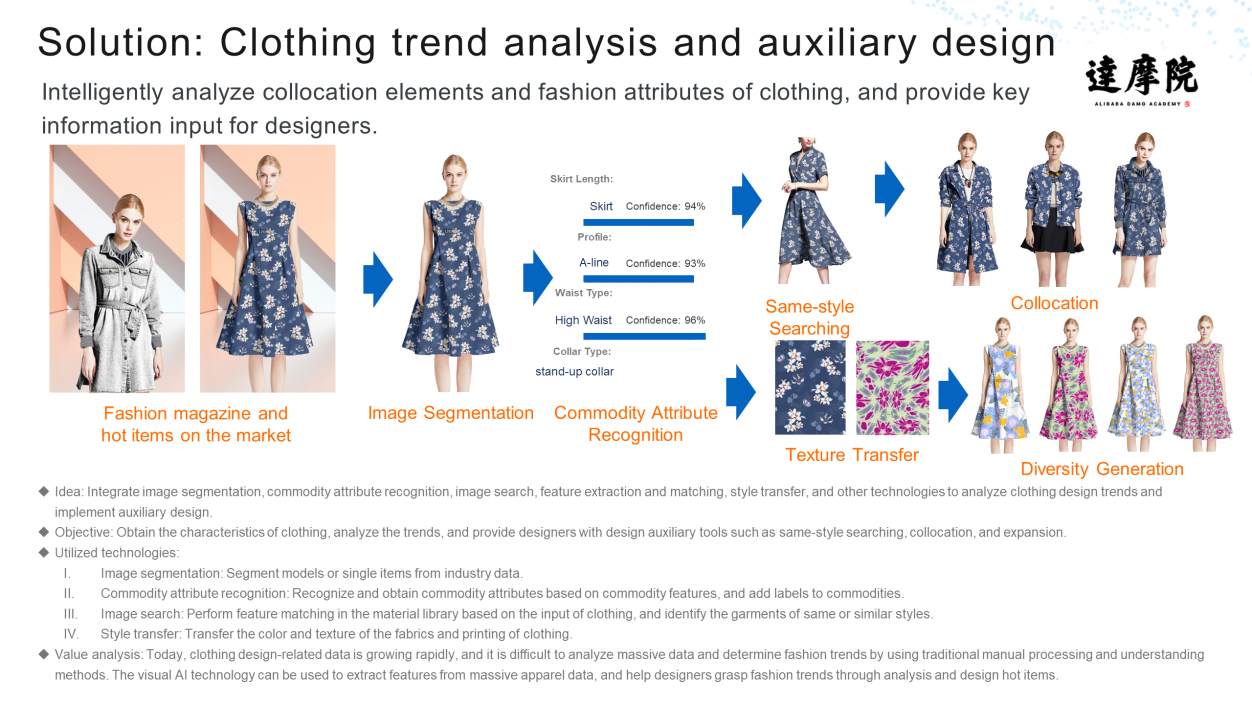

As shown in the following figure, the visual intelligence open platform provides complete scenario-based solutions, such as the Shentu mask-wearing detection system in public places, automatic video ad implantation system, clothing trend analysis, and auxiliary design.
